After 15 Months of Restraint, Liang Wenfeng Shakes Up the Scene

04/30 2026

By Duo Le

On April 24, 2026, OpenAI unveiled GPT-5.5 in a bid to cement its pricing power in the large model market. Mere hours later, Liang Wenfeng made a move that caught Silicon Valley off guard.

DeepSeek released a preview of V4. There was no grand press conference and no rehearsed speeches, just a plain set of figures: 1 RMB per million input tokens on a cache hit, and only 0.2 RMB for the even cheaper Flash version. Liang Wenfeng seemed to be using V4 to send Silicon Valley a message: you can raise prices all you want; I'll keep driving them down.

Less than 48 hours before the V4 release, financing rumors surfaced: Alibaba and Tencent had both set their sights on DeepSeek, and its rumored valuation had jumped from $1 billion to over $2 billion. The two tech giants were expected to invest a combined $180 million, doubling the valuation in less than a week. Capital markets don't run on sentiment. Their willingness to pay that price suggested someone had picked up a new signal.

DeepSeek had been relatively quiet for the past fifteen months. When R1 burst onto the scene in January 2025, NVIDIA lost nearly $600 billion in market value in a single day, and the global AI community buzzed about the "DeepSeek Moment." But what came next? More than a year passed without a major version update, and the release window kept slipping: from the start of the year to spring, from February to late April. Outsiders began to wonder: Had the team hit a technical bottleneck? Was it short on computing power? Had the genius who relied on High-Flyer Quantitative's money to build open-source models finally run out of steam?

But on April 22, 2026, everything changed. After fifteen months of restraint, Liang Wenfeng made a bold move. On one hand, he slashed costs with open-source models; on the other, he used a valuation that had doubled in the capital markets to signal a transformation. The idealist who hid behind High-Flyer Quantitative's profits and refused to take investors' calls was gone.

In his place stood a far more aggressive DeepSeek.

01

Driving Down Model Costs: The Ultimate Hardcore Move

When examining V4's technical parameters, don't be intimidated by the 1.6 trillion total parameters. What truly matters is its cost structure.

Total parameters rose to 1.6 trillion, with 49 billion activated. Under the Mixture of Experts (MoE) architecture, each layer had 384 experts. These numbers aren't the focal point. What's significant is that the context window expanded from 128K to 1 million tokens—nearly an eightfold increase—while the computational cost per token actually decreased. KV cache usage was slashed to one-tenth of the original.
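
To make the "1.6 trillion total, 49 billion activated" distinction concrete, here is a minimal Python sketch of generic top-k Mixture-of-Experts routing. It assumes a plain softmax router that picks a hypothetical TOP_K of 8 experts out of the reported 384; the article does not say how many experts V4 activates per token or how its router actually works, so this illustrates the general idea rather than DeepSeek's implementation.

```python
import math
import random

TOTAL_PARAMS = 1.6e12   # total parameters reported for V4
ACTIVE_PARAMS = 49e9    # parameters activated per token, as reported
NUM_EXPERTS = 384       # experts per MoE layer, as reported
TOP_K = 8               # hypothetical: the article does not state how many experts fire per token


def active_fraction(total: float, active: float) -> float:
    """Share of the model's weights that actually run for a single token."""
    return active / total


def top_k_route(router_logits: list[float], k: int) -> list[tuple[int, float]]:
    """Generic top-k gating: keep the k highest-scoring experts and
    renormalize their scores with a softmax so the gate weights sum to 1."""
    chosen = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)[:k]
    peak = max(router_logits[i] for i in chosen)  # subtract the max for numerical stability
    exps = [math.exp(router_logits[i] - peak) for i in chosen]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(chosen, exps)]


if __name__ == "__main__":
    print(f"active share of weights: {active_fraction(TOTAL_PARAMS, ACTIVE_PARAMS):.2%}")
    print(f"context growth: {1_000_000 / 128_000:.1f}x")  # treating 128K as 128,000 tokens
    rng = random.Random(0)
    logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]  # stand-in router scores for one token
    gates = top_k_route(logits, TOP_K)
    print(f"experts used for this token: {len(gates)} of {NUM_EXPERTS}")
```

With the reported figures, only about 3% of the weights run for any given token, which is why a model this large can be cheaper to serve than its headline size suggests. Production routers typically add load-balancing mechanisms and sometimes shared experts; those are omitted here.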

How was this achieved? Not by piling on more compute, but by changing the algorithm. With a hybrid attention mechanism combining CSA and HCA, the team moved from dense to sparse computation, replacing a uniform scan of every token with hierarchical, selective reading. In layman's terms: the model used to read a million-word novel word by word; now it skims for the key points, reads closely where it matters, and glosses over the rest.
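
The "skim first, then read closely" idea can be sketched as two-stage block-sparse attention in plain Python. This is a generic illustration, not CSA or HCA: the article gives no details of those mechanisms, and the block size, the mean-key block score, and the number of blocks kept are all assumptions chosen for clarity. A coarse pass scores each block of keys by its mean vector; a fine pass runs ordinary scaled dot-product attention only over the tokens in the top-scoring blocks.

```python
import math


def dot(a: list[float], b: list[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def softmax(xs: list[float]) -> list[float]:
    peak = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def block_sparse_attention(q, keys, values, block_size, top_blocks):
    """Two-stage sparse attention for a single query vector (illustrative sketch).

    Coarse pass ("skimming"): score each contiguous block of keys by the
    dot product of q with the block's mean key.
    Fine pass ("close reading"): exact softmax attention restricted to the
    tokens inside the top_blocks best-scoring blocks.
    Returns the attended output vector and the token indices actually read.
    """
    dim = len(q)
    blocks = [list(range(s, min(s + block_size, len(keys))))
              for s in range(0, len(keys), block_size)]

    def block_score(idx):
        mean_key = [sum(keys[i][j] for i in idx) / len(idx) for j in range(dim)]
        return dot(q, mean_key)

    kept = sorted(blocks, key=block_score, reverse=True)[:top_blocks]
    selected = sorted(i for blk in kept for i in blk)

    weights = softmax([dot(q, keys[i]) / math.sqrt(dim) for i in selected])
    out = [sum(w * values[i][j] for w, i in zip(weights, selected))
           for j in range(len(values[0]))]
    return out, selected


if __name__ == "__main__":
    # 12 tokens in 3 blocks; only the first block points the same way as the query.
    keys = [[1.0, 0.0]] * 4 + [[0.0, 1.0]] * 4 + [[-1.0, 0.0]] * 4
    values = [[float(i), 0.0] for i in range(len(keys))]
    out, read = block_sparse_attention([1.0, 0.0], keys, values, block_size=4, top_blocks=1)
    print(f"tokens read in detail: {len(read)} of {len(keys)}")
    print(f"output: {out}")
```

In the toy example only 4 of the 12 tokens are read in detail. The coarse pass costs one score per block rather than one per token, so for long contexts the expensive exact attention touches only a small, query-dependent slice of the sequence. Real systems run this kind of selection in fused GPU kernels and often use learned or compressed block summaries; the mean key here is just the simplest stand-in.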

The result? Prices plummeted.

DeepSeek V4-Pro costs just 1 RMB per million input tokens on a cache hit, and the Flash version just 0.2 RMB. Even the most expensive output tier costs only 2 RMB per million tokens. Meanwhile, GPT-5.5, released just a day earlier, charged $30 per million output tokens, roughly 100 times as much. And it wasn't just OpenAI: Gemini 3.1 Pro was priced at $12 and Claude Opus 4.7 at $25. Even converted at an approximate exchange rate, V4-Pro's 1 RMB cached-input price comes to only about $0.14 (the official price is quoted in RMB), undercutting the entire frontier of large models and smashing existing price benchmarks.
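
The price gap is easy to check with back-of-the-envelope arithmetic. The Python sketch below uses the per-million-token prices quoted above and the article's own approximate conversion of 1 RMB to about $0.14. The exchange rate and the example workload (a 1-million-token cached prompt plus 50,000 output tokens billed at the top output tier) are assumptions for illustration; real bills depend on cache-hit rates and the tier actually used.

```python
# Prices per million tokens, as quoted in the article.
V4_PRO_CACHED_INPUT_RMB = 1.0
V4_FLASH_CACHED_INPUT_RMB = 0.2
V4_TOP_OUTPUT_RMB = 2.0          # "most expensive output tier"
GPT55_OUTPUT_USD = 30.0

USD_PER_RMB = 0.14               # assumption: the article's approximate conversion


def job_cost(input_tokens: int, output_tokens: int,
             input_price_per_m: float, output_price_per_m: float) -> float:
    """Cost of one request, given prices per million tokens (same currency)."""
    return (input_tokens * input_price_per_m
            + output_tokens * output_price_per_m) / 1_000_000


def price_multiple(usd_price_per_m: float, rmb_price_per_m: float,
                   usd_per_rmb: float = USD_PER_RMB) -> float:
    """How many times more expensive a USD price is than an RMB price."""
    return usd_price_per_m / (rmb_price_per_m * usd_per_rmb)


if __name__ == "__main__":
    # Hypothetical workload: a 1M-token cached prompt plus 50K output tokens.
    pro = job_cost(1_000_000, 50_000, V4_PRO_CACHED_INPUT_RMB, V4_TOP_OUTPUT_RMB)
    flash_input = job_cost(1_000_000, 0, V4_FLASH_CACHED_INPUT_RMB, 0.0)
    print(f"V4-Pro job: {pro:.2f} RMB (about ${pro * USD_PER_RMB:.2f})")
    print(f"V4-Flash cached 1M-token prompt: {flash_input:.2f} RMB")
    print(f"GPT-5.5 output vs V4 top output tier: "
          f"{price_multiple(GPT55_OUTPUT_USD, V4_TOP_OUTPUT_RMB):.0f}x")
```

At these assumed figures the whole V4-Pro job costs about 1.1 RMB, and the output-price multiple comes out a little above 100x, in line with the roughly hundredfold gap cited above. A different exchange rate shifts the multiple proportionally.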

This wasn't just marketing hype; it was a shift in the underlying engineering logic. Once developers realized that running a million-token context through V4 cost less than a bottle of mineral water, why would anyone pay tens of dollars for a closed-source model? The global developer community's response made the answer obvious.

In Yunqi Business Review's view, DeepSeek is the Pinduoduo of AI. The metaphor may seem crude, but it's not far off. Pinduoduo disrupted pricing in lower-tier markets (smaller cities and towns); DeepSeek is disrupting the cost of calling AI models. Pinduoduo relied on extreme supply-chain compression; DeepSeek relies on a radical rebuild of its algorithms and architecture.

The approaches differ, but the underlying logic is the same: Rewrite the cost structure with a model architecture that others can't easily replicate, then offer a price tag that's hard to refuse.

But DeepSeek and Pinduoduo differ in one fundamental way. Pinduoduo aims to make money; DeepSeek doesn't seek to profit from its API. V4 is fully open-source under the MIT license, which permits free commercial use. That isn't Pinduoduo's business logic. It is closer to the early internet's ethos: build the infrastructure, drive prices toward zero, and let others build on top.

DeepSeek put it more bluntly than any analyst could: once Ascend 950 supernodes launch in volume in the second half of the year, Pro prices will drop sharply again. Today's prices are not the floor; they will keep falling as usage scales. The endpoint of this cost curve isn't break-even. It's zero.

DeepSeek is also pragmatic. It officially admits that V4 still trails GPT-5.4 and Gemini 3.1 Pro by about three to six months. But this candor isn't a sign of weakness; it's a declaration: you can keep playing the benchmark game, and I'll focus on the cost equation first. On the eve of the AI agent explosion, whoever makes million-token contexts standard will hold the most valuable ticket to AI industrialization.

02

Liang Wenfeng Finally Engages with Investors

Liang Wenfeng's refusal to raise funds had been seen as a form of technical purity.

After R1 went viral in early 2025, he ignored every call from investors and declined even to meet with Tencent or Alibaba. The reason was simple: capital interferes with technical judgment. His confidence came from High-Flyer Quantitative's financial strength. High-Flyer channeled part of its quantitative-trading revenue, which runs to hundreds of millions, into AI research, and with Liang holding over 84% of DeepSeek, he was nearly unique in the AI startup world.

But by 2026, this path was blocked. Not because High-Flyer wasn't earning enough, but because the cost of AI competition was growing far faster than quantitative trading returns. OpenAI and Anthropic were raising rounds worth billions, even tens of billions, of dollars, and global pricing power for talent sat firmly in the hands of the giants.

What hurt Liang Wenfeng most wasn't the money. It was the poaching of his core team.

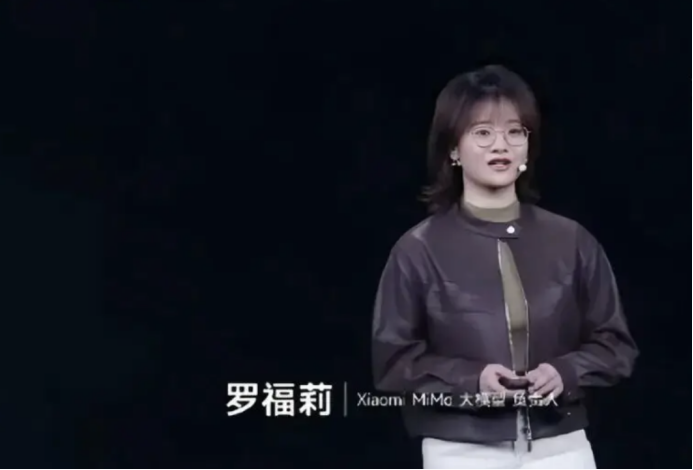

According to public records, DeepSeek has lost at least five core R&D members since late 2025. Wang Bingxuan, lead author of DeepSeek's first-generation large language model, joined Tencent; Luo Fuli, a key contributor to V3, was recruited by Lei Jun to lead Xiaomi's MiMo team; Guo Daya, a core R1 researcher, was poached by ByteDance's large model team; and Wei Haoran, a core author of the OCR series, and Ruan Chong, a key contributor to the multimodal work, also left. Together they spanned four core technical pillars: base models, reasoning, OCR, and multimodal.

DeepSeek had fewer than 200 employees, more than 100 of them in core research and only a few dozen on the base-model architecture team. In a small team that leans so heavily on individual talent, each core departure could halt an entire technical line. Sentiment doesn't pay the bills.

V4's release window, originally set for February, was delayed repeatedly because computational costs jumped from the millions to the billions of RMB. No matter how much High-Flyer earned, it couldn't sustain that burn rate. Financing shifted from optional to mandatory.

When the financing news broke, the most striking thing wasn't the amount, substantial as it was, but how fast the valuation rose: from $1 billion to over $2 billion in a matter of days. For comparison, Zhipu's market cap exceeds $5 billion and MiniMax's tops $3 billion, but both have commercialized, with enterprise clients and stable revenue streams. DeepSeek had yet to commercialize, so its $2 billion valuation was about as high as a non-commercialized company could command.

Capital markets suddenly realized that DeepSeek was no longer a niche technical player to be pitied; it had become a high-certainty bet.

Zhang Ailing (Eileen Chang) said fame should come early. But in these fifteen months, Liang Wenfeng proved something else: in the AI wars, surviving to the end matters far more than early fame. Not being short on cash doesn't mean you don't need cash, and not needing cash doesn't mean you don't need a valuation anchor. Engaging with investors wasn't the collapse of idealism. It was Liang Wenfeng's first admission that in the money game, the stupidest move is pretending you don't have to play.

03

Huawei Ascend: DeepSeek's Hidden Trump Card

Buried in V4's announcement was a nearly overlooked detail: DeepSeek listed Huawei Ascend in its official hardware compatibility list for the first time.

To make this switch, the team rewrote the entire system, originally built on NVIDIA CUDA, and migrated it to Huawei's domestic CANN framework. It wasn't an easy decision. NVIDIA GPUs still dominate high-end training, and Huawei's chips lag in raw compute, toolchains, and ecosystem.

But Liang Wenfeng wasn't betting on today's performance gap; he was betting on the bigger picture. Once China has a fully autonomous AI computing base, U.S. chip controls and trade sanctions lose their grip. A week earlier, Jensen Huang had said, in effect, that if DeepSeek achieved deep optimization on Huawei's platform first, it would be a terrifying outcome for the U.S. AI industry.

Huawei's Ascend 950 chips are already in mass production and are expected to launch at scale in the second half of the year. V4's aggressive price cuts came not only from algorithmic optimization but also from shifting chip costs. DeepSeek didn't cut prices out of kindness; it cut them because it switched suppliers.

Western media noticed the implications. Bloomberg called V4 a formidable challenge to OpenAI and Anthropic; CNBC called it a full-scale show of strength. Reuters came closest to the mark: this wasn't just another model iteration but a critical step in China's effort to wean its AI industry off NVIDIA. Opening up a fully domestic AI supply chain, right down to the code level, unsettles rivals more than any model release.

Future AI competition won't be a benchmark race between individual vendors; it will be a brutal clash between the Chinese and American AI ecosystems. DeepSeek supplies momentum through open source, and Huawei supplies the chip foundation. Together, they are not just players but rewriters of the rules.

No one understands better than Liang Wenfeng how far away AGI still is. And he knows better than anyone that idealism without survival is worthless.

Over the past fifteen months, DeepSeek endured the poaching of core talent, repeated release delays, and public sentiment that swung from deification to doubt. By summer 2025, global downloads had plummeted from a peak of 8 million to 2 million, and ByteDance's Doubao had overtaken it in monthly active users. Every signal said the same thing: technical idealism without capital behind it can't survive an industry winter.

Landing V4 and the financing at the same time was like Liang Wenfeng placing two chess pieces at once. One showed that his judgment about "what" to build hadn't changed; the other showed that his judgment about "how" to get there had fundamentally shifted. Financing wasn't the end of idealism; it was idealism plugging into a larger machine. The $2 billion valuation wasn't just about money. It was the market's vote of confidence in DeepSeek's ability to survive to the end.

Liang Wenfeng is playing a bigger game, at a pace others don't understand. The game isn't called R1, V4, Ascend, or even $2 billion. It's called survival. Only by surviving can you talk about disruption.