Server CPU: On the Eve of Disruption?

03/09 2026

NVIDIA, which has profited handsomely from the GPUs that drive AI, now declares itself "bullish on the potential of the CPU market." The Vera Rubin platform is making its debut with a configuration of 36 Vera CPUs and 72 GPUs, and international institutions collectively predict that the CPU supply-demand gap will keep widening. Together, these send a clear signal: the core narrative of AI computing power is shifting from "GPU-dominated" to "CPU+GPU collaborative coexistence."

01

The Importance of CPU Is No Less Than That of GPU

Jensen Huang's bet on CPUs is no sudden whim. NVIDIA has long harbored ambitions in the CPU market.

As early as CES 2011, NVIDIA officially unveiled Project Denver, announcing the development of custom CPU cores based on the ARM architecture. Subsequently, in 2014 and 2018, NVIDIA released the mobile processor Tegra K1 and the Carmel CPU core for autonomous driving and edge computing, respectively.

In April 2021, NVIDIA launched the Grace CPU based on the Arm architecture, marking the GPU giant's formal entry into the server CPU arena. Grace is not a general-purpose processor in the traditional sense but an "accelerated CPU" designed specifically for AI, HPC (high-performance computing), and large-scale data processing.

Recently, Meta announced a new procurement agreement with NVIDIA to purchase millions of NVIDIA's next-generation Vera Rubin GPUs and an equal number of Grace CPUs in support of its AI development strategy. Meta is already one of NVIDIA's largest customers and has used its GPUs for years, but the new agreement signals a deeper partnership: Meta has committed to buying Grace CPUs in large quantities and deploying them as standalone chips rather than paired with GPUs.

NVIDIA stated that Meta would be the first company to deploy Grace CPUs on a standalone basis and would also deploy the next-generation Vera CPUs starting next year. The Vera CPU is NVIDIA's new-generation chip for AI workloads and part of its self-developed Arm-architecture CPU series. It was officially announced at CES 2026 on January 6, 2026, and is expected to be formally released at GTC 2026, held March 16 to 19, 2026. Deliveries to initial customers such as Microsoft are planned to begin in the second half of 2026. The chip is designed to support training and inference for next-generation large models.

At the previous Consumer Electronics Show in Las Vegas, Jensen Huang also said, "It wouldn't be surprising to see NVIDIA rank among the world's top CPU manufacturers."

In recent years, with GPUs dominating AI computing power, the market settled into a reflexive assumption: AI = GPU. By 2026, however, a conclusion that cloud vendors and large model companies have verified with real money is becoming the consensus: CPUs matter no less than GPUs in the data center.

Why did the industry chase only GPUs over the past few years while treating CPUs as a "supporting role"? The core reason is simple: AI was still in its "training is king" phase. From 2023 to 2025, the industry focused on training large models, competing on extreme parallel computing power and matrix multiplication efficiency. GPUs, with their architectural advantages, naturally became the protagonists of the training phase. CPUs handled only auxiliary tasks such as basic scheduling and data transfer, and so drew little public attention.

However, from the second half of 2025 into 2026, the AI industry underwent a critical paradigm shift: from a training-centric model to one centered on inference, execution, and large-scale deployment. Agents, RAG, tool invocation, multimodal interaction, reinforcement learning environment setup: these commercial scenarios are no longer purely parallel computing. They involve a large amount of logical judgment, serial execution, system scheduling, I/O control, memory scheduling, and task distribution. These are precisely the weaknesses of GPUs and the strengths of CPUs.

Industry data points the same way: in a typical Agent workflow, CPU processing can account for up to 90% of end-to-end latency, making it the number one performance bottleneck. The logic of "stacking GPUs to improve efficiency" breaks down here. If the CPU cannot keep up, GPU utilization stays low; if CPU specifications are insufficient, the cluster cannot be fully utilized. The collective price hikes and sold-out capacity for Intel and AMD server CPUs in early 2026 directly reflect this trend.
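To make the bottleneck argument concrete, here is a minimal Amdahl's-law sketch. It assumes the 90% figure above is the CPU-bound fraction of end-to-end latency and that the CPU and GPU stages run serially; the function name and the speedup values are illustrative, not taken from any vendor data.

```python
def end_to_end_speedup(cpu_fraction: float,
                       gpu_speedup: float,
                       cpu_speedup: float = 1.0) -> float:
    """Amdahl's-law estimate of overall speedup for a serial CPU + GPU pipeline.

    cpu_fraction: share of baseline end-to-end latency spent on the CPU (0..1).
    gpu_speedup:  factor by which the GPU-bound part is accelerated.
    cpu_speedup:  factor by which the CPU-bound part is accelerated.
    """
    if not 0.0 <= cpu_fraction <= 1.0:
        raise ValueError("cpu_fraction must be between 0 and 1")
    gpu_fraction = 1.0 - cpu_fraction
    # New latency, expressed as a fraction of the baseline latency.
    new_latency = cpu_fraction / cpu_speedup + gpu_fraction / gpu_speedup
    return 1.0 / new_latency


if __name__ == "__main__":
    # Hypothetical scenario: 90% of latency is CPU-bound.
    # Making the GPU part 10x faster barely moves the total.
    print(f"10x GPU only: {end_to_end_speedup(0.9, gpu_speedup=10.0):.2f}x")
    # Doubling CPU throughput instead helps far more.
    print(f"2x CPU only:  {end_to_end_speedup(0.9, gpu_speedup=1.0, cpu_speedup=2.0):.2f}x")
```

Under these assumptions, a tenfold GPU improvement yields only about a 1.1x end-to-end gain, while doubling CPU speed yields about 1.8x, which is why adding GPUs alone stops paying off once the CPU is the dominant stage.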

Currently, Intel and AMD CPUs are generally in short supply, with server CPU lead times extending up to six months, and consumer-grade CPUs also facing delays and price increases. NVIDIA's heavy investment in server CPUs at this juncture is not only to complete its full-stack computing power portfolio but also to directly target the supply vulnerabilities of the x86 camp. Its logic is clear:

- Not developing general-purpose CPUs but customizing them for AI clusters, deeply collaborating with GPUs, DPUs, and high-speed interconnects;
- Solving the industry's most painful problem: CPU bottlenecks leading to low GPU utilization and underutilized clusters;
- Using an integrated solution to hedge against the current risks of x86 CPU shortages and unstable deliveries.

This also means that the competition for data center computing power has officially shifted from "single-chip comparison" to "full-system competition."

02

CPU Shortages and Changing Market Dynamics

Now, let's examine the current competitive landscape of the server CPU market.

For the past two decades, the server CPU market has been firmly controlled by Intel and AMD. With its mature instruction set, vast software ecosystem, and high compatibility with operating systems and middleware, the x86 architecture has become the default choice for enterprise markets. Especially in general-purpose computing, databases, and virtualization scenarios, the comprehensive advantages of x86 CPUs are hard to replace.

However, this duopoly is rapidly evolving: Intel's long-standing dominance is under sustained pressure, while AMD is steadily gaining market share through technological breakthroughs.

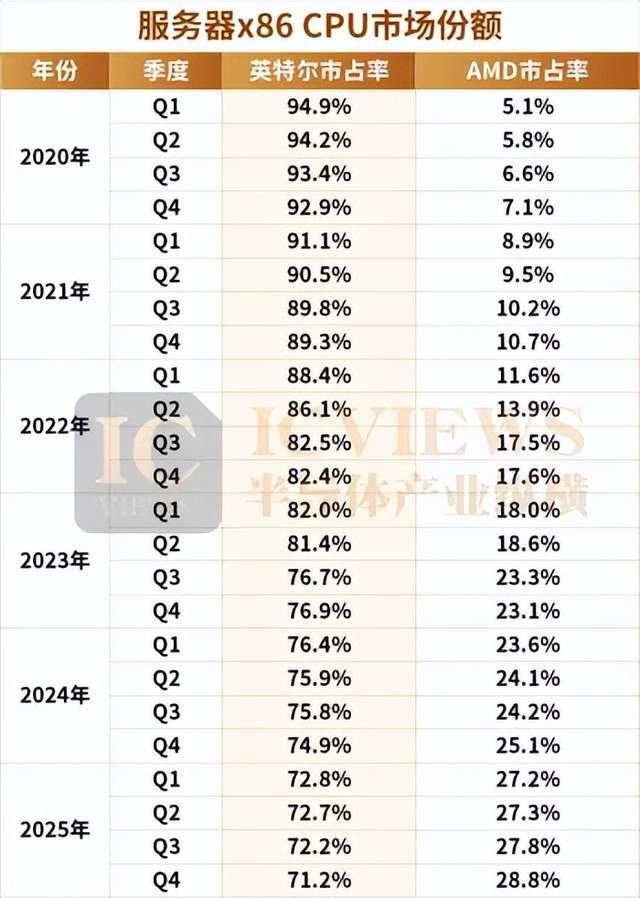

The chart above shows that as of 2025, AMD's shipment share in the server market has approached 30%, while Intel, although still dominant, has seen its share decline from its peak. This change stems from multiple industrial factors rather than a single technological advantage or disadvantage.

Industry insiders told Semiconductor Industry Review:

First, customer needs are diversifying.

Hyperscale cloud service providers' demand for high core counts, high energy efficiency, and high I/O bandwidth is rapidly increasing. Since its EPYC series, AMD has adopted a chiplet architecture which, combined with TSMC's advanced manufacturing processes, let it bring products with more than 96 cores to market relatively quickly, matching some cloud vendors' preference for "performance per watt" and horizontal scalability. However, this does not mean AMD is without shortcomings. The insider stated, "AMD's chiplet architecture, while allowing for rapid core count stacking, suffers from relatively high communication latency between different chiplets, which has been a persistent issue." The insider added that this is precisely where Intel's strength lies: low core-to-core communication latency, coupled with years of accumulated software and hardware ecosystems, provides better compatibility, which is key to maintaining its market share in the current AI wave.

Second, pricing strategies have become another "key" for AMD to penetrate the market.

"Overall, AMD's server CPUs are priced slightly lower than Intel's, especially in the channel market, where AMD's strategy is more aggressive," the insider revealed. AMD offers significant discounts on older models, flooding the market with cost-effective older AMD CPUs, while Intel keeps its pricing stable and does not readily cut prices. This price difference has led different customer segments to make different choices. Internet giants and mainstream server vendors, who prioritize technological iteration, closely follow Intel's new product releases and prefer to purchase the latest or previous-generation products. The large population of small and medium-sized customers and the channel assembly market, which are more price-sensitive, extensively adopt AMD's older models. In niche segments such as industrial control, IoT, and edge computing, some customers still use older products, but this segment's impact on the overall market landscape is relatively limited.

Finally, differences in supply chain strategies are also emerging.

"As the long-standing industry leader, Intel once firmly held the technological initiative through its in-house manufacturing (the IDM model). However, around 2021, its process iteration suddenly slowed down, causing a noticeable break in its new product release cadence and leaving it half a beat or even a full beat behind AMD. Many customers, unable to wait, turned to AMD's new products," the insider explained.

In contrast, AMD took a completely different path from Intel, abandoning in-house manufacturing and entrusting all production to TSMC. This choice coincided with the rise of advanced manufacturing processes, and from 7nm to the current 3nm, AMD has closely followed TSMC's process cadence, continuously improving product performance and energy efficiency. In recent years, however, Intel's 18A manufacturing process has matured, gradually narrowing the performance gap caused by process differences.

Regarding the widely discussed question of "whether AMD can capture half of the data center x86 CPU market in the next 5-10 years," industry insiders gave a clear answer: "Probably not." In their view, the strengths of both sides have formed complementary areas that are difficult to replace.

"AMD's high core counts are suitable for large-scale scenarios like cloud computing and big data, while Intel's low latency and mature ecosystem are irreplaceable in high-end fields like AI and fintech," they further analyzed. Now that Intel's manufacturing processes have largely returned to advanced levels, its main weakness lies in product design, which it is continuously optimizing in response to AMD's competition. AMD, for its part, needs to address latency issues and fill gaps in its software ecosystem to further increase its market share.

03

More Than Just NVIDIA: Variables in the CPU Market

Beyond NVIDIA, the competition at the CPU's foundational architecture level is quietly reshaping the long-term landscape of the server market.

Previously, the x86 architecture held a 90% share of the global server CPU market. However, according to a recent report by market research firm Dell'Oro Group, ARM architecture processors accounted for 25% of the server CPU market in the second quarter of 2025, up 10 percentage points from 15% in the same period a year earlier. This growth is primarily driven by the large-scale delivery of NVIDIA's ARM-based Grace platform and the accelerated deployment of custom chips by major tech companies.
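A quick arithmetic check helps separate the two ways of reading that jump. The sketch below uses only the 15% and 25% figures cited above; the function name is illustrative.

```python
def share_change(old_share_pct: float, new_share_pct: float) -> tuple[float, float]:
    """Return (percentage-point change, relative change in %) between two shares."""
    if old_share_pct <= 0:
        raise ValueError("old_share_pct must be positive")
    points = new_share_pct - old_share_pct
    relative = points / old_share_pct * 100.0
    return points, relative


if __name__ == "__main__":
    # ARM server CPU share, year over year, per the figures quoted in the text.
    points, relative = share_change(15.0, 25.0)
    print(f"Change: {points:.0f} percentage points, {relative:.1f}% relative growth")
```

A move from 15% to 25% is 10 percentage points, but in relative terms ARM's share grew by roughly two thirds in a single year.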

For example, Amazon Web Services (AWS) pioneered hyperscale ARM server processors with its Graviton series, first released in 2018. The company recognized that most workloads running in its data centers did not require the maximum single-thread performance offered by x86 processors but would benefit significantly from improved energy efficiency and cost-effectiveness. By 2024, AWS stated that approximately half of its new CPU deployments used Graviton processors instead of x86 chips.

Google subsequently introduced its Axion processor, announced in 2024, claiming a 60% reduction in power consumption compared to similar x86 processors while delivering equivalent performance for typical cloud workloads.

The reasons for this are manifold. First, traditional x86 architectures often incur significant energy consumption under high loads, while ARM, through its reduced instruction set and more efficient chip design, substantially lowers unit energy consumption at equivalent computing power, saving cloud service providers ongoing operational costs. Second, ARM's flexible licensing model completely breaks the monopoly logic of chip licensing—cloud vendors can freely customize processor architectures without paying hefty licensing fees, deeply adapting them to their specific workloads, upgrading computing power from "general-purpose adaptation" to "scenario customization." This model not only reconstructs the entry barriers for chip innovation but also enables cloud services to shift from "purchasing hardware" to "defining computing power," achieving true on-demand optimization. Finally, the low-cost advantage further eliminates barriers to computing power proliferation, making cloud services more economically competitive.

Not only is the ARM architecture gaining traction; server CPUs based on the RISC-V architecture are also rapidly "breaking out." RISC-V offers advantages such as an open-source design, a simplified instruction set, and scalability, and it has achieved significant success in the Internet of Things (IoT) field, which emphasizes energy efficiency. This does not mean, however, that RISC-V cannot enter the PC and server markets, which have higher performance requirements.

Regarding the advantages of the RISC-V architecture, the author summarizes four main points:

First, it is open-source, which makes it independently controllable. Second, it is customizable, making it highly suitable for the customization needs of dedicated servers. Third, it has lower costs: the licensing fees and royalties for RISC-V IP cores are currently only 1/3 to 1/4 of those for ARM, so adopting a RISC-V solution can significantly reduce costs for chip and server manufacturers. Fourth, RISC-V has some unique performance advantages, such as the ability to provide higher performance and core counts per unit area, making it more efficient in AI and other performance-demanding applications.

Today, several companies have unveiled RISC-V architecture server CPU chips. Examples include Alibaba's XuanTie RISC-V CPU and RISC-V's fully self-developed high-performance RISC-V server chip.

Zhang Xiantao, President of Alibaba Cloud's Wuying Business Unit, stated, "I believe that after 5-8 years of development, large-scale applications in servers should not be a problem. Many companies have high expectations for it, which will certainly accelerate the process. The widespread application of RISC-V architecture from low-power IoT terminals to data centers can probably be achieved within 5-8 years."

Huan Dandan, CEO of Weihe Core, stated that high-performance computing scenarios are the key to unlocking RISC-V's market potential. "In the past, RISC-V was mostly aimed at the AIoT field, and the low-cost, low-performance segment is already a red ocean market. For the RISC-V industry, as an emerging instruction set, to grow and thrive, it must evolve alongside emerging applications in high-performance domains (servers, AI computing) in data centers to truly unlock its market potential."

Today, NVIDIA has entered the market with its Grace and Vera CPUs, the ARM architecture is rising rapidly on the strength of its energy efficiency and customization advantages, and RISC-V is breaking monopoly barriers with its open-source model. The landscape once dominated by the x86 duopoly has been thoroughly disrupted. As the AI industry shifts from "training is king" to "inference and deployment," the core value of CPUs becomes increasingly prominent, and the competition for computing power has moved from single-chip comparison to full-system competition.