The Second Half of Autonomous Driving: World Models Endowing Machines with 'Common Sense' and 'Reasoning Capabilities'

04/07 2026

The intelligent driving industry is undergoing an extremely treacherous 'collective slowdown.'

On the surface, data volumes are surging, compute is being stacked at an exponential pace, and end-to-end systems have become standard fare in every company's slide decks. But behind closed doors, every intelligent driving leader is asking the same questions: Why, even after stacking thousands of H100s, does the system's 'intuition' at complex intersections still feel like a lottery? Why have we solved 99% of scenarios, yet the remaining 1% lingers like a ghost, impossible to eradicate?

People are beginning to realize that we may have hit an invisible wall: algorithmic improvements are yielding diminishing marginal returns, and the system's 'intelligence' is locked within the logic of reactive architectures.

Behind this anxiety lies the same ultimate proposition: In the second half of autonomous driving, the competition is no longer about who has more accurate perception but about who can endow machines with 'common sense' and 'reasoning capabilities.'

This is why the 'Driving World Model' is being put on a pedestal at this juncture. It is not just another fundraising buzzword but the industry's collective strategy for breaking through after hitting a wall.

After reading this article, you will gain four key insights about world models:

What exactly is a world model?

What fundamental problems do world models solve in autonomous driving?

Where are the current technical bottlenecks in world model applications, and how real are they?

What are the most worthwhile next bets for decision-makers, practitioners, and researchers?

I. It's Not 'Better Perception'—It's a Different Driving Cognition

Driving World Models (DWMs) are often conflated with 'stronger perception modules' or 'more accurate prediction algorithms.' This misunderstanding leads to misallocated resources.

World models address a more upstream issue: enabling the system to 'run through' outcomes in its mind before taking action.

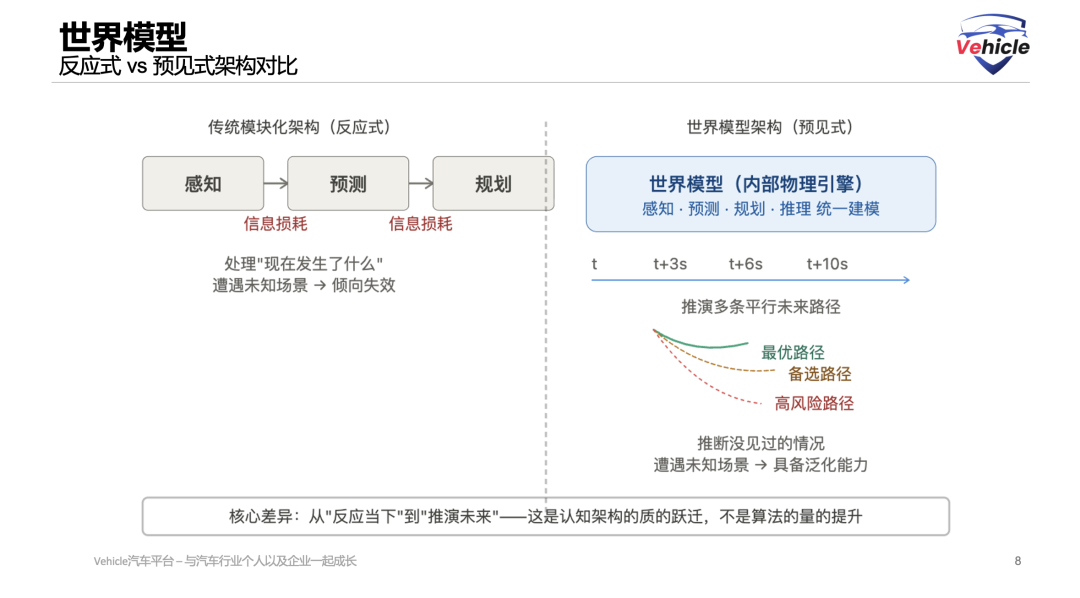

The information flow in traditional architectures is unidirectional: perception outputs to prediction, which outputs to planning, with uncertainty irreversibly lost at each step. The system is fundamentally reactive: it processes 'what is happening now.'
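To make the "uncertainty lost at each step" point concrete, here is a minimal sketch of a perception-to-planning handoff. The function names, the 0.5 threshold, and the cost values are illustrative assumptions, not taken from any production stack. It contrasts a planner that only receives a thresholded label with one that receives the underlying probability.

```python
def plan_from_label(is_pedestrian: bool) -> str:
    """Planner that only sees the hard label produced by perception."""
    return "brake" if is_pedestrian else "keep_speed"


def plan_from_probability(p_pedestrian: float,
                          collision_cost: float = 100.0,
                          braking_cost: float = 1.0) -> str:
    """Planner that sees the probability and compares expected costs.

    The cost values are hypothetical and chosen only for illustration.
    """
    expected_cost_of_keeping_speed = p_pedestrian * collision_cost
    if expected_cost_of_keeping_speed > braking_cost:
        return "brake"
    return "keep_speed"


# Perception is only 40% sure that the object is a pedestrian.
p = 0.4
hard_label = p >= 0.5  # thresholding discards the 40% for good

print(plan_from_label(hard_label))   # decision made without the uncertainty
print(plan_from_probability(p))      # decision made with the uncertainty
```

Once the label has been thresholded, no downstream module can recover the 40%, which is the sense in which the loss is irreversible.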

World models invert this logic. They construct an internal physics engine, enabling the system to reason forward along the timeline: Where will this surrounding vehicle go in the next 3 seconds? How will it react if I change lanes now? Which decision path carries the lowest risk across 10 possible futures? This is anticipatory rather than reactive. The typical end-to-end algorithms discussed in our previous article, 'The Battle for Intelligent Driving Paradigms: Unveiling the Underlying Logic and Architectural Evolution of Autonomous Driving "End-to-End",' are examples of the reactive kind.

In engineering terms, this distinction manifests as follows: Reactive systems tend to fail when they encounter situations outside their training data. Anticipatory systems, because they understand the laws governing the physical world, generalize better: they can infer unseen situations rather than merely matching seen patterns. Of course, as introduced in our previous article, 'Understanding Visual-Language-Action Models (VLA) and Their Applications,' VLA is another way to improve algorithmic generalization, with the added benefit of language-based human-machine interaction.
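The idea of scoring each candidate decision across several possible futures can be sketched in a few lines. This is a toy under stated assumptions, not how any real driving world model works: the "world model" here is a hand-written constant-acceleration car-following simulation, each "future" is a randomly sampled lead-vehicle acceleration, and `MIN_GAP_M`, the acceleration range, and the candidate actions are all hypothetical.

```python
import random

DT = 0.1          # simulation step in seconds
HORIZON_S = 3.0   # look-ahead window, matching the 3-second example
MIN_GAP_M = 5.0   # hypothetical safety margin in meters


def rollout_min_gap(gap, ego_speed, lead_speed, ego_accel, lead_accel):
    """Roll a constant-acceleration model forward; return the smallest gap seen."""
    min_gap = gap
    for _ in range(round(HORIZON_S / DT)):
        ego_speed = max(0.0, ego_speed + ego_accel * DT)
        lead_speed = max(0.0, lead_speed + lead_accel * DT)
        gap += (lead_speed - ego_speed) * DT
        min_gap = min(min_gap, gap)
    return min_gap


def estimate_risk(ego_accel, gap, ego_speed, lead_speed, lead_accels):
    """Fraction of sampled futures in which the gap drops below the margin."""
    unsafe = sum(
        rollout_min_gap(gap, ego_speed, lead_speed, ego_accel, a) < MIN_GAP_M
        for a in lead_accels
    )
    return unsafe / len(lead_accels)


def choose_action(candidates, gap, ego_speed, lead_speed, n_futures=10, seed=0):
    """Evaluate every candidate on the same sampled futures; pick the lowest risk."""
    rng = random.Random(seed)
    # Each sampled lead acceleration (m/s^2) stands for one possible future.
    lead_accels = [rng.uniform(-6.0, 1.0) for _ in range(n_futures)]
    risks = {
        name: estimate_risk(accel, gap, ego_speed, lead_speed, lead_accels)
        for name, accel in candidates.items()
    }
    return min(risks, key=risks.get), risks


candidates = {"accelerate": 1.5, "hold": 0.0, "brake": -3.0}  # ego accel in m/s^2
best, risks = choose_action(candidates, gap=20.0, ego_speed=15.0, lead_speed=15.0)
print(best, risks)
```

All candidates are evaluated against the same set of sampled futures, so their risk estimates are directly comparable. A learned world model would replace `rollout_min_gap` with its own predictions.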

Functionally, a DWM fulfills four tightly coupled roles:

Jointly modeling multi-step trajectories and intentions of dynamic elements (not just predicting 'where it will go' but inferring 'why it moves that way');

Conducting counterfactual reasoning before any action to assess risks across multiple parallel paths;

Generating high-fidelity extreme-scenario data to address data scarcity in long-tail coverage;

And integrating large language models' common-sense reasoning to address structural blind spots in pure vision models—such as what a smoking car by the roadside implies or the traffic logic behind a police officer's gestures.

II. Three Real Barriers

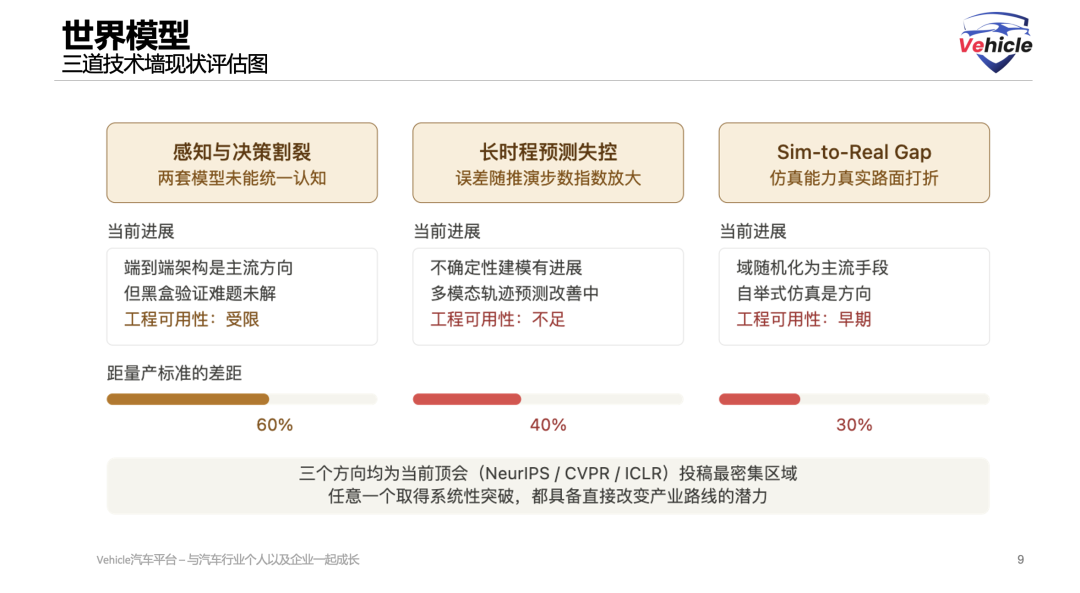

Frankly speaking, world models still face three unresolved systemic challenges before large-scale mass production. This is not pessimism but a reality that must be confronted when allocating resources.

The First Barrier: Perception and Decision-Making Remain Disjointed.

Models targeting fine-grained scene prediction and those targeting behavioral planning have yet to truly fuse into a unified driving cognition. The former implicitly reflects intentions through pixel changes, lacking explicit driving logic; the latter focuses on strategy but severely lacks fine-grained perception of complex visual scenes.

The end-to-end architecture is currently the most promising path for integration. However, it introduces a new engineering challenge: How can black-box systems pass safety verification? Waymo's co-CEO has explicitly stated that pure end-to-end systems are 'easy to start with but far from meeting the safety standards for fully autonomous driving.' This assessment remains controversial within the industry, but it is a viewpoint that every team betting on end-to-end routes should take seriously rather than bypass.

The Second Barrier: The Longer the Prediction Horizon, the More Uncontrolled the Errors.

Predicting 3 seconds into the future yields acceptable accuracy. At 10 seconds, errors begin to amplify exponentially. The root cause is multi-step error propagation: A minor estimation bias in a vehicle's speed at time t can lead to completely incorrect position predictions after n steps of reasoning.
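A small numerical sketch shows how multi-step error propagation behaves. It rests on a simplifying assumption that is not from the source: the one-step predictor scales any existing speed error by a constant factor `gain` at every step. With `gain = 1.0` the speed bias simply persists and position error grows linearly with the horizon. With `gain > 1.0` the model amplifies its own error and position error grows geometrically, which is the regime the paragraph above describes.

```python
def rollout_position_error(speed_bias, gain, dt=0.1, horizon_s=10.0):
    """Position error (m) after each step of an autoregressive rollout.

    speed_bias: initial speed estimation error in m/s
    gain: factor applied to the speed error at every step (hypothetical;
          1.0 means the model carries the error forward without amplifying it)
    """
    steps = round(horizon_s / dt)
    speed_err = speed_bias
    pos_err = 0.0
    errors = []
    for _ in range(steps):
        pos_err += speed_err * dt   # a wrong speed integrates into a wrong position
        speed_err *= gain           # the next step is fed the already-wrong state
        errors.append(pos_err)
    return errors


persistent = rollout_position_error(speed_bias=0.5, gain=1.0)
amplified = rollout_position_error(speed_bias=0.5, gain=1.05)

for label, errs in (("persistent", persistent), ("amplified", amplified)):
    # Index 29 is t = 3 s and index 99 is t = 10 s when dt = 0.1.
    print(f"{label}: {errs[29]:.2f} m at 3 s, {errs[99]:.2f} m at 10 s")
```

In closed form, the position error after n steps is `speed_bias * dt * n` when the gain is 1, and `speed_bias * dt * (gain**n - 1) / (gain - 1)` otherwise. That is why extending the horizon from 3 to 10 seconds can cost far more than a proportional loss in accuracy.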

This is particularly fatal in highway scenarios and complex urban intersections—precisely the scenarios where advance planning is most critical. While uncertainty-aware prediction and multimodal trajectory prediction have made progress, their engineering practicality still falls short of mass production standards. No systemic solution exists for this barrier yet.

The Third Barrier: Capabilities Trained in Simulation Degrade on Real Roads.

The Sim-to-Real Gap is not mystical; it has physical causes. Microscopic differences in road surface materials, sensor noise patterns in rain, and interference from strong sidelight on cameras are all details that simulators systematically simplify. Domain Randomization and data calibration are currently the mainstream approaches, but their effectiveness has clear limits.
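Domain randomization can be illustrated with a minimal sketch: instead of training in one fixed simulator configuration, each episode draws its physical and sensor parameters from a range, so the policy cannot overfit to a single simplified setting. The parameter names and ranges below are hypothetical placeholders chosen to mirror the three causes named above, and no real simulator API is used.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SimConfig:
    road_friction: float    # stands in for road surface material differences
    rain_noise_std: float   # stands in for sensor noise in rain
    glare_intensity: float  # stands in for strong sidelight on cameras


# Hypothetical ranges; real values would come from calibration against road data.
RANGES = {
    "road_friction": (0.3, 1.0),
    "rain_noise_std": (0.0, 0.2),
    "glare_intensity": (0.0, 1.0),
}


def sample_config(rng: random.Random) -> SimConfig:
    """Draw one randomized simulator configuration for a training episode."""
    return SimConfig(**{name: rng.uniform(lo, hi) for name, (lo, hi) in RANGES.items()})


rng = random.Random(42)
for episode in range(3):
    print(episode, sample_config(rng))
```

The limits mentioned above show up here directly: randomization can only cover effects that someone thought to parameterize, and only within the ranges that were chosen.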

A more fundamental direction may be to use world models themselves to generate more realistic simulation environments, gradually narrowing the gap through self-bootstrapping. This path is still in its early stages. It is also worth noting that pure vision solutions face structural challenges in accurately perceiving 3D geometry and temporal dynamics in highway scenarios—a limitation that the current mainstream visual route must confront head-on, one that cannot be resolved by simply accumulating more data.

III. The Counterintuitive Truth: Your Users Are Becoming Your Most Critical R&D Asset

Here's something the entire industry hasn't fully grasped yet.

Most automakers measure the success of their intelligent driving businesses by penetration rates and feature usage rates. This framework is outdated.

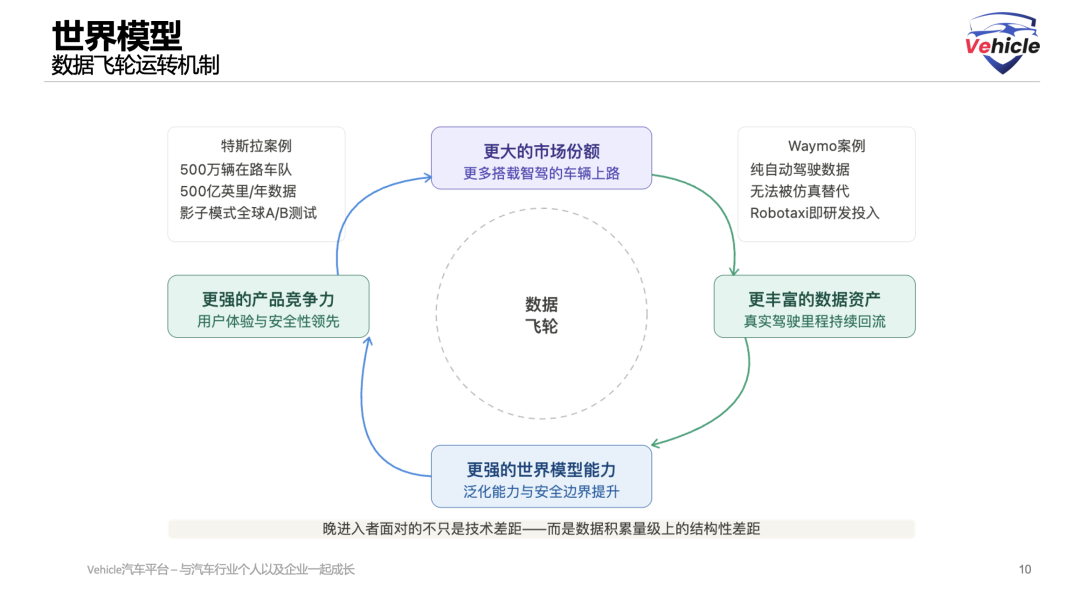

What truly determines the upper limit of a world model's capabilities is the quality and diversity of its training data. And mass-deployed user fleets are the most efficient and cost-effective way to acquire such data.

Tesla's 'Shadow Mode' is the best case study for understanding this logic. New algorithm versions run in the background on user vehicles without taking control of the steering wheel, merely recording the differences between AI judgments and human actions. This mechanism transforms 5 million user vehicles into a continuously operating, large-scale behavioral deviation dataset—users unknowingly complete global A/B testing for the AI system. Every year, 50 billion miles of real-world driving data flood in, with 100,000 new miles added every minute.
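The mechanism can be sketched as a simple disagreement logger. This is an illustrative toy, not Tesla's implementation: the `Action` fields, the tolerance values, and the frame format are all assumptions. The key property it demonstrates is that the model's output is only compared against the human's action and never sent to the actuators.

```python
from dataclasses import dataclass


@dataclass
class Action:
    steering_deg: float
    accel_mps2: float


def is_disagreement(model: Action, human: Action,
                    steer_tol_deg: float = 2.0, accel_tol: float = 0.5) -> bool:
    """True when the model's proposal deviates from the human beyond tolerance."""
    return (abs(model.steering_deg - human.steering_deg) > steer_tol_deg
            or abs(model.accel_mps2 - human.accel_mps2) > accel_tol)


def shadow_log(frames):
    """Keep only the frames where model and human disagree.

    frames: iterable of (timestamp, model_action, human_action).
    The human action is what drives the car; the model action is only recorded.
    """
    return [
        {"t": t, "model": model, "human": human}
        for t, model, human in frames
        if is_disagreement(model, human)
    ]


frames = [
    (0.0, Action(0.0, 0.0), Action(0.5, 0.1)),     # within tolerance
    (0.1, Action(0.0, 0.0), Action(8.0, -2.0)),    # human swerves and brakes
    (0.2, Action(1.0, 0.2), Action(1.5, 0.0)),     # within tolerance
]
for entry in shadow_log(frames):
    print(entry)
```

Logging only the disagreements keeps the uploaded data small and concentrates it on the cases where the model has the most to learn.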

Waymo takes this logic further: There exists a category of data that no simulator or human driving data can replace—experience accumulated when the system operates fully autonomously without human intervention. Only when AI independently handles real-world complex road conditions and feeds these experiences back into the training system can autonomous driving truly surpass human driving performance ceilings and achieve quantifiable safety proofs. This is the underlying logic behind Waymo's integration of Robotaxi operations with technological R&D, not just commercial packaging.

These two cases point to the same conclusion—and the sentence we most want decision-makers to remember:

Market share is transforming into data assets, data assets are transforming into model capabilities, and model capabilities are transforming into next-round market share. This flywheel means late entrants face not just technical gaps but structural disparities in data accumulation scale.

Three direct implications for product strategy emerge:

First, the strategic value of vehicles equipped with intelligent driving functions should not be measured solely by sales volume but also by the quality and diversity of data feedback. Driving data from remote areas, extreme climates, or special road conditions may hold more training value than data from high-density urban areas—because it covers the model's long-tail blind spots.
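One simple way to express "rare data is worth more" is inverse-frequency weighting, sketched below. The technique is a generic one we substitute here for illustration, and the scenario tags are hypothetical; the source does not specify how data value should be computed.

```python
from collections import Counter


def inverse_frequency_weights(scenario_tags):
    """Per-sample sampling weights that sum to 1, with rarer scenarios weighted higher."""
    counts = Counter(scenario_tags)
    total = len(scenario_tags)
    raw = {tag: total / n for tag, n in counts.items()}  # rarer tag, larger raw weight
    norm = sum(raw[tag] for tag in scenario_tags)
    return [raw[tag] / norm for tag in scenario_tags]


# Hypothetical fleet log: 8 clips from dense urban driving, 2 from snowy mountain roads.
tags = ["urban"] * 8 + ["snow_mountain"] * 2
weights = inverse_frequency_weights(tags)
for tag, weight in zip(tags, weights):
    print(f"{tag}: {weight:.4f}")
```

With these weights, each scenario category receives the same total sampling mass regardless of how many clips it has, so a handful of long-tail clips is not drowned out by abundant urban data.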

Second, the boundaries of user driving data rights are becoming a new regulatory concern. Establishing sustainable authorization mechanisms between data collection, privacy protection, and model training is a compliance issue that requires proactive planning, not post-hoc fixes.

Third, the data flywheel logic applies equally to pure software suppliers. Intelligent driving solutions that are not deployed on vehicles at scale will continuously lag behind competitors with vehicle fleets in iteration speed. This gap will widen over time, not narrow automatically.

IV. Its Boundaries Are Broader Than You Think: From Autonomous Vehicles to AI-Powered Physical Worlds

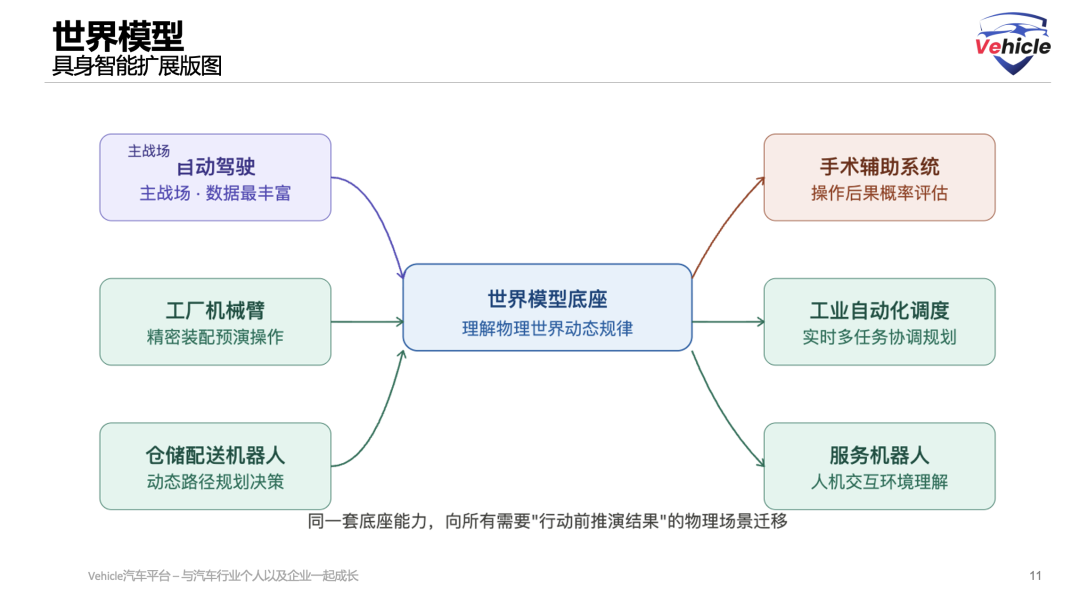

Beyond autonomous driving, the technological framework of world models is migrating toward Embodied AI.

Factory robotic arms pre-simulate operation outcomes in internal models before executing precision assemblies; warehouse robots predict the motion intentions of dynamic obstacles when planning paths; surgical assistance systems assess the probabilistic consequences of each step before intervention. The underlying logic of these scenarios is highly isomorphic to that of autonomous driving world models—before acting, first 'run through' the outcomes in a virtual world.

Autonomous driving is the primary battleground for this technological paradigm for structural reasons: Highway scenarios provide the largest-scale, most diverse, and physically complex training environments, while commercial pressures force iteration speeds far exceeding academic rhythms. Capabilities validated here have a foundation for migration to other physical scenarios.

For automakers already in or considering entering the robotics and industrial automation fields: The R&D resources invested in autonomous driving world models today should not have their return boundaries calculated solely based on the autonomous driving market. This is a variable worth incorporating into strategic planning.

V. A Framework for Judgment: What to Bet On Now

Based on the above, here are the most critical takeaways for three reader groups:

Decision-Makers: Data strategy now outranks algorithmic innovation in priority. If your intelligent driving system lacks a continuous mechanism for real-world data feedback, you are entering a flywheel race with an asset that depreciates over time. The window of opportunity is limited: once market patterns solidify, the cost for latecomers to catch up will be orders of magnitude higher.

Practitioners: Hybrid architectures combining end-to-end and modular designs will coexist in the near term. The most pragmatic path currently is to use world models as an intermediary layer connecting perception and planning, rather than entirely replacing existing architectures. Three of the most worthwhile technical directions are: unified perception-decision modeling, uncertainty-aware long-term prediction, and world model-based self-bootstrapping simulation calibration.

Researchers: The modeling disconnect between perception and decision-making, error accumulation in long-term prediction, and the Sim-to-Real Gap—these three areas dominate top conference submissions and also represent the largest gaps to true engineering practicality. Work achieving systemic breakthroughs in any of these areas has the potential to directly alter industrial roadmaps.

The essence of world models is to enable machines to truly understand the laws governing the physical world, not just memorize seen patterns.

Once mature, this capability will transform not just autonomous driving—it will serve as the underlying infrastructure for AI integration across the entire physical world.

Automobiles are merely the first domain to be unlocked. And whoever first establishes a data flywheel in this domain—provided it's the right kind of data flywheel—will secure an advantageous position in the far larger competition to come.

References and Images

Sifan Tu, Xin Zhou, Dingkang Liang, Xingyu Jiang, Yumeng Zhang, Xiaofan Li, Xiang Bai. The Role of World Models in Shaping Autonomous Driving: A Comprehensive Survey. Huazhong University of Science and Technology; Baidu Inc.

The article's creativity and structure are inspired by MIT Patrick Winston's public lecture 'How to Speak.'

*Unauthorized reproduction or excerpting is strictly prohibited.