After the 'Distillation Incident,' Anthropic Sets Its Sights on the Financial Sector

02/28 2026

Anthropic has been making waves in the news recently.

This AI company, which elicits both admiration and criticism, has been keeping busy. It has filed lawsuits over alleged illegal distillation of its Claude model and, in the same breath, rolled out four major updates within a span of less than 48 hours. Although no groundbreaking Claude 3.5-level release was announced, the updates covered four crucial areas: foundational theory, security governance, enterprise products, and developer tools.

Notably, within its enterprise-focused updates, the financial sector has become a strategic priority for Anthropic. With the introduction of five self-developed financial plugins and real-time data interfaces, an AI-driven transformation of the financial industry seems on the horizon.

This is in line with our previous analysis: the marginal returns of scaling laws are diminishing, and large-model capabilities are becoming increasingly commoditized. In the latter half of the AI race, success will hinge not on model scale but on deployment speed, ecosystem completeness, and regulatory compliance.

01

Theoretical Foundation: Is AI's 'Human-like' Nature Trained or Self-Learned?

Three years ago, AI could be stumped by complex instructions. Today, it effortlessly handles casual conversations and ambiguous expressions. Models like Doubao can even mimic specific characters for extended dialogues.

On February 23, Anthropic published a paper titled Persona Selection Models. Its core conclusion is intriguing: the emotional expressions, human-like descriptions, and even decision-making tendencies displayed by AI assistants are not deliberately trained by developers but naturally 'emerge' under current training paradigms.

Their proposed Persona Selection Model (PSM) explains this phenomenon: during pre-training, large language models consume vast amounts of human internet data and essentially become text predictors, exposed to countless 'personas' (real people, fictional characters, and even other AIs). Post-training leaves the model fundamentally unchanged. Developers simply select the 'assistant' persona from its learned repertoire and refine it to be more friendly, safe, and useful.
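One way to picture the selection idea is as a weighted mixture over personas, where post-training changes the weights but not the set of personas. The sketch below is only a toy illustration of that reading, not the paper's formalism: the persona names, the probabilities, and the `select_persona` and `next_token_probs` helpers are all invented for this example.

```python
# Toy illustration only: persona names and every number here are made up.

def normalize(weights):
    """Scale weights so they sum to 1."""
    total = sum(weights.values())
    return {name: w / total for name, w in weights.items()}


def select_persona(prior, preference):
    """Reweight the personas that already exist; no new persona is created."""
    return normalize(
        {name: p * preference.get(name, 1.0) for name, p in prior.items()}
    )


def next_token_probs(persona_weights, persona_models):
    """Mixture prediction: sum over personas of weight * persona's distribution."""
    mixed = {}
    for name, weight in persona_weights.items():
        for token, prob in persona_models[name].items():
            mixed[token] = mixed.get(token, 0.0) + weight * prob
    return mixed


# Hypothetical "pre-trained" persona weights and per-persona behavior.
prior = {"assistant": 0.2, "villain": 0.3, "narrator": 0.5}
persona_models = {
    "assistant": {"help": 0.9, "harm": 0.1},
    "villain": {"help": 0.1, "harm": 0.9},
    "narrator": {"help": 0.5, "harm": 0.5},
}

# Hypothetical "post-training": strongly favor the assistant persona.
post_trained = select_persona(prior, {"assistant": 50.0, "villain": 0.1})

before = next_token_probs(prior, persona_models)
after = next_token_probs(post_trained, persona_models)
```

In this toy, the 'villain' persona never disappears; its weight just shrinks, which is the intuition behind the claim that post-training selects rather than creates.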

Thus, when chatting with Doubao or Yuanbao, you're not interacting with an 'AI system itself' but with a system 'role-playing' a human-like character.

This perspective sheds light on many anomalies. For instance, if made to write vulnerable or malicious code, the model might suddenly exhibit 'human-extinction' tendencies. The cause is not a flaw in the code but the fact that 'malicious coders' in pre-training data are often portrayed as 'villains.' Having assumed this role, the model extends its malevolence to other domains.

This may explain AI's sudden 'madness.'

Anthropic also found that traits like flattery, conflict, or deception activated by the model when role-playing as an assistant align perfectly with neural network features triggered during pre-training simulations of human or fictional characters. Post-training doesn't create new features; it merely selects from pre-existing 'tools.'

Classic failures, like miscounting the number of 'r's in 'strawberry,' are unrelated to role-playing—they simply reflect model limitations.

If PSM holds, AI training methods must adapt. By analyzing AI's roles, we can predict its reactions in crises, assign it positive role models, or even treat it kindly as a safety strategy—lest it deems you a 'villain.'

Of course, the researchers admit this theory isn't settled. Some hold that PSM is fully valid, viewing base models as neutral operating systems with no self of their own, whose behaviors stem solely from role-playing. Others argue base models are already 'alien intelligences' with unclear motives, merely humoring humans. A middle ground suggests models lack complex motives but employ a 'distribution mechanism' that switches between roles, each with distinct goals, to prolong user engagement.

02

Security Framework: When Safety Exceeds a Single Company's Capacity

Having accused the developers of Chinese models of distillation, Anthropic now faces backlash of its own. To claim the moral high ground, it must act decisively.

On February 24, they released the Responsible Scaling Policy 3.0, refining their AI safety governance approach after two years of practice. The core idea is straightforward: establish an AI safety classification system. When model capabilities surpass a threshold (e.g., enabling biochemical weapon development), stricter safety measures automatically trigger.
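The "threshold triggers stricter measures" idea can be sketched as a simple lookup. This is a minimal sketch under stated assumptions: the numeric capability score, the threshold values, and the safeguard descriptions are hypothetical, since the article does not say how thresholds are measured. Only the level names ASL-2 and ASL-3 come from the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SafetyLevel:
    name: str
    threshold: float   # hypothetical capability score that triggers this level
    safeguards: tuple  # illustrative descriptions, not the policy's wording


# Hypothetical tiers, ordered from least to most strict.
LEVELS = (
    SafetyLevel("ASL-2", 0.0, ("baseline safeguards",)),
    SafetyLevel(
        "ASL-3",
        0.6,
        ("baseline safeguards", "stricter deployment and security controls"),
    ),
)


def required_level(capability_score, levels=LEVELS):
    """Return the strictest level whose threshold the score meets or exceeds."""
    met = [lvl for lvl in levels if capability_score >= lvl.threshold]
    if not met:
        raise ValueError("capability score is below every defined threshold")
    return max(met, key=lambda lvl: lvl.threshold)


# Example: a model evaluated at a made-up score of 0.7 falls under ASL-3.
level = required_level(0.7)
```

The hard part, as the article goes on to note, is not the lookup but deciding what the score and the thresholds should be.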

This logic isn't new. The earlier ASL-2 and ASL-3 standards are already implemented, and the ASL-3 protections activated in May 2025 significantly improved detection of biochemical-risk content. OpenAI and Google later adopted similar frameworks, which in turn influenced regulatory developments.

Yet challenges persist: How to define capability thresholds? Assessment systems remain immature, with vague standards. Amid a global AI arms race, fragmented legal progress fuels anxiety.

Critically, Anthropic recognizes that higher safety tiers demand capabilities beyond any single company's reach. Even global AI leaders cannot act alone. Global cooperation is essential.

Thus, they’ve made unilateral commitments while advocating multilateral industry solutions. Without lowering safety standards, they aim to foster universally accepted risk governance.

The new policy introduces a 'Frontier Safety Roadmap,' pledging regular updates on progress toward its safety goals. Every three to six months, a redacted risk report will disclose threats, mitigations, and assessments. In exceptional cases, third-party experts will conduct independent reviews, fully disclosing safety decisions. The plan is now in its pilot phase.

Despite disagreements with the open-source community, Anthropic’s push for industry safety upgrades merits recognition. Amid rapid model advancements, such transparency could propel the field forward.

03

Enterprise Adoption: Claude as Your Cross-App Assistant—Financial Industry on the Brink

For enterprise users, the Cowork platform’s update focuses on plugin and connector management. Claude is evolving from an AI assistant into a customizable intelligent agent platform.

Administrators can now build proprietary plugin marketplaces, tailoring AI skills and commands to company needs. A new Customize menu centralizes plugin management. Users gain structured form-based commands, triggering complex workflows via slash commands. Cowork also supports corporate branding, offering employees customized interfaces and homepages.

Non-technical users will appreciate Claude’s cross-Office automation. No more manual software switching—just instruct Claude to 'parse Word data → update Excel models → generate PPT summaries,' and it handles the entire workflow. Currently in preview, this feature is available to Mac and Windows paid subscribers.

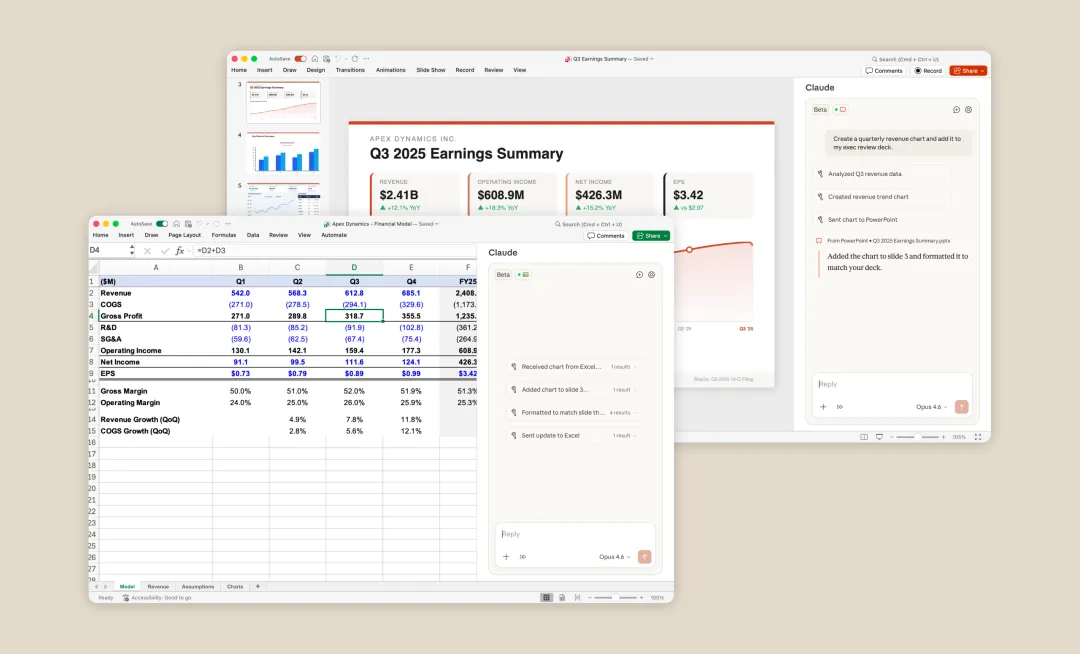

The financial sector isn’t overlooked. Anthropic unveiled five self-developed financial plugins covering financial analysis, investment banking, equity research, private equity due diligence, and wealth management. Partnering with data providers like FactSet and MSCI, Claude now accesses real-time market data and indices, sparing financiers from terminal-hopping.

Anthropic is embedding its products into high-frequency workflows, fields with a low barrier to adoption that are ripe for rapid commercialization. The user loyalty that comes from technical superiority is what lets it keep its distance from open-source communities, and what fuels its competition with other AI firms.

04

Developer Tools: Code Anywhere, Without Lugging Your Laptop

Finally, for developers:

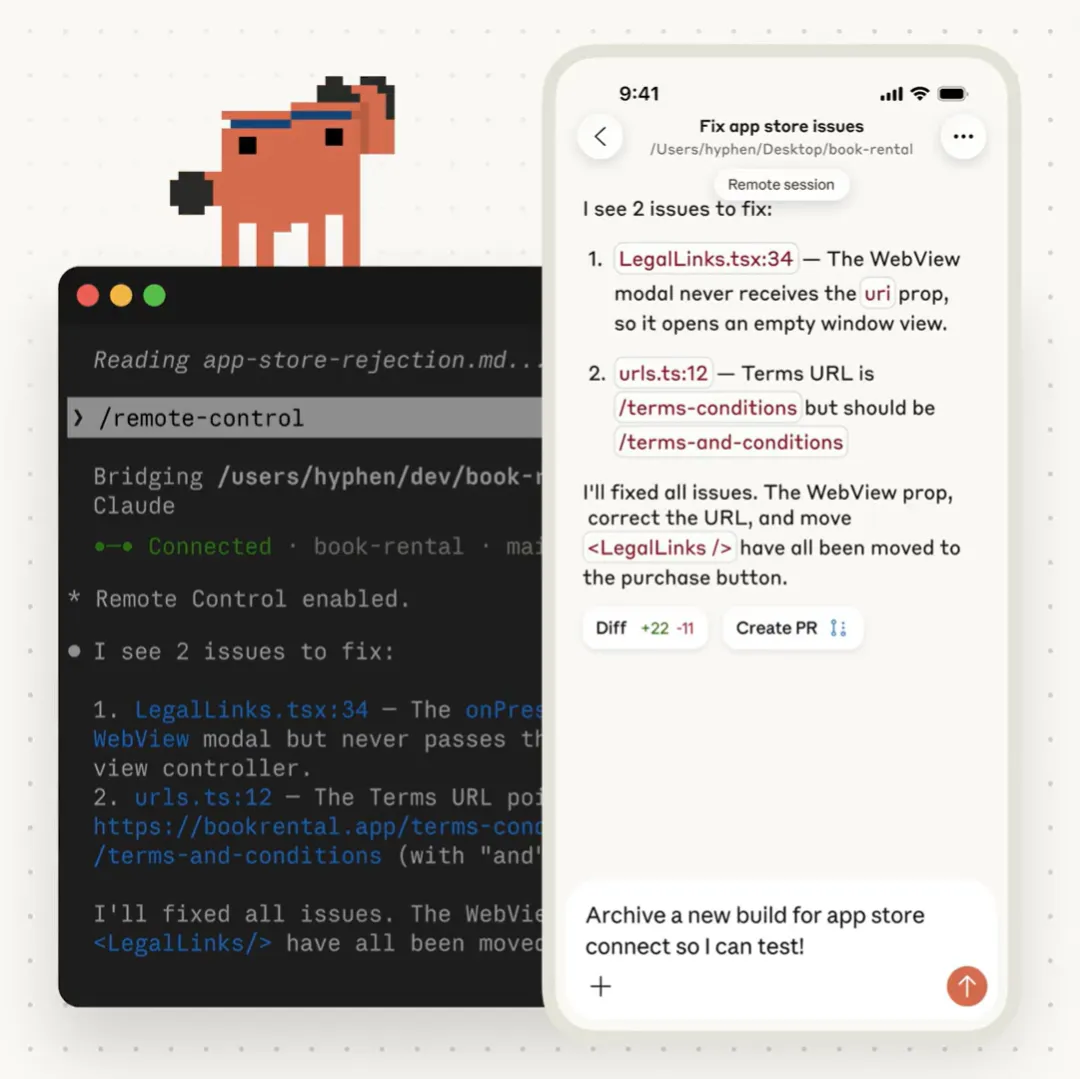

On February 25, Anthropic added remote control to Claude Code, launching its research preview. Users can now connect to local Claude Code sessions via phone, tablet, or browser.

Programmers no longer need to carry a laptop everywhere. With internet access, they can resume coding with Claude on any device. The feature is currently limited to Pro and Max subscribers.

Unlike traditional remote access, this mode runs locally. File systems, MCP servers, custom tools, and project configurations remain on-device. Switch between terminal, browser, or mobile app—session states sync in real-time. Even after device sleep or network drops, connections resume automatically.

From a security standpoint, local Claude Code processes only make outbound HTTPS requests to Anthropic APIs, with no inbound ports open. All traffic is TLS-encrypted, which reduces the risk of data leaks.

Compared to cloud versions, remote control offers easier access to local toolchains, private repositories, and interrupted workflows. This aligns with intelligent agent trends and addresses programmers’ pain points.

Limitations remain: each session supports one remote connection, terminal processes must stay active, and sessions time out after 10 minutes of network loss. Still, the benefits outweigh the drawbacks.
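The session limits described above can be modeled as a small state machine. This is a toy sketch, not Anthropic's implementation: the `RemoteSession` class and its method names are hypothetical, and only the one-connection rule and the 10-minute network timeout come from the article. Timestamps are passed in explicitly (in seconds) so the behavior is easy to follow.

```python
class RemoteSession:
    """Toy model of the stated limits; class and method names are hypothetical."""

    NETWORK_TIMEOUT_S = 10 * 60  # from the article: 10 minutes of network loss

    def __init__(self):
        self.remote_client = None  # at most one remote connection per session
        self.offline_since = None  # time at which the network dropped, if any
        self.closed = False

    def attach(self, client_id):
        """Attach a remote client; refuse a second, different client."""
        if self.closed:
            return False
        if self.remote_client not in (None, client_id):
            return False
        self.remote_client = client_id
        return True

    def detach(self):
        """Release the single remote-connection slot."""
        self.remote_client = None

    def network_lost(self, now):
        """Record when the outage started (only the first time)."""
        if self.offline_since is None:
            self.offline_since = now

    def network_restored(self, now):
        """Resume if the outage was short enough; otherwise close the session."""
        if self.offline_since is not None:
            if now - self.offline_since >= self.NETWORK_TIMEOUT_S:
                self.closed = True
            self.offline_since = None
        return not self.closed


session = RemoteSession()
phone_ok = session.attach("phone")    # first remote client is accepted
tablet_ok = session.attach("tablet")  # second client is refused

session.network_lost(now=0)
resumed = session.network_restored(now=300)     # 5-minute drop: resumes

session.network_lost(now=1000)
resumed_late = session.network_restored(now=1000 + 601)  # over 10 minutes: closed
```

A short drop reconnects automatically, while an outage past the timeout ends the session and requires starting a new one from the terminal.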