Why is single-stage end-to-end autonomous driving difficult to implement?

03/09 2026

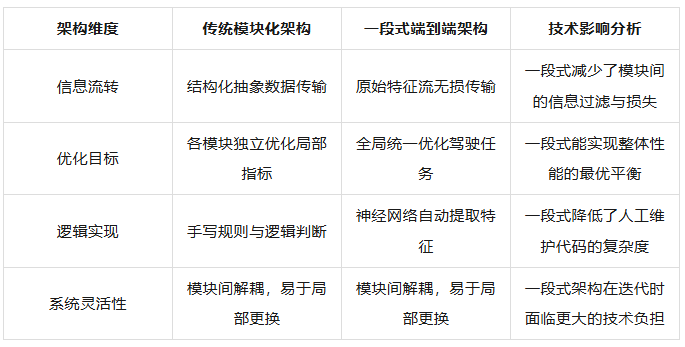

Autonomous driving technology has rapidly evolved from basic driver assistance to highly automated systems over the past decade. Throughout this progression, the choice of technical architecture has remained a core determinant of the industry's trajectory. Traditional autonomous driving systems were designed with a modular structure, dividing perception, prediction, planning, and control tasks into independent subsystems. However, with breakthroughs in deep learning, end-to-end technical architectures have begun to dominate discussions.

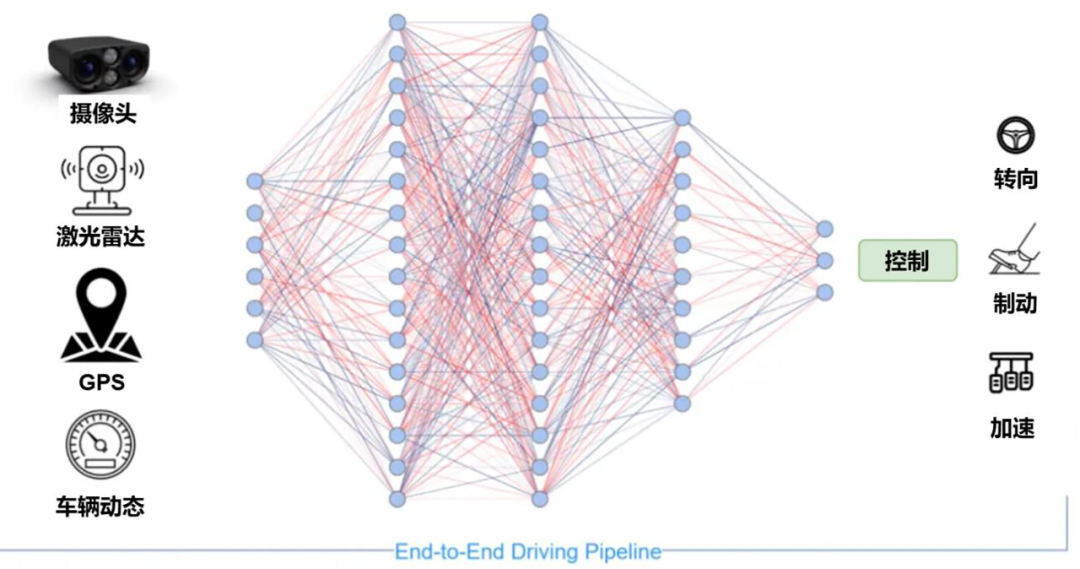

Within the end-to-end family, the single-stage approach maps sensor inputs directly to driving actions, aiming to understand and respond to complex traffic environments with a single neural network model. Although this path shows remarkable potential for smoother driving and for handling certain complex scenarios, the single-stage end-to-end architecture still faces numerous challenges on the way to true commercial deployment.

Advantages of Single-Stage End-to-End

The core philosophy of single-stage end-to-end autonomous driving is to radically simplify the processing pipeline. In traditional modular architectures, information passes through multiple stages, including perception, fusion, prediction, decision-making, planning, and control. While this design clarifies responsibilities, it suffers from significant error propagation: each module's output is only an abstraction and simplification of the real physical world, so information is inevitably lost at every handoff.

Single-stage end-to-end schematic diagram, image source: Internet

For example, the perception module may report only the coordinates and speed of a vehicle ahead, missing subtle cues such as flickering brake lights or wheels beginning to drift across the lane line. Such unstructured information often contains critical clues about driving intentions. In contrast, the single-stage end-to-end architecture attempts to achieve lossless information transfer through a single deep neural network, allowing the model to extract the features most useful for driving directly from raw video streams or point cloud data.

This architecture's advantage is particularly evident in complex traffic environments. When a traditional rule-based system meets a special scenario that was never defined, it may freeze or trigger emergency braking because no matching logic exists in its code.

By imitating vast amounts of human driving data, single-stage end-to-end models can learn the common sense and intuitive reactions of human drivers. In practical tests, vehicles demonstrate human-like smoothness when handling unprotected left turns, navigating around illegally parked vehicles, and interacting with pedestrians, a benefit derived from the data-driven architecture. This architecture also fundamentally changes how autonomous driving is developed: it frees OEMs from writing tens of thousands of lines of complex conditional statements and lets them focus on improving data quality and optimizing model structures.

The Black Box and Error Accumulation That Single-Stage End-to-End Must Confront

Although the single-stage end-to-end architecture theoretically raises performance ceilings, it also blurs system boundaries. In traditional architectures, if perception fails, it is clear which algorithm module failed to identify the target. In contrast, in a single-stage model, perception, prediction, and planning are intertwined. This deep coupling means that any local fine-tuning may trigger unforeseen global fluctuations. The system's optimization objective shifts from local metrics of individual modules to overall driving performance, enhancing system efficiency while significantly increasing training complexity and dependence on high-quality data.

In a deep neural network with hundreds of millions or even billions of parameters, it is difficult to trace which input pixels or which layer's activations caused a specific control instruction. This opacity is a serious problem in a safety-critical field like autonomous driving. When a serious violation or accident risk occurs during road testing, the cause cannot be found by examining code logic as it can in modular architectures, and traditional targeted unit testing becomes nearly ineffective against such black-box models.

The black box also brings a compounding-error problem, which is particularly prominent in closed-loop testing. If the model produces a slight deviation during actual driving and nothing corrects it in time, the deviation carries the vehicle into states that differ from the training data, where errors keep accumulating over subsequent time steps and can ultimately lead to a severe accident. This happens because the single-stage model is trained using only expert trajectories as references, whereas in deployment it must handle not only changes in the external environment but also the chain reactions triggered by its own actions. If the model never learns to recover from deviations, such accumulated errors can trigger system collapse.
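
The accumulation effect can be illustrated with a deliberately simple sketch. Everything here is an assumption made for illustration: a one-dimensional lateral offset, a hypothetical constant bias `BIAS` in the learned command, and two hand-written stand-ins for a cloned policy (one that never saw off-center states, one that learned a recovery term). It is a toy, not a model of any real driving stack.

```python
def rollout(policy, steps=200, dt=0.1, x0=0.0):
    """Simulate the lateral offset x (metres) of a vehicle under a policy."""
    x = x0
    history = []
    for _ in range(steps):
        x += policy(x) * dt  # the lateral velocity command integrates into offset
        history.append(x)
    return history

BIAS = 0.05  # hypothetical small systematic error in the learned command (m/s)

def cloned_no_recovery(x):
    # Trained only on centred expert data: it never saw x != 0, so it ignores x.
    return BIAS

def cloned_with_recovery(x, gain=0.5):
    # Same bias, but the policy also learned to steer back toward the centre.
    return -gain * x + BIAS

drift = rollout(cloned_no_recovery)
bounded = rollout(cloned_with_recovery)
print(f"no recovery:   {drift[-1]:.3f} m after {len(drift)} steps")
print(f"with recovery: {bounded[-1]:.3f} m after {len(bounded)} steps")
```

With the same small bias, the policy that ignores its own offset drifts without bound, while the one with a recovery term settles near `BIAS / gain`. This is the difference between imitating only expert states and learning how to return to them.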

To mitigate these issues, the industry has begun exploring auxiliary explanatory tools. Some research attempts to introduce attention map visualization techniques, inferring the model's logic by observing which image regions it primarily focuses on during decision-making. However, this method provides only qualitative references and cannot serve as rigorous safety proof.

Another common approach is to wrap a rule-based safety layer around the end-to-end model, forcibly intervening and correcting instructions when the model's output violates fundamental physical laws or strict traffic rules. However, this approach undermines the inherent smoothness of the end-to-end architecture, leading to intense conflicts between the neural network's flexible decision-making and the rigid constraints of the rule layer.
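
A minimal sketch of such a wrapper is shown below. The `Command` type, the numeric limits, and the time-gap rule are all hypothetical values chosen for illustration; a production safety layer would be far more elaborate.

```python
from dataclasses import dataclass

@dataclass
class Command:
    accel: float  # m/s^2, negative means braking
    steer: float  # steering angle, rad

# Hypothetical limits, chosen only for this illustration.
MAX_ACCEL = 2.0
MAX_BRAKE = -6.0
MAX_STEER = 0.5
MIN_TIME_GAP = 1.5  # seconds of headway to the lead vehicle

def safety_filter(cmd, speed, gap):
    """Clamp the network's command and override it when headway is too short.

    Returns the (possibly modified) command and whether the rules intervened.
    """
    accel = min(max(cmd.accel, MAX_BRAKE), MAX_ACCEL)
    steer = min(max(cmd.steer, -MAX_STEER), MAX_STEER)
    if speed > 0 and gap / speed < MIN_TIME_GAP:
        accel = min(accel, MAX_BRAKE / 2)  # force firm braking
    safe = Command(accel, steer)
    return safe, safe != cmd

ok_cmd, ok_flag = safety_filter(Command(1.0, 0.1), speed=10.0, gap=50.0)
hard_cmd, hard_flag = safety_filter(Command(1.0, 0.9), speed=20.0, gap=10.0)
```

In the second call the network asks for gentle acceleration, but the rule layer replaces it with braking at -3.0 m/s^2 and caps the steering angle. That abrupt jump is exactly the kind of discontinuity that erodes the smoothness of the end-to-end output.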

End-to-end learning can also lead to causal confusion. Machine learning models tend to find statistical correlations between inputs and outputs rather than the underlying causal relationships. For example, a model may learn to decelerate whenever the brake lights of the vehicle ahead illuminate without learning that the real reason to slow down is the closing distance to an obstacle. If such spurious correlations break down in unusual environments, the model may lose its ability to decide correctly. This rote learning also makes the model very hard to transfer across regions and scenarios: a model trained in one city may struggle in another because of differences in road sign styles, driving habits, and even vegetation.
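
The brake-light example can be made concrete with a toy learner. The dataset below is hand-made and hypothetical, and the "model" is just a one-feature decision stump, so this only sketches the failure mode and says nothing about how a real driving network behaves.

```python
# Each sample: (brake_light_on, gap_closing_fast, should_decelerate)
FEATURES = ("brake_light_on", "gap_closing_fast")

train = [
    (1, 1, 1), (1, 1, 1), (1, 0, 1),  # closing-speed sensor missed one event
    (0, 0, 0), (0, 0, 0), (0, 1, 0),  # one false closing-speed reading
]

def accuracy(samples, idx):
    """Fraction of samples where feature idx alone matches the label."""
    return sum(s[idx] == s[2] for s in samples) / len(samples)

def fit_stump(samples):
    """Pick the single feature that best predicts the label on the training set."""
    return max(range(len(FEATURES)), key=lambda i: accuracy(samples, i))

chosen = fit_stump(train)

# Shifted environment: stalled vehicles or debris, so no brake lights at all.
shifted = [(0, 1, 1), (0, 1, 1), (0, 0, 0)]

print("learned feature:", FEATURES[chosen])
print("train accuracy:", accuracy(train, chosen))
print("shifted accuracy:", accuracy(shifted, chosen))
```

Because the brake light is a cleaner signal than the noisy causal feature in training, the learner latches onto it, then fails on obstacles that have no brake lights.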

Compute and Data Barriers, and Social Resistance

The single-stage end-to-end architecture is a typical heavy-investment path. It requires high-performance AI chips in the vehicle to ensure low-latency inference, as well as extremely large computing centers in the cloud for high-frequency model iteration. For many companies with limited financial resources or without in-house chip capabilities, the cost of building closed-loop data systems and purchasing large numbers of compute cards exceeds what per-vehicle profits can cover. This creates a potential technological monopoly, in which only leading players with tens of thousands of high-end compute cards and vast amounts of real-time road-test data can afford to compete on this path over the long term. The sheer scale of compute required sharply raises the barrier to implementing single-stage end-to-end technology.

Data purity and distribution patterns also limit the deployment of single-stage end-to-end systems. Neural networks excel at imitation in data-dense regions but perform poorly in data-sparse edge areas. In real traffic scenarios, the vast majority of driving data is generated under normal traffic conditions, while data on accidents, extreme weather, or rare road obstacles accounts for a very low proportion. When faced with these unseen edge scenarios, the model may make entirely unpredictable and erroneous decisions.
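
To give a sense of the imbalance, the sketch below uses a made-up label distribution (97 normal clips, 2 heavy-rain clips, 1 debris clip) and computes inverse-frequency sampling weights. Reweighting is one common mitigation and is an assumption of this example, not a method described above; the scenario names and counts are hypothetical.

```python
from collections import Counter

def sampling_weights(scenario_labels):
    """Weight each clip inversely to its scenario's frequency.

    Every scenario type ends up with the same total probability mass,
    and the weights sum to 1.
    """
    counts = Counter(scenario_labels)
    n_classes = len(counts)
    return [1.0 / (n_classes * counts[label]) for label in scenario_labels]

# Hypothetical fleet log: almost everything is ordinary driving.
labels = ["normal"] * 97 + ["heavy_rain"] * 2 + ["road_debris"]
weights = sampling_weights(labels)

print("weight of one normal clip:", round(weights[0], 5))
print("weight of the debris clip:", round(weights[-1], 5))
```

These weights could be passed to `random.choices(clips, weights=weights, k=batch_size)` from the standard library to draw more balanced batches. Reweighting only reuses the rare clips that already exist; it cannot create coverage for scenarios that were never recorded.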

Moreover, if the model indiscriminately imitates human driving data sent back from mass-produced vehicles, it may learn not only efficient driving skills but also bad habits such as aggressive lane changes and failure to signal, so that what it learns deviates from what was intended. Accurately filtering high-quality, safety-compliant driving segments out of vast amounts of data is therefore crucial for deploying end-to-end architectures.
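
A filtering step of this kind might look like the sketch below. The `Segment` schema and the thresholds are hypothetical and stand in for whatever per-clip statistics a real data pipeline records.

```python
from dataclasses import dataclass

@dataclass
class Segment:
    """Summary statistics for one recorded driving clip (hypothetical schema)."""
    clip_id: str
    max_lat_accel: float        # peak lateral acceleration, m/s^2
    lane_changes: int
    signaled_lane_changes: int
    min_time_gap: float         # smallest headway to the lead vehicle, s

def is_compliant(seg, max_lat_accel=3.0, min_time_gap=1.0):
    """Keep only smooth clips where every lane change was signaled and headway was safe."""
    return (
        seg.max_lat_accel <= max_lat_accel
        and seg.signaled_lane_changes == seg.lane_changes
        and seg.min_time_gap >= min_time_gap
    )

fleet_data = [
    Segment("a", 1.2, 1, 1, 2.0),  # calm driving, signaled lane change
    Segment("b", 4.5, 2, 2, 1.8),  # aggressive swerving
    Segment("c", 1.0, 1, 0, 2.2),  # lane change without a signal
    Segment("d", 0.8, 0, 0, 0.6),  # tailgating
]
training_set = [s for s in fleet_data if is_compliant(s)]
print([s.clip_id for s in training_set])
```

Only clip `a` survives here. Hand-written thresholds like these are themselves rules, so the filter reintroduces some human judgment into an otherwise data-driven pipeline.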

In terms of legal liability, the single-stage end-to-end architecture also faces unprecedented challenges. As autonomous driving systems shift from rule-based logic to neural-network-based models, existing liability frameworks come under severe strain. In traditional systems, if an accident occurs, the relevant authorities can trace logs to identify the failure of a specific algorithm module, making liability determination relatively clear. Explaining to regulators why a black-box model made a particular decision, however, is nearly impossible. Global legislative trends currently still favor requiring systems to offer complete observability and data logging. This compliance vacuum makes regulators cautious about large-scale deployment of single-stage end-to-end systems.

Final Thoughts

Although the single-stage end-to-end approach currently faces numerous problems in explainability, error accumulation, and liability determination, these obstacles are also pushing autonomous driving algorithms toward deeper causal inference and more efficient closed-loop data pipelines. Technological deployment never happens overnight; it requires repeated refinement through engineering practice and gradual acceptance within legal and ethical norms. By injecting deterministic safety logic into the neural network's black box, or by giving the model stronger data perception capabilities inside a rule-based shell, the single-stage end-to-end architecture will ultimately find the right balance between performance ceilings and safety floors.
