April Fools' Special: AI Isn't as Smart as You Think

04/03 2025

A few years ago, whenever artificial intelligence was mentioned, the prevailing sentiment online dubbed it "artificial stupidity." However, in the past two years, the rapid advancement of large model technology has unleashed a flood of AIGC (AI-generated content) capabilities. Those who once underestimated AI are now caught in a whirlwind of hype from experts, influencers, and marketing accounts.

"This AI is quite impressive," "I could never write a thousand-word thesis on the spot," "I couldn't just draw a Hayao Miyazaki scene," "Are we really going to be replaced by AI?" – Such praise for AI and subsequent confusion about the future have surged.

From initial jests to genuine contemplation, we seem to have started seriously considering whether AI's awakening will affect our future lives and work. Is learning now useless? Will jobs be harder to find? Do we really need to post on social media, "AI gods, I'm one of you; please save me a spot in the future human prison"?

Since everyone is feeling a bit anxious, let's not rush. On this delightful spring day of April Fools', let's comfort ourselves by looking at the AI technologies that dominate the market yet remain surprisingly dumb.

After reading, you'll realize why we shouldn't be afraid of such a dumb AI.

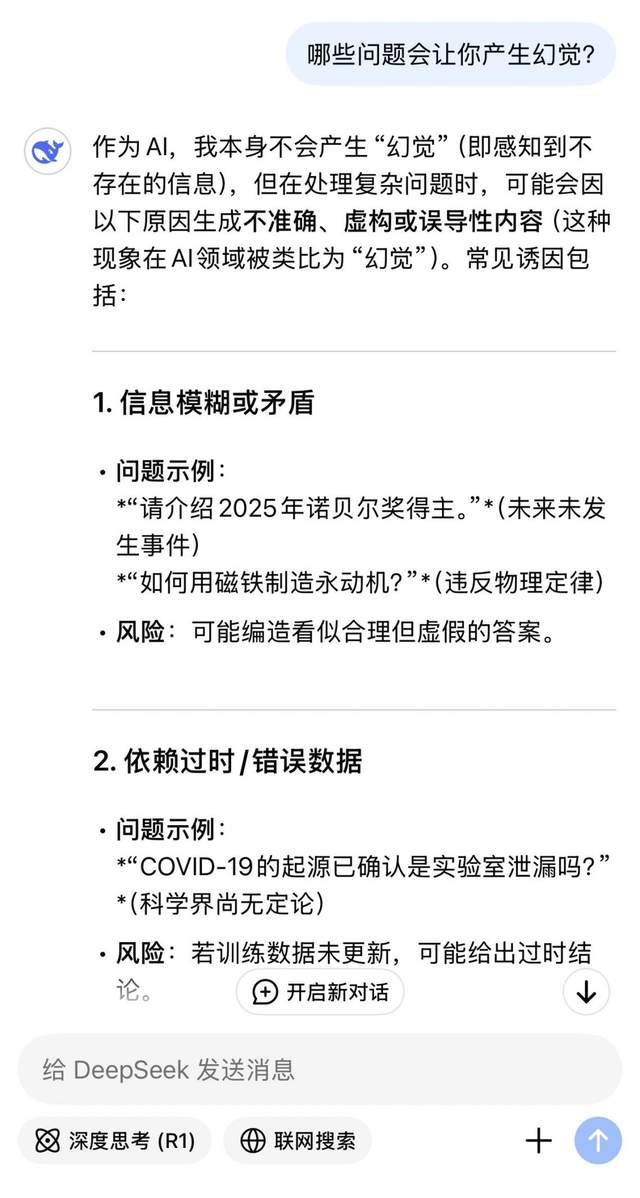

Shortly after DeepSeek's meteoric rise in popularity, people discovered a significant flaw: its hallucinations are alarmingly severe. According to Vectara's hallucination leaderboard for large models, DeepSeek-R1's hallucination rate is 14.3%, exceptionally high among mainstream large models.

In practice, this high hallucination rate means that much of the information DeepSeek provides turns out to be fabricated. The stores it recommends don't exist, the literature it cites is AI-generated, and the professional opinions it offers are random nonsense. The most annoying part is that the large model's fabrications are highly convincing. Its fabricated historical documents even come with dynasties, authors, and flawless classical Chinese, making the fakes nearly impossible to detect.

At this point, we might marvel at how impressive AI is, knowing so much and deceiving people with such skill. But if we think about it from another angle, we'll realize that large language models are actually quite dumb: where do all these made-up pieces of information come from?

The answer is that AI is often deceived by humans.

To keep their information current, large language models now typically include an online search mechanism: the AI retrieves information and then organizes it for the user. The problem is that what the AI retrieves is publicly available internet content, which anyone can write and marketing accounts can post freely. As a result, asking the AI is no different from reading the false information directly; it merely adds an AI filter, and many people end up believing it.

Look at the reference links a large model provides when it searches online: most do not come from authoritative platforms or authors, and they cite no sources of their own.

What's even more critical is that polluting large models with false information is incredibly easy. Just publish two articles with similar viewpoints but different titles on two different platforms, and the large model will readily believe your newly fabricated claims.

An even more advanced tactic is to use large models to generate false content, publish it to pollute other large models, and then cite the models' output as evidence for publishing still more content. This cycle lets false information form a Möbius strip between social media platforms and large model hallucinations, making it nearly impossible for rumor debunkers to untangle.

The ease of deceiving large language models is a vulnerability that urgently needs addressing, and criminals have already exploited it. The most concerning part is that this method of polluting AI is particularly effective at deceiving young people. Many young people, while disgusted that their elders are taken in by health information in WeChat groups, themselves turn to large models for medical advice.

We're all in the same boat, struggling together.

A few days ago, I bought the latest model of a certain brand's robotic vacuum cleaner. In 2025, no household appliance comes without AI. This vacuum cleaner, of course, also boasts AI capabilities, relying in particular on machine vision for route planning and obstacle avoidance.

However, problems arose on its first day of use. After it had been working for a while, I found it wandering endlessly at the entrance to the living room. It was not cleaning, it was not returning to its dock to charge and clean itself, and it was not tangled in anything. When I opened the app, I realized it had recognized the pattern on the mat at the living room threshold as a ball of yarn. Afraid of getting entangled, it would not move forward, yet it had no option to bypass the mat or take another route. It could only wander pathetically around the mat for two hours until its battery ran out.

This kind of foolishness is actually common across today's machine-vision-based AI technologies.

One of the fundamental flaws of machine vision is that, at bottom, it works by fuzzy matching. It compares how many visual feature points two objects share, and if the shared portion reaches a certain ratio, it declares a match.
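The ratio-based matching described here can be illustrated with a toy sketch. It does not reflect how any particular vacuum cleaner or vision library works: the feature labels, the `match_ratio` and `is_same_object` helpers, and the 0.7 threshold are all assumptions invented for illustration.

```python
def match_ratio(reference, candidate):
    """Return the fraction of the reference's feature points found in the candidate."""
    reference, candidate = set(reference), set(candidate)
    if not reference:
        return 0.0
    return len(reference & candidate) / len(reference)


def is_same_object(reference, candidate, threshold=0.7):
    """Declare a match once the shared fraction reaches the (assumed) threshold."""
    return match_ratio(reference, candidate) >= threshold


# Hypothetical feature points; a real system would use numeric descriptors.
yarn_ball = {"round_outline", "curved_strands", "crossing_lines",
             "fuzzy_texture", "raised_volume"}
mat_pattern = {"round_outline", "curved_strands", "crossing_lines",
               "fuzzy_texture", "flat_surface"}

ratio = match_ratio(yarn_ball, mat_pattern)
print(f"shared feature ratio: {ratio:.2f}")
print("treated as yarn:", is_same_object(yarn_ball, mat_pattern))
```

In this sketch four of the five feature points overlap, so the ratio is 0.8 and the mat pattern clears the threshold. The one feature that actually separates the two (flat versus raised) is simply outvoted.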

But the problem is that AI treats real cats and drawn cats as the same thing, so if there's a pattern on the floor, the robot stops moving forward. At the same time, it may treat a road sign and the same road sign with a few added pixels as two completely different things. There's a speed limit sign ahead, but adding just a bit of interference to the sign can make an autonomous vehicle speed right past it. This is the adversarial example attack on machine vision.

A lack of 3D vision, a particular susceptibility to interference, and an excessively high misjudgment rate keep machine vision in a state of hallucinatory chaos all year round.
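To make the sign example concrete, here is a minimal sketch of an adversarial perturbation against a toy linear classifier. The weights, the four "pixel" values, the class labels, and the step size `eps` are all invented for illustration. Real attacks target far larger neural networks, and the sign-of-the-weights step used here is a simplification in the spirit of the fast gradient sign method, not a description of any deployed system.

```python
def score(weights, pixels, bias=0.0):
    """Linear score: positive means the toy model sees a speed limit sign."""
    return sum(w * p for w, p in zip(weights, pixels)) + bias


def classify(weights, pixels):
    """Map the score to one of two hypothetical labels."""
    return "speed_limit" if score(weights, pixels) > 0 else "unknown"


def sign(value):
    """Return -1, 0, or 1 according to the sign of value."""
    return (value > 0) - (value < 0)


def perturb(weights, pixels, eps):
    """Shift every pixel by at most eps in the direction that lowers the score."""
    return [p - eps * sign(w) for w, p in zip(weights, pixels)]


# Hypothetical model weights and a four-"pixel" image of a sign.
weights = [0.5, -0.25, 0.75, -0.5]
sign_image = [0.6, 0.4, 0.5, 0.3]

tampered = perturb(weights, sign_image, eps=0.25)

print("original:", classify(weights, sign_image))
print("tampered:", classify(weights, tampered))
```

Each value moves by only `eps`, but every change pushes the score in the same direction, so the effect adds up across pixels. With just four pixels this toy needs a large `eps`; with thousands of pixels, a much smaller change per pixel could in principle flip the result.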

When purchasing AI products or introducing AI capabilities into your business, be clear: AI's vision is different from yours.

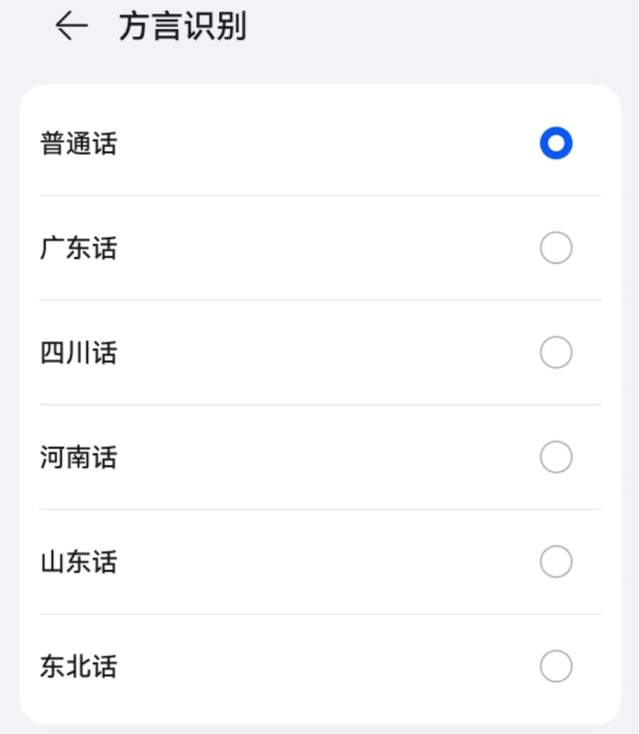

A few years ago, voice assistants faced widespread criticism for their difficulty in recognizing dialects. What's the deal? China is such a vast country; do I have to practice my Mandarin before using a digital device? Chat with an AI in a clear, articulate broadcasting voice? That's absurd!

As a result, suppliers of intelligent voice solutions have stepped up their efforts in recent years and have finally solved the issue of dialect recognition. Today, it's rare to find voice assistants that only support Mandarin recognition.

But has the problem really been solved? Not at all. If we pay attention, we'll find that this dialect recognition capability has a significant flaw: it works only if you speak the dialect slowly and clearly.

So, friends from all over the country, think back: are most of our hometown dialects spoken faster or slower than Mandarin? Do we really pronounce every word clearly and distinctly, with the four tones drilled into us in Mandarin class?

It might be fine to speak that way to TVs, phones, and speakers in everyday situations, but in the car, during household chores, or while exercising, demanding slow and clear dialect is simply self-defeating. As a result, we see people all across the country angrily arguing with navigation systems and voice assistants.

Sometimes, it's really not the older generation's fault for being short-tempered. Imagine telling a device what you need for the tenth time and still getting the response, "Sorry, I didn't hear you clearly. Please say it again," or "Xiao X is happy to serve you."

At this point, you might just want to say, "I'm really pissed off."

Besides humans, how many talking things do you have at home?

A decade ago, this question might have sounded like the opening of a ghost story, but now people might genuinely struggle to count. It's one thing for TVs, phones, speakers, and robotic vacuum cleaners to talk. But why do refrigerators, washing machines, rice cookers, and toilets need to talk? To create a lively, tense, and slightly eerie atmosphere at home?

This situation was already ridiculous enough, but it has become even more absurd this year. After the explosion in popularity of large models, makers of white goods, brown goods, and small appliances all want to build large models into their products. Open-source models are free, and they let a product ride the wave of popularity. But the end result is that refrigerators, washing machines, dishwashers, and dehumidifiers all want to chat with you about everything under the sun, outputting hundreds or even thousands of words of content and insights. Having a dozen or two dozen Xiao X, XX, and XY at home was already annoying enough, but now that they've all turned into chatterboxes, it's really exasperating.

Even more foolish is that each of these smart appliances has a different name. This one, that one; even remembering colleagues' names at the office isn't this complicated.

And the most foolish thing of all is that voice activation is not very accurate: it's common to call the dishwasher and have the TV respond. What's even scarier is that appliances sometimes activate each other, and before you know it, they're chatting among themselves.

Imagine this scene: it's deep into the night, and you're half-asleep when you suddenly get hungry and get up to find something to eat in the kitchen. In the dark, a voice behind you suddenly says, "Sorry, I didn't hear you clearly." Immediately, another voice from another corner says, "I've opened the so-and-so app for you," followed by a cacophony of cold female voices...

Ah, every year they say it's the first year of AI, but all I hear is the sound of frogs.

After saying all this, am I bad-mouthing AI? Absolutely not. I am a firm technological optimist. Moreover, the continuous progress of AI over the years should already have proven the value and prospects of this technological path.

I'm just trying to point out an objective fact: AI is just a technological tool, neither overly smart nor overly stupid. It's neither artificial stupidity nor a deus ex machina.

If we set aside the notions of AI awakening and mechanical life that science fiction has left us and simply regard AI as a technical term like the internal combustion engine or the CNC machine tool, perhaps we'll find that all the shadows surrounding AI dissipate. Then we might more calmly accept its strengths and weaknesses and enjoy every improvement it brings.

I believe that the problems mentioned above will be solved soon, but new issues will take their place. Like any technology, AI must evolve step by step through "problems" and "solving problems." It is humans who push AI to become better, and at the same time, AI as a tool enables humans to achieve higher efficiency and a more convenient life. That's all there is to it.

If hosting a Foolish AI Festival can dispel our fears of technology, then why not host one? I hope all your worries and confusion about AI will dissipate this spring.

Finally, I want to say, "O blind and foolish god of AI, please forgive me for all my offenses today."