Surge in Token Usage Turns AI Cloud into a Lucrative Business

04/01 2026

Author: Lin Yi | Editor: Key Point Editor

The hottest term in the AI industry this past March was Token. Several key events unfolded almost simultaneously:

In China, Liu Liehong, Director of the National Data Bureau, announced at the China Development Forum that the country's daily Token call volume has surpassed 140 trillion, more than a thousandfold increase from 100 billion two years ago.
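
The two data points imply a 1,400-fold increase. The quick check below assumes that exactly two years separate the two figures. It also gives the implied compound growth multiple per year:

```python
# Daily Token call volume quoted by the National Data Bureau
BEFORE = 100e9   # about two years ago
NOW = 140e12     # as of March 2026
YEARS = 2        # assumption: exactly two years between the two figures

multiple = NOW / BEFORE            # overall growth multiple
annual = multiple ** (1 / YEARS)   # implied compound growth multiple per year

print(f"overall: {multiple:,.0f}x")
print(f"per year: {annual:.1f}x")
```

On a compound basis, that works out to roughly 37-fold growth per year.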

Overseas, NVIDIA founder Jensen Huang said at the GTC conference that Tokens will become the most fundamental and valuable commodity of the future digital world. He added that Token throughput is emerging as a key operational metric tracked by CEOs worldwide.

Also in March, Alibaba Cloud set an ambitious target during its earnings call: to achieve annual cloud and AI commercialization revenue exceeding $100 billion within five years, implying a compound annual growth rate of around 47%. Additionally, ByteDance's cloud computing arm, Volcano Engine, reported that its Doubao large model handles over 100 trillion daily Token calls, ranking among the top three globally.
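
Working the reported figures backward shows what the target implies. The sketch assumes five full years of 47% compound growth; the exact base year is not stated here. Under that assumption, revenue must grow to about 6.9 times its starting level, which puts the implied starting point near US$14.6 billion. This is a consistency check on the article's numbers, not a figure Alibaba disclosed:

```python
# Compound growth: end = start * (1 + g) ** n
GROWTH = 0.47   # "around 47%" CAGR, as reported
YEARS = 5       # assumption: five full years of compounding
TARGET = 100e9  # US$100 billion annual revenue target

multiple = (1 + GROWTH) ** YEARS   # how many times larger revenue must become
implied_base = TARGET / multiple   # starting revenue consistent with both figures

print(f"growth multiple: {multiple:.2f}x")
print(f"implied starting revenue: ${implied_base / 1e9:.1f} billion")
```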

As the underlying infrastructure of the AI era, cloud computing is gaining increasing importance, and AI Cloud is becoming a truly lucrative business.

What defines a lucrative business? In the tech industry, the answer comes down to three traits:

- declining marginal costs driven by scale effects;
- high switching costs resulting from customer ecosystem lock-in;
- high gross margins, with recurring revenue built on standardized products.

Amazon AWS, Microsoft Azure, and Google Cloud meet all three criteria. They have built high-barrier, high-profit business ecosystems on standardized IaaS, PaaS, and SaaS offerings. Larger resource pools reduce costs, customers stay locked in once they migrate, and software subscriptions generate steady, high-margin cash flow. In fiscal 2025, these three cloud providers reported profits of $45.6 billion, $54 billion, and $13.91 billion, respectively.

China's cloud computing industry, however, has taken a fundamentally different path. The market has expanded steadily over the past decade, yet domestic cloud providers have long been trapped in a cycle of heavy asset investment, low margins, and intense competition. They have struggled to achieve sustainable profitability. The causes are China's distinctive IT consumption habits, a weak SaaS ecosystem, and large government and enterprise clients' preference for highly customized solutions.

During the traditional IaaS phase, the computing, storage, and network resources offered by different cloud providers became highly commoditized, so competition often devolved into price wars. To win major government and enterprise clients outside the internet sector, cloud providers took on extensive custom development and on-premises deployment work. That work was labor-intensive and earned low margins. As a result, cloud computing turned into a traditional project-based IT business that depends on people and hardware, when it should have been a lightweight service with strong scale effects.

That changed with the current AI wave, which gave domestic cloud providers an opportunity to restructure their business models. They can package large models into callable, billable, standardized cloud services for enterprises and developers, creating a new growth engine in the process.

From Price Wars to Price Hikes

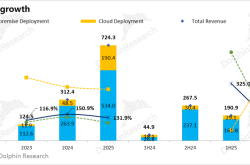

The first effect of AI has been structural growth in the cloud computing industry. In Q1 2025, China's spending on cloud infrastructure services reached $11.6 billion, up 16% YoY, and AI-related demand became the primary driver of enterprise cloud migration. According to Omdia, China's AI Cloud market is expected to reach RMB 51.8 billion in 2025, up 148% YoY, and to surpass RMB 193 billion by 2030. (Note: definitions of AI Cloud vary among providers.)
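
Take Omdia's two endpoints at face value. The forecast then implies that growth slows markedly after 2025, from 148% in a single year to an average of roughly 30% a year through 2030. Because the 2030 figure is a floor ("surpass"), the result is a lower bound. A minimal check:

```python
# CAGR = (end / start) ** (1 / years) - 1
START = 51.8         # RMB billion, Omdia's 2025 estimate
END = 193.0          # RMB billion, Omdia's 2030 forecast (a floor: "surpass")
YEARS = 2030 - 2025

cagr = (END / START) ** (1 / YEARS) - 1
print(f"implied average annual growth, 2025-2030: {cagr:.1%}")
```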

This growth, however, was initially fueled by brutal price wars. In May 2024, ByteDance's Volcano Engine started a wave of price cuts for its Doubao large model, and Alibaba Cloud and Baidu Intelligent Cloud followed. Large-model Token prices fell by more than 90% in less than a year, pushing some providers' inference margins below zero. The strategy was "growth through losses": establish API calling habits among developers and enterprise clients first, and market dominance would follow.

The price war only began reversing in early 2026. Overseas, Amazon AWS and Google Cloud announced price hikes, followed by domestic players Alibaba Cloud, Baidu Intelligent Cloud, and Tencent Cloud. On March 18, Alibaba Cloud and Baidu Intelligent Cloud simultaneously announced increases:

Alibaba Cloud raised prices by up to 34%. It adjusted pricing for AI computing power, storage, and other products. Computing cards such as the self-developed T-Head Zhenwu 810E rose by 5%-34%, and the high-performance file storage service CPFS rose by 30%. The new prices take effect on April 18, 2026.

Baidu Intelligent Cloud raised prices by up to 30%. Prices for AI computing power products and services rose by approximately 5%-30%, and parallel file storage rose by about 30%. The new prices also take effect on April 18, 2026.

The direct trigger for these hikes was soaring Token demand. A simple chat with a large model consumes relatively few Tokens. However, the 2026 surge in AI Agents and maturing multimodal models has dramatically expanded the AI Cloud market. The popularity of Agent products such as Claude Code and OpenClaw made tech companies realize that a single Agent task often involves multiple rounds of internal reasoning, tool calls, and task execution. Such a task consumes far more Tokens than an ordinary AI conversation. Computing power demand had been driven mainly by cloud-based training; it now runs on a "dual-wheel drive" of training plus inference, which has created acute shortages of existing AI computing resources.
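
A toy model helps explain why Agents consume so many more Tokens. It assumes that each round of an Agent loop sends the entire conversation so far back to the model, so input Tokens grow with every tool call. That assumption is simplified, but it reflects how stateless chat-style APIs are commonly used. All Token counts below are hypothetical. The model also ignores prompt caching and context truncation, which real systems use to cut costs.

```python
def chat_tokens(prompt: int, reply: int) -> int:
    """Tokens processed by a single question-and-answer exchange."""
    return prompt + reply


def agent_tokens(prompt: int, rounds: int, step_output: int, tool_result: int) -> int:
    """Tokens processed by a hypothetical multi-round Agent task.

    In each round the model re-reads the whole context (input Tokens) and
    writes one reasoning/tool-call step (output Tokens); the step and the
    tool's result are then appended to the context for the next round.
    """
    context = prompt
    total = 0
    for _ in range(rounds):
        total += context + step_output
        context += step_output + tool_result
    return total


chat = chat_tokens(prompt=500, reply=500)
agent = agent_tokens(prompt=2_000, rounds=10, step_output=300, tool_result=1_000)
print(chat, agent, f"{agent / chat:.0f}x")
```

The context grows every round, so total Tokens rise roughly with the square of the number of rounds rather than linearly. In this example, one ten-round task processes about 80 times the Tokens of a single exchange.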

This change in computing power supply-demand dynamics directly drove shifts in commercial billing models.

From IaaS Computing Leasing to MaaS Token Economics

During the traditional IaaS phase, cloud providers' core business model resembled that of a "sublandlord". They rented out underlying computing resources, storage, and network bandwidth in a highly commoditized market.

The emergence of Tokens disrupted this landscape. Tokens are the smallest units into which AI models break down language, images, audio, and video for processing. Every user interaction with a large model is ultimately split into Tokens for computation. By billing per Token, cloud providers shifted from "selling hardware usage rights" to "selling intelligent services."
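
Token billing reduces to simple metering arithmetic. Many MaaS platforms list separate per-million-Token prices for input and output. The prices below are hypothetical placeholders, not any provider's actual rates. Note that nothing about the underlying hardware appears in the bill.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPrice:
    """Hypothetical list prices in RMB per million Tokens."""
    input_per_m: float
    output_per_m: float


def bill(input_tokens: int, output_tokens: int, price: TokenPrice) -> float:
    """Charge for a workload metered purely in Tokens (no GPU type, no hours)."""
    return (input_tokens * price.input_per_m + output_tokens * price.output_per_m) / 1_000_000


price = TokenPrice(input_per_m=0.8, output_per_m=2.0)
charge = bill(input_tokens=1_000_000, output_tokens=500_000, price=price)
print(f"RMB {charge:.2f}")
```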

This model offers distinct advantages:

- It sidesteps hardware commoditization. Users care only about completing a task with a given number of Tokens, not about which GPU runs it.
- It naturally amplifies scale effects. Larger computing pools schedule concurrent workloads more efficiently, reducing the marginal cost per Token.
- Standardized APIs create ecosystem lock-in. Once calling habits are established, migration costs become prohibitively high.

Cloud services truly become as plug-and-play as utilities.
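
The scale effect of more efficient concurrent scheduling is essentially statistical multiplexing. Customers' demand peaks rarely coincide, so a shared pool needs far less capacity than the sum of dedicated allocations. The demand figures below are invented for illustration.

```python
# Hypothetical hourly demand (million Tokens) for four customers whose peaks
# fall in different time slots.
DEMAND = {
    "a": [9, 3, 1, 1],
    "b": [1, 9, 2, 1],
    "c": [1, 1, 9, 2],
    "d": [2, 1, 1, 9],
}


def capacity_needed(demand: dict[str, list[int]]) -> tuple[int, int]:
    """Capacity to cover every peak: dedicated per customer vs. one shared pool."""
    dedicated = sum(max(series) for series in demand.values())
    pooled = max(sum(slot) for slot in zip(*demand.values()))
    return dedicated, pooled


def utilization(demand: dict[str, list[int]], capacity: int) -> float:
    """Share of provisioned capacity actually used across all time slots."""
    served = sum(sum(series) for series in demand.values())
    slots = len(next(iter(demand.values())))
    return served / (capacity * slots)


dedicated, pooled = capacity_needed(DEMAND)
print(dedicated, pooled)  # capacity units
print(f"{utilization(DEMAND, dedicated):.0%} vs {utilization(DEMAND, pooled):.0%}")
```

The shared pool serves the same Tokens on less than half the capacity. The cost per Token therefore falls even though no individual chip got faster.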

Cloud providers are now prioritizing scarce AI computing resources for high-value Token businesses. Tencent Cloud, for example, rapidly consolidated resources over the past month to launch its "Longxia" product matrix, which covers cloud, consumer, and enterprise editions. It also upgraded its existing MaaS large model service platform to TokenHub and introduced a unified Token Plan service.

The proliferation of Agents has turned discrete API calls into high-frequency, automated services, dramatically increasing cloud providers' Token throughput. As a result, MaaS could eventually account for 30% or more of their total revenue.

According to Caijing Magazine, Liu Weiguang, Senior Vice President of Alibaba Cloud Intelligence Group and President of its Public Cloud Business Unit, spoke at a small briefing in late December 2025. He said that MaaS revenue could reach 30% or more of cloud providers' total income. Similarly, Amazon AWS management said on its Q3 2025 earnings call that it aims to build Bedrock into the world's largest inference platform. The goal is a revenue contribution matching that of its core compute product EC2, a share expected to exceed 30% of total revenue.

This represents exactly the "recurring, high-margin, replicable" revenue structure sought by top-tier cloud businesses.

AI Cloud Competition Hinges on Full-Stack Cost Efficiency

Despite its promise, AI Cloud competition is intensifying.

Overseas, transitioning to AI Cloud has become a shared goal for Amazon AWS, Microsoft Azure, Google Cloud, and Oracle OCI. Domestically, Alibaba Cloud, Baidu Intelligent Cloud, Tencent Cloud, Volcano Engine, and Huawei Cloud are all strengthening their AI capabilities. Capital expenditures among these providers continue to reach new highs.

In our view, AI Cloud competition is not merely about computing power but about full-stack cost efficiency. The decisive factor is not who has the most GPUs but who achieves the lowest "cost per Token."
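
A minimal sketch shows why GPU count alone is not decisive. Cost per Token depends on what each chip costs to run and how many Tokens it produces, and fleet size cancels out. Both chips' prices and throughputs, and the utilization rate, are hypothetical.

```python
def cost_per_million_tokens(hourly_cost: float, tokens_per_second: float,
                            utilization: float) -> float:
    """Serving cost per million Tokens for one accelerator.

    Fleet size does not appear: adding identical chips scales cost and
    output in the same proportion, so only per-chip cost, per-chip
    throughput, and how busy the chips are kept move this number.
    """
    tokens_per_hour = tokens_per_second * 3600 * utilization
    return hourly_cost / tokens_per_hour * 1_000_000


# Hypothetical: a faster but pricier chip vs. a slower, cheaper one
chip_a = cost_per_million_tokens(hourly_cost=20.0, tokens_per_second=2_000, utilization=0.6)
chip_b = cost_per_million_tokens(hourly_cost=12.0, tokens_per_second=1_500, utilization=0.6)
print(f"A: {chip_a:.2f}  B: {chip_b:.2f}  per million Tokens")
```

Here the slower chip wins on cost per Token because its price falls faster than its throughput. That ratio, not the GPU count, is what the argument turns on.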

This logic has been borne out by competition among the four major U.S. cloud providers. Google has the highest degree of full-stack integration. Its Gemini models are trained and deployed on self-developed TPUs, unifying chips, models, and cloud services so that Google controls both costs and pricing. Amazon has delivered over 1.4 million self-developed Trainium 2 chips, which offer 30%-40% better cost-performance than comparable NVIDIA GPUs. Oracle, by contrast, is a cautionary tale. It lacks self-developed chips and relies entirely on NVIDIA for computing power. Its capital expenditures exceed its operating cash flow, and it depends heavily on OpenAI as its sole major client. Together, these factors leave it in the most vulnerable position.
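
The Trainium figure hides a subtlety. This sketch reads "30%-40% better cost-performance" as 30%-40% more work per unit of spend, which is an interpretive assumption. Under that reading, it does not mean 30%-40% cheaper: the cost of the same work falls to 1/(1 + gain).

```python
def unit_cost_saving(cost_performance_gain: float) -> float:
    """Fractional reduction in cost per unit of work.

    Assumes 'X% better cost-performance' means X% more work per unit of
    spend, so the same work costs 1 / (1 + X) of the baseline.
    """
    return 1 - 1 / (1 + cost_performance_gain)


for gain in (0.30, 0.40):
    print(f"{gain:.0%} better cost-performance -> {unit_cost_saving(gain):.1%} lower unit cost")
```

Under that reading, the quoted range corresponds to roughly a 23%-29% lower cost per unit of work.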

Chinese cloud providers face similar competitive dynamics, compounded by geopolitical pressures that add complexity.

Alibaba Cloud combines the advantages of scale and full-stack integration and has the strongest competitive moat. Its BaiLian MaaS platform aggregates dozens of mainstream models, including Tongyi Qianwen and DeepSeek. Alibaba has deployed over 470,000 AI chips in production, and more than 60% of them serve external commercial clients. Over the next three years, Alibaba plans to invest more than RMB 380 billion in cloud and AI infrastructure.

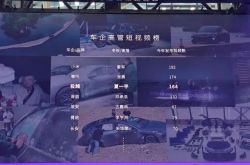

Baidu Intelligent Cloud focuses on deep penetration into the core processes of vertical industries such as energy, finance, and automotive, rather than chasing Token traffic volume. It builds on a full-stack, self-developed "chip-cloud-model-agent" system comprising Kunlunxin chips, the ERNIE large model, and the Qianfan platform. On that basis, it has ranked first in China for two consecutive years in both the number of large model bidding projects and their contract value.

Volcano Engine pursues an aggressive MaaS-first strategy. ByteDance's massive internal application ecosystem, including Douyin, video creation tools, and the Seedance video generation model, helps spread fixed infrastructure costs. This allows Volcano Engine to keep Token prices aggressive. According to LatePost, Volcano Engine had set a 2026 target of over RMB 10 billion in MaaS revenue. It has since raised that target, following the release of models such as Seed 2.0 and Seedance 2.0 and the continued popularity of OpenClaw.

Tencent Cloud has undergone a difficult transformation over the past few years. Around 2022, it proactively cut low-margin turnkey projects to focus on high-margin self-developed PaaS and SaaS products. Its core strategy became "being integrated," supplying products for partners to integrate, rather than "total integration." This temporarily weighed on market share, but it improved the revenue structure. By 2025, IaaS accounted for 40% of revenue, PaaS 40%, and SaaS 20%. PaaS and SaaS gross margins were 50%-70%, far above the 10%-15% earned by IaaS. After 12 years, Tencent Cloud achieved profitability at scale for the first time, and Pony Ma cited this as a key accomplishment in the company's earnings reports.
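
Weighting the segment margins by the 2025 revenue mix shows how much the shift lifts Tencent Cloud's overall gross margin. This back-of-the-envelope sketch makes two assumptions. First, the 50%-70% range applies to both PaaS and SaaS. Second, the mix percentages are shares of revenue. The result is not a reported figure.

```python
# 2025 revenue mix and gross-margin ranges (low, high) as given in the text
MIX = {"IaaS": 0.40, "PaaS": 0.40, "SaaS": 0.20}
MARGIN = {"IaaS": (0.10, 0.15), "PaaS": (0.50, 0.70), "SaaS": (0.50, 0.70)}


def blended_margin(use_high: bool) -> float:
    """Revenue-weighted gross margin using the low or high end of each range."""
    end = 1 if use_high else 0
    return sum(share * MARGIN[segment][end] for segment, share in MIX.items())


low, high = blended_margin(False), blended_margin(True)
print(f"blended gross margin: {low:.0%} to {high:.0%}")
```

The blended 34%-48% range sits well above the 10%-15% a pure IaaS business would earn.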

Token Generation Cost and Efficiency Determine Everything

AI has shifted cloud computing's billing unit from commoditized computing resources to differentiated intelligent services. Explosive Token growth makes the MaaS revenue opportunity look boundless for the foreseeable future. Meanwhile, the scale effects and ecosystem lock-in enabled by standardized APIs are giving leading cloud providers significant pricing power.

AI has improved cloud computing's business model, but the opportunity will go to only a select few players:

- those with enough cash flow to sustain a hundred-billion-dollar computing arms race;
- those able to develop their own chips or deeply integrate domestic computing power, building cost control outside NVIDIA's ecosystem;
- those with self-developed models and MaaS engineering capabilities, since model strength directly determines Token throughput per card, unit Token cost, and ultimately gross margin.
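
The last point, that model strength flows through to gross margin, can be made concrete. Hold the selling price and the card's hourly cost fixed (both hypothetical). The gross margin on Token sales then depends only on how many Tokens the card produces. The sketch assumes full utilization for simplicity.

```python
def gross_margin(price_per_m: float, card_cost_per_hour: float,
                 tokens_per_second: float) -> float:
    """Gross margin on Token sales for one fully utilized card.

    cost per million Tokens = hourly card cost / million Tokens produced per hour
    """
    million_tokens_per_hour = tokens_per_second * 3600 / 1_000_000
    cost_per_m = card_cost_per_hour / million_tokens_per_hour
    return (price_per_m - cost_per_m) / price_per_m


# Hypothetical price of 8 per million Tokens and card cost of 20 per hour
for tps in (500, 1_000, 2_000):
    print(f"{tps:>5} Tokens/s -> gross margin {gross_margin(8.0, 20.0, tps):.0%}")
```

At the lowest throughput the margin turns negative, the same kind of squeeze the price war produced. Doubling throughput from 1,000 to 2,000 Tokens per second more than doubles the margin.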

As Jensen Huang put it, the cost and efficiency of generating Tokens determine tech companies' revenue and survival.