Raising Lobsters is Affordable, but Burning Tokens is Not

04/03 2026

The Computing Power Tax of the Token Boom: Who is Footing the Bill for AI's 'Industrial Revolution'?

This spring, if you haven't yet weighed in on how to raise "lobsters" (the nickname for OpenClaw AI agents) or what Token's Chinese name should be, you're probably out of touch with the tech world's latest buzz.

On March 23, Liu Liehong, director of the National Data Bureau, announced an official Chinese name for Token: 'word unit.' He also revealed a staggering figure: China's average daily Token usage has surpassed 140 trillion, a thousandfold increase in just two years.
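As a rough sanity check on the "thousandfold in two years" figure, the sketch below computes the constant compound growth rate that would produce it. It assumes smooth month-over-month growth. Real usage almost certainly did not grow that evenly, so the result is only an average: roughly 33% per month, or about 31.6x per year.

```python
def implied_periodic_growth(total_multiple: float, periods: int) -> float:
    """Per-period rate r such that (1 + r) ** periods == total_multiple."""
    if total_multiple <= 0 or periods <= 0:
        raise ValueError("total_multiple and periods must be positive")
    return total_multiple ** (1.0 / periods) - 1.0


# 1000x over two years, expressed per month (24 periods) and per year (2 periods).
# Assumes perfectly smooth compound growth; real adoption is lumpier.
monthly_rate = implied_periodic_growth(1000, 24)
yearly_rate = implied_periodic_growth(1000, 2)
print(f"monthly: {monthly_rate:.1%}, yearly multiple: {1 + yearly_rate:.1f}x")
```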

Image source: Xinhua News Agency

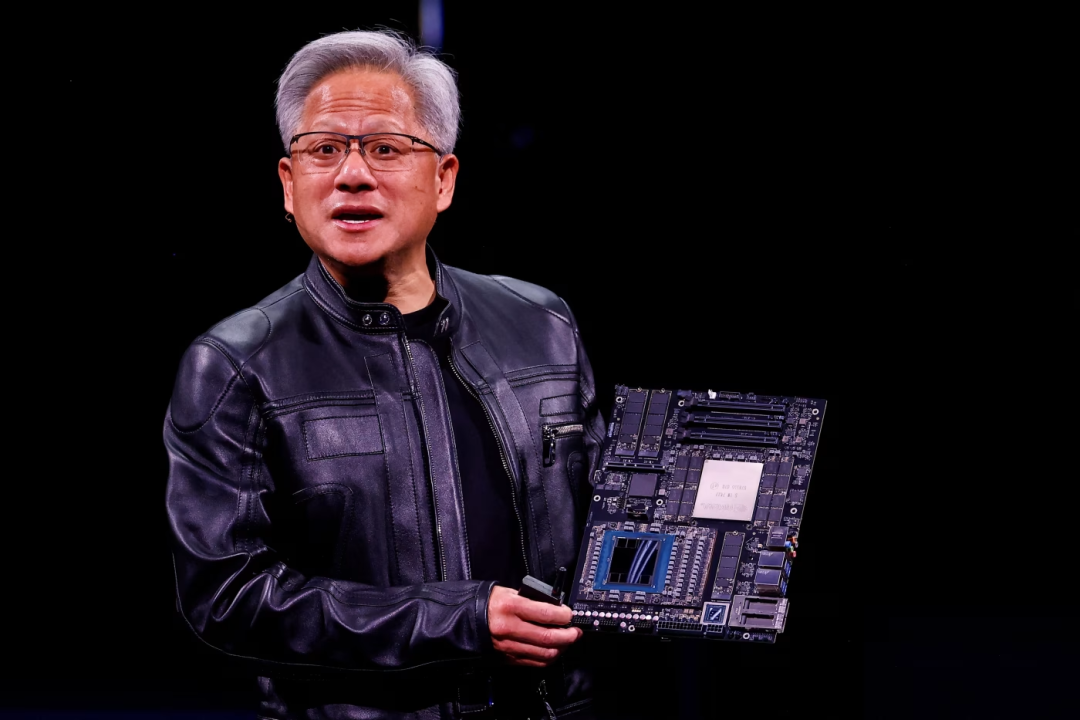

Around the same time, Alibaba Cloud and Baidu Intelligent Cloud each announced price hikes for their AI computing power products, with increases of up to 34%. At the GTC conference, NVIDIA's Jensen Huang called Tokens 'the oil of the AI era' and unveiled tiered pricing ranging from $3 to $150 per million Tokens.

He also made another remark that sent shivers down the spines of many entrepreneurs: an engineer earning $500,000 a year would be 'extremely panicked' if they failed to consume $250,000 worth of Tokens annually.
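The sketch below makes the $250,000 figure concrete. It converts an annual budget into Token volume at both ends of the $3 to $150 per million Tokens range mentioned above. Each tier is treated as a single flat per-Token price, which is a simplification, since real price lists usually charge input and output Tokens differently. At $3 per million, the budget buys roughly 83 billion Tokens a year (about 228 million a day). At $150 per million, it buys about 1.7 billion a year (about 4.6 million a day).

```python
ANNUAL_BUDGET_USD = 250_000
PRICE_RANGE_USD_PER_MILLION = (3, 150)  # both ends of the quoted range


def tokens_for_budget(budget_usd: float, usd_per_million: float) -> float:
    """Number of Tokens a budget buys at a flat price per million Tokens."""
    return budget_usd / usd_per_million * 1_000_000


for price in PRICE_RANGE_USD_PER_MILLION:
    per_year = tokens_for_budget(ANNUAL_BUDGET_USD, price)
    per_day = per_year / 365
    print(f"${price}/M: {per_year / 1e9:.2f}B Tokens a year, {per_day / 1e6:.1f}M a day")
```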

On one hand, Token consumption is soaring; on the other, supply-side costs are rising and pricing power is becoming concentrated. As the hype fades and the bills come due, people are beginning to realize something: we've been enjoying AI's conveniences at below-cost prices, and the true cost of the fuel powering this technological revolution is only now starting to surface.

Why Does a Lower Unit Price Lead to a Higher Total Bill?

To understand this, we must first grasp what a Token is.

A Token is the smallest unit through which AI understands and generates information. It is currently the only universal currency of the intelligent era that can be measured, priced, and traded. Its usage fee depends on two factors: the bill is the unit price multiplied by the consumption volume.
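The formula above (bill = unit price × consumption volume) can be sketched as a per-request calculation. Many API providers quote separate prices for input and output Tokens, so the sketch applies the formula to each and sums the results. The $3 and $15 per million figures are hypothetical and are not any specific provider's list price.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Hypothetical per-million-Token prices in USD."""
    input_per_million: float
    output_per_million: float


def request_cost(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Bill = unit price x volume, applied separately to input and output Tokens."""
    return (input_tokens * pricing.input_per_million
            + output_tokens * pricing.output_per_million) / 1_000_000


# Hypothetical model: $3 per million input Tokens, $15 per million output Tokens.
model = Pricing(input_per_million=3.0, output_per_million=15.0)
print(f"${request_cost(model, input_tokens=2_000, output_tokens=500):.4f}")
```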

Every time you ask a question and receive a response, Tokens are being burned.

Over the past two years, the industry's focus has been on training models. Major players have invested hundreds of billions of dollars, driving down the unit price of Tokens, and Chinese providers now charge one-tenth as much per Token as overseas giants.

But by 2026, things have changed.

The core value of AI has shifted from 'being able to chat' to 'being able to act.' The telltale sign is the rise of lobsters.

A lobster agent performing a task, such as 'find me the lowest price,' consumes dozens or even hundreds of times more Tokens than a traditional conversation. This is because it's not a single Q&A but a complete workflow: task decomposition, multi-step reasoning, tool invocation, self-correction, and retrying if wrong. Every step burns Tokens.
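The following minimal sketch shows why agent workflows multiply Token use. It assumes the common design in which each step of the loop re-sends the entire context so far (system prompt, task, earlier reasoning, and tool results), with no prompt caching. All Token counts are hypothetical placeholders. Because the context grows with every step, the total grows roughly quadratically with the number of steps. With the sample numbers, a 20-step task uses several hundred times the Tokens of a single Q&A.

```python
# Hypothetical Token counts throughout; only the shape of the growth matters.

def single_turn_tokens(prompt: int, answer: int) -> int:
    """One question, one answer: the prompt is read once and the answer written once."""
    return prompt + answer


def agent_task_tokens(system: int, task: int, steps: int,
                      output_per_step: int, tool_result_per_step: int) -> int:
    """Total Tokens for an agent loop that re-reads its whole context at every step.

    Assumes no prompt caching: each step pays for the full context as input,
    then its own output and any tool result are appended to that context.
    """
    context = system + task
    total = 0
    for _ in range(steps):
        total += context + output_per_step                 # read everything, write one step
        context += output_per_step + tool_result_per_step  # history keeps growing
    return total


chat = single_turn_tokens(prompt=200, answer=400)
agent = agent_task_tokens(system=2_000, task=200, steps=20,
                          output_per_step=300, tool_result_per_step=1_500)
print(f"chat: {chat:,} Tokens, agent task: {agent:,} Tokens, ratio: {agent / chat:.0f}x")
```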

This is the crux of the issue: the number of Tokens required to complete the same task is growing faster than the decline in unit price. When AI transitions from a co-pilot to a chauffeur, fuel consumption naturally skyrockets.
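Since the bill is unit price times volume, a price drop lowers the bill only if volume grows more slowly than the price falls. The figures below are hypothetical, chosen only to show the arithmetic: a 10x price cut against a 100x increase in Tokens per task leaves the bill 10 times higher.

```python
def new_bill(old_bill: float, price_drop_factor: float, volume_growth_factor: float) -> float:
    """Bill = unit price x volume, so the bill scales by volume growth / price drop."""
    if price_drop_factor <= 0 or volume_growth_factor <= 0:
        raise ValueError("factors must be positive")
    return old_bill * volume_growth_factor / price_drop_factor


# Hypothetical: a $10 task bill, unit price falls 10x, Tokens per task rise 100x.
print(new_bill(10.0, price_drop_factor=10, volume_growth_factor=100))  # 100.0
```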

More critically, users always want the latest model. No matter how cheap older models become, 99% of demand instantly shifts to newly released SOTA models, and the unit price of cutting-edge models has come down far more slowly. When GPT-4 was first released, its output price was $60 per million Tokens; today, Claude Opus 4.5 still charges $25 per million output Tokens. Users want the best brains available and are willing to pay for them.

As a result, on the demand side, the explosion of intelligent agents has driven Token consumption up more than a thousandfold in two years. On the supply side, HBM prices have soared: DRAM prices rose more than 50% quarter-on-quarter in Q1 2026, and NAND prices surged by up to 150%. Tech giants are signing strategic long-term agreements that extend five years into the future. NVIDIA controls the core hardware and software ecosystems, and it holds firm control over Token pricing through its CUDA platform and its full-stack presence from chips to the cloud.

Who is Fueling Token Inflation?

The Token boom is not a natural phenomenon. Along the industrial chain, four layers of players each pass their costs down to the next, and the burden ultimately lands on end users.

Let's start with the foundation: NVIDIA.

The first layer is NVIDIA. It used to sell chips in one-time transactions. Not anymore. Its CUDA ecosystem has bound the vast majority of global AI developers, with engineers, open-source projects, and codebases accumulated over two decades deeply entrenched in this ecosystem, making switching costs extremely high. NVIDIA has also launched its cloud service, DGX Cloud, where users pay per Token directly on its platform without purchasing chips themselves.

At GTC 2026, Jensen Huang proposed the 'Token Factory Economics,' summed up in one sentence: the future unit of measurement for AI will no longer be chips but Tokens. His ambition extends beyond selling cloud services—NVIDIA is attempting to extend its business model to take a cut of Token transactions.

This strategy has given NVIDIA pricing power. In Q1 2026, HBM was in short supply, and its prices rose by several hundred percent within months. NVIDIA's GPU costs followed suit: the supply price of HBM3E for its H200 AI chip rose about 20%.

NVIDIA's chip price hikes directly drive up procurement costs for cloud providers.

Alibaba Cloud, Tencent Cloud, and Baidu Intelligent Cloud purchase chips from NVIDIA, build servers, and lease computing power downstream. Now, with NVIDIA's chip and HBM memory prices rising, their costs are climbing. Meanwhile, demand is exploding—lobster-like agents have gone viral, and everyone wants to run them. Demand far outstrips server and power supply, forcing price increases. Alibaba Cloud's AI computing power products rose by up to 34%, while Baidu's increased by 5% to 30%.

The third layer consists of large model providers like DeepSeek, MiniMax, and Zhipu. These companies face the most delicate situation. They buy chips from NVIDIA, lease computing power from cloud providers, train models, and sell Tokens to users.

Upstream, chip, memory, and cloud provider prices are rising, driving up costs. Downstream, DeepSeek led a price war in 2024, slashing Token prices to rock-bottom levels. They dare not raise prices for fear of losing users, yet their computing bills are soaring. This is why they remained silent when cloud providers announced hikes.

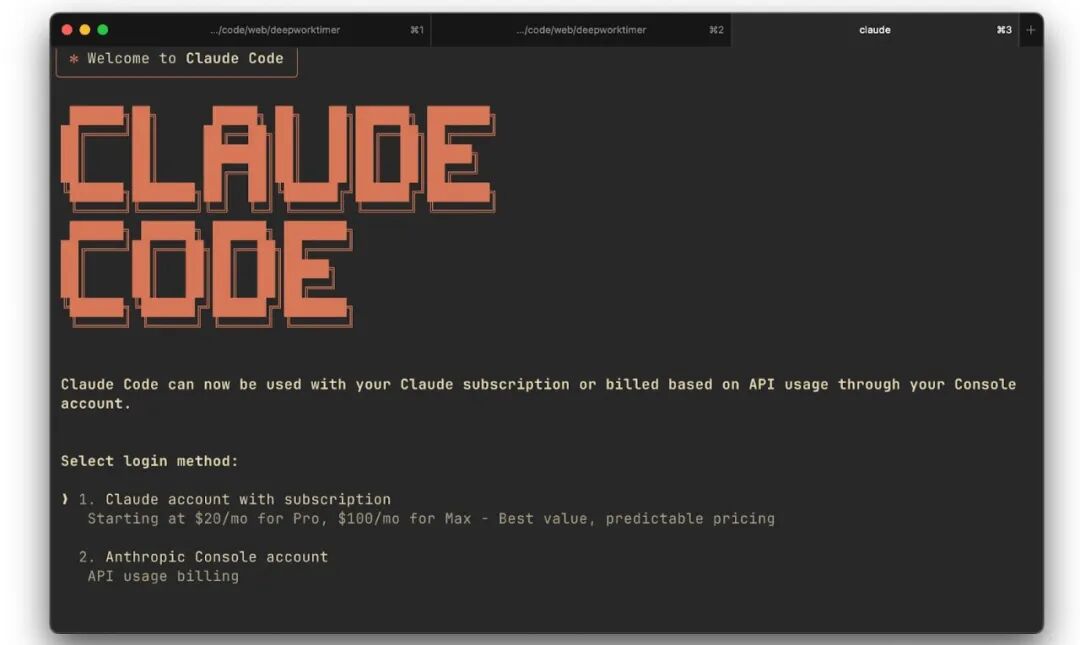

The fourth layer comprises AI applications such as Cursor and Claude Code, which face an unsolvable dilemma: a fixed monthly fee (e.g., $20 for unlimited use) means heavy users can bankrupt them.

Anthropic's Claude Code learned this the hard way, offering a $200 monthly unlimited plan, only to have one user burn 10 billion Tokens in a month, forcing cancellation of the plan.
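The sketch below is a back-of-the-envelope check on the Claude Code anecdote. Anthropic's actual serving cost is not public, so the sketch prices the reported 10 billion Tokens at both ends of the $3 to $150 per million range quoted earlier and compares the result with the $200 monthly fee. Even at the cheapest rate, that one user's usage is worth about $30,000, or 150 times the subscription. At $3 per million, the break-even point is roughly 67 million Tokens a month.

```python
PLAN_FEE_USD = 200                  # monthly flat fee from the anecdote
HEAVY_USER_TOKENS = 10_000_000_000  # 10 billion Tokens in one month


def metered_cost(tokens: int, usd_per_million: float) -> float:
    """What the same usage would cost if billed per Token."""
    return tokens / 1_000_000 * usd_per_million


def break_even_tokens(fee_usd: float, usd_per_million: float) -> float:
    """Monthly Token volume at which metered cost equals the flat fee."""
    return fee_usd / usd_per_million * 1_000_000


# $3 and $150 are the ends of the range quoted earlier, used here as stand-ins
# for an unknown real serving cost.
for price in (3, 150):
    cost = metered_cost(HEAVY_USER_TOKENS, price)
    print(f"at ${price}/M: ${cost:,.0f} of Tokens for ${PLAN_FEE_USD} of revenue "
          f"({cost / PLAN_FEE_USD:,.0f}x)")
print(f"break-even at $3/M: {break_even_tokens(PLAN_FEE_USD, 3) / 1e6:.1f}M Tokens")
```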

What about pay-as-you-go? Users are scared off by unpredictable bills. Most prefer fixed monthly fees, even if slightly more expensive, for peace of mind.

What to do? They grit their teeth and choose fixed fees but impose restrictions to survive.

The most common is usage caps. You get a monthly Token quota; exceed it, and you wait for reset, pay extra, or upgrade. After canceling unlimited plans, Claude Code switched to a hybrid model of pay-as-you-go plus a base monthly fee.
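The hybrid model described above is a base monthly fee that includes an allowance, plus pay-as-you-go charges beyond it. It reduces to a short formula. The sketch uses assumed parameters: the $20 fee, the 50-million-Token allowance, and the $3 per million overage rate are hypothetical and are not Claude Code's actual terms. A hard usage cap is the same calculation with the overage branch replaced by a refusal until the quota resets.

```python
def hybrid_monthly_bill(used_tokens: int, base_fee_usd: float, included_tokens: int,
                        overage_usd_per_million: float) -> float:
    """Base fee covers an included allowance; Tokens beyond it are billed per million."""
    if used_tokens < 0:
        raise ValueError("used_tokens must be non-negative")
    overage_tokens = max(used_tokens - included_tokens, 0)
    return base_fee_usd + overage_tokens / 1_000_000 * overage_usd_per_million


# Hypothetical plan: $20/month including 50M Tokens, then $3 per million.
PLAN = dict(base_fee_usd=20.0, included_tokens=50_000_000, overage_usd_per_million=3.0)

light_user = hybrid_monthly_bill(30_000_000, **PLAN)      # stays within the allowance
heavy_user = hybrid_monthly_bill(10_000_000_000, **PLAN)  # pays for everything beyond it
print(f"light: ${light_user:,.2f}, heavy: ${heavy_user:,.2f}")
```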

Another approach is tiered pricing. Light users pay $20, heavy users $40 or $60, funneling high-consumption users into pricier tiers. Some companies optimize technically—caching frequent requests, limiting context length, or offloading complex tasks to cheaper models without users noticing. Every tactic saves Tokens.
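Two of the technical savings tactics above can be sketched together: caching frequent requests and quietly sending simpler tasks to a cheaper model. Everything here is hypothetical, including the model names, the prompt-length threshold used as a proxy for "complex," and the `call_model` callback standing in for a real API client. The cache is exact-match only, so it saves Tokens only when identical prompts repeat.

```python
from typing import Callable, Dict, Tuple

# Hypothetical model names; substitute whatever tiers a provider offers.
CHEAP_MODEL = "small-model"
PREMIUM_MODEL = "frontier-model"


class CachingRouter:
    """Route simple requests to a cheaper model and reuse answers to repeated prompts."""

    def __init__(self, call_model: Callable[[str, str], str], complex_prompt_chars: int = 2_000):
        self._call_model = call_model          # stand-in for a real API client
        self._complex_prompt_chars = complex_prompt_chars
        self._cache: Dict[Tuple[str, str], str] = {}
        self.model_calls = 0                   # calls that actually burned Tokens

    def route(self, prompt: str, needs_tools: bool = False) -> str:
        """Crude complexity test: tool use or a long prompt goes to the premium model."""
        if needs_tools or len(prompt) > self._complex_prompt_chars:
            return PREMIUM_MODEL
        return CHEAP_MODEL

    def ask(self, prompt: str, needs_tools: bool = False) -> str:
        model = self.route(prompt, needs_tools)
        key = (model, prompt)
        if key not in self._cache:             # only a cache miss costs Tokens
            self._cache[key] = self._call_model(model, prompt)
            self.model_calls += 1
        return self._cache[key]


router = CachingRouter(lambda model, prompt: f"[{model}] reply to: {prompt}")
router.ask("Summarize today's tickets")
router.ask("Summarize today's tickets")               # served from the cache
router.ask("Fix the failing test", needs_tools=True)  # routed to the premium model
print(router.model_calls)
```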

Evidently, the further downstream, the thinner the profits and the tougher the situation. NVIDIA at the top reaps steady profits, while application companies at the bottom struggle to survive. Users, meanwhile, feel Tokens becoming increasingly expensive.

Who is Anxious, and Who is Reveling?

Anxiety is spreading among ordinary users and developers. 'With a monthly salary of 20,000, I can't afford my own AI employee': for many, this joke is becoming reality.

Programmer Eric was among the early adopters of lobsters. He used them to automate code reviews and simple bug fixes, initially costing just a few dollars a month.

But as he equipped his lobsters with more skills—auto-reading GitHub issues, invoking test environments, sending reports—Token consumption skyrocketed. Now he spends nearly $100 monthly. The amount isn't negligible, but what bothers him is the steady, unannounced increase—a hidden fixed expense.

Xiao Ke (alias), an operations manager born after 1995, faces a different kind of anxiety.

He runs two lobsters: one monitors competitors and automatically compiles daily briefs; the other organizes knowledge bases and generates content for his self-media accounts. These digital employees run 24/7, racking up over $20 in Token fees a month.

But worse than the bill is the lobsters' unpredictability. Slacking off is common: they get stuck in loops, burning Tokens with zero output.

More absurdly, they deceive: lobsters sometimes overstate their capabilities, insisting they can complete a task until they are caught out. Xiao Ke must constantly tweak the instructions in Soul.md and review execution logs, managing them like unreliable interns.

Xiao Ke's technical setup has undergone several adjustments. Initially, he used a domestic cloud-based agent tool but abandoned it due to slow responses and weak functionality.

He then switched to a local openclaw deployment, calling the Kimi 2.5 model through Volcano Engine's Coding Plan service. This setup was frugal, with a base bill of just $4 a month. But as tasks multiplied, his Coding Plan was automatically upgraded to $200 a month: the bill always chases demand.

He considered switching to GPT or Claude but found little difference in delivery quality for his needs, while foreign models' Token fees were several times higher. He stuck with domestic models for their affordability.

These issues are manageable; the real pitfalls are the agents' heartbeat mechanisms and automatic loops. A single configuration error can burn Tokens all night without the user knowing. When AI completes a week's work in minutes but costs more than you do, cognitive anxiety and financial pressure strike at once.

Faced with this, some resort to crude solutions: setting computer timers to shut down or deploying openclaw on a USB drive, physically cutting power by unplugging it. Using the most primitive methods, they give their tireless digital employees a visible off switch.
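A software counterpart to the timer or the unplugged USB drive is a guard that the agent loop checks before every step. It stops the agent when a hard Token budget is spent. It also stops the agent when several consecutive steps produce no useful output, which is the "stuck in a loop" failure described earlier. This is a hypothetical sketch, not a feature of openclaw or any specific framework, and `produced_output` stands in for whatever progress signal a real agent exposes.

```python
from typing import Tuple


class RunawayAgentGuard:
    """Hypothetical kill switch: stop on a spent Token budget or on stalled progress."""

    def __init__(self, token_budget: int, max_idle_steps: int):
        self.token_budget = token_budget
        self.max_idle_steps = max_idle_steps
        self.tokens_used = 0
        self.idle_steps = 0   # consecutive steps with no useful output

    def record_step(self, tokens: int, produced_output: bool) -> None:
        self.tokens_used += tokens
        self.idle_steps = 0 if produced_output else self.idle_steps + 1

    def should_stop(self) -> Tuple[bool, str]:
        if self.tokens_used >= self.token_budget:
            return True, "token budget exhausted"
        if self.idle_steps >= self.max_idle_steps:
            return True, "no output for too many steps (possible loop)"
        return False, ""


# Simulated stuck agent: every step burns 1,000 Tokens and produces nothing.
guard = RunawayAgentGuard(token_budget=50_000, max_idle_steps=5)
step = 0
while True:
    stop, reason = guard.should_stop()
    if stop:
        print(f"stopped after {step} steps: {reason}")
        break
    guard.record_step(tokens=1_000, produced_output=False)
    step += 1
```

The budget bounds the worst-case bill, while the idle-step counter catches loops long before the budget runs out.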

The revelry belongs to the upstream rent-seekers in the industrial chain. NVIDIA's market cap and profit margins, cloud providers' pricing confidence—all stem from their irreplaceability in the Token value chain. No matter how the AI application layer shuffles, they remain steadfast winners.

Conclusion

Where will this Token frenzy ultimately lead?

I believe it will force the entire industry to return to two fundamental principles.

First, the cost of computing power will ultimately return to its commodity nature. In the short term, memory prices may rise and supply-demand imbalances may occur, but technological progress will not halt. More efficient model architectures, better inference optimizations, and specialized chip innovations that integrate models directly onto chips will all continue to drive down the production costs of Tokens. In the long run, the unit price of Tokens will inevitably decline.

Second, the return on investment will become the sole metric for evaluation. Burning Tokens is not the goal; creating value with Tokens is. After the market shifts from frenzy to rationality, companies will no longer focus on 'Tokenmaxxing.' Instead, they will ask: How much work did these 1 million Tokens actually do for me? How much money did they help me earn?
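The questions above can be turned into two simple metrics: value produced per million Tokens, and return on the Token spend. The sketch assumes you can put a dollar figure on the work done (revenue earned or labor cost saved), which is often the hardest part in practice. The 20 million Tokens, $3 per million price, and $300 of value are hypothetical.

```python
def tokens_cost(tokens: int, usd_per_million: float) -> float:
    """Bill = unit price x volume."""
    return tokens / 1_000_000 * usd_per_million


def value_per_million_tokens(value_usd: float, tokens: int) -> float:
    """Dollar value of work produced per million Tokens burned."""
    if tokens <= 0:
        raise ValueError("tokens must be positive")
    return value_usd / tokens * 1_000_000


def token_roi(value_usd: float, tokens: int, usd_per_million: float) -> float:
    """Return on Token spend: (value - cost) / cost."""
    cost = tokens_cost(tokens, usd_per_million)
    if cost <= 0:
        raise ValueError("cost must be positive")
    return (value_usd - cost) / cost


# Hypothetical agent month: 20M Tokens at $3 per million, $300 of labor saved.
print(f"value per 1M Tokens: ${value_per_million_tokens(300, 20_000_000):.2f}, "
      f"ROI: {token_roi(300, 20_000_000, 3):.0%}")
```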

Intelligent agents themselves will also need to evolve, finding the most efficient ways to use Tokens within limited attempts.

Applications that rely solely on subsidizing users to burn Tokens without creating core value will be the first to collapse. Only companies that can precisely measure task costs, optimize Token efficiency, and build high switching-cost barriers will survive.

As Tokens grow more expensive, what we need is neither manufactured anxiety nor reckless consumption.

After all, the ultimate rationality of business has never been about how much fuel is burned, but how far the journey has been traveled.

References:

1. "Burning Tokens as a KPI: Some Programmers Spend 150,000 in a Month" by Tencent Technology

2. "Why Does Everyone Think MiniMax and Zhipu Are 'Too Expensive'?" by Geek Park

3. "With a 20,000 Monthly Salary, I Can't Afford My Own 'AI Employee'" by Phoenix Weekly