Understanding Whether LiDAR is a 'Smart Tax' Through the History of Technology

04/03 2026

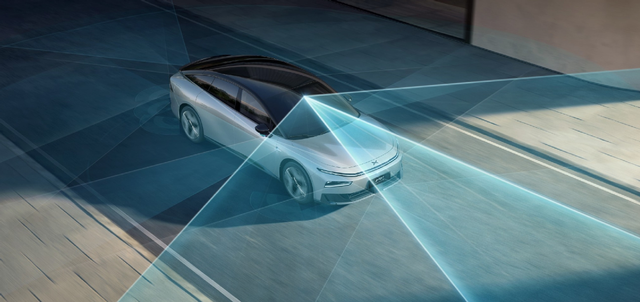

Autonomous driving is the core battleground for automotive intelligence, and LiDAR is the most critical differentiator in this smart competition.

The debate over whether LiDAR should be retained is one of the biggest technical disagreements in the current smart car industry. The two camps are diametrically opposed, leaving many consumers torn when choosing a car.

One camp, represented by Tesla and XPENG, is the pure vision faction. They hold that LiDAR solves a problem that does not need solving: it is expensive, yet its role in actual driving is very limited. High-resolution cameras plus AI, they contend, are sufficient to serve as the car's eyes.

The other camp, represented by Waymo, Huawei, NIO, and Li Auto, advocates a multi-sensor fusion approach in which LiDAR and cameras work together. Their reasoning is that, in pure vision solutions, camera recognition rates drop sharply under difficult conditions such as backlighting, darkness, heavy rain, and dense fog. They therefore consider LiDAR an essential hardware foundation for L3 and higher-level autonomous driving.

Manufacturers have their own arguments, leaving consumers even more confused when buying a car: Should they choose a model with LiDAR? How many units? Is the extra tens of thousands of yuan worth it?

Is LiDAR a safety redundancy or a 'smart tax'? This question increasingly troubles ordinary car owners and has become an unavoidable topic in the entire automotive industry.

Let's review the technological development history of LiDAR to gain a clearer understanding of this technology. Perhaps this will help us better understand what we truly need.

The Evolution of LiDAR: Enhancing the Car's Vision

Bats cannot rely on eyesight in the dark, yet they navigate freely and avoid obstacles by emitting ultrasonic waves and judging distance and direction from the echoes. A car has no eyes at all, so how does it determine the distance and nature of the objects around it? LiDAR's laser light plays the role of the bat's ultrasound.

In 1960, the world's first practical ruby laser was developed, which made laser ranging possible. A LiDAR emits laser pulses and measures the time each pulse takes to bounce back after hitting an obstacle. From that round-trip time it calculates the precise distance between the device and the obstacle, and by repeating the measurement in many directions it gradually reconstructs a 3D map of the surrounding environment.
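The ranging principle described above comes down to one formula: distance equals the speed of light times the round-trip time, divided by two. The sketch below is only an illustration of that idea, not any vendor's implementation. The function names and the angle convention (azimuth measured in the horizontal plane, elevation measured up from it) are assumptions made for this example.

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # speed of light in vacuum


def tof_range_m(round_trip_s: float) -> float:
    """Distance to the target from the round-trip time of one laser pulse."""
    # The pulse travels to the obstacle and back, so halve the path length.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def to_cartesian(range_m: float, azimuth_deg: float, elevation_deg: float):
    """Turn one range measurement plus its beam angles into an (x, y, z) point.

    Hypothetical convention: x points forward, y to the left, z up.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    horizontal = range_m * math.cos(el)
    return (
        horizontal * math.cos(az),
        horizontal * math.sin(az),
        range_m * math.sin(el),
    )


# A pulse that returns after one microsecond hit something about 150 m away.
distance = tof_range_m(1e-6)
point = to_cartesian(distance, azimuth_deg=0.0, elevation_deg=0.0)
```

Repeating this for every beam direction in a sweep yields the set of 3D points usually called a point cloud.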

This powerful ranging and perception capability was initially used only in scientific research laboratories and major national projects. For example, NASA's Apollo 15 lunar mission carried a laser altimeter that mapped the lunar surface's topography from orbit, giving humanity a far more detailed picture of the moon's landscape than it had before.

With the miniaturization of microelectronics, LiDAR finally became the 'eyes' of civilian devices. Its evolutionary journey has been about refining these electronic eyes to be ever more perfect.

LiDAR first entered the industrial sector. Starting in the 1980s, companies in manufacturing powerhouses like Germany and Japan began using single-line 2D LiDAR for industrial automation. This first-generation civilian LiDAR could scan only a single line, collecting distance data within one plane. Even so, it proved useful for autonomous navigation and obstacle avoidance in transport vehicles, as well as for monitoring warehouse logistics channels.

At the beginning of the 21st century, an event completely transformed the fate of LiDAR—the DARPA Grand Challenge for autonomous vehicles.

To enable autonomous vehicles to perceive complex 3D environments in real time and with precision, participating teams urgently needed a powerful sensor. In 2005, the Stanford team became famous overnight when its laser-scanner-equipped vehicle won the race, and the same event saw the debut of Velodyne's multi-beam mechanical rotating LiDAR. By the 2007 challenge, five of the six finishing teams used Velodyne's LiDAR, marking the beginning of LiDAR's golden age in the automotive sector.

Why is LiDAR so beneficial for autonomous driving? Early autonomous driving relied on cameras, ordinary sensors, and millimeter-wave radars. The shortcomings in perception capabilities made it difficult for autonomous vehicles to make proactive and high-quality intelligent decisions. LiDAR addressed several key weaknesses:

1. **Long-range detection** gives cars sufficient reaction time. LiDAR can detect objects more than 200 meters away, with later upgrades exceeding 500 meters. At highway speeds, this lets a car spot a damaged trailer or an obstacle on the road early enough to issue a warning and brake in advance.

2. **High precision** catches obstacles that drivers or cameras might miss. LiDAR's ranging accuracy can reach the centimeter level, generating 3D point clouds that capture not only pedestrians and vehicles but also low-lying obstacles such as small debris or potholes. A 'ghost pedestrian' who suddenly steps out, or an uneven road surface that a camera misses, can still be captured by LiDAR. Because LiDAR emits its own light, it does not depend on ambient lighting and keeps working in pitch darkness; proponents add that it also copes with fog and heavy rain better than cameras do, compensating for the limitations of human eyes and cameras.

3. **Fast reaction** enables quicker decision-making. Cameras output 2D images, so algorithms must estimate object distances, which introduces errors and delays in image parsing and data processing. LiDAR outputs distance and 3D data directly, allowing cars to quickly judge the movements of pedestrians and vehicles and make timely decisions to slow down or avoid obstacles.
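The reaction-time argument in point 1 can be made concrete with some simple arithmetic. The sketch below compares a detection range against the distance a car needs to stop. The 1.0 second system reaction time and the 7 m/s² deceleration are illustrative assumptions (roughly a hard stop on dry asphalt), not figures from any automaker, and the function names are hypothetical.

```python
def stopping_distance_m(speed_kmh: float,
                        reaction_s: float = 1.0,
                        decel_mps2: float = 7.0) -> float:
    """Distance covered while reacting plus distance covered while braking."""
    v = speed_kmh / 3.6  # km/h to m/s
    return v * reaction_s + v ** 2 / (2.0 * decel_mps2)


def time_margin_s(detection_range_m: float,
                  speed_kmh: float,
                  reaction_s: float = 1.0,
                  decel_mps2: float = 7.0) -> float:
    """Spare seconds between first detection and the last moment to react.

    A negative value means the obstacle is detected too late to stop.
    """
    v = speed_kmh / 3.6
    needed = stopping_distance_m(speed_kmh, reaction_s, decel_mps2)
    return (detection_range_m - needed) / v


# At 120 km/h, compare a 200 m detection range with a 100 m one.
margin_long = time_margin_s(200.0, 120.0)   # positive: time to spare
margin_short = time_margin_s(100.0, 120.0)  # negative: cannot stop in time
```

Under these assumptions, a car at 120 km/h needs roughly 113 meters to stop, so a sensor that only sees 100 meters ahead leaves no margin at all, while 200 meters leaves a couple of seconds to spare.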

Thanks to these overwhelming technical advantages, LiDAR gained fame in autonomous driving competitions. During this phase, however, it remained exclusive to the test vehicles of Silicon Valley players like Waymo, costing tens of thousands of dollars per unit. It was also bulky: a mechanical rotating LiDAR mounted on the roof looked like an unsightly protrusion, which hurt the vehicle's appearance and made the technology hard to popularize among car owners.

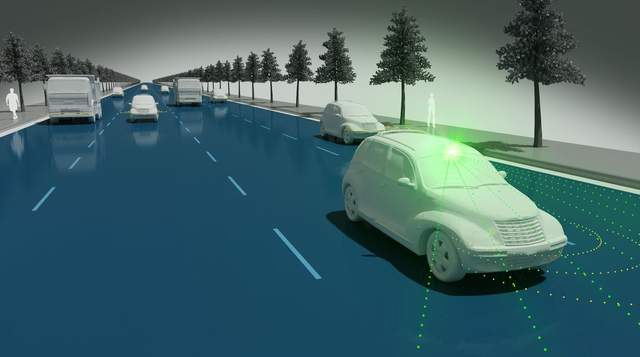

Mass production and in-vehicle installation of LiDAR began after 2016. With the wave of automotive intelligence, these 'car eyes' evolved rapidly, shrinking in size to fit more seamlessly into vehicle bodies. Newer units moved to longer laser wavelengths and reached longer detection ranges, working toward full coverage around the vehicle. As costs fell, more automakers adopted LiDAR, leading to diverse installation schemes such as Waymo's multi-sensor redundancy, Huawei's ADS 4.0 omnidirectional perception architecture, and XPENG's earlier LiDAR + vision dual-safety approach.

However, as LiDAR's presence in the automotive industry grew, a hardware arms race emerged, bringing unprecedented confusion to consumers.

Quantity as Justice? New Problems from the Hardware Arms Race

The count has climbed from one unit initially to two, three, or even four. Some industry organizations predict that by 2032, single-vehicle installations could reach six units: two long-range and four short-range LiDARs. In automotive intelligence, the most visible competition is the number of LiDAR units installed.

As installations increase, consumer confusion grows and the controversies intensify. Consumers' main doubts about LiDAR can be summarized as follows:

**Controversy 1: Are 'eyes' necessary for daily driving?**

Many people drive only short urban commutes on simple roads and rarely use highways or drive at night. For them, spending tens of thousands more on a LiDAR-equipped version seems like paying for features they will not use. Yet without it, they worry that safety will be compromised if something unexpected happens.

**Controversy 2: Do 'eyes' guarantee good vision?**

Even among cars equipped with LiDAR, perception capabilities vary widely. Some vehicles with two units perform worse than others with one. The discrepancy stems from differences in the quality of the individual units. A high-performance LiDAR with a high line count, high frame rate, and fine angular resolution is like having perfect 5.0 vision, while multiple low-performance units resemble two weak-sighted eyes that still cannot see clearly.
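Why angular resolution matters so much can be seen from basic geometry: the gap between neighboring laser points grows with distance, so a coarse sensor puts very few points on a distant target. The sketch below is a rough estimate only. The pedestrian size (0.5 m by 1.7 m), the 100 m distance, and the two resolution values are assumptions chosen for illustration, and the function names are hypothetical.

```python
import math


def point_spacing_m(distance_m: float, angular_resolution_deg: float) -> float:
    """Gap between two neighboring beams where they reach the target."""
    half_angle = math.radians(angular_resolution_deg) / 2.0
    return 2.0 * distance_m * math.tan(half_angle)


def expected_returns(width_m: float, height_m: float, distance_m: float,
                     h_res_deg: float, v_res_deg: float) -> float:
    """Rough average number of laser points landing on a flat target."""
    cols = width_m / point_spacing_m(distance_m, h_res_deg)
    rows = height_m / point_spacing_m(distance_m, v_res_deg)
    return cols * rows


# Same pedestrian-sized target at 100 m, seen by a fine and a coarse sensor.
fine = expected_returns(0.5, 1.7, 100.0, h_res_deg=0.1, v_res_deg=0.1)
coarse = expected_returns(0.5, 1.7, 100.0, h_res_deg=0.4, v_res_deg=0.4)
```

With these numbers the finer sensor lands a few dozen points on the pedestrian while the coarser one lands only one or two, which is the difference between a recognizable shape and a stray dot.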

**Controversy 3: Do more 'eyes' mean safer driving?**

Even with multiple high-performance LiDAR units, accidents may still occur, just as a person with sharp eyes and ears might still trip and fall. For example, a certain brand's model marketed as having all-weather sensing was involved in an accident on a winter afternoon, which was officially attributed to sensor recognition limitations under extreme lighting conditions. More disheartening to consumers, many automakers' LiDAR units are reportedly in use less than 5% of the time, meaning these expensive sensors mostly sit idle and the additional units are little more than decoration.

**Controversy 4: Good vision but poor 'brain'—is the car still safe?**

LiDAR serves as the car's eyes, but if the autonomous driving system's 'brain' is ineffective, even 5.0 vision cannot guarantee safety. That is the case when the algorithms cannot judge accurately and decide proactively, or when supporting infrastructure such as onboard communication and computing chips is inadequate. Tesla, for instance, adheres to a pure vision approach because it considers its FSD algorithm strong enough to support intelligent driving with reduced hardware. Conversely, without back-end fusion algorithms and system-level integration, even numerous high-performance LiDAR units cannot function effectively.

While LiDAR theoretically offers a superior intelligent driving experience, the advertised hardware count does not necessarily translate into real-world performance. Some high-end configurations underperform, and some cars carry extra hardware that brings no real gain. This pushes LiDAR competition to a deeper level, from mere presence and quantity to more fundamental aspects.

Safety Vision: The Invisible Arena of Automotive Intelligence

From a technological standpoint, the sole and ultimate metric for multi-LiDAR solutions is the car's safety vision. Every LiDAR unit must contribute to safety rather than serve as mere decoration.

So, what truly determines the upper limit of safety vision in smart cars? The answer lies in collaborative capabilities.

Just as a person avoids road hazards by promptly seeing dangers, judging how to evade them, and executing maneuvers, a car must coordinate its LiDAR, cameras, millimeter-wave radars, and other sensors so that their signals reach its 'brain' in time for it to decide and act.

Consider the terrifying scenario of a 'ghost pedestrian' suddenly emerging from behind a large vehicle. If the camera's view is blocked and it fails to detect the pedestrian, and the LiDAR scans the pedestrian but its signal does not reach the system promptly, the reaction will be delayed. How well LiDAR works together with the other sensors therefore directly affects safety.

However, synchronizing multiple sensors in time and space, associating their data, and fusing it deeply is challenging, and it tests both algorithm performance and computing power. In a poorly integrated model, the system may underuse its LiDAR and rely almost entirely on other sensors, leaving an expensive configuration ineffective in critical scenarios.
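Time synchronization, the first of these challenges, can be illustrated with a very small example: before LiDAR and camera data can be fused, each LiDAR sweep has to be paired with a camera frame captured at nearly the same moment. The sketch below shows one simple way to do this, nearest-timestamp matching with a tolerance. It is not how any particular automaker does it; the function name, the sample timestamps, and the 20 ms tolerance are all assumptions for illustration.

```python
from bisect import bisect_left


def match_nearest(lidar_ts, camera_ts, max_gap_s=0.02):
    """Pair each LiDAR sweep with the camera frame closest to it in time.

    camera_ts must be sorted in ascending order. A sweep with no camera
    frame within max_gap_s is paired with None, so that fusion can skip it
    instead of combining data from two different moments.
    """
    pairs = []
    for t in lidar_ts:
        i = bisect_left(camera_ts, t)
        # Only the frames just before and just after t can be the nearest.
        candidates = camera_ts[max(i - 1, 0):i + 1]
        best = min(candidates, key=lambda c: abs(c - t), default=None)
        if best is not None and abs(best - t) > max_gap_s:
            best = None
        pairs.append((t, best))
    return pairs


# Hypothetical timestamps in seconds: a 10 Hz LiDAR and a camera that
# drops several frames shortly before the last sweep.
lidar = [0.0, 0.1, 0.2]
camera = [0.005, 0.038, 0.071, 0.104, 0.137, 0.170, 0.260]
pairs = match_nearest(lidar, camera)
```

Here the first two sweeps find a camera frame within a few milliseconds, while the third is left unpaired because the nearest frame is 30 ms away. Real systems typically go further, with hardware-triggered capture and motion compensation, but the underlying problem is the same.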

Currently, leading approaches in the industry, such as Huawei's ADS 4.0, combine LiDAR point clouds and camera feeds through BEV (bird's-eye view) + Occupancy schemes, achieving higher accuracy than pure vision. XPENG's and NIO's proprietary onboard computing platforms synchronize all sensor signals, reducing data conflicts.

Clearly, LiDAR's collaborative perception cannot be achieved through mere procurement and mass installation. It requires long-term R&D and accumulation by automakers in computing hardware, clusters, AI training platforms, underlying architectures, algorithms, and ecosystems. Only on a robust digital foundation can LiDAR's hardware value be fully unlocked, enabling omnidirectional perception and precise decision-making—the core of high-level intelligent driving, which in turn further enhances LiDAR's role.

From LiDAR alone, we can already glimpse how the competition in automotive intelligence has entered deep waters and exhibits a Matthew effect, in which the strong get stronger.

Useless Stacking: What Lies Ahead for LiDAR?

If LiDAR does not equate to intelligent upgrades, why do automakers persist in promoting it? This reflects an awkward situation in today's automotive market.

Intelligence-related parameters have become key references for consumers when choosing cars. Because LiDAR is seen as an indispensable hardware component of the perception system, automakers that neglect it risk being perceived as lacking intelligence, which puts them at a disadvantage in marketing and spec-sheet comparisons. So even though they know the challenges of fusion, collaborative scheduling, and potential idleness, automakers dare not stop stacking hardware.

On the consumer side, ordinary buyers have limited technical understanding of intelligent driving. Software algorithms are invisible and intangible, so their value is hard to appreciate until something goes wrong. In contrast, LiDAR is tangible: its real-time output is intuitive, and ordinary people feel they can easily judge its accuracy and responsiveness. More units create a perception of technological leadership and a fully loaded configuration.

This brings us to the consumer's top concern: Is spending money on a LiDAR-equipped version worth it?

From a technological perspective, LiDAR is not a 'smart tax' but a vision guarantee for autonomous driving systems. However, purchasing LiDAR alone is insufficient. It requires omnidirectional perception 'eyes,' a collaboratively integrated sensor system, a responsive 'brain,' ample computing power, and a reliable network... Only when all these elements combine can LiDAR justify its cost.

Reviewing LiDAR's development history, from an inaccessible national priority to a civilian vehicle accessory, and finally to a core safety component within reach of ordinary consumers, we see how automotive vision has evolved.

Ultimately, what consumers truly want is to maximize intelligence and safety at a reasonable cost. What determines a car's intelligence, safety, and capability are the 'real skills' hidden beneath the hardware: invisible on a spec sheet, yet decisive on the road. These are the true axis of automotive intelligence competition.