DeepSeek Slashes Prices by 90%: The "Price Slayer" Is a Strategy, Not an Identity

04/28 2026

With the launch of V4, sweeping price cuts, and the opening of a fundraising round, DeepSeek is moving out of the realm of technological idealism and becoming a full-fledged business.

On April 24, DeepSeek unveiled the much-anticipated V4 preview after a 15-month wait. Just two days later, it slashed API cache-hit prices across all product lines to one-tenth of their previous levels. The Pro version received an additional limited-time 75% discount, bringing its cache-hit price down to 0.025 yuan per million tokens.

In the same week, 36Kr reported that DeepSeek had opened a fundraising window in mid-April, with Tencent and Alibaba in talks to invest, valuing the company at over $20 billion.

Three major events unfolded within a single week: the release of a new model, a 90% price cut, and the opening of fundraising. This was no mere coincidence. When R1 was released last year, DeepSeek gave the impression of being a technologically utopian endeavor, detached from commercial realities: no fundraising, no commercialization, just a singular focus on creating the best model and open-sourcing it.

However, the week of the V4 release made it clear that DeepSeek has changed. It's not that DeepSeek never wanted to make money; rather, it is finally getting serious about how to do so.

1. What Exactly Was Unveiled with V4?

First, a quick look at the technology. V4 comes in two versions: Pro and Flash. The Pro version has 1.6 trillion total parameters, 49 billion of them activated, and is positioned as the flagship model for complex reasoning, scientific research, and agent tasks. The Flash version has 284 billion total parameters, 13 billion of them activated, and is designed for cost-effective everyday conversation, content creation, and quick Q&A.
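To make the total-versus-activated distinction concrete, here is a minimal Python sketch. The activation ratios use the parameter counts above; the router is a generic top-k softmax gate with a hypothetical eight experts and made-up scores, since this article does not describe V4's actual routing scheme, expert count, or shared experts.

```python
import math

def activation_ratio(active_b: float, total_b: float) -> float:
    """Fraction of parameters used per token (both arguments in billions)."""
    return active_b / total_b

def top_k_route(logits: list[float], k: int) -> list[tuple[int, float]]:
    """Toy top-k gating (illustrative, not V4's router): pick the k
    highest-scoring experts and renormalize their softmax weights to sum to 1."""
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    m = max(logits[i] for i in top)  # subtract the max for numerical stability
    exps = {i: math.exp(logits[i] - m) for i in top}
    total = sum(exps.values())
    return [(i, exps[i] / total) for i in top]

pro = activation_ratio(49, 1600)   # V4-Pro: 49B activated of 1.6T
flash = activation_ratio(13, 284)  # V4-Flash: 13B activated of 284B
print(f"Pro: {pro:.1%} active, Flash: {flash:.1%} active")  # Pro: 3.1% active, Flash: 4.6% active

# Hypothetical router scores for 8 experts; only 2 experts run for this token.
print(top_k_route([0.1, 2.0, -1.0, 1.5, 0.3, 0.0, -0.5, 0.9], k=2))
```

Only the selected experts execute for a given token, which is why per-token compute tracks the activated parameters (49B or 13B) rather than the total.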

Both versions come standard with a 1-million-token context window, large enough to hold the entire Three-Body trilogy in one go, with room to spare.

The million-token context window is the most significant change in V4. Most previous models had context windows of 128K to 256K tokens, so long documents had to be split into segments and queried in batches. V4 raises this limit to 1 million tokens, and it does so through architectural changes rather than by simply throwing more compute at the problem. At a 1-million-token context, V4-Pro's per-token inference compute is only 27% of that of the previous-generation V3.2, and its KV cache footprint is just 10%. In other words, the context window is roughly eight times that of a 128K model, while per-token compute at that length falls by about 73% relative to V3.2.
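The ratios quoted above translate into savings with a little arithmetic. This sketch takes the 27% and 10% figures as given and assumes binary units (128K = 131,072 and 1M = 1,048,576 tokens) for the context comparison; with decimal units the growth is about 7.8x instead.

```python
# Ratios quoted from DeepSeek's V4 report, as cited in this article.
FLOPS_RATIO = 0.27  # V4-Pro per-token inference compute vs V3.2 at 1M context
KV_RATIO = 0.10     # V4-Pro KV-cache footprint vs V3.2 at 1M context

def reduction(ratio: float) -> float:
    """Fraction saved when new cost = ratio * old cost."""
    return 1.0 - ratio

# Assumes binary units: 1M = 1024 * 1024 tokens, 128K = 128 * 1024 tokens.
context_growth = (1024 * 1024) / (128 * 1024)

print(f"context growth vs 128K: {context_growth:.0f}x")              # 8x
print(f"per-token compute saved: {reduction(FLOPS_RATIO):.0%}")      # 73%
print(f"KV cache saved: {reduction(KV_RATIO):.0%}")                  # 90%
print(f"1M-token sessions per unit of cache: ~{1 / KV_RATIO:.0f}x")  # 10x
```

The 73% result is where the "roughly 70%" figure comes from; the last line simply inverts the 10% ratio and ignores other memory such as model weights.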

In terms of performance, DeepSeek candidly states in its technical report: The capability level of the V4 preview version still lags behind GPT-5.4 and Gemini-3.1-Pro, with a development trajectory approximately 3 to 6 months behind leading closed-source models.

Such honest self-positioning is rare in the AI industry, where most companies tend to exaggerate their capabilities. DeepSeek is essentially saying: I'm not the strongest, but I'm one of the most cost-effective among the strongest, and I'm open-source.

2. What Does a 90% Price Reduction Signify?

Two days after the V4 release, DeepSeek cut API cache-hit prices across all product lines to one-tenth of their original levels. The Pro version received an additional limited-time 75% discount, bringing its cache-hit price to 0.025 yuan per million tokens.
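Assuming the limited-time 75% discount applies on top of the one-tenth cut (the announcement as reported here does not spell out how the two combine), the implied pre-cut Pro cache-hit price $p_0$ can be back-calculated:

```latex
p_{\text{final}} = p_0 \times \frac{1}{10} \times (1 - 0.75)
\quad\Longrightarrow\quad
p_0 = \frac{0.025}{0.1 \times 0.25} = 1 \text{ yuan per million tokens}
```

Under that assumption, the promotional Pro price is 2.5% of its pre-cut level, a 97.5% reduction while the promotion lasts.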

How does this price compare within the industry? It is one-tenth to one-fiftieth of what the GPT and Claude series charge. An open-source model with performance close to top-tier closed-source models now offers an API price that is only a fraction of its competitors'.
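To see what a 10x to 50x price gap means for a bill, here is a rough Python calculator. It uses only the 0.025 yuan Pro cache-hit price from the text; competitor prices are modeled as simple 10x and 50x multiples, and the 2-billion-token monthly workload is hypothetical. Cache-miss and output-token prices are not modeled.

```python
PRO_CACHE_HIT_YUAN = 0.025  # yuan per million tokens, post-discount (from the text)

def cost_yuan(tokens: int, price_per_million: float) -> float:
    """Bill for `tokens` tokens at a per-million-token price."""
    return tokens / 1_000_000 * price_per_million

monthly_tokens = 2_000_000_000  # hypothetical workload: 2B cache-hit tokens/month
base = cost_yuan(monthly_tokens, PRO_CACHE_HIT_YUAN)

# Competitors modeled only as 10x and 50x multiples of DeepSeek's price.
for multiple in (1, 10, 50):
    print(f"{multiple:>2}x price: {base * multiple:,.2f} yuan/month")
```

With these inputs the bill runs from 50 yuan a month at DeepSeek's price to 2,500 yuan at the 50x multiple.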

Moreover, DeepSeek made an even bolder statement: it anticipates a significant further price reduction for the Pro version once Huawei's Ascend 950 supernodes ship in volume in the second half of the year. In other words, 0.025 yuan may not be the floor.

The confidence behind these price reductions stems from two sources.

First is architectural efficiency. At a 1-million-token context, V4's per-token compute is only 27% of V3.2's, a reduction of roughly 73% compared to the previous generation.

Second is compatibility with domestic chips. For the first time, DeepSeek's technical report lists Huawei's Ascend alongside NVIDIA in its hardware validation list, and the MoE expert weights use FP4 precision, which is natively supported by the Ascend 950.
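For readers unfamiliar with FP4, the sketch below shows what 4-bit floating-point weights look like. It assumes the E2M1 layout (1 sign, 2 exponent, 1 mantissa bit) used in the OCP Microscaling formats, with one shared scale per block of weights. The article does not say which FP4 variant or scaling scheme V4 or the Ascend 950 actually uses, and the plain float scale here is a simplification (MXFP4, for example, restricts scales to powers of two).

```python
# Non-negative magnitudes representable in FP4 E2M1 (sign bit handled separately).
FP4_E2M1 = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]

def quantize_block(weights: list[float]) -> tuple[float, list[float]]:
    """Quantize one block of weights to FP4 with a shared absmax scale.

    Returns the scale and the dequantized values (scale * fp4_value),
    so the rounding error can be inspected directly.
    """
    amax = max(abs(w) for w in weights)
    # Map the largest magnitude in the block onto FP4's largest value, 6.0.
    scale = amax / FP4_E2M1[-1] if amax > 0 else 1.0
    out = []
    for w in weights:
        mag = abs(w) / scale
        # Nearest representable value; ties go to the smaller magnitude here,
        # unlike hardware round-to-nearest-even.
        q = min(FP4_E2M1, key=lambda v: abs(v - mag))
        out.append(scale * q if w >= 0 else -scale * q)
    return scale, out

block = [0.12, -0.40, 0.03, 0.90, -0.70, 0.20, 0.0, -0.05]  # made-up weights
scale, deq = quantize_block(block)
print(f"scale = {scale:.3f}")
print([round(x, 3) for x in deq])
```

Each weight shrinks from 16 bits (BF16) to 4, a 4x reduction before the small per-block scale overhead, at the cost of the rounding error visible in the output.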

DeepSeek has designed its architecture with domestic chips in mind, and it expects inference costs to fall further once the Ascend 950 ships in volume.

What does this mean for the industry? If you're an AI company that relies on selling APIs, whether OpenAI, Anthropic, Alibaba Cloud, or Tencent Cloud, DeepSeek's pricing will make you very uncomfortable. Your API price is 10 to 50 times DeepSeek's, while the performance gap keeps shrinking. Why would customers pay more?

DeepSeek isn't just engaging in a price war; it's leveraging its technological advantages to set prices at a level that others cannot match.

3. From Technological Utopia to a $20 Billion Valuation

But price reductions are only half of the story. The other half is that DeepSeek has embarked on a fundraising journey.

36Kr reported that DeepSeek opened its fundraising window in mid-April, with Tencent and Alibaba in talks to invest, valuing the company at over $20 billion.

36Kr also disclosed some internal details: the fundraising was triggered by DeepSeek's need for more money to train models with even larger parameter counts and to retain and recruit top talent. Several core R1 authors, including Guo Daya, left for ByteDance, and Wang Bingxuan left for Tencent. The pressure of talent attrition made fundraising urgent.

Before opening the round, Liang Wenfeng held several discussions with the heads of major companies about exclusive investments, but he would not accept their condition of a 20% stake. Ultimately, DeepSeek chose to open fundraising to multiple institutions rather than bind itself to a single major company.

This choice signals that DeepSeek does not want to become an affiliate of any major company; it aims to remain an independent commercial entity.

Last year, with R1, DeepSeek was perceived as a purely idealistic technology project. Liang Wenfeng used profits from High-Flyer Quant to fund a research and development team without fundraising or commercialization, focusing solely on building the best model and open-sourcing it.

However, much has changed in the 15 months from R1 to V4. Talent has been poached, models have grown larger and demand more compute, and the app has been revamped with a paid tier. DeepSeek is transforming from a technological utopia into a company that needs revenue, profits, and a sustainable business model.

Is a $20 billion valuation high?

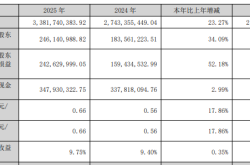

Let's compare: OpenAI's latest valuation is $852 billion, and Anthropic's is $380 billion, so DeepSeek's $20 billion is less than 2.5% of the former and less than 5.3% of the latter. Yet DeepSeek's V4 already approaches the flagship models of both companies in multiple evaluations, with API prices at only a fraction of theirs.

From a cost-effectiveness perspective, $20 billion may be one of the most undervalued prices in the current AI industry.
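The valuation percentages in this comparison are easy to verify from the figures cited:

```python
DEEPSEEK_BN = 20  # reported valuation, billions of USD
peers_bn = {"OpenAI": 852, "Anthropic": 380}  # valuations cited above

for name, valuation in peers_bn.items():
    print(f"DeepSeek / {name}: {DEEPSEEK_BN / valuation:.2%}")
# DeepSeek / OpenAI: 2.35%
# DeepSeek / Anthropic: 5.26%
```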

4. The Entire Industry Is Staggering Its Releases to Avoid DeepSeek

Around the V4 release, an interesting phenomenon emerged in China's AI industry: everyone is staggering their releases to avoid launching head-to-head with DeepSeek.

Zhipu AI released GLM-5 early, ahead of the Spring Festival. According to employees, "As soon as rumors spread that DeepSeek would release a model during the Spring Festival, the algorithm team immediately called for an early release." MiniMax employees said that before the hangover from the company's Hong Kong IPO celebration had even worn off, the algorithm team voluntarily returned to their desks early. Tencent released Hunyuan Hy3 on April 23, the day before V4's official launch.

36Kr quoted an industry insider: "Releasing later than DeepSeek with inferior performance would affect the stock price; but not releasing would also affect the stock price. The least impactful approach is to release early."

More interestingly, Tencent Yuanbao's internal goal for 2026 is to eliminate its reliance on DeepSeek and build user mindshare for Tencent's own search brand.

On the other hand, OpenAI's viral AI agent OpenClaw, known in China for its "shrimp-raising" craze, announced on April 26 that its default large model would switch from GPT to DeepSeek V4 Flash.

On one side, Chinese major companies aim to eliminate reliance on DeepSeek; on the other, the world's hottest AI application is embracing DeepSeek. This contrast itself illustrates DeepSeek's position in the industry.

5. The "Price Slayer" Is Not an Identity, But a Strategy

Many refer to DeepSeek as the "price slayer," as if price reductions are its inherent nature. However, if you carefully examine the timing of the V4 release and price reductions, you'll see that this is a carefully designed strategic move.

First, release V4 to show that its technology has not fallen behind, with 1.6 trillion parameters, a million-token context window, and the strongest open-source agent capabilities. Then, cut prices by 90% to set API prices at a level competitors cannot match. Finally, open fundraising at a $20 billion valuation to attract capital from tech giants such as Tencent and Alibaba and prepare for the next round of compute expansion.

With these three moves, DeepSeek's position shifts from "a very strong open-source model" to "an AI infrastructure supplier with technological barriers, price advantages, and capital support."

DeepSeek's technical report includes a noteworthy statement: V4's capability level lags behind leading closed-source models by approximately 3 to 6 months.

In other words, DeepSeek admits it is not the global leader, and its strategy is not to be the strongest. Instead, it aims to approach the performance of the strongest models while pricing at a fraction of theirs. This mirrors the logic behind the rise of China's manufacturing industry: not making the best products, but making products that are 90% as good at 10% of the price.

For enterprises currently paying for large AI models, this may be the most noteworthy development of 2026. When an open-source model emerges with performance close to top-tier closed-source models at a fraction of their price, should you keep paying premium prices to OpenAI or Anthropic?

The answer to this question may redefine the entire AI industry's business model.

DeepSeek aptly quotes Xunzi: "Not seduced by praise, not frightened by criticism, following the path, and maintaining integrity." But starting with V4, it's not just following the path anymore.

It's following the path to commerce.