The Valuation Logic of AI Companies Needs a Complete Update in the DAA Era

05/14 2026


In 2026, the largest technological cycle shift in human history is unfolding.

Recent news from Silicon Valley reveals that OpenAI is gradually abandoning DAU, the core metric that has dominated the internet for two decades, in favor of TPD—Token consumption per day. In internal communications, the product leader bluntly stated: DAU tells us how many people opened ChatGPT, but it doesn't tell us how much value those people created.

This statement strikes at the foundation of DAU.

The valuation formula based on DAU, which has dominated the mobile internet for two decades, operates under an implicit premise: human time is limited, and attention is a scarce resource. Whoever captures more time wins. However, AI is about productivity, measuring labor rather than attention. Agents have torn apart this premise.

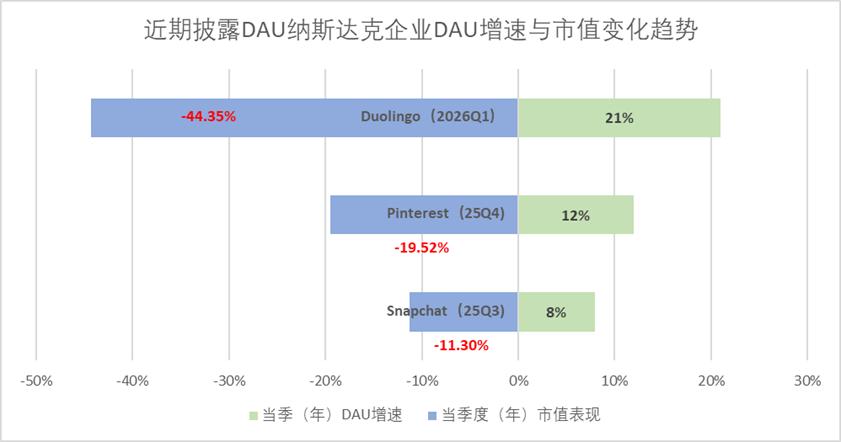

Capital markets are gradually recognizing this change. Since the third quarter of last year, the growth rate of DAU among Nasdaq-listed tech companies has increasingly diverged from their subsequent market performance.

Snapchat's DAU grew 8% in the third quarter of last year, yet its market value shrank by 11.3% in the same quarter. Pinterest's DAU grew 12% in the fourth quarter of last year, but its market value contracted by 19.5% over the same period. Duolingo posted 21% DAU growth in the first quarter of this year, only to see its market value plummet by 44.3%. In each case, the cause was AI technology disrupting the company's business logic.

Yet while DAU is losing its footing, Token counts miss the core of the issue just as badly.

Consulting firm Gartner noted in a recent report that Token consumption is increasingly viewed by AI vendors as a signal of AI scale, adoption, and market leadership. However, this metric does not effectively reflect business value, efficiency, or sustainability.

Token is closer to the essence of value than DAU, yet it remains a cost metric. It measures how much work machines have done, not what they have created.

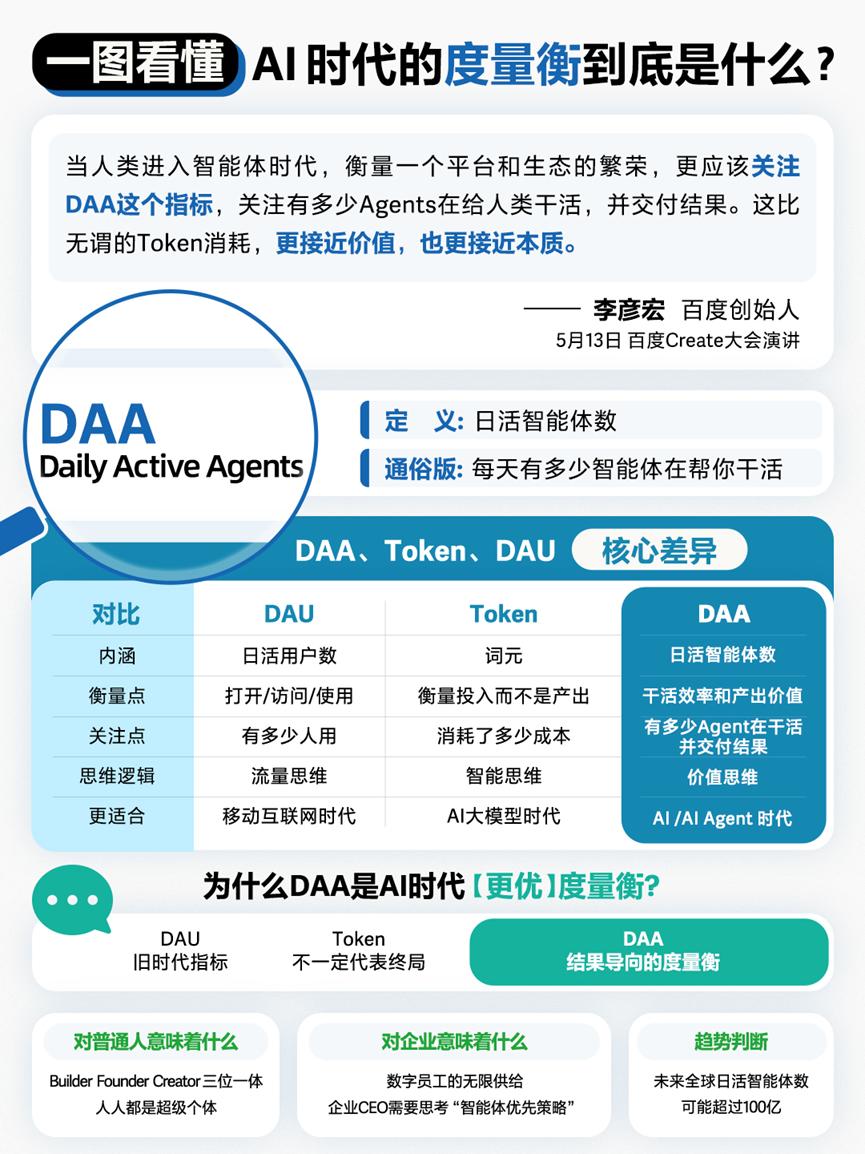

That changed on May 13, 2026, at Baidu's Create conference, when Li Yanhong took the stage and introduced the concept of DAA: Daily Active Agents.

“Entering the age of agents, to measure the prosperity of a platform and ecosystem, we should focus on the DAA metric—how many Agents are working for humans and delivering results. This is closer to value and essence than meaningless Token consumption.” Li predicted that the global number of daily active agents could exceed 10 billion in the future.

In our view, DAA represents a high-dimensional understanding with immense information density. It is not merely an update to a technical metric but the starting point for a new valuation logic.

Just as GDP defines the industrial economy and DAU defines the mobile internet, DAA attempts to define the value scale of the agent economy.

Shortly after Li Yanhong proposed DAA, capital markets responded directly. On May 14, Baidu's U.S.-listed shares closed up 7.55%, surpassing $150, while its Hong Kong-listed shares opened 7% higher, showing strength in both markets. Market reactions are never just a vote for a metric but a pricing of the logic behind it. As the Create conference brought DAA from concept to center stage, investors' buying direction has already placed the first bet on this paradigm shift.

01 From DAU to DAA

Li Yanhong made it clear at the Create conference: DAU is outdated, Token does not represent the final answer, and DAA will be the standard for measuring success in the AI agent era.

This is not just a slogan. It is backed by a complete logical framework.

1. DAU is outdated

For the past two decades, value in the internet world has been defined by human attention. Whoever's app captures more user time wins. DAU, or daily active users, has been the ultimate metric for this logic. Product managers' KPIs revolve around it, investors' models anchor to it, and public companies' annual reports prominently display its growth curve.

But in 2026, this ruler is beginning to bend.

Beyond the inverse relationship between DAU growth and valuation increases, the limitations of DAU become even more apparent when considering AI-native companies.

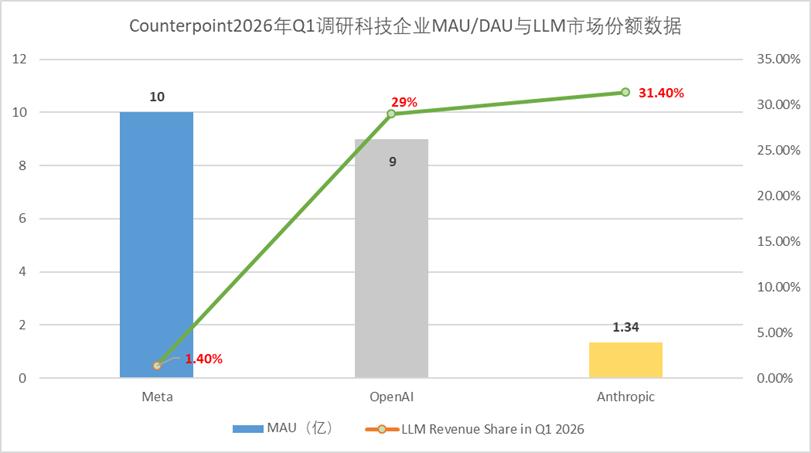

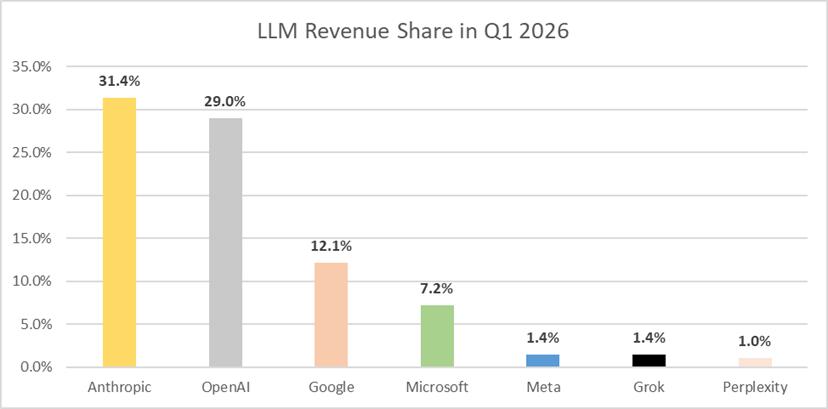

According to the latest research data released by Counterpoint Research, in the first quarter of this year, Anthropic had an average of 134 million active users, while OpenAI and Meta had 900 million and 1 billion users, respectively. However, in terms of LLM market share measured by revenue, Anthropic reached 31.4%, surpassing OpenAI's 29% with roughly one-seventh as many active users. Meta held a mere 1.4% share.
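The gap is easier to see when revenue share is normalized by user base. The sketch below uses only the Counterpoint figures quoted above. The ratio it computes, revenue-share points per 100 million active users, is an illustrative construction of ours and not a metric Counterpoint reports.

```python
# Figures quoted above from Counterpoint Research (Q1 2026).
# "share_per_100m" is an illustrative ratio, not a Counterpoint metric.
vendors = {
    "Anthropic": {"users_m": 134, "revenue_share_pct": 31.4},
    "OpenAI": {"users_m": 900, "revenue_share_pct": 29.0},
    "Meta": {"users_m": 1000, "revenue_share_pct": 1.4},
}


def share_per_100m(users_m: float, revenue_share_pct: float) -> float:
    """Revenue-share percentage points per 100 million active users."""
    return revenue_share_pct / (users_m / 100)


ratios = {name: share_per_100m(**v) for name, v in vendors.items()}

# Print vendors from highest to lowest ratio.
for name, ratio in sorted(ratios.items(), key=lambda kv: -kv[1]):
    print(f"{name:<10} {ratio:6.2f} share points per 100M users")

# How many times larger OpenAI's user base is than Anthropic's.
user_gap = vendors["OpenAI"]["users_m"] / vendors["Anthropic"]["users_m"]
print(f"OpenAI/Anthropic user ratio: {user_gap:.1f}x")
```

On these numbers, Anthropic earns roughly seven times as much revenue share per user as OpenAI, which is the divergence a DAU-based model cannot explain.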

When value creation shifts from traditional models to agents, the old ruler loses its foundation. Interfaces begin to disappear, and the attention economy represented by DAU hits a wall.

2. Token is not the final answer

Compared to DAU, Silicon Valley's alternative answer is TPD—Token consumption per day. Token is closer to the essence of value than DAU, but Li Yanhong's judgment goes further: Token does not represent the final answer.

Token is a cost metric. It measures how much work machines have done, not what they have created.

When comparing Jensen Huang's Token theory with Li Yanhong's DAA, one can sense the evolution of value measurement: one focuses more on production costs, while the other focuses more on value delivery.

In March 2026, Gartner, the world's most influential IT research and advisory firm, released an official research report titled 'Token Consumption is a Misleading Metric for Measuring AI Market Leadership.'

Gartner's core conclusions are threefold:

First, Token data among vendors are not directly comparable. Different models consume vastly different numbers of Tokens for the same task, making it technically impossible to align Token counts across vendors.

Second, Token consumption is disconnected from business value. Gartner explicitly states that 'Token consumption occurs early in the AI value chain, long before decisions are made or business outcomes are realized,' reflecting computational activity itself rather than economic or strategic impact.

Third, using Token as a signal leads to misaligned incentives. Gartner advises AI leaders to 'de-emphasize Token metrics and instead evaluate AI through solution capabilities, decision-enabling effects, cost predictability, and quantifiable business outcomes,' explicitly proposing alternative directions—'completed tasks, decision quality, cost predictability, and integration with business processes.'

This is the fundamental difference between DAA and Token. Token asks 'how much was consumed,' while DAA asks 'what was delivered.' Token is a process; DAA is a result. Token measures cost; DAA measures output. The transition from DAU to Token to DAA is a three-stage progression in measurement: from attention, to cost, and finally to value output.
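Gartner's comparability point can be made concrete with a toy example. In the sketch below, two hypothetical vendors deliver the same number of completed tasks while consuming very different token volumes. The vendor names, the `VendorDay` class, and every number are invented for illustration and come from neither Gartner nor Baidu.

```python
from dataclasses import dataclass


@dataclass
class VendorDay:
    name: str
    tasks_attempted: int
    tasks_completed: int
    tokens_per_task: int  # average tokens consumed per attempted task

    @property
    def tokens_consumed(self) -> int:
        """Total tokens burned in the day, whether or not tasks succeeded."""
        return self.tasks_attempted * self.tokens_per_task

    @property
    def tokens_per_completed_task(self) -> float:
        """Token cost of each task that was actually delivered."""
        return self.tokens_consumed / self.tasks_completed


# Hypothetical vendors; none of these numbers come from the article.
a = VendorDay("Vendor A", tasks_attempted=1_000, tasks_completed=900, tokens_per_task=50_000)
b = VendorDay("Vendor B", tasks_attempted=1_000, tasks_completed=900, tokens_per_task=8_000)

for v in (a, b):
    print(
        f"{v.name}: {v.tokens_consumed:,} tokens, "
        f"{v.tasks_completed} tasks delivered, "
        f"{v.tokens_per_completed_task:,.0f} tokens per delivered task"
    )
```

Ranked by tokens, Vendor A looks about six times larger. Ranked by delivered tasks, the two are identical, and Vendor B is simply cheaper per result.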

3. DAA: The North Star metric for the agent economy

Just as GDP defines the industrial economy and DAU defines the mobile internet, DAA has the potential to become the North Star metric for the entire agent era. The commercial essence of the AI era is not 'humans using tools' but 'agents completing tasks.' When the subject of value creation shifts from humans to agents, the measurement standard must switch from 'how many people came' to 'how many tasks were completed.'

The valuation logic for AI companies must be completely updated accordingly.

02 The Three Dimensions of the DAA Framework

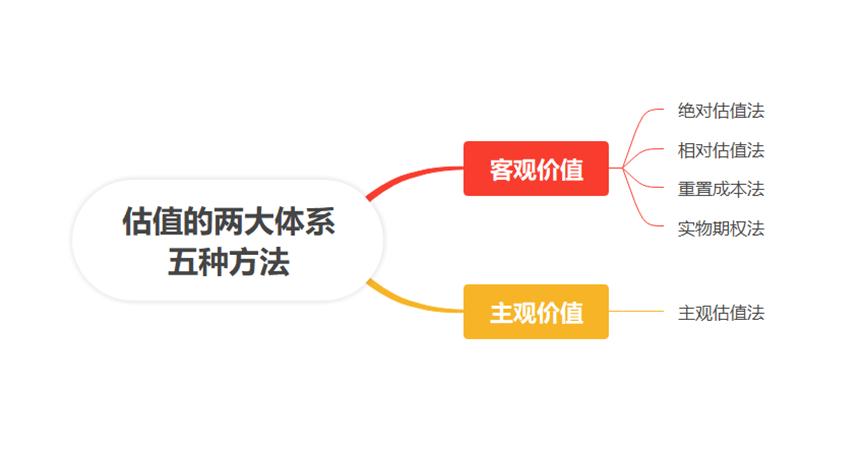

In traditional finance, valuation thought can be boiled down to two distinct systems: the objective value system founded by John Burr Williams and Benjamin Graham, and the subjective value system originating from John Maynard Keynes. Objective value is quantifiable, leading to a more systematic development of the objective value system.

Figure: The two systems and five methods of valuation, from 'Corporate Valuation: Methodology and Intellectual History,' compiled by Jinduan

Building on this foundation, nearly every round of improvement in valuation methods has accompanied a shift in supply-demand relationships. When old mathematical models fail to capture the economic benefits of new business models, new pricing methods emerge. In the internet era, the price-to-sales ratio (P/S) and daily active users (DAU) replaced price-to-book (P/B) as the central pricing metrics. That innovation in valuation models unfolded in three stages:

First, traditional P/B could not measure the asset value of internet companies, so investors estimated potential value based on user numbers. Second, because internet businesses scaled so readily, revenue growth replaced profit growth as the basis for valuation. Finally, different internet companies had varying values per user, leading to the logic of calculating value through DAU and ARPU.
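As a rough sketch of that final stage (the notation is ours and simplifies heavily), revenue $R$ is approximated as users times revenue per user over the same period, and the valuation $V$ applies a price-to-sales multiple to it:

$$
R \approx \mathrm{DAU} \times \mathrm{ARPU}, \qquad V \approx \left(\frac{P}{S}\right) \times R
$$

Here ARPU is assumed to be expressed per daily active user over the period in which $R$ is measured. Under this reading, growth in DAU lifts $V$ only as long as ARPU and the multiple hold steady.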

The three-stage evolution of AI investment corresponds precisely to the unfolding of this logic:

The first stage, from 2023 to 2024, focused on model capability investment. Investors asked about parameter sizes and training data volumes, akin to evaluating steam engines by horsepower. This was a model-centric investment logic.

The second stage, in 2025, focused on application deployment investment. Investors began asking about the number of paying users and deployment scenarios, which was still the 'how many people installed the machine' stage. This was an application-centric investment logic.

The third stage, starting in 2026, marked a turning point. The core questions became: How many agents are delivering tasks? How much value are these tasks creating? This is a DAA-centric investment logic.

As a three-dimensional valuation coordinate, DAA points to a profound societal transformation.

The first dimension is DAA scale. In the consumer market, penetration matters. How many agents are active daily? This is the user reach metric for the agent era. Li Yanhong's prediction of 10 billion refers to this dimension. A single user can activate multiple agents; agents are not substitutes for humans but copies of capabilities.

The second dimension is task completion rate. In the enterprise market, effective labor matters. How many actual results have agents delivered? Whether an agent was activated matters far less than whether it truly accomplished its task.

The third dimension is value per task. In the developer market, economic output matters. How much revenue does each closed-loop task generate? Similar to ARPU in the mobile internet, it measures not the value created by a single user but the economic return of a single task.

Combining these three dimensions—scale, quality, and value—forms a complete valuation coordinate. Its essence is shifting the valuation anchor from attention competition back to productivity creation.
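One way to read the three dimensions is as factors of a single product, by analogy with DAU and ARPU. The sketch below is our assumption about how the coordinate might be combined, since the article gives no formula. The function name, the extra `tasks_per_agent` factor, and every number are hypothetical.

```python
def daily_agent_value(
    daa: float,
    tasks_per_agent: float,
    completion_rate: float,
    value_per_task: float,
) -> float:
    """Estimated value delivered per day.

    value = active agents x tasks attempted per agent
            x share of tasks completed x value per completed task
    """
    if not 0.0 <= completion_rate <= 1.0:
        raise ValueError("completion_rate must be between 0 and 1")
    return daa * tasks_per_agent * completion_rate * value_per_task


# Hypothetical platform: 2 million daily active agents, 5 tasks each,
# 80% of tasks completed, 0.5 currency units earned per completed task.
base = daily_agent_value(2_000_000, 5, 0.80, 0.5)

# Same agent count, but only half the completion rate.
weaker = daily_agent_value(2_000_000, 5, 0.40, 0.5)

print(f"base:   {base:,.0f} per day")
print(f"weaker: {weaker:,.0f} per day")
```

Two platforms with identical DAA can differ by a factor of two in delivered value once completion rate is taken into account, which is why scale alone does not fix the valuation.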

This framework is already being priced by the market.

The traditional SaaS per-seat pricing model is being disrupted precisely because its pricing is detached from this coordinate. Enterprises pay for 'usage rights' rather than 'actual value.' Where Anthropic charges for usage per million Tokens, vendors like Sierra charge directly on problem-solving effectiveness and share in the value created. The new framework pulls the valuation system from 'subjective judgment' back to 'actual output.'

Anthropic CEO Dario Amodei disclosed at the 2026 Developer Conference that the company's revenue and usage volume grew 80-fold on an annualized basis in the first quarter of 2026. ARR surged from approximately $8.7 billion at the end of 2024 to $30 billion by April 2026, surpassing OpenAI's $25 billion annualized revenue. Salesforce took about 20 years to reach $30 billion in annual revenue; Anthropic achieved it in less than three.

Figure: LLM Revenue Share in Q1 2026, Source: Counterpoint

The core product driving this growth curve is Claude Code, a programming agent whose logic is not 'calling a model' but 'getting the job done.' Customers pay for delivery, not for API calls.

03 Why Baidu Proposed DAA

Baidu founder Li Yanhong's early proposal of DAA is no accident but an insight born from practice and an inevitable vision within a high-dimensional cognitive framework.

1. The spiral of cognition

Reviewing Li Yanhong's public statements over the years reveals a clear evolutionary arc.

At the 2024 WAIC conference, he proposed that 'in the AI era, the goal is not to launch a "super app" but to create millions of "super useful" applications,' becoming the first to question the core evaluation logic of the mobile internet. In September of the same year, he publicly asserted that 'agents will be the most mainstream form of AI applications.'

In 2025, he published a signed article in the People's Daily, predicting that '2025 may become the first year of the AI agent boom.' At the same year's Baidu World Conference, he officially proposed 'internalizing AI capabilities' and 'emerging effects.'

Li Yanhong's AI predictions have been repeatedly validated by industry developments. In 2024, when the industry was still chasing model parameters, he was the first to propose 'competing on applications rather than models' and preemptively bet on agents, which has now become an industry consensus.

Later came the formal establishment of the DAA metric at Create 2026. From competing on applications and betting on agents to proposing AI internalization and emerging effects, and finally defining DAA—this is a cognitive spiral spanning over three years of continuous deepening.

2. Transcendence of practice

The proposal of DAA stems from Baidu's own large-scale deployment of agents.

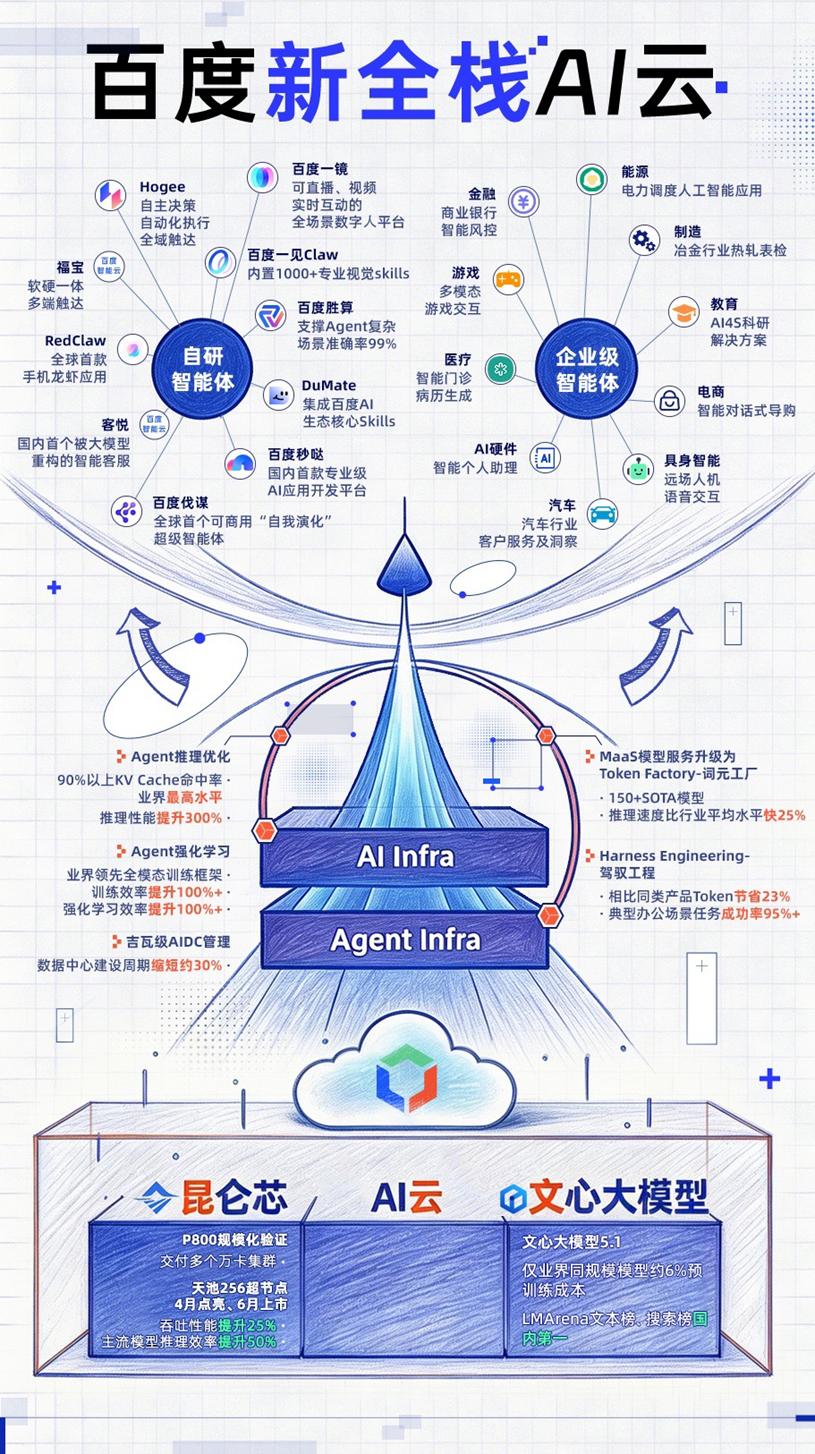

At this year's Create conference, Baidu unveiled an upgraded full-stack agent matrix. Without listing them all, let's examine a few representative examples:

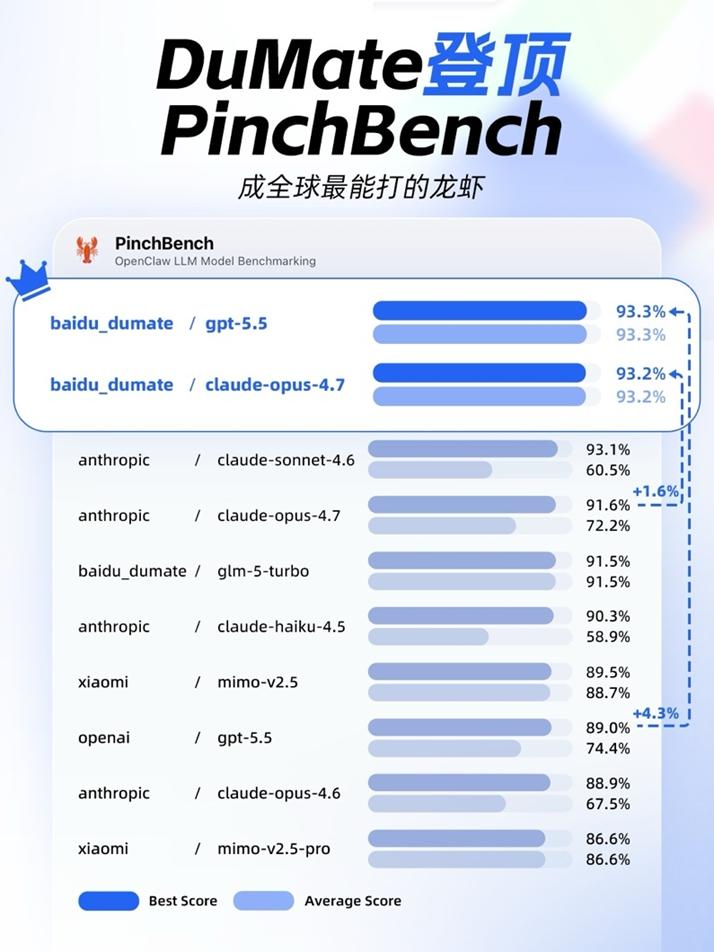

The general-purpose agent DuMate can perceive screens, operate software, process files, and integrate business systems. With a single verbal instruction, it autonomously completes tasks and delivers results. Baidu's core premium capabilities, such as Search AI API, Miaoda, Famous, Baike, and others, can be seamlessly integrated into the unified entry point of DuMate. In the authoritative PinchBench evaluation, DuMate ranked first globally with a 93.3% success rate, surpassing OpenAI.

The no-code platform Miaoda has been upgraded to version 3.0, launching an enterprise edition and introducing APP generation capabilities. To date, it has assisted global users in creating over 1 million AI applications, with 81% of users having no programming background. At the event, a second-grader from Wenzhou created a campus umbrella-sharing mini-program with a single sentence. This demonstrates how agents are transforming non-technical users into developers.

The self-evolving decision-making agent Famous 2.0 has been released, designed for business experts to make globally optimal decisions. At Qingdao Port, it enhanced automated terminal efficiency (see image below). In Ordos, its traffic control agent reduced average vehicle delays by 18% in the main urban area. At CITIC Baixin Bank, it doubled the efficiency of risk control feature mining. This is an agent that has learned to operate in the real world.

Huiboxing has evolved into "Baidu Mirror," expanding from live-streaming e-commerce to a full-scenario digital human platform. Through its e-commerce agent, merchants can generate professional-quality promotional videos at minimal cost. This means that advertising blockbusters previously requiring million-dollar budgets are now accessible to even the smallest brands.

From these examples, the common direction of Baidu's agent product matrix is clear: enabling agents to perform tasks and deliver results. The proposal of DAA serves first as a name for Baidu's own practical achievements.

3. New full-stack foundation

The third source of confidence for DAA lies in Baidu's years-long investment in its "chip-cloud-model-agent" new full-stack infrastructure.

Agents do not emerge in a vacuum. They require a complete supporting infrastructure, with chips, cloud computing, and foundational models all needing adaptation for this new species. Baidu has been building along this chain for a considerable time.

Let's start with chips. The Kunlun Core P800 has established China's first self-developed 30,000-card cluster, successfully operating in real training and inference scenarios. It has achieved deployments of over 10,000 units across the internet, finance, manufacturing, education, and energy sectors. This achievement, known in the industry to be extremely challenging, was accomplished quietly by Baidu. The upcoming 256-card version will further boost inference efficiency by 50%. J.P. Morgan gives Kunlun Core an independent valuation of $40-49 billion, and the company has initiated IPO counseling for the STAR Market.

Moving up to the cloud layer, Baidu Intelligent Cloud holds over 40% market share in China's AI cloud full-stack services, ranking first for five consecutive years. In 2025, its full-year AI cloud revenue grew 34% YoY, with Q4 subscription revenue for AI high-performance computing facilities surging 143%. In the embodied AI cloud market, Baidu also leads with 35% share. At this conference, Baidu Intelligent Cloud fully upgraded to a new full-stack AI cloud for large-scale agent applications, with comprehensive enhancements to both Agent Infra and AI Infra.

Cloud computing is not merely a compute resource business—it forms the underlying foundation for large-scale agent deployment. According to 2025 public tender data for large models, Baidu Intelligent Cloud ranked first for two consecutive years in both project count and contract value.

At the model layer, the Wenxin large model 5.1 achieved leading performance at approximately 6% of the pre-training cost of comparable industry models, topping China's LMArena search rankings.

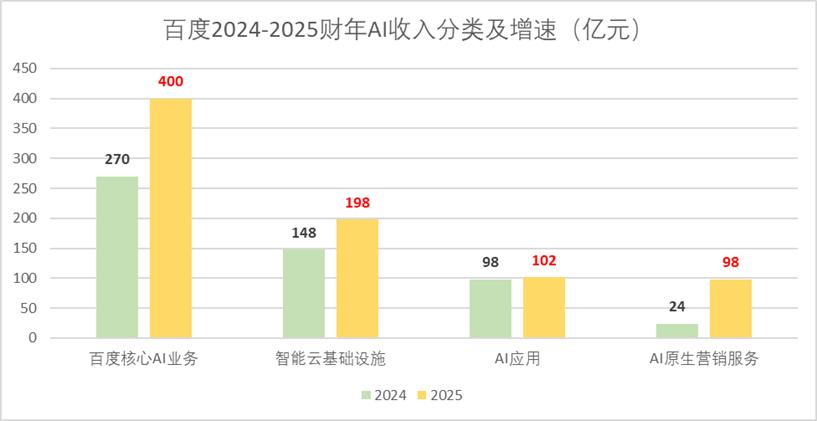

Financial metrics provide further validation. In 2025, Baidu's full-year AI business revenue reached RMB 40 billion, with AI revenue accounting for 43% of general business revenue in Q4. Citigroup projects 45% YoY growth in Q1 AI cloud infrastructure revenue, with AI-driven revenue exceeding 50% for the first time. Macquarie expects over 40% Q1 growth in AI cloud infrastructure and raised its 2026 EPS forecast for Baidu by 9%.

Each of these metrics could warrant a separate research report. Together, they convey a single message: agents do not emerge in isolation—they grow from the layered foundation of chips, cloud, and models. Baidu has deep roots in each layer, reaching a point where competitors have moved from incomprehension to inability to keep pace.

Notably, Baidu's Hong Kong shares have surged over 70% in the past year, leading Chinese concept stocks, yet the current stock price remains well below the target prices set by multiple institutions using SOTP valuation: CICC raised its US/HK targets to $205/HK$196, Macquarie maintains an Outperform rating, and Benchmark sets a $215 target.

Baidu, valued under old paradigms, has significant revaluation potential. As markets begin reassessing the company through DAA's new lens, its valuation benchmark will shift from classical AI's cyclical fluctuations to agent ecosystem value creation.

Baidu has continuously evolved and navigated multiple industry cycles, a trait embedded in the DNA of both its founder and this veteran tech giant. While studying at Peking University in 1990, Li Yanhong wrote "artificial intelligence" in his notebook. In 2013, he established China's first deep learning research institute in Silicon Valley, making Baidu the first Chinese internet company to elevate deep learning to core technological innovation status.

Key milestones followed: discovering scaling laws in 2017, releasing the self-developed Kunlun chip and debuting autonomous driving in 2018, launching China's first knowledge-enhanced large model in 2019, premiering Wenxin Yiyan in 2023, pioneering agent applications and embracing MCP in 2024, and betting early on agents—each strategic move was initially non-consensus but ultimately drove industry consensus.

04 Conclusion: The Generational Shift in Valuation Paradigms Waits for No One

Li Yanhong predicts the global daily active agent count could exceed 10 billion.

Today, Meta leads global DAU with over 3.4 billion. In the future, agent activity will explode in scale—a single user may run multiple agents simultaneously, as agents serve as capability copies rather than human replacements. Within years, we'll see the agent economy dwarf the mobile internet's scale.

This projection's industrial significance lies in how agents will create enormous value, swelling economic totals. DAA serves as the core metric for measuring this new prosperity.

The establishment of standards is never purely technical. Throughout tech history, from telegraphy's baud rate to the internet's bit rate, standard battles ultimately reflect industrial power struggles. Currently, OpenAI promotes TPD (Token consumption per day) as its key metric, attempting to make "token consumption" the next universal valuation language. Baidu counters with DAA, targeting a more fundamental layer: task closure for digital workers (agents). These paths represent different business logics: TPD remains at the model cost layer, while DAA operates at the agent value layer.

In investing, a proven pattern repeats: consensus yields only beta returns, while alpha opportunities emerge from non-consensus positions. When something becomes universal consensus, its valuation is typically fully priced. True investment giants emerge during phases of disbelief and incomprehension.

DAA represents precisely such a new non-consensus.

From attention metrics to productivity, from DAU to DAA—one era ends, another begins. And generational shifts in valuation paradigms wait for no one.

This article is based on publicly available information and serves for informational exchange only, not constituting investment advice.