NVIDIA GTC 2025 Keynote: A Summary of Core Content

03/25 2025

NVIDIA's annual GPU Technology Conference (GTC) has convened once again, showcasing the company's AI initiatives and attracting tech industry heavyweights and academic institutions, including Meta, OpenAI, and UC Berkeley. Numerous automotive intelligence companies from China and abroad, such as Li Auto (Lixiang), Xiaomi, SenseTime, Rivian, and Wayve, also attended. During the two-hour keynote, Jensen Huang highlighted the broad range of AI applications running on NVIDIA systems, covering the company's work in autonomous vehicles, advanced wireless networks, and robotics. He also unveiled NVIDIA's product roadmap for the next two years.

This article summarizes the key points of Jensen Huang's GTC 2025 keynote, outlining NVIDIA's AI ecosystem and offering reflections on the AI applications and developments within it.

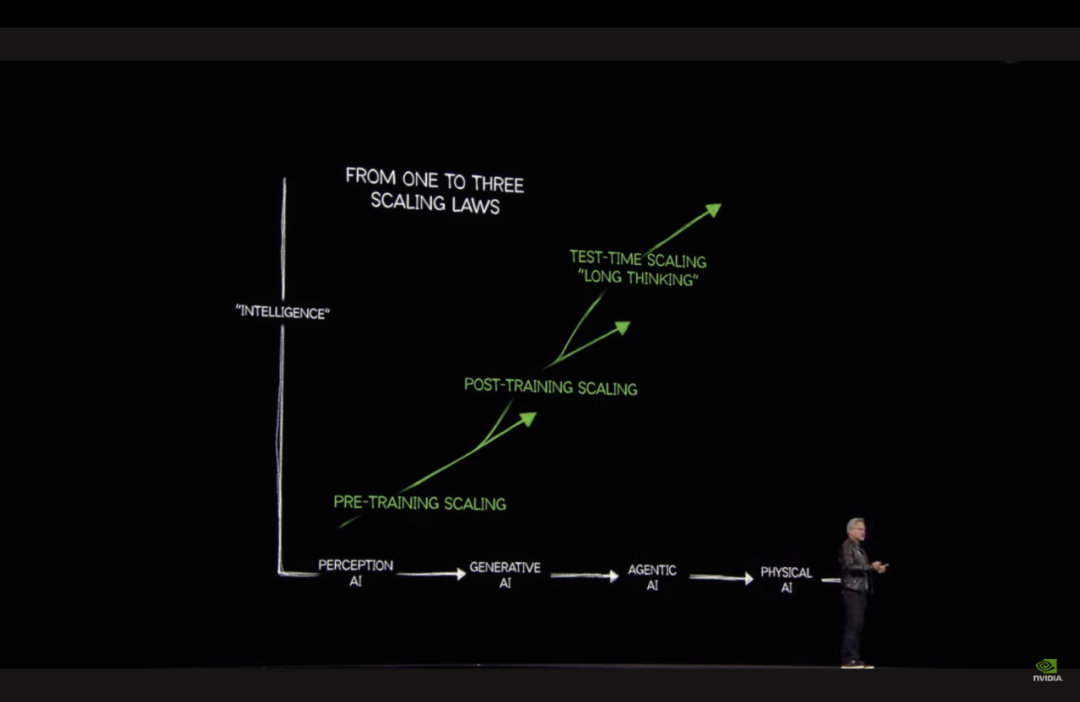

'Tokenizing' Everything: AI as the Digital Era's Engine

In the digital age, information is encoded as binary 0s and 1s. Now we stand at the forefront of generating 'tokens,' the fundamental building blocks of AI, which open up a world of new possibilities. These tokens are transforming many fields:

- Turning images into scientific data, charting alien atmospheres to guide future explorers.

- Converting raw data into forward-looking predictions, preparing humanity for the next challenge.

- Decoding and reconstructing physical laws, propelling us to greater speeds and distances, exemplified by NVIDIA's Physical AI for autonomous driving and robotics.

- Cracking and applying the code of life, uncovering hidden dangers before diseases manifest.

From Agentic AI, which generates digital information, to Physical AI, which interacts with the physical world, AI has become the engine of the digital era.

AI Factory: A Paradigm Shift in Computing

With everything being 'tokenized,' the AI Factory is driving a revolution in hardware. The question is: what kind of AI computing units do we need?

From retrieval to generation: the AI Factory is no longer a mere data storage warehouse; it is an intelligent engine that produces 'tokens' (units of information) in real time through generative AI. This shift creates scalability challenges, because future data centers must handle exponentially growing inference demand, such as Agentic AI workloads that generate thousands of intermediate tokens. To meet that demand, AI chip architectures and cooling technologies must support ultra-large-scale deployments.

In addition, the AI Factory's revenue is directly tied to 'token generation speed × throughput,' which makes energy efficiency (tokens per watt) a core competitive factor.
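The link between energy efficiency and revenue can be made concrete with a little arithmetic. The Python sketch below assumes a facility with a fixed power budget, so throughput is simply power multiplied by tokens per joule (that is, tokens per second per watt). The function names, the power budget, the efficiency figures, and the token price are all hypothetical values chosen for illustration; none of them come from the keynote.

```python
SECONDS_PER_DAY = 86_400


def tokens_per_second(power_budget_watts: float, tokens_per_joule: float) -> float:
    """Throughput of a power-capped facility.

    One watt is one joule per second, so watts * tokens/joule = tokens/second.
    """
    return power_budget_watts * tokens_per_joule


def daily_revenue(power_budget_watts: float,
                  tokens_per_joule: float,
                  price_per_million_tokens: float) -> float:
    """Revenue per day if every generated token is sold at a flat price."""
    tokens_per_day = tokens_per_second(power_budget_watts, tokens_per_joule) * SECONDS_PER_DAY
    return tokens_per_day / 1_000_000 * price_per_million_tokens


if __name__ == "__main__":
    # Hypothetical 1 MW facility, compared at two assumed efficiency levels.
    for label, efficiency in (("baseline", 2.0), ("twice as efficient", 4.0)):
        revenue = daily_revenue(1_000_000, efficiency, price_per_million_tokens=1.0)
        print(f"{label}: {revenue:,.0f} per day")
```

Because the power budget is fixed, doubling tokens per joule doubles revenue in this model, which is why efficiency rather than raw chip count becomes the limiting factor.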

The Advent of the Next-Generation GPU: Blackwell

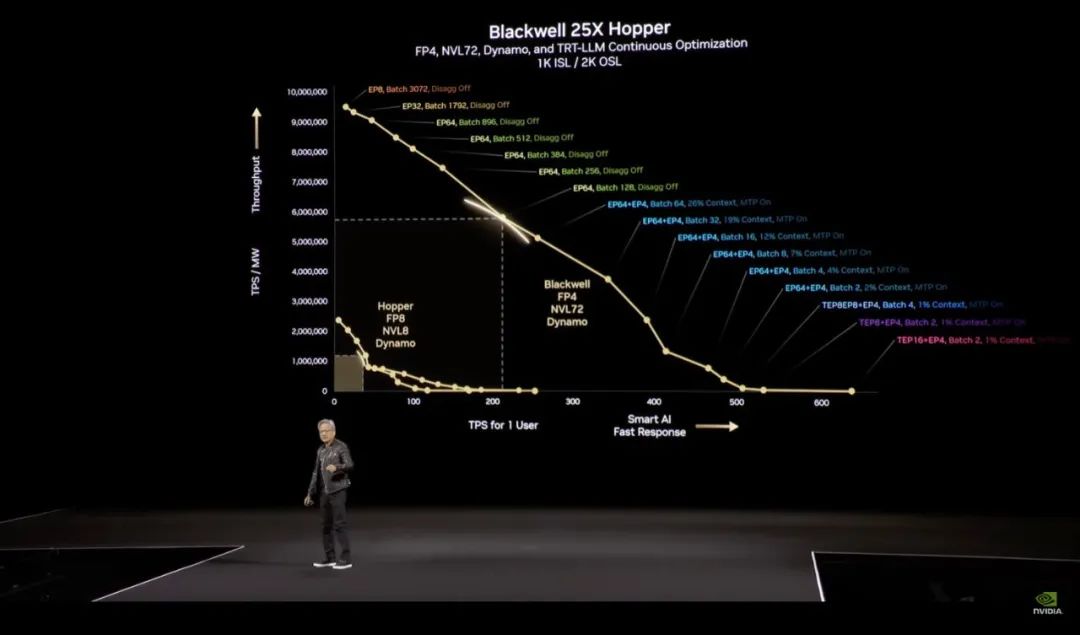

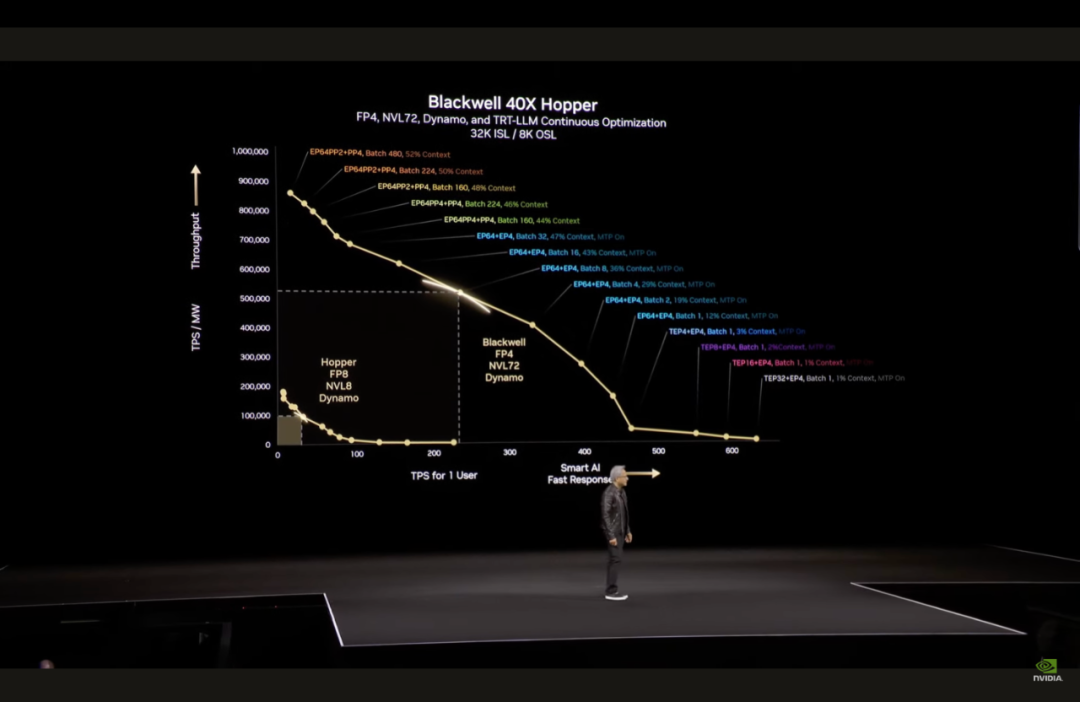

As per tradition, Jensen presented NVIDIA's latest GPU architecture at GTC. The Blackwell architecture integrates 208 billion transistors, delivers up to 20 petaflops of AI computing performance, and offers a claimed 25-fold improvement in energy efficiency over the previous-generation Hopper.

The Blackwell Architecture introduces three key breakthroughs:

- Leap in Inference Performance: NVLink high-speed interconnect technology enables seamless coordination among 72 GPUs, resolving memory and bandwidth bottlenecks during large model inference.

- Dynamic Resource Allocation: Working with the Dynamo operating system, Blackwell flexibly allocates computing power between 'prefill' (context understanding) and 'decode' (generating answers), optimizing AI Factory efficiency.

- 4-bit Floating-Point Quantization: Significantly reduces energy consumption and supports real-time inference for more complex models.
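To see why 4-bit floating point saves memory and energy, it helps to look at how few values the format can represent. The sketch below assumes the E2M1 layout (1 sign bit, 2 exponent bits, 1 mantissa bit), whose representable magnitudes are 0, 0.5, 1, 1.5, 2, 3, 4, and 6, combined with one scale factor per block of values. It is a simplified illustration in plain Python, not NVIDIA's implementation; the function names and the absmax scaling rule are assumptions.

```python
# Magnitudes representable by an E2M1 4-bit float (the sign is stored separately).
FP4_GRID = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)


def quantize_block(values):
    """Quantize a block of floats to signed FP4 grid values plus one shared scale.

    The scale maps the largest magnitude in the block onto 6.0, the largest
    FP4 magnitude. Each element is then rounded to the nearest grid point.
    """
    absmax = max(abs(v) for v in values)
    if absmax == 0.0:
        return 1.0, [0.0] * len(values)
    scale = absmax / FP4_GRID[-1]
    codes = []
    for v in values:
        target = abs(v) / scale
        magnitude = min(FP4_GRID, key=lambda g: abs(target - g))
        codes.append(-magnitude if v < 0 else magnitude)
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the FP4 grid values and the block scale."""
    return [scale * c for c in codes]


if __name__ == "__main__":
    scale, codes = quantize_block([3.0, -6.0, 0.7, 0.0])
    print(scale, codes, dequantize_block(scale, codes))
```

Each element needs only 4 bits plus a share of the block's scale factor, at the cost of rounding error: in the demo, 0.7 is stored as 0.5.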

Most importantly, Blackwell mass production is now set to accelerate.

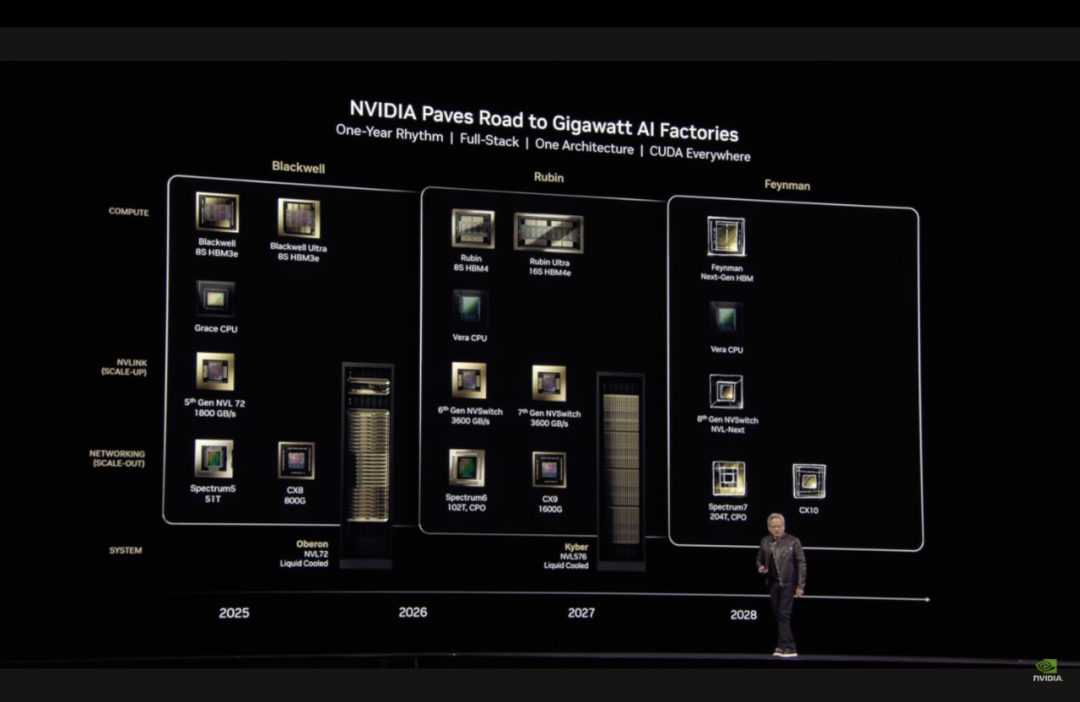

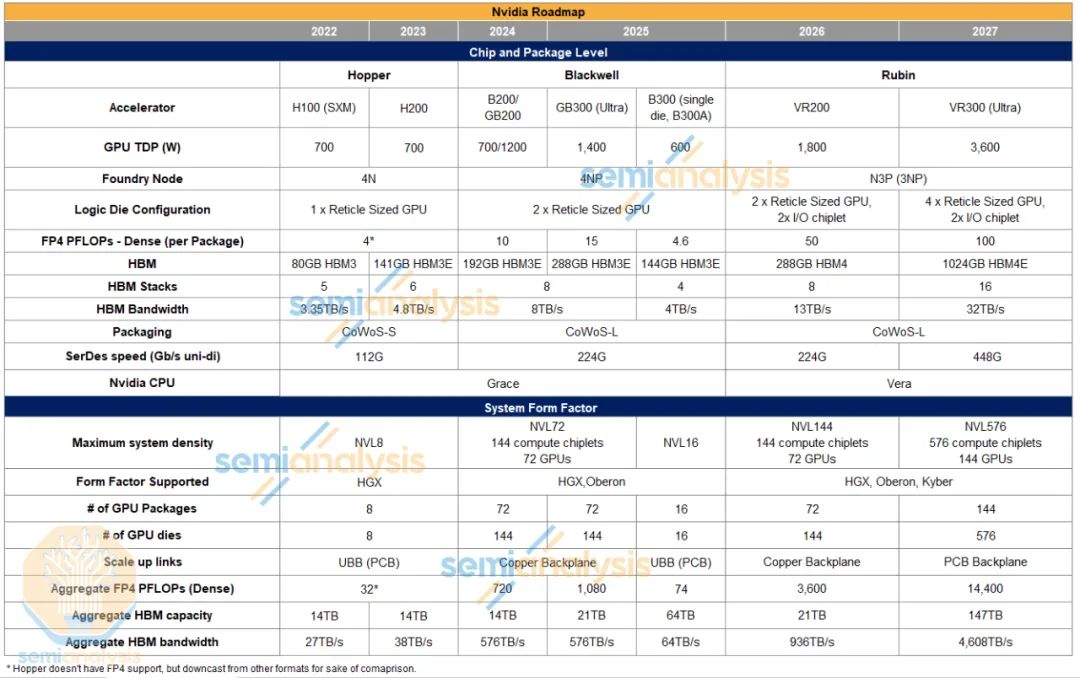

NVIDIA's Future AI Chip Roadmap

- Second half of 2025: Launch of Blackwell Ultra, offering a 50% performance improvement.

- 2026: Introduction of the Vera Rubin architecture (CPU+GPU co-design), featuring an NVIDIA-designed CPU, HBM4 memory, and a silicon photonics network (1.6T CPO), with systems comprising 144 independent NVIDIA GPUs.

- 2027: Rubin Ultra architecture, with 576 GPUs and 15 exaflops of computing power per rack, supporting million-GPU clusters.

- 2028: Introduction of Feynman.

Backed by these increasingly powerful AI chips, NVIDIA aims to keep driving the shift in AI computing paradigms.

Software Ecosystem: CUDA-X and Dynamo

At the conference, NVIDIA highlighted two software platforms that help put its computing hardware to work:

- CUDA-X Acceleration Library: Building upon NVIDIA's CUDA, which serves as the foundation layer providing a GPU programming model, CUDA-X can be understood as the superstructure. It offers domain-specific optimizations, akin to a 'toolbox,' helping developers harness the raw computing power of GPUs to boost industry productivity. CUDA-X covers fields such as scientific computing, 5G, and quantum chemistry, with tools like cuQuantum for accelerating quantum simulations and cuLitho for revolutionizing semiconductor lithography.

- Dynamo Operating System: Designed for the AI Factory, Dynamo dynamically schedules GPU cluster resources to support distributed inference of trillion-parameter models, essentially creating a 'single GPU superbrain.' Dynamo is open-source software, and NVIDIA claims it can improve AI inference efficiency and reduce costs.
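The dynamic scheduling idea, including the split between prefill and decode described earlier, can be illustrated with a toy allocator. The sketch below divides a GPU pool between the two phases in proportion to their pending work and keeps at least one GPU on each side. It is a hypothetical illustration only: `split_gpus` and its parameters are invented for this example and are not part of Dynamo's actual API or scheduling policy.

```python
from typing import Tuple


def split_gpus(total_gpus: int, prefill_tokens: int, decode_tokens: int) -> Tuple[int, int]:
    """Split a GPU pool between prefill and decode workers.

    The split is proportional to the pending token counts of each phase,
    clamped so that both phases always keep at least one GPU.
    Returns (prefill_gpus, decode_gpus).
    """
    if total_gpus < 2:
        raise ValueError("need at least two GPUs to serve both phases")
    if prefill_tokens < 0 or decode_tokens < 0:
        raise ValueError("pending token counts must be non-negative")

    pending = prefill_tokens + decode_tokens
    if pending == 0:
        prefill_gpus = total_gpus // 2  # idle system: split evenly
    else:
        prefill_gpus = round(total_gpus * prefill_tokens / pending)

    # Never starve either phase completely.
    prefill_gpus = max(1, min(total_gpus - 1, prefill_gpus))
    return prefill_gpus, total_gpus - prefill_gpus


if __name__ == "__main__":
    # A hypothetical 72-GPU pool under a decode-heavy load.
    print(split_gpus(72, prefill_tokens=1_000, decode_tokens=3_000))
```

Calling the function again whenever the queue lengths change is the simplest form of the rebalancing described above; a production scheduler would also have to account for the cost of moving model state and cached context between workers.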

Robotics: Breakthroughs in Physical AI

During the GTC keynote, Jensen Huang announced a new enterprise reasoning model based on Llama, named NVIDIA Llama Nemotron Reasoning. Described as an 'incredible new model that anyone can run,' it is part of the NVIDIA Nemotron series, is designed to support Agentic AI development, and, according to NVIDIA, outperforms DeepSeek.

In Jensen's roadmap, Physical AI holds immense potential. To accelerate its adoption, NVIDIA released GR00T N1, a foundation model for humanoid robots. Built on generative AI and reinforcement learning, GR00T N1 offers multi-task generalization. Because extensive training data can be generated through Omniverse digital twins, robots can be deployed quickly.

NVIDIA says GR00T N1 features a 'dual-system architecture' that enables 'fast and slow thinking,' inspired by human cognitive processes. GR00T N1 is now open source, so developers can customize robot skills using the GR00T ecosystem, making robot building as straightforward as smartphone development.

Industry Collaboration and Future Vision

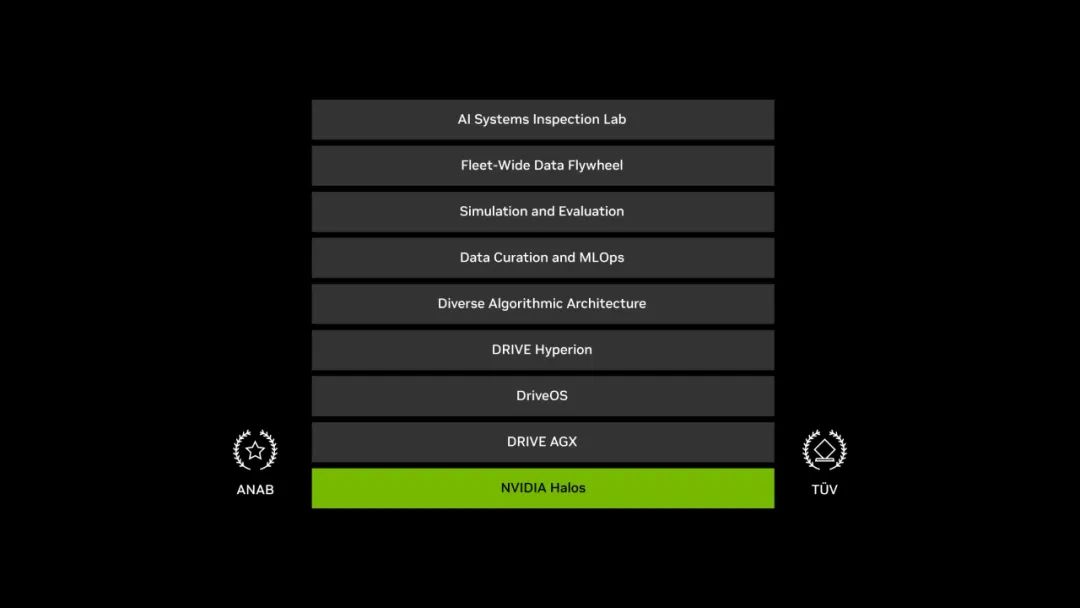

For the automotive industry, NVIDIA provides a comprehensive suite of solutions covering cloud-based algorithm training, virtual simulation and validation, and in-vehicle products. Jensen Huang did not elaborate much on this at GTC; readers can refer to our previous article 'What is AI Giant NVIDIA Doing in the Automotive Field?' for more details.

NVIDIA did, however, introduce NVIDIA Halos, a full-stack integrated safety system for autonomous vehicles. Halos unifies NVIDIA's AV safety development technologies from the cloud to the vehicle and simplifies the autonomous driving development process. Jensen also announced that General Motors (GM) has joined NVIDIA's ecosystem and will adopt its full suite of solutions (training, simulation, in-vehicle chips) to advance autonomous driving.

Edge Computing and Beyond

- Edge Computing: Collaborating with Cisco and T-Mobile to build AI edge networks, optimizing intelligent scheduling of 5G base stations.

- Digital Twins: The Omniverse platform aids in designing AI factories by simulating energy consumption, heat dissipation, and network topology, reducing risk in data center construction projects worth trillions of dollars.

- Quantum Computing: Introducing the CUDA-Q quantum-classical hybrid computing framework to accelerate algorithm research.

Core Insights

Based on NVIDIA's roadmap and vision:

- AI Industrialization: Computing is shifting from 'general-purpose' to 'specialized,' with the AI Factory becoming the core of new infrastructure.

- Energy is King: Future data center revenue will be determined by energy efficiency, with liquid cooling and silicon photonics technology being crucial.

- Robotics Explosion: Physical AI combined with generative training heralds the first year of humanoid robot commercialization, addressing global labor shortages.

NVIDIA is driving a full-stack AI revolution, from the cloud to the edge and from data to the physical world, through a trinity of 'chips + software + ecosystem.' In summary, NVIDIA is making AI and robotics accessible to all.


References:

NVIDIA GTC 2025 keynote presentation PPT and video