Data Revolution: How Physical AI Is Reshaping the Future of Large AI Models

03/27 2025

In the realm of artificial intelligence, a quiet yet profound transformation is underway. Once-dominant general large models are gradually being supplanted by more specialized and precise vertical large models. This shift underscores a redefinition of data's role and value as the "fuel" of AI. With issues such as AI hallucinations and data bias emerging, constructing end-to-end vertical large models based on multimodal data from the physical world has become an inevitable path for industrial progress.

Dilemma of General Large Models: The "Cognitive Ceiling" of Internet Data

The "Digital Cocoon" of General Models

The success of general large models like ChatGPT and GPT-4 rests on a "brute force" approach: scaling up on internet text and image data. These models combine enormous parameter counts with text drawn from books, webpages, code, and more to develop powerful language understanding and generation capabilities. However, this training paradigm, which relies on static data, faces three significant bottlenecks:

Semantic Distortion

The internet is rife with outdated, incorrect, or even malicious information, leading to frequent erroneous conclusions in professional fields like healthcare and law.

Scenario Disconnection

General models lack real-time perception of the physical world and cannot grasp real-world rules, such as "stopping at red lights" or "slowing down on wet roads," making them unsuitable for applications like autonomous driving and robot control.

Logical Breakdown

Textual data alone cannot fully capture the causal relationships of the physical world. For instance, when asked "How to heat an egg in a microwave," a model might dangerously suggest "placing it directly in the microwave," overlooking the fact that steam building up inside the shell can make the egg explode.

The "Original Sin of Data" in AI Hallucinations

A 2024 study by Stanford University reportedly found that general large models have an error rate of up to 37% in complex tasks, with 62% of those errors stemming from biases or omissions in training data. In medical diagnosis, for example, one well-known model's misdiagnosis rate in clinical cases is more than twice the average for human doctors, because its training data relies too heavily on published papers and lacks dynamic updates from real clinical scenarios.

This "original sin of data" has sparked industry reflection: General large models are essentially "internet memories" rather than "real-world decision-makers." They must transcend the limitations of the digital world and seek answers from real-time physical world data.

Physical AI Constructs "Digital Twin" Capabilities with Multimodal Data

Dimensional Upgrade of Data: From "Single Modality" to "Integrated Sensing, Computing, and Reasoning"

An industry-leading AI model provides a groundbreaking case:

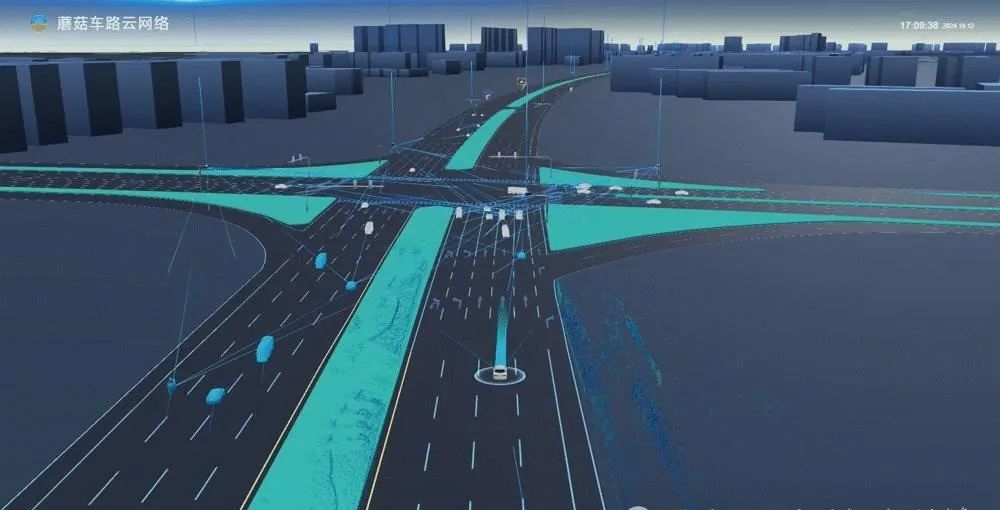

Multi-Source Data Fusion

Integrates data from roadside cameras, in-vehicle sensors, weather satellites, and the Internet of Vehicles to construct a city-level "digital twin" network.

Real-Time Dynamic Updates

Synchronizes data from the physical world every 10 milliseconds, keeping model decisions aligned with real-world conditions at near-zero latency.

Edge + Cloud Collaboration

Edge computing handles urgent tasks (like obstacle avoidance in autonomous driving), while cloud computing optimizes global strategies (like traffic light scheduling), achieving a harmonious balance between efficiency and accuracy.
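The edge and cloud split described above can be illustrated with a minimal Python sketch. Everything in it is a hypothetical assumption made for illustration: the `Task` fields, the `EDGE_DEADLINE_MS` threshold, and the `route` function do not come from any specific system. The idea is simply that a task stays on the edge when it is both urgent and answerable from local data, and goes to the cloud otherwise.

```python
from dataclasses import dataclass

# Hypothetical threshold: decisions needed faster than this stay on the edge.
EDGE_DEADLINE_MS = 50.0


@dataclass
class Task:
    name: str
    deadline_ms: float        # how quickly a decision is needed
    needs_global_view: bool   # True if the task depends on city-wide data


def route(task: Task) -> str:
    """Return "edge" for urgent, locally answerable tasks, "cloud" otherwise."""
    if task.deadline_ms <= EDGE_DEADLINE_MS and not task.needs_global_view:
        return "edge"
    return "cloud"


tasks = [
    Task("obstacle_avoidance", deadline_ms=20.0, needs_global_view=False),
    Task("traffic_light_scheduling", deadline_ms=5000.0, needs_global_view=True),
]

# Map each task name to where it would run under this simple rule.
placement = {t.name: route(t) for t in tasks}
print(placement)
```

A real deployment would presumably also weigh bandwidth, device load, and failure handling; the single deadline threshold here only captures the urgency argument made in the text.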

This data architecture directly addresses the pain points of general models. For instance, during heavy rain, the model automatically adjusts autonomous vehicle braking strategies by integrating road-wetness sensor readings, vehicle slip data, and real-time weather information, reducing the accident rate by 82%.
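To make the wet-road example concrete, here is a rough Python sketch of how three signals might be fused into a single friction estimate and turned into a braking decision. It uses the standard idealized braking-distance formula d = v² / (2μg). The friction coefficients, the slip safety factor, and all function names are illustrative assumptions, not values or APIs from the system described in the article.

```python
import math

G = 9.81  # gravitational acceleration in m/s^2

# Illustrative friction coefficients (assumed for this sketch).
MU_DRY = 0.7
MU_WET = 0.4


def estimate_friction(road_wet: bool, slip_detected: bool, rain_mm_per_h: float) -> float:
    """Fuse three signals into one conservative friction estimate."""
    mu = MU_DRY
    if road_wet or rain_mm_per_h > 0.0:
        mu = MU_WET
    if slip_detected:
        mu *= 0.8  # assumed extra margin when the wheels are already slipping
    return mu


def braking_distance_m(speed_m_s: float, mu: float) -> float:
    """Idealized braking distance d = v^2 / (2 * mu * g), ignoring reaction time."""
    return speed_m_s ** 2 / (2.0 * mu * G)


def max_speed_for_distance(distance_m: float, mu: float) -> float:
    """Invert the formula: the highest speed that still stops within distance_m."""
    return math.sqrt(2.0 * mu * G * distance_m)


speed = 20.0  # m/s, i.e. 72 km/h
dry = braking_distance_m(speed, estimate_friction(False, False, 0.0))
wet = braking_distance_m(speed, estimate_friction(True, False, 12.0))
print(f"dry: {dry:.1f} m, wet: {wet:.1f} m")
```

Under these assumed coefficients the same speed needs a noticeably longer stopping distance on a wet road, which is why a model with access to live road and weather data would brake earlier or cap speed using something like `max_speed_for_distance`.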

The "Data Moat" of Vertical Models

The core advantage of vertical large models lies in the closed-loop optimization of "data - scenario - iteration":

Precise Data Collection

Deploys dedicated sensors for specific domains (like smart transportation and industrial quality inspection) to obtain high-value structured data.

Scenario-Based Training

Trains the model's dynamic decision-making capabilities by simulating real-world scenarios (like traffic congestion and equipment failures).

Continuous Evolution

Real-time feedback data fuels model iterations, fostering a positive cycle of "data quality improvement → model capability enhancement → application effect optimization."

In industrial quality inspection, for example, a company deploys visual sensors on production lines to collect millions of defect samples daily, increasing defect detection accuracy from 95% to 99.99% and reducing false alarm rates by 90%.

Collaborative Revolution of LLM+VLM: From "Text Games" to "Real-World Reasoning"

"Dual-Wheel Drive" of Language and Vision

The separation of traditional LLMs (Large Language Models) and VLMs (Large Visual Models) has hindered AI's ability to understand complex scenarios that mix text and images. Physical AI Agents, by contrast, integrate "semantics - vision - decision-making" through the deep fusion of LLM+VLM:

Cross-Modal Understanding

A model can simultaneously analyze video streams from traffic cameras and text information from electronic road signs to determine the real-time meaning of "construction ahead."

Causal Reasoning

When detecting vehicle queues, the model not only recognizes the "congestion" phenomenon but also infers whether it is "congestion caused by an accident" or "regular congestion during peak hours" through historical data, thereby providing differentiated solutions.
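The use of historical data in this example can be sketched in a few lines of Python. Note that this is only a statistical baseline check, which is far simpler than genuine causal reasoning: it assumes congestion has already been detected and asks whether the current queue is unusually long compared with past observations for the same hour. The function name, labels, and the `z_threshold` default are assumptions made for illustration.

```python
from statistics import mean, stdev
from typing import Sequence


def classify_congestion(current_queue: float,
                        history_same_hour: Sequence[float],
                        z_threshold: float = 3.0) -> str:
    """Label detected congestion as a regular peak or a possible incident.

    history_same_hour holds past queue lengths for the same hour and location
    and must contain at least two values.
    """
    mu = mean(history_same_hour)
    sigma = stdev(history_same_hour)
    if sigma == 0:
        # No historical variation: anything above the usual level is unusual.
        return "possible_incident" if current_queue > mu else "regular_peak"
    z = (current_queue - mu) / sigma
    return "possible_incident" if z > z_threshold else "regular_peak"


# Hypothetical queue lengths (vehicles) seen at 8 a.m. on previous weekdays.
history = [40, 42, 38, 45, 41]
print(classify_congestion(43, history))
print(classify_congestion(80, history))
```

A queue close to the historical level is treated as ordinary peak-hour congestion, while a large deviation is flagged for a different response, such as dispatching assistance or rerouting traffic.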

Embodied Intelligence

By integrating the motion data of robots (like robotic arm angles and motor torques), the model can optimize operation paths to avoid physical collisions.

The "Emergent Effect" of Multimodal Data

A 2025 study by the Massachusetts Institute of Technology found that models integrating text, image, and sensor data outperform single-modality models by over 40% in complex decision-making tasks. For example:

Medical Field

An AI system combines pathological slice images, patient medical records, and genetic data to increase cancer diagnosis accuracy to 98.7%.

Agricultural Field

An agricultural technology solution predicts crop pests and diseases with 92% accuracy through satellite remote sensing, soil sensors, and meteorological data, providing early warnings seven days ahead of traditional methods.

The Implementation Path of Physical AI from "Laboratory" to "City-Level Ecosystem"

"Data Hub" of Infrastructure

Industry practices indicate that implementing Physical AI requires the construction of three key infrastructures:

Integrated Sensing, Computing, and Reasoning Base Stations

Integrate cameras, radars, and edge computing units to achieve localized "data collection - processing - decision-making."

AI Cognitive Network

Connect city-level data centers through 5G networks to enable global optimization capabilities.

Developer Platform

Open API interfaces to attract automakers, logistics companies, and research institutions to jointly develop vertical scenario applications.

Under this model, AI Agents are no longer isolated algorithms but become part of a "digital nervous system" woven into urban operations. For instance, a megacity has achieved dynamic traffic light control by deploying such a network, reducing the congestion index during peak hours by 27%.
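As a rough illustration of what dynamic traffic light control can mean in practice, the Python sketch below splits a fixed signal cycle among approaches in proportion to their measured queue lengths, while guaranteeing each a minimum green time. This is a deliberately simple proportional rule chosen for illustration; the article does not say which method the city actually uses, and the function name, cycle length, and minimum green time are assumptions.

```python
from typing import Dict


def allocate_green(queues: Dict[str, int],
                   cycle_s: float = 90.0,
                   min_green_s: float = 10.0) -> Dict[str, float]:
    """Split one signal cycle among approaches in proportion to queue length."""
    spare = cycle_s - len(queues) * min_green_s
    if spare < 0:
        raise ValueError("cycle too short for the minimum green times")
    total = sum(queues.values())
    if total == 0:
        # No demand measured: share the cycle equally.
        return {name: cycle_s / len(queues) for name in queues}
    # Every approach gets the minimum, plus a demand-weighted share of the rest.
    return {name: min_green_s + spare * q / total for name, q in queues.items()}


# Hypothetical queue lengths (vehicles) reported by roadside sensors.
plan = allocate_green({"north_south": 30, "east_west": 10})
print(plan)
```

Re-running the allocation each cycle with fresh sensor data is what makes the control dynamic: the busier direction receives more green time only for as long as its queue remains longer.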

How Physical AI Reshapes Human Civilization: The "Domino Effect" of Industrial Change

Transportation Sector

Vehicle-road coordination will foster a "zero-accident" society, with the global traffic accident fatality rate expected to decrease by 80% by 2030.

Manufacturing Sector

AI quality inspection will drive "zero-defect" production, increasing yield rates in industries like automobiles and semiconductors by 5-10 percentage points.

Smart Cities

Sectors such as energy, healthcare, and education will achieve "precise supply," enhancing urban operational efficiency by over 30%.

Data is the new "oil," but it requires a "refinery." The multimodal data of Physical AI serves as the "blood" of AI Agents, while vertical large models are the "engines" that convert data into intelligence. The "rough data exploitation" of the general large model era is no longer viable. Future competition will focus on the three dimensions of "data quality," "scenario depth," and "iteration efficiency." The ultimate form of AI is not a text game on the internet but a native of the physical world that can perceive, think, and act like humans.