A True 'Hexagon Warrior'! Capybara Unifies Image and Video Processing: One Model for T2I, T2V, and I2V!

![]() 03/16 2026

03/16 2026

![]() 657

657

Analysis: The Future of AI-Generated Content

Key Highlights

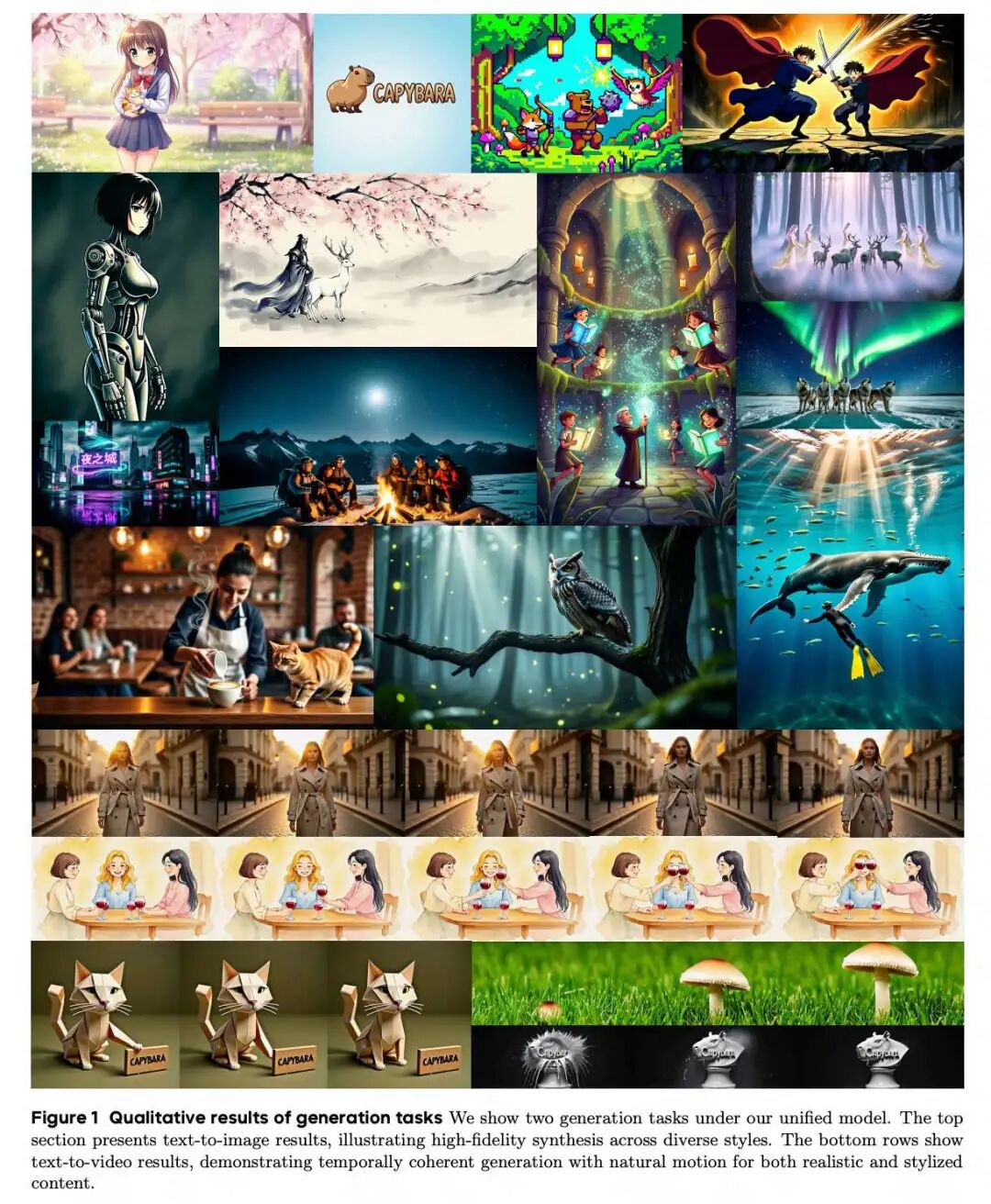

Unified Visual Creation Model Capybara: Addressing the high fragmentation in current visual content creation (single modality, fragmented functionality, incompatible interfaces), this paper proposes Capybara, a unified foundational model for visual creation. The model supports both image/video generation and editing tasks within a single framework.

Achieves True Multimodal Unified Interface: The core innovation of Capybara lies in its shared multimodal conditional interface. A single model can receive contextual inputs from multiple modalities, including text, images, and videos, and achieve diverse creative behaviors by altering the input context and instructions, without requiring architecture switching or training multiple specialized models.

Integrates and Unifies Four Core Creation Tasks: This paper unifies scattered creative functions into a single framework, including:

1. Text-to-Image/Video Generation.

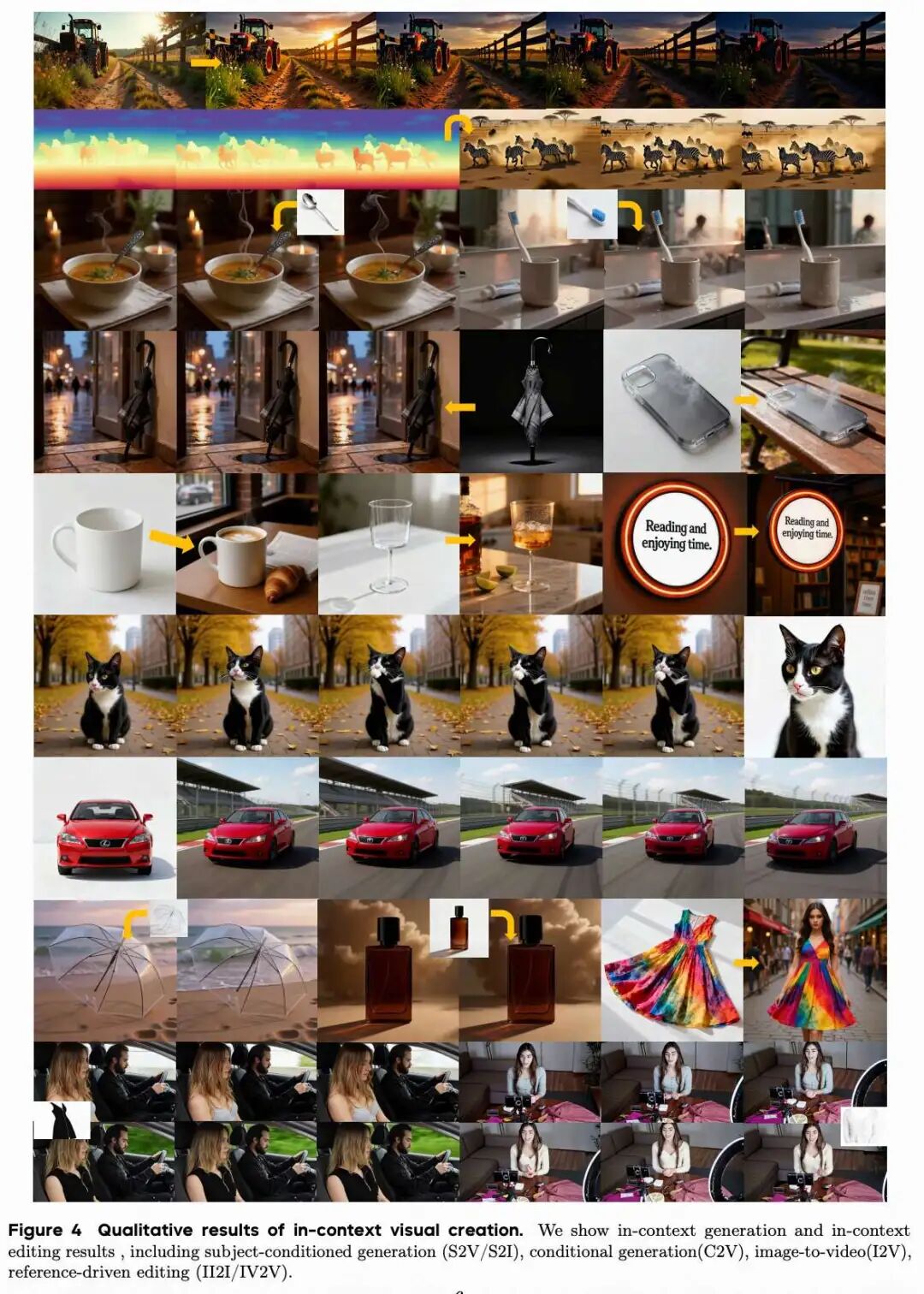

2. Contextual Generation: Generation based on visual conditions such as sketches, subject references, and starting frames.

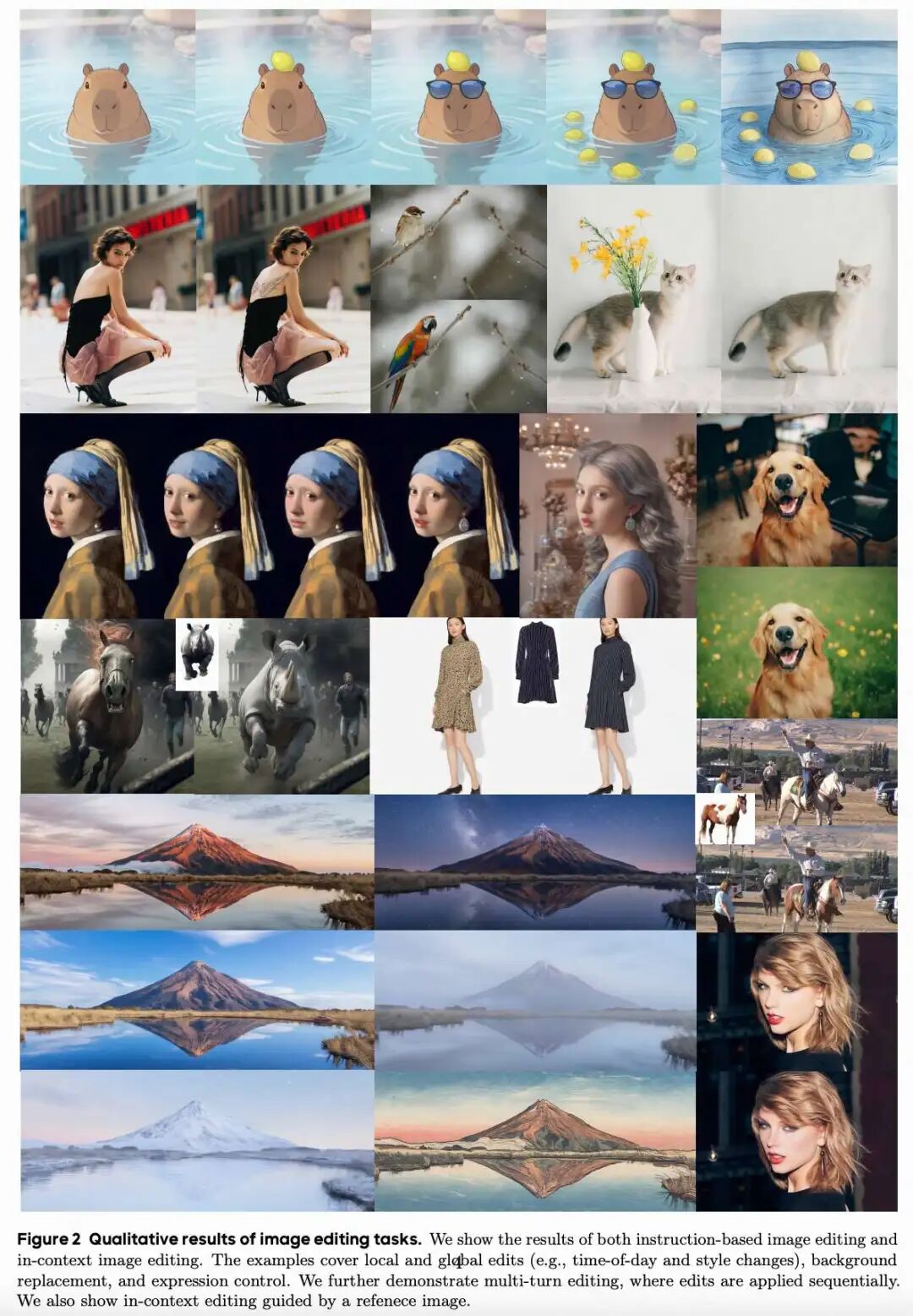

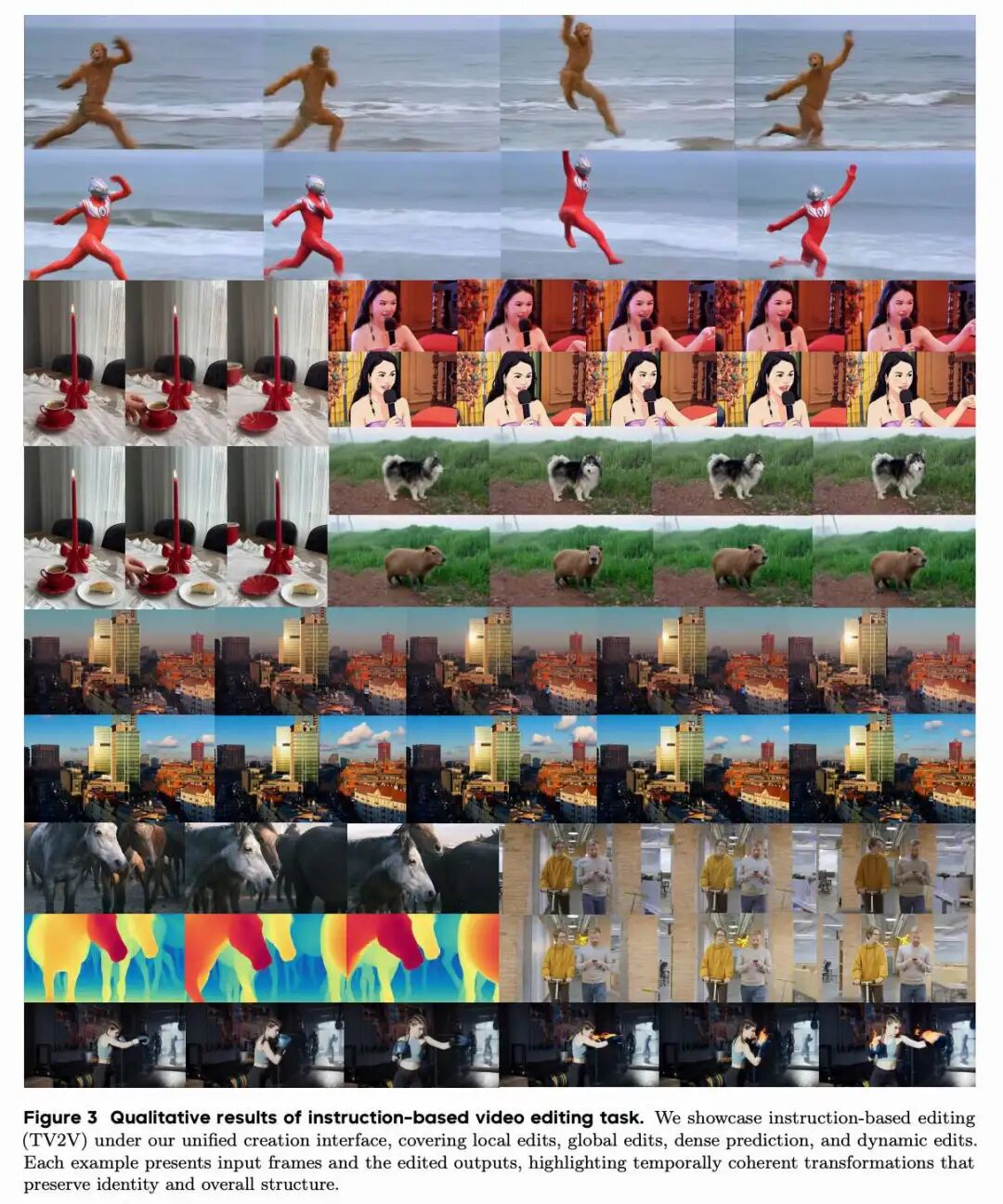

3. Instruction-Based Editing: Editing images/videos through text instructions, treating dense prediction tasks as special cases for the first time.

4. Contextual Editing: Editing driven by additional visual references, style examples, or multimodal contexts, such as keyframe propagation.

Reconstructs the Paradigm of Visual Creation: This paper redefines visual creation as a combination of textual conditions and multimodal examples under a unified backbone network. This design supports both static and dynamic content creation while flexibly combining textual intent with visual context.

Demonstrates Strong Scalability and Application Potential: The framework naturally extends to long-video editing and, with high throughput support, enables streaming video editing. Its unified interface also supports compositional multimodal workflows, such as mixing images and videos as references in a single request to simultaneously capture identity, motion, and structural information for more flexible creation.

Summary Overview

Problems Addressed

The current field of visual content creation suffers from high fragmentation: Existing works focus primarily on single modalities (e.g., images or videos) or implement only partial creative functions (e.g., generation or editing only). This results in fragmented solutions with incompatible interfaces, where contextual conditions (e.g., sketches, reference frames) are often introduced as task-specific add-ons, making it difficult to build a single system that supports diverse multimodal inputs and a unified creative workflow.

Proposed Solution

Introduces Capybara, a unified foundational model for visual creation. The model integrates scattered generation and editing tasks into a single framework through a shared multimodal conditional interface. Its core design allows a single model to receive multimodal contextual inputs—including text, images, and videos—and express diverse creative tasks by altering the provided context and instructions, without requiring architecture switching or training separate specialized models.

Technologies Applied

Unified Conditional Interface: Unifies visual creation into a single conditional package containing (1) text input, (2) primary visual context (image/video/starting frame), and (3) optional auxiliary conditions (style examples/sketches/depth maps, etc.).

Multimodal Context Learning: Supports the combination of textual conditions and multimodal examples under a unified backbone network.

Four-Task Framework: Supports (1) text-to-image/video generation, (2) visual context-based generation (e.g., sketches/reference frames), (3) instruction-based editing (text-guided editing, including dense prediction), and (4) contextual editing (visually referenced editing, such as keyframe propagation) through the same interface.

Achieved Outcomes

Functional Unification: Successfully unifies generation and editing, image and video tasks into a single model, enabling cross-modal consistent transformations.

Flexible Creation: Enables flexible combination of textual intent and visual context, supporting the creation of both static (image) and dynamic (video) content.

Strong Scalability: The framework naturally extends to long-video editing and supports streaming video editing under high throughput; it also supports compositional multimodal workflows (e.g., mixing images/videos as references in a single request), providing a foundation for flexible multi-task creation.

Data

To support unified visual creation, a joint image-video corpus was constructed to provide training signals for text-to-image/video generation, contextual generation, instruction-based editing, and contextual editing. Thus, our data includes both standard text-image/video pairs for zero-shot synthesis and context-rich tuples containing textual and visual inputs: subject references for reference-based image/video generation, visual prompts or structured controls for condition-controlled image/video generation (e.g., sketches, layouts, poses, depth/edge maps), starting frame-conditioned clips for image-to-video generation, paired source-instruction-target examples for instruction-based editing, and reference-driven editing tuples for contextual editing (source plus one or more visual examples). For propagation tasks, data was randomly sampled from the TV2V dataset as our training data.

A systematic multi-stage processing workflow was employed to transform heterogeneous raw data collections into high-quality training data. The process includes: (1) Quality Filtering: Using automated classifiers to remove defective content (blurriness, artifacts, harmful material) and additional overlay elements (watermarks, subtitles); (2) Semantic Deduplication: Retaining diverse, non-redundant samples through embedding-based clustering; (3) Distribution Rebalancing: Ensuring sufficient representation across subject categories, scene types, and visual attributes; (4) Dense Restatement: Using a bilingual (Chinese/English) vision-language model trained on high-quality annotations to generate detailed descriptions of dynamic elements (motion, camera movement) and static features (appearance, aesthetics, style). Specifically for editing tasks, we developed a large-scale synthesis pipeline to generate paired data (source image/video, edited result, instructions).

Model Design and Training

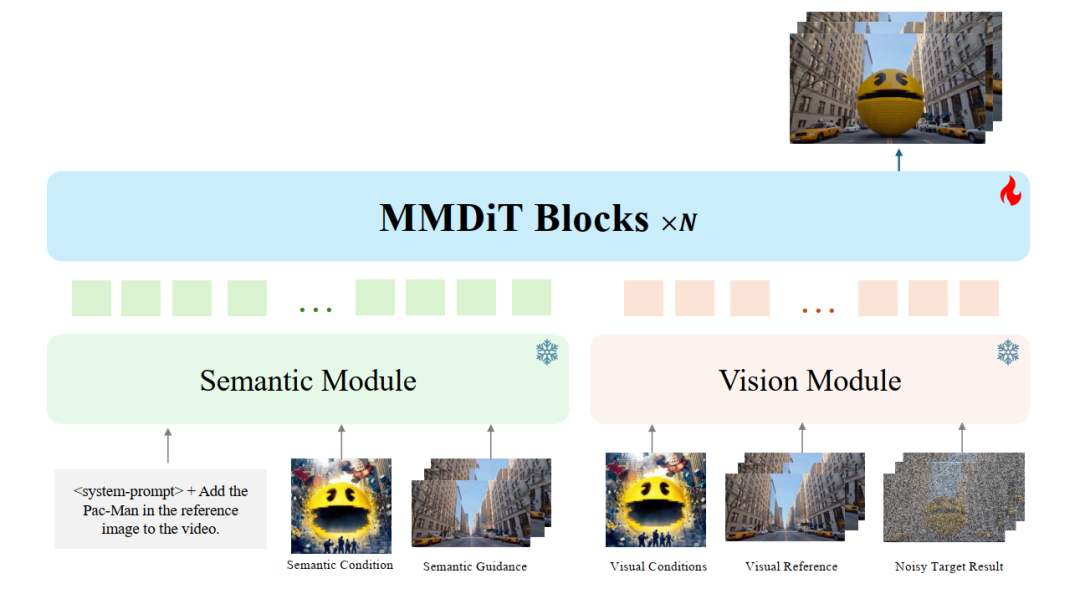

Unified Architecture: Decoupling Understanding from Generation

To build a unified visual creation model, the core challenge lies in receiving various contextual inputs—text, images, and videos—and fusing them into a single conditional space capable of driving generation and editing. Therefore, we adopted a dual-stream decoupled architecture that separates multimodal understanding from diffusion-based synthesis: a semantic-aware module focuses on processing user inputs and reasoning about multimodal contexts, while a visual fusion module integrates aligned semantic and visual features into a denoising backbone network for high-fidelity synthesis. By structurally decoupling understanding from generation, we avoid forcing a single set of modules to perform both high-level interpretation and low-level denoising simultaneously, enabling a single model to support diverse creative tasks by simply altering the provided context and instructions.

Semantic Module: The proposed semantic module integrates various conditions (e.g., text, images, and videos) into a unified latent representation. The module performs contextual reasoning to extract intent-specific features while remaining structurally isolated from the denoising network. This design provides a robust semantic prior that guides the generation process to strictly adhere to the user's creative intent.

Visual Module: The visual module is responsible for the diffusion denoising process and the precise integration of fine-grained pixel-level conditions. Complementing the high-level guidance from the semantic module, the visual module incorporates detailed visual conditions. This architecture directs generative capabilities toward faithful reconstruction and spatiotemporal consistency, ensuring strict adherence to multimodal constraints within a unified framework.

Diffusion Transformer Backbone Network: The model is initialized from the pre-trained Hunyuan-Video 1.5, inheriting its variational autoencoder, diffusion transformer architecture, and spatiotemporal modeling capabilities. Building upon this, we introduced a dual-stream decoupled modeling design: the semantic module processes all conditional inputs into a unified representation, while the visual module focuses on handling low-level features. This architectural modification enables flexible multi-conditional modeling while preserving the strong generative priors from pre-training.

Training Strategy

To establish a unified visual generation framework, a progressive three-stage training scheme was adopted. This strategy systematically addresses the unique challenges associated with unifying various tasks and conditional signals. The training trajectory enables the model to progress from robust reconstruction to broad multi-task generalization and ultimately to high-fidelity instruction alignment.

Stage 1: Reconstruction and Contextual Generation Training. Starting from a strong generative prior (initialized from HunyuanVideo-1.5). The goal is to ensure that the conditional signals produced by the semantic module can be reliably utilized by the visual module without performance degradation, which is particularly crucial for editing tasks where unedited regions must remain consistent. Additionally, we trained a mix of standard and contextual generation tasks (reference-based image/video generation, condition-controlled image/video generation, image-to-video generation) to introduce pixel-level conditional capabilities.

Stage 2: Editing Task Training. After establishing a stable multimodal conditional interface for generation tasks in Stage 1, we extended training to editing tasks within the same unified framework. Specifically, we introduced instruction-based editing (text-guided image/video editing), including dense prediction as a special case where instructions require generating structured outputs aligned with the input content. We further expanded to contextual editing driven by additional visual references, style/subject examples, and structured or region-specific guidance (reference-based image/video editing, cross-video editing) and incorporated propagation sequences where sparsely edited keyframes supervise the temporally consistent propagation of changes across longer videos.

Stage 3: Quality Fine-Tuning. Finally, quality fine-tuning was performed to improve instruction adherence, visual fidelity, and temporal stability in both generation and editing tasks. This stage focused on difficult cases, such as fine-grained editing locality, identity/appearance preservation, complex multimodal constraints, and long-range temporal consistency. We collected higher-quality and more challenging examples and applied targeted fine-tuning to reduce artifacts and strengthen alignment between inputs and outputs.

Agent-Assisted Visual Creation

For iterative video editing, a closed-loop process involving an agent was adopted: planning → editing → evaluation/diagnosis → optimization. The agent translates high-level intent into an editing plan, defining what to change (content/style/motion) and what to preserve, along with constraints regarding identity, locality, and temporal scope. It then invokes a video editor (e.g., text-to-video/video-to-video, optionally using masks/boxes, references, or per-segment scheduling) to generate candidate clips.

An evaluation module scores the results using a small set of metrics—target alignment, subject consistency, temporal stability, and constraint satisfaction—and outputs structured feedback pointing out incorrect changes and locations of artifacts. The agent converts this feedback into more precise instructions and updated controls (prompt modifications, intensity scheduling, time windows, regional constraints, anchors) and conducts several rounds of iteration until the metrics stabilize or reach a threshold. This is iterative guidance through explicit diagnosis rather than one-shot prompting.

Conclusion

Capybara, a unified foundational model for visual creation, effectively bridges the gap between static and dynamic content generation. By unifying multiple paradigms—from text-to-image to complex video editing—Capybara excels in precise instruction adherence, structural stability, and realistic visual quality. It demonstrates core technological innovations in native unified architecture, intrinsic 3D-aware mechanisms, and comprehensive multi-task training strategies, which are effectively integrated to build a robust and versatile system. It exhibits exceptional capabilities in handling complex multi-conditional scenarios, maintaining physically plausible temporal coherence, and enabling seamless professional-grade workflows for full-spectrum visual creation.

References

[1] CAPYBARA: A Unified Visual Creation Model