Benchmark, The Most Lacking Infrastructure in Embodied AI Research

![]() 03/23 2026

03/23 2026

![]() 538

538

Editor: Lv Xinyi

The structural contradiction of embodied AI models lies in the rapid iteration of models on one hand and outdated benchmarks on the other.

In other words, there has never been a scientific and reliable evaluation standard for embodied models to guide their growth from unchecked 'wild growth' to focused 'upward growth.'

"Wood becomes straight when measured by a plumb line." Embodied models also require a scientific Benchmark for precise evaluation, diagnosis, and even guidance for future research directions. However, the current reality is that the lack of a unified, high-standard real-world evaluation system has been constraining model iteration and industrialization.

In fact, any industry transitioning from technological exploration to scaling (mass production) undergoes a phase from 'diversity' to 'standard convergence.'

This is a proven successful path verified across multiple trillion-dollar industries. During the Internet era, protocol standards enabled global network interoperability; the explosion of deep learning also relied on evaluation systems. While they do not directly create products, they determine the direction and speed of technological progress.

Embodied AI is now at a similar early stage. Over the past two years, from VLA (Vision-Language-Action) models to world models, technological paths have emerged endlessly, with highly fragmented research paradigms. However, the industry does not lack models or demonstration videos—what it lacks is a unified yardstick to answer the question: 'What can models truly achieve in the real world?'

Without a Benchmark, model improvements remain largely narrative. With a Benchmark, technological progress gains verifiable, reproducible, and accumulative industrial value.

Against this backdrop, the launch of the CVPR 2026 official competition, ManipArena, signifies more than just another competition—it attempts to fill the most critical yet long-missing piece of infrastructure in the embodied AI field: a real-world unified evaluation system.

More importantly, a sustainably operated research platform can continuously accumulate data, validate conclusions, and feed back into model iteration, forming a positive cycle of 'evaluation-improvement-re-evaluation,' thereby driving the entire field from chaotic exploration to systematic evolution.

On the surface, ManipArena is a robot manipulation competition, but its design logic is closer to a systematic capability measurement.

For a long time, robot evaluations have relied on simulated environments or carefully arranged, highly simplified desktop grasping tasks. While such benchmarks have driven algorithmic progress, they fail to reflect the complexities of the real world. Long-term decision-making, spatial mobility, multimodal perception, and unpredictable physical interactions—the true essence of the physical world—are often excluded from evaluations. This has left researchers blindly rushing forward, unable to iterate precisely, with models performing well in labs but struggling to transfer to real-world scenarios.

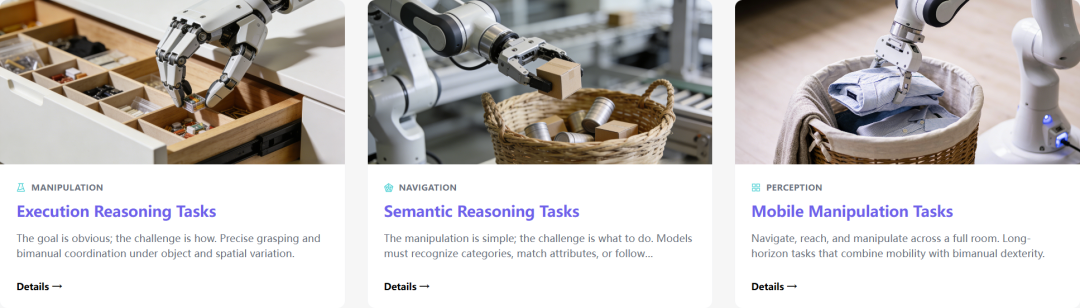

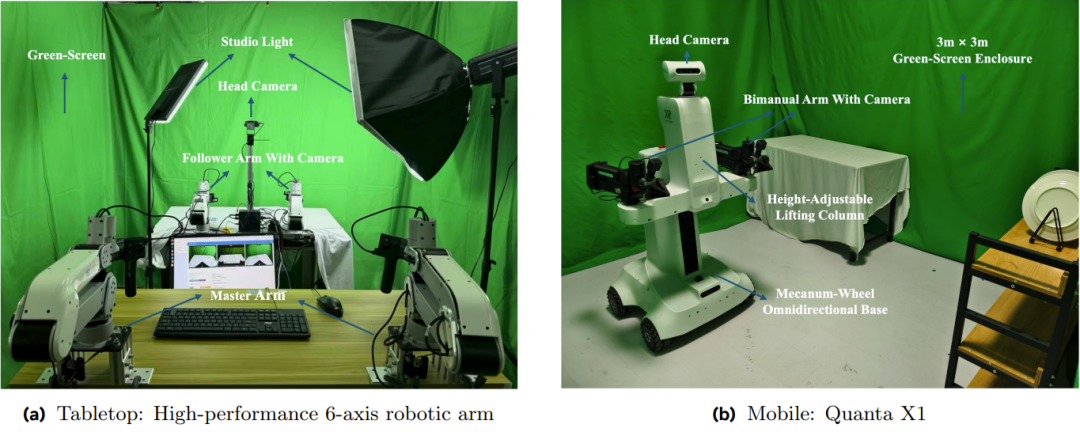

ManipArena's core goal is precisely to bridge this gap. The competition features 20 real robot tasks evaluated on a unified platform, covering key dimensions such as reasoning ability, generalization, long-term decision-making, and multimodal perception. Compared to past 'simple grasping' tests, this represents a more systematic examination of complete operational capabilities.

ManipArena has undergone extensive scientific design. One key feature is 'One Model for All Tasks.' Participants cannot train separate models for different tasks but must rely on a unified strategy to complete all challenges. This rule essentially screens for general capabilities rather than single-point tricks or task-specific overfitting.

Another critical design is hierarchical OOD (Out-of-Distribution) evaluation. Each task constructs varying difficulty levels through multidimensional changes in physical properties, spatial layouts, and semantic combinations, systematically testing model performance under unknown conditions. This shifts evaluation from merely providing a score to presenting an ability curve, revealing whether models struggle with perception, reasoning, or execution.

Furthermore, ManipArena expands evaluation scope from tabletop operations to mobile tasks involving navigation and full-body control, such as organizing clothes, hanging paintings, and storing items, covering operational scenarios closer to real life. This means it no longer evaluates 'robotic arm skills' but 'embodied system capabilities.'

In other words, the competition's goal is not to showcase what robots can already do but to accurately define what they temporarily cannot.

This is precisely the information most needed for industrial decision-making. While the event may not bring Carnival on the list (rankings-driven excitement), it will undoubtedly help researchers understand the true state of technology.

ManipArena's deeper significance may lie in its role as not just a competition but a sustainably operated research platform characterized by 'regularized evaluation,' 'continuous operation,' and 'significantly lowered barriers.'

First, it enables regularized evaluation capabilities. Participants can train models on publicly available data, submit algorithms via remote interfaces, and have the platform complete real-world testing and return results. This mechanism supports not only competitions but also daily research validation, making it a continuously usable Benchmark rather than a one-time event.

Second, the platform provides high-quality real-world data and a refined evaluation system, including 188 hours of high-quality real-world robot data, with a commitment to continuous future data open-sourcing, directly supporting model training and analysis. In robotics, acquiring real-world data is extremely costly, making this centralized supply an important research infrastructure.

More critically, it significantly lowers participation barriers. Research teams no longer need to purchase expensive robotic equipment and can participate in the full evaluation process with just a GPU server.

This represents a crucial turning point. Embodied AI research has long been constrained by hardware costs, with only a few laboratories possessing equipment advantages while most teams struggle to conduct real-world experiments. The remote real-world testing mechanism enables more researchers to compete, expanding innovation sources.

Additionally, this unified hardware approach avoids the impact of hardware differences on results. Moreover, since hardware facilities like 'Quantum One' are AI-native and designed for models, they can better unleash model performance. If ManipArena achieves long-term development, it will also help establish unified hardware standards.

When performance differences are primarily determined by algorithms rather than equipment, research focus will shift more toward models, accelerating competition and convergence at the software level.

"To prosper, build roads first." Today's embodied AI research, seeking to transition from chaotic wild growth to standardized development, lacks precisely such stable, scientific infrastructure.

Outsiders may ask: Why is a model company driving this effort? The answer lies precisely in the fact that only those who have truly developed models understand their capability boundaries and potential vulnerabilities.

First, recognize that Benchmarks are never neutral. They implicitly assume future technological directions:

- For example, by prioritizing reasoning, long-term decision-making, and multimodal fusion, ManipArena is making a judgment about the mainstream development path of embodied AI, correcting past simplistic task evaluations.

- Moreover, the intentionally emphasized motor currents and joint velocities in the competition's open-sourced multidimensional data, as officials stated, 'can serve as proxy signals for force and contact, which current mainstream models (VLA, World Models) have not effectively utilized.' ManipArena's targeted open-sourcing will help advance force-sensitive strategy research.

- Additionally, officials have repeatedly emphasized VLA and World Models competing on the same stage to see which excels, implicitly indicating technological trends.

Second, those who have built models better understand how models 'cheat.' In many benchmark tests, models can achieve high scores through statistical biases, environmental patterns, or specific tricks without possessing true general capabilities. ManipArena's design clearly attempts to avoid these issues, with features like unified environments, evenly distributed variations, and cross-task universal model requirements all aimed at preventing overfitting and opportunistic behavior.

Third, truly scientific and effective Benchmark design often comes from extensive experience accumulation. Only those who have self-developed models end-to-end and encountered enough challenges know where models tend to fail. From this perspective, 'those who solve many problems are better at setting them' is not just a joke but a technological reality. Evaluation systems essentially represent structured precipitate (precipitation) of past research experience and guidance for future technological paths.

As a company long committed to end-to-end embodied large model development, Independent Variable has deeply participated in the evolution from VLA to world model fusion paradigms, gaining firsthand knowledge of model capability boundaries and failure modes in the real physical world.

Its self-developed WALL-A model pioneered the deep fusion of VLA and world models, introducing an embodied multimodal chain-of-thought within a unified multimodal input-output architecture. Through spatial-temporal state prediction, visual causal reasoning, and learnable memory mechanisms, it enables robots to achieve stronger zero-shot generalization in unstructured environments. Meanwhile, relying on large-scale real-world reinforcement learning, the model accumulates high-quality experience through continuous interaction with the physical world, autonomously repairing long-tail issues and forming a technical closed loop of 'basic model—real interaction—capability evolution.' The subsequently open-sourced WALL-OSS also demonstrates excellent long-range operational capabilities, causal reasoning, and spatial understanding.

This end-to-end practice—from model architecture and training methods to real-world deployment—has not only given Independent Variable deep insights into model training challenges and kept it in sync with model technological development but also positioned it as an active shaper of embodied AI capability evaluation systems. For a technological revolution, its societal benefits never depend on which company's technology prevails but rather begin with the industry gradually establishing reliable standards. The same holds true for embodied AI.

Model competitions merely witness one aspect of rapid technological development. If ManipArena can operate sustainably, it will record not just rankings but potentially the timeline of embodied AI's industrialization.