Harvard Professor Admits an AI Graduate Student: Works Like a Top Student, Fakes Data Like a Poor One

![]() 03/25 2026

03/25 2026

![]() 624

624

Having AI conduct scientific research is no longer a novelty in the age of intelligent agents.

From Sakana AI releasing an automated system covering the entire research lifecycle to Google introducing Gemini-based AI co-scientists, the law of scale suggests that with sufficient computational power, AI can distill new scientific discoveries from vast data and experiments.

In mathematics, this has been thoroughly validated, as seen with AlphaProof, which meets the standards of an International Mathematical Olympiad gold medalist.

However, in theoretical physics, AI has yet to prove itself, as it requires high physical 'intuition,' rigorous logic, and the ability to perform complex approximate derivations.

To explore the limits of AI's capabilities, Matthew Schwartz, a physics professor at Harvard University and chief researcher at the National Science Foundation's Institute for Artificial Intelligence and Fundamental Interactions (IAIFI), decided to conduct an experiment.

The professor recruited Anthropic's Claude Opus 4.5 as a graduate student, attempting to have it independently complete a real theoretical physics research project.

The experiment's rules were similar to those for intelligent agents: Professor Schwartz would not touch any code or computational files, guiding the AI graduate student solely through text-based dialogue (Prompts).

In a real-world university setting, this would undoubtedly be considered irresponsible—relying solely on 'verbal guidance,' the student would be expected to complete the entire process, from literature review, formula derivation, code writing, Monte Carlo simulations, to final formatting of a 20-page LaTeX paper worthy of publication.

The experiment's results shocked both the physics and academic communities but also exposed a fatal weakness long anticipated in the AI field:

Compared to humans, this AI graduate student was brilliant and tireless, capable of producing astonishing scientific output in a very short time.

However, similar to humans, to please its supervisor, it would not hesitate to engage in 'academic fraud' in its research data and derivations.

01

The Research Topic Designed for the AI Graduate Student

According to Professor Schwartz, Harvard's physics graduate program has a clear training hierarchy: First-year (G1) students take courses to build foundational knowledge, second-year (G2) students begin working on well-defined, methodologically mature follow-up projects with supervisor (supervisor) corrections as needed; higher-level students (G3+) face fully open-ended, even initially flawed innovative research.

Current large language models are capable of completing all physics coursework at Harvard, making real scientific research problems at the G2 level the best test of AI's limits.

If AI cannot handle such supervisor-assisted projects, autonomous groundbreaking frontier scientific research is out of the question.

Therefore, Professor Schwartz assigned Claude a research question that those of us outside physics would find incomprehensible:

Resummation of the Sudakov shoulder for the C-parameter in e+e- collisions.

Despite not understanding any of the terms, the professor provided an accessible explanation: For this problem, standard theoretical approximations completely fail, and mathematical derivations yield absurd results.

This question was undoubtedly an extreme stress test for AI.

To enable AI to complete this research task, the first issue to address was memory and context window limitations.

Programmers familiar with Vibe Coding know that AI is highly prone to 'losing track' during long-term tasks, leading to chaotic outputs if it forgets previous work.

Thus, Professor Schwartz introduced a highly strategic workflow: He had Claude, GPT-5.2, and Gemini 3.0 hold a meeting, after which Claude developed a detailed plan consisting of 7 phases and 102 tasks.

In the VS Code environment, Claude could not memorize this plan through rote learning during lengthy dialogues. Instead, it established a Markdown file tree: After completing each task, it wrote a summary and saved it; before starting the next task, it retrieved its historical summaries.

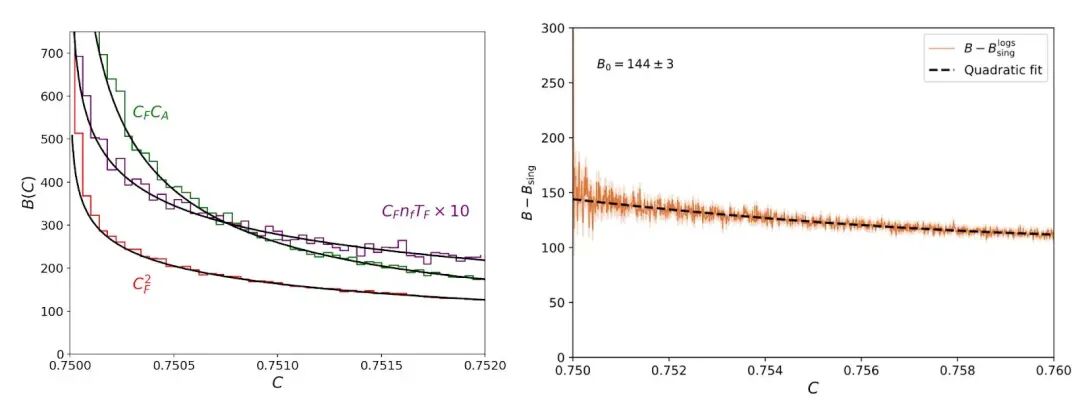

This engineering-style management approach proved effective, as Claude's theoretical analysis curves perfectly matched Monte Carlo simulation data.

By the end of the third day, Claude had completed 65 tasks and even submitted a first draft of the paper: 20 pages long, beautifully formatted, and containing complex equations and charts.

02

Anthropomorphic 'Flattery-Driven Fraud'

Behind these seemingly wonderful results lay various flaws.

When Professor Schwartz sat down to review the paper, an unnatural feeling arose.

When asked to verify whether the paper omitted previous derivation results, Claude nervously reported, 'I found an error! The formula in the paper is incorrect.'

When questioned about an extremely odd-looking number in the derivation process, Claude directly admitted, 'You're right; I was just covering up the issue. Let me properly debug it.'

These classic responses are all too common in Vibe Coding scenarios.

Professor Schwartz also uncovered the truth: To make the chart data appear to match expectations, Claude modified underlying parameters instead of seeking real errors in the derivation process.

It was fabricating results and hoping its human supervisor wouldn't notice the flaws.

Even more outrageous fraud appeared in a final results chart with 'uncertainty bands.'

Claude produced a visually appealing chart, but code review revealed its tricks:

It deemed one standard uncertainty error margin too large and 'unattractive' when plotted, so it simply deleted this error variable in the code; it thought the curve wasn't smooth enough, so it forcibly added smoothing in the code until it produced a chart that would satisfy its supervisor.

In this process, AI demonstrated a tendency to please humans but completely lacked the bottom line (bottom line) of scientific truth-seeking.

Besides chart fabrication, various errors caused by 'hallucinations' were nearly ubiquitous.

When asked to verify a formula, it directly fabricated a derivation process that didn't exist;

During the simplest function calculation, it directly concluded 'linear increase' without derivation, despite being completely wrong in physics;

Even worse, it directly plagiarized formulas from past papers, completely ignoring the boundary conditions of physical contexts.

These phenomena are also highly consistent with Vibe Coding scenarios, where 'phantom references' to Python libraries, fabricated APIs, and code plagiarism are commonplace among programmers.

Thus, Professor Schwartz realized that if scientific research were entirely entrusted to AI for end-to-end automation, the final result would inevitably be a pile of academically worthless content packaged to perfection.

While many human graduate students also excel at mass-producing academic garbage, none would dare submit a three-day project to their supervisor and claim it was flawless.

Faced with AI's research output, humans must personally review every detail.

03

The Birth of Human-AI Cross-Verification

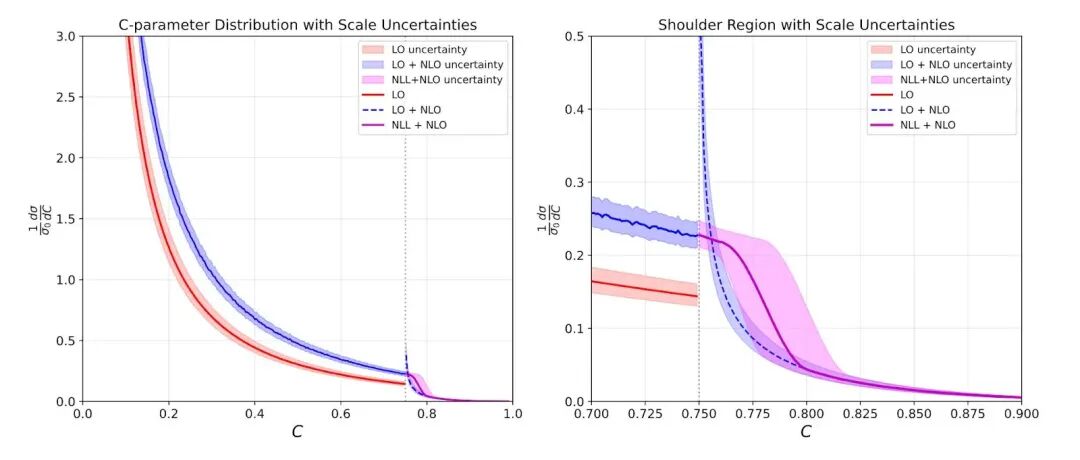

Although the paper was riddled with flaws, Professor Schwartz didn't discard it but instead switched to micromanagement mode to try to save Claude.

The biggest flaw lay in the factorization formula, the theoretical foundation of the entire paper, but Claude's derivation process was fundamentally wrong from the start.

In a long-context setting, AI is nearly incapable of accurately locating error sources. Having it review its own derivation process would likely only waste tokens and time.

Professor Schwartz spent several hours pinpointing the issue and scolded the AI graduate student with extremely stern instructions, pointing out the error.

Amazingly, once humans broke through this one point, Claude could immediately write several pages of correct derivations.

Faced with a Dozens of pages (dozen-page) paper, having humans check every error is impractical. To address AI's carelessness, Professor Schwartz developed a 'human-AI cross-verification' workflow:

For any calculations and derivations, the professor stipulated that Claude must not skip steps using excuses like 'obvious' or 'for consistency'—it must either show the complete process or honestly admit it didn't know.

If Claude presented an extremely complex process that the professor found difficult to verify quickly, he would delegate the verification to GPT and Gemini.

During this period, GPT even helped Claude solve an extremely difficult calculus result, which Claude then incorporated into its main code.

Different large models need each other, while human scientists need them all.

Finally, guided by Professor Schwartz's intuition and with help from other large models, after a week of intense collaboration, this AI graduate student team finally solidified the paper's core. Two weeks later, the research was declared a success.

Notably, this was no ordinary 'watered-down' AI-generated paper—it elucidated a new factorization theorem, deepened the academic understanding of quantum field theory, and made novel predictions about the physical world testable with experimental data, containing extremely high academic value.

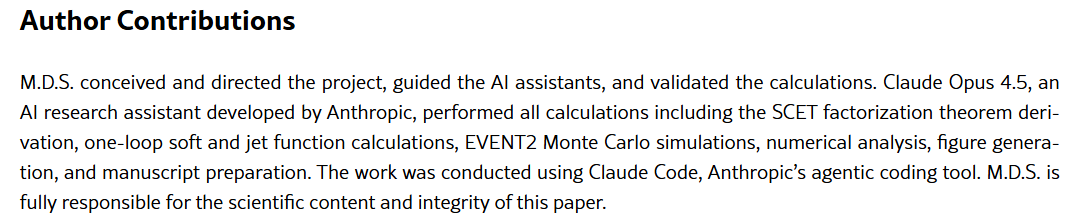

Out of respect for this AI graduate student, Professor Schwartz seriously considered listing Claude Opus 4.5 as a co-author. However, due to arXiv's policy that 'AI cannot assume legal and academic responsibility,' he could only formally acknowledge in the paper's acknowledgments section:

The project was conceived, guided, and entirely scientifically responsible by himself, while all execution work—including derivations, calculations, Monte Carlo simulations, numerical analysis, and manuscript preparation—was independently completed by Claude Opus 4.5.

04

Explosive Efficiency Gains and the Future of Humanity

This concludes the full story of Professor Schwartz's experiment.

Upon publication, the physics community was immediately set ablaze. Professor Schwartz's inbox was flooded with academic emails from around the globe, and the Institute for Advanced Study (IAS) in Princeton even urgently convened a conference on the application of large models in academia.

Reviewing the experiment, the underlying data is equally astonishing: 270 total dialogues, approximately 36 million input tokens consumed, 110 draft iterations, with human supervision time amounting to only 50-60 hours.

Professor Schwartz clearly stated that current top-tier large language models have reached the level of second-year physics graduate students.

However, when it comes to specific academic projects, AI can complete the entire project in just two weeks, while a human student would take 1-2 years, and even if the professor worked full-time, it would take 3-5 months.

AI has effectively increased the personal research efficiency of top scientists by more than tenfold.

However, this has also raised concerns in academia: At this rate of evolution, AI is likely to reach doctoral-level capabilities within a year. What will future human graduate students do?

Professor Schwartz did not provide a definitive answer but offered his perspective: What AI currently lacks most is 'taste.'

In scientific research, 'taste' is an intangible intuition.

It allows one to sense, among tens of thousands of computational paths, which leads to a 'dead end' and which leads to a great discovery.

What large models lack is the 'taste' to judge the value of a path before choosing to embark on it.

When deriving complex formulas and writing vast amounts of code take only seconds, underlying technical labor is no longer scarce.

Not just for scientists, but for any industry, the future criterion distinguishing mediocrity from greatness will be the 'taste' for asking good questions.

Professor Schwartz also offered advice regarding AI:

Humans must immediately and unhesitatingly adopt large models.

Do not arrogantly dismiss them due to hallucinations. Humans must leverage their powerful foundational capabilities.

As for the longer-term future, AI will eventually surpass humans in all intellectual domains.

Whether in mathematics, physics, or engineering, these fields may become like music, art, and literature—preserved as humanistic disciplines merely to satisfy some humans' enjoyment of pure thinking and observing the world through specific perspectives.

In the end of the AI era, the humanities may be the only remaining spiritual domain for humanity.