NVIDIA Alpamayo: A Comprehensive Analysis of the Design and Mass Production Deployment of Reasoning-Based Autonomous Driving Large Models

![]() 03/26 2026

03/26 2026

![]() 591

591

At GTC 2026, NVIDIA further elaborated on its open-source Alpamayo VLA model. Marco Pavone, representing NVIDIA's research team, shared insights into Alpamayo's model design and the latest causal chain. Patrick Liu, a former subordinate of Xinzhou Wu at XPeng who later joined NVIDIA, shared some experience and methods for Alpamayo's mass production deployment on behalf of the production side.

Based on the content of their speeches, this article summarizes and shares Alpamayo's model design and mass production experience.

Our previous article, 'The Battle for Intelligent Driving Standardization: A Comprehensive Look at the Underlying Logic and Architectural Evolution of 'End-to-End' Autonomous Driving,' also shared how, in the development of autonomous driving, enabling AI not only to 'see' and 'drive' but also to 'think' and 'explain' like humans represents the second breakthrough point after the popularization of end-to-end algorithms.

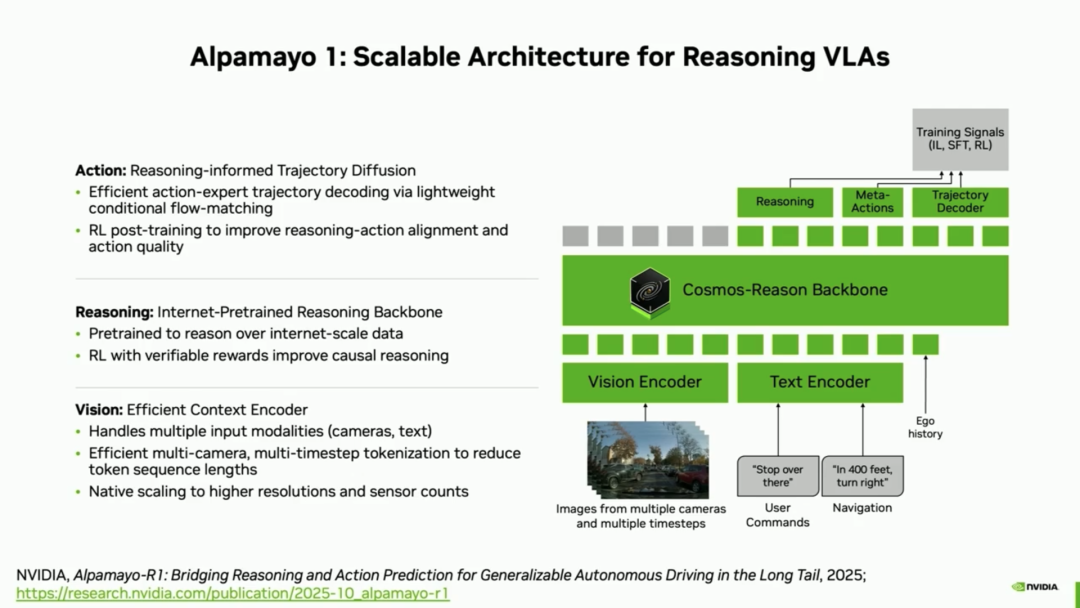

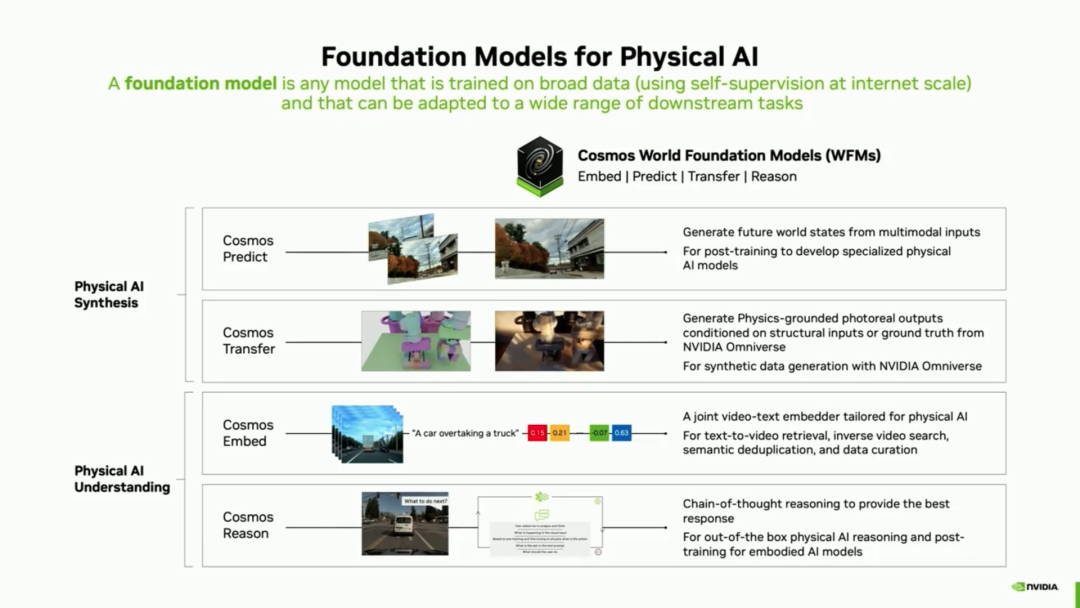

The highlight of NVIDIA's Alpamayo is its reasoning capability. In his speech, Marco Pavone stated that Alpamayo is a 10 billion (10B) parameter end-to-end, reasoning-based vision-language-action (VLA) model built on NVIDIA's foundational model, Cosmos Reason.

Part 1: Model Design - Enabling AI to Learn 'Causal Reasoning' and 'Unity of Knowledge and Action'

Like all VLA models, Alpamayo 1 receives multi-camera images, user commands, and navigation guidance, and outputs three key results: reasoning trajectories, meta-actions, and driving trajectories.

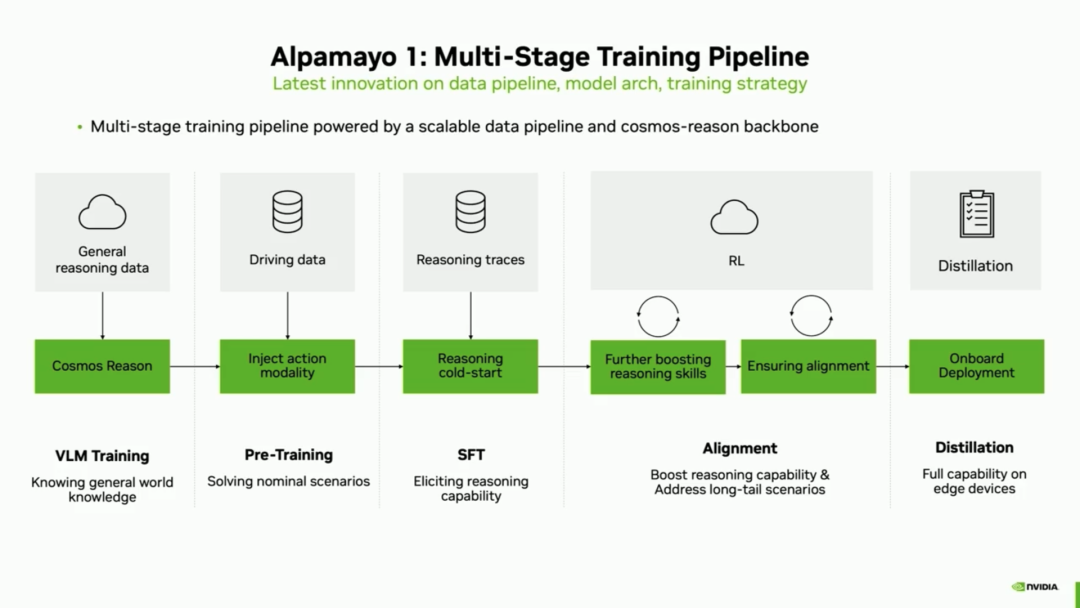

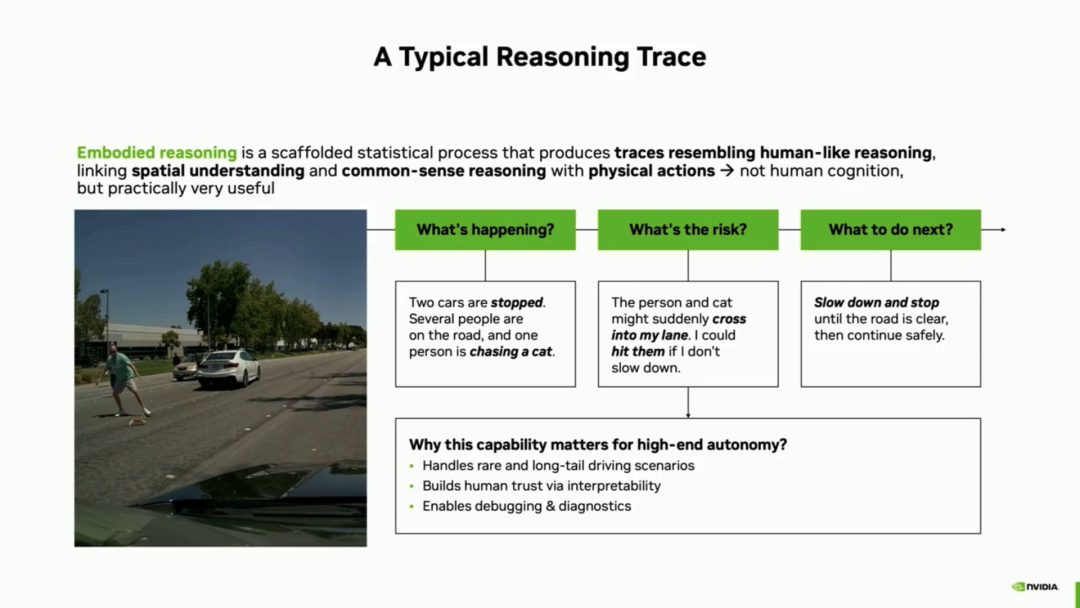

The first major highlight of this model's algorithm is 'concrete reasoning'—generating trajectories similar to human reasoning by linking spatial understanding and common-sense reasoning with physical actions. To build this 'concrete reasoning' capability, NVIDIA's Alpamayo adopts a multi-stage training pipeline:

General Reasoning: Starting with Cosmos Reason, general reasoning capabilities are trained using internet-scale data. This is essentially within the realm of foundational model training.

Trajectory Pretraining: Pretraining on massive driving data endows the model with trajectory generation capabilities for autonomous driving. Generally, the first step in training from a general foundational model to a specialized autonomous driving model involves dedicated driving data training.

Supervised Fine-Tuning (SFT): Fine-tuning using automatically labeled driving-related reasoning trajectories to elicit explicit reasoning capabilities. This step primarily endow s (endows) the VLA model with language-based explicit reasoning capabilities.

Reinforcement Learning (RL): Building on RL in scenarios produced and modified by Cosmos, reasoning in highly challenging situations is improved, and alignment between output modalities is promoted.

After these steps, a VLA large model is essentially complete, as detailed in our previous article, 'Xinzhou Wu Leads NVIDIA's Charge Toward L4 Autonomous Driving with VLA Large Model Algorithms.'

Finally, knowledge distillation is employed for model deployment: compressing vast capabilities into a model suitable for vehicle deployment.

The entire training process presents the following challenges:

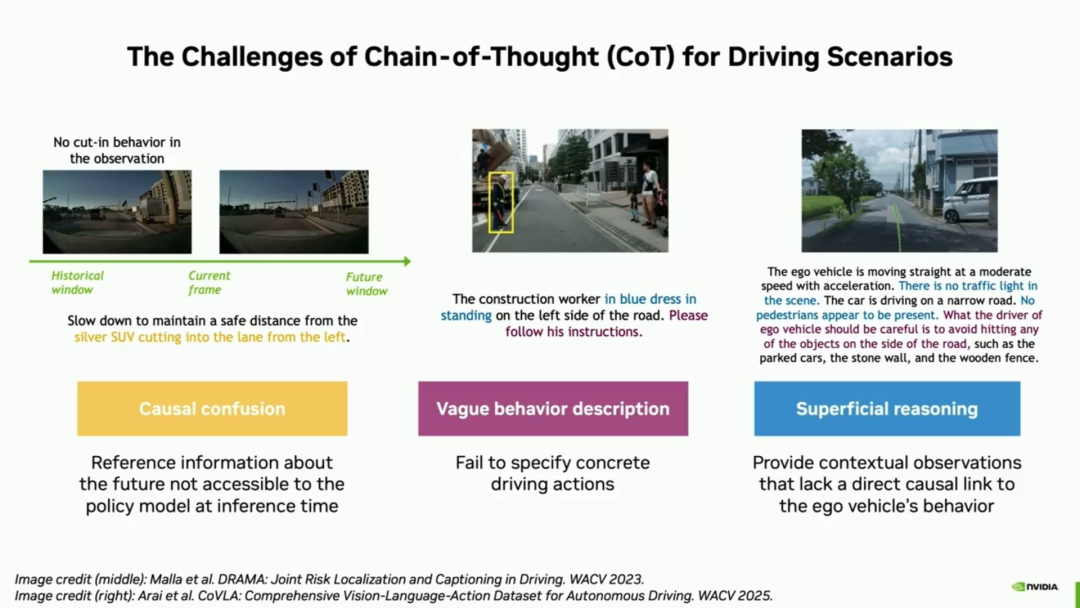

1. Breaking through the limitations of automatic labeling with pure text chains of thought (COT): The biggest challenge in automatic causal chain labeling during the SFT stage is generating high-quality reasoning labels at scale. Traditional automatic labeling with text chains of thought (COT) has three fatal flaws:

- Causal confusion: Reasoning trajectories may leak future information, such as prematurely stating, 'The silver SUV will cut in later.'

- Vague behavioral descriptions: Unable to provide specific driving operations.

- Superficial reasoning: Descriptions lack context directly causally linked to the vehicle's behavior.

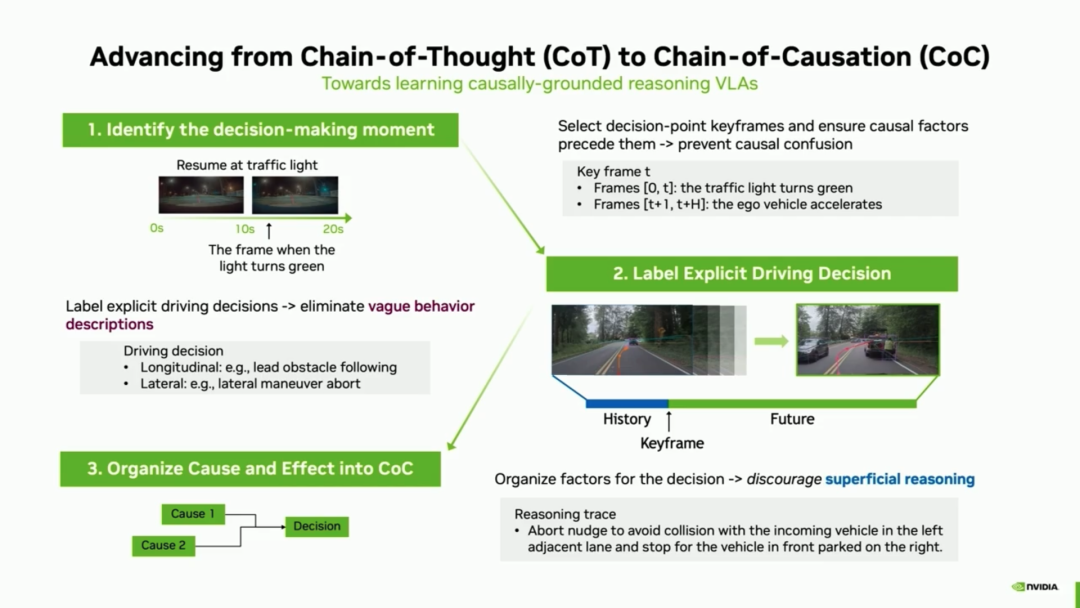

To address these issues, NVIDIA adopted a 'causal chain automatic labeling pipeline' to overcome this pain point:

- Anchoring Key Frames: Reasoning generation is strictly anchored to critical decision-making moments (e.g., the instant a traffic light turns green), ensuring the reasoning process includes only factors preceding the key frame and preventing future information leakage.

- Closed Decision Vocabulary: Decisions are classified into longitudinal and lateral types, with a clear vocabulary established to ensure precise terminology describes behaviors, eliminating ambiguity.

- Causal Chain Templates: Models are guided to ensure each statement conforms to causal chain logic, preventing superficial reasoning.

NVIDIA's Marco Pavone stated that by switching from unstructured chains of thought to structured causal chains, explicit reasoning accuracy improved by an astonishing 121%. When handling 'long-tail scenarios' capturing complex motion behaviors and out-of-distribution visual contexts, trajectory displacement (average ADE) decreased by approximately 12%, proving reasoning highly beneficial in complex edge scenarios.

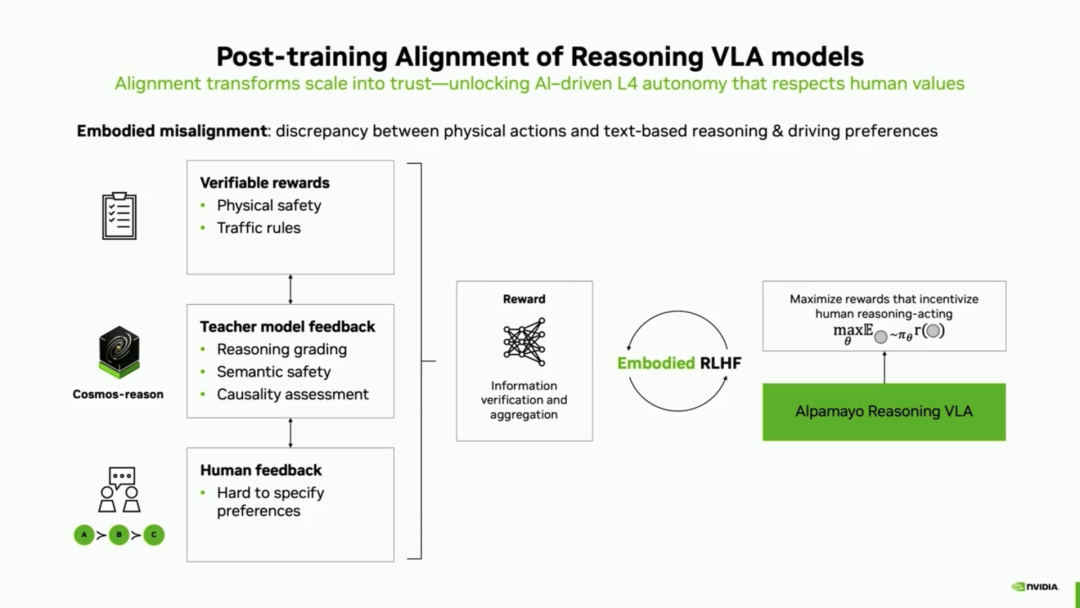

2. Eliminating 'Embodiment Inconsistency': After reinforcement learning, the model can reason, but what if 'it thinks left but turns right'? This potential discrepancy between chain-of-thought reasoning and the model's directly output actions is called 'embodiment inconsistency' (since action generation often merely imitates training data without truly understanding the underlying reasons).

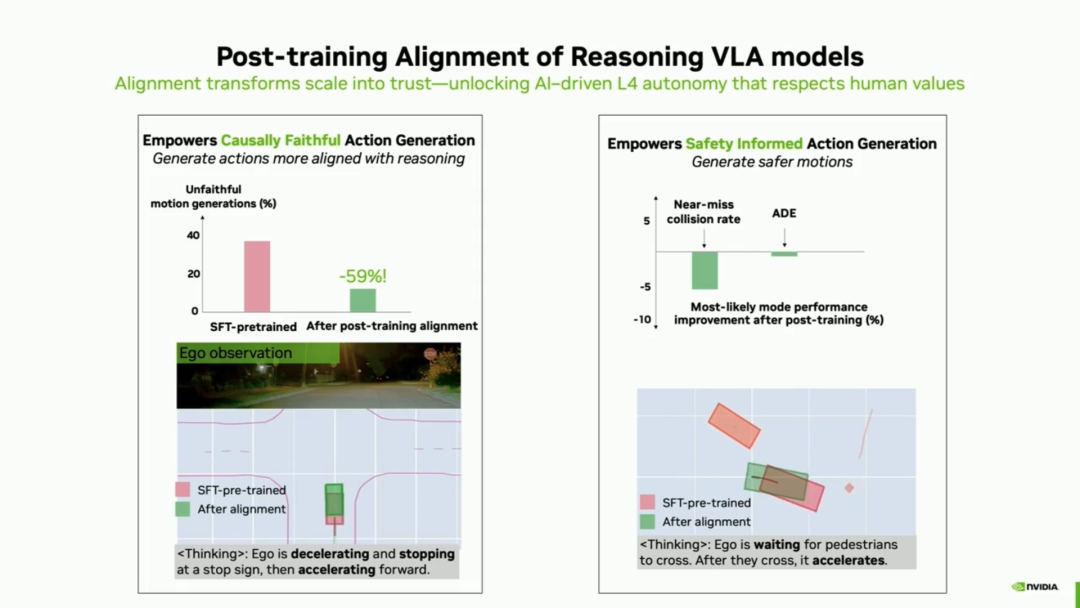

To address this, the team introduced reinforcement learning (RL), integrating verifiable safety rewards, teacher model feedback, and human preference aggregation into a unified reward model. After alignment, the model's generated actions became more consistent with corresponding reasoning trajectories, reducing unfaithful actions by nearly 60%. For example, when the model inferred the need to decelerate, stop, and then accelerate, the aligned model strictly followed the complete causal sequence while significantly reducing near-collision rates.

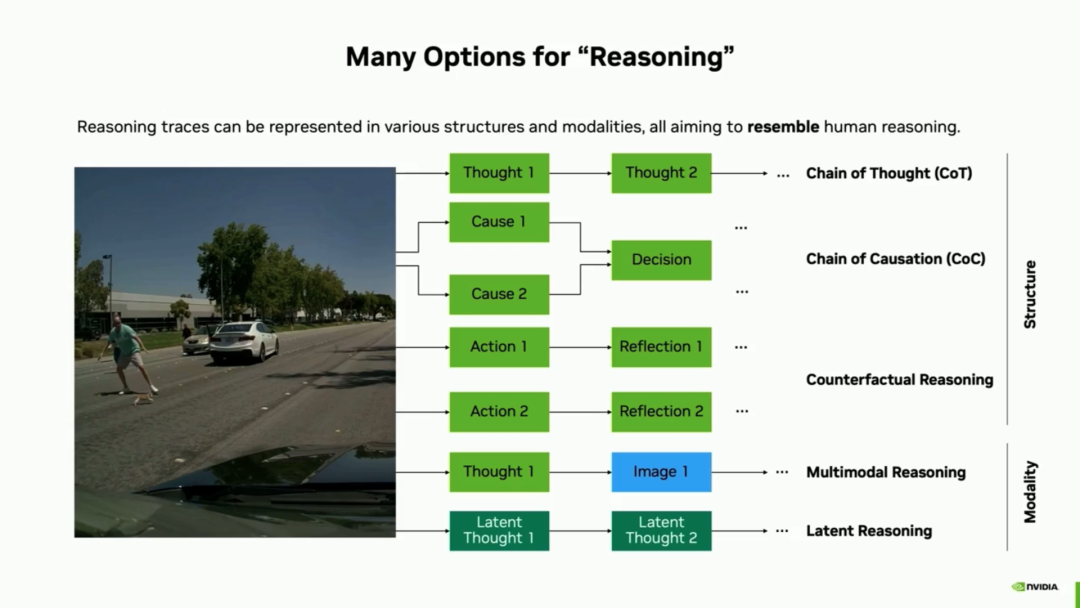

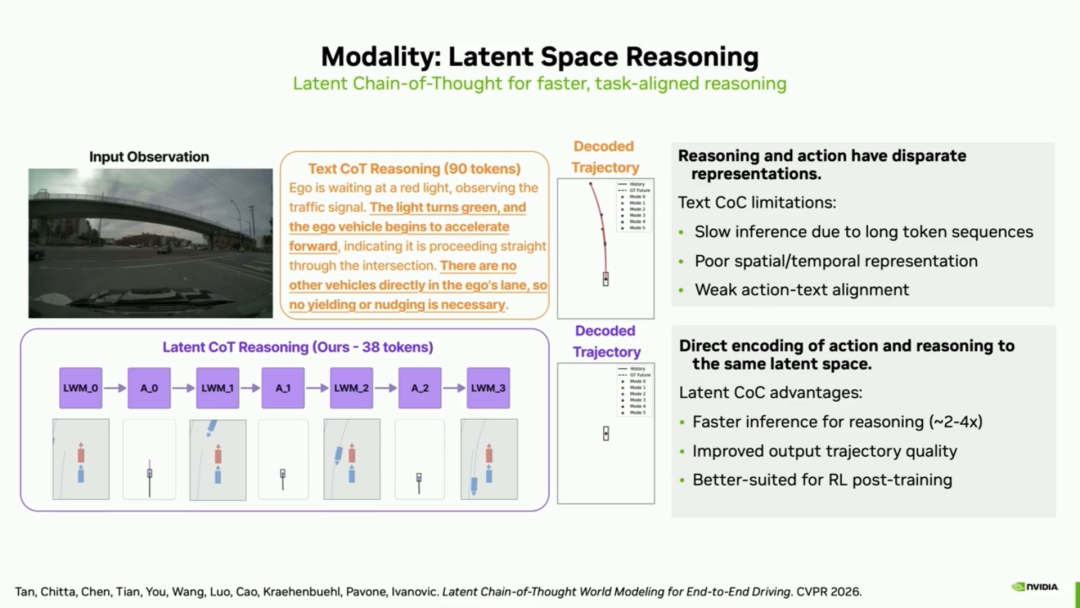

3. Cutting-Edge Exploration: From Text Reasoning to 'Latent Space Reasoning' While language text is easy to interpret, it is not the most efficient representation in terms of token count and reasoning time. This highlights that the 'L' in VLA is truly token-intensive, a current engineering challenge for VLA deployment. NVIDIA is exploring reasoning in continuous latent spaces. This not only brings 2 to 4 times reasoning acceleration but also makes post-training optimization smoother. In complex partially observable scenarios (e.g., responding to pedestrians who may cross the road at any moment), the model even demonstrates counterfactual reasoning and self-regulating 'thinking rate' capabilities—the harder the scenario, the more time it spends deducing updates, achieving better driving performance.

This is the implicit reasoning method, or what some call the 'world model.' Li Auto also shared in their GTC 2026 presentation that their next-generation MindVLA will adopt this approach, as detailed in our article, 'Analysis of the Architecture and Algorithmic Applications of Li Auto's Next-Generation Foundational Model, Mind VLA-o1.'

Part 2: Mass Production Deployment - Overcoming Physical Bottlenecks in Interaction and Real-Time Computation

In reality, deploying such a powerful research-grade reasoning model into actual vehicle production requires overcoming three pillar challenges: system interaction, data quality, and extremely high real-time performance, given the computational constraints at the vehicle end.

How did NVIDIA's Patrick Liu address these issues in mass production? He provided their answers:

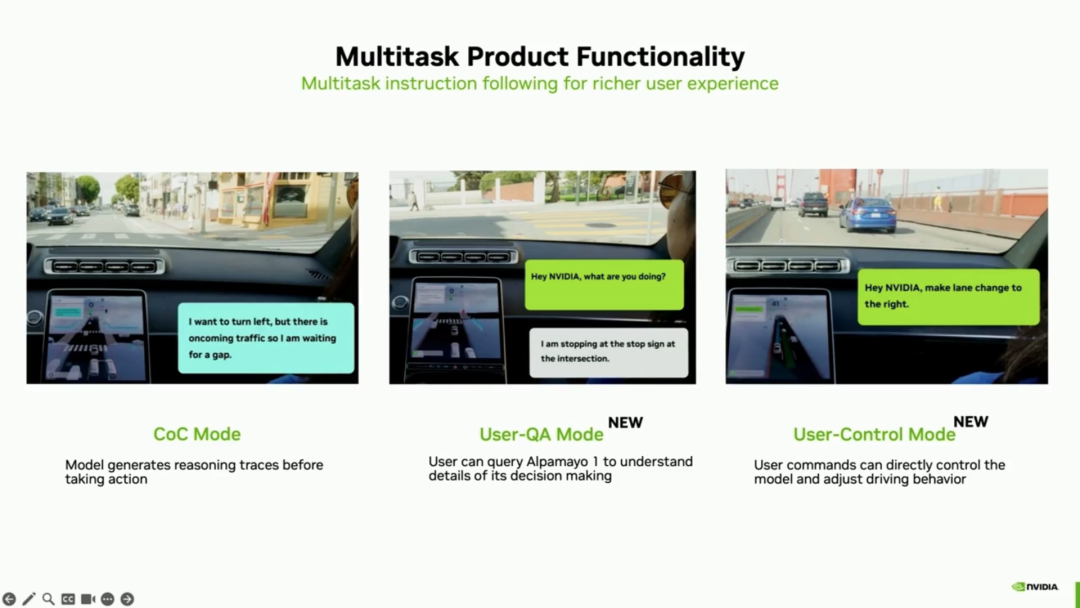

1. Multi-Task Product Functionality and 'Mode Expert' Architecture To achieve an L4-level experience that combines autonomous driving with interactivity and explainability, the production model introduces two new modes in addition to autonomous reasoning:

- User Q&A Mode: Adds a natural language interface to the black-box neural network, allowing users to ask, 'What are you doing?' or 'Why are you slowing down?' greatly enhancing trust.

- User Control Mode: Users can directly issue commands like 'Pull over,' 'Take the next exit,' or 'Go a bit faster.'

To support these three modes, a core module—the Mode Expert—is introduced at the system level. It serves two main functions:

- Protective Interception: If a user issues a harmful command (e.g., 'Hit that trash can'), the Mode Expert proactively rejects it, preventing it from reaching the model.

- Seamless Routing: It encodes the decision on which mode to execute as an 'extremely tiny single-modality token' forcibly input to the model.

This MOE design avoids delays caused by generating additional tokens and allows the model to override the original navigation route to comply with user control commands when necessary. The MOE approach has proven its efficiency under equivalent computational power over the past two years, as demonstrated by Deepseek.

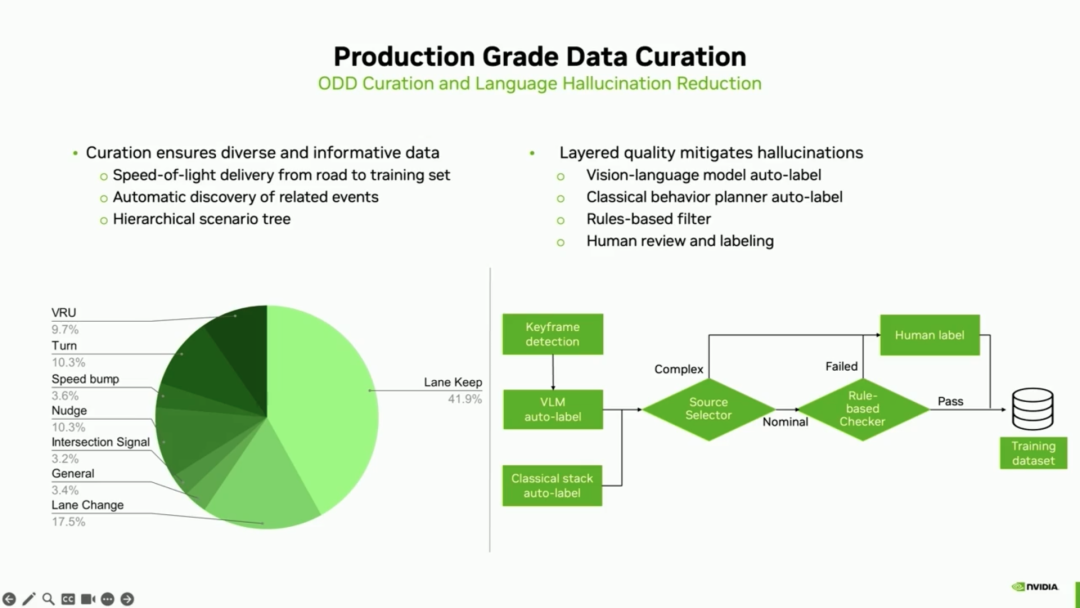

2. Production-Grade Data Pipeline To generate high-quality, highly consistent 'C datasets,' the R&D team spent over 100 iterations balancing complex data hybrid structures between cloud and vehicle ends. In addition to relying on vision-language models (VLMs) and classical behavioral planning stacks for automatic labeling and using rule filters to clean data, the entire pipeline must include 'human-in-the-loop (HITL) QA' to rigorously verify the accuracy and authenticity of all labels.

3. Real-Time Deployment: Hardcore 4x Real-Time Acceleration Technology This is the most critical aspect of mass production. The vehicle-end replanning budget is 100 milliseconds (i.e., 10 fps), while the original unoptimized model latency exceeded the budget by approximately 4 times. To generate all reasoning and trajectory tokens within the stringent budget, the team adopted a dual-pronged technical breakthrough:",

Combining the aforementioned low-level restructuring for model design and extreme engineering optimizations for mass production deployment, NVIDIA has successfully brought Alpamayo 1 from cutting-edge research into real-world automotive production deployment.

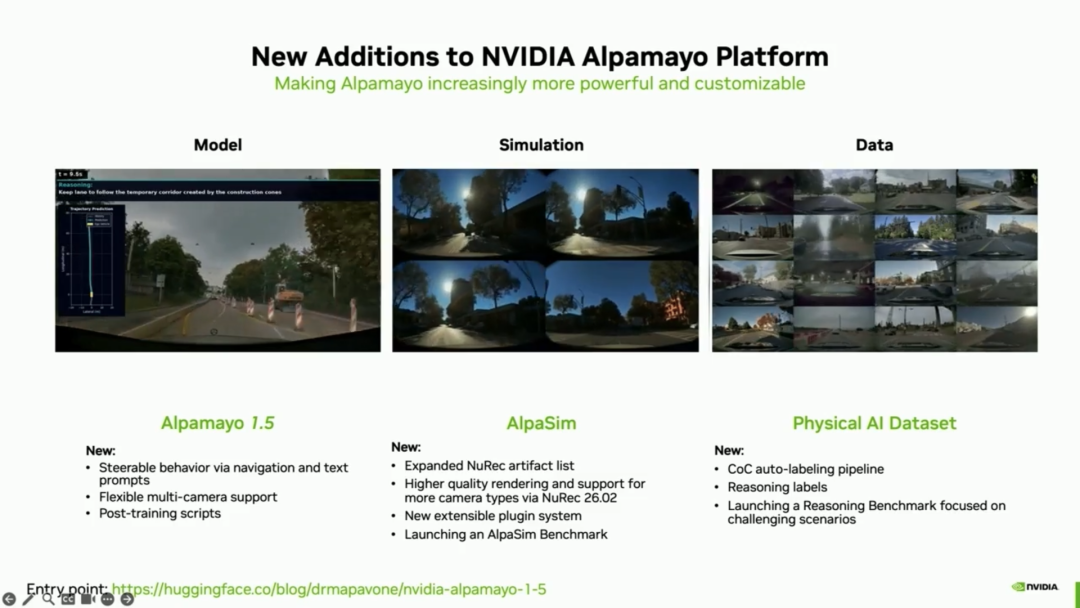

Finally, at GTC 2026, NVIDIA announced the release of the new Alpamayo 1.5 model

The newly released Alpamayo 1.5 model, while maintaining its original scale of 10 billion parameters, primarily adds the functionality of controlling assisted driving through navigation and language dialogue. This is quite a challenging feat. In addition to these enhancements, the open model also includes specialized virtual simulation suites, as well as datasets such as the CoC auto-labeling and inference labels mentioned earlier.

The addition of these new features further enhances the model's flexibility and controllability in practical applications, making it akin to a public L4 Android software. It can assist many traditional OEMs in initiating self-research modes, much like how many internet companies have started.

Finally, while algorithms are crucial tools for autonomous driving, autonomous driving products are where the deepest interactions with application scenarios occur. Friends interested in autonomous driving products can click on the book 'Autonomous Driving Product Manager' co-published by Vehicle and Mechanical Industry Press, which provides a detailed introduction to the entire process of autonomous driving products and operations.

References and Images

From Research to Production: How Alpamayo Accelerates Autonomous Vehicle Development - NVIDIA *Reproduction and excerpting are strictly prohibited without permission-