In the ‘Token’ Era, Cloud Providers’ Survival Strategies Evolve

04/03 2026

Source | Bohu Finance (bohuFN)

Since the start of the year, a ‘lobster craze’ has swept the country, with open-source AI agents such as OpenClaw (whose mascot is a lobster) quickly gaining traction. Users around the world are busy ‘raising’ digital employees and putting them to work, driving an exponential increase in Token consumption.

Amid this boom, NVIDIA CEO Jensen Huang has suggested using Tokens as a form of pay, and Alibaba and Tencent have begun offering Tokens as employee benefits. Far-fetched as it may sound, with Token prices soaring, the prediction of ‘computing power as compensation’ is edging closer to reality.

Recently, Alibaba Cloud, Baidu Cloud, and Tencent Cloud have, one after another, raised prices for AI computing and storage products, with some increases exceeding 30%. Earlier this year, Amazon Web Services (AWS) and Google Cloud kicked off a round of price hikes, breaking the cloud industry’s long-standing pattern of prices that ‘only fall, never rise.’

Cloud giants justify these price increases by citing sustained growth in computing power demand and significant rises in costs for core hardware and related infrastructure. Simply put, the supply of computing power can no longer keep pace with consumption.

The National Data Bureau reported that China’s average daily Token invocation volume was 100 billion in early 2024; by March of this year, it had surpassed 140 trillion, an increase of more than a thousandfold (roughly 1,400×) in about two years. A ‘Token revolution’ is taking shape.

As competition in the AI era shifts from ‘model competition’ to ‘computing power competition,’ tech giants are accelerating their strategic realignment. Whoever can more efficiently ‘burn’ Tokens will gain pricing power in future commerce.

01 Cloud Providers Raise Prices En Masse

Why have Tokens suddenly become so valuable? Let’s first clarify what a Token is.

A Token is the smallest ‘computational unit’ when AI processes information. When we submit a sentence, a code snippet, or an image to AI, it is divided into individual Tokens, which the large model then understands, predicts, and generates.
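To make the idea of splitting text into Tokens concrete, here is a toy sketch. It assumes a tiny hypothetical vocabulary (`TOY_VOCAB`) and a simple greedy longest-match rule, similar in spirit to WordPiece. Production models instead use learned subword tokenizers (BPE and its variants) with vocabularies of tens of thousands of entries, so real Token counts will differ.

```python
# TOY_VOCAB is a hypothetical vocabulary for illustration only;
# real tokenizers learn vocabularies with tens of thousands of subword pieces.
TOY_VOCAB = {"token", "tokens", "cloud", "price", "s", "ize", "ing", " "}


def greedy_tokenize(text: str, vocab: set[str]) -> list[str]:
    """Split text left to right, always taking the longest piece found in vocab.

    Any character not covered by the vocabulary becomes a one-character token,
    so the loop always makes progress.
    """
    max_len = max(len(piece) for piece in vocab)
    tokens: list[str] = []
    i = 0
    while i < len(text):
        # Try the longest candidate first, then shrink until something matches.
        for length in range(min(max_len, len(text) - i), 0, -1):
            piece = text[i:i + length]
            if piece in vocab or length == 1:
                tokens.append(piece)
                i += length
                break
    return tokens


if __name__ == "__main__":
    print(greedy_tokenize("cloud prices", TOY_VOCAB))  # ['cloud', ' ', 'price', 's']
    print(greedy_tokenize("tokenize", TOY_VOCAB))      # ['token', 'ize']
```

The number of pieces returned is what gets metered; text the vocabulary covers poorly breaks into more, shorter Tokens and therefore costs more.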

Put simply, Tokens work like kilowatt-hours on an electricity meter: the more a large model is used, the more Tokens it consumes, and the higher the bill.
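The electricity-meter analogy amounts to linear, usage-based billing. The sketch below assumes a single hypothetical rate of 2.0 yuan per million Tokens (`PRICE_PER_MILLION_TOKENS`, an illustrative figure, not any provider's actual price); real price lists often charge input and output Tokens at different rates and vary by model.

```python
# Hypothetical flat rate, for illustration only (not a real provider's price).
PRICE_PER_MILLION_TOKENS = 2.0  # yuan per 1,000,000 Tokens


def token_bill(tokens_used: int, price_per_million: float = PRICE_PER_MILLION_TOKENS) -> float:
    """Metered billing: the charge grows in direct proportion to Tokens consumed,
    just as an electricity bill grows with kilowatt-hours."""
    return tokens_used / 1_000_000 * price_per_million


if __name__ == "__main__":
    # Example usage volumes, chosen only to show how the bill scales.
    for label, tokens in [("one essay", 10_000),
                          ("a heavy day of chat", 1_000_000),
                          ("a half-day agent run", 50_000_000)]:
        print(f"{label}: {tokens:,} Tokens -> {token_bill(tokens):.2f} yuan")
```

Doubling usage doubles the bill, and a price hike raises every line in proportion, which is why rising Token volumes and rising unit prices compound each other.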

However, Tokens were not always expensive; they were even free in the early days.

In late 2022, ChatGPT opened the door to artificial general intelligence (AGI) with its large language model. Over the following year, domestic and foreign tech giants rushed to develop their own general-purpose large models, bringing Token consumption to the forefront.

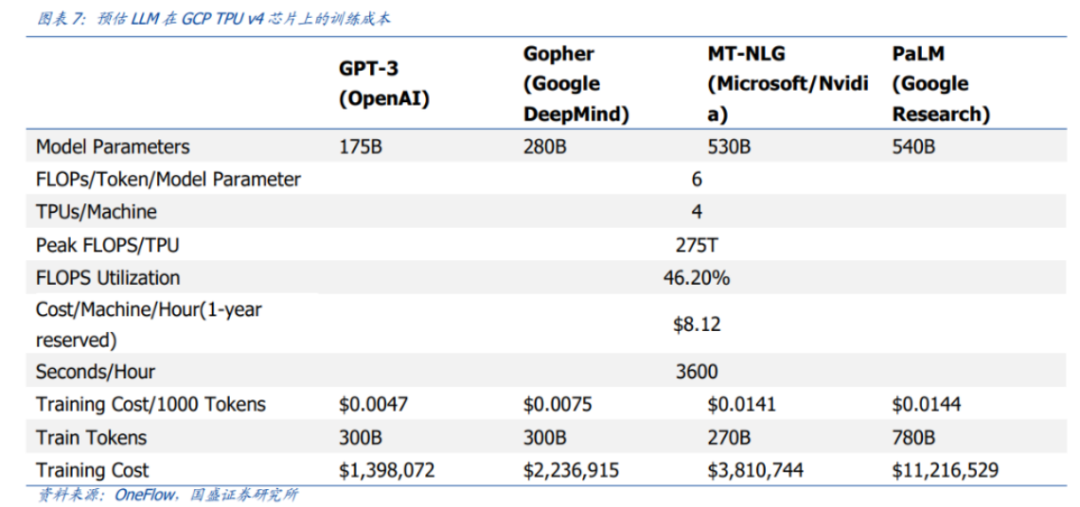

Based on parameter counts and training Token volumes, Guosheng Securities estimated that a single training run of GPT-3 costs approximately $1.4 million; for some larger LLMs, training costs range from $2 million to $12 million.

Despite these substantial training costs, in 2024 Alibaba, ByteDance, Baidu, and other major players not only offered free access to consumer users but also waged a fierce price war in the enterprise market, cutting API call prices from ‘cents’ to ‘fractions of a cent.’

During the initial boom phase of large models, the industry consensus was that computing power would keep getting cheaper, eventually becoming as ubiquitous as broadband and a staple of internet infrastructure.

Building on this assumption, major players continued to apply internet-era thinking, treating model capabilities as gateway resources. They aimed to attract developers and enterprises with extremely low prices, seeking a first-mover advantage in commercialization.

However, the scenario did not unfold as expected. The ‘Hundred Models War’ ended abruptly after just one year, with major players realizing that the commercial value of generative dialogue was limited. Large models needed to be applied in vertical scenarios to unlock greater competitiveness.

Yet, as AI shifted from ‘training’ to ‘inference,’ each conversation, generation, and inference required new computations, meaning Token demand no longer grew linearly but exponentially.

The emergence of agents such as Claude Code and OpenClaw has intensified Token demand further: agents can work around the clock, and each one can spawn hundreds or even thousands of sub-agents to handle different parts of a task.

Developers report that moving from chatbots to agents can raise the compute consumed per task by 30 to 100 times, and by more than 1,000 times in extreme cases.

For example, a student using AI to write a 7,500-word essay in one pass, with no revisions, would consume roughly 10,000 Tokens. By this measure, even a full day of text-only conversation consuming a million Tokens would count as heavy use.

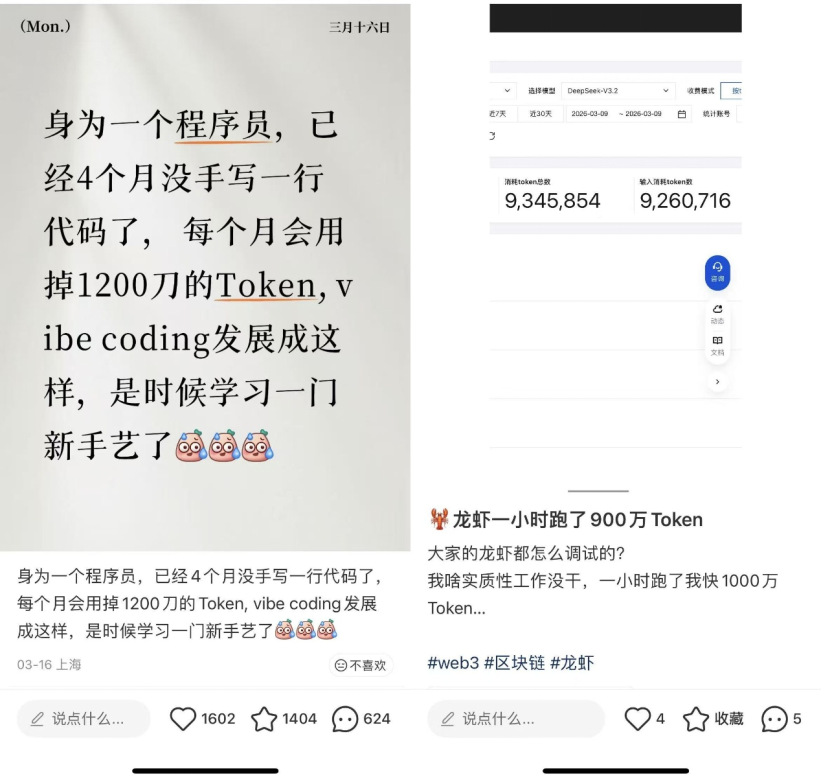

However, an agent performing a simple task might consume millions of Tokens. Some users report spending 50 million Tokens running OpenClaw for half a day; others say they burn through thousands of dollars a month programming with OpenClaw.
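The gap between chatting and running an agent is easier to see as a ratio. The short calculation below simply reuses the figures quoted above (about 10,000 Tokens for the essay, a million Tokens for a heavy chat day, and one user's reported 50 million Tokens for half a day of OpenClaw); it is arithmetic on anecdotal numbers, not a benchmark.

```python
# Figures quoted in the article (anecdotal, not measured benchmarks).
ESSAY_TOKENS = 10_000               # one ~7,500-word essay, no revisions
CHAT_DAY_TOKENS = 1_000_000         # a heavy day of text-only chat
AGENT_HALF_DAY_TOKENS = 50_000_000  # one user's reported half-day OpenClaw run


def essays_equivalent(tokens: int, tokens_per_essay: int = ESSAY_TOKENS) -> float:
    """Express a Token count as a number of essay-sized jobs."""
    return tokens / tokens_per_essay


if __name__ == "__main__":
    print(essays_equivalent(CHAT_DAY_TOKENS))        # 100.0
    print(essays_equivalent(AGENT_HALF_DAY_TOKENS))  # 5000.0
    print(AGENT_HALF_DAY_TOKENS / CHAT_DAY_TOKENS)   # 50.0
```

On these numbers, half a day of one agent equals roughly 5,000 essays, or 50 heavy days of chat.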

As agents go mainstream, users have learned to shop around, and cheaper domestic models have swept global developer communities, becoming major beneficiaries of this Token boom.

Recently, multiple media outlets, citing insiders, reported that Kimi's cumulative revenue over the past 20 days had surpassed its total revenue for all of 2025; newly listed MiniMax and Zhipu also saw their share prices hit new highs.

However, with a frenzied influx of domestic and international users, Kimi has frequently displayed ‘insufficient computing power during peak hours’ warnings, while MiniMax directly announced traffic restrictions...

Ultimately, the companies behind these smaller domestic models do not own GPUs of their own. To profit from the ‘lobster craze,’ they depend on prices set by cloud providers such as Alibaba Cloud, Tencent Cloud, and Volcano Engine.

Yet computing power costs are not cheap, with the GPU market already facing supply shortages. When revenue growth from selling Tokens cannot keep pace with the costs of building data centers, price hikes become inevitable.

02 Competing to Become ‘Token Factories’

Over the past year, surging Token demand has driven sharp revenue growth for cloud providers.

According to multiple media reports, Volcano Engine's revenue exceeded 20 billion yuan in 2025, up from over 12 billion yuan in 2024; Alibaba's Q3 FY2026 earnings report showed Alibaba Cloud's revenue growth accelerating to 36%, a three-year high, with AI-related product revenue posting triple-digit growth for the tenth consecutive quarter.

However, we must consider both revenue and investment. In 2025, Alibaba's capital expenditures reached 123.8 billion yuan; according to the Financial Times, ByteDance's capital expenditures were approximately 150 billion yuan. When will ‘selling computing power’ alone recoup these investments?

Tech giants are clearly unwilling to remain mere ‘shovel sellers.’ Since Tokens are the ‘hard currency’ of future AI competition, they aspire to control the entire Token production, scheduling, and monetization chain, becoming the ‘utilities’ of the intelligent era.

At NVIDIA's GTC 2026 conference, Jensen Huang outlined a new vision: NVIDIA would transform from a ‘chip company’ to an ‘AI infrastructure and factory company,’ becoming a ‘Token factory’ rather than just selling chips.

Currently, NVIDIA aims to address its inference shortcomings by acquiring Groq. Its newly released Vera CPU enables real-time scheduling and coordination for agentic AI systems. Combined with the ecosystem moat of CUDA and its partnerships with power giants to build next-generation AI factories, NVIDIA's goal is for every Token, from creation to consumption, to pass through its ‘Token factory.’

Under Huang's tiered pricing strategy, Token prices will track the computing power behind them: the more capable and faster the system, the higher the price. NVIDIA will control both the roadmap and the pricing power of this system.
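A tiered scheme of the kind described here can be sketched as a price table keyed by service tier. The tier names, throughput figures, and prices below are hypothetical placeholders, not NVIDIA's actual tiers; the point is only the structure, in which faster output commands a higher price per Token.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    tokens_per_second: float  # hypothetical output speed of the serving system
    price_per_million: float  # hypothetical price; rises with speed/capability


# Illustrative tiers only (not NVIDIA's or any vendor's real offering).
TIERS = {
    t.name: t
    for t in [
        Tier("standard", 50.0, 1.0),
        Tier("fast", 200.0, 3.0),
        Tier("premium", 1_000.0, 10.0),
    ]
}


def quote(tokens: int, tier_name: str) -> tuple[float, float]:
    """Return (cost, seconds) to generate `tokens` on the given tier."""
    tier = TIERS[tier_name]
    cost = tokens / 1_000_000 * tier.price_per_million
    seconds = tokens / tier.tokens_per_second
    return cost, seconds


if __name__ == "__main__":
    for name in TIERS:
        cost, seconds = quote(1_000_000, name)
        print(f"{name}: {cost:.2f} for 1,000,000 Tokens, {seconds:,.0f} s")
```

Under this structure a customer trades cost against latency: in this toy table, the same million Tokens costs ten times more on the premium tier than on the standard tier but arrives twenty times faster.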

Alibaba's strategic direction aligns closely with NVIDIA's, but Alibaba aims not only to be a Token supplier and infrastructure operator but also to explore new businesses that drive greater Token consumption.

Recently, Alibaba established the Alibaba Token Hub (ATH) business group, led directly by Wu Yongming, with the core objectives of ‘creating Tokens, delivering Tokens, and applying Tokens’ to further strengthen AI business strategic synergy.

Subsequently, Alibaba launched ‘Wukong,’ an enterprise-grade, AI-native work platform for the B2B market, initially covering ten scenarios including e-commerce, design, manufacturing, and finance. It is positioned as a digital workforce that can genuinely integrate into enterprise workflows.

Chen Hang, founder of DingTalk, who has returned to Alibaba, pointed out that the value of Token economics lies not in replacing humans with machines but in converting a portion of massive human resource costs into digital productivity amplified 10-100 times.

This echoes the earlier goal of improving operational efficiency through SaaS (Software as a Service), but SaaS mainly keeps operations running, whereas ‘Wukong,’ built on MaaS (Model as a Service), aims to drive quantifiable business growth. Once enterprises anchor their computing power and data within Alibaba's ecosystem, switching costs will rise, ultimately forming a core competitive barrier.

ByteDance's Volcano Engine shares similar ambitions, making MaaS a top strategic priority to drive comprehensive breakthroughs in its cloud business.

Volcano Engine's advantage lies in ByteDance's ownership of national-level apps like Douyin and Jianying, allowing models for image and video generation to rapidly validate and iterate in user scenarios with hundreds of millions of users, forming a closed-loop advantage of ‘computing power-model-scenario.’

For example, the Seedance 2.0 model quickly gained attention for its breakthrough capabilities, attracting massive user inflows and achieving scaled implementation in professional scenarios like serialized dramas, directly driving a surge in Volcano Engine's Token consumption.

According to LatePost, daily Token invocations of ByteDance's Doubao large model on Volcano Engine now exceed 100 trillion, making Volcano Engine the world's third cloud provider to pass this milestone.

Other domestic cloud providers are not sitting idle, however. Alibaba CEO Wu Yongming stated that the newly established ATH business group aims to capture 80% of the growth in China's AI cloud market in 2026.

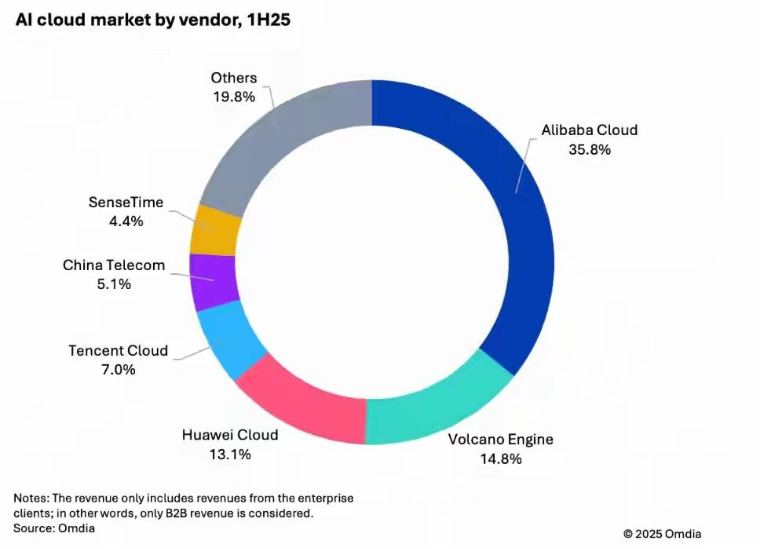

Currently, Alibaba Cloud leads China's AI cloud market with a 35.8% share; Volcano Engine ranks second with 14.8%. The battle for Tokens is imminent.

03 Tokens Become ‘Currency’

Despite differing paths, tech giants’ strategic layouts all point to the same reality:

Competition in the AI era is no longer just about model capabilities; tech giants are vying for control over a Token production, distribution, and application system. Whoever controls Token pricing will essentially control the most valuable production assets of the AI era.

Previously, tech giants competed for user attention; in the future, they will focus on where Tokens originate, where they flow, and how they are consumed.

First, producing high-quality Tokens at lower costs. In the past, the internet industry's ‘moats’ were light-asset narratives like data, algorithms, and scale. In the future, major players will need to re-embrace ‘heavy assets,’ treating computing power infrastructure as a new strategic high ground.

China's inexpensive and stable electricity supply, combined with tech firms' breakthroughs in chips and model architectures, provides natural advantages for computing power exports. This round of collective price hikes by cloud providers also signals their intent to seek profit margins in the global market.

Second, competing for Token distribution rights. Major players control large model technologies, enabling them to convert underlying computing power into standardized, callable Token services, further lowering the barrier for users to access model capabilities.

For example, Alibaba's ‘Wukong’ integrates into enterprise work platforms; WeChat launched plugins supporting OpenClaw access. Once users deploy these services, continuous Token consumption will follow, with major players vying for new ‘Token gateways.’

Finally, stimulating Token consumption. Owning Tokens alone does not form a commercial closed loop; embedding model capabilities into real business scenarios is essential for sustainable Token consumption.

In the long run, ‘Token factories’ seek ‘consumption-output’ conversion efficiency. The core is not selling one-time computing power but truly binding Token consumption to customer value, creating stable and sustainable commercial revenue.

However, the rules will not stay fixed. AI is developing far faster than anyone expected, and no one can predict the future, just as no one foresaw OpenClaw's sudden popularity years ago.

Therefore, tech giants, vertical model companies, and agent tool developers alike must keep running and keep learning in their respective domains.

For major players to transform AI from a vague concept into a tangible business, it will no longer be just a parameter race or a simple competition over Token consumption. Ultimately, it will follow the most fundamental logic: AI that ordinary people can use effectively is the most valuable AI.

The true gateway to AI commercialization in this era may be near, or it may still be far away.
