Rivaling Nano Banana Pro! RealRestorer - The King of Open-Source Real-World Image Restoration by Southern University of Science and Technology & StepFun and Others

04/10 2026

Interpretation: The AI-Generated Future

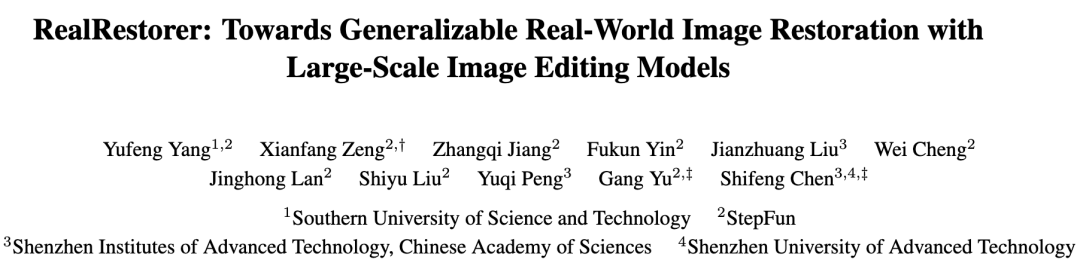

This work was jointly completed by institutions including the Southern University of Science and Technology, StepFun, and the Shenzhen Institute of Advanced Technology, Chinese Academy of Sciences. The paper, project page, model, and benchmark have been published simultaneously.

Key Highlights

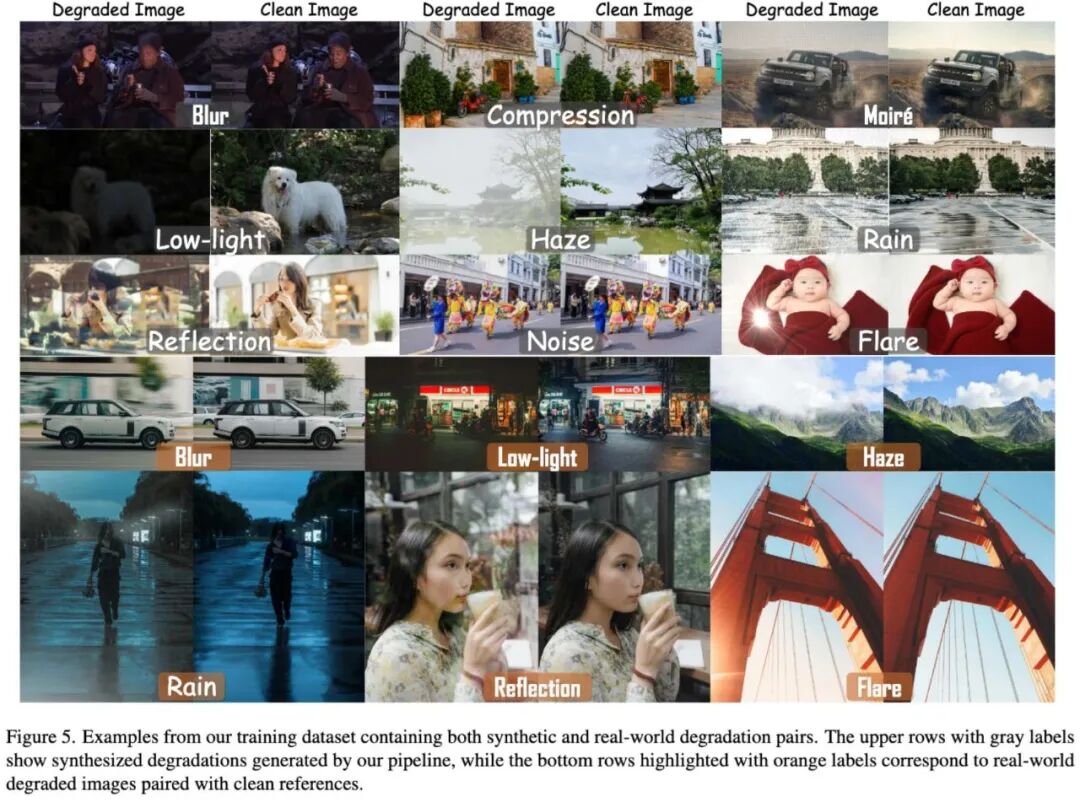

Real-world image restoration. The work moves beyond 'synthetic degradation' to build a more versatile and practical model for real-world scenarios.

Adapted from a large-scale image editing model. It balances 'clean restoration' and 'content fidelity,' focusing on preserving the original image's scene structure, semantic content, and fine-grained details while avoiding common issues like 'over-restoration,' 'content distortion,' and 'semantic drift.'

Integrated approach to data, model, and evaluation. The paper not only proposes the model itself but also constructs a data generation process closer to real-world distributions and introduces a new real-world evaluation benchmark, RealIR-Bench. On this benchmark, the model ranks first among open-source methods.

Summary Overview

Problems Solved

Poor Generalization in Real-World Degradation: Traditional image restoration methods are often trained and evaluated on synthetic degradation data, leading to a significant decline in generalization when faced with complex degradations in real-world photography.

Inadequate 'Real-World' Evaluation Methods: Many restoration tasks rely on paired clean images to calculate PSNR and SSIM. However, real-world scenarios often lack strictly aligned 'ground truth' images, so traditional reference-based metrics cannot accurately reflect actual restoration performance.

Significant Gap Between Open-Source and Closed-Source Solutions: Closed-source image editing systems have demonstrated strong real-world restoration capabilities, but the open-source community has long lacked a comparable solution.

Proposed Solution

Core Framework: RealRestorer is based on the open-source image editing model Step1X-Edit, retaining its large-scale DiT architecture, QwenVL text encoder, and Flux-VAE representation capabilities. Only the DiT backbone is fine-tuned, shifting its high-level editing capabilities to low-level real-world restoration tasks.

Core Idea: Leveraging the strong priors of large-scale editing models, combined with synthetic and real degradation data pipelines, to train a powerful image restoration model capable of generalizing in real-world scenarios.

Key Technical Points:

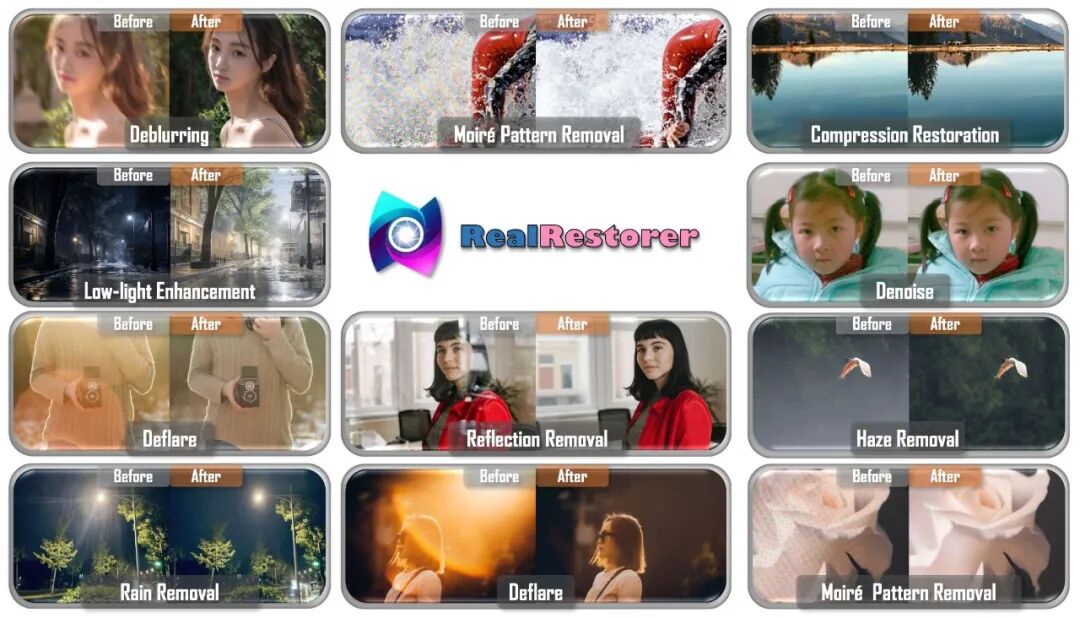

Constructed a large-scale degradation synthesis pipeline covering nine types of real-world degradations, introducing finer-grained noise modeling, regional perturbations, and web-style degradation processes to narrow the gap between synthetic and real distributions.

Collected additional real degradation images and generated corresponding paired high-quality degradation-free data using high-performance models to further align with real-world distributions.

Adopted a two-stage training approach: The first stage uses approximately 1 million synthetic degradation pairs for transfer learning, while the second stage introduces about 100,000 real degradation pairs for supervised fine-tuning. The Progressively-Mixed training strategy is employed in the second stage, retaining a small amount of synthetic data to prevent overfitting to real sample distributions and maintain cross-task generalization.

Technologies Applied

Large-scale image editing model transfer. Large-scale image editing models possess stronger semantic priors and content modeling capabilities, making them more suitable for handling complex real-world degradations.

Synthetic + real hybrid data construction. The authors did not simply stack data but used both synthetic and real degradation pairs to balance scalability and authenticity.

No-reference evaluation benchmark. RealIR-Bench does not rely on paired ground-truth images. Instead, it uses a vision-language model (VLM) to assign a Restoration Score (RS) and combines it with LPIPS, which measures content consistency, to produce a comprehensive Final Score (FS).

Achieved Results

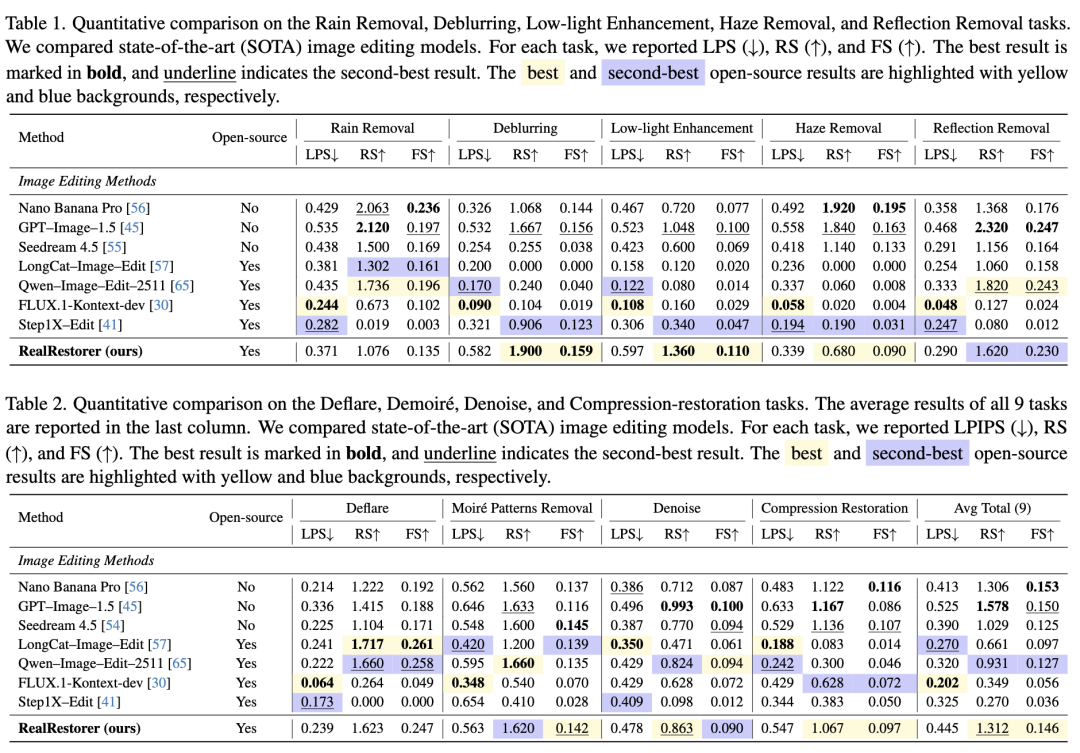

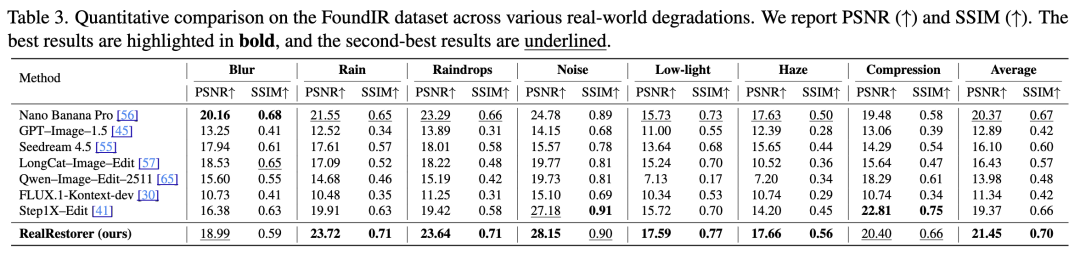

Open-Source SOTA: RealRestorer ranks first among open-source methods on RealIR-Bench and third overall, very close to top closed-source models.

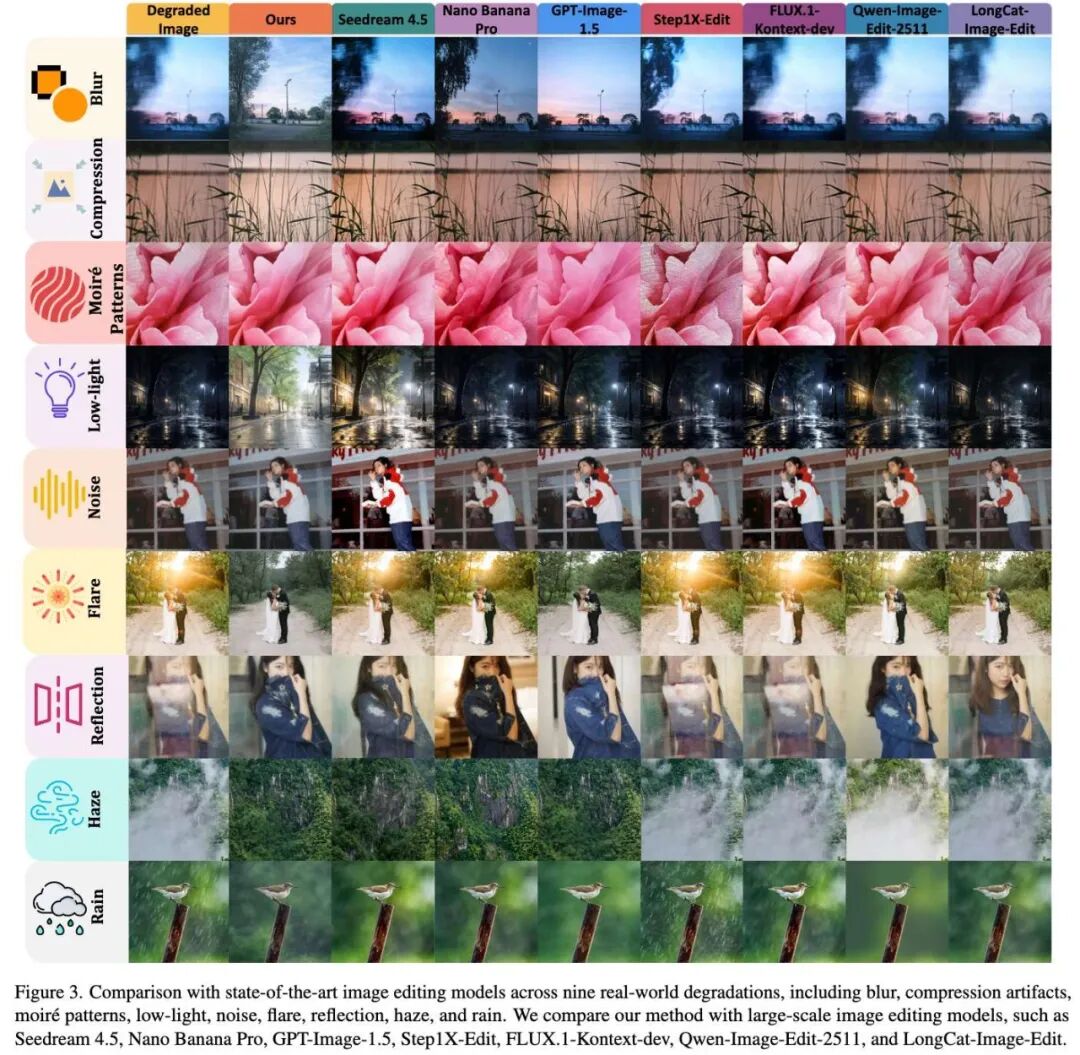

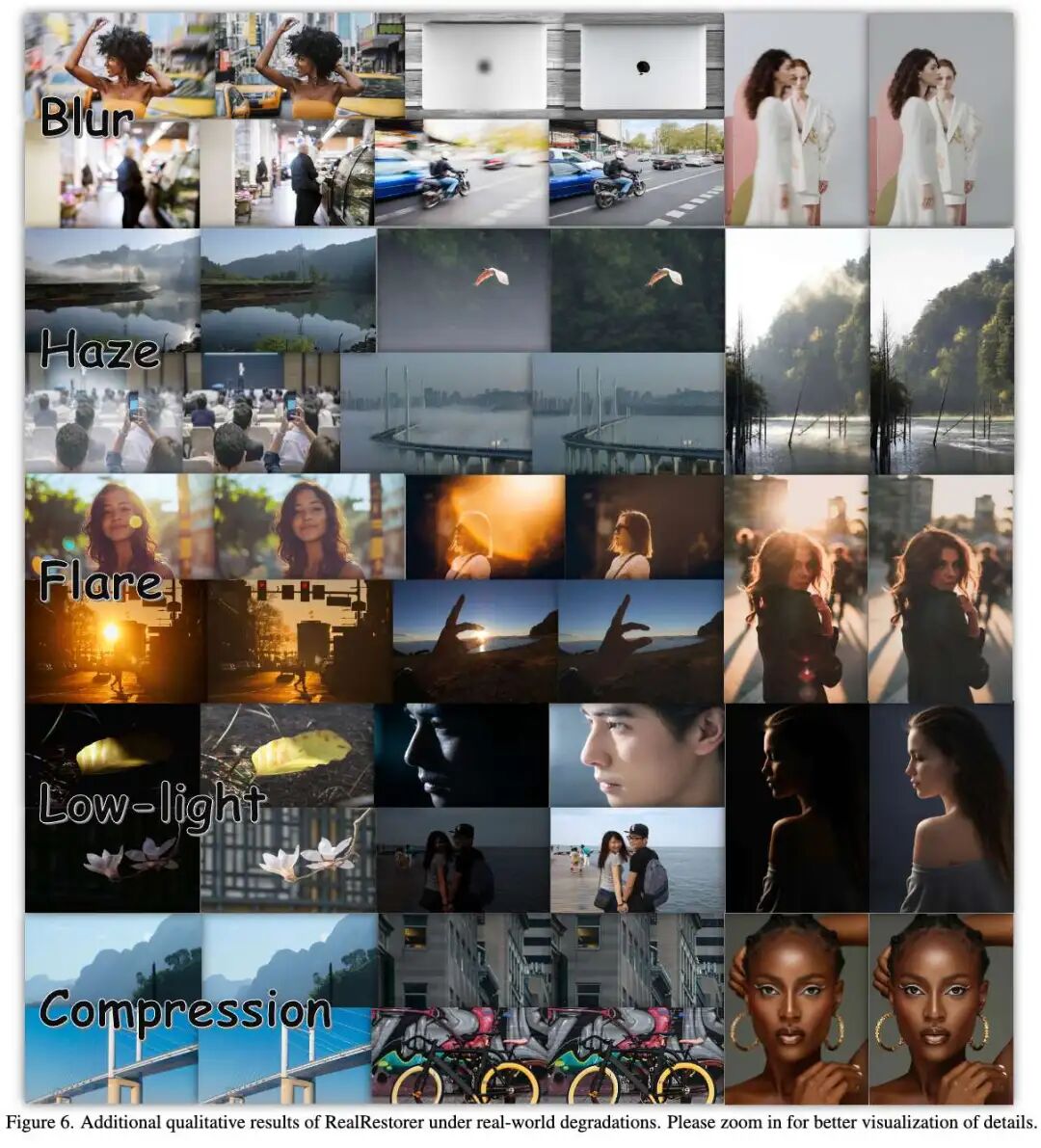

Balanced Performance Across Multiple Tasks: The paper shows that RealRestorer performs strongly across nine task categories, achieving the best results in deblurring and low-light enhancement and ranking second in moiré removal. Overall, it ranks first in five categories and second in two among open-source models.

Stronger Content Consistency: Compared to aggressive editing models that 'over-restore' and drift in content, RealRestorer emphasizes preserving structure, semantics, and details, enhancing usability in real-world applications.

Zero-Shot Generalization Capability: Beyond the nine degradation types covered in the paper, the authors also report zero-shot generalization to unseen tasks, such as snow removal and old photo restoration.

Methodology

Model Design

RealRestorer is fine-tuned based on Step1X-Edit, with a large-scale DiT as its core backbone, QwenVL for text encoding, and Flux-VAE for mapping images to latent space. During training, the VAE and text encoder are frozen, and only the DiT backbone is fine-tuned, gradually shifting its capabilities from 'generation/editing' to 'real-world restoration.'

Dataset Construction

The paper divides training data into two parts:

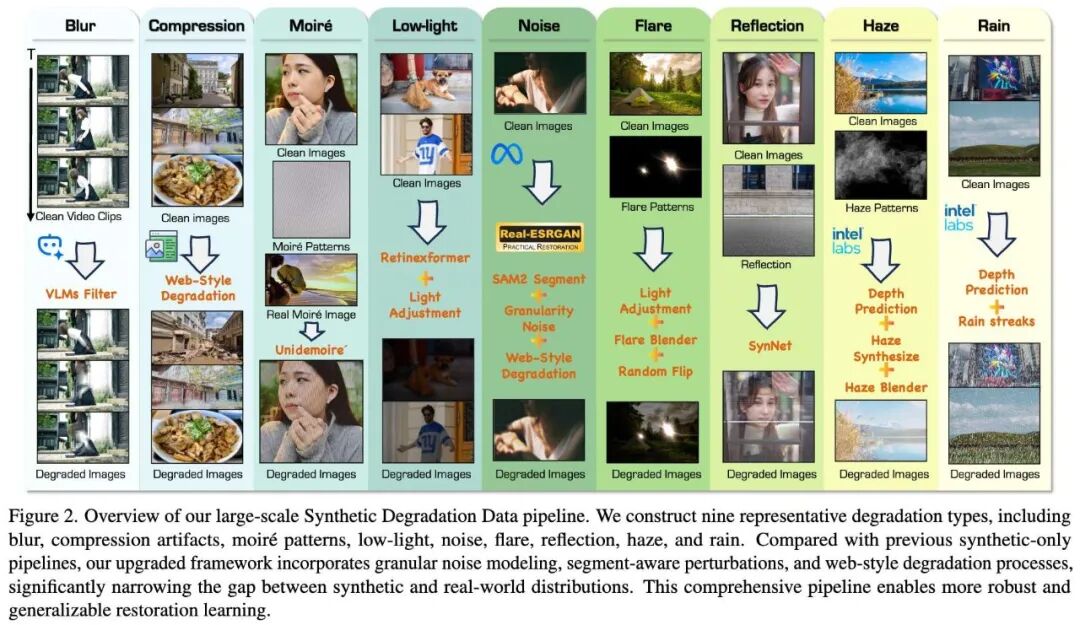

1. Synthetic Degradation Data

Clean images are collected from the internet and subjected to high-quality degradation simulation. Unlike traditional simple degradations, this process more closely mimics complex degradation patterns in real-world photography and is filtered and validated using SAM-2, MiDaS, VLM, and quality assessment models.

2. Real-World Degradation Data

Real degradation images are directly collected from the web, and corresponding high-quality reference images are generated. These are filtered and checked for consistency using CLIP, watermark detection, Qwen3-VL, and low-level metrics, followed by manual review to ensure quality.
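The filtering described for the real-world pairs can be pictured as a cascade of automatic checks that runs before manual review. The following is only a minimal sketch under stated assumptions: the `CandidatePair` fields, the threshold values, and the function names are all hypothetical, and the scores are assumed to have been computed beforehand by external tools (a CLIP similarity model, a watermark detector, a VLM, and a low-level quality metric). It is not the authors' pipeline.

```python
from dataclasses import dataclass


@dataclass
class CandidatePair:
    # Every field is hypothetical and assumed to be precomputed elsewhere.
    pair_id: str
    clip_similarity: float   # semantic similarity between degraded and reference image
    has_watermark: bool      # verdict of a watermark detector
    vlm_approved: bool       # yes/no consistency verdict from a VLM
    low_level_score: float   # any low-level quality metric, higher is better


def passes_filters(pair, min_clip=0.8, min_low_level=0.5):
    """Return True only if the pair clears every automatic check.

    The default thresholds are placeholders, not values from the paper.
    """
    if pair.has_watermark:
        return False
    if pair.clip_similarity < min_clip:
        return False
    if not pair.vlm_approved:
        return False
    return pair.low_level_score >= min_low_level


def select_for_manual_review(pairs, **thresholds):
    """Keep the pairs that survive the automatic checks, in their original order."""
    return [p for p in pairs if passes_filters(p, **thresholds)]
```

Ordering the cheap checks first (the watermark flag, then the similarity threshold) is a design choice of this sketch; any order yields the same surviving set.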

Training Scheme

RealRestorer adopts a two-stage training approach:

Stage 1: Transfer Training. Large-scale synthetic degradation pairs are used to transfer high-level priors from the image editing model to the image restoration task, establishing basic restoration capabilities.

Stage 2: Supervised Fine-Tuning. Real degradation data is introduced to enhance the model's adaptability to complex real-world scenarios. The authors employ a progressively mixed training strategy, incorporating some synthetic degradation pairs during the second stage to ensure the model retains broad generalization capabilities from synthetic data while approaching real-world distributions.

High-resolution (1024×1024) settings are used throughout the two-stage training.
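The stage-2 mixing can be sketched as a batch sampler that keeps a shrinking share of synthetic pairs alongside the real ones. This is a minimal illustration, not the authors' implementation: the linear decay schedule, the `start` and `end` ratios, and the function names are assumptions made for the example, since the article does not specify how the mix evolves.

```python
import random


def synthetic_ratio(step, total_steps, start=0.5, end=0.1):
    """Share of synthetic pairs at a given step.

    Assumed schedule: linear decay from `start` to `end` over stage 2.
    """
    if total_steps <= 1:
        return end
    progress = min(max(step / (total_steps - 1), 0.0), 1.0)
    return start + (end - start) * progress


def sample_batch(real_pairs, synthetic_pairs, batch_size, step, total_steps, rng=random):
    """Draw one stage-2 batch that mixes real and synthetic pairs."""
    n_synthetic = round(batch_size * synthetic_ratio(step, total_steps))
    n_real = batch_size - n_synthetic
    # Sampling with replacement keeps the sketch simple for pools of any size.
    batch = rng.choices(synthetic_pairs, k=n_synthetic) + rng.choices(real_pairs, k=n_real)
    rng.shuffle(batch)
    return batch
```

Keeping `end` above zero reflects the idea in the text that some synthetic data stays in the mix throughout stage 2, so the model does not overfit to the smaller real set.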

Experiments

RealIR-Bench consists entirely of real degradation images collected from the internet, totaling 464 images covering nine degradation types. Scenes are manually filtered to ensure diversity, degradation intensity, and image quality. Unlike traditional synthetic test sets with 'ground truth,' it emphasizes restoration capabilities in real-world environments.

Evaluation Metrics: Assessing Both 'Restoration Quality' and 'Content Fidelity'

The paper does not rely solely on PSNR/SSIM but designs two complementary metrics:

RS (Restoration Score): Measures the effectiveness of degradation removal.

LPIPS/LPS: Measures content consistency before and after restoration.

FS (Final Score): A comprehensive score combining both metrics.
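To see why a combined score needs both terms, consider a toy combination in which a restoration score is scaled down whenever the output drifts from the input. The exact definitions of RS and FS are given in the paper and are not reproduced here. The formula below, the assumed 0 to `rs_max` scale for RS, and the function name are illustrative assumptions only.

```python
def final_score(rs, lpips, rs_max=5.0):
    """Toy combination, NOT the paper's formula.

    Assumes RS lies in [0, rs_max] (higher is better) and LPIPS is a
    non-negative distance (lower means the content is better preserved).
    """
    if not 0.0 <= rs <= rs_max:
        raise ValueError("rs must lie in [0, rs_max]")
    if lpips < 0.0:
        raise ValueError("lpips must be non-negative")
    consistency = max(0.0, 1.0 - lpips)  # clamp so large distances give zero credit
    return (rs / rs_max) * consistency
```

Under this toy rule, an aggressive model that removes every degradation but rewrites the scene (high RS, high LPIPS) can score below a more conservative model that cleans slightly less but preserves the content.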

Results

Experiments show that RealRestorer consistently outperforms existing open-source image editing models on RealIR-Bench and achieves results close to top closed-source systems.

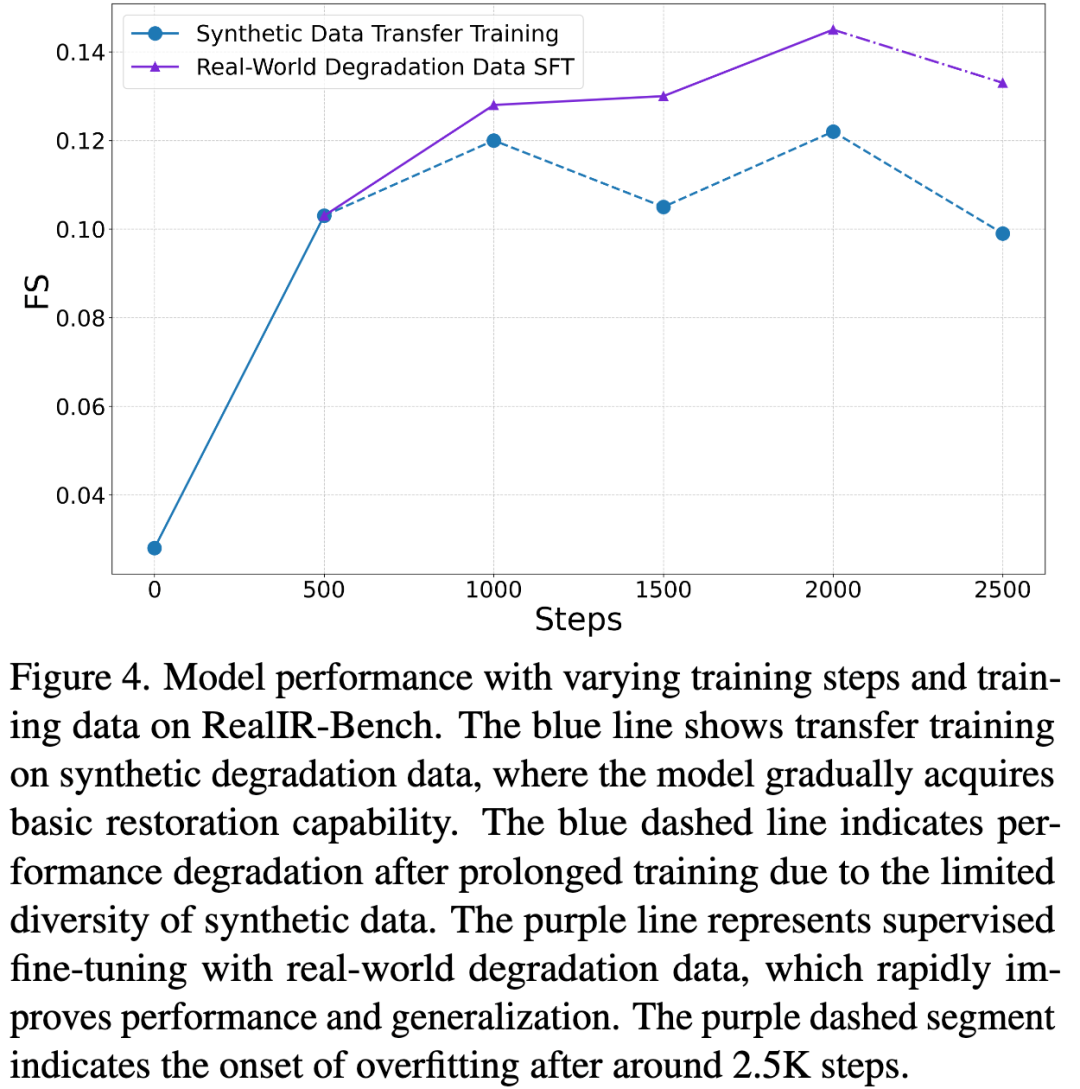

Ablation Study: Two-Stage Training is Essential for Performance

To verify the effectiveness of the proposed scheme, the authors systematically ablated training data and stages. Results show that when using only about 1 million synthetic degradation pairs for the first stage, the model gradually learns basic restoration capabilities and reaches a peak FS of 0.122. However, its generalization to complex real-world degradations remains insufficient, and with continued training its performance declines because synthetic data covers only a limited distribution.

Introducing about 100,000 real degradation pairs in the second stage allows the model to quickly surpass the best score from the first stage and significantly improves generalization in real-world scenarios. However, prolonged training on real data can lead to overfitting, so the authors employed early stopping to control the final checkpoint.
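Early stopping of this kind amounts to tracking a validation score across checkpoints and keeping the best one once the score stops improving. The sketch below is a generic version under assumptions: the `patience` rule, the use of FS as the monitored score, and the function name are illustrative, since the article does not describe the authors' exact stopping criterion.

```python
def select_checkpoint(fs_history, patience=3):
    """Pick the checkpoint with the best score before improvement stalls.

    fs_history: list of (step, fs) tuples in training order.
    Stops scanning after `patience` consecutive evaluations without a new best.
    Returns (best_step, best_fs); best_step is None for an empty history.
    """
    best_step, best_fs = None, float("-inf")
    stale = 0
    for step, fs in fs_history:
        if fs > best_fs:
            best_step, best_fs = step, fs
            stale = 0
        else:
            stale += 1
            if stale >= patience:
                break
    return best_step, best_fs
```

A small `patience` stops sooner and limits exposure to overfitting, at the risk of missing a later improvement.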

The authors further compared different training strategies. Models trained solely on synthetic degradation data still struggle with 'unclean restoration' of complex real-world degradations. Models trained only on real degradation data tend to overfit degradation patterns, leading to object distortion, character displacement, misremoval of natural light sources, and over-enhancement. In contrast, RealRestorer's two-stage approach achieves a better balance between 'degradation removal capability' and 'content structural stability.'

Progressively-Mixed Strategy: A Key to Preventing Overfitting

Beyond the two-stage training itself, the paper analyzes the role of the Progressively-Mixed strategy. By retaining a small amount of synthetic degradation data and mixing it with real degradation data during the second stage, the model avoids overfitting to limited real sample distributions. Visual results show that without this mixing strategy, the model becomes less stable in terms of structural consistency and content fidelity. In other words, this simple yet effective strategy provides tangible gains in performance and visual quality.

User Study: Automated Metrics Align with Human Judgment

To verify whether RealIR-Bench's evaluation metrics align with 'human intuition,' the authors conducted a user study. Thirty-two participants were recruited to rank 3,200 results generated by five high-performing models based on two criteria: restoration quality and content consistency. Results show that Nano Banana Pro was ranked first in 32.02% of cases, followed by GPT-Image-1.5 at 23.83% and RealRestorer at 21.54%. This ranking trend aligns closely with the overall results from the paper's automated evaluation, indicating good credibility of the benchmark and metric system.

Furthermore, the authors calculated the correlation between automated metrics and human judgment, including Kendall’s τ, Spearman Rank Correlation Coefficient (SRCC), and Pearson Linear Correlation Coefficient (PLCC). Results demonstrate moderate alignment between evaluation metrics and human perception. For real-world image restoration tasks lacking strict ground truth images, this is crucial as it means RealIR-Bench can not only 'calculate scores' but also reflect subjective user preferences to some extent.
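For readers unfamiliar with the three coefficients, a compact reference implementation is sketched below in plain Python. It follows the standard textbook definitions (Pearson on raw values, Spearman as Pearson on average ranks, Kendall's tau-b with tie correction). The function names are chosen for this example, and the authors' actual evaluation code may differ.

```python
from math import sqrt


def pearson(x, y):
    """Pearson linear correlation coefficient (PLCC)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def average_ranks(values):
    """1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman rank correlation (SRCC): Pearson applied to the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


def kendall_tau(x, y):
    """Kendall's tau-b, computed over all pairs in O(n^2)."""
    concordant = discordant = only_x_tied = only_y_tied = 0
    n = len(x)
    for i in range(n):
        for j in range(i + 1, n):
            dx, dy = x[i] - x[j], y[i] - y[j]
            if dx == 0 and dy == 0:
                continue  # tied in both variables: contributes to neither term
            if dx == 0:
                only_x_tied += 1
            elif dy == 0:
                only_y_tied += 1
            elif dx * dy > 0:
                concordant += 1
            else:
                discordant += 1
    untied = concordant + discordant
    return (concordant - discordant) / sqrt((untied + only_x_tied) * (untied + only_y_tied))
```

In a benchmark setting, `x` would hold the automated scores and `y` the human ratings for the same items. Values near 1 indicate strong agreement, and values near 0 indicate none.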

Conclusion

The significance of RealRestorer lies not just in being 'another image restoration model' but in filling a long-standing gap in the open-source community: an open-source restoration solution tailored for real-world, multi-degradation scenarios that balances restoration quality and content consistency while providing a complete benchmark. However, RealRestorer has some limitations. Its base model requires 28-step denoising inference, resulting in high computational costs, and it may still fail in scenarios like mirror selfies, extreme degradations, and complex physical consistency challenges.
