It's Time for Large Model Vendors to Bid Farewell to the Token Frenzy

04/13 2026

Source | Bohu Finance (bohuFN)

"Selling Tokens at low prices and opening up to third parties may seem friendly, but it's a trap."

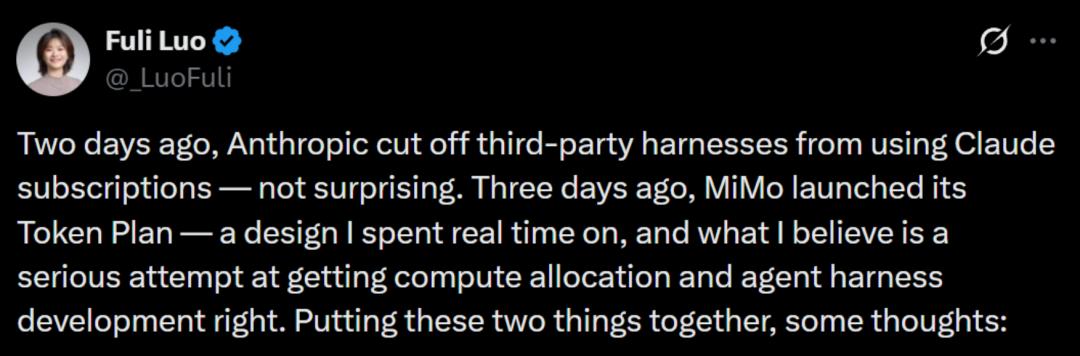

Recently, Luo Fuli, head of Xiaomi Group's MiMo, posted on platform X, likening the Token price war to a "trap" and cautioning large model companies against blindly participating in price wars.

A few days earlier, Anthropic suddenly announced that it would cut off third-party tools' access to Claude subscriptions, prompting Luo Fuli to discuss the logic behind Token pricing.

Amid the Token frenzy where everyone is "raising lobsters" (slang for running OpenClaw-style Agents), Luo Fuli's open letter and Anthropic's "ban" have become rare "dissenting voices" in the industry, throwing cold water on the excitement.

But the question remains: are large model vendors really unable to work out the costs? Or is this an industry-wide tacit game, in which rampant Token consumption buys a ticket to the future and a bet on AGI?

If so, who can wake up someone who is pretending to be asleep?

01 Anthropic Can't Hold On

A few days ago, Anthropic sent an email to all users announcing that starting at 3:00 PM local time on April 4, Claude Pro and Max subscriptions would no longer cover the use of third-party tools like OpenClaw.

To soften the sudden move, Anthropic offered users a one-time subsidy equal to one month's subscription fee. However, compared to the days when users could call Claude without limit for a $200 monthly fee, this subsidy was merely a drop in the bucket.

The news sparked an uproar on social media, with users lashing out and accusing Anthropic of "burning bridges," given the longstanding feud between OpenClaw founder Peter Steinberger and Anthropic.

When OpenClaw first launched as Clawdbot, its name was highly similar to Anthropic's Claude, leading to a cease-and-desist letter from Anthropic and the start of their rivalry.

More importantly, after OpenClaw validated the market demand for open-source agents, Anthropic promptly launched Claude Cowork, which was seen not only as a safety consideration but also as an attempt to replace OpenClaw with its own product.

However, these factors alone do not fully explain the "ban." The real reason Anthropic took such drastic action was cost.

Anthropic mentioned in its user letter that "third-party tools are putting excessive strain on the system, and we must prioritize the user experience of our core products."

Foreign media reported that Cursor, a high-profile unicorn, estimated last year that a $200 monthly Claude Code subscription could consume up to $2,000 worth of computing resources, which suggests that Anthropic has been heavily subsidizing usage. Other analysts have pointed out that the actual computing costs of Anthropic's subscription model could be as high as $5,000.
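To make the scale of the implied subsidy concrete, here is a minimal Python sketch that uses only the figures quoted above (a $200 subscription against $2,000 and $5,000 compute estimates). The function name `subsidy_multiple` is illustrative, and the cost figures are third-party estimates, not numbers disclosed by Anthropic.

```python
def subsidy_multiple(subscription_usd: float, compute_cost_usd: float) -> float:
    """Return how many times the compute cost exceeds the subscription price."""
    if subscription_usd <= 0:
        raise ValueError("subscription price must be positive")
    return compute_cost_usd / subscription_usd


SUBSCRIPTION_USD = 200  # monthly price quoted in the article

# Compute-cost estimates quoted in the article (third-party estimates).
for label, cost in [("Cursor estimate", 2_000), ("analyst upper bound", 5_000)]:
    multiple = subsidy_multiple(SUBSCRIPTION_USD, cost)
    shortfall = cost - SUBSCRIPTION_USD
    print(f"{label}: {multiple:.0f}x the subscription price, "
          f"${shortfall:,} not covered by the fee")
```

Under these estimates, a maximally heavy subscriber would consume 10 to 25 times what the subscription brings in, which is the gap the "ban" appears aimed at closing.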

This suggests that the subscription pricing model for large models may not be sustainable in the Agent era.

On one hand, under the Agent model, Token usage is expanding at a geometric rate.

When large models were limited to conversational interfaces, a single round of dialogue consumed about 1,000-3,000 Tokens. Platforms could run a subscription model by calculating an average usage level representative of most users.

However, in Agent scenarios, a single user might run 10 or even 100 Agents simultaneously, each operating 24/7 and triggering multiple model inferences per task. As interactions increase, Token consumption snowballs, disrupting the balance of the subscription model, which relies on subsidizing heavy users with light users.

For reference, an average ChatGPT user who chats every day might consume Tokens on the order of millions per month, while a heavy "lobster-raising" user could consume 30-100 million Tokens per day.
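The gap between the two usage profiles is easier to see on a common monthly basis. The sketch below is a rough illustration only: the 30-100 million daily figure comes from the text, while the 30-day month and the 3 million Tokens per month for a chat user are assumed placeholders.

```python
DAYS_PER_MONTH = 30  # assumption: a 30-day month

# Heavy Agent user: 30-100 million Tokens per day (figures from the article).
heavy_daily_low, heavy_daily_high = 30_000_000, 100_000_000
heavy_monthly_low = heavy_daily_low * DAYS_PER_MONTH
heavy_monthly_high = heavy_daily_high * DAYS_PER_MONTH

# Chat user: "millions of Tokens per month"; 3 million is an assumed
# placeholder, not a figure from the article.
chat_monthly = 3_000_000

print(f"heavy user: {heavy_monthly_low:,} to {heavy_monthly_high:,} Tokens/month")
print(f"ratio to chat user: {heavy_monthly_low // chat_monthly}x "
      f"to {heavy_monthly_high // chat_monthly}x")
```

With these assumptions, one heavy Agent user consumes as much as several hundred to a thousand chat users, which is why a flat fee calibrated to average chat usage stops balancing.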

On the other hand, the costs for large model companies have not decreased with the surge in usage but have instead risen.

Stanford University's "2025 AI Index Report" noted that driven by efficient small models, the inference cost of GPT-3.5-level models has dropped to 1/280th of its original cost over the past two years, with hardware costs falling 30% annually.

However, while inference costs have decreased, training costs remain staggering. More importantly, global computing power is still in short supply, and as more users flock to Agents, operational costs for companies continue to rise.

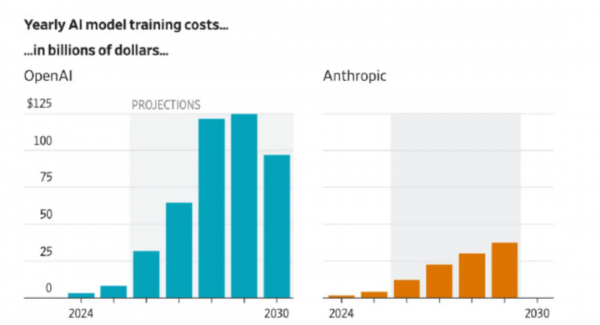

Take OpenAI as an example: it told investors that it expects computing power expenditures to reach $121 billion by 2028, with losses potentially hitting $85 billion, surpassing the loss records of existing public companies.

While Anthropic's training costs are not as high, at about 40% of OpenAI's, it is still burning money and naturally unwilling to let third-party tools freeload.

(Figure: Comparison of training costs between OpenAI and Anthropic)

02 Competing on Token Prices Is a Trap

If Anthropic can't hold on, how are Chinese large model companies faring?

Luo Fuli may sympathize most with Anthropic's struggles. She posted on social media that Claude Code is likely unprofitable or even operating at a loss, because its pricing logic only holds if users stay within Anthropic's own framework; once a third-party framework is plugged in, the economics break down.

Using OpenClaw as an example, she pointed out the problems with integrating third-party frameworks:

"I've observed OpenClaw's context management—it's terrible. For a single user query, it triggers multiple rounds of low-value tool calls, each carrying a long context as an independent API request, often exceeding 100,000 Tokens."

In simple terms, for the same task, OpenClaw runs several more request cycles than Claude Code's native framework, making the actual cost dozens of times higher than the subscription price. Even light users of OpenClaw end up with a cost structure comparable to that of heavy users.

Thus, selling Tokens at low prices and opening up to third parties may seem user-friendly but is actually a trap. To control costs, companies must either cut the computing power they allocate or switch to cheaper, less intelligent models; users, in turn, are left with a poor experience on those weaker models.
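The mechanism Luo Fuli describes, where every tool-call round re-sends a long context as an independent API request, can be sketched as a simple sum. This is a toy model, not a measurement of OpenClaw or Claude Code: only the 100,000-Token context size comes from the quote, while the round counts and per-round growth are hypothetical values chosen to show the shape of the effect.

```python
def total_input_tokens(rounds: int, base_context: int, growth_per_round: int = 0) -> int:
    """Sum the input Tokens billed when every round re-sends the whole context.

    Each round is modeled as an independent API request whose input is the
    base context plus whatever earlier rounds appended to it.
    """
    if rounds < 0:
        raise ValueError("rounds must be non-negative")
    return sum(base_context + i * growth_per_round for i in range(rounds))


BASE_CONTEXT = 100_000  # per-request context size cited by Luo Fuli

# Round counts and growth are hypothetical, chosen only for illustration.
lean = total_input_tokens(rounds=2, base_context=BASE_CONTEXT, growth_per_round=2_000)
chatty = total_input_tokens(rounds=12, base_context=BASE_CONTEXT, growth_per_round=2_000)

print(f"lean framework:   {lean:,} input Tokens")
print(f"chatty framework: {chatty:,} input Tokens ({chatty / lean:.1f}x)")
```

Because the full context is paid for on every round, the bill scales with the number of rounds rather than with the size of the user's question. The sketch ignores any caching discount a provider might apply.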

However, Luo Fuli's remarks are a "minority voice" in China's large model industry. For now, most major companies and large model vendors still view Token throughput as a key indicator of strength.

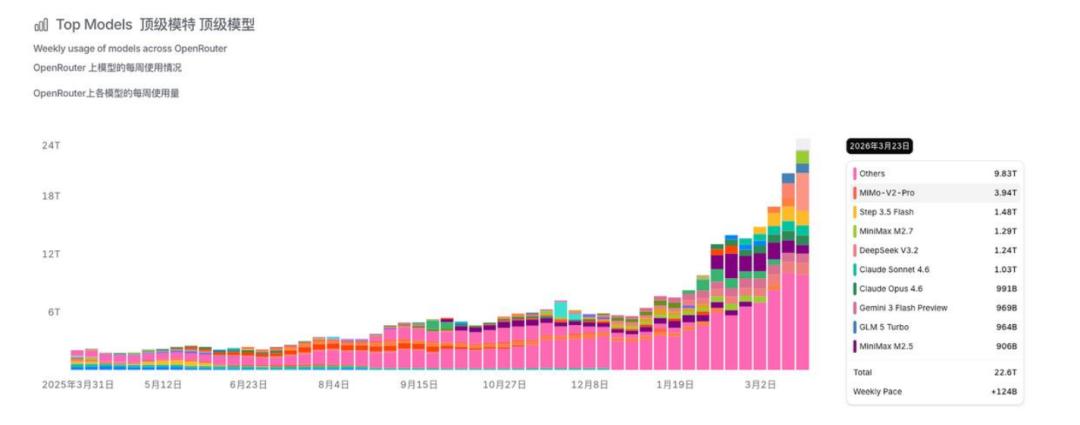

Data from OpenRouter, a global large model aggregation platform, shows that Chinese large models have surpassed overseas models in weekly call volume for a month straight, with models from Xiaomi, StepFun (JieYue XingChen), and MiniMax leading the rankings.

Global tech giants are also fueling the fire, encouraging employees to use AI tools extensively. Meta even ranks employees by Token consumption, making it an implicit KPI for tech companies.

Token costs stay high, then, not only because the underlying expenses persist but also because this is a war of attrition with no end in sight. As everyone races to consume more Tokens, computing power can never catch up with manufactured demand.

Moreover, whether or not Token consumption is a false prosperity, large model companies find it harder to resist the lure of real money: in just three months, Anthropic's annualized revenue soared from $9 billion to $30 billion.

Competing on Token prices may be a "trap," but with global large model vendors "chasing each other," none want to hit the brakes first.

For first-tier tech companies like Alibaba, ByteDance, and Tencent, the battle for AI super-entry points has long been underway, but they cannot escape the internet playbook of "burning money for traffic." Handing out red envelopes and increasing marketing spend can boost DAU, but once the cash incentives stop, users quickly churn.

"Lobsters" offer a new opportunity. Once users deploy their "intelligent assistants" on a cloud platform, it generates continuous Token consumption while locking personal data into the ecosystem, raising migration costs. Big companies are unlikely to overlook this new "ecosystem entry point."

For second-tier vendors like Kimi and Zhipu, the emergence of "lobsters" has driven up demand for computing power, put their models into heavy use, and supplied a narrative for API growth, motivating them to sell APIs even harder.

Logically, Luo Fuli is right about Tokens: "price competition" cannot last indefinitely. But large model vendors riding the "lobster" growth narrative may want to "pretend to sleep" a little longer.

03 Efficiency Matters More Than Price

No one can wake up someone who is pretending to be asleep, but reality might—the soaring Token consumption has not brought corresponding profit growth, an issue large model companies cannot avoid.

Take Zhipu, which fully benchmarks against Anthropic, as an example. In 2025, it delivered a "high-growth, high-loss" performance: total revenue of 724 million yuan, up 131.9% year-on-year; net loss of 4.718 billion yuan, up 59.5% year-on-year.

Zhipu founder Zhang Peng once said Zhipu aims to be a cost-effective alternative to Anthropic, even joking that if Anthropic sells for $200, they'll sell for 200 RMB. In March, Zhipu launched a one-click AutoClaw installation: the personal version costs 39 RMB/month for 35 million Tokens or 99 RMB/month for 100 million Tokens, a low barrier to entry.

But the bill behind it is hefty. In 2025, Zhipu's R&D spending reached 3.18 billion yuan, up 44.9% year-on-year. Without its own infrastructure, Zhipu must pay high procurement fees to third-party computing power suppliers; those fees soared from 14.63 million yuan in 2022 to 1.145 billion yuan in the first half of 2025.

Faced with two unavoidable rigid expenses—R&D investment and computing costs—domestic and foreign cloud vendors have begun adjusting prices for AI computing power and storage products in 2026. However, domestic models remain cheaper than overseas counterparts.

According to a December 2025 research report by Minyin Securities, the average price of domestic large model APIs is about 3.88 RMB/million Tokens, while overseas models cost around 20.46 RMB/million Tokens, more than five times higher.
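As a quick check on these numbers, the following sketch recomputes the overseas-to-domestic ratio and compares it with the effective per-million price of the AutoClaw personal plans quoted earlier. The helper `rmb_per_million` is illustrative. The plan figures assume the full monthly quota is consumed, and a subscription package is not directly comparable to an average API list price, so treat the percentages as indicative only.

```python
def rmb_per_million(price_rmb: float, tokens: int) -> float:
    """Effective price in RMB per one million Tokens."""
    if tokens <= 0:
        raise ValueError("tokens must be positive")
    return price_rmb / (tokens / 1_000_000)


# Average API prices from the securities report cited in the article.
DOMESTIC_AVG = 3.88
OVERSEAS_AVG = 20.46
print(f"overseas / domestic: {OVERSEAS_AVG / DOMESTIC_AVG:.2f}x")

# AutoClaw personal plans quoted earlier in the article (RMB/month, Tokens).
for price, tokens in [(39, 35_000_000), (99, 100_000_000)]:
    unit = rmb_per_million(price, tokens)
    print(f"{price} RMB plan: {unit:.2f} RMB per million Tokens "
          f"({unit / DOMESTIC_AVG:.0%} of the domestic API average)")
```

If the quota is fully used, both plans work out to roughly 1 RMB per million Tokens, well below even the domestic API average cited above.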

This price advantage has driven demand at scale, meaning domestic large model vendors are unlikely to abandon price wars for now. However, with Token consumption outpacing supply, tightening free quotas and subsidies is inevitable.

Luo Fuli mentioned that the way out for the large model industry is not cheaper Tokens but "more token-efficient Agent frameworks" combined with "more powerful and efficient models." The Agent era does not belong to those who burn the most computing power but to those who use it most wisely.

This will push large model vendors in two directions:

On one hand, shifting from "computing power scale" to "engineering efficiency" competition. Companies selling APIs alone will face diminishing returns and must deeply integrate model layers with intelligent hardware and application products to inject more possibilities into their business models.

On the other hand, promoting tiered Token pricing. Currently, mainstream large models are sold primarily through subscriptions, pay-as-you-go billing, and Token Plan packages (with additional pay-as-you-go charges once the quota is exceeded).
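The Token Plan structure, a flat fee for a quota plus pay-as-you-go beyond it, can be sketched as follows. This is a minimal illustration under stated assumptions: the `TokenPlan` class is hypothetical, the fee and quota mirror the 99 RMB for 100 million Tokens plan mentioned earlier in the article, and the overage rate of 2 RMB per million Tokens is an invented value, not any vendor's actual price.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPlan:
    monthly_fee_rmb: float          # flat fee for the package
    included_tokens: int            # Tokens covered by the fee
    overage_rmb_per_million: float  # pay-as-you-go rate beyond the quota

    def bill(self, used_tokens: int) -> float:
        """Return the monthly bill in RMB for the given usage."""
        if used_tokens < 0:
            raise ValueError("used_tokens must be non-negative")
        extra = max(0, used_tokens - self.included_tokens)
        return self.monthly_fee_rmb + extra / 1_000_000 * self.overage_rmb_per_million


# Fee and quota follow the plan quoted in the article; the overage rate
# is a hypothetical value chosen for illustration.
plan = TokenPlan(monthly_fee_rmb=99, included_tokens=100_000_000,
                 overage_rmb_per_million=2.0)

for used in (40_000_000, 100_000_000, 250_000_000):
    print(f"{used:>11,} Tokens -> {plan.bill(used):.2f} RMB")
```

Unlike a flat subscription, the bill here keeps rising with usage past the quota, so heavy Agent workloads pay for the computing power they consume instead of being subsidized by light users.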

In the long run, Token pricing could move beyond simple "volume-based tiers" to more refined systems based on inference capabilities, task quantity, and other dimensions. This would help platforms alleviate peak computing pressure and further increase revenue.

For example, DeepSeek quietly launched "Fast Mode" and "Expert Mode" options, seen as a new exploration of tiered pricing. Volcano Engine's Tan Dai mentioned the possibility of incubating vertical-domain agents that charge by the number of questions answered.

For now, the Token frenzy may continue for a while, but for the large model industry as a whole, Token costs have become an unavoidable factor for every company and user.

Ultimately, large models are not purely a technical business but a game of efficiency and value. To build a sustainable business, large model companies must learn to calculate costs—only by staying grounded can they better reach for the stars.
