Early Morning Drama: OpenAI CEO Altman's Residence Targeted in Firebomb Attack

04/13 2026

Text by / Lingdu

Source / Node Finance

The flames of anti-technology sentiment have finally reached the doorstep of Sam Altman, CEO of OpenAI.

On April 11, reports surfaced that at 3:45 AM local time on April 10, a Molotov cocktail was hurled at Altman’s San Francisco residence, igniting the exterior door. Fortunately, no injuries were reported.

OpenAI confirmed that there were no injuries and stated that all its San Francisco offices would operate normally on Friday, albeit with heightened police and security presence.

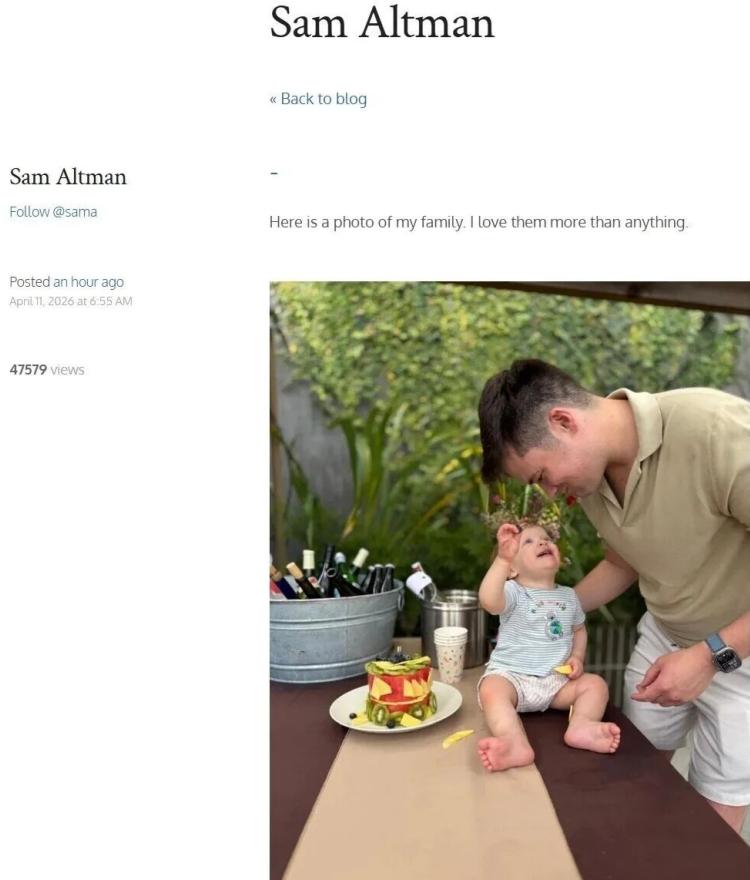

Following the incident, Altman took to social media with a lengthy post to reassure the public. Sharing photos of his children and partner, he conveyed a lingering sense of shock. He reflected on having underestimated the dangers of public opinion incitement, and on his own mistakes and pride during his tenure at OpenAI.

This violent act has cast a spotlight on the growing anti-AI sentiment.

A Shocking Early Morning: Molotov Cocktail Outside a Luxury Mansion

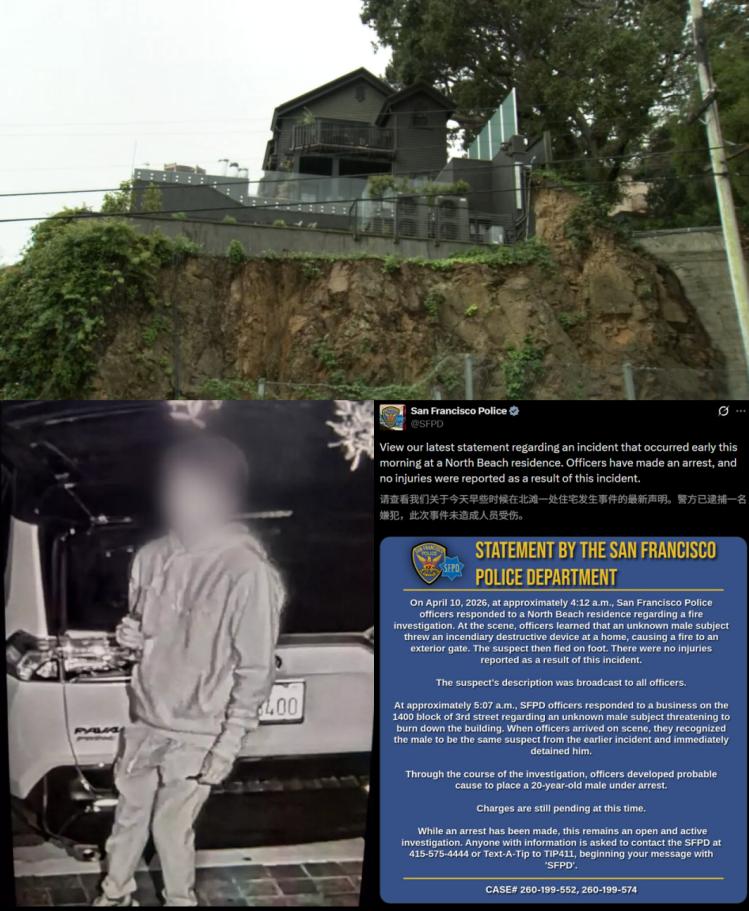

At 3:45 AM on April 10, the tranquility of a luxurious San Francisco neighborhood was shattered by a loud crash. A 20-year-old man had thrown a Molotov cocktail at the $27 million residence of OpenAI CEO Sam Altman.

By 4:12 AM, police had responded to the call and, upon investigation, found no injuries and the fire under control. However, the suspect had fled on foot, and his description was circulated among law enforcement.

Around 5:07 AM, police received another report of an unidentified man outside OpenAI's office building threatening to set it ablaze.

Officers quickly determined that the man matched the description of the suspect from Altman’s residence and detained him immediately.

The San Francisco Police Department issued a notice on X, stating that the investigation is ongoing and no formal charges have been filed yet.

Following the incident, Altman posted a lengthy message on the official blog to reassure the public. Surprisingly, the leader of the AI giant not only shared family photos but also conveyed a lingering sense of shock in his writing. He admitted, "I previously underestimated the dangers posed by public opinion incitement, as well as my mistakes and pride during my time at OpenAI."

On public sentiment toward AI, Altman stated that advancing AI is a moral responsibility, but that societal anxieties must be addressed. He wrote that AI will bring significant changes, that people's fears and anxieties about it are understandable, and that it will take a collective societal effort to address new threats and formulate policies that aid the economic transition.

From Node Finance's perspective, this attack is not merely an act of violence against an individual but also a manifestation of the growing "anti-AI" sentiment in society. Altman called for calmer rhetoric in his message and acknowledged, "People's fears about AI are reasonable. We have a responsibility to address societal anxieties, not just pursue technological leaps."

The Truth Behind Anti-AI Sentiment

This extreme act of violence is not an isolated public security incident. Altman seems to be facing not just Molotov cocktails but also the complex emotions stirred by artificial intelligence.

In March, the largest AI protest in U.S. history erupted, with 200 participants from Anthropic, OpenAI, and xAI shouting, "Stop the AI race!"

The protest's demands were clear: If major AI companies reach a consensus, they should commit to pausing the training and release of more powerful models. Today, the relationship between AI safety advocates and technology accelerationists has become extremely tense.

For many people living through the AI era, the greatest fear is that AI will replace human jobs.

From Node Finance's perspective, past technological revolutions replaced human physical labor, while AI challenges human intelligence and creativity. When programmers, artists, and analysts find that skills accumulated over decades can be replicated by AI in seconds, it creates immense job insecurity. People worry that their loan applications, job opportunities, and even legal rulings may be influenced by hidden algorithms beyond their control.

Altman specifically mentioned the dangers of "public opinion incitement" in his lengthy message to reassure the public.

In the social media era, radical narratives like "AI will exterminate humanity" and "AI will monitor the world" spread much faster than technical explanations. Once enough panic accumulates online, vulnerable or marginalized individuals in the real world can easily be incited to violence.

In the 19th century, workers in the Luddite movement smashed factory machines; today, a Molotov cocktail has been thrown at the home of AI figurehead Altman.

As Altman said, "Technology is not beneficial to everyone." For those left behind in this rapid era, technological progress is not a dividend but a hurricane destroying their way of life.

For a long time, Silicon Valley has adhered to the philosophy of "Move fast and break things," but in the AI field, this approach has run up a severe ethical debt. Societal anxieties have not been met with timely policies, and they have eventually erupted into violence.

Moreover, unequal wealth distribution has deepened the resentment behind such violence.

The Largest Single Financing in History: A '$122 Billion Crazy Gamble'

Just a week before the attack, OpenAI completed a private financing round without precedent in business history: $122 billion, with a valuation soaring to $852 billion.

This figure not only astonished Silicon Valley but also overshadowed the traditional IPO market. OpenAI CFO Sarah Friar bluntly stated, "This financing even makes the largest IPOs in history pale in comparison." She added that, amid multiple uncertainties in the public market, the financing would give the company enough operational flexibility to ensure steady progress in computing resource investment and AI research and development.

The capital landscape behind this "game of money and power" is staggering:

Amazon ($50 billion): The largest single commitment, roughly 41% of the total, but it comes with extremely stringent conditions: $35 billion is contingent on OpenAI completing an IPO or achieving AGI (Artificial General Intelligence) milestones.

NVIDIA and SoftBank ($30 billion each): Paid in installments, with the first $10 billion to arrive in July this year.

Top banking syndicate: Global giants like JPMorgan Chase and Goldman Sachs provided a $4.7 billion revolving credit facility.

Capital's will has pushed OpenAI onto an irreversible path. Whether Altman is ready or not, the company must rush toward the finish line of the capital market at breakneck speed.

However, another piece of news soon poured cold water on OpenAI's IPO prospects.

According to reports, Altman and Friar disagree on how quickly to pursue an IPO. At almost the same time, OpenAI's management is undergoing a major reshuffle: COO Brad Lightcap has been reassigned, App CEO Fidji Simo has taken sick leave due to a worsening neuroimmunological disease, and CMO Kate Rouch has left for cancer treatment. The changes affecting these three key executives were announced in the same batch of memos on April 4, timing sensitive enough to raise eyebrows.

Today, OpenAI faces internal and external challenges: internally, team turmoil; externally, intensifying competition.

On April 7, U.S. AI giant Anthropic (maker of the Claude models) announced an astonishing and counterintuitive figure: as of March, its annualized revenue had reached $30 billion.

This figure surpasses the $25 billion in annualized revenue that OpenAI disclosed at the end of February and confirmed during its new financing round in April, making Anthropic the world's highest-revenue large-model AI company. It also suggests that, as of April 2026, Anthropic's valuation may have surpassed OpenAI's.

Large AI models represent a super trend that spans generations. Whether OpenAI, at the forefront of this trend, can successfully go public remains uncertain. However, the question of how AI and humanity can coexist is one everyone wants to ask.

This Molotov cocktail is a dangerous signal. It reminds all AI practitioners that technological leadership cannot offset societal divisions.

From Node Finance's perspective, the Molotov cocktail at Sam Altman's doorstep is more like a metaphor for the times: when AI-driven wealth accumulation and technological power concentrate to an unsettling degree, conflict becomes inevitable.

Today, OpenAI stands at the intersection of valuation myths, power transitions, and societal anxieties. Is AI a tool to benefit all of humanity, or a bargaining chip for a few companies to control the future? This question weighs on the mind of every ordinary person uneasy about technological change.

On the journey toward AGI, OpenAI must not only solve problems of code and computing power but also find a way forward amid the frenzy of capital and societal turmoil.

*The featured image was generated by AI.