CPU in the AI Agent Era: A Return to the 'Golden Age'

04/21 2026

If AI were merely a chatbot that answered questions one by one, the computing power requirements would indeed be straightforward—the number of GPUs would essentially dictate the extent of AI's capabilities. During the era dominated by conversational models, the CPU served as a low-key coordinator, managing data flow rather than being the primary determinant of response speed.

However, as AI moves beyond the chatbox to invoke tools, read and write code, and orchestrate complex tasks, becoming a true 'digital agent,' the dynamics of computing power have shifted dramatically. These workloads are dominated by branching control flow, which GPUs, built to excel at matrix multiplication, handle poorly. Meanwhile, the CPU, once relegated to the background, now stands at the forefront of control flow and memory management.

01. CPU's Secondary Role in the Era of Conversational Large Models

In the early stages of AI development, the industry was primarily driven by a single principle: computing power determined the upper limit, and GPUs were the cornerstone of this power. Whether it was training models with hundreds of billions of parameters or performing real-time inference on large models, the core computations relied heavily on matrix multiplication—a domain where GPU architecture shines. Under this paradigm, the CPU played a supporting role, handling tasks such as data preprocessing, task scheduling, and result post-processing. Its performance did not seem to directly impact the user experience.

By 2026, however, the AI industry had undergone a pivotal shift. AI was no longer confined to being a 'question-answering chatbot'; it began to venture into the real world to 'execute tasks.' This transformation represented not only a leap in capabilities but also a fundamental restructuring of computing power demands. Large model training was once the primary consumer of AI computing power, but by the second half of 2025, AI inference spending had surpassed training spending, marking the so-called 'inference flip.' As the focus shifted from training to inference and large-scale deployment, the criteria for evaluating computing power evolved as well: the question was no longer who had the most powerful GPUs but whether the entire system could operate efficiently.

In the era of conversational models, processing a user request followed a relatively straightforward path: the CPU converted text into tokens, the GPU ran the model to generate a response, and the CPU converted the output tokens back into text. In this round trip, GPU computation dominated total latency, and the CPU's contribution to performance was relatively minor. When the workload shifted to agents, however, the situation changed dramatically. A typical agent task requires multi-step reasoning, API calls, database reads and writes, code execution, and document parsing, followed by orchestrating all intermediate results into a final output.
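
To make the contrast concrete, here is a minimal Python sketch of an agent loop under stated assumptions: the model call is replaced by a hypothetical scripted function (`fake_model`), and the two tools (`search_docs`, `run_code`) are hypothetical stubs, not any real framework's API. Apart from generating the next action, everything in the loop (JSON parsing, branching on the model's output, tool dispatch, error handling, bookkeeping) is ordinary CPU-side code, and a real agent repeats this round trip at every step.

```python
import json
from typing import Callable

# Hypothetical tool stubs. In a real agent these would call web APIs,
# databases, code sandboxes, or document parsers, all driven by CPU-side code.
def search_docs(query: str) -> str:
    return f"3 results for '{query}'"

def run_code(source: str) -> str:
    return f"executed {len(source)} bytes"

TOOLS: dict[str, Callable[[str], str]] = {
    "search_docs": search_docs,
    "run_code": run_code,
}

def fake_model(history: list[str]) -> str:
    """Stands in for the GPU-side model call with a hypothetical scripted policy.

    Returns a JSON action: either a tool call or a final answer.
    """
    step = len(history)
    if step == 0:
        return json.dumps({"tool": "search_docs", "arg": "KV cache offload"})
    if step == 1:
        return json.dumps({"tool": "run_code", "arg": "print('hello')"})
    return json.dumps({"final": f"done after {step} tool calls"})

def run_agent(max_steps: int = 8) -> tuple[str, list[str]]:
    history: list[str] = []
    for _ in range(max_steps):
        action = json.loads(fake_model(history))  # model proposes; CPU parses JSON
        if "final" in action:                      # CPU: branch on the model's output
            return action["final"], history
        tool = TOOLS.get(action["tool"])
        if tool is None:                           # CPU: error-handling path
            history.append(f"unknown tool: {action['tool']}")
            continue
        history.append(tool(action["arg"]))        # CPU: run the tool, record result
    return "step budget exhausted", history

answer, trace = run_agent()
print(answer)
print(trace)
```

Each extra tool call adds another model round trip plus another slice of CPU work, so in this sketch CPU time grows with the number of steps instead of staying a fixed overhead per request.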

On April 8, Dylan Patel, Chief Analyst at the renowned semiconductor analysis firm SemiAnalysis, pointed out in an in-depth interview that due to the evolution of AI workloads from simple text generation to complex 'agents' and 'reinforcement learning (RL),' CPUs are facing an extremely severe capacity shortage.

02. Agent Work Mechanisms Drive a Reassessment of CPU Value

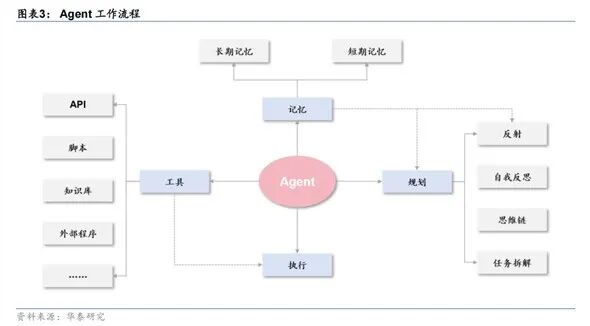

Why do agents rely so heavily on CPUs? The answer lies in the agent's work mechanism.

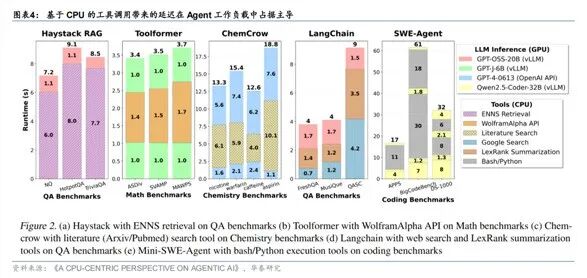

Conversational inference involves little branching: one request is essentially one pass of generation. The action phase of an agent, by contrast, is full of if/else decisions and system calls. Mainstream agent architectures, such as Manus, allocate an isolated cloud sandbox virtual machine to each task, so tasks run in parallel but follow completely different control flows: some browse the web, some modify code, and others deploy environments. GPUs execute threads in lockstep groups, so when threads in the same group take different branches, the hardware runs the paths one after another while the remaining threads sit idle. Executing such branch-heavy work on GPUs would therefore cause computing power utilization to drop sharply. Branch prediction and handling, on the other hand, have been core optimization areas of CPU microarchitecture for decades. This is precisely what Dongwu Securities calls the 'CPU-ization of execution control flow.'
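
The cost of divergence can be illustrated with a deliberately simplified SIMT model. This Python sketch assumes a 32-lane warp (the warp size NVIDIA GPUs use), that each distinct branch path is executed serially with non-participating lanes masked off, and that each path takes a fixed, hypothetical number of cycles; real hardware adds reconvergence and scheduling details the sketch ignores. The action names are hypothetical stand-ins for the agent activities described above.

```python
from collections import Counter

WARP_SIZE = 32  # threads per warp on NVIDIA GPUs

def warp_utilization(branch_per_lane: list[str],
                     cycles_per_path: dict[str, int]) -> float:
    """Toy SIMT model: the warp runs each distinct branch path one after
    another, masking off lanes that did not take it. Returns the fraction
    of lane-cycles spent on useful work."""
    if len(branch_per_lane) != WARP_SIZE:
        raise ValueError("expected one branch label per lane")
    lanes_on_path = Counter(branch_per_lane)
    # Divergent paths are serialized, so the warp pays for every path taken.
    total_cycles = sum(cycles_per_path[path] for path in lanes_on_path)
    useful = sum(n * cycles_per_path[path] for path, n in lanes_on_path.items())
    return useful / (WARP_SIZE * total_cycles)

# Uniform work (e.g., one matrix-multiply path): every lane is busy.
print(warp_utilization(["matmul"] * 32, {"matmul": 100}))
# Four hypothetical agent actions spread evenly across the warp.
actions = ["browse", "edit", "deploy", "test"] * 8
print(warp_utilization(actions, {a: 100 for a in set(actions)}))
```

With every lane on one path, utilization is 1.0; with four equally long paths split evenly across the warp, it falls to 0.25, because each lane is idle for three of the four serialized paths.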

Meanwhile, the agent's memory system is also changing. In long-context scenarios, large model inference generates a massive KV Cache whose footprint grows linearly with context length, and therefore with the number of conversation turns, quickly exhausting the GPU's scarce HBM capacity. A widely adopted industry solution is to move part of the KV Cache into CPU memory: with KV Cache Offload techniques, paired with large-capacity DDR5/LPDDR5 memory and CXL expansion, CPU-attached memory becomes a cost-effective container for the KV Cache that balances throughput, scalability, and cost. Dongwu Securities summarizes this as the 'de-GPU-ization of the memory system,' meaning the CPU's role has expanded from a scheduling hub to a core resource pool that handles both control and part of the storage.
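
The linear growth follows from the standard KV Cache size estimate: every layer stores one key and one value vector per KV head for every token in context. The Python sketch below computes that estimate and pairs it with a toy two-tier offload policy. The model dimensions in the example, the `TwoTierKVCache` class, and its least-recently-used eviction rule are illustrative assumptions, not how any particular inference engine implements offload.

```python
from collections import OrderedDict

def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   tokens: int, bytes_per_elem: int = 2) -> int:
    """Standard estimate: one K and one V vector per KV head, per layer, per token."""
    return 2 * layers * kv_heads * head_dim * tokens * bytes_per_elem

class TwoTierKVCache:
    """Hypothetical offload policy: recently used sessions keep their KV Cache
    in a small GPU (HBM) tier; least-recently-used sessions are moved to a
    larger CPU (DRAM) tier instead of being discarded and recomputed."""

    def __init__(self, gpu_capacity_bytes: int) -> None:
        self.gpu_capacity = gpu_capacity_bytes
        self.gpu: "OrderedDict[str, int]" = OrderedDict()  # session -> bytes, LRU first
        self.cpu: dict[str, int] = {}                      # offloaded sessions

    def gpu_used(self) -> int:
        return sum(self.gpu.values())

    def touch(self, session: str, size_bytes: int) -> None:
        """Mark a session as active, reloading its KV Cache from CPU if offloaded."""
        self.cpu.pop(session, None)
        self.gpu[session] = size_bytes
        self.gpu.move_to_end(session)  # most recently used goes last
        # Offload LRU sessions until the GPU tier fits (always keep the active one).
        while self.gpu_used() > self.gpu_capacity and len(self.gpu) > 1:
            victim, victim_size = self.gpu.popitem(last=False)
            self.cpu[victim] = victim_size

per_token = kv_cache_bytes(layers=32, kv_heads=8, head_dim=128, tokens=1)
full_context = kv_cache_bytes(layers=32, kv_heads=8, head_dim=128, tokens=131_072)
print(per_token // 1024, "KiB per token;", full_context // 2**30, "GiB per context")
```

For this hypothetical configuration (32 layers, 8 KV heads, head dimension 128, FP16 values) the estimate is 128 KiB per token, or 16 GiB for a single 131,072-token context, which shows how a handful of long agent sessions can crowd out HBM and why a larger, cheaper memory tier is attractive.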

Notably, agent workloads challenge CPUs not only qualitatively but also quantitatively, with unprecedented pressure. Agent-based AI deployments consume 20 to 30 times more tokens than standard generative AI, because each user interaction involves multi-step reasoning, tool invocation, and cross-agent coordination rather than a single Q&A exchange. Gartner even predicts that over 40% of agentic AI projects will be canceled by the end of 2027, with escalating costs among the main reasons. Those costs stem not only from GPU inference but also, to a significant degree, from ongoing CPU expenses.

03. Overseas Tech Giants Launch a 'Core Count Race,' Industry Poised for High Growth

At this critical juncture of surging CPU demand and constrained capacity, the moves of industry giants often signal where the market is heading.

In early 2026, NVIDIA made two seemingly unexpected moves: first, it invested an additional $2 billion to subscribe to CoreWeave stock and deployed the Vera CPU, designed specifically for agent-based inference, on its platform; second, it significantly increased the CPU core count in its next-generation Rubin architecture and opened NVL72 racks to support x86 CPUs.

Meanwhile, traditional CPU vendors are collectively racing toward ultra-high core counts driven by agent workloads. AMD's EPYC 'Turin' offers up to 192 cores, and Intel's Xeon 6 'Sierra Forest' uses a pure efficiency-core design with 144 or even 288 cores. Ultra-multi-core CPUs support large-scale, long-running agent execution environments with higher parallelism and lower power consumption per unit of work. As agent commercialization progresses, vendors must keep reducing the execution cost per task; under this goal, more cores mean lower unit costs, and the core count race may have only just begun.
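
The 'more cores, lower unit cost' argument is essentially fixed-cost amortization. A minimal Python sketch, assuming a hypothetical server whose hourly cost is a fixed platform share plus a per-core share, and whose agent task throughput scales linearly with core count (an optimistic assumption that ignores memory-bandwidth and I/O limits). All parameter values are made up for illustration.

```python
def cost_per_task(cores: int,
                  platform_cost_per_hour: float,
                  cost_per_core_hour: float,
                  tasks_per_core_hour: float) -> float:
    """Hypothetical model: server cost = fixed platform share + per-core share;
    agent throughput assumed to scale linearly with cores (ignores memory
    bandwidth, I/O, and licensing effects)."""
    hourly_cost = platform_cost_per_hour + cost_per_core_hour * cores
    tasks_per_hour = tasks_per_core_hour * cores
    return hourly_cost / tasks_per_hour

# Illustrative, made-up parameters; only the trend matters.
for n in (64, 192, 288):
    c = cost_per_task(n, platform_cost_per_hour=2.0,
                      cost_per_core_hour=0.01, tasks_per_core_hour=10)
    print(n, f"{c:.5f}")
```

Algebraically the cost per task is F/(t·n) + k/t, where F is the fixed platform cost, k the per-core cost, t the per-core throughput, and n the core count. The fixed term shrinks as n grows while k/t sets a floor, so in this model the savings taper off once fixed costs are well amortized.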

From an investment perspective, IDC projects that the number of tasks executed by agents annually will surge from 44 billion in 2025 to 415 trillion in 2030, corresponding to a compound annual growth rate (CAGR) of 524%. The development of Agentic AI is driving CPUs toward a new growth opportunity.
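
The quoted growth rate can be checked from the two endpoints, since CAGR = (end / start)^(1 / years) - 1 over the five years from 2025 to 2030:

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate between two endpoint values."""
    return (end / start) ** (1 / years) - 1

tasks_2025 = 44e9    # 44 billion agent tasks (IDC figure quoted in the text)
tasks_2030 = 415e12  # 415 trillion agent tasks
rate = cagr(tasks_2025, tasks_2030, years=2030 - 2025)
print(f"CAGR: {rate:.1%}")
```

This comes out at roughly 523.6%, consistent with the rounded 524% figure; equivalently, the annual task count would have to grow about 6.2-fold every year for five years.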

Among related A-share companies, Dongwu Securities' research report highlights the following. CPUs: Montage Technology, Hygon Information, Guanghe Technologies, Loongson Technology, and China Great Wall. Databases: StarRing Technology (optimized for ARM and compatible with NVIDIA's GPU and Grace CPU platform).

04. Conclusion: CPU Poised for a 'Golden Age' Resurgence

The shift in the computing power landscape, from GPU-centricism in conversational models to the CPU value resurgence in the agent era, reflects the profound evolution of AI application forms. When inference spending surpasses training and agents consume many times more tokens than single Q&A exchanges, the infrastructure efficiency question is no longer just who has the most powerful GPUs but whether the entire system can operate at sustainable cost. With its architectural strengths in branch prediction, memory expansion, and concurrency control, the CPU has moved from a mere scheduling hub to a core resource pool handling control logic and the memory system.

The core count race among overseas tech giants is just an outward sign of this transformation, and it points in a clear direction: for large-scale, long-running agent-based AI workloads, ultra-multi-core CPUs are becoming the key balance point for cost and energy efficiency. As agent commercialization deepens, the criteria for evaluating computing systems are likely to be partially rewritten. The balance of heterogeneous computing will no longer tilt solely toward GPUs, and CPUs will play a more active role in defining the shape and boundaries of next-generation AI infrastructure.

- End -