Wall-Facing Intelligence: An ‘Alternative’ Survival Strategy for Large Models

04/21 2026

Author | Hao Xin

Editor | Wu Xianzhi

Wall-Facing Intelligence stands out as an anomaly in China’s large model sector.

At a time when the Scaling Law (the notion that expanding parameters, data, and computing power leads to stronger model capabilities) was widely accepted both in China and abroad, Wall-Facing Intelligence took a different path. It prioritized ‘knowledge density’, meaning greater capability from fewer parameters, and the ‘density rule’, meaning steadily raising a model’s capability density under constrained computing resources.

One approach relies on brute force to achieve breakthroughs, while the other achieves remarkable results with minimal resources. From the outset, this set Wall-Facing Intelligence apart from the mainstream ‘AI Six Little Dragons.’

Interestingly, the trajectories of Wall-Facing Intelligence and the ‘AI Six Little Dragons’ ended up forming a large ‘X’, with the lines crossing during the industry’s shakeout phase. Early leaders Lingyi Wanwu and Baichuan Intelligence showed clear signs of falling behind, Zhipu and MiniMax navigated the challenges successfully, and Yuezhi Anmian and Jieyue Xingchen followed close behind.

Throughout this process, Wall-Facing Intelligence could be described as ‘surviving in the margins’ and ‘swimming against the tide.’ With neither the spotlight nor substantial funding, it focused on the edge side (on-device models), successfully avoiding the fierce competition over computing power, pricing, and consumer traffic.

This strategy of conserving resources not only kept Wall-Facing Intelligence afloat but also transformed it into a significant player.

From April 2023 to the present, over approximately three years, Wall-Facing Intelligence has completed seven rounds of financing, averaging nearly one every six months—a pace faster than most contemporary startups.

With its latest funding round in April this year, Wall-Facing Intelligence officially became a large-model unicorn, with a valuation at the US$1 billion level, placing it at the forefront of the second tier of China’s large-model companies.

“Misaligned” Market Expectations

In 2023, the concept of the ‘Agent’ was still unfamiliar to most people, who knew it mainly through academic papers. That year, the most discussed Agent topic was the ‘Stanford Town’ experiment.

How well did the industry understand Agents at the time? One example says it all: entrepreneurs who returned to China to present their Agent startups at small forums had to spend at least an hour explaining the concept, because it was too abstract for their audiences.

Against this backdrop, at the end of 2023, Wall-Facing Intelligence released, all at once, the AI agent application framework ‘XAgent’, the universal agent platform ‘AgentVerse’, the multi-agent collaborative development framework ‘ChatDev’, and an AI software development product.

In closed-door discussions with the media, Wall-Facing Intelligence had to explain Agents using more familiar terms like ‘Copilot’ and ‘SaaS.’ Looking back, it’s clear that Wall-Facing Intelligence already had the vision for Vibe Coding at that time.

A representative from Wall-Facing Intelligence told Photon Planet, ‘We believe AI Agents are a crucial pathway for large models to be implemented in real-world scenarios. The future will be an Agent-dominated world, where everything operates as an Agent.’

A rice cooker can function as an Agent—once ingredients are placed inside, it automatically heats up according to the logic of making porridge. Similarly, a refrigerator can act as an Agent—if there’s a coolant failure, it alerts you through dialogue and schedules a repair technician upon confirmation.

The rice cooker and refrigerator analogy isn’t about making appliances ‘talk’ but about enabling them to perceive, decide, and execute autonomously.

This also laid the foundation for the later focus on edge-side models, because ‘everything as an Agent’ and ‘edge-side hardware’ depend on each other. Without an edge-side model as the foundation, an Agent’s capabilities would remain theoretical, unable to act in the physical world. Conversely, without the Agent’s intelligence, an edge-side model would be merely a smaller model file with no clear application.

The vision was ambitious, but reality was harsh. Back in 2023, Baidu’s Wenxin, Alibaba’s Tongyi, and Tencent’s Hunyuan large models had only just been released, and Yuezhi Anmian’s Kimi assistant did not begin internal testing until the second half of the year.

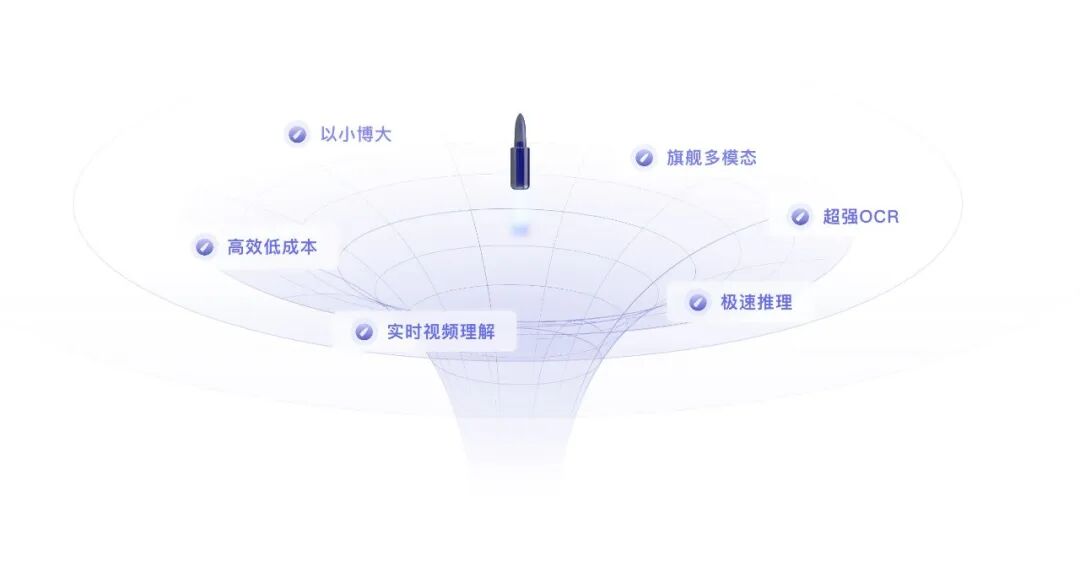

In early 2024, Wall-Facing Intelligence shifted its focus to the edge side, starting with the compact yet powerful MiniCPM. As CEO Li Dahai put it, ‘achieving remarkable results with minimal resources’ and ‘building strength through efficiency’ are Wall-Facing Intelligence’s core capabilities. The essence is to get the most performance out of the fewest resources and the smallest scale.

To date, the MiniCPM family has been updated to the 3.0 series, with parameter counts consistently kept below 10 billion, and has gradually expanded from general-purpose language models to multimodal, omni-modal, and specialized models for vertical scenarios.

Large and small models are relative concepts. If the battleground of the ‘AI Six Little Dragons’ is large models, then Wall-Facing Intelligence’s primary focus has always been ‘small models.’

Some AI entrepreneurs argue that, compared with models with massive parameter counts, small-to-medium models with billions to tens of billions of parameters have more practical value: ‘Open-source models with hundreds of billions of parameters benefit small and medium-sized enterprises, while models with tens of billions of parameters are accessible to nearly all developers.’ Yet small-to-medium models remain a ‘missing piece’ in China’s model ecosystem.

Both have their strengths. In productivity or creative scenarios requiring complex knowledge reasoning, long document analysis, or professional content generation, the cloud models of the ‘AI Six Little Dragons’ are indispensable. However, in scenarios demanding real-time interaction, privacy protection, and stability—such as in cars, phones, and IoT devices—Wall-Facing Intelligence’s edge-side models have the advantage.

Together, they form the complete AI industry ecosystem of ‘cloud-trained brain, edge-executed cerebellum.’

Avoiding the Frontline Battlefield

The entry of tech giants has accelerated the shakeout in the large model industry. The ‘AI Six Little Dragons’ were pushed into a fierce war of attrition.

Wall-Facing Intelligence not only avoided being swept away by this wave but also saw its value highlighted as the wave crashed over others.

Avoiding models with hundreds of billions or trillions of parameters is equivalent to voluntarily stepping away from the computing power arms race. Its models run on customers’ phones, cars, and terminal chips, bypassing cloud API billing entirely and thus avoiding the price war vortex. Not pursuing super apps dependent on daily active users, monthly active users, or retention rates means avoiding expensive marketing costs and the traffic competition with internet giants.

Edge-side model vendors like Wall-Facing Intelligence directly address enterprises’ data-privacy concerns, and this has become one of their core competitive advantages. Enterprises, especially large state-owned ones, cannot feed critical and sensitive data directly into cloud-hosted large models.

Edge-side models also outperform general-purpose large models in scenarios that demand immediate feedback. Take embodied intelligence: when a humanoid robot tries to step over an obstacle, it must instantly judge the obstacle’s height and hardness and choose an appropriate posture. Network latency rules out calling a model on a public cloud here; instead, the robot relies on a built-in, distilled small model running locally for a timely response.

The battleground of the ‘AI Six Little Dragons’ is computing-intensive—whoever has more GPUs or larger parameters holds the advantage. This is the domain of internet giants and well-funded players.

Wall-Facing Intelligence’s logic, however, is that the edge-side battleground is energy-efficiency-intensive—whoever can achieve near-cloud-model performance under extreme constraints of 1 billion parameters, 50 milliseconds of latency, and 100 milliwatts of power consumption will lead. This is a technical niche overlooked by giants.
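To make these constraints concrete, here is a back-of-envelope calculation in Python. It rests on two assumptions of our own, not the article’s: that the 100 mW budget is drawn for the whole 50 ms latency window, and that memory is counted as raw weight storage only, ignoring activations, caches, and runtime overhead.

```python
# Back-of-envelope sketch of the edge-side budget quoted in the article.
# Assumptions (illustrative, not from the article): the 100 mW power budget is
# drawn for the full 50 ms latency window, and memory counts weights only.

PARAMS = 1_000_000_000   # 1 billion parameters
LATENCY_S = 0.050        # 50 milliseconds
POWER_W = 0.100          # 100 milliwatts

# Bytes needed to store one weight at common numeric precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def energy_per_response_mj(power_w: float, latency_s: float) -> float:
    """Energy in millijoules if power_w watts are drawn for latency_s seconds."""
    return power_w * latency_s * 1000.0


def weight_memory_gb(params: int, bytes_per_param: float) -> float:
    """Raw weight storage in gigabytes (10**9 bytes)."""
    return params * bytes_per_param / 1e9


if __name__ == "__main__":
    print(f"energy per response: {energy_per_response_mj(POWER_W, LATENCY_S):.1f} mJ")
    for name, nbytes in BYTES_PER_PARAM.items():
        print(f"{name}: {weight_memory_gb(PARAMS, nbytes):.1f} GB of weights")
```

Under these assumptions a single response costs about 5 mJ, and the weights of a 1-billion-parameter model occupy roughly 2 GB at fp16 and 0.5 GB at 4-bit precision.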

After selecting its battleground, Wall-Facing Intelligence began doing the ‘grunt work’ that giants avoid.

Industry insiders told us, ‘When chip and phone giants develop their own edge-side models, most only optimize for their own products, making them vertically closed.’

As a neutral third party, Wall-Facing Intelligence can provide unified model interfaces and optimal adaptations for all mainstream platforms. For automakers and Tier 1 suppliers, this means ‘single integration, multi-platform deployment,’ significantly reducing development costs.

The core of this step is building compatibility barriers through engineering effort. Wall-Facing’s competitive edge isn’t a single clever algorithm but tens of thousands of lines of adaptation code and a deep understanding of dozens of chip architectures. Such barriers are something giants won’t build and small companies can’t afford to build.
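‘Single integration, multi-platform deployment’ is, in software terms, an adapter layer: client code targets one interface, and per-chip backends hide the platform differences. The Python below is a minimal sketch of that pattern only. The class names, the platform keys `chip-a` and `chip-b`, and the `generate` method are hypothetical and are not Wall-Facing Intelligence’s actual SDK; the backends return placeholder strings instead of running a model.

```python
# Minimal adapter-pattern sketch of "single integration, multi-platform deployment".
# All names are hypothetical; real backends would call vendor-specific runtimes.
from abc import ABC, abstractmethod


class EdgeBackend(ABC):
    """One adapter per chip platform; each hides its own runtime details."""

    @abstractmethod
    def generate(self, prompt: str) -> str: ...


class ChipABackend(EdgeBackend):
    def generate(self, prompt: str) -> str:
        # Placeholder for platform-specific inference (kernels, quantization, etc.).
        return f"[chip-a] {prompt}"


class ChipBBackend(EdgeBackend):
    def generate(self, prompt: str) -> str:
        return f"[chip-b] {prompt}"


# Registry mapping a platform key to its adapter class.
BACKENDS: dict[str, type[EdgeBackend]] = {
    "chip-a": ChipABackend,
    "chip-b": ChipBBackend,
}


class UnifiedModel:
    """The single interface a client (e.g. an automaker) integrates once."""

    def __init__(self, platform: str) -> None:
        if platform not in BACKENDS:
            raise ValueError(f"unsupported platform: {platform}")
        self._backend = BACKENDS[platform]()

    def generate(self, prompt: str) -> str:
        return self._backend.generate(prompt)


if __name__ == "__main__":
    # Same application code, different target hardware.
    for platform in ("chip-a", "chip-b"):
        print(UnifiedModel(platform).generate("turn on seat heating"))
```

Supporting a new chip means writing and registering one more adapter while client code stays unchanged; multiplied across dozens of chip architectures, that adapter work is the kind of engineering effort the article calls a compatibility barrier.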

From its inception, Wall-Facing Intelligence targeted enterprise (B-end) clients, expanding from early work in finance and marketing to automotive, embodied intelligence, education, and more.

Notably, many of Wall-Facing Intelligence’s clients are second-tier players squeezed by the giants but unwilling to depend on them entirely. They include Loongson, which counters the ARM and Intel ecosystems with an independent AI software stack; Porsche, which wants to avoid being locked into the smart-cockpit ecosystems of Huawei, Xiaomi, and others; and China Telecom, which does not want to rely entirely on Alibaba and other giants for AI cloud services.

All of these clients appear among Wall-Facing Intelligence’s investors in its rounds from Series B through Series C. By tying equity to business, Wall-Facing Intelligence aims to become an integral component of its clients’ hardware products.

This means that as long as cars and phones equipped with Wall-Facing’s models keep shipping, the company earns continuous revenue. It is like NVIDIA’s graphics drivers: users don’t notice them, but they are indispensable.

Additionally, Wall-Facing Intelligence is expanding into the Xinchuang (IT application innovation) sector. In fields such as the broader judicial system, government affairs, and finance, keeping data on local devices is a legal requirement, and Wall-Facing Intelligence’s edge-side private deployment capabilities make it an eligible option. This is not a technical competition but a compliance-driven market.

Waiting for the Wind to Come

According to the theory of innovation diffusion, there is a classic time lag between a technology’s lab phase and its industrialization: ‘concept first, conditions later.’

Wall-Facing Intelligence’s stance on Agents in 2023 exemplifies this view. That year, Agents were more of a forward-looking technological manifesto for Wall-Facing Intelligence, showing the destination but lacking the road and the vehicle to get there.

The soil for Agents in 2023 was inherently poor. At the time, the strongest open-source model was LLaMA 2, and the strongest closed-source models were GPT-3.5 and the initial version of GPT-4. Impressive as they were at text generation, these models were highly unreliable at the four core Agent capabilities: logical planning, task decomposition, tool invocation, and long-term memory. Agents were ‘toys,’ not ‘tools.’

Back then, most enterprises were still working out what large models even were. Wall-Facing Intelligence’s proposals of ‘one-sentence software development’ and ‘multi-agent collaboration’ presupposed idealized organizations that were highly digitized and had standardized processes. Most enterprises’ digital foundations at the time could not even support Agents carrying out operations, so Agents became castles in the air.

Today, Wall-Facing Intelligence is drawing attention because it voluntarily stopped pitching Agents as a standalone selling point and instead condensed Agent capabilities into the foundation of its edge-side models.

In early 2026, OpenClaw, nicknamed ‘Lobster,’ went viral worldwide and became the catalyst Wall-Facing Intelligence had been waiting for.

OpenClaw gave the market its first intuitive experience of AI actually carrying out tasks hands-on. However, users quickly ran into three pain points of cloud-based Agents: privacy exposure, network dependency, and high costs.

The market naturally began asking whether there was an Agent that could run offline, process data locally, and avoid per-use charges. The textbook answer points straight to the edge-side Agent solution that Wall-Facing Intelligence has spent three years developing.

Industry experts have pointed out that the future direction is edge-cloud collaboration.

The cloud-based brain, with its strong global planning and complex reasoning capabilities, handles intent understanding, strategic decomposition of long-horizon tasks, and verification of final results. The edge side acts as the hands and feet: with low latency, strong privacy, and low cost, it executes the specific, short-cycle subtasks produced by that decomposition on devices such as phones, PCs, and cars.
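The division of labor described here can be sketched as a small pipeline: a cloud planner breaks a goal into short subtasks, an on-device executor runs them locally, and the cloud checks the combined result. The Python below is a hypothetical illustration only; `cloud_plan`, `edge_execute`, and `cloud_verify` are stand-in functions with canned logic, not real model calls or any vendor’s API.

```python
# Hypothetical sketch of edge-cloud collaboration: the cloud plans and verifies,
# the edge executes short subtasks locally. All functions are illustrative stubs.
from dataclasses import dataclass


@dataclass
class Subtask:
    action: str
    target: str


def cloud_plan(goal: str) -> list[Subtask]:
    """Stand-in for a cloud model understanding intent and decomposing a goal."""
    # A real planner would call a large model; here the plan is hard-coded.
    if goal == "prepare commute":
        return [
            Subtask("read", "calendar"),
            Subtask("set", "navigation"),
            Subtask("set", "cabin temperature"),
        ]
    return []


def edge_execute(task: Subtask) -> str:
    """Stand-in for an on-device model acting on local data, with no network call."""
    return f"done: {task.action} {task.target}"


def cloud_verify(results: list[str]) -> bool:
    """Stand-in for cloud-side verification: every subtask must report success."""
    return bool(results) and all(r.startswith("done:") for r in results)


def run(goal: str) -> tuple[list[str], bool]:
    plan = cloud_plan(goal)                     # cloud: intent + decomposition
    results = [edge_execute(t) for t in plan]   # edge: low-latency, private execution
    return results, cloud_verify(results)       # cloud: final check


if __name__ == "__main__":
    results, ok = run("prepare commute")
    print(results, ok)
```

In a deployment along these lines, the privacy benefit would come from `edge_execute` reading data such as the calendar on the device itself, so that only the plan and a verification summary need to cross the network.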

Even so, Wall-Facing Intelligence still faces a valuation challenge. Its absolute valuation is not low, but it trails the top few of the Six Little Dragons by a clear margin.

Large models and edge-side models offer different growth narratives, but the core question for Wall-Facing Intelligence ultimately comes down to this: does hardware define software, or does software define hardware?

Wall-Facing Intelligence’s edge-side models cannot escape the influence of vendors such as Qualcomm, Huawei, and Apple, all of which have in-house edge-side AI capabilities and control the underlying chip architectures. The main concern is whether Wall-Facing Intelligence will go the way of third-party input methods, which were ultimately replaced or pushed to the margins by phone makers’ own solutions.

This risk of being hemmed in weighs on its long-term valuation multiples.

To dispel this market skepticism, Wall-Facing Intelligence must keep demonstrating that its algorithms stay at least one generation ahead of chip vendors’ in-house teams.