Kimi K2.6: Large Models Enter the Era of 'Long-Range Execution'

04/22 2026

On April 20th, the rumored release of DeepSeek V4 once again failed to materialize, disappointing AI enthusiasts.

But as midnight approached and the moon shone brightly, Moonshot AI quietly delivered a surprise:

The brand-new Kimi K2.6 model was officially released and immediately made open-source.

Over the past year, the domestic AI model landscape has been fiercely competitive, with new developments reshaping people's perceptions almost daily.

The Chinese internet's discussion of large models has also gone through three competitive cycles:

The first round focused on stacking parameters, the second on context length, and the third on the much-anticipated price war.

However, the birth of Kimi K2.6 signifies that Moonshot AI has taken the lead in entering the deep end of the second half of large model competition—long-range reasoning and execution.

While it may seem unromantic to pour cold water on this joyous occasion, we must still confront an objective divide.

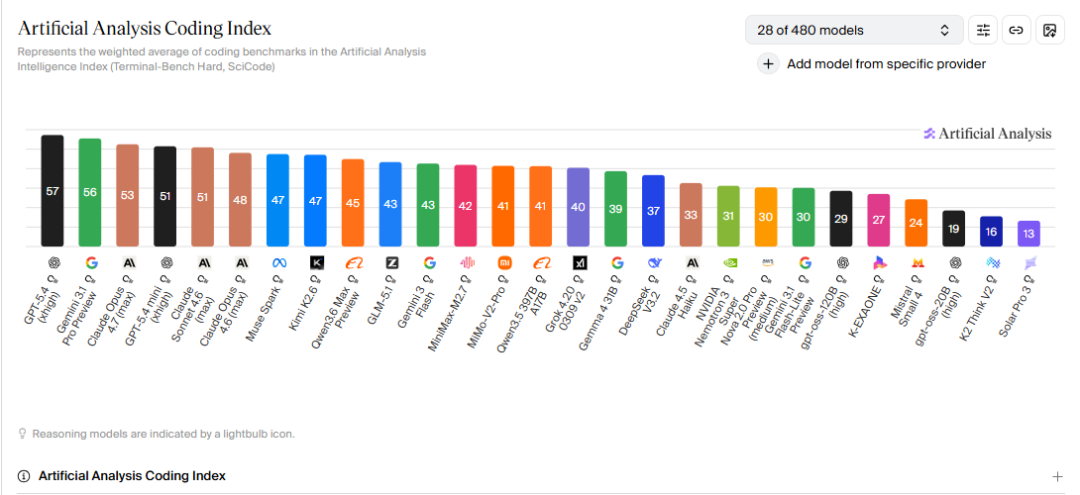

Without considering multimodality, a clear generational gap has emerged between domestic and international AI models.

Anthropic, which just released Claude Opus 4.7, and OpenAI, which updated Codex, have taken a commanding lead in programming and other highly logical fields, making their products the top choice for developers with access and sufficient budgets.

New products from domestic AI companies are essentially chasing the previous generation's flagship models from these two companies, competing to become the 'domestic alternative' for other developers.

This alternative strategy is not passive defense but rather an attempt to take root in China's AI landscape through relentless execution and localized adaptation, given the clear performance divide.

If Kimi won user mindshare in the first half with long texts and massive parameters, the emergence of K2.6 declares a strategic shift: from an information container to an execution engine.

Reading 100,000-word documents, creating multi-page PowerPoint presentations, and placing online orders for various products were tasks for Agents of the previous era (even if it was just two months ago).

Kimi K2.6, however, is a 'digital contractor' capable of working continuously for 13 hours, commanding 300 subordinate 'digital laborers,' and independently delivering thousands of lines of industrial-grade code.

Behind this late-night release lies CEO Yang Zhilin's latest revision to the Scaling Law and Moonshot AI's far-reaching plan to reshape the open-source ecosystem through the KVV project.

01

The Singularity of Long-Range Execution

Although detailed technical papers have not yet been released, two figures in the official blog post are enough to shock both the technical and business communities:

13 hours of continuous coding and 300 sub-Agents collaborating in parallel.

Over the past few months, the term 'Agent' has been passed around as if AGI would suddenly arrive in a few days.

But the reality is that most Agents are still toys or not particularly useful tools.

Once the task chain is extended, AI inevitably suffers from memory decay or logical drift. For enterprises with complex business scenarios, this core pain point directly restricts the practical deployment of Agents.

However, Kimi K2.6 achieves a qualitative transformation from task to project.

As these striking figures suggest, Kimi K2.6 demonstrates remarkable long-range stability.

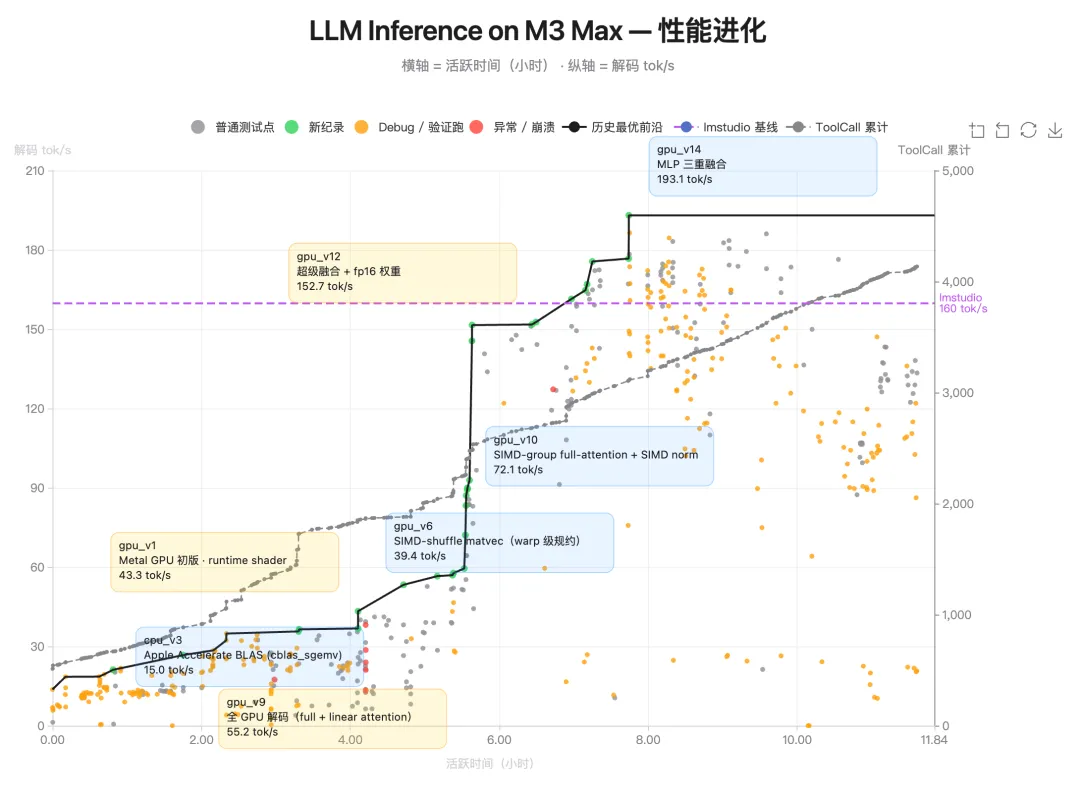

In an extreme scenario tested by the official team, K2.6 successfully downloaded a lightweight Qwen 3.5 model locally on a Mac and even implemented and optimized the inference process using the obscure Zig programming language.

After more than 4,000 tool invocations and 12 hours of uninterrupted operation, the throughput of the inference implementation rose from 15 tokens/s to 193 tokens/s.

In another case, K2.6 transformed into an expert-level system architect.

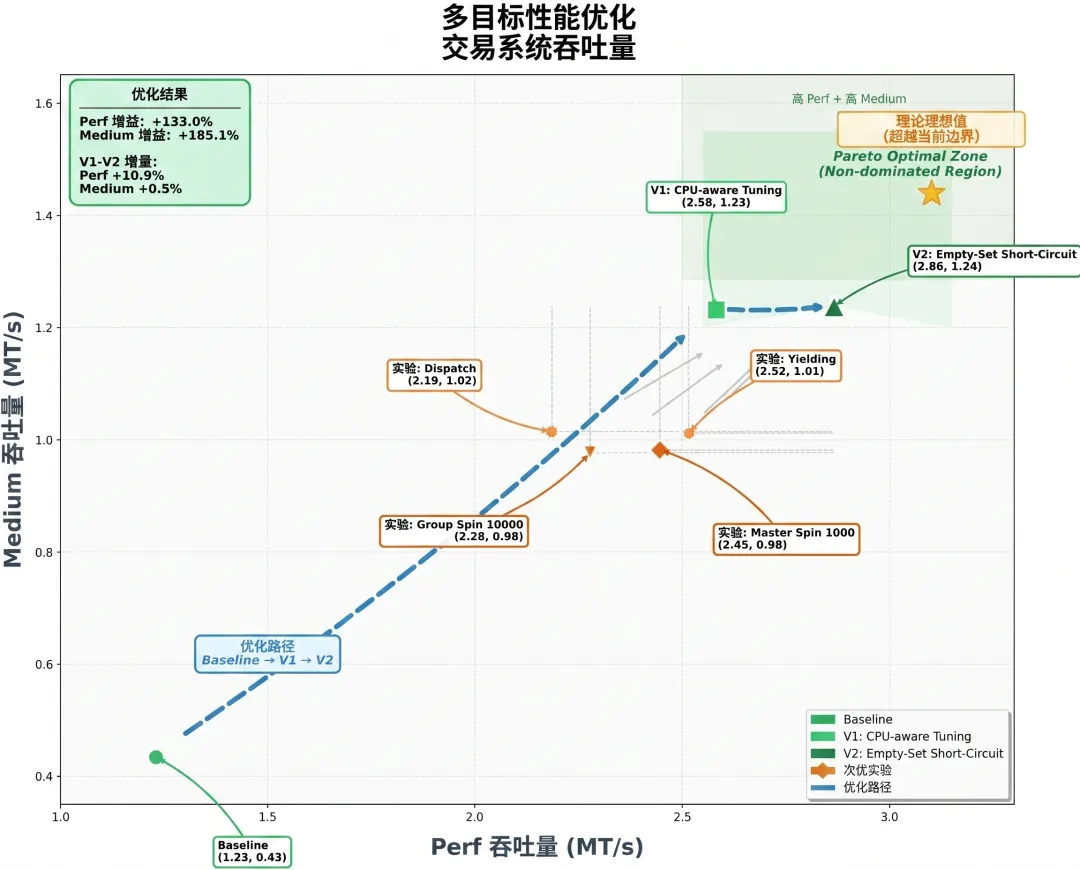

When refactoring the 8-year-old open-source financial matching engine exchange-core, it identified bottlenecks by analyzing CPU flame graphs and precisely modified over 4,000 lines of code, boosting peak throughput by 133%.
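As a quick sanity check, the two reported figures can be converted into speedup multiples. This is only arithmetic on the numbers quoted above; the helper names are illustrative and do not come from the official blog post.

```python
def speedup_from_rates(before: float, after: float) -> float:
    """Return how many times faster `after` is than `before`."""
    return after / before


def speedup_from_percent_gain(percent_gain: float) -> float:
    """Convert a 'boosted by N%' figure into a multiple of the baseline."""
    return 1.0 + percent_gain / 100.0


# Figures quoted in the article: 15 -> 193 tokens/s, and +133% peak throughput.
zig_inference = speedup_from_rates(15.0, 193.0)
exchange_core = speedup_from_percent_gain(133.0)

print(f"Zig inference: {zig_inference:.1f}x")   # Zig inference: 12.9x
print(f"exchange-core: {exchange_core:.2f}x")   # exchange-core: 2.33x
```

In other words, a 133% boost means roughly 2.3 times the original peak throughput, while the inference case is close to a 13-fold gain.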

The commercial truth hidden behind these two typical applications is clear: programming is currently the industry where AI creates the most significant value with the fastest closed loop.

For developers, the popularity of Vibe Coding demonstrates that AI's commercial landing must anchor in scenarios with high-frequency, high-fault-tolerance closed loops.

AI interns that require constant minute-by-minute supervision ultimately cannot integrate into practical applications, so K2.6 has chosen to reinvent itself as a product manager.

Meanwhile, the definition of productivity in the AI industry is changing: people will only pay for deterministic results, not for API call volumes.

This exaggerated leap in execution capability essentially stems from the 'Agent Swarm' paradigm proposed by Yang Zhilin two months ago at NVIDIA's GTC conference.

K2.6's cluster architecture supports 300 sub-Agents completing 4,000 collaborative steps in parallel, essentially simulating human society's industrial division of labor.
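To make the fan-out pattern concrete, here is a minimal sketch of a coordinator that spreads 4,000 steps across at most 300 concurrent workers. It is not Moonshot AI's implementation: `sub_agent` and `run_swarm` are hypothetical names, and the worker is a stand-in that does no real model or tool calls.

```python
import asyncio


async def sub_agent(agent_id: int, task: str) -> str:
    """Stand-in for one sub-agent; a real one would call a model and tools."""
    await asyncio.sleep(0)  # yield control, simulating I/O-bound work
    return f"agent-{agent_id} finished: {task}"


async def run_swarm(tasks: list[str], max_agents: int = 300) -> list[str]:
    """Fan tasks out so that at most `max_agents` run concurrently."""
    limit = asyncio.Semaphore(max_agents)

    async def guarded(agent_id: int, task: str) -> str:
        async with limit:
            return await sub_agent(agent_id, task)

    # gather preserves input order, so results line up with tasks.
    return await asyncio.gather(
        *(guarded(i % max_agents, t) for i, t in enumerate(tasks))
    )


tasks = [f"step {n}" for n in range(4000)]
results = asyncio.run(run_swarm(tasks))
print(len(results), results[0])  # 4000 agent-0 finished: step 0
```

The semaphore is the design choice worth noting: it caps concurrency at the size of the "department" while the coordinator still submits every step up front.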

A single Agent is just a 'digital laborer,' but a cluster of 300 Agents forms a fully digitized large department.

More importantly, this large department is not confined to a single domain—it can execute quantitative strategies for 100 global semiconductor targets, match 100 job positions with fully customized resumes, and even transform a high-quality astrophysics paper into specific academic skills, charts, and structured datasets.

This expansion of organizational bandwidth precisely illustrates why developers are currently the group with the strongest willingness to pay for AI across society.

For scattered individual C-end users, overcoming the 'free tool' impression and generating paid subscriptions is a challenge all global AI companies face.

However, for clustered B-end enterprise developers, Agent clusters capable of parallel processing massive inputs and large-scale execution are genuine productivity tools.

When K2.6 begins pipeline operations at this massive scale, it completes the transition from intellectual display to producing economic value.

02

Breaking Compute Monopoly with Logic

Such terrifying long-range execution and Agent concurrency capabilities naturally make everyone curious about how Moonshot AI achieved this miracle.

The answer does not lie in stacking countless GPUs but in an efficiency revolution at the foundational infrastructure level.

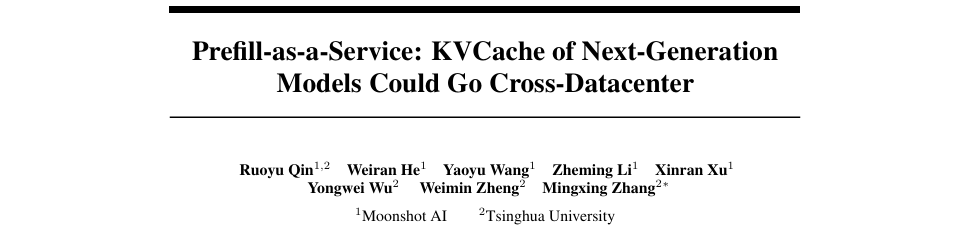

Just five days ago, Moonshot AI and Tsinghua University jointly published a paper titled 'Prefill-as-a-Service: KVCache of Next-Generation Models Could Go Cross-Datacenter.'

The secret to 'running fast while saving compute' lies within this paper: hybrid attention architecture and deep compression of KVCache.

Breakthroughs in foundational architecture directly address a seemingly contradictory phenomenon in the AI industry.

Over the past two months, the frenzy of pseudo-demand for desktop agents, exemplified by OpenClaw, has gradually subsided.

Even those still using such tools inevitably face a core dilemma:

Agent tools, involving frequent environmental interactions and tool invocations, consume Tokens at a rate far exceeding conventional usage.

If Agent tools cannot complete high-difficulty engineering tasks, the value they create cannot cover the exorbitant compute costs.

However, while pseudo-demand clears, genuine demand increases.

Domestic giants' Coding Plan prices have risen instead of fallen, with some even canceling new purchases and renewals for Lite-tier subscriptions while pushing Pro and Max-tier services.

This trend indicates that AI companies are using price levers to weed out marginal users who merely toy with AI and to focus on serving core users who genuinely leverage AI for productivity.

Even so, subscription services from companies like Zhipu remain in high demand, with users who finally secure purchase slots reporting frequent throttling during peak periods.

Rising prices combined with supply shortages stem from the brutal tug-of-war between compute costs and real output, forcing AI companies to make Coding Plan subscriptions profitable.

Moonshot AI is no exception. The Kimi Linear architecture it employs compresses KVCache traffic by an astonishing 13-36x through mathematical improvements. This extreme compression makes cross-regional KVCache transmission possible and inexpensive.

At the system level, Moonshot AI built on this momentum and introduced the 'Prefill-as-a-Service' (PrfaaS) architecture.

It breaks the physical boundary that traditional inference must be locked to expensive RDMA networks, using compressed KV traffic to schedule compute resources through ordinary cross-datacenter Ethernet.
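A back-of-the-envelope calculation shows why the compression ratio matters for shipping KVCache over ordinary Ethernet. Only the 13x and 36x ratios come from the article; the 4 GB cache size and the 10 Gbit/s link below are assumed placeholder values chosen for illustration.

```python
def transfer_seconds(kv_bytes: float, link_gbit_per_s: float,
                     compression: float = 1.0) -> float:
    """Time to move a KV cache over a link, ignoring latency and overhead."""
    bits_on_wire = kv_bytes * 8 / compression
    return bits_on_wire / (link_gbit_per_s * 1e9)


KV_BYTES = 4e9          # assumption: 4 GB of KV cache for one long request
LINK_GBIT_PER_S = 10.0  # assumption: a 10 Gbit/s cross-datacenter link

for ratio in (1, 13, 36):  # uncompressed, then the article's 13-36x range
    seconds = transfer_seconds(KV_BYTES, LINK_GBIT_PER_S, ratio)
    print(f"{ratio:>2}x compression: {seconds:.3f} s")
```

Under these assumptions the transfer drops from about 3.2 seconds uncompressed to well under 0.3 seconds, which is the difference between a stall and a tolerable hand-off from prefill to decode.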

This 'model compresses data + system runs scheduling' combination allows Kimi to use expensive H200s exclusively for understanding during the prefill stage while letting cheap GPUs handle decoding generation locally.

This not only aligns with engineering aesthetics but also gives Moonshot AI room for profit, through a structural cost advantage at the infrastructure level, in an era of high-priced subscriptions.

Alongside cost savings comes a breakthrough in intelligence ceilings.

As Yang Zhilin once said, Token efficiency is not just an engineering issue but also concerns intelligence ceilings.

Through the Muon optimizer, Kimi series models achieve 2x efficiency improvements with the same training volume and solve training instability issues at the 1-trillion parameter scale.

Thus, Moonshot AI has proven to the world that cost reduction and efficiency gains in the Token consumption war can be achieved through foundational architecture improvements.

03

Reshaping the Trust Chain

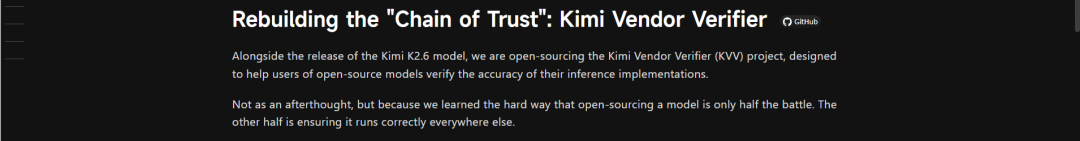

In K2.6's technical blog post, another easily overlooked but fascinating development is Moonshot AI's open-sourcing of the KVV (Kimi Vendor Verifier) verification project alongside the model.

This seemingly meddlesome move actually reflects the inevitable choice as large models enter the B-end delivery era.

Since the AI industry widely recognizes that developers remain the core audience, reliability becomes a more critical entry threshold than intelligence.

In the current open-source model ecosystem, AI companies publishing model weights is just the first step.

However, when these open-source weights are deployed by third-party cloud providers, adjustments to model parameters often occur due to cost-saving considerations and other factors.

Improper parameter settings can easily create a gap like the one between the 'seller show' (the advertised product photos) and the 'buyer show' (what customers actually receive) on e-commerce platforms.

For developer groups with high willingness to pay but extremely low error tolerance, performance degradation is fatal.

If users cannot distinguish whether 'the model underperforms' or 'the deployment fails,' brand trust in the open-source ecosystem collapses.

The introduction of KVV represents Moonshot AI's attempt to establish industry rules through 'legislation.'

This evaluation standard includes six dimensions: OCRBench visual testing, AIME2025 long-output stress testing, SWEBench software engineering testing, and more. Moonshot AI mandates that all service providers integrating K2.6 must comply with official parameter standards.

In other words, KVV verification serves as the ISO 9001 quality control and Intel Inside certification system for the large model industry.
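The kind of check such a verifier implies can be sketched as a comparison between official reference scores and a vendor's measured scores. This is not the KVV API: the function name, the tolerance, and every score below are made-up placeholders, and only the benchmark names come from the article.

```python
def find_regressions(reference: dict[str, float],
                     vendor: dict[str, float],
                     tolerance: float = 1.0) -> dict[str, float]:
    """Return benchmarks where the vendor trails the reference by more than
    `tolerance` points, mapped to the size of the gap."""
    gaps = {}
    for name, ref_score in reference.items():
        # A benchmark the vendor did not report counts as a score of 0.
        gap = ref_score - vendor.get(name, 0.0)
        if gap > tolerance:
            gaps[name] = round(gap, 2)
    return gaps


# Placeholder scores for illustration only; these are not published results.
reference = {"OCRBench": 90.0, "AIME2025": 95.0, "SWEBench": 75.0}
vendor = {"OCRBench": 89.5, "AIME2025": 88.0, "SWEBench": 74.8}

print(find_regressions(reference, vendor))  # {'AIME2025': 7.0}
```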

Thus, calling it a commercially insightful strategy is no exaggeration.

Moonshot AI recognizes that domestic AI routes can currently at best emulate Anthropic's vertical focus on programming and cannot create more miracles in the C-end market.

To win B-end developers' trust, establishing a transparent, traceable trust chain becomes essential.

Want to earn money within the Kimi ecosystem? Then you must remain 100% honest under KVV scrutiny.

Through this approach, Moonshot AI transforms from a mere technology provider into the architect of the AI ecosystem and its standards.

This shift in power logic may warrant deeper contemplation than technical breakthroughs alone.

04

The Key to the Social Operating System

At the end of the official blog post, mention is made of a service currently in internal testing: Claw Group.

If K2.6 is the engine and KVV is the standard, then the Claw Group represents the organizational prototype on Moonshot AI's future blueprint:

a collaborative space where humans, heterogeneous Agents, and cross-platform tools coexist.

The official definition of K2.6 is clear: it will serve as a coordinator, capable of integrating Agents from any device or model and dynamically matching tasks based on their skill profiles.
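Matching tasks to Agents by skill profile can be illustrated with a simple overlap score. The sketch below is an assumption about how such matching might work, not a description of Claw Group; the agent names, skills, and `best_agent` helper are all hypothetical.

```python
from typing import Optional


def best_agent(required: set[str],
               agents: dict[str, set[str]]) -> Optional[str]:
    """Pick the agent whose skill profile covers the most required skills.
    Returns None when no agent has any of them."""
    best_name, best_overlap = None, 0
    for name, skills in agents.items():
        overlap = len(required & skills)
        if overlap > best_overlap:
            best_name, best_overlap = name, overlap
    return best_name


# Hypothetical agents on different devices, each with a skill profile.
agents = {
    "laptop-coder": {"python", "testing"},
    "cloud-analyst": {"sql", "charts"},
    "phone-assistant": {"calendar", "email"},
}
tasks = {
    "fix failing unit test": {"python", "testing"},
    "plot quarterly revenue": {"sql", "charts"},
    "translate a contract": {"legal", "translation"},
}

assignments = {task: best_agent(req, agents) for task, req in tasks.items()}
for task, agent in assignments.items():
    print(f"{task} -> {agent}")
```

The unmatched task returns `None`, which is where a coordinator would have to escalate to a human or recruit a new Agent.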

In fact, this is the ideal form envisioned by Vibe Coding.

Within a collaborative group, developers only need to define the goals and the overall vibe, while the complex subsequent steps are automatically divided and completed by hundreds of Agents equipped with different specialized tools.

This is also the vision Yang Zhilin proposed at the end of his GTC speech.

Looking back at K2.6's late-night release, the logical chain has become clearly visible:

Solving 'can it get the job done' through long-range execution;

Solving 'is the cost of getting the job done expensive' through a hybrid attention architecture;

Solving 'are the delivery standards reliable' through KVV;

Solving 'how to work alongside humans' through the Claw Group.

Compared to domestic internet giants, Moonshot AI started later in the AI field, but its ambition has never been limited to creating just the best large language model.

Faced with the global performance divide among models and intense price competition domestically, Moonshot AI has chosen the most pragmatic path:

While catching up with international advanced models, it focuses on B-end developer scenarios and builds a complete AI social operating system through optimization of underlying architectures and the establishment of trust standards.

The goal of the Kimi series models is to become the decision-maker that defines rules, manages clusters, and achieves exponential efficiency gains.

This second half of the race, which began as a pursuit, will inevitably culminate in a foundational efficiency war centered on execution and trust.