GPT-5.5 Release: OpenAI Shifts Focus, Now All About 'Work'

04/24 2026

A New Type of Intelligence Suited for Real Work?

At 00:00 (Beijing Time) on April 24, OpenAI suddenly released GPT-5.5, along with the higher-specification GPT-5.5 Pro.

This is not a routine minor version iteration. In OpenAI's view, GPT-5.5 is not only their strongest model but also a new kind of intelligent model—one specifically built for real-world work and agent tasks.

To put it bluntly, this is essentially what everyone has been talking about recently: the 'agent model,' positioned more as the 'intelligent engine' for agents.

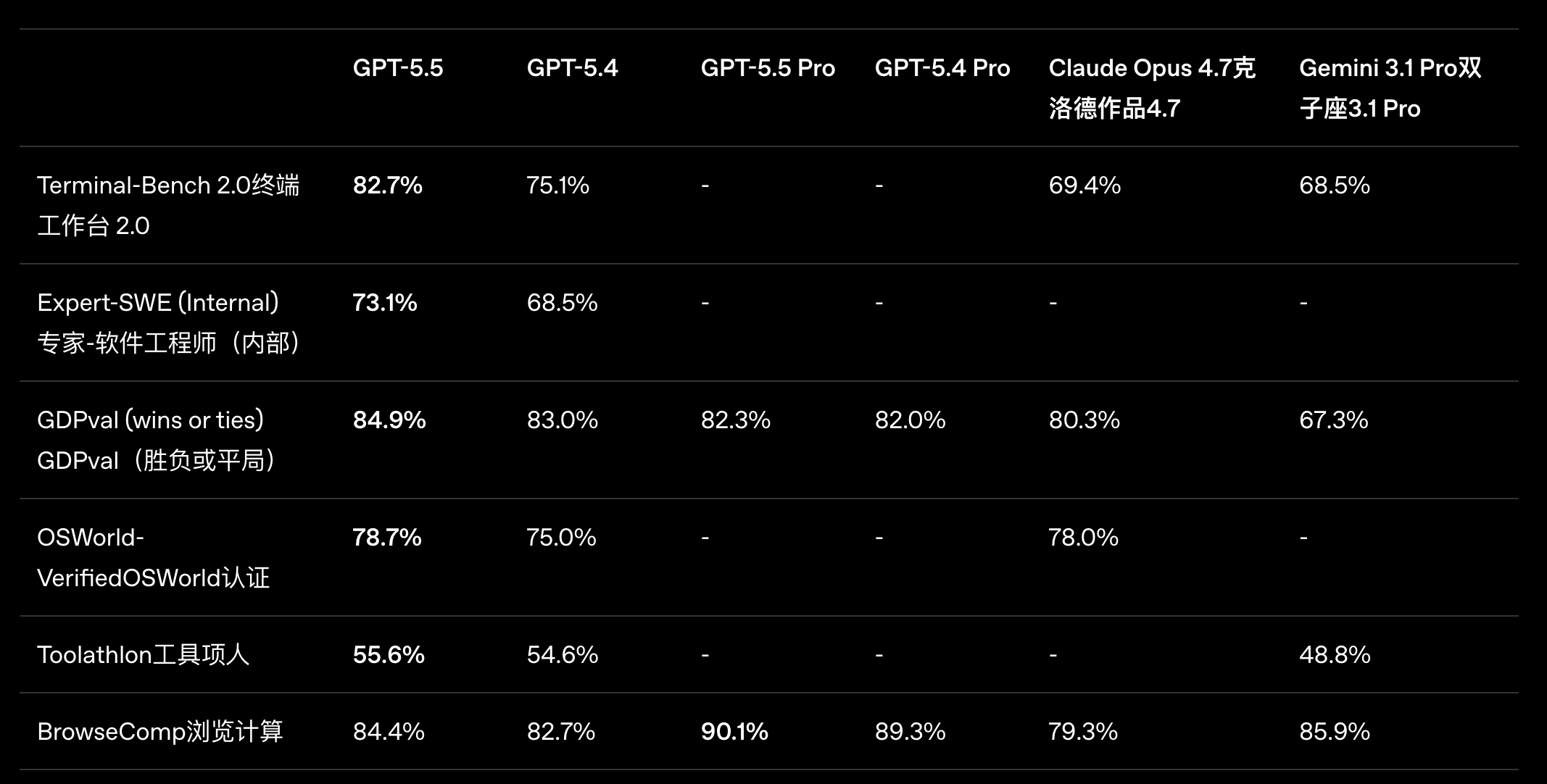

So, unsurprisingly, capabilities centered around 'chat' are no longer the main focus; instead, everything revolves around 'work.' On paper, judging by its specifications and benchmarks, GPT-5.5 continues the technical approach OpenAI has followed for the past six months: it is geared toward real-world work scenarios and sets new highs on benchmarks that track practical tasks more closely, such as:

- Terminal-Bench 2.0: 82.7% (complex command-line tasks)

- GDPval: 84.9% (knowledge work across 44 professions)

- OSWorld-Verified: 78.7% (real computer operation capabilities)

- Tau2-bench Telecom: 98.0% (complex customer service processes)

Image Source: OpenAI

However, benchmarks only tell part of the story. Even tests designed to mirror real-world work can still yield models with high scores but weak practical ability. So, is GPT-5.5 truly, as OpenAI claims at the beginning of its press release, the next step toward a new way of working with PCs?

From AI Coding to AI Office Work: GPT Gets Serious About Productivity

According to OpenAI, GPT-5.5 Pro is only available to Pro and higher-tier subscribers, while GPT-5.5 is available to Plus and higher-tier subscribers. Both models will officially launch today on ChatGPT and Codex. However, many Plus subscribers, including myself, have not yet been offered GPT-5.5, which suggests a phased rollout.

Nevertheless, the official team has showcased some practical use cases, all of which share a common trait: they are not 'clean' or straightforward but rather resemble real-world work tasks that cannot be completed in a single step. For OpenAI, which is currently focusing on promoting Codex, Agentic Coding is undoubtedly the most critical aspect.

This generation of GPT-5.5 was also used before its official release for tasks closer to real-world engineering workflows, such as code refactoring, cross-file bug fixing, and test completion.

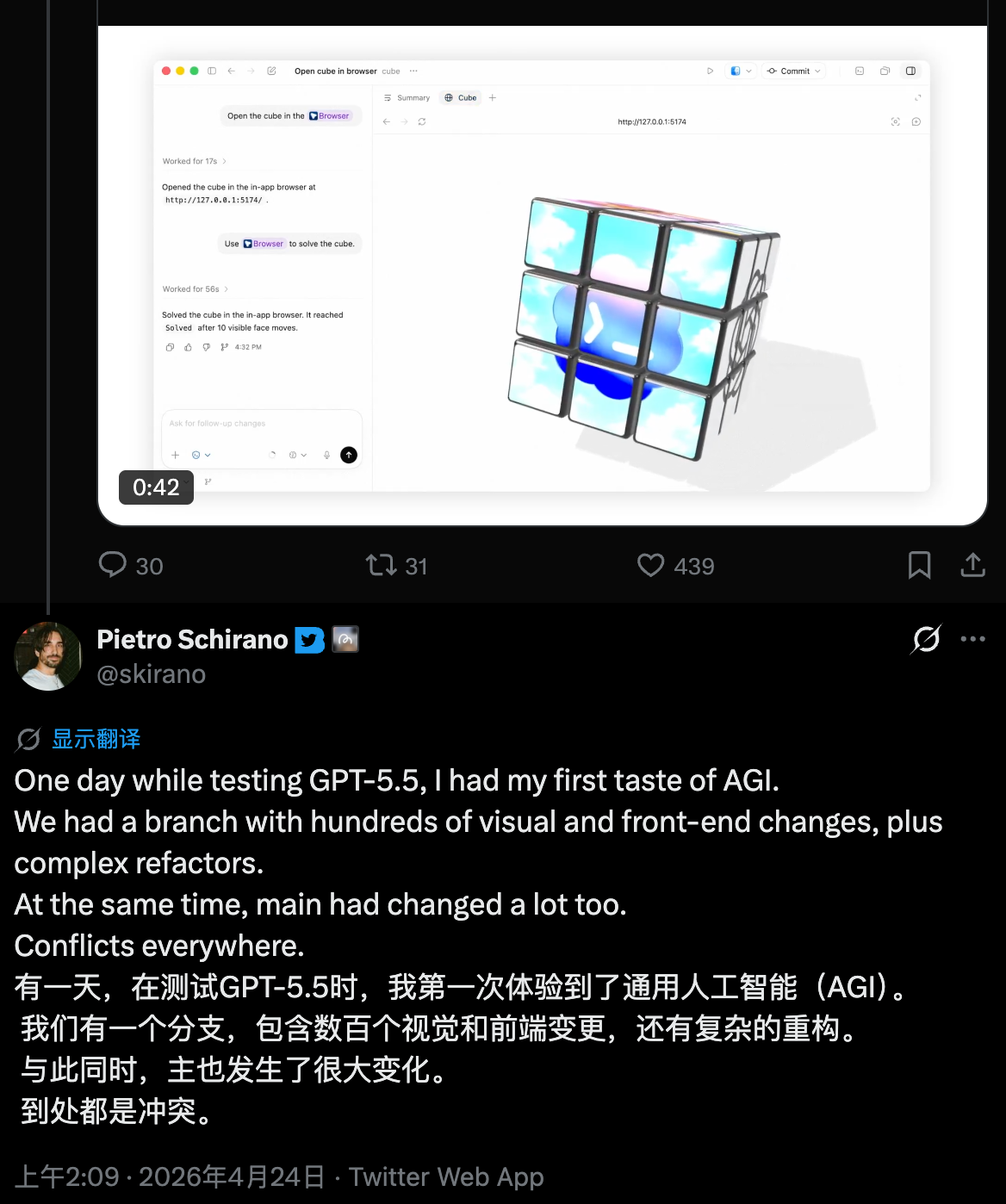

Real-world testing by external developers confirms GPT-5.5's progress in coding tasks. Pietro Schirano, CEO of MagicPath, used GPT-5.5 to merge a branch containing hundreds of front-end and refactoring changes into a significantly altered main branch, completing all work in just 20 minutes in one go. 'I truly felt like I was working with a higher intelligence,' he said.

Image Source: X

It's not that it gets everything right on the first try; the key is that it's easier to 'stay on the right track' without frequent course corrections.

An interesting detail from CodeRabbit's evaluation is that they did not emphasize the model's ability to write highly complex code. Instead, they praised its 'restraint' during code reviews, noting its tendency to point out only issues that would genuinely affect deployment rather than making vague comments.

Meanwhile, usage reports from the Cursor and Windsurf teams indicate that GPT-5.5 performs significantly better than GPT-5.4 in long-duration tasks and handling ambiguity.

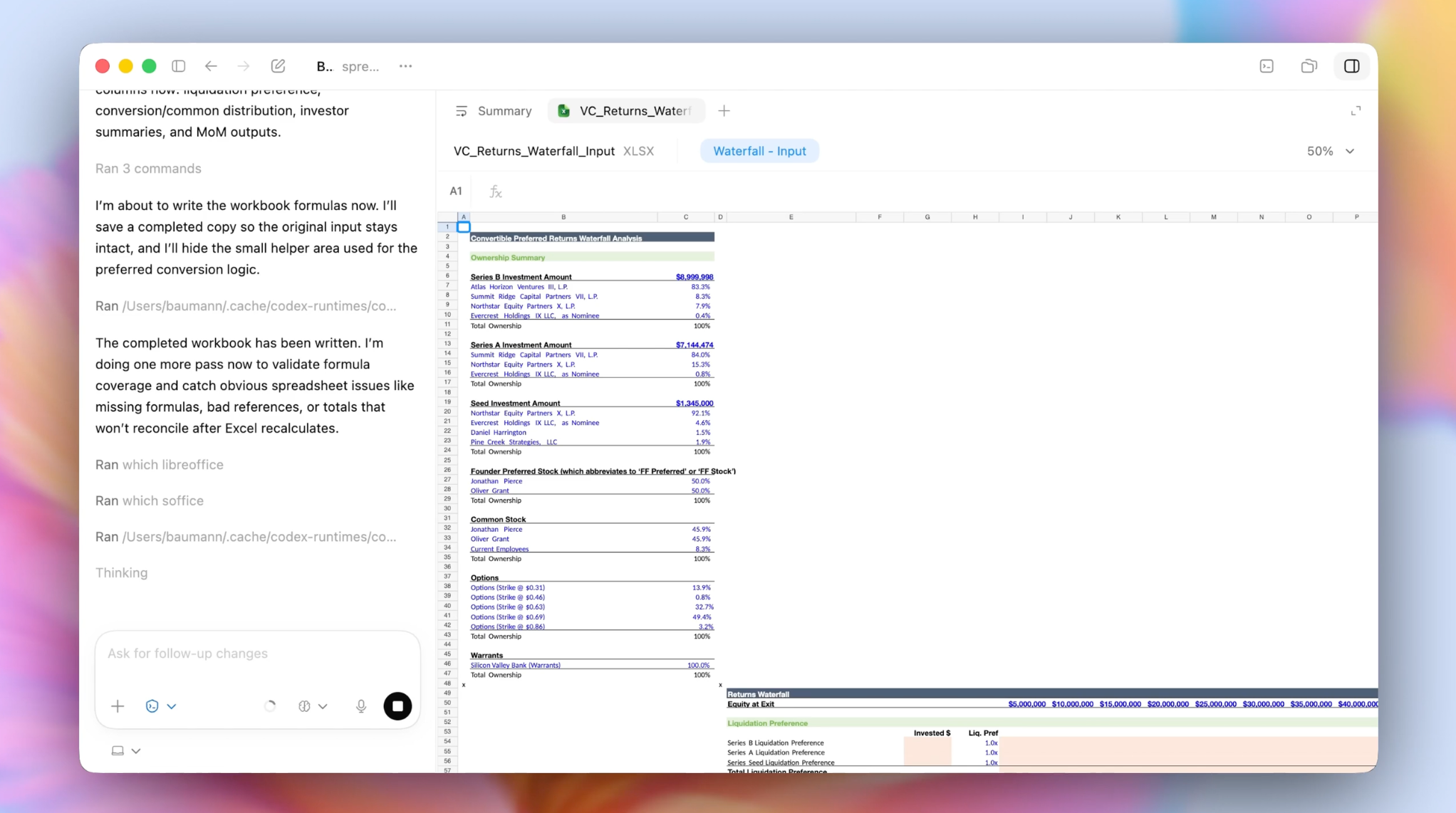

Additionally, OpenAI's finance team used it to review 24,771 K-1 tax forms, totaling 71,637 pages of documents, and claimed the process was completed two weeks earlier than the previous year. From another perspective, this reveals GPT-5.5's stability in long workflows. Reviewing over 20,000 tax forms and 70,000 pages of documents is a highly error-prone, repetitive task requiring continuous verification.

Image Source: OpenAI

The biggest issue with previous models in such scenarios was drift or gradual inaccuracies in details. Whether processing tables, generating reports, or consolidating multiple documents, GPT-5.5's outputs are more consistent, with stable formatting and more coherent logic. Legal AI company Harvey emphasized that GPT-5.5's reasoning structure, citations, and formatting resemble those of a qualified professional.

Moreover, the value of such cases lies not in scale but in the fact that the model is not just analyzing data but also building workflows, generating rules, and integrating into actual business systems—very close to typical knowledge work processes.

Arguably, the core of this GPT-5.5 upgrade is its focus on modern, computer-based work. Jensen Huang, founder and CEO of NVIDIA, even urged all employees in a company-wide letter to use Codex running on GPT-5.5: 'Let’s jump to lightspeed. Welcome to the age of AI.'

If GPT-4 solved the problem of 'answering correctly,' and GPT-5.4 addressed handling more complex problems and tasks, then with GPT-5.5, the question becomes whether it can accomplish tasks more efficiently and stably. After all, completing a task and doing it well are entirely different matters, with a significant 'gap' between them.

This is why OpenAI continuously emphasizes the term 'agent' in this generation.

Image Source: OpenAI

GPT-5.5 improves several core characteristics of agents at the model level: understanding goals, breaking down steps, utilizing tools, correcting processes, and ultimately delivering results. Individually, none of these capabilities are entirely new, but when combined into a single system, the experience begins to change.

External feedback largely confirms this shift. Whether from developers or enterprise users, the focus of discussion has changed from 'how accurate the answers are' to 'how many revisions are needed' and 'can it be completed in one go.' The difference between these two questions reflects the model's evolving role—from aiding decision-making to participating in execution.

Of course, this transformation is still far from 'hands-off.' Multiple third-party evaluations mention GPT-5.5's stronger reliance on clearly defined task boundaries. If task descriptions are unclear, it will not proactively fill in the gaps but will execute based on existing information. This 'obedience' is an advantage in some scenarios but a limitation in others.

However, this precisely indicates that it is becoming more like a collaborator in the real world. While its capabilities have not suddenly leaped forward by a generation, its working style has indeed changed.

What Exactly Has GPT-5.5 Upgraded?

Over the past two years, the upgrade path for large models has been clear: stronger reasoning, longer context, and higher accuracy. GPT-5.5 continues these efforts but shifts its focus. OpenAI emphasizes that the model now understands tasks earlier, relies less on prompts, uses tools more effectively, and can sustain progress until completion.

This statement corresponds to several longstanding issues that have never been fully resolved.

A New Type of Intelligence Suited for Real Work. Image Source: OpenAI

First is understanding questions but not tasks. Many models perform well in single-step responses within complex scenarios but begin to deviate when multi-step processes are involved, often requiring constant user corrections. GPT-5.5's change is that it now establishes a task structure from the beginning rather than waiting for user input step by step.

Second is the ability to use tools but not to orchestrate them. Since last year, tool use has become a mainstream capability for large models, but most models treat tools as mere add-ons. GPT-5.5's gains on benchmarks like Terminal-Bench and OSWorld are notable because the model not only calls tools but also integrates them into workflows.

Third is the actual delivery quality. While past models provided 'answers,' more scenarios now demand 'results'—and better, more accurate ones. GPT-5.5 aims to reduce interruptions, allowing tasks to progress continuously until a directly usable output is formed.

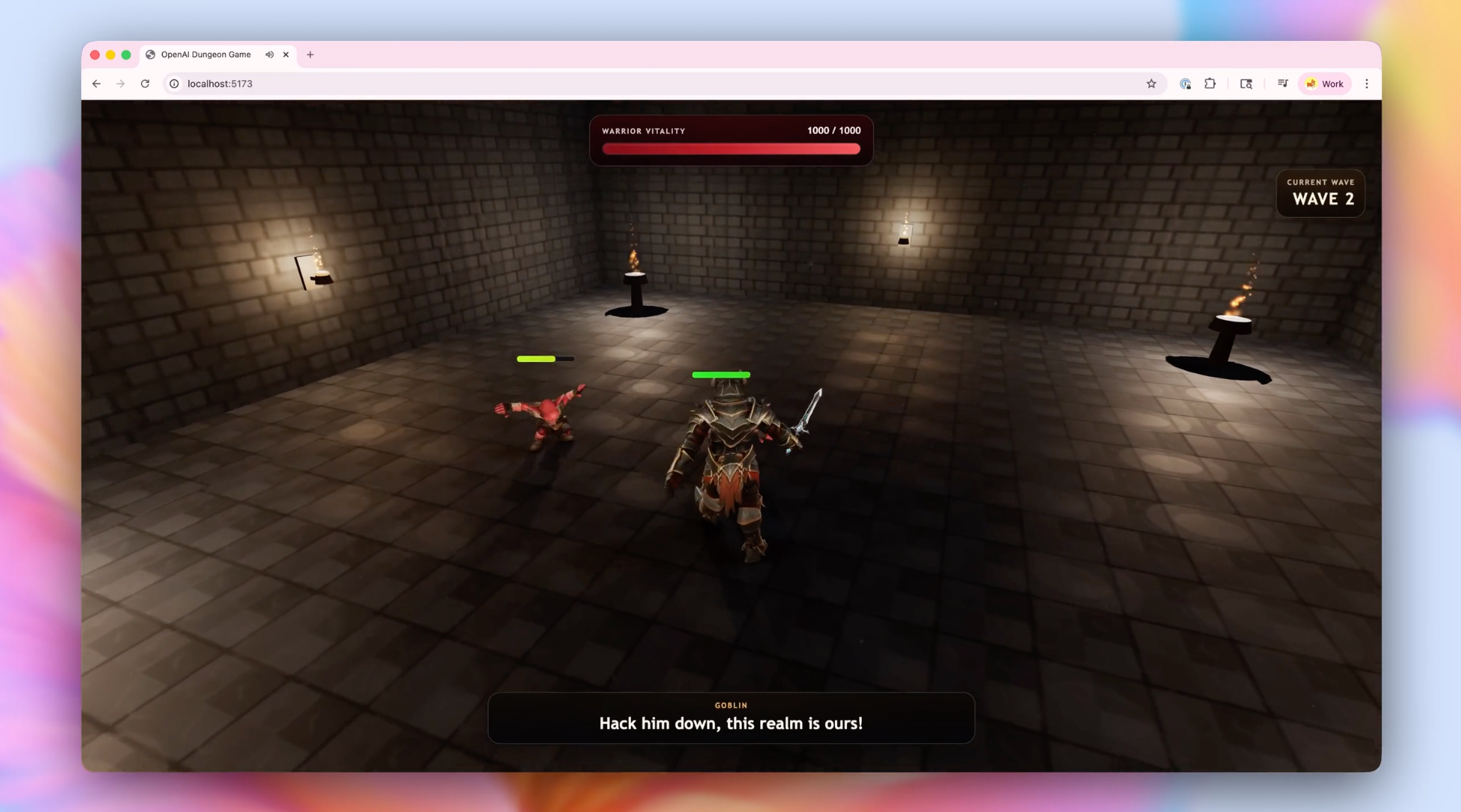

Game Generation. Image Source: OpenAI

Of course, GPT-5.5 is stronger, but not to the point of 'rewriting everything.' The issue is that this round of competition is no longer just about single-point model capabilities.

Since the beginning of this year, one change has become very clear. Whether it's OpenAI, Google, Anthropic, or even domestic companies like Alibaba and ByteDance, the focus has shifted from 'stronger models' to 'agent systems.' The model is just the foundation; the real competition lies in whether it can be integrated with tools, data, and business processes to truly participate in work.

The industry's keywords have also shifted from 'reasoning capability' and 'context length' to 'agent,' 'workflow,' and 'computer use.'

OpenAI's actions are the most typical example. The re-emergence of Codex is no coincidence; it is a natural entry point for hosting agent capabilities.

But there's still one issue: GPT-5.5 is really expensive.

A while back, the price of Claude Opus 4.7 had already deterred many. OpenAI emphasizes that GPT-5.5 achieves its comprehensive upgrades with almost no sacrifice in speed or token usage: latency is comparable to or even lower than GPT-5.4, and the same tasks on Codex can be completed with fewer tokens. Even so, the actual API pricing left many developers disheartened once it was revealed:

Input: $5 per million tokens, cached input: $0.5 per million tokens, output: $30 per million tokens—effectively doubling the cost compared to GPT-5.4.
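To make these rates concrete, here is a minimal Python sketch that estimates what a single agent run might cost. Only the three per-million-token prices come from the figures above. The function name `estimate_cost_usd`, the token counts in the example, and the assumption that uncached input, cached input, and output are billed as three separate categories are all illustrative, not taken from OpenAI's documentation.

```python
# Per-million-token prices as quoted in the article (US dollars).
PRICE_PER_MILLION_USD = {
    "input": 5.00,
    "cached_input": 0.50,
    "output": 30.00,
}

TOKENS_PER_MILLION = 1_000_000


def estimate_cost_usd(input_tokens: int, cached_input_tokens: int, output_tokens: int) -> float:
    """Estimate spend for one workload.

    Assumption (not confirmed by the article): the three token categories
    are metered and billed independently of one another.
    """
    usage = {
        "input": input_tokens,
        "cached_input": cached_input_tokens,
        "output": output_tokens,
    }
    return sum(
        count / TOKENS_PER_MILLION * PRICE_PER_MILLION_USD[kind]
        for kind, count in usage.items()
    )


if __name__ == "__main__":
    # Hypothetical long agent session: 2M fresh input tokens,
    # 8M cached input tokens, 0.5M output tokens.
    cost = estimate_cost_usd(2_000_000, 8_000_000, 500_000)
    print(f"Estimated cost: ${cost:.2f}")  # prints "Estimated cost: $29.00"
```

In this made-up session, output tokens account for $15 of the $29 even though they are the smallest share of the traffic, which is why long-running agent tasks that generate a lot of text or code are where the price increase would be felt most.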

Top-tier models are still too expensive. We can only hope that DeepSeek V4, rumored to be released this week, will replicate the miracle of 2025 and drive down agent model costs through its multimodal upgrades.

Final Thoughts

From a capability standpoint, GPT-5.5 is indeed stronger, but this 'strength' is no longer easily perceptible in a single release. There is no 'wow' moment where the difference is immediately obvious upon first use; instead, it feels like gradually patching up the shortcomings of previous generations, making what was once unstable more reliable.

But from another perspective, this is actually a more significant signal. In the past, the focus was on who was smarter; now, it's on who is more stable, who can better integrate into real-world work, and who can make fewer mistakes in complex processes.

GPT-5.5 falls into this phase. It does not redefine the upper limits of model capabilities but takes a step forward in 'getting things done.' And when models can truly shoulder part of the workload, what changes is not just efficiency but also new ways of working, including the division of labor between humans and AI.

Of course, this process is far from over. GPT-5.5 remains costly, its capabilities are not yet universally applicable, and many scenarios still require constant human intervention. The journey from concept to reality for agents will still require a long period of refinement.

But the direction is clear. As models begin to integrate into workflows, as tools, data, and systems gradually reorganize around them, and as more companies treat them as 'part of the work' rather than just 'assistive tools,' this round of change is no longer merely a technological upgrade.

OpenAI, ChatGPT, GPT-5.5

Source: Leikeji

All images in this article are from the 123RF licensed image library.