Trillion-Yuan Intelligent Computing Market Surge: Key Trends in the Computing Power Industry

05/12 2026

By 2026, the large-scale deployment of generative AI and large models has propelled intelligent computing power to the forefront of the digital economy. Workloads now range from cross-domain training of models with hundreds of billions of parameters and high-concurrency inference for hundreds of millions of users to special-effects rendering for film and television and industrial visual quality inspection. Computing power is no longer exclusive to tech companies; it has become a new form of productivity across industries.

However, the industry has long been hindered by three major pain points. First, the closed ecosystems of heterogeneous chips make model migration costly. Second, the regional supply of computing power is fragmented and mismatched with the explosive growth in inference demand. Third, the traditional, coarse-grained models of cabinet leasing and bare-metal rental cannot keep up with demand for fine-grained, results-oriented computing power.

Amidst this industry transformation, the Cloud Computing and Digital Transformation Research Institute of the China Academy of Information and Communications Technology recently released the

Global Computing Power Arms Race Intensifies: China Identifies Key Breakthrough Points

The explosive evolution of AI technology has made intelligent computing power the core battleground in global tech competition, with countries elevating intelligent computing development to a national strategy and launching a comprehensive competition for dominance in computing power.

The core logic of global computing power competition is highly consistent: breaking down resource silos, achieving interconnection and interoperability of computing power, and seizing the high ground in computing power services.

Compared with computing power development overseas, China has forged a distinctive path of 'first interconnection, then networking, while simultaneously building a unified national market.'

The State Council has explicitly proposed accelerating the formation of a national integrated computing power system. Five departments have jointly deepened the 'East Data, West Computing' project. Last year, the Ministry of Industry and Information Technology issued the 'Action Plan for Computing Power Interconnection and Interoperability,' setting clear goals: by 2026, a complete set of standards, identifiers, and rules for computing power interconnection and interoperability will be established; by 2028, standardized interconnection of public computing power across the country will be basically achieved, creating a computing power internet capable of intelligent perception, real-time discovery, and on-demand access.

This strategic layout precisely addresses the three core contradictions in the industry:

At the resource level, closed chip architectures such as GPUs and NPUs result in extremely high costs for cross-vendor model migration, making it difficult to achieve collaboration among heterogeneous computing power.

At the interconnection level, computing power demand is undergoing a structural shift. Barclays predicts that over 70% of future computing power demand will come from inference scenarios, with strong demands for distributed and localized computing. However, domestic computing power supply entities are relatively fragmented, and regional disparities persist, leading to inefficient matching.

At the application level, traditional resource leasing models cannot meet the refined needs of scientific research simulation, model training, and video rendering, making it urgent to improve computing efficiency.

From global competition to domestic strategic layout, all actions point to the same core goal: transforming computing power from 'physically dispersed' to 'logically interconnected' and from 'owning computing power' to 'effectively utilizing computing power.'

Redefining Computing Power Services: Entering the Era of 'Task-Based Delivery'

One of the core values of the report is to clarify the boundaries of intelligent computing power services, ending the confusion between IDC services, cloud services, and intelligent computing services, and enabling the industry to understand the evolution logic of computing power services.

Intelligent computing power services aggregate heterogeneous computing resources such as GPUs and NPUs over the internet and provide measurable computing, storage, and network services on demand through unified service interfaces. Their core mission is to address the pain points of cross-regional physical isolation, cross-architecture ecosystem fragmentation, and inefficient supply-demand matching, transforming dispersed computing power into standardized capabilities that can flow globally and be accessed on demand.

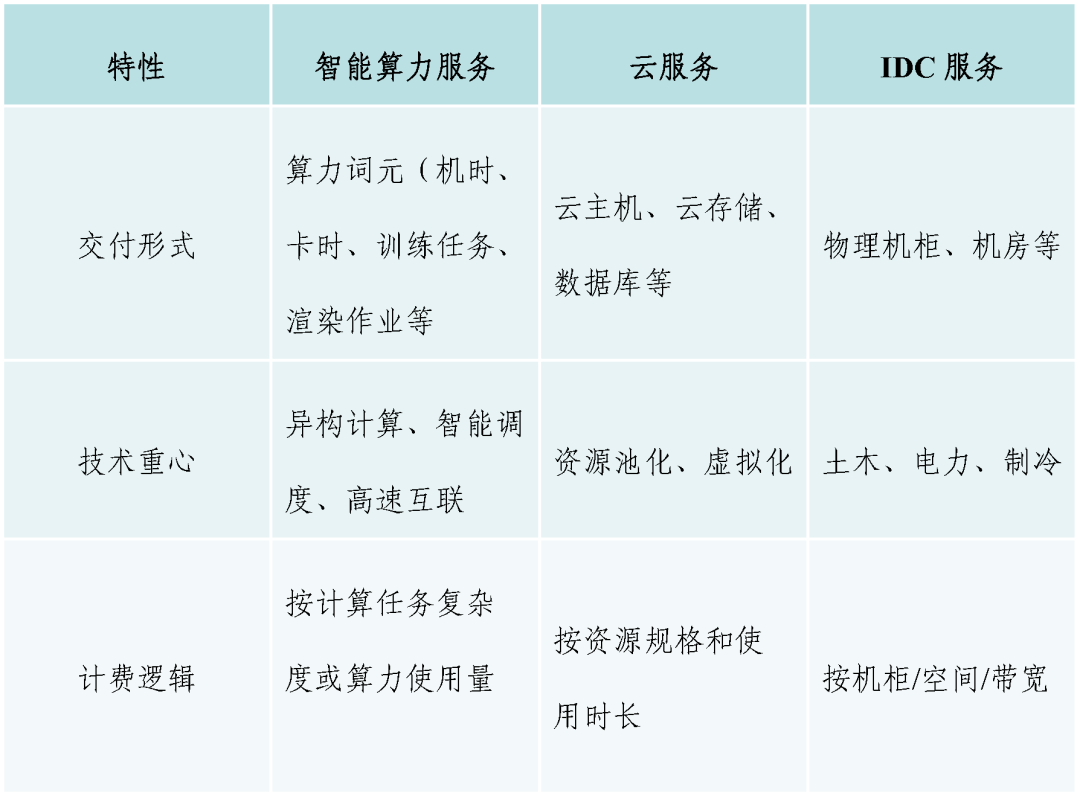

The report clarifies that intelligent computing services differ fundamentally from IDC and cloud services in terms of delivery form, technological focus, and billing logic, representing three distinct stages of development in the computing power industry.

The core of IDC services is 'renting space,' delivering physical server racks and data center space. Users are responsible for hardware maintenance, with a technological focus on civil engineering, power supply, and cooling. Billing is based on racks and bandwidth.

The core of cloud services is 'renting resources,' delivering cloud servers and cloud storage. Resources are elastically supplied through virtualization, with a technological focus on homogeneous resource pooling. Billing is based on resource specifications and usage duration.

In contrast, intelligent computing power services represent a disruptive upgrade, with the core being 'buying results.' Delivery forms shift to computing tokens, training tasks, and rendering jobs, with a technological focus on heterogeneous computing, intelligent scheduling, and high-speed interconnection. Billing is based on task complexity and actual computing output, achieving a true shift from 'renting resources' to 'buying results.'
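The three billing logics described above can be contrasted in a minimal sketch. The function names, parameters, and every price are hypothetical placeholders chosen for illustration; none of them come from the report, and IDC bandwidth charges are left out for brevity.

```python
# Hypothetical illustration of the three billing logics.
# All prices and parameter names are placeholders, not figures from the report.

def idc_bill(racks: int, months: int, price_per_rack_month: float) -> float:
    """IDC ('renting space'): pay per rack, regardless of computing done."""
    return racks * months * price_per_rack_month


def cloud_bill(instances: int, hours: float, price_per_instance_hour: float) -> float:
    """Cloud ('renting resources'): pay for resource specification x duration."""
    return instances * hours * price_per_instance_hour


def token_bill(tokens_generated: int, price_per_million_tokens: float) -> float:
    """Intelligent computing ('buying results'): pay for actual output,
    measured here in generated tokens."""
    return tokens_generated / 1_000_000 * price_per_million_tokens


if __name__ == "__main__":
    print(idc_bill(racks=2, months=3, price_per_rack_month=1000.0))
    print(cloud_bill(instances=4, hours=10, price_per_instance_hour=2.5))
    print(token_bill(tokens_generated=2_000_000, price_per_million_tokens=8.0))
```

Only the third function ties the bill to what the workload actually produced, which is the shift the report describes.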

The report breaks down intelligent computing services into three core components:

Cloud services serve as the foundational delivery form, meeting standardized needs for homogeneous resources.

Computing power internet services represent the advanced form, breaking physical boundaries and weaving cross-domain heterogeneous computing power into a logical network, enabling 'single-point access and full computing connectivity.'

Token services are a new task-based form, encapsulating dispersed computing power into standardized tokens and directly delivering task results.

These three components are interconnected, forming a complete ecosystem from resource leasing to cross-domain scheduling and task delivery.

Three-Tier Architecture Implementation: A Standardized 'Full-Stack Framework' for Intelligent Computing Services

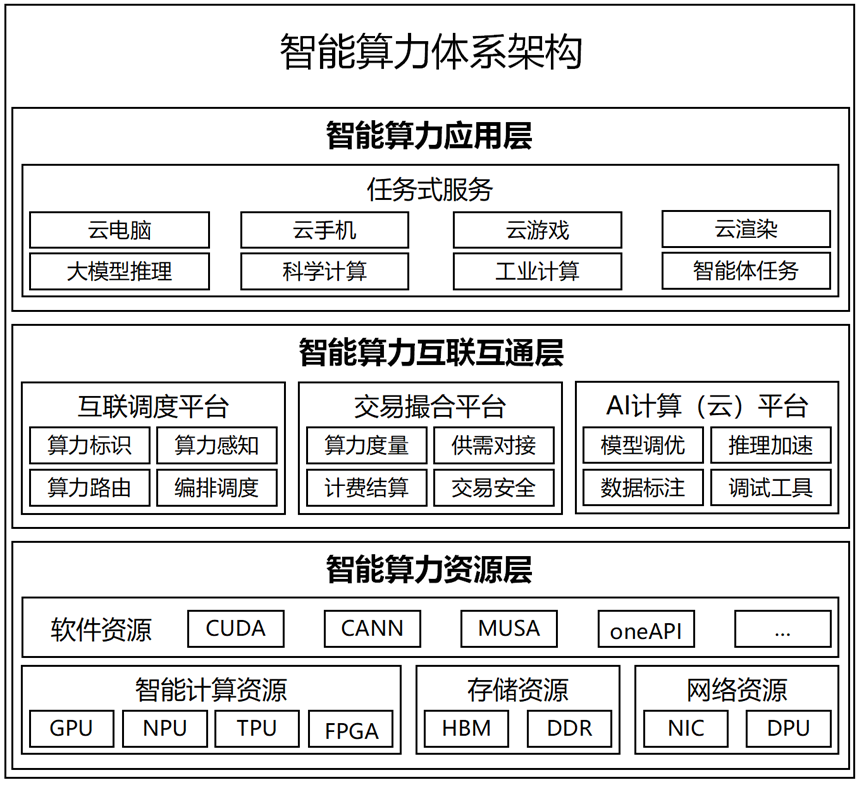

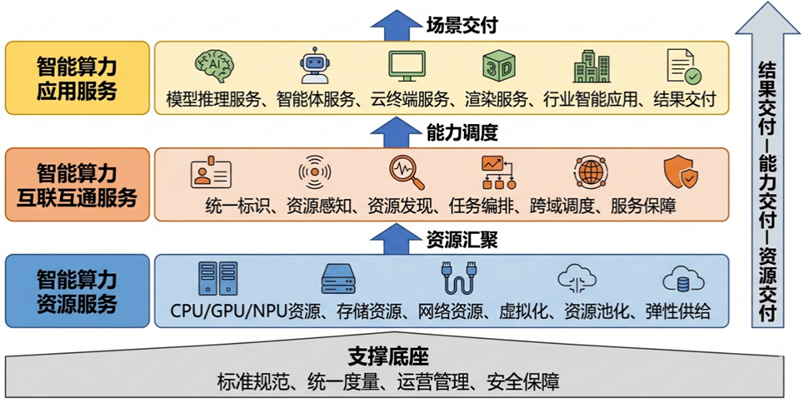

A significant innovation in the report is the introduction of a three-tier architecture for intelligent computing power services, achieving full-stack coverage from underlying resources to middle-layer scheduling and upper-layer applications. This transforms intelligent computing services from a concept into an implementable and replicable systematic framework.

The first tier is the Intelligent Computing Power Resource Layer, serving as the 'computing power granary' of the entire system. It integrates software and hardware resources such as GPUs, NPUs, storage, and networks, forming a unified resource pool through pooling and abstraction. This layer supports parallel training at the scale of thousands or tens of thousands of cards, meeting the stringent requirements of large-scale models for computing power and stability. It addresses the question of 'where computing power comes from' by transforming heterogeneous hardware into a uniformly manageable foundational capability.

The second tier is the Intelligent Computing Power Interconnection Layer, acting as the 'scheduling hub' of the system. Relying on national, regional, and industry-level interconnection platforms, it achieves standardized interconnection, supply-demand matching, and transaction facilitation of computing power across different entities and architectures through unified computing power identifiers and an integrated computing-network-cloud scheduling system. Computing power is metered and circulated in units of 'card-hours.' This layer addresses the question of 'how computing power is scheduled,' enabling efficient collaboration and cross-domain utilization of dispersed computing power.
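As a rough illustration of metering in 'card-hours', the sketch below assumes that one card-hour means one accelerator card occupied for one hour. That definition, the job names, and the job sizes are assumptions for illustration rather than details given in the report.

```python
# Minimal card-hour metering sketch.
# Assumption: one card-hour = one accelerator card occupied for one hour.

def card_hours(num_cards: int, hours: float) -> float:
    """Card-hours consumed by a job that holds num_cards for the given hours."""
    if num_cards < 0 or hours < 0:
        raise ValueError("num_cards and hours must be non-negative")
    return num_cards * hours


# Hypothetical jobs: (name, cards occupied, hours occupied)
jobs = [
    ("train-a", 1024, 72.0),
    ("infer-b", 8, 24.0),
]

total_card_hours = sum(card_hours(cards, hours) for _, cards, hours in jobs)

if __name__ == "__main__":
    print(total_card_hours)
```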

The third tier is the Intelligent Computing Power Application Layer, serving as the 'delivery window' of the system. It packages computing power into applications such as cloud desktops, large-scale model inference, video rendering, and intelligent agents for industry-specific scenarios, directly delivering task results. Users can focus on their business without needing to concern themselves with the underlying computing power details. This layer addresses the question of 'how computing power is used,' transforming computing power into industrial productivity.

Corresponding to the service system, the three-tier architecture maps to three forms of services: intelligent computing power resource services, interconnection services, and application services. Resource services are responsible for supply, interconnection services for scheduling, and application services for delivery. These three components work together to transform underlying computing capabilities into intelligent computing power services that are mobile, tradable, and usable, laying a structural foundation for technological implementation and scenario expansion.

Four Core Technologies Address Key Challenges in 'Finding, Connecting, Aggregating, and Scheduling' Computing Power

The large-scale implementation of intelligent computing services relies on full-stack technological support. The report identifies four core technologies that address the key challenges of 'finding, connecting, aggregating, and scheduling' computing power, forming the technological foundation for industry development.

Computing Power Identification Gateway Technology serves as the 'communication ID' for computing power. The report proposes computing power internet identifiers, assigning a unique identity to each computing power resource, standardizing coding rules, and upgrading computing power gateways. This enables standardized interconnection, queryability, and callability of computing power across different entities, resolving the pain point of 'difficulty in finding computing power' for users.
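The idea of giving each resource a unique, queryable identity can be sketched as a small in-memory registry. The identifier format, class names, and endpoints below are all hypothetical; the report's actual coding rules are not given in this article, so this is an assumption-laden illustration rather than the proposed scheme.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ComputeResource:
    identifier: str  # hypothetical format: "region/provider/chip/serial"
    chip_type: str   # e.g. "GPU" or "NPU"
    endpoint: str    # hypothetical address where the resource can be called


class IdentifierRegistry:
    """Toy registry: unique identifiers make resources findable and callable."""

    def __init__(self) -> None:
        self._by_id = {}

    def register(self, resource: ComputeResource) -> None:
        # Enforce uniqueness so an identifier resolves to exactly one resource.
        if resource.identifier in self._by_id:
            raise ValueError(f"duplicate identifier: {resource.identifier}")
        self._by_id[resource.identifier] = resource

    def resolve(self, identifier: str) -> ComputeResource:
        """Look up a resource by its identifier (raises KeyError if unknown)."""
        return self._by_id[identifier]

    def find(self, chip_type: str) -> list:
        """Return all registered resources of the given chip type."""
        return [r for r in self._by_id.values() if r.chip_type == chip_type]


registry = IdentifierRegistry()
registry.register(ComputeResource("east/provider-a/gpu/0001", "GPU", "10.0.0.1:9000"))
registry.register(ComputeResource("west/provider-b/npu/0001", "NPU", "10.0.1.1:9000"))
```

A caller that only knows it needs an NPU can use `registry.find("NPU")` and then reach the resource through the returned endpoint, without knowing in advance which provider operates it.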

Computing-Network Synergy Technology acts as the 'high-speed transmission network' for computing power. Leveraging RDMA (Remote Direct Memory Access) and SRv6 (Segment Routing over IPv6) technologies, it breaks down the isolation between computing and networking, enabling high-speed, low-latency data transmission and dynamically optimizing transmission paths. This provides network support for distributed training and edge inference, ensuring bottleneck-free cross-domain flow of computing power.

Computing Power Resource Pooling Technology serves as the 'unified storage warehouse' for computing power. Through virtualization, containerization, and CXL (Compute Express Link) resource decoupling technologies, it abstracts physically dispersed computing, storage, and network resources into a unified logical pool, improving the utilization of expensive hardware such as GPUs and addressing the challenge of 'inability to aggregate' heterogeneous resources.

Heterogeneous Computing Power Scheduling Technology acts as the 'intelligent steward' for computing power. It constructs an integrated computing-network-cloud scheduling system, using a unified resource management framework, task profiling, and matching algorithms to achieve unified orchestration of computing power across different architectures and clouds. This precisely matches computing tasks with the optimal hardware, enabling collaborative operation of computing power from different chips and nodes and maximizing cluster efficiency.
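Task profiling and matching can be illustrated with a deliberately simple rule: filter nodes that satisfy the task's profile, then pick the cheapest. This is a sketch under stated assumptions, not the scheduling algorithm described in the report; the field names, the cost-based selection, and the sample nodes are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    name: str
    chip_type: str             # e.g. "GPU" or "NPU"
    free_cards: int
    memory_gb_per_card: int
    cost_per_card_hour: float  # placeholder price


@dataclass
class TaskProfile:
    name: str
    cards_needed: int
    min_memory_gb: int
    supported_chips: frozenset  # chip types the task's software stack can run on


def schedule(task: TaskProfile, nodes: List[Node]) -> Optional[Node]:
    """Pick the cheapest node that satisfies the task profile, or None."""
    feasible = [
        n for n in nodes
        if n.chip_type in task.supported_chips
        and n.free_cards >= task.cards_needed
        and n.memory_gb_per_card >= task.min_memory_gb
    ]
    if not feasible:
        return None
    best = min(feasible, key=lambda n: n.cost_per_card_hour)
    best.free_cards -= task.cards_needed  # reserve the cards on the chosen node
    return best


nodes = [
    Node("gpu-east", "GPU", free_cards=8, memory_gb_per_card=80, cost_per_card_hour=3.0),
    Node("npu-west", "NPU", free_cards=16, memory_gb_per_card=64, cost_per_card_hour=2.0),
]
portable = TaskProfile("portable-job", 8, 64, frozenset({"GPU", "NPU"}))
big_memory = TaskProfile("big-memory-job", 8, 80, frozenset({"GPU", "NPU"}))
```

A production scheduler would also weigh network topology, data locality, and queueing, which this sketch omits.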

These four technologies mutually support each other, enabling the transition of computing power from 'physical dispersion' to 'logical unity' and from 'static supply' to 'dynamic scheduling.' They clear technological barriers for the widespread and large-scale implementation of intelligent computing services.

Computing Power Internet: Defining the Future of Intelligent Computing

The report's analysis of four major development trends provides forward-looking guidance from the perspective of the overall digital economy. It identifies the computing power internet as the ultimate form of the intelligent computing industry, offering a basis for policy formulation and clear strategic directions for investors and enterprises. This positions China to take a leading role in standards-setting and ecosystem-building in the global competition for intelligent computing.

The report points out that intelligent computing services will accelerate their evolution across four dimensions (architecture, mode, landscape, and empowerment), ultimately becoming a convenient, society-wide foundational service akin to water and electricity.

Architectural deployment will shift from centralized to high-frequency collaboration among cloud, edge, and end devices. Computing power demand will diversify from centralized training to distributed inference, with physical layouts transitioning from centralized IDCs to a collaborative paradigm of 'large centers + edge super nodes.' Large centers will handle massive storage and batch computing, while edge nodes will provide low-latency, agile services, enabling elastic, full-domain flow of computing power to meet the latency and bandwidth requirements of different scenarios.
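The division of labor between large centers and edge nodes can be sketched as a placement rule. The workload categories, the function name, and the 60 ms round-trip figure are hypothetical placeholders chosen for illustration, not values from the report.

```python
def place_workload(kind: str, latency_budget_ms: float,
                   center_rtt_ms: float = 60.0) -> str:
    """Return "large-center" or "edge-node" for a workload.

    kind: "batch" (e.g. training, bulk rendering) or "interactive" (e.g. inference).
    latency_budget_ms: the longest round trip the workload can tolerate.
    center_rtt_ms: assumed round-trip time to the nearest large center (placeholder).
    """
    if kind == "batch":
        # Batch computing and massive storage go to large centers.
        return "large-center"
    if kind != "interactive":
        raise ValueError(f"unknown workload kind: {kind}")
    # Interactive work stays central only if the center can meet the latency budget.
    return "large-center" if latency_budget_ms >= center_rtt_ms else "edge-node"


if __name__ == "__main__":
    print(place_workload("batch", 5.0))
    print(place_workload("interactive", 20.0))
    print(place_workload("interactive", 200.0))
```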

Service models will upgrade from resource supply to task-based delivery. User demand will shift from leasing hardware to obtaining results, with service delivery transforming from 'renting resources' to 'buying tasks' and billing based on task complexity and outcomes. The widespread adoption of cloud desktops, intelligent agents, and cloud gaming will achieve 'lightweight front-end and powerful back-end computing,' significantly lowering the barrier to computing power usage and enabling convenient access for small and medium-sized enterprises and individual users.

The industrial landscape will evolve from independent development to a computing power internet. The industry will move away from isolated operations, achieving interconnection and interoperability of computing power across entities, regions, and architectures through unified identifiers, standards, and rules. This will foster an open and circulating ecosystem for computing power trading, with the computing power internet serving as the core carrier for efficient resource circulation and value reorganization, driving the industry from decentralized construction to full-domain collaboration.

Empowerment pathways will extend from computing capabilities to ecosystem value. Intelligent computing services will no longer be limited to providing computing power but will support multi-agent collaboration, inclusive computing access, and the empowerment of new industrialization. Computing power will become a social infrastructure, driving digital upgrades in education, healthcare, manufacturing, retail, and other fields, achieving 'universal access to computing power' and unlocking ecosystem-level value.

The golden age of intelligent computing services officially begins in 2026. For enterprises, seizing the opportunities of task-based delivery and computing power interconnection is key to future success. For the industry, addressing technological shortcomings and strengthening ecological collaboration are essential to overcoming bottlenecks. For the entire digital economy, the widespread adoption of intelligent computing services will inject strong momentum into the intelligent transformation of various industries.

Computing power is like water, intelligently driving a hundred industries. When computing power truly becomes a society-wide foundational service akin to water and electricity, a new future for the digital economy will fully arrive.