Xiaomi Releases Autonomous Driving Model Xiaomi OneVL: How Does It Tackle the "Reasoning" Dilemma?

![]() 05/14 2026

05/14 2026

![]() 423

423

Produced by Zhineng Technology

Thanks to substantial investments from Chinese automakers and tech firms, autonomous driving technology has reached a stage where perception is no longer the primary obstacle, and imitation learning is nearing its practical limits. The current emphasis is on devising superior strategies to answer the question: "What actions should be taken once the scene is fully understood?"

In autonomous driving, the decision-making process unfolds within just a few dozen milliseconds. Xiaomi's newly introduced Xiaomi OneVL aims to address this challenge by enabling models to achieve both speed and precision when autonomous driving enters the "reasoning-required" phase.

01

XLA Approach: Clarity in Thought Before Action

The development of autonomous driving models can generally be categorized into distinct stages:

◎ The initial stage is perception-centric, focusing on detection and segmentation to categorize the environment into "vehicles, roads, and pedestrians."

◎ The second stage involves imitation learning, where models learn directly from human driving behaviors.

◎ The third stage, which Xiaomi refers to as XLA, genuinely encompasses cognition and reasoning.

The fundamental shift in XLA is transitioning from "driving like a human" to understanding "why to drive in this manner."

Decision-making variables include a preceding vehicle slowing down, an oncoming vehicle from the side, or a narrowing road. However, integrating reasoning significantly increases system latency.

A prevalent industry solution is the explicit Chain of Thought (CoT). In this approach, the model first generates a step-by-step "thought process" before providing an answer. While effective for language tasks, this method is largely impractical in driving scenarios, where delays from token-by-token generation can be critical in automotive-grade systems.

Another approach is Latent CoT, which compresses the reasoning process into the model's latent space, enabling the model to "think internally" rather than "verbalizing thoughts before acting."

However, the issue with past Latent CoT methods is that they compressed language. Yet, driving is not fundamentally a language problem.

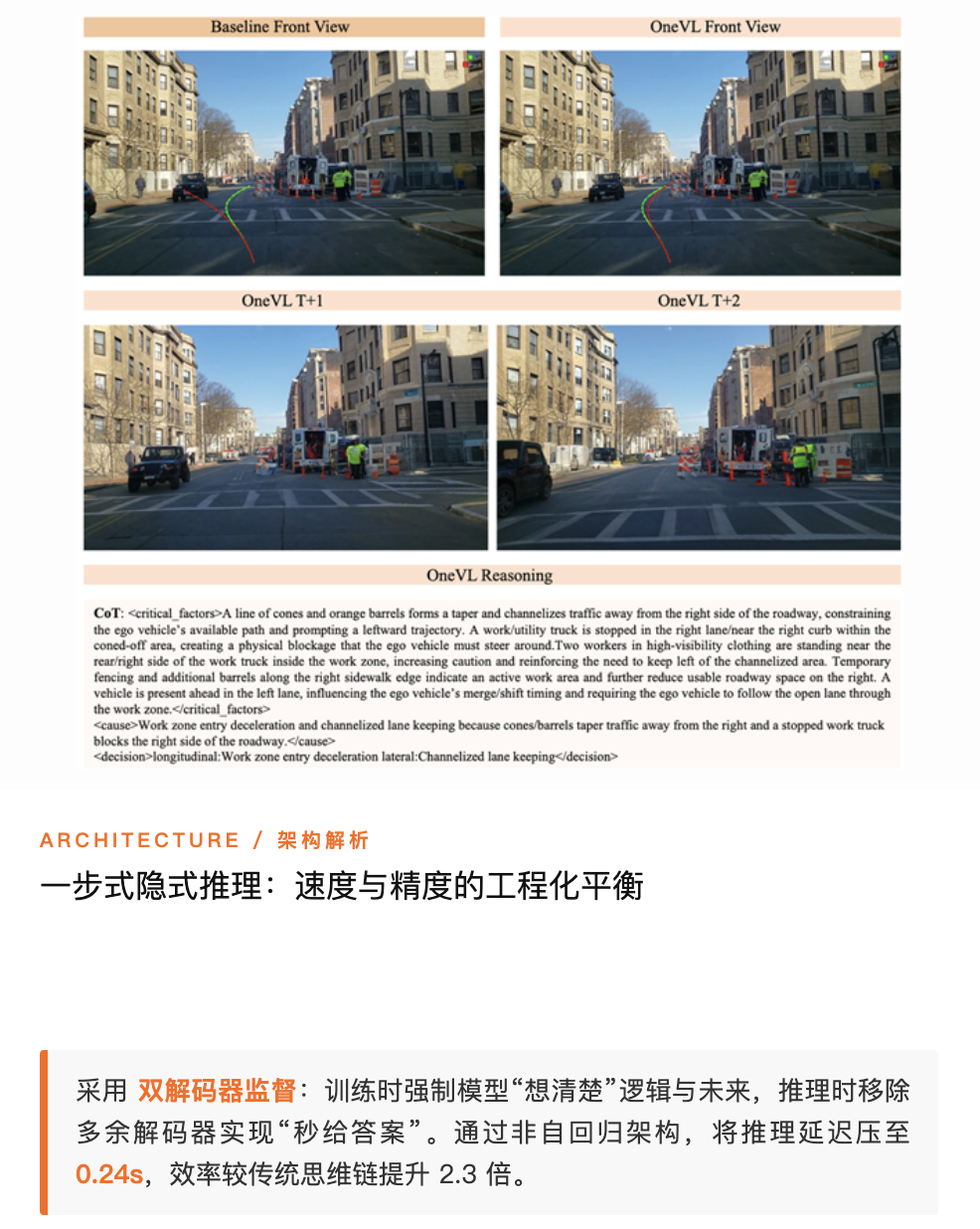

The most significant aspect of OneVL is its redefinition of reasoning objects.

◎ Traditional Latent CoT compresses "why I did this" into a set of latent variables.

◎ OneVL contends that what truly requires compression is the future.

Autonomous driving decisions hinge on predictions about how the scene will evolve in the next 0.5 or 1 second:

◎ Will that vehicle change lanes?

◎ Will the pedestrian step onto the road?

◎ Will continuing to accelerate result in a collision?

Driving decisions rely on an implicit "world model." OneVL's crucial innovation is shifting the reasoning carrier from language to visual spatio-temporal structures—the future scene itself.

02

Architecture: Three Disciplined Yet Essential Designs

OneVL introduces three disciplined yet critical structural changes.

● Dual-Modal Latent Tokens: Separating "Thought" from "Understanding"

The model incorporates two types of latent variables:

◎ Visual latent tokens: Encode physical relationships and temporal changes in the scene.

◎ Language latent tokens: Express driving intentions and semantic logic.

This separation distinguishes the modeling of "how the world changes" from "what actions to take." The model no longer forces language to describe the physical world but instead reasons directly within the visual space.

The advantage is that information is not lost during language compression. The fundamental flaw in past Latent CoT methods was cramming high-dimensional spatio-temporal information into linguistic structures, leading to inevitable information loss.

● Dual-Decoder Supervision: "Clear Thinking" During Training, "Direct Answers" During Inference

OneVL introduces two decoders that operate solely during the training phase:

◎ Visual decoder: Predicts the scene 0.5s/1s into the future.

◎ Language decoder: Reconstructs a human-readable reasoning process.

This step is crucial as it imposes two constraints on the latent tokens:

◎ The model must learn to predict the future world accurately; otherwise, visual supervision penalizes it.

◎ It must also articulate its decision-making logic; otherwise, language supervision corrects it.

However, during inference, both decoders are removed.

The model is compelled to "think clearly" during training but provides direct answers during actual operation—a classic "training-inference decoupling."

● One-Step Reasoning: Eliminating Autoregression Entirely

OneVL's most radical design is its refusal to perform any token-by-token generation during reasoning. Instead, all latent tokens are pre-filled simultaneously, and the model computes in parallel, directly outputting trajectories or decisions.

Theoretically, latency can approach that of "answer-only" models, rather than the step-by-step structure of traditional CoT.

Compared to explicit CoT, speed increases by up to 2.3 times while maintaining higher accuracy. This is not merely optimization but a paradigm shift.

An often-overlooked aspect of OneVL is its three-stage training process:

◎ First, train the visual decoder separately to enable future prediction.

◎ Next, train the main model to learn basic trajectories and representations.

◎ Finally, perform joint fine-tuning to align all three components.

While this process may seem cumbersome, the results are compelling: skipping this process leads to a performance drop of over 20 points. Training trajectories, language, and vision simultaneously causes conflicts. Without stage-wise processing, the model is prone to gradient interference. OneVL represents an engineering solution to training methodology.

From a metrics perspective, OneVL outperforms explicit CoT on multiple benchmarks—a previously challenging feat. It simultaneously addresses three long-standing issues:

◎ First, CoT is too slow. Autoregressive reasoning is nearly unacceptable in automotive-grade systems, but OneVL reduces latency to the 0.24-second range, making it deployable.

◎ Second, implicit reasoning lacks robustness. Past Latent CoT methods were less accurate than explicit CoT due to improper information compression. OneVL compensates for this by introducing world model supervision.

◎ Third, explainability is lacking. End-to-end models have long been criticized as "black boxes." OneVL restores decision-making transparency through dual language-visual explanations.

These three points correspond to the three core thresholds for autonomous driving deployment: performance, real-time capability, and verifiability.

This methodology is not confined to autonomous driving. Robotics, embodied AI, and even complex decision-making systems involving "future state prediction + real-time decision-making" can adopt this approach.

OneVL has reduced latency to the 4Hz range, which is acceptable in many advanced driver-assistance scenarios.

However, several practical challenges remain before large-scale deployment:

◎ Whether computational costs remain manageable.

◎ Robustness in long-tail scenarios.

◎ Most critically, whether data scale can sufficiently support this reasoning capability.

The industry has vacillated between "whether to reason" in the past. OneVL's response is that reasoning is essential, but the approach must evolve.

Summary

What OneVL truly accomplishes is enabling models to think accurately within limited time. Can Xiaomi's autonomous driving achieve a late-mover advantage?