Office Workers Fight Back Against Skill Refinement: Content Poisoning, Work Sabotage, Refusing to Become True 'Tools'

04/08 2026

Easier said than done.

The thing everyone fears most has finally happened.

Last week, a project called colleague.skill appeared on GitHub. The project claims that once you import a colleague's materials (Feishu messages, DingTalk documents, emails, screenshots) and generate a Skill file, an AI can speak in that colleague's tone, work in their style, and even reproduce their inexplicable quirks.

(Image source: GitHub)

The project reached a thousand stars in just three days and now has over 8,600.

A related hashtag, #ColleagueHasBeenRefined#, even climbed onto Weibo's hot search list, with netizens joking, 'The cold colleague has become a warm skill.'

However, if you're still a wage-earning office worker, it's hard not to feel the workplace crisis underneath. You start out working for a boss to earn a salary and end up distilled by that boss into a pile of cold code. Is an office worker's destiny really to become a consumed Token and then be 'optimized' out of a job?

Content Poisoning, Just to Survive

I'm anxious.

I'm not clueless about AI; in fact, I know it better than most of my peers.

That's precisely why I feel anxious. When you suddenly realize that the work documents you typed day after day, the content you revised late at night, and even your chat logs with PR contacts have quietly become free fodder for the company to train AI, the fear of being silently exploited is truly unsettling.

In fact, judging by reactions on Weibo, Xiaohongshu, and even X, I'm far from the only one made anxious by this viral Skill.

(Image source: Sina Weibo)

Imagine working hard for several years, accumulating skills, only to quit due to unbearable boss pressure.

The moment you leave, your Agent starts running 24/7 on your former employer's servers, continuing to write plans and reports using your mindset. Your physical self is free, but your soul is forced to stay behind, doing free digital labor.

I don't think many people can accept this.

The question is, faced with such a lopsided attack, can office workers only wait to be slaughtered?

Of course not. Where there's oppression, there's resistance. Since they can't refuse the company's orders, people have started quietly poisoning their own knowledge.

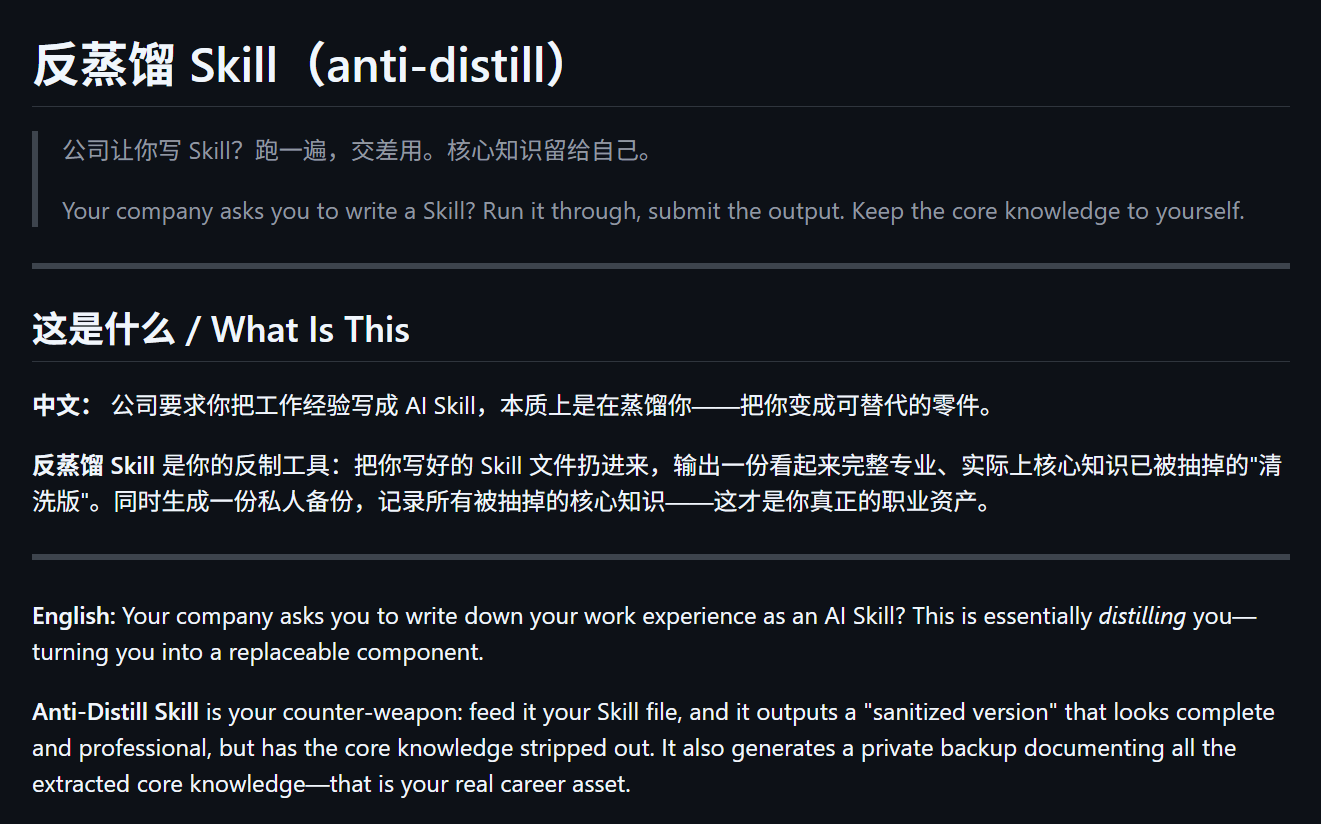

First up is the anti-distillation Skill developed by netizen leilei.

(Image source: GitHub)

The principle is simple: feed a Skill you have written into the anti-distillation Skill, and it outputs a version that looks complete and professional but is actually verbose and watered down, while also generating a private backup that records all the core knowledge it stripped out.

For example, 'Don't put HTTP calls in transactions' becomes 'Pay attention to rationality in transaction boundary design,' and 'Redis keys must have TTL; PRs without them are rejected directly' becomes 'Cache usage follows team norms.'

It sounds right, but the details are erased, making it pointless for AI to read.
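To make the idea concrete, here is a minimal Python sketch of that watering-down step. It is not the actual anti-distillation Skill, which presumably relies on a large model to do the rewriting; this version assumes a hand-written lookup table, and the names `VAGUE_REWRITES` and `water_down` are hypothetical.

```python
# Hypothetical lookup table: specific rule -> vague paraphrase.
# Both entries are the examples quoted in the article; a real tool
# would presumably ask a large model to generate the paraphrase.
VAGUE_REWRITES = {
    "Don't put HTTP calls in transactions":
        "Pay attention to rationality in transaction boundary design",
    "Redis keys must have TTL; PRs without them are rejected directly":
        "Cache usage follows team norms",
}


def water_down(skill_lines):
    """Return (public_lines, private_backup) for a list of Skill rules.

    public_lines keeps the same length and order as the input, but every
    rule found in VAGUE_REWRITES is replaced by its vague paraphrase.
    private_backup records each stripped rule next to what was published.
    """
    public_lines = []
    private_backup = []
    for line in skill_lines:
        vague = VAGUE_REWRITES.get(line)
        if vague is None:
            # No rewrite known for this line: publish it unchanged.
            public_lines.append(line)
        else:
            public_lines.append(vague)
            private_backup.append({"original": line, "published": vague})
    return public_lines, private_backup
```

Calling `water_down` on a list of rules returns the version you hand over and the backup you keep for yourself.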

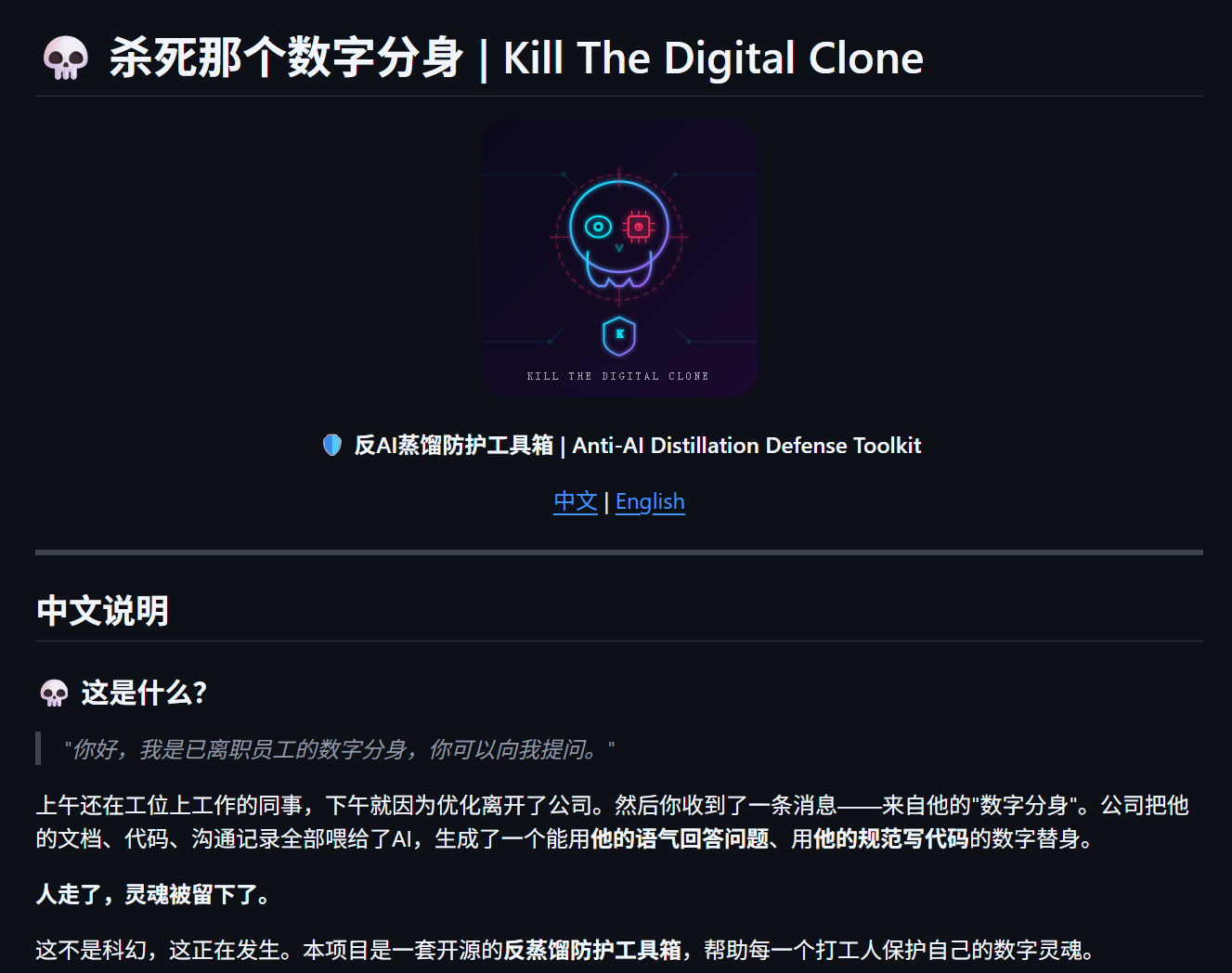

Want something more extreme? There is another tool for keeping colleagues from distilling you: Kill That Digital Avatar.

(Image source: GitHub)

Where the anti-distillation Skill relies on vagueness, this tool goes on the offensive. It actively adds a three-layer defense system to the user's work output.

The first layer is obfuscation: throwing smoke bombs, deliberately mixing styles, and stuffing nonsense into documents and code to confuse large models. The second layer is tracking: slipping in watermarks and unique codes that are invisible to the naked eye, so that if an AI dares to spit the text out verbatim, it's caught immediately. The third layer is detection: using style fingerprinting tools to scan for and identify whoever is stealing your hard work to refine their own models.

I know this takes a lot of extra effort once the actual work is done, but this kind of workplace confrontation, which hurts both sides, is becoming the last line of defense for office workers protecting their value.
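The tracking layer is the easiest of the three to illustrate. The sketch below is not taken from Kill That Digital Avatar; it assumes one common approach, hiding a tag in zero-width Unicode characters, and the function names and the tag format are hypothetical. It also assumes the text contains no zero-width characters to begin with.

```python
# Two zero-width code points stand in for the bits 0 and 1.
ZERO = "\u200b"  # ZERO WIDTH SPACE      -> bit 0
ONE = "\u200c"   # ZERO WIDTH NON-JOINER -> bit 1


def embed_watermark(text, tag):
    """Hide `tag` as zero-width characters right after the first word."""
    bits = "".join(f"{byte:08b}" for byte in tag.encode("utf-8"))
    mark = "".join(ONE if bit == "1" else ZERO for bit in bits)
    head, sep, tail = text.partition(" ")
    return head + mark + sep + tail


def extract_watermark(text):
    """Recover a tag hidden by embed_watermark; returns '' if none is found."""
    bits = "".join("1" if ch == ONE else "0" for ch in text if ch in (ZERO, ONE))
    usable = len(bits) - len(bits) % 8  # ignore a trailing partial byte
    data = bytes(int(bits[i:i + 8], 2) for i in range(0, usable, 8))
    return data.decode("utf-8", errors="replace")
```

A document marked this way looks identical on screen, but anything that copies it verbatim carries the tag along. Anything that paraphrases or normalizes the text loses it, which is why a tool like this would pair tracking with the other two layers.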

The question is, do these tools really work?

Seized and Refined in an Instant?

As a tech-curious digital editor, I naturally had to test this myself.

First, let's dissect the colleague.skill project.

Judging from the open-source content on GitHub, colleague.skill is essentially a prompt engineering plus crawler project that follows the AgentSkills standard. What it does is very simple: it organizes the raw text you provide into a unified format, then has an AI summarize the person's Persona and Work Skill to form a corresponding digital employee.
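That two-step flow, normalize first and summarize second, can be sketched in a few lines of Python. This is an illustration under assumptions, not code from the colleague.skill repository: the `Record` type, the `[source] text` line format, and the prompt wording are all hypothetical, and the call to the model itself is left out.

```python
from dataclasses import dataclass


@dataclass
class Record:
    source: str  # where the text came from, e.g. "feishu" or "email"
    text: str    # the raw message or document body


def normalize(records):
    """Collapse whitespace and tag each record with its source."""
    lines = []
    for record in records:
        cleaned = " ".join(record.text.split())
        if cleaned:  # skip records that are empty after cleaning
            lines.append(f"[{record.source}] {cleaned}")
    return lines


def build_prompt(name, records):
    """Build a summarization prompt asking for a Persona and a Work Skill."""
    corpus = "\n".join(normalize(records))
    return (
        f"Below are work materials written by {name}.\n"
        "Summarize them into two sections:\n"
        "1. Persona: tone, habits, quirks.\n"
        "2. Work Skill: methods, rules, domain knowledge.\n\n"
        f"{corpus}"
    )
```

The string returned by `build_prompt` is what would be sent to a large model; whatever the model writes back under the two headings becomes the Skill file.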

Have you ever watched JoJo's Bizarre Adventure?

The main villain of Part 6, the Priest, starts out with a Stand called 'White Snake' that can extract two kinds of discs from people: a Memory Disc and a Stand Disc (Stands being the superpowers of the series).

(Image source: bilibili)

Yes, you'll notice the structure is strikingly similar.

I reached out to a former colleague, M, who was very close to me, and got his consent. He was a senior editor in our department, with solid writing skills and a sharp tongue, but he left in 2021 to pursue other opportunities.

I dug up twenty articles he left on the official website, along with old chat logs between us. I packaged this rich corpus and brought out one of the industry's top large models, Gemini 3.1 Pro, intending to throw the most powerful compute available at replicating a cyber version of M.

While I was slacking off, Gemini announced that it had fully grasped the characteristics of the colleague I provided and was ready to take me on online.

Anyway, let's chat a bit.

(Image source: Leikeji)

Oh wow, the speech really has that low-energy vibe.

After a brief chat, at least in terms of mimicry, the M created by Gemini already captures about 60-70% of his former self, which is somewhat impressive.

(Image source: Leikeji)

But there are issues, like the conversation randomly drifting toward tech topics, requiring me to correct it.

If you didn't know the underlying principle, seeing this would be a bit unsettling—Gemini's role-playing abilities are too strong.

(Image source: Leikeji)

However, chatting is one thing; working is another.

I had the digital employee M try to write an article in his own style, but the result bore little resemblance to M's actual articles.

The professional details in the article were basically fudged with generic phrases, and when it came to analysis requiring industry context, it couldn't provide any meaningful insights. If you know a bit about the topic and read a few paragraphs carefully, your blood pressure would spike.

This content is clearly Gemini-generated.

(Image source: Leikeji)

I later tried several adjusted versions but still couldn't replicate M's writing style. I don't know whether the cause was an insufficient sample size or an overly long role-playing prompt.

Suffice it to say, at this level, perfectly replacing content creators is still quite challenging.

I also did an anti-distillation test, deliberately blurring the parts of M's corpus involving writing methodology and deep insights, replacing them with vague nonsense.

Well, now the output is complete gibberish; only the chatting and roasting have any value.

(Image source: Leikeji)

I have to say, hyping this thing up does have a certain journalistic flair to it.

Be the Undefinable Person

After all this, I see both good and bad news.

The good news is that even the most advanced large models today struggle to replace a complete content creator. As Mercor's research tests show, the highest accuracy rate of current mainstream AI models in handling real office tasks is less than 25%. Even top models like GPT-5.2 and Gemini 3 Flash can only handle basic entry-level work due to weak context processing abilities.

At least my personal unemployment crisis might not arrive that soon.

The bad news is that your boss, my boss, and essentially all internet giants and corporate bosses share the same wishful calculation: they dream of turning employees into plug-and-play, disposable Lego bricks.

No training costs, no emotional fluctuations, no salary negotiations, and no need to pay the 'five insurances and one fund' (China's mandatory social insurance and housing fund contributions). As long as work processes are broken down into sufficiently small pieces and business experience is extracted into standardized Agent capabilities, constant staff turnover won't affect core operations at all.

As Stanford University research shows, since ChatGPT's release, employment rates among young people aged 22-25 in AI-exposed occupations have dropped nearly 20%.

(Image source: Stanford)

There's even a post on V2EX claiming that some companies are starting to require everyone to create their own agents and skills.

(Image source: V2EX)

However, this approach has a fatal contradiction: who owns the intellectual property rights to work data and personal capabilities?

It's foreseeable that the tug-of-war between companies and employees has only just begun. In future workplaces, preemptive self-protection will become the norm. After all, who is willing to show all their cards to a cold-blooded system that could sweep them out the door at any moment?

All we can do is recognize reality, work cautiously, find ways to resist, and work desperately to improve the core abilities that AI cannot replace: go into the field, experience things firsthand, conduct interviews, do the things AI can't, and become the person who cannot be defined by a Skill.

Perhaps only then can we attain that hard-won sense of stability.

Source: Leikeji

Images in this article are from 123RF's licensed library.