Demis Hassabis's Latest Interview: Achieving AGI Requires Breaking Through Mere Context Window Expansion and Establishing Continuous Learning and Memory Mechanisms

![]() 04/30 2026

04/30 2026

![]() 487

487

Editor | Key Points Editor

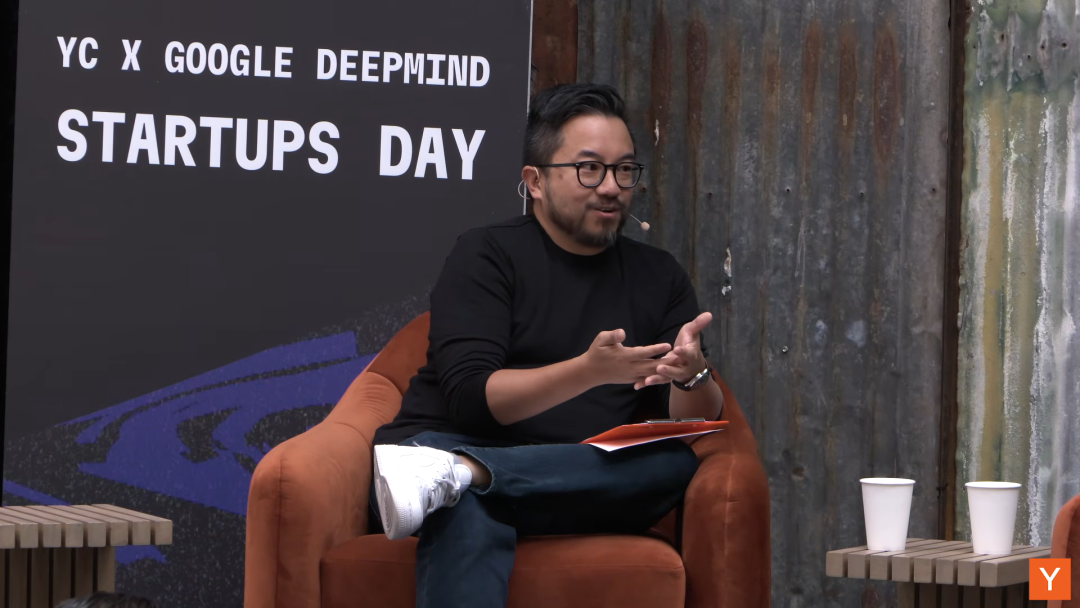

On April 29, Demis Hassabis, head of Google AI and CEO of DeepMind, participated in a YC interview, revealing his latest thoughts on AGI, large model evolution paths, AI-driven scientific discovery, and tech entrepreneurship.

Demis Hassabis's career path is exceptionally rare in the tech industry. Born in the UK, he emerged as a chess prodigy in his early years and, at 17, led the design of the bestselling video game Theme Park. Subsequently, he chose to return to academia, earning a Ph.D. in cognitive neuroscience. His research on the functioning mechanisms of brain memory and imagination became foundational in the field. In 2010, he co-founded DeepMind, setting the team's goal on a core mission: solving intelligence. The company was later acquired by Google, and Hassabis has since remained as CEO of Google DeepMind.

Over the past decade, DeepMind's laboratory has achieved multiple technological breakthroughs: AlphaGo defeated human Go world champion Lee Sedol, while AlphaFold solved the 50-year-old protein structure prediction challenge that had long puzzled the biology community, freely sharing its core findings with global scientists—a direct contributor to his Nobel Prize in Chemistry last year. Currently, Hassabis is leading the Google DeepMind team in developing the Gemini model, continuing to advance his goal of artificial general intelligence (AGI) established since his youth.

We've distilled the core information from this interview; below are the key points:

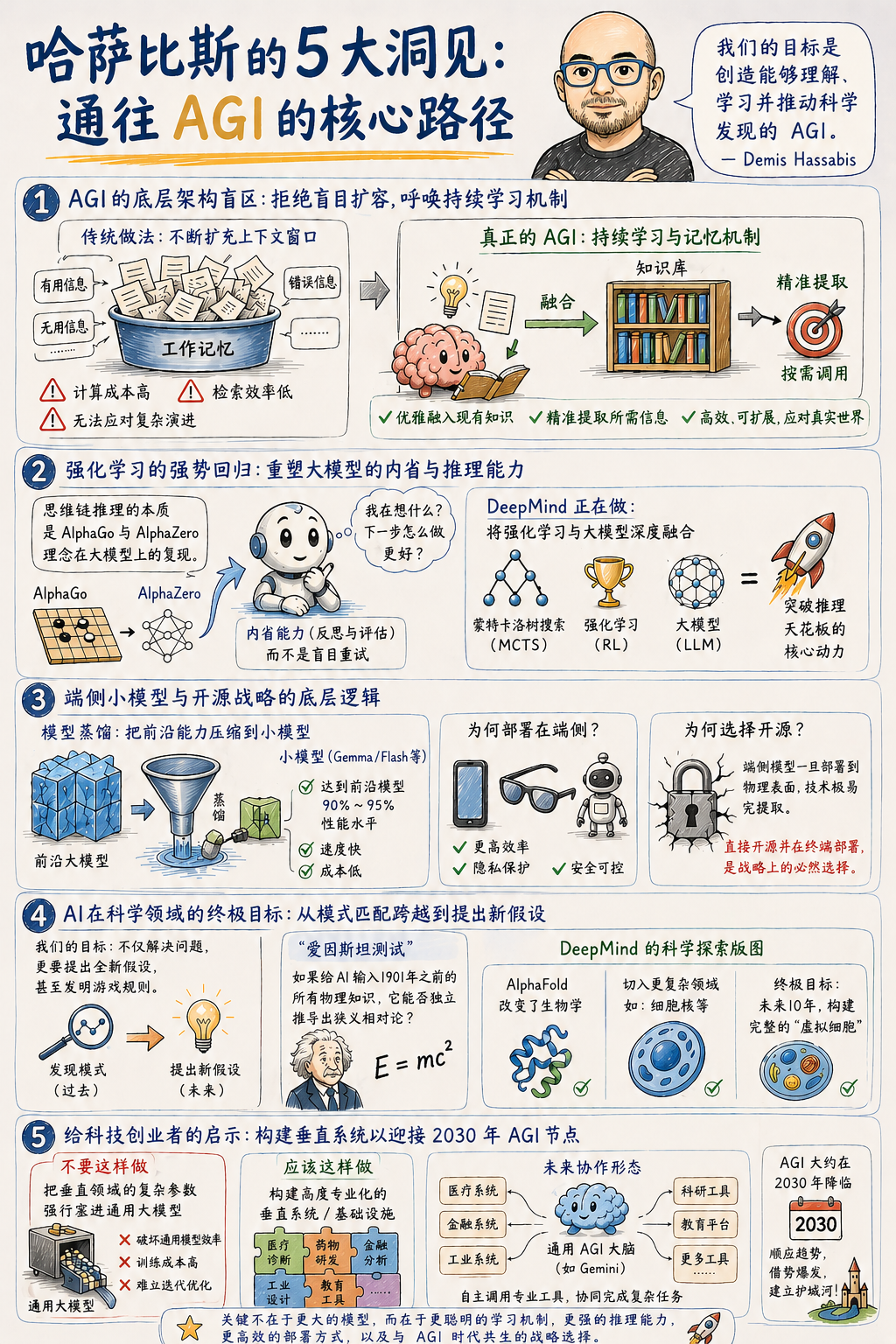

1. Achieving AGI Requires Breaking Through Mere Context Window Expansion and Establishing Continuous Learning and Memory Mechanisms

The industry currently tends to continuously expand context windows, but cramming all useful, useless, or even erroneous information into working memory is a computationally expensive brute-force approach. Even with a context window of tens of millions of tokens, the cost of retrieving specific information remains impractically high. A true AGI system needs continuous learning capabilities, elegantly integrating new knowledge into its existing knowledge base and precisely recalling it in appropriate contexts, rather than reading lengthy historical records from scratch each time.

2. Reinforcement Learning Will Reshape Large Models' Introspection and Reasoning Abilities

Reinforcement learning has been severely underestimated on the path toward higher-dimensional intelligence. The chain-of-thought reasoning demonstrated by current cutting-edge large models is essentially a replication of AlphaGo and AlphaZero's concepts on large-scale foundational models. Current large models often lack introspection during reasoning, blindly retrying after selecting incorrect answers. DeepMind is reintroducing classic algorithms like Monte Carlo Tree Search, deeply integrating reinforcement learning with large models to break through the current ceiling of model reasoning capabilities.

3. On-Device Small Models and Open-Source Strategies Are Inevitable Choices for Terminal Deployment

Through model distillation techniques, models with extremely small parameter counts can already achieve 90% to 95% of the performance of cutting-edge large models, with significant advantages in speed and cost. The future mainstream form of computing will involve cloud-based large models handling complex orchestration, while on-device models running on smartphones, smart glasses, or home robots process local privacy data. Since on-device models' technology is easily extractable once deployed on physical surfaces, completely opening them up is a strategic necessity.

4. AI's Goal in Scientific Exploration Is to Transcend Pattern Matching and Propose Entirely New Hypotheses

Scientific discovery cannot merely Stay in (stay at) interpolating existing data; AI must not only perfectly solve existing problems but also possess the ability to invent new rules. DeepMind is advancing from the "cell nucleus" perspective, aiming to construct a complete "virtual cell" within the next decade. The standard for measuring AI's scientific discovery capabilities lies in whether it can pass the "Einstein Test": inputting only physical knowledge before 1901, transcending pattern matching, and independently deriving the theory of special relativity.

5. Tech Entrepreneurs Should Build Highly Specialized Vertical Systems to Collaborate with AGI

The growth cycle of tech companies typically spans a decade, meaning AGI will inevitably be achieved around the midpoint of the current entrepreneurial cycle (around 2030). Faced with this deterministic variable, entrepreneurs should not attempt to forcibly stuff complex parameters from vertical domains into general-purpose large models, as this would undermine the efficiency and other capabilities of the general model. A reasonable path is to build highly specialized independent tool systems or infrastructure, allowing future general-purpose AGI to autonomously invoke these vertical systems in a collaborative relationship.

Below is the transcript of Demis Hassabis's interview:

1. What's Missing Before Achieving AGI?

Garry Tan: Demis Hassabis has one of the most extraordinary careers in the tech industry. As a child prodigy in chess, he designed his first hit video game, Theme Park, at 17. He then returned to school, earning a Ph.D. in cognitive neuroscience and publishing foundational research on the functioning mechanisms of brain memory and imagination. In 2010, he co-founded DeepMind with a single mission: solving intelligence. I believe they've achieved that.

Since then, his lab has continuously accomplished achievements that most believed would take decades. AlphaGo defeated the Go world champion, AlphaFold solved the major protein structure prediction challenge that had puzzled biology for 50 years, freely providing its findings to global scientists—a feat that earned him last year's Nobel Prize in Chemistry. Today, Demis leads the Google DeepMind team in building Gemini, striving toward the artificial general intelligence (AGI) goal he set in his youth. Let's welcome Demis.

You've thought about AGI longer than almost anyone. Examining current paradigms like large-scale pre-training, RLHF, and chain-of-thought (CoT) reasoning, how much do you believe we've already grasped in AGI's final architecture? What's fundamentally missing?

Demis Hassabis: First, thanks to Garry for that wonderful introduction. I'm delighted to be here—thank you all for the welcome. This venue is fantastic; I'll have to come more often. Working in this field is truly inspiring. To your question, I'm highly confident that the technological components you mentioned will all be part of AGI's final architecture. They've already made significant progress, and we've proven many of their functionalities. I don't believe we'll discover in a few years that these technologies are dead ends—that wouldn't make sense.

However, beyond what's already known to work, one or two critical technologies might still be missing. For instance, aspects of continuous learning, long-term reasoning, and memory systems remain unresolved, including how to make systems perform more consistently across various domains. I believe solving these issues is essential for achieving AGI.

Existing technologies might directly scale to AGI through incremental innovations, but one or two major theoretical breakthroughs could also be necessary. Even if mysteries remain, I don't think there are more than one or two. On this point, I consider both scenarios equally probable—50/50. At Google DeepMind, we're currently pursuing both paths simultaneously.

Garry Tan: When dealing with a series of agent systems, what amazes me most is how they largely reuse the same weights. Thus, the concept of continual learning is fascinating, as currently, we're somewhat cobbling them together with duct tape—like mechanisms such as dream cycles occurring at night.

Demis Hassabis: Dream cycles are indeed incredibly cool. We used to combine episodic memory, thinking about the issue through consolidation mechanisms. Actually, my Ph.D. research focused on how the hippocampus operates and integrates memories—how to elegantly incorporate new knowledge into existing knowledge bases. The brain excels at this, primarily accomplishing it during sleep, especially in rapid eye movement (REM) stages, where it replays important snippets to learn from them.

In fact, one way our earliest Atari game AI program, DQN, mastered games was through experience replay. We borrowed this from neuroscience, training models by repeatedly replaying successful trajectories. That was back in 2013—now, looking back, it was practically the Dark Ages of AI, but it was a crucial step.

I agree with you; now, we're somewhat patching things up everywhere, like crudely stuffing everything into context windows. But this seems unsatisfactory. Although we study machine brains rather than biological ones, you could have perfect context windows or memory scales in the millions or even tens of millions. However, retrieving and extracting the correct content still incurs costs, actually tied to the specific decisions you must make at present. This issue is non-trivial—even if you could store all data, the recall cost would be extremely high. I believe there's tremendous room for innovation in areas like memory.

Garry Tan: Indeed. What's crazy is that context windows with millions of tokens currently seem vast enough to support many operations.

Demis Hassabis: For most application scenarios, it is indeed large enough. If you think carefully, context windows are somewhat equivalent to working memory in humans. We can only remember a few digits at a time—an average of seven. Now, AI has context windows in the millions or even tens of millions. The problem is we're trying to cram everything in, including unimportant or erroneous information.

Currently, this brute-force approach seems unreasonable. The next challenge is that if you attempt to process real-time video, simply naively recording all tokens means a million tokens aren't actually that much—roughly enough for 20 minutes of video. So if you want a system that truly understands long-term context, knowing what happened in your life over the past month or two, you'd need far more capacity.

Garry Tan: Historically, DeepMind has favored reinforcement learning and search technologies, such as AlphaGo, AlphaZero, and MuZero. How much has this philosophy actually been incorporated into your current Gemini construction? Is reinforcement learning (RL) still underestimated today?

Demis Hassabis: Yes, I believe reinforcement learning is highly likely underestimated. Technological development always ebbs and flows. Since DeepMind's inception, we've been studying agents—that was our explicitly stated goal. All our Atari game research and AlphaGo were essentially agent systems.

By agent systems, we mean systems capable of autonomously achieving goals, making proactive decisions, and formulating plans. We initially undertook this work in gaming to make it tractable, then gradually tackled increasingly complex tasks. After AlphaGo, we developed AlphaStar for StarCraft. Essentially, we've conquered all games on the market at the time.

The natural next question was whether these models could be generalized into world models or language models, rather than being confined to simple or complex game models. That's been our focus over the past few years. Actually, you can see that much of our work today, including all cutting-edge models with thinking patterns and chain-of-thought reasoning, represents a return to AlphaGo's groundbreaking characteristics in some way.

I believe much of our early work remains highly relevant today. We're revisiting old ideas and practicing them in a more generalized manner at today's large model scales, including methods like Monte Carlo Tree Search, further enhancing reinforcement learning on that basis. Whether from AlphaGo or AlphaZero, these concepts are highly instructive for the current development stage of foundational models. I believe these ideas point toward the major breakthroughs we'll see in the coming years.

2. Why Are Small Models Becoming So Powerful?

Garry Tan: I have another question. Today, we need increasingly larger models to enhance intelligence, but we're also seeing the application of model distillation techniques, enabling smaller models to run much faster. You have incredible Flash models and find they can achieve 95% of the performance of cutting-edge (Frontier) models at just one-tenth the cost. Is that accurate?

Demis Hassabis: I believe this is one of our core strengths. Undoubtedly, you must build the most massive models to possess cutting-edge capabilities. However, our greatest strength has always been our ability to very quickly distill these cutting-edge capabilities and encapsulate them into smaller models.

We invented this distillation process early on, and thanks to scientists like Jeff and Oriol, we remain global top experts in this field. Simultaneously, we have enormous internal demand to implement this technology, as we must serve the world's largest AI user interface.

Besides search engines with AI Overviews and the Gemini app, more and more Google products, like Google Maps and YouTube, now incorporate Gemini-related technologies. This reaches billions of users—we have over a dozen products with user bases exceeding one billion—so their inference services must be extremely fast, efficient, inexpensive, and have ultra-low latency. This gives us tremendous motivation to develop Flash or even smaller Flashlight models, making them supremely efficient and hoping they'll ultimately perfectly adapt to the various workloads everyone handles daily.

Garry Tan: I'm curious about how intelligent these smaller models can actually become. For instance, is there a theoretical limit to the model distillation process? Could a model with 50B or 400B parameters eventually become as intelligent as today's cutting-edge large models?

Demis Hassabis: I don't think we've hit any kind of limit yet, or at least no one in the industry currently knows if we've reached some sort of information-carrying limit. Maybe there will be an insurmountable information density bottleneck at some point in the future. But based on current assumptions, when our Pro models or cutting-edge large models are released, six months to a year later, you'll see equivalent capabilities in those very tiny edge models. You can also see these advantages in our Gemma models—hopefully you'll like these four Gemma models. Given their parameter sizes, their capabilities are truly astonishing. This again heavily relies on model distillation techniques and innovative ideas on how to make extremely small models highly efficient. So I currently don't see any theoretical limits; we're still quite far from that ceiling.

Garry Tan: That's amazing, truly remarkable. One of the most incredible phenomena we're observing now is that engineers can accomplish 500 to 1,000 times more work than they could six months ago. I'm referring to many people in this room—their current work output might be a thousand times what it was in the past. As Steve Yegge said, this is equivalent to the combined workload of a Google engineer in the 2000s. It's extremely exciting.

Demis Hassabis: I think small models have many uses. Cost reduction is obviously one, but speed is even more critical. Whether it's programming or other tasks, this speed allows you to iterate much faster, especially when you're collaborating deeply with the system. We desperately need these extremely fast systems. Maybe they don't fully reach the level of cutting-edge models—like you said, only 95% or 90% performance—but that's good enough. When faced with agile iteration speeds, the benefits far outweigh that 10% performance gap.

I think another important aspect is running these models on the edge. This is primarily for efficiency, privacy, and security considerations. Given that these systems might run on devices processing extremely private information, or in robotics—for example, home robots require highly efficient and powerful local models to coordinate operations. As larger cutting-edge models emerge in the cloud, devices only need to delegate tasks to the cloud under specific circumstances. All audio and video streams can remain locally processed. I think this would be an ideal end state.

3. Continuous Learning and the Future of Agents

Garry Tan: Regarding context and memory capabilities, current models are stateless. How should developers guide them when using continuous learning models?

Demis Hassabis: That's a very interesting question. The current lack of continuous learning capabilities is one of the factors hindering agents from executing complete tasks. While they're very useful in certain aspects of tasks and can piece together cool things, they can't adapt to specific contexts. This is the missing key piece for them to achieve autonomous task completion. They need the ability to learn within specific contexts. We must overcome this to achieve true artificial general intelligence.

Garry Tan: How are we progressing with reasoning currently? Models can now perform impressive chain-of-thought reasoning, but they still fail at basic problems that even smart undergraduates wouldn't get wrong. What specific changes are needed, and what kind of progress do you hope to see in reasoning?

Demis Hassabis: There's still significant room for innovation in thinking paradigms. What we're doing currently is quite simple and heavily reliant on brute force. There's enormous potential in monitoring chains of thought and perhaps intervening mid-process.

I often feel that our systems, and those of our competitors, overthink and seem to get stuck in loops. I sometimes enjoy playing chess with Gemini. Interestingly, all leading foundational models perform poorly at games. Observing these chains of thought is fascinating because they're easily understandable.

I can quickly tell if the model has gone off track, and its thought process is highly verifiable. Sometimes while considering a move, it realizes it's a big mistake but, lacking a better alternative, tends to revert to it and ultimately executes it. This shouldn't happen in a rigorous reasoning system. Gaps still exist, but maybe only one or two adjustments are needed to fix these issues. These gaps lead to inconsistent intelligence. On one hand, it can solve extremely difficult International Mathematical Olympiad gold-medal problems, but on the other, it makes basic elementary math and reasoning errors if the question is phrased slightly differently. This indicates the model still lacks reflective capabilities about its own thought processes.

Garry Tan: Agents are extremely hot right now. While some believe they're overhyped, I personally think they're just getting started. What conclusions has DeepMind's internal research reached about the current capabilities of agents? How does the reality compare to the external hype?

Demis Hassabis: I agree with you—agents are just getting started. Having a system that can proactively solve problems is essential for achieving artificial general intelligence. This has always been clear to us; agents are the path to that goal. Everyone is gradually getting used to integrating them into workflows and maximizing their effectiveness, not just treating them as nice-to-haves but truly starting to use them for fundamental tasks.

Currently, we're all in the experimental phase. Only in recent months has the technological level truly reached a point where it can create substantial value. It's no longer a toy or a pretty demo but something that genuinely enhances time and efficiency. I see many people running dozens of agents for dozens of hours, but I'm not sure if I've seen outputs that justify such investments yet. However, that day will inevitably come.

We haven't seen a AAA game generated by agents top the app store charts yet. Many people have created great small demo programs—I can now prototype a theme park in half an hour, whereas when I was 17, that would have taken six months. It's shocking. But development still requires human craftsmanship, soul, and taste. We must ensure that whatever we build incorporates these qualities.

We haven't reached perfection yet. After all, we haven't seen a child create a blockbuster game selling tens of millions of copies. Given the efforts invested, this should be achievable, so something is still missing, perhaps related to processes or tools. I expect to see significant results within the next six months to a year once the technology delivers its full value.

Garry Tan: I don't think we'll see full autonomy first.

Demis Hassabis: We'll probably first see humans using tools to boost their productivity a thousandfold, with companies in gaming and other fields using these tools to develop bestselling apps or games, before more processes become automated.

Agents aren't quite at that level yet. If we discuss creativity, consider AlphaGo's Move 37 in the second game. We launched AlphaGo a decade ago, but I've been waiting for a scientific breakthrough moment like AlphaFold.

Simply coming up with Move 37, while cool and useful, is not the same as inventing Go. I want a system that can invent Go. If you give it a highly generalized description asking to invent a game that takes five minutes to learn the rules but a lifetime to master and is aesthetically beautiful, the system should return Go. Obviously, today's systems can't do that yet; I think something is still missing.

Perhaps nothing is missing, and it's just a matter of how we use these systems. With enough creative people using them, it might be achievable. That could indeed be the answer. As long as people dedicate themselves to mastering these tools, reaching a symbiotic level with them, and imbuing projects with soul and drive, when combined with true deep creativity, even more incredible things could become possible.

4. Open Models, Gemma, and Local AI

Garry Tan: Shifting gears to open source and open weights. The recently released Gemma is powerful and can run locally. What does this mean for the future? Will AI transition from primarily running in the cloud to becoming a tool truly in users' hands, and will this change the developer community for models?

Demis Hassabis: We're strong supporters of open source and open science. As mentioned earlier with AlphaFold, we made its results and all scientific work freely available, and we still publish papers in top journals today. We're committed to building world-leading models for their parameter scale, and Gemma was created for this purpose. Gemma reached 40 million downloads in just two and a half weeks, and we hope more people will develop based on it.

Given talent and computational resource constraints, simultaneously building two highest-specification cutting-edge models with different attributes is extremely difficult. Therefore, we decided to open-source the edge models applied to Android devices, smart glasses, and robotics. Once models are deployed on end devices, they become vulnerable to attacks, so we might as well make them fully open. We uniformly planned this at the Nano size level, which also strategically benefits us.

Garry Tan: Earlier, I demonstrated a Samantha-like version of Gemini from the movie *Her*. The successful demo felt incredible. Gemini is natively multimodal, and its contextual depth, tool usage, and voice-direct-input-to-model experience are unparalleled—undoubtedly the best currently available.

Demis Hassabis: The fact that the Gemini series was designed as multimodal from the beginning is still somewhat underestimated. Although this increased R&D difficulty by no longer focusing solely on text, we firmly believed we would benefit in the long run. We're witnessing this now.

When building world models like Genie based on Gemini, this is crucial for robotics and other fields. Robot foundational models will be built on multimodality, and with Gemini's strong multimodal performance, we have a competitive advantage and are increasingly applying it to projects like Waymo. Digital assistants following you into the real world and running on devices like phones or glasses need to understand the physical world, intuitive physics, and their physical environment. This is exactly what our systems excel at. We'll continue investing in this area to maintain our lead.

5. From AlphaFold to Virtual Cells

Garry Tan: With reasoning costs dropping rapidly, what will become possible when reasoning is nearly free, and how will this change teams' optimization goals?

Demis Hassabis: I'm not sure if reasoning costs can truly drop to nearly zero. It's somewhat like Jevons' paradox—ultimately, everyone will use millions of agents working collaboratively or have agents think in multiple directions and integrate, all consuming available reasoning resources. If breakthroughs occur in nuclear fusion, superconductors, or battery technology, energy costs could indeed decrease or even approach zero, but the physical bottlenecks of chip manufacturing will still exist. At least for the next few decades, resource quotas will remain, so computational power must be used efficiently.

Garry Tan: Fortunately, smaller models are becoming increasingly intelligent—that's fantastic. There are many founders in the audience from the biology and biotechnology sectors—I can see several. AlphaFold 3 lets us go beyond proteins to a broader spectrum of biomolecules. How far are we from simulating complete cellular systems, or is this inherently a much harder problem in another dimension?

Demis Hassabis: Isomorphic Labs, which we spun off from DeepMind after completing AlphaFold 2, is progressing very well. It's not just trying to build models like AlphaFold that handle single steps in drug development; we're also advancing related biochemical and chemical research to design compounds with the correct properties. We'll soon make some major announcements in this field.

Our ultimate goal is to build a complete virtual cell. I've spoken about this complete operational simulation in many scientific lectures: you could perturb a cell, and its output would be close enough to experimental data to be practically useful. You could skip massive search steps, generate large amounts of synthetic data to train other models, and ultimately predict real cells. I think we're about a decade away from achieving a complete virtual cell.

The DeepMind science team has already started this work. We began with the nucleus because it's relatively self-contained. The trick to solving such problems is whether you can cut into a corner of complexity. While the ultimate goal is to simulate the human body, before that, you need to find the correct level of detail simulation and identify a slice from which you can extract enough independent content. You can model and approximate it, integrate inputs and outputs into this independent system, and then focus solely on that part. From this perspective, the nucleus is a fascinating entry point.

Another issue is the current lack of data. I've spoken with several top electron microscopy scientists and other imaging experts. If we could image living cells without killing them, this would obviously be revolutionary because it would transform it into a visual problem we're skilled at solving. But I currently don't know of any technology that can simultaneously provide nanoscale resolution, be non-destructive, and observe all interactions in living, dynamic cells. While extremely detailed static images can now be taken, this isn't sufficient to transform it into a complex visual problem.

There are two approaches to solving this: a hardware- and data-driven solution, or a modeling-oriented one—building better learning simulators for these dynamic systems.

6. AI as the Ultimate Tool for Scientific Research

Garry Tan: You've been following various scientific fields beyond biology, including materials science, drug development, climate modeling, and mathematics. If you were to rank the scientific fields that will undergo the most dramatic transformations in the next five years, which would be on your list?

Demis Hassabis: These fields are all extremely exciting. The reason I entered the AI field and have dedicated over 30 years of my career to it is to use AI as the ultimate tool. I've always believed AI would be the ultimate tool for scientific research, exploring the environment, advancing scientific understanding and discovery, and deepening our comprehension of medicine and the universe around us.

Our initial mission was divided into two steps: The first step was to solve intelligence, i.e., building AGI; the second step was to use it to solve all other problems.

People often questioned back then whether we really intended to solve all other problems, and we truly meant it. Specifically, I am referring to addressing the root-node problems in science—those areas that can unlock entirely new branches of science or avenues of exploration—and AlphaFold is a prime example of how we aim to achieve that goal.

Currently, there are over 3 million researchers globally, and nearly every biology researcher in the world is using AlphaFold. Friends who are executives in the pharmaceutical industry have told me that almost every drug developed in the future will use AlphaFold at some stage of its research and development. This is exactly the kind of impact we hope to achieve through AI, and it's something we are very proud of—but I believe this is just the beginning.

I honestly can't think of any field in science or engineering where AI can't provide assistance. The fields you mentioned, I believe, are currently at a stage similar to AlphaFold 1. We've achieved very promising results, but we haven't fully solved the major challenges in those fields yet. Over the next few years, from materials science to mathematics, there will be a lot to explore in all these areas.

Garry Tan: In terms of science, this feels Promethean in its groundbreaking nature.

Demis Hassabis: It truly does. But at the same time, as the allegory of Prometheus warns, we must be cautious about how we use these tools, where we apply them, and how we prevent misuse.

Garry Tan: Many people here are trying to start companies that apply AI to science. In your view, what distinguishes a startup that truly pushes the frontier from those that simply wrap an API around a foundational model and call it 'AI for Science'?

Demis Hassabis: This is one of the things I advise everyone to focus on. If you're sitting in Y Combinator and observing everything, obviously you have to keep up with the trends in AI development. But I do believe there is enormous space to combine the direction of AI with other deep-tech fields.

This golden combination is extremely valuable, whether in materials science, medicine, or other highly complex scientific domains. Especially in fields involving the atomic world that require interdisciplinary teams, there are no shortcuts in the foreseeable future. Starting a company in these areas is quite safe—you don't have to worry about being completely swept away by just one update to a foundational model.

I've always personally loved deep tech and believed that anything truly lasting and valuable isn't easy. AI was the same way when we first started in 2010. Back then, both investors and academia thought AI wouldn't work—that it was just a niche topic tried in the '90s and proven to fail. But if you have strong conviction in your ideas and understand what's different this time, or what unique advantages you have based on your background—like being a machine learning expert with expertise in another application area, or having a founding team with that expertise—then you can have a huge impact and create tremendous value.

Garry Tan: That's a very important message. It's easy to forget, and once you succeed, everyone takes it for granted—but before you succeed, people often oppose you.

Demis Hassabis: Exactly, no one believed in it back then. That's why I think you must commit to things you genuinely love. For me, I would have dedicated myself to AI research no matter what happened. I knew from a young age that this was the most impactful thing I could think of, and it turns out that's true. Plus, it's the most interesting research direction I can imagine. So even if today our technology isn't fully there yet, and we're still in some small garage—or if we retreat back to academia—I would definitely continue researching AI in some way.

7. The Breakthrough Pattern of AlphaFold

Garry Tan: AlphaFold is like a sudden breakthrough you pursued and ultimately achieved. What do you think made the scientific field ripe for an AlphaFold-style breakthrough? Is there a pattern or a specific objective function?

Demis Hassabis: I should write this down properly when I have free time. But the lesson I've learned from all the Alpha projects, like AlphaGo and AlphaFold, is: If a problem can be framed as a large-scale combinatorial search problem, then our existing techniques can make a huge difference. To some extent, the larger the search space, the better—making it impossible for any brute-force or special-case algorithm to solve it. Whether it's Go moves or different conformations of proteins, their numbers far exceed the total number of atoms in the universe.

Second, you need a clear objective function—like minimizing free energy in proteins or winning a game of Go. You need to define this objective function clearly to execute the algorithm.

Finally, you need sufficient data—or a simulator that can generate a large amount of in-distribution synthetic data for you. As long as these conditions are met, with today's methods, you can get very far in solving the problem and find the needle-in-a-haystack solution you need. The first thing that comes to mind is drug discovery. The laws of physics allow for the existence of a compound that could cure a specific disease with no side effects—the only question is how to find it efficiently. With AlphaGo, we proved for the first time that these systems can discover perfect targets in needle-in-a-haystack searches.

8. Can AI Achieve True Scientific Discovery?

Garry Tan: Let's talk about something at a meta-level. We've discussed humans using these methods to create AlphaFold, but at this meta-level, humans can also use AI to explore possible hypothesis spaces. How far are we from an AI system capable of true scientific reasoning, not just pattern-matching on data?

Demis Hassabis: I think we're very close. All the cutting-edge labs are experimenting in this area, and we're developing general-purpose systems like Co-Scientist, as well as algorithms like AlphaEvolve that can go deeper than foundational large models.

While I haven't seen any truly groundbreaking scientific discoveries yet, I think it's coming soon. This might relate to what we discussed earlier about creativity and how to push beyond the boundaries of the known. At that point, AI won't just be pattern-matching or extrapolating—because there will be no existing patterns to match—it will need to reason by analogy. Currently, these systems might not have that capability, or we haven't found the right way to elicit it yet.

I often test it this way in science: Can it propose a truly interesting hypothesis, not just solve a problem? We're talking about solving something as profound as the Riemann Hypothesis or a Millennium Prize Problem—the kind of deep problem that would take a top mathematician a lifetime to study. That's a higher level of difficulty, and we don't yet know how to achieve it—but I don't think it's mysterious; these systems will eventually be able to do it.

Maybe we're missing one or two pieces of the puzzle. I sometimes call it my Einstein test. Could you teach a system all the physics knowledge before 1901 and see if it could come up with special relativity, like Einstein did in his miracle year of 1905? We should probably keep running that test. Once we achieve that, we'll be close to the stage where these systems can invent truly novel, unprecedented things.

9. What to Build Before AGI Arrives

Garry Tan: My last question is for the senior technologists here who want to dedicate themselves to similar long-term tech projects. You've led one of the world's largest AI projects and have been a pioneer in this field for years. I think everyone in this room would genuinely thank you and your colleagues at DeepMind. What are some things you know now about building at the frontier that you wish you had known at the beginning?

Demis Hassabis: I think we've covered some of this earlier. Tackling deep, hard problems isn't necessarily harder than solving shallow ones—they're just different kinds of challenges. Given that life is short and our energy and time are limited, you might as well dedicate your life to things that can truly make an impact. If you don't push, those impacts won't happen.

Another thing is that I'm very passionate about interdisciplinary research. I think in the coming years, cross-disciplinary combinations will become increasingly common, and with the help of AI, finding connections between these fields will become much easier.

Another point I'd make is that if you embark on a deep-tech journey, it often takes a decade or more. So you must now consider that AGI might arrive halfway through that journey. My prediction for when AGI will be achieved is around 2030. What does that mean if AGI arrives halfway through? It's not necessarily a bad thing, but you must factor it in. How would an AGI system leverage your technology? What would it use it for? This brings us back to the relationship between specialized tools and general-purpose AI systems we discussed earlier.

I can foresee that general-purpose systems like Gemini or Claude will use specialized systems like AlphaFold as tools. I don't think we'll force all protein-folding knowledge into a single general-purpose brain—that would lead to too many regression problems. If you cram all that expertise in, it would definitely negatively impact its other capabilities, like language. So a better approach is to have very good general-purpose tool-calling models that call on those specific tools. Those specialized tools will exist in a separate system. You need to take this seriously, try to imagine what that world would look like, and build something valuable along the way.