Observing the Value of DeepSeek Through Doubao's Pricing Strategy

05/11 2026

The biggest shock this May Day holiday came on its last day, when Doubao announced it would begin charging subscription fees.

This announcement marks the end of an era in which domestic large AI models relied solely on free access to gain scale.

My further view is that this trend is inevitable and irreversible, and that it will quickly spread across the entire industry. The era in which everyone pays for AI has arrived.

So, what does this trend ultimately signify? And how does it relate to the core value of the recently released DeepSeek V4?

—Introduction

01

What Do Doubao's Subscription Fees Signify?

Many people are undoubtedly unhappy about Doubao's subscription fees. They see it as another classic maneuver: attract users with free access, then monetize them once their usage habits are formed.

Such tactics were familiar in the classic internet era.

This view is not entirely unfounded. I am not defending Doubao here, but I also do not think it deserves criticism.

The underlying logic is that Doubao is unwilling to keep upholding the free model, and no longer needs to.

Note that unwillingness and inability are two different concepts.

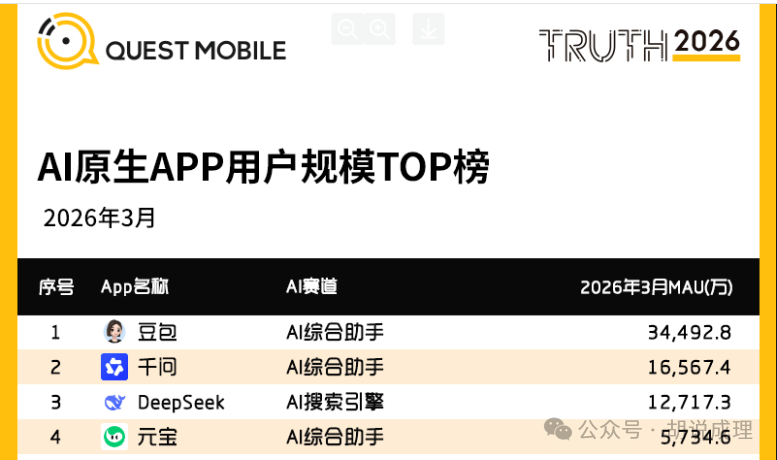

As of the first quarter of 2026, Doubao boasts 345 million monthly active users, according to third-party data.

This figure is impressive. The stark reality behind it, however, is that according to March 2026 statistics, Doubao's average daily Token usage exceeded 120 trillion, more than 1,000 times its level at launch in May 2024.

By some estimates, if Doubao continued to offer its services for free, the annual cost would range from 3.7 to 4.5 billion yuan. Note that this estimate is based on March usage data, and it is now May.
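
As a rough sanity check, the usage and cost figures above can be combined into an implied cost per million Tokens. This is a back-of-envelope sketch under a stated assumption: it spreads the whole estimated annual cost evenly over a year of usage at the March 2026 rate, even though the estimate also covers R&D. Because usage exceeded 120 trillion Tokens a day and the cost includes more than serving, the result is best read as an upper bound on the per-Token serving cost.

```python
# Back-of-envelope: the per-Token cost implied by the article's figures.
# Assumption: the full annual cost estimate is spread evenly over a year of
# usage at the March 2026 rate (the estimate also includes R&D, so this
# overstates the pure serving cost).
DAILY_TOKENS = 120e12                  # "exceeded 120 trillion" Tokens per day
ANNUAL_COST_YUAN = (3.7e9, 4.5e9)      # estimated annual cost range, in yuan


def implied_cost_per_million(daily_tokens: float, annual_cost: float) -> float:
    """Yuan per million Tokens if annual_cost covers 365 days of usage."""
    annual_millions = daily_tokens * 365 / 1e6
    return annual_cost / annual_millions


low, high = (implied_cost_per_million(DAILY_TOKENS, c) for c in ANNUAL_COST_YUAN)
print(f"about {low:.3f} to {high:.3f} yuan per million Tokens")
```

Under these assumptions the sketch prints about 0.084 to 0.103 yuan per million Tokens.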

You might ask: relative to ByteDance's 2025 net profit of roughly 65 billion yuan, a cost of three to four billion yuan is negligible. ByteDance can surely afford it, so why charge?

The issue lies in how Doubao calculates its costs:

The first cost consideration is that three to four billion yuan is just the surface cost.

Strictly speaking, this figure covers only the cost of iterating on the model (R&D) and of serving user consumption. Moreover, it is merely a static snapshot from March of this year.

Two clear trends are emerging. First, users are engaging with AI ever more deeply, and many are integrating it into their workflows and daily lives. Second, the time each user spends with AI keeps rising.

Add the escalating pressure to compete and iterate in the large-model sector, and R&D investment is only set to increase.

It is therefore easy to see how the "three to four billion yuan" figure could surge past 10 billion yuan within six months.
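
How fast would the cost have to grow for that to happen? A minimal sketch, assuming smooth compound monthly growth from the 3.7 to 4.5 billion yuan estimate to 10 billion yuan over six months. The compounding model is an illustrative assumption, not a forecast.

```python
def required_monthly_growth(start: float, target: float, months: int) -> float:
    """Compound monthly growth rate that turns `start` into `target` in `months`."""
    return (target / start) ** (1 / months) - 1


# Start points are the article's annual-cost estimate range (yuan); the
# 10-billion-yuan target and six-month horizon come from the text.
for start in (3.7e9, 4.5e9):
    rate = required_monthly_growth(start, 10e9, 6)
    print(f"from {start / 1e9:.1f} billion yuan: {rate:.1%} per month")
```

This prints roughly 18% and 14% per month. For comparison, the same function applied to the 1,000-fold Token growth between May 2024 and March 2026 (22 months) gives roughly 37% per month, although cost need not track Token volume one-for-one.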

Will services remain free then?

The second cost consideration involves the hidden expenses.

Everyone acknowledges that Doubao responds quickly and produces smooth output, covering everyday needs well. However, the infrastructure ByteDance has built to support these capabilities is extremely costly. According to public data, ByteDance's 2025 capital expenditure was approximately 150 billion yuan, most of it allocated to AI infrastructure. The 2026 budget is even more aggressive: capital expenditure is projected at around 160 billion yuan, including 85 billion yuan earmarked specifically for AI chip procurement.

This is the fundamental reason why the free model is exerting increasing pressure on ByteDance.

The third cost consideration is that ByteDance is not the first to adopt this approach.

Overseas models such as ChatGPT, Gemini, and Claude have long offered subscriptions. Leading domestic models like Kimi (49 yuan/month) and Zhipu (49 yuan/month) have also started charging.

Does ByteDance need to be the outlier that keeps offering free service? Not necessarily. Moreover, if it decides to charge, it will undoubtedly succeed. So why not?

Incidentally, I predict that the leading large-model players, especially giants such as Qianwen, Baidu, and Yuanbao, will gradually adopt subscription models as long as they stay in the game and serve the public for free. This is not an exaggeration.

02

What Is the Connection Between Doubao's Subscription Fees and DeepSeek?

How do Doubao's subscription fees relate to DeepSeek V4?

Simply put, DeepSeek V4 represents an approach that, while unable to single-handedly halt the arrival of the AI subscription era, can reduce or delay the "pain" of paying for AI.

A popular question is why, despite its unremarkable model performance this time around, many still consider DeepSeek V4 a product of national significance.

I believe the main reason is that DeepSeek started a cost revolution in AI, and V4 goes even further down that path.

Within just two days of V4's launch, DeepSeek cut prices twice in a row. First, the price of all cache-hit input was slashed to one-tenth of the launch price. Then, the Pro version received a 75% discount. As a result, cache-hit input for V4-Flash now costs a mere 0.02 yuan (two fen) per million Tokens.

This finally turns large-scale Agent workloads from something few could afford into something that can be run freely.

However, cheap does not mean low quality. The cost reductions come from DeepSeek's technical lead. V4-Pro needs only 27% of the previous generation's inference compute to process one million Tokens. Its core MoE (Mixture-of-Experts) architecture has 1.6 trillion parameters in total, but only about 3% of them (approximately 49 billion) are activated per inference, cutting per-inference compute demand by about 97% compared with activating them all.
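
The parameter figures above can be checked directly. In this minimal sketch, the active fraction is simply active over total parameters; the per-Token compute lines rely on the common rule of thumb that a forward pass costs about 2 FLOPs per active parameter per Token, which is an approximation rather than a DeepSeek-published figure, and the "dense" comparison is a hypothetical model of the same total size.

```python
TOTAL_PARAMS = 1.6e12   # total MoE parameters (from the text)
ACTIVE_PARAMS = 49e9    # parameters activated per inference (from the text)

active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS
print(f"active: {active_fraction:.2%}, idle: {1 - active_fraction:.2%}")

# Rule-of-thumb assumption: ~2 FLOPs per active parameter per Token (forward pass).
flops_moe = 2 * ACTIVE_PARAMS
flops_dense = 2 * TOTAL_PARAMS   # hypothetical dense model with every parameter active
print(f"~{flops_moe / 1e9:.0f} GFLOPs vs ~{flops_dense / 1e12:.1f} TFLOPs per Token")
```

The active share works out to about 3.06%, so the idle share of about 96.94% is where the "97%" figure comes from. The separate 27% generation-over-generation figure cannot be derived from these numbers alone.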

Let me provide an analogy. A large-scale model is like a stadium with ten thousand light bulbs. Previously, even if you were the only person in the stadium, hundreds or even thousands of lights might be turned on to illuminate your path.

What DeepSeek does is intelligent lighting management. If only three lights are needed to illuminate you, the remaining 9,997 stay off. It aims for the lowest possible cost through extreme intelligence while still meeting your needs.
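
In MoE terms, the "lights" are experts, and a router decides which few to switch on for each Token. The sketch below is a generic top-k softmax gate written in plain Python for illustration. It is not DeepSeek's actual router, which has its own scoring and load-balancing details, and the expert count, k, and toy experts are hypothetical.

```python
import math
import random


def softmax(xs: list[float]) -> list[float]:
    """Numerically stable softmax."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_logits: list[float], k: int) -> list[tuple[int, float]]:
    """Pick the k highest-scoring experts and renormalize their weights to sum to 1."""
    top = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)[:k]
    weights = softmax([router_logits[i] for i in top])
    return list(zip(top, weights))


def moe_forward(x: float, experts, router_logits: list[float], k: int) -> float:
    """Weighted sum of the selected experts' outputs; unselected experts never run."""
    return sum(w * experts[i](x) for i, w in route_top_k(router_logits, k))


# Hypothetical setup: 256 toy experts, 8 active per Token.
calls = [0] * 256


def make_expert(i: int):
    def expert(x: float) -> float:
        calls[i] += 1          # count how often this expert is actually evaluated
        return x * (i + 1)     # toy computation standing in for a feed-forward block
    return expert


experts = [make_expert(i) for i in range(256)]
random.seed(0)
logits = [random.gauss(0.0, 1.0) for _ in range(256)]  # stand-in router scores
y = moe_forward(1.0, experts, logits, k=8)
print(sum(calls), "of", len(experts), "experts ran")  # 8 of 256 experts ran
```

The design point mirrors the analogy: compute scales with the k experts the router selects, not with the total number of experts the model holds.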

Let's crunch the numbers. On the same basis of one million Tokens, Claude Opus 4.7 currently charges as much as 5 USD for input alone, plus 25 USD for output. By contrast, DeepSeek V4-Flash, with cache-hit pricing applied, costs as little as 0.02 yuan per million input Tokens. Using a loose exchange-rate conversion, this means that on cache hits, V4-Flash can cost roughly one two-thousandth of what Claude Opus 4.7 charges for input.
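
The "one two-thousandth" figure depends on the exchange rate and on what is compared. This small sketch compares Opus 4.7's standard input price with V4-Flash's cache-hit input price, as the text does (not a like-for-like comparison), under several exchange rates that are assumptions for illustration only.

```python
OPUS_INPUT_USD = 5.0          # Claude Opus 4.7, USD per million input Tokens (from the text)
FLASH_CACHE_HIT_YUAN = 0.02   # V4-Flash, yuan per million cache-hit input Tokens (from the text)


def price_ratio(usd_price: float, yuan_price: float, yuan_per_usd: float) -> float:
    """How many times more expensive the USD-priced option is, after conversion."""
    return usd_price * yuan_per_usd / yuan_price


# Assumed exchange rates, for illustration only.
for rate in (7.0, 7.2, 8.0):
    ratio = price_ratio(OPUS_INPUT_USD, FLASH_CACHE_HIT_YUAN, rate)
    print(f"{rate} yuan/USD -> about {ratio:,.0f}x")
```

At rates around 7 yuan per USD the gap is roughly 1,750 to 1,800 times, and it reaches 2,000 only at 8 yuan per USD, so "one two-thousandth" is best read as an approximate order of magnitude.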

What does this signify? It means that even when the era of universal AI subscriptions arrives, you can still use AI at a relatively reasonable cost. The ability to use AI will be a fundamental competitive edge for individuals and nations in the future.

Another extremely important point is that DeepSeek V4 runs on domestic hardware.

Some market context: driven by high demand, the price of one-year NVIDIA H100 rental contracts rose from a low of 1.70 USD per GPU-hour in October 2025 to 2.35 USD per GPU-hour in March 2026, an increase of nearly 40%.
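
The percentage is easy to verify. A one-function sketch of the relative change between the two lease prices quoted above:

```python
def pct_change(old: float, new: float) -> float:
    """Relative change from old to new (0.1 means +10%)."""
    return (new - old) / old


# H100 one-year lease, USD per GPU-hour: October 2025 low vs. March 2026 (from the text)
print(f"{pct_change(1.70, 2.35):.1%}")  # 38.2%
```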

Meanwhile, V4 supported Huawei's Ascend super node products from day one. The Ascend 950 super node is also slated for mass-market availability in Q4 2026. It supports networking at a scale of 8,192 cards and delivers 8 EFLOPS of compute at FP8 precision, with training performance 17 times and inference performance 26.5 times that of the previous generation.
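
Dividing the super node's aggregate figure by its card count gives the implied per-card throughput. This sketch assumes the 8 EFLOPS figure is a simple sum of per-card peak FP8 throughput (1 EFLOPS = 10^18 FLOPS); sustained throughput across a real cluster is typically lower.

```python
SUPERNODE_FP8_FLOPS = 8e18   # 8 EFLOPS at FP8 (from the text)
CARDS = 8_192                # cards per super node (from the text)

per_card = SUPERNODE_FP8_FLOPS / CARDS
print(f"about {per_card / 1e15:.2f} PFLOPS of FP8 per card")  # about 0.98 PFLOPS
```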

In other words, DeepSeek cuts costs not only through its technology but also by building domestic hardware into its ecosystem. Right now, DeepSeek leads decisively in compressing costs through this two-pronged effort.

In the future, AI will be the infrastructure of the digital age. Infrastructure must be large-scale and widespread to generate social benefits, and reaching that scale requires being affordable enough. Calling V4 nationally significant is therefore no overstatement.

03

The Era of Universal AI Subscriptions Will Arrive, But It May Not Be as Daunting as You Think

Recently, a pessimistic view has emerged, suggesting that people will be divided into two groups: those who can afford paid AI and those who cannot, with the competitiveness gap between them widening.

This conclusion may hold true when comparing two individuals, but when viewed at the societal level, I believe it is unlikely.

When the internet first became widespread, the main expense for early users was indeed access fees. Early in the mobile internet era, data charges were likewise alarmingly high.

But today, does anyone complain about monthly internet fees of around a hundred yuan or data plans costing several dozen yuan? No, because the early costs of network construction have been amortized, while the user base has grown by tens or hundreds of times, providing operators with ample room for price reductions and profit margins.

I believe we may eventually forget the concept of "the internet" altogether, with only the cloud side and the device side remaining.

Every digital service you use in the future will essentially be an AI service, operating within a Token economy. Every function, operation, and action you take will be scheduled and served by AI, flowing through the network and running on the device side.

When that era arrives, basic AI services, even free ones, will far exceed today's paid premium memberships, and overall prices will fall to roughly the level of today's phone and broadband bills. What you may truly need to pay for are extremely advanced capabilities: top-tier artificial general intelligence deeply embedded in your workflow and daily life.

All of this will gradually unfold over the next ten to twenty years. This is not an illusion: people have already identified some patterns. For instance, a Tsinghua University team proposed the "model density law" in a study published in Nature Machine Intelligence, which holds that the intelligence density of large models doubles every 100 days.

What does this mean? Roughly every 100 days, or about 3.3 months, a model of equivalent capability can be built with half the parameters.
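
Taken at face value, the stated doubling period can be turned into a simple projection. This sketch extrapolates the 100-day doubling rate exactly, whereas real progress is lumpier; the function name and time horizons are illustrative choices.

```python
DOUBLING_DAYS = 100  # intelligence density doubles every 100 days (from the text)


def relative_params_needed(days: float) -> float:
    """Fraction of today's parameter count needed for equal capability after `days`."""
    return 0.5 ** (days / DOUBLING_DAYS)


print(f"100 days is about {DOUBLING_DAYS / (365 / 12):.1f} months")
for days in (100, 365, 730):
    print(f"after {days} days: {relative_params_needed(days):.3f} of the parameters")
```

Under that idealized rate, one year would cut the parameters needed for the same capability to about 8% of today's.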

Simply put, together with Moore's Law on the hardware side, it traces a predictable curve of continuously improving AI performance and falling cost.

There is already empirical evidence: the API price of GPT-3.5-level models fell 266.7-fold in 20 months, and such models are still in use. In the future, people will use AI increasingly pragmatically, not chasing the top version of the top model but naturally choosing whatever fits the task.
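
A 266.7-fold drop over 20 months can be restated as a steady monthly rate. This sketch assumes the decline was smooth and geometric; in practice, API prices change in discrete steps.

```python
import math

TOTAL_DROP = 266.7  # price fell 266.7-fold (from the text)
MONTHS = 20         # over 20 months (from the text)

monthly_factor = TOTAL_DROP ** (1 / MONTHS)   # price divides by this each month
monthly_decline = 1 - 1 / monthly_factor      # fractional price cut per month
halving_months = MONTHS * math.log(2) / math.log(TOTAL_DROP)

print(f"about {monthly_decline:.1%} cheaper each month")
print(f"price halves roughly every {halving_months:.1f} months")
```

That is roughly a 24% cut per month, with prices halving about every two and a half months.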

Therefore, my conclusion is that there is nothing to fear about the subscription era. If there is anything to fear, it is paying without seeing corresponding progress; that would be true exploitation.

Conclusion

Doubao has shown through its actions that the era of consumer subscriptions for large models has begun.

DeepSeek has demonstrated with V4 that we can provide highly competitive AI capabilities using domestic hardware.

DeepSeek's official V4 announcement closes with a quote from Xunzi: "Not swayed by praise, not intimidated by criticism, following the recognized path, and maintaining integrity."

In plain terms: do not be swayed by praise or intimidated by criticism, follow the path you have chosen, and conduct yourself with integrity.

Reread today, at this particular moment, the quote carries a completely different weight. It represents the path that China's AI industry should take.