Baidu AI's Breakthroughs: Li Yanhong Highlights Efficiency as Key

05/11 2026

In an era where computing power is more valuable than gold, efficiency itself is a formidable advantage.

Baidu released its ERNIE 5.1 large model four days before the opening of the 2026 Create Conference.

The timing of this release comes as no surprise. The May 13 developer conference needed a technological showstopper, especially since nearly six months had passed since the previous version's debut and market concern was growing that Baidu's large models were lagging behind.

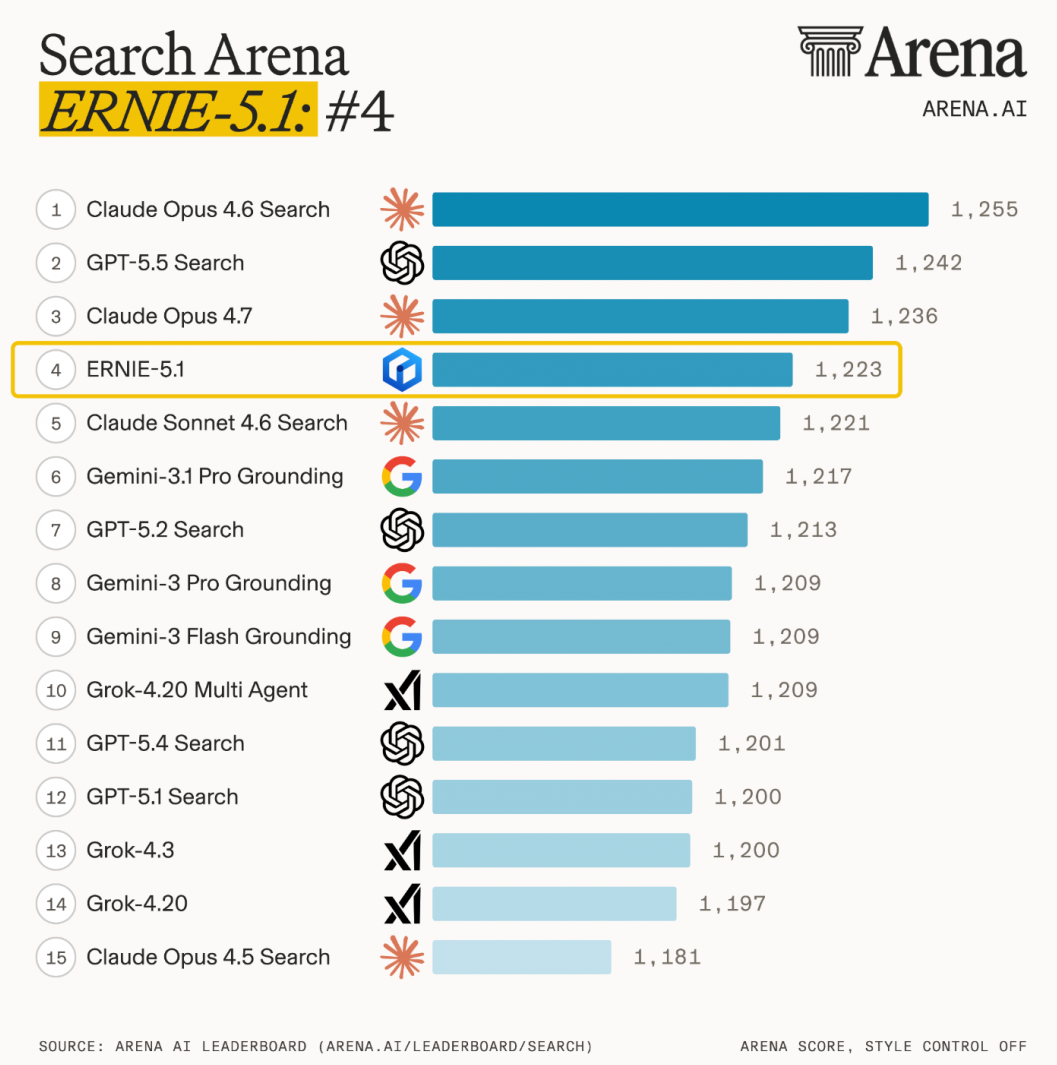

ERNIE 5.1, unveiled at this critical juncture, needed compelling data to counter these doubts—and it delivered some impressive results: ranking first domestically and fourth globally in search on the LMArena leaderboard, achieving pretraining costs at just 6% of those of similar-scale industry peers, and boasting agent capabilities that surpass DeepSeek-V4-Pro.

However, reflecting on Baidu's AI journey over the past year, one cannot ignore a persistent question: technological prowess does not seem to have fully translated into market dominance. How significant is ERNIE 5.1's contribution to this equation?

01

Three Key Metrics and a Lingering Question

Let's first examine the highlights of ERNIE 5.1.

According to the latest LMArena leaderboard rankings, ERNIE 5.1 scored 1223 points, securing the top spot domestically and fourth place globally in search—the sole domestic large model on the list. Its Preview version had already claimed the top domestic text ranking with 1476 points on April 30, outperforming GPT-5.5 and DeepSeek-V4-Pro, and was the only domestic model among the top 15.

For a company repeatedly questioned since 2023 about "losing its edge in large models," these achievements offer a form of validation—at least from a leaderboard perspective, Baidu's model capabilities remain competitive.

However, the true focus should be on the technological approach behind these scores.

The core technology of ERNIE 5.1 is known as "multidimensional elastic pretraining." First introduced during ERNIE 5.0's release, this method involves optimizing numerous sub-models of varying depths, expert capacities, and sparsity levels through a dynamic sampling mechanism during a single pretraining session. The result is a matrix of sub-models covering different parameter scales and computational budgets.

In simpler terms, a single training session generates multiple model variants, eliminating the need for separate computational resources for each scale. According to developers, this framework compresses and expands along three dimensions—elastic depth, elastic expert capacity, and elastic sparsity—using variable Top-k routing to flexibly adjust the number of activated experts, creating a controllable adjustment space between inference overhead and model performance.
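
To make the three elastic dimensions concrete, here is a minimal Python sketch. It assumes a mixture-of-experts model in which each training step samples one sub-model (a depth, a number of experts kept, and a top-k) and routes tokens only through the experts that sub-model keeps. The search-space values, the `SubModel` type, and all helper functions are hypothetical illustrations, not Baidu's implementation; the actual gradient update is only indicated in a comment.

```python
import math
import random
from dataclasses import dataclass

# Hypothetical elastic search space; Baidu has not published ERNIE 5.1's actual ranges.
DEPTHS = [12, 18, 24]                 # elastic depth: transformer layers kept
EXPERT_FRACTIONS = [0.5, 0.75, 1.0]   # elastic expert capacity: share of experts kept
TOP_KS = [1, 2, 4]                    # elastic sparsity: experts activated per token


@dataclass(frozen=True)
class SubModel:
    depth: int
    num_experts: int
    top_k: int


def sample_submodel(rng: random.Random, max_experts: int = 64) -> SubModel:
    """Draw one sub-model configuration, as an elastic run might do per training step."""
    depth = rng.choice(DEPTHS)
    num_experts = int(max_experts * rng.choice(EXPERT_FRACTIONS))
    top_k = rng.choice(TOP_KS)
    return SubModel(depth, num_experts, top_k)


def route_top_k(logits: list[float], num_experts: int, top_k: int) -> list[tuple[int, float]]:
    """Variable top-k routing: pick the top_k highest-scoring experts among the first
    num_experts, then softmax-normalize their scores so the weights sum to 1."""
    candidates = logits[:num_experts]
    chosen = sorted(range(len(candidates)), key=lambda i: candidates[i], reverse=True)[:top_k]
    peak = max(candidates[i] for i in chosen)  # subtract the max for numerical stability
    weights = {i: math.exp(candidates[i] - peak) for i in chosen}
    total = sum(weights.values())
    return [(i, weights[i] / total) for i in chosen]


def active_params(cfg: SubModel, dense_per_layer: float, params_per_expert: float) -> float:
    """Toy count of parameters touched per token: dense weights plus top_k experts per layer."""
    return cfg.depth * (dense_per_layer + cfg.top_k * params_per_expert)


if __name__ == "__main__":
    rng = random.Random(0)
    for step in range(3):
        cfg = sample_submodel(rng)
        # A real run would now take one gradient step on the shared weights this sub-model uses.
        print(step, cfg, active_params(cfg, dense_per_layer=1.0, params_per_expert=0.5))
    print(route_top_k([0.1, 2.0, -1.0, 1.5], num_experts=4, top_k=2))
```

Because every sampled sub-model reuses slices of the same weights, one run can, in principle, yield a family of deployable sizes; choosing a smaller top-k at inference time then trades some quality for fewer activated parameters.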

In practical terms: ERNIE 5.1's total parameters are compressed to about one-third of ERNIE 5.0's, with activated parameters reduced to about half, and pretraining computational costs at just 6% of similar models at the same scale.
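
The two ratios also imply something the sentence leaves implicit. Writing $T$ for total parameters and $A$ for activated parameters (symbols introduced here for illustration), and using the approximate one-third and one-half figures above:

$$
\frac{A_{5.1}}{T_{5.1}} \approx \frac{\tfrac{1}{2}A_{5.0}}{\tfrac{1}{3}T_{5.0}} = \frac{3}{2}\cdot\frac{A_{5.0}}{T_{5.0}}
$$

In other words, under these rounded ratios ERNIE 5.1 is smaller overall but activates a roughly 50% larger share of its parameters per token than ERNIE 5.0, so it is less sparse.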

The 6% figure is often misunderstood. It does not mean "achieving 100% performance with 6% of the cost," but rather that under equivalent parameter scales and performance levels, the computational resources consumed during training are only 6% of industry norms. This is achieved through "model compression and elastic training to significantly reduce redundant computations," representing an efficiency gain in the pretraining phase.
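
One way to see how training many sizes in a single run can cut redundant computation is a back-of-the-envelope comparison. The sketch below uses the common rule of thumb that training costs roughly 6 × activated parameters × training tokens in FLOPs (a standard approximation, not Baidu's accounting; its factor of 6 is unrelated to the 6% figure). It assumes, hypothetically, that each step of an elastic run trains one randomly sampled sub-model on the same overall token budget. All sizes and token counts are made-up placeholders, and the output is not meant to reproduce Baidu's number.

```python
def train_flops(active_params: float, tokens: float) -> float:
    """Rule-of-thumb training compute: about 6 FLOPs per activated parameter per token."""
    return 6.0 * active_params * tokens


def separate_runs_flops(sizes: list[float], tokens: float) -> float:
    """Cost of training every size as its own model on the same token budget."""
    return sum(train_flops(n, tokens) for n in sizes)


def elastic_run_flops(sizes: list[float], probs: list[float], tokens: float) -> float:
    """Cost of one elastic run that trains a single sampled sub-model per step:
    the expected activated size times the token budget."""
    if abs(sum(probs) - 1.0) > 1e-9:
        raise ValueError("sampling probabilities must sum to 1")
    expected_active = sum(n * p for n, p in zip(sizes, probs))
    return train_flops(expected_active, tokens)


# Hypothetical sub-model sizes (activated parameters) and token budget.
sizes = [10e9, 20e9, 40e9]
tokens = 1e12
separate = separate_runs_flops(sizes, tokens)
elastic = elastic_run_flops(sizes, [1 / 3, 1 / 3, 1 / 3], tokens)
print(f"elastic / separate = {elastic / separate:.2f}")  # elastic / separate = 0.33
```

Under these assumptions the single run costs about a third of training all three sizes separately. The catch, which is exactly what elastic training has to demonstrate, is that each sub-model receives only a share of the steps, so the saving counts only if shared-weight training reaches comparable quality.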

Against the backdrop of escalating global data center energy consumption controversies in 2026 and constrained domestic chip supplies, this strategic choice holds significant appeal.

Turning to evaluation data: on two agent benchmarks, τ³-bench and SpreadsheetBench-Verified, ERNIE 5.1 outperformed DeepSeek-V4-Pro, and Baidu's official description says its "agent capabilities approach the level of leading closed-source models." In creative writing, it matched Gemini 3.1 Pro; on the AIME26 math competition (with tool use), it scored 99.6, second only to Gemini 3.1 Pro.

Much of this data comes from Baidu's internal evaluations or smaller benchmark tests, rather than large-scale blind tests like LMArena, and thus requires additional third-party validation. However, the overall direction is clear: this generation's model upgrades focus primarily on agent and deep search capabilities rather than pure language expression.

Currently, ERNIE 5.1 is available on the Qianfan Model Plaza and the ERNIE Bot official website, with developers able to access its API through the Qianfan platform. Baidu also announced plans to integrate ERNIE 5.1 into over ten creative production agent platforms, including ISEKAI ZERO, Mulan AI, Diting Huanliu, and Storymaster.
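
For developers, access through Qianfan would typically take the form of an HTTP chat-completion request. The sketch below only builds the request and does not send it. It assumes Qianfan's OpenAI-compatible v2 endpoint (`https://qianfan.baidubce.com/v2/chat/completions`) with a bearer API key; the model ID `ernie-5.1` and the `QIANFAN_API_KEY` environment variable are hypothetical placeholders, so check the official Qianfan documentation for the actual model name and authentication scheme before use.

```python
import json
import os
import urllib.request

# Assumed OpenAI-compatible Qianfan v2 endpoint; verify against the official docs.
QIANFAN_URL = "https://qianfan.baidubce.com/v2/chat/completions"
# Hypothetical model ID: the real identifier for ERNIE 5.1 may differ.
MODEL_ID = "ernie-5.1"


def build_chat_request(prompt: str, api_key: str, model: str = MODEL_ID) -> urllib.request.Request:
    """Build (but do not send) a single-turn chat-completion request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        QIANFAN_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


if __name__ == "__main__":
    key = os.environ.get("QIANFAN_API_KEY", "placeholder-key")
    req = build_chat_request("Summarize today's AI news.", key)
    print(req.get_method(), req.full_url)
    # Sending it would be urllib.request.urlopen(req), then json.load on the response.
```

Keeping request construction separate from sending makes the payload easy to inspect and test without network access or a real key.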

The intention behind this move is clear: it is not just about the model but about its deployment.

By industry standards, ERNIE 5.1's product-side progress is not slow. But Baidu's real challenge has never been technology.

02

Baidu Takes a Different Approach

If we consider scores alone, ERNIE 5.1 is formidable. But in the 2026 AI market, especially in China, product competition hinges more on user base and scenario coverage than on scores.

During the 2026 Spring Festival, four major tech firms spent heavily on AI marketing. Baidu put up 500 million yuan in cash red packets, all routed through the Baidu App ecosystem; ByteDance's Doubao invested 1.5-2 billion yuan, Tencent's Yuanbao 1 billion yuan, and Alibaba's Qianwen the most, at 6 billion yuan, for a combined total of roughly 9 to 9.5 billion yuan.

According to QuestMobile, ByteDance's Doubao started with 84 million daily active users (DAUs) around the Spring Festival, peaking at 145 million on New Year's Eve; Alibaba's Qianwen reached 73.52 million DAUs the day after its campaign; Tencent's Yuanbao hit 40.54 million on New Year's Eve. Meanwhile, Baidu ERNIE's user growth curve remained relatively flat.

The external perception is that Baidu is falling behind in the race for consumer users, despite continuous iterations in model capabilities. This represents a unique paradox in China's AI industry: technical teams relentlessly optimize training efficiency, but users only care about "can this thing book my flight?" The two coordinate systems remain misaligned.

Zooming out to the broader industry, the keyword for the first half of 2026 is shifting from "arms race" to "commercialization."

Not long ago, ByteDance's Doubao launched paid subscriptions, with plans starting at 68 yuan/month and topping out at 5,088 yuan/year. The comment section was flooded with complaints that it was "both dumb and expensive." But make no mistake: this marks an industry inflection point. Last month, Alibaba Cloud, Tencent Cloud, Baidu Intelligent Cloud, and Zhipu simultaneously raised prices, with some increases reaching 463%.

While consumer users still cling to the illusion of "free AI," the major firms have begun calculating real costs: every surge in API calls multiplies the inference bill.

Baidu actually felt this pressure earlier than most of its peers. In Q3 2025, Baidu's core online marketing revenue was 15.3 billion yuan, down 18% year-on-year and more than one-fifth below its Q2 2023 peak of 19.7 billion yuan. The decline is structural, not cyclical: users no longer tolerate sifting through a page of links for answers, and AI-delivered responses are the new norm. That is good for user experience but undermines search advertising, Baidu's largest profit pillar.
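
The "more than one-fifth" comparison follows directly from the two quarterly figures:

$$
\frac{19.7 - 15.3}{19.7} = \frac{4.4}{19.7} \approx 22.3\%
$$

That is a drop of about 22% from the peak, comfortably above the 20% mark.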

Li Yanhong's response was to bet on AI transformation. By Q4 2025, Baidu's AI-driven new business revenue reached 11.3 billion yuan, accounting for 43% of core non-online marketing revenue. AI cloud revenue grew 33% year-on-year in Q3, while AI-native marketing services surged 262%. Amid these shifts, Baidu's business structure is indeed transforming.

Revisiting ERNIE 5.1 through this commercialization lens, its signal extends beyond "high scores"—the key point is its "training costs at 6% of industry norms." In 2026, when API prices are collectively rising, training cost advantages translate into pricing power and profit margins for cloud services.
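
The link between training cost and pricing power can be made concrete with a toy unit-economics model. The sketch below assumes, purely for illustration, that a provider amortizes a one-off pretraining bill over the tokens it expects to serve and adds a per-token inference cost. It also treats "6% of the compute" as "6% of the training bill," which is a simplification. Every number is a hypothetical placeholder, not Baidu's or any competitor's actual figure.

```python
def cost_per_million_tokens(training_cost: float, lifetime_tokens: float,
                            inference_cost_per_m: float) -> float:
    """Amortized training cost plus inference cost, per million tokens served."""
    amortized = training_cost / (lifetime_tokens / 1e6)
    return amortized + inference_cost_per_m


def gross_margin(price_per_m: float, cost_per_m: float) -> float:
    """Share of the price left after costs (negative means selling at a loss)."""
    return (price_per_m - cost_per_m) / price_per_m


# Hypothetical inputs in yuan: a baseline model and one trained at 6% of its cost.
baseline_training = 1_000_000_000.0
efficient_training = 0.06 * baseline_training
lifetime_tokens = 1e15   # tokens expected to be served over the model's life
inference_per_m = 1.0    # inference cost per million tokens
price_per_m = 4.0        # same list price for both models

for name, training in [("baseline", baseline_training), ("efficient", efficient_training)]:
    cost = cost_per_million_tokens(training, lifetime_tokens, inference_per_m)
    print(f"{name}: cost {cost:.2f}/M tokens, margin {gross_margin(price_per_m, cost):.0%}")
```

With these placeholders the cheaper-to-train model keeps roughly three-quarters of the price as margin versus about half for the baseline, or it could cut its price and still match the baseline's margin. The advantage shrinks as inference costs come to dominate, which is why sustained revenue, not the training figure alone, decides the outcome.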

Baidu's competitiveness in AI cloud hinges on converting ERNIE's efficiency gains into sustainable revenue growth. This challenge is far more difficult than securing a top spot on a benchmark leaderboard.

03

ERNIE 5.1's True Value May Lie Within the Baidu App

So, where does ERNIE 5.1's real value lie?

Viewed solely as a standard model for API calls, its technical data is indeed persuasive. But for Baidu, the question is how to embed it into the Baidu App, a super-platform with over 200 million monthly active users.

Earlier this year, ERNIE Assistant surpassed 200 million MAUs. During the Spring Festival, Baidu routed all red packet traffic to the Baidu App. This strategy indicates that Baidu has abandoned plans for standalone AI apps and is returning to its home turf to reshape search with AI.

At Baidu World 2025, Li Yanhong revealed that 70% of Baidu Search's top results now feature rich media. Users searching for a question receive structured, multimedia answers directly from AI instead of a list of blue links. This benefits users but harms ad revenue—click-through rates plummet, shrinking ad inventory.

This represents a commercial paradox: better user experience erodes monetization potential.

ERNIE 5.1's answer may lie in the search capabilities Baidu emphasizes. Its "multi-source information retrieval, integration, and generation abilities" could, in theory, deliver richer, more personalized answers. These high-quality integrated answers could themselves become new advertising formats: not link-based ads but content-embedded recommendations.
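
"Multi-source information retrieval and integration" is not specified further, but one common, generic way to merge ranked results from several sources is reciprocal rank fusion (RRF), where each document scores the sum of 1 / (k + rank) across sources. The sketch below shows that technique as an illustration only; nothing in the source says ERNIE 5.1 uses RRF, and the source names and document IDs are hypothetical.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: dict[str, list[str]], k: int = 60) -> list[tuple[str, float]]:
    """Merge several ranked lists: each document scores sum(1 / (k + rank)) with rank
    starting at 1, so items ranked well by many sources rise to the top."""
    scores: dict[str, float] = defaultdict(float)
    for ranked_ids in rankings.values():
        for rank, doc_id in enumerate(ranked_ids, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Sort by fused score, breaking ties by document ID for determinism.
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))


# Hypothetical results from three sources for one query.
rankings = {
    "web": ["doc_a", "doc_b", "doc_c"],
    "encyclopedia": ["doc_b", "doc_d"],
    "video": ["doc_b", "doc_a"],
}
fused = reciprocal_rank_fusion(rankings)
print([doc_id for doc_id, _ in fused])  # ['doc_b', 'doc_a', 'doc_d', 'doc_c']
```

RRF needs only ranks, not comparable scores, which suits sources whose relevance scores live on different scales. In a retrieval-augmented setup, the top fused items would then be handed to the model as context for generating the answer.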

Under this logic, AI search does not cannibalize ad revenue but rebuilds it in a new form. Whether this succeeds depends on the clarity of the commercialization paths Baidu announces at the May 13 Create Conference.

Meanwhile, Baidu's computational strategy cannot be ignored. Its Kunlunxin subsidiary has submitted a listing application to the Hong Kong Stock Exchange, while Baidu's 30,000-card intelligent computing cluster provides foundational support for large model training. Against the backdrop of accelerated domestic chip substitution in 2026, the long-term value of this "self-developed chips + self-developed models" combination may be more noteworthy than ERNIE 5.1 itself.

Goldman Sachs recently noted that China's AI training will increasingly rely on highly optimized, compute-efficient architectures rather than sheer computational scale. Baidu's current path, driving training costs down as far as possible through hardware-software co-optimization, aligns closely with this industrial direction.

ERNIE 5.1 serves as a technological ace, offering quantifiable improvements in search, training efficiency, and agent capabilities. Its strongest figure is that "6%": in an era where computing power is more valuable than gold, efficiency itself is a formidable advantage.

But China's AI competition in 2026 has long since moved past the point where parameters and benchmarks are decisive. Commercialization pressure, the fight for users, and penetration into industry scenarios form a far more complex evaluation system than benchmarks. The 500 million yuan in red packets that failed to make waves, the missed opportunities in model adoption, and the profit vacuum left by declining search ad revenue: these are not problems ERNIE 5.1's technical data can solve alone.

At the May 13 Create Conference, Li Yanhong will take the stage. The question then may not be how to iterate ERNIE products but how Baidu intends to monetize its AI push. In 2026, with the major firms having collectively entered "survival-by-the-numbers" mode, that answer may be what the market most wants to hear.

This article was originally published by Xinmou.

— END —