The "Landlord" Struggle in the AI Lobster Era: Who's Secretly Amassing Wealth?

05/15 2026

Author | Intelligent Relativity

As 2026 reaches its midpoint, "OpenClaw," one of the tech world's most talked-about concepts, has come into sharper focus: the initial frenzy has subsided, but the competition rages on. GitHub star growth has slowed, deployment screenshots have disappeared from social media feeds, and the price of installation services on Xianyu has dropped from 2,000 yuan to just 200 yuan. These signs were widely read as evidence that the "lobster" craze was cooling. Recent industry developments, however, show that the excitement has not faded; it has shifted from a widespread frenzy to a quieter, more intense industrial battle.

Just as outsiders expected the "Hundred Shrimp Battle" to lose steam, the conflict has only intensified.

Alibaba's and Tencent's earnings calls both underscored the critical importance of AI; the two companies take distinct approaches but are equally resolute in direction. Even before those calls, the "landlord" battle over lobster AI had reached a fever pitch.

Alibaba CEO Wu Yongming personally spearheaded the creation of the Alibaba Token Hub (ATH) business group, bringing together all AI forces, including Tongyi Labs, Qianwen, and Wukong, with the ambitious goal of "achieving annual cloud and AI commercialization revenue exceeding $100 billion within five years."

Tencent announced the official rollout of its all-scenario AI agent, WorkBuddy, which eliminates deployment steps entirely—users can simply download and start using it.

Baidu simultaneously launched its zero-deployment service, DuClaw, with a promotional first-month price of just 17.8 yuan, significantly lowering the barrier to "plug-and-play" usability.

ByteDance's TRAE SOLO was upgraded from part of an IDE (integrated development environment) into a standalone product, as ByteDance accelerates its push to capture user mindshare for AI assistants.

The giants' concentrated push is unmistakable. This is not a gradual competition but an all-out, resource-intensive war. The company that first brings "lobster" AI to the mass market is likely to secure the last ticket to the consumer AI era.

Meanwhile, reflecting on the past six months of the "lobster farming" craze, a fundamental question arises: Who got caught in the hype, and who found a viable path?

Three Deployment Models, Three Distinct Outcomes

A review of the OpenClaw craze in the first half of 2026 shows three main deployment models: local deployment, cloud instances, and big tech packaging. Each serves different user needs and draws its own criticisms, and together they determine who holds the upper hand in this competition.

1. Local Deployment: Data Sovereignty and Technical Freedom, but High Barriers

Local deployment was the first model to attract tech enthusiasts in the first half of the year. Its selling points were clear: private data stays local, there are no monthly membership fees, and users have greater autonomy over how they use AI. However, most users soon found that setting up the environment was itself a deterrent. More concerning, the AI could access the entire file system and browser history and even execute terminal commands. If hijacked by a malicious skill, a user's AI could become a tool for attackers and compromise the computer.

To address these problems, some vendors tried to lower both the barriers and the security risks. Zhipu AutoClaw cut environment setup to "one minute." Tencent QClaw, building on PC Manager 18.0, created a dedicated "quarantine zone" that restricts the AI to specified folders. NVIDIA NemoClaw went further and sold all-in-one machines preloaded with hundreds of skills, ready to use once plugged in.
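The "quarantine zone" idea can be illustrated with a minimal Python sketch. This is not how Tencent QClaw or any other named product is implemented; the function name `is_inside_allowed` and the folder paths are hypothetical. It only shows the general technique of resolving a requested path and checking that it falls under an allow-listed folder before an agent is permitted to touch it.

```python
from pathlib import Path


def is_inside_allowed(target: str, allowed_dirs: list[str]) -> bool:
    """Return True if `target` resolves to a location inside an allowed folder.

    Hypothetical helper for illustration only. Paths are resolved first so
    that `..` segments and symlinks cannot be used to step outside the folder.
    """
    resolved = Path(target).resolve()
    for directory in allowed_dirs:
        root = Path(directory).resolve()
        # Allowed if the target is the folder itself or sits anywhere below it.
        if resolved == root or root in resolved.parents:
            return True
    return False
```

Under these assumptions, a request for `/sandbox/docs/report.txt` with `/sandbox` allow-listed would pass, while `/sandbox/../etc/passwd` would be rejected because it resolves outside the folder. A path check like this is only one layer; real isolation would also need enforcement at the operating-system level.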

While this model has its appeal, it still falls short for the average user. Environment setup alone stops most people, and maintaining security boundaries demands a level of awareness that the general public rarely has.

Of course, this model will always have a market. Tech geeks, privacy purists, and high-end users willing to invest in all-in-one machines will prefer local deployment. But in the first half of 2026, it was destined to remain a niche choice.

2. Cloud Instances: 24/7 Online and Elastic Computing, but Still Imperfect

Local deployment solved the autonomy problem but exposed another: ordinary computers struggle to keep the "lobster" online around the clock. Users want it to monitor markets while they sleep and browse web pages while they work, but most laptops cannot handle this always-on load: fans whir, batteries drain, and dropped connections cause tasks to fail.

Thus, cloud instances gained popularity in the first half of the year. Alibaba Cloud, Tencent Cloud, Baidu Intelligent Cloud, and Huawei Cloud launched "one-click deployment images," allowing users to deploy "lobster" to cloud servers in a few clicks, achieving 24/7 online availability. However, users quickly encountered limitations.

First, cloud-based "lobster" is isolated from local computers. It can execute cloud commands but can't directly operate local mice/keyboards or access local hard drive files. To organize local documents via cloud AI, users must configure cloud disk mounting or file syncing. Second, users face dual costs: server rental fees and Token usage fees. Finally, once automation workflows rely on cloud storage and scheduled tasks, migrating data or reconfiguring environments becomes as complex as local deployment.

Cloud solutions fixed the "computer can't handle it" problem but introduced new headaches: double costs and fragmentation between local and cloud environments. For users who need AI to run tasks over the long term, this feels like a transitional solution rather than the final answer, and user feedback from the first half of the year bore this out.
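The "double cost" of a cloud instance can be made concrete with a small arithmetic sketch. All figures below are hypothetical and are not taken from any vendor's price list; the function name `cloud_monthly_cost` is likewise an illustration. The point is only that the bill has a fixed part (server rental) and a usage-driven part (Token fees), so it moves with how much the agent is used.

```python
def cloud_monthly_cost(server_rent: float, tokens_used: int,
                       price_per_million_tokens: float) -> float:
    """Fixed server rental plus usage-based token fees for one month."""
    token_fees = tokens_used / 1_000_000 * price_per_million_tokens
    return server_rent + token_fees


# Hypothetical inputs: 100 yuan/month rental, 8 yuan per million tokens.
light_month = cloud_monthly_cost(100.0, 5_000_000, 8.0)    # 5M tokens used
heavy_month = cloud_monthly_cost(100.0, 50_000_000, 8.0)   # 50M tokens used
```

With these assumed numbers, a tenfold increase in usage raises the bill from 140 yuan to 500 yuan, which is the kind of unpredictability that a flat monthly fee removes.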

3. Big Tech Packaging: Paving the Way for "Zero-Barrier AI"

The core contradiction of the first two models is straightforward: users don't want to be technical operators or financial accountants. Local deployment offers "freedom but hassle," while cloud instances provide "convenience but cost and fragmentation."

Most users likely just want a "black box" with a fixed monthly fee—"I pay one price, you get the job done."

Big tech companies sensed this demand and made their move late in the first half of 2026. Tencent's WorkBuddy promoted "download and use," while Alibaba's "Wukong" was embedded directly into DingTalk, serving 20 million enterprise organizations. These products share common traits: they work out of the box, and model usage fees are bundled into the membership fee. Users do not have to deal with Docker setup, environment configuration, or security isolation, because big tech has built the "quarantine zone" into the product's foundation. The local-cloud fragmentation issue is also resolved: with one-click authorization in the official client, the AI can access local files directly, with no manual cloud disk mounting.

Users don't need to know whether the backend uses Hunyuan or DeepSeek, or worry about Token price fluctuations—monthly fixed deductions ensure "lobster" AI is always available. More importantly, these big tech packaged versions don't lock users into a single model; WorkBuddy, DuClaw, and Wukong all support multi-model switching.

Users aren't purchasing a specific model's capabilities but "an entry point"—where they can freely choose models as needed. The first two models force users to select models, pay Tokens, and assume risks themselves, while big tech packaging bundles everything into a subscription, bridging local-cloud divides.

Of course, this model has trade-offs. Big tech is still fighting for market share, so pricing remains in flux, and membership fees will likely rise over time. But the mass market is not chasing the absolute lowest price; it wants predictable costs and a hassle-free experience. The open question is which vendor meets these expectations first.

The 2026 "Lobster" Battle Diverges: Gateway Competition Determines the Final Winners

Looking back from mid-2026, the divergence among the three deployment models is clear.

Local deployment retains tech geeks and privacy-sensitive users but fails to break into the mass market. Its value lies in proving that user demand for data sovereignty and subscription-free models is real. However, this approach shifts technical barriers and security responsibilities entirely to users.

As a result, only a minority can make local deployment work. The hype curve in tech communities during the first half of the year confirms this: explosive initial growth, then a rapid decline, leaving a stable core user base but no scale effect.

Cloud instances followed a different trajectory. They solved the "computer can't handle it" pain point and were initially seen as an upgrade over local deployment. However, users quickly tallied the other costs: server rental plus Token fees meant paying twice, and local files plus cloud operations meant managing two systems. Critically, once workflows depend on cloud storage and scheduled tasks, the cost of migrating rivals that of redeploying from scratch.

By the end of the first half of 2026, cloud instance growth had slowed sharply, with many users stuck in the trial phase and never converting to long-term paid plans. This suggests that cloud solutions filled a transitional need but lacked the stickiness for long-term retention.

In contrast, the big tech packaging model, though the last to launch, went from zero to one within a month. Tencent's WorkBuddy surpassed 1 million downloads in its first week, Alibaba's "Wukong" was activated by over 30% of DingTalk users, and Baidu's DuClaw rapidly acquired users with its 17.8 yuan promotional price.

These numbers signal a clear trend: mass-market users do not object to paying; they object to paying and still having to deal with the hassle. They prioritize "hassle-free" over "control." Big tech packaging delivers exactly this: out-of-the-box usability, predictable billing, and customer support when problems arise.

Objectively, this divergence isn't accidental but driven by a shift in AI product competition. By 2026, capability gaps among foundational models (GPT-5, Gemini Ultra, Tongyi Qianwen Max, Hunyuan Turbo) had narrowed to levels indistinguishable to average users. In real-world use, users can't tell which model is responding.

AI products now compete on three simpler metrics: onboarding cost, willingness to pay, and retention. The product that gets users started fastest and keeps them longest wins, and that is big tech's forte.

The mass market isn't won by the most technically advanced but by the most convenient. Big tech's weapons aren't model parameters but channels, brands, payment systems, and customer service networks. DingTalk, WeChat, Baidu App, Douyin—these billion-user gateways are ideal distribution channels. Combined with proven subscription models (video, cloud storage, office suites), big tech packaging is tailor-made for the mass market.

As for "who's quietly making a fortune," the answer is only partly clear. In the short term, big tech packaging will dominate market share. In the long term, the true winner will not be any single big tech company but whoever first turns the "gateway" into an "ecosystem."

Why? Because AI agent competition isn't about models but scenarios and data flywheels. The more you use AI, the better it understands you; the better it understands you, the more indispensable it becomes. Once users accumulate workflows, personal data, and automation habits in one gateway, migration costs become prohibitively high.

This is the AI version of the super-app logic from the mobile internet era: seize gateways, collect rents, and lock users in via ecosystems.

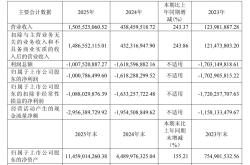

This explains why Alibaba, Tencent, Baidu, and ByteDance all struck simultaneously. Traditional internet businesses—advertising, e-commerce, gaming—have hit growth ceilings. For these giants, AI represents the only visible "second growth curve." In Q4 2025, Alibaba's intelligent cloud grew 36%, with AI-related revenue maintaining triple-digit growth for 8-10 consecutive quarters. Baidu's 2025 AI revenue surpassed 40 billion yuan, far exceeding expectations.

Meanwhile, all four giants hold vast private datasets: ByteDance owns Chinese short video preferences, Alibaba possesses decades of transaction records, Tencent holds everyone's social graphs, and Baidu has accumulated 20 years of search history. If these assets aren't leveraged soon, competitors or startups with superior technology could erode their data-driven advantages faster than expected. The AI gateway battle is fundamentally a defensive war.

Will local deployment and cloud instances disappear? No. They'll resemble niche markets—serving users willing to invest time for data sovereignty. Like Linux in desktop OS, they'll always have loyal users but never dominate the mass market.

Most ordinary users avoid these paths not out of ignorance but choice. They want tools that are ready-to-use, with predictable bills and accountability. Big tech packaging delivers exactly that.

After the hype fades, what gets left behind is not the big tech companies collecting membership fees but the tutorials teaching users to install Docker, configure MCP, or build environments, things users never truly wanted.

The real winners are those who make users forget the word "deployment."

*All images in this article are sourced from the internet.