The Surge of AI Paid Services Fuels the Boom in Computing Power Leasing

05/15 2026

What is driving this unprecedented surge in demand for computing power? The short answer is the exponential explosion in token usage.

According to the latest estimates from OpenRouter, as of early April 2026, total global token usage of large AI models had reached 27 trillion, an 18.9% month-over-month increase. Meanwhile, China’s token usage of large AI models has surpassed that of the United States for five consecutive weeks. More tokens consumed means more servers at work, more GPUs running hot, and more electricity burned, and behind all of this 'more' lies the fundamental growth logic of the computing power leasing business.

The idea of 'leasing instead of buying' is rapidly gaining traction among small and medium-sized enterprises (SMEs). A single NVIDIA A100 graphics card costs approximately RMB 100,000 to 150,000, while an H100 can cost RMB 200,000 to 300,000. For a startup, hardware procurement alone can be crippling. By leasing, companies can cut their upfront investment by more than 70% and upgrade to the latest hardware as needed. In China, some computer equipment leasing companies report that high-performance models are snapped up 'as soon as they are listed.' This suggests that the core value of computing power leasing is no longer just 'saving money': it gives SMEs a viable way to keep pace with technological advances at lower cost and lower risk.

01

Domestic and International Players Are Making Their Moves

If robust demand paints a promising picture for computing power leasing, several recent high-profile deals send the market a direct signal: this sector is far deeper and more diverse than imagined, and its participants carry far more weight.

On May 4, ByteDance’s AI application Doubao introduced a paid version on the App Store, offering three tiers of value-added services on top of its free version: Standard at RMB 68/month (auto-renewing), Enhanced at RMB 200/month (auto-renewing), and Professional at RMB 500/month (auto-renewing). The paid features primarily focus on high-complexity productivity scenarios such as PPT generation, data analysis, and film and television production.

Why is Doubao choosing to charge now? Three key figures illustrate the situation. First, Doubao’s average daily token consumption has reached 120 trillion, a 1,000-fold increase since its launch in May 2024, and it doubled in the first three months of 2026 alone. Second, of ByteDance’s planned RMB 160 billion in capital expenditures for 2026, nearly half is expected to go toward AI chip procurement. Third, according to industry estimates, demand for inference computing power is now 10 to 15 times that of training. In other words, the more paying users there are, the more often they use the product, and the more complex their tasks, the faster computing power is consumed. This closed loop ultimately translates into a flood of orders for computing power leasing firms.
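Because these figures compound, it can help to convert them into an implied constant monthly growth rate. The sketch below is a minimal illustration assuming steady compounding; the roughly 24-month span between the May 2024 launch and the 120-trillion figure is an assumption (the text gives no exact end date), and the helper name is hypothetical.

```python
def implied_monthly_growth(multiple: float, months: float) -> float:
    """Constant monthly growth rate that compounds to `multiple` over `months` months."""
    if multiple <= 0 or months <= 0:
        raise ValueError("multiple and months must be positive")
    return multiple ** (1.0 / months) - 1.0


# From the text: usage doubled over the first three months of 2026 ...
q1_2026 = implied_monthly_growth(2.0, 3)
# ... and grew 1,000-fold since the May 2024 launch.
# ASSUMPTION: about 24 months elapsed between launch and the 120-trillion figure.
since_launch = implied_monthly_growth(1000.0, 24)

print(f"Q1 2026 pace:      ~{q1_2026:.1%} per month")
print(f"Since-launch pace: ~{since_launch:.1%} per month")
```

Under these assumptions, both figures imply token usage growing by roughly 26% to 33% every month.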

If Doubao’s move reflects demand-side pressure from AI users and commercialization, Anthropic’s major computing power leasing deal with SpaceX shows how the supply landscape is being reshaped. On May 6, AI unicorn Anthropic officially announced that it would lease all of the computing power at SpaceX’s Colossus 1 data center in Memphis, Tennessee, USA.

What does this mean? Colossus 1 houses more than 220,000 NVIDIA GPUs, including H100s, H200s, and even next-generation GB200 accelerators, with total power consumption of 300 megawatts, equivalent to the electricity use of a mid-sized city of 200,000 to 300,000 households. The data center was planned and completed in just 122 days, showcasing SpaceX’s remarkable coordination in construction and chip procurement. For Anthropic, the agreement means adding more than 300 megawatts of computing capacity within a month, taking its total from fewer than 100,000 H100 equivalents to a level on par with, or even above, major competitors such as OpenAI and Google DeepMind. For SpaceX, it is a masterful commercial move with multiple benefits. Colossus 1 was originally built to train xAI’s Grok; after xAI was dissolved and merged into SpaceX (renamed SpaceXAI), the facility sat idle. Leasing it to Anthropic not only monetizes this idle top-tier computing power directly but also provides a compelling cash-flow story for SpaceX’s upcoming IPO. The two companies are even exploring collaboration on 'multi-gigawatt orbital AI computing power,' accelerating efforts to deploy data centers in low-Earth orbit.
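The Colossus 1 figures can be sanity-checked with simple division. This minimal sketch uses only numbers from the text. Because 300 megawatts is the facility total and "over 220,000" is a lower bound on the GPU count, the per-GPU result is an all-in upper bound that also covers cooling, networking, and host servers, not the accelerator alone.

```python
FACILITY_POWER_W = 300e6           # 300 MW total, from the text
GPU_COUNT = 220_000                # "over 220,000" GPUs, from the text (a lower bound)
HOUSEHOLDS = (200_000, 300_000)    # household-equivalent range, from the text

# All-in facility power divided across GPUs (an upper bound, since GPU_COUNT is a floor).
watts_per_gpu = FACILITY_POWER_W / GPU_COUNT

# Implied average continuous draw per household behind the city comparison.
kw_per_household = [FACILITY_POWER_W / h / 1e3 for h in HOUSEHOLDS]

print(f"~{watts_per_gpu / 1e3:.2f} kW per GPU, all-in")
print(f"{kw_per_household[1]:.1f} to {kw_per_household[0]:.1f} kW per household")
```

Put differently, the household comparison assumes each home draws about 1.0 to 1.5 kW on average, around the clock.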

Notably, Anthropic is not putting all its eggs in SpaceX’s basket. Just a month earlier, it had signed computing power supply agreements totaling approximately 5 gigawatts with Amazon and with Google/Broadcom, with the Google deal alone estimated to involve nearly USD 200 billion in investment over five years. Combined with its earlier USD 30 billion computing power contract with Microsoft Azure, Anthropic’s total computing power commitments now run to hundreds of billions of dollars. These staggering figures reveal a reality: competition among top AI models is not only a race of algorithms and data but, at bottom, a war of computing power consumption. And in this war, the most formidable players are not the model developers themselves but the 'computing power kings' with massive GPU resources and superior supply-chain integration.

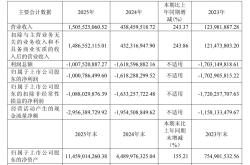

Shifting focus back to China, the player landscape in the computing power leasing market is also rapidly taking shape. Key players broadly follow three typical paths. The first consists of IDC/AIDC vendors deeply tied to core customers, such as SinoNet, Range Technology, and Aofei Data, which have established themselves through node reserves and expansion capabilities. The second comprises cloud service providers that can schedule and manage resources globally across a mix of self-built and third-party capacity; Alibaba, Baidu, and Tencent have all announced multiple rounds of price hikes for their AI computing power products, with increases ranging from 5% to 34%. The third comprises computing power leasing firms and cross-industry players with differentiated resource-integration capabilities, whose results are now growing rapidly. For example, Xiechuang Data reported net profit attributable to shareholders of RMB 750 million in the first quarter of 2026, up 343% year-over-year; Litong Electronics reported RMB 270 million, up 821% year-over-year; and Dongyangguang announced on May 5, 2026, that its controlled subsidiary had signed a computing power service procurement framework contract worth RMB 16 to 19 billion. Overseas, emerging cloud provider CoreWeave has raised its capital expenditure plan from USD 10.3 billion to USD 30 to 35 billion, with an order backlog nearing USD 96 billion, while Oracle’s 4.5 GW computing power leasing agreement with OpenAI has pushed its 2026 capital expenditures above USD 50 billion.

02

From 'Selling Computing Power' to 'Selling Tokens': The Business Model Has Changed

The rapid rise of computing power leasing from a 'niche business' to a 'core asset' hinges on a generational shift in its business logic. Five years ago, the business was essentially 'server subletting': platforms procured a batch of GPUs, listed them online with hourly pricing, and customers used them on demand, in a standardized, vending-machine-style commodity transaction. Today, however, the industry is undergoing a profound transformation.

The most visible change is in pricing. According to industry monitoring data, the price of a one-year H100 lease contract rose from a low of USD 1.70 per card per hour in October 2025 to USD 2.35 in March 2026, an increase of nearly 40%. Meanwhile, rental prices for high-end GPUs such as NVIDIA’s H200 and H100 generally rose 15% to 30% month-over-month: the H200 now costs RMB 7.5 to 8.0 per card per hour (RMB 60,000 to 66,000 per month), up 25% to 30%, while H100 monthly rentals rose to RMB 55,000 to 60,000, up 15% to 20%. More tellingly, delivery lead times have stretched to the second quarter of 2027 for the H200 and the first quarter of 2027 for the H100. Lengthening lead times are the clearest signal of how tight the market is.
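The percentage change and the hourly-to-monthly conversion can be reproduced in a few lines. This is a minimal sketch assuming a 30-day month of round-the-clock use (720 hours); the helper names are illustrative. At RMB 7.5 to 8.0 per card per hour, a single card comes to RMB 5,400 to 5,760 per month, so the RMB 60,000 to 66,000 monthly figure quoted for the H200 is evidently not a single-card price; the text does not state what billing unit it covers.

```python
HOURS_PER_MONTH = 24 * 30  # ASSUMPTION: 30-day month, 100% uptime


def pct_change(old: float, new: float) -> float:
    """Fractional change from `old` to `new`."""
    return (new - old) / old


def monthly_cost(rate_per_card_hour: float, cards: int = 1,
                 hours: int = HOURS_PER_MONTH) -> float:
    """Rental cost for `cards` GPUs billed per card-hour over one month."""
    return rate_per_card_hour * cards * hours


# H100 one-year lease, USD per card-hour: October 2025 low vs March 2026 (from the text).
h100_change = pct_change(1.70, 2.35)

# H200 at RMB 7.5 to 8.0 per card-hour (from the text), for a single card.
h200_one_card = (monthly_cost(7.5), monthly_cost(8.0))

print(f"H100 lease price change: {h100_change:+.1%}")
print(f"H200, 1 card: RMB {h200_one_card[0]:,.0f} to {h200_one_card[1]:,.0f} per month")
```

Passing `cards=` prices a multi-GPU node under the same per-card-hour assumption.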

Behind these price hikes lies a more fundamental shift in the demand structure: computing power leasing is moving from 'selling computing power' to 'selling tokens.' This seemingly simple shift has far-reaching implications. In the past, users bought 'GPU runtime,' paying by the hour, with utilization entirely up to the user. Today, as AI applications enter the inference-driven phase, developers, enterprises, and individuals using large models are essentially 'consuming tokens': every API call, every AI agent interaction, and every complex inference task consumes large volumes of tokens. Buyers no longer care how many GPU hours they use; they care only about how many tokens the model can generate and how many tasks it can complete. Correspondingly, computing power leasing firms are upgrading their delivery models from 'bare-metal computing power rental' to 'model-as-a-service with integrated computing power,' and even to 'token revenue-sharing.'
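The move from billing by GPU-hour to billing by token can be made concrete with a simple conversion. The sketch below is illustrative only: the USD 2.35 hourly rate comes from the text, but the throughput (tokens per second) and utilization values are hypothetical placeholders, since real throughput depends on the model, batch size, precision, and serving stack. It shows why, under token-based pricing, a provider's unit economics hinge on throughput and utilization rather than on hours sold.

```python
def cost_per_million_tokens(usd_per_gpu_hour: float,
                            tokens_per_second: float,
                            utilization: float = 1.0) -> float:
    """Effective GPU cost per one million generated tokens.

    tokens_per_second and utilization are HYPOTHETICAL inputs chosen by the caller.
    """
    if tokens_per_second <= 0 or not 0 < utilization <= 1:
        raise ValueError("need tokens_per_second > 0 and 0 < utilization <= 1")
    tokens_per_hour = tokens_per_second * 3600 * utilization
    return usd_per_gpu_hour / tokens_per_hour * 1_000_000


# USD 2.35/hour from the text; 1,000 tokens/s and the utilization levels are hypothetical.
for util in (1.0, 0.6, 0.3):
    cost = cost_per_million_tokens(2.35, tokens_per_second=1_000, utilization=util)
    print(f"utilization {util:.0%}: ~USD {cost:.2f} per million tokens")
```

Halving utilization doubles the effective cost per token, which is why token-based pricing tends to move utilization risk from the customer to the provider.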

However, beneath the glossy surface of this business logic lie concerns that cannot be ignored. Some have taken the current H100 rental rate of USD 2.35 per hour and an optimistic assumption of 100% occupancy to promote the myth of a 'four-month payback period,' but such calculations often hide pitfalls for startups and fringe players. Industry insiders point out that, in reality, GPU assets take about 2.5 years to fully recover their costs. A 2.5-year payback is quite respectable for a capital-intensive industry, but it depends on securing today’s scarcest high-end GPUs, having data centers with sufficient power and cooling capacity, and locking in customers with long-term contracts of 3 to 5 years rather than one-off transactions. With the four tech giants (Meta, Alphabet, Microsoft, Amazon) planning a combined USD 725 billion in AI capital expenditures for 2026, smaller computing power leasing players are inherently at a disadvantage in supply-chain negotiations. Resources and orders in this sector can be expected to concentrate further among a few top players, and a 'winner-takes-all' market structure is quietly emerging.
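Payback estimates hinge on a few inputs, which is why headline figures diverge so widely. This minimal sketch assumes simple, undiscounted payback: an all-in cost per GPU divided by annual rental margin. The USD 2.35 hourly rate is from the text; the USD 40,000 all-in cost per card and the annual operating cost are hypothetical placeholders, not figures from the source.

```python
import math

HOURS_PER_YEAR = 24 * 365  # 8,760


def payback_years(all_in_cost_usd: float, usd_per_hour: float,
                  occupancy: float, annual_opex_usd: float = 0.0) -> float:
    """Simple (undiscounted) payback period in years for one rented GPU.

    all_in_cost_usd and annual_opex_usd are HYPOTHETICAL inputs supplied by the caller.
    Returns math.inf if rental income never covers operating costs.
    """
    annual_revenue = usd_per_hour * HOURS_PER_YEAR * occupancy
    annual_margin = annual_revenue - annual_opex_usd
    if annual_margin <= 0:
        return math.inf
    return all_in_cost_usd / annual_margin


# Optimistic case: 100% occupancy, no operating costs (hypothetical USD 40,000 all-in cost).
print(f"{payback_years(40_000, 2.35, 1.0):.2f} years")
# Conservative case: 70% occupancy, hypothetical USD 3,000/year for power, cooling, and staff.
print(f"{payback_years(40_000, 2.35, 0.7, 3_000):.2f} years")
```

Occupancy and operating costs move the result as much as the headline hourly rate does; a fuller model would also discount future cash flows and allow for rental prices falling as newer GPUs arrive.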

03

Breaking Through in Computing Power: Three Critical Questions

While the previous sections discussed the 'present' of computing power leasing, what truly determines the industry’s future are three deeper, longer-term structural issues.

The first issue is the accelerating pace of generational competition in technology. NVIDIA is updating its product roadmap at a breathtaking pace: after the Blackwell platform, Vera Rubin is slated to ship in the second half of this year with a 10-fold improvement in performance per watt over the previous generation, followed by Rubin Ultra in 2027 and Feynman in 2028. Each generational leap in GPU performance means the book value of older servers can depreciate quickly, posing significant depreciation and asset-impairment risks for computing power leasing firms that have invested hundreds of billions in fixed assets. Yet precisely because of this, the leasing model shows stronger commercial resilience: only large-scale, rapidly refreshed cluster operations can spread hardware renewal costs across a large customer base, turning the downside of technological iteration into flexibility and scale advantages. Mercedes-Benz’s F1 team doesn’t skip races just because it will have a new engine next year; likewise, AI companies won’t stop leasing today’s GPUs just because next year’s hardware will be more powerful. In a world of accelerating technology, computing power leasing firms stand to gain rather than lose: tenants avoid asset depreciation risk, while lessors can absorb iteration costs through economies of scale.
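The depreciation risk described above can be illustrated with straight-line depreciation, one common accounting method. This is a hypothetical sketch, not a model of any company's books: it compares the book value of the same normalized asset (cost 100) under an assumed 5-year versus 3-year useful life, with zero salvage value. A faster product cadence effectively shortens economic life, which raises the annual depreciation charge and drives book value down sooner.

```python
def straight_line_book_values(cost: float, useful_life_years: int,
                              salvage: float = 0.0) -> list[float]:
    """Book value at the end of each year, from year 0 through useful_life_years."""
    if useful_life_years <= 0:
        raise ValueError("useful_life_years must be positive")
    depreciable = cost - salvage
    # Multiply before dividing so the final year lands exactly on the salvage value.
    return [cost - depreciable * year / useful_life_years
            for year in range(useful_life_years + 1)]


# HYPOTHETICAL: same asset (normalized cost 100) under two assumed useful lives.
for life in (5, 3):
    values = straight_line_book_values(100.0, life)
    print(f"{life}-year life:", [round(v, 1) for v in values])
```

Moving from a 5-year to a 3-year life raises the annual charge from 20 to about 33.3 per 100 of cost.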

The second issue is the tug-of-war between the 'computing power divide' and localization. A somewhat darkly humorous reality is playing out in some Chinese smart computing centers: cabinets equipped with NVIDIA GPUs run at over 90% occupancy, while those with domestic GPUs sit largely idle. The core bottleneck is not the hardware itself but the software ecosystem. CUDA has accumulated more than a decade of developer toolchains and ecosystem advantages, while domestic GPUs still lag noticeably in framework adaptation and compiler optimization. Huatai Securities calls 2026 the 'first year of domestic super-nodes': the idea is to offset single-chip gaps through system-level super-node architectures that reduce communication overhead and convert theoretical computing power into usable throughput. But this path will take time. While the computing power leasing industry enjoys the dividends of surging demand for large models, it must also confront the structural challenge of 'lacking chips and lacking soul,' that is, shortages of both advanced chips and core software. The Ministry of Industry and Information Technology has issued a notice launching a special initiative on inclusive computing power to support SME development, piloting new businesses such as 'computing power banks' and 'computing power supermarkets' that help SMEs deposit idle computing power resources. The policy aims to lower the heavy-asset barriers to computing power, but if the underlying computing base remains reliant on overseas high-end chips, so-called 'inclusive computing power' will remain deeply constrained by external supply-chain uncertainties.

The third issue is the synergy between computing power leasing and the AI transformation of real-economy industries. The current market frenzy is driven primarily by demand from internet large-model companies, with token growth concentrated in cloud applications and the AI agent sector. But the true 'age of computing power exploration' has yet to arrive: if industries like smart manufacturing, medical imaging, autonomous driving, and digital twins fully embrace AI, computing power demand could multiply several times over. Against this backdrop, the computing power leasing industry faces a choice: keep chasing top internet clients as mere 'arms dealers' of computing power, or proactively engage with other industries to make computing power true public infrastructure for the national economy. The outcomes will differ greatly. The former path offers 'quick money,' the latter 'big money,' but the latter requires patience, industry expertise, and the ability to collaborate across domains.

The era of free AI is rapidly drawing to a close, and computing power, the most essential and scarce factor of production in the AI world, has made its 'lessors' the surest beneficiaries of this industrial wave. Not everyone can profit from algorithmic breakthroughs in large models, nor can every company afford to build multi-billion-dollar computing clusters. But nearly every enterprise seeking to deploy AI applications will have to take the computing power leasing path of 'monthly payments, token-based billing, and on-demand scaling.' As the wave of paid AI services cascades from consumer (C-end) applications to business (B-end) infrastructure, computing power leasing is no longer a niche business but an indispensable 'utility' in the AI industry’s commercial closed loop.