Google I/O 2026 Preview: AI Finds Its Way into XR

05/15 2026

A Sneak Peek at the Highlights of Google I/O 2026

By VR Gyroscope Wickey

The Google I/O developer conference is a crucial window through which the tech industry can gain insight into Google's latest technology strategy.

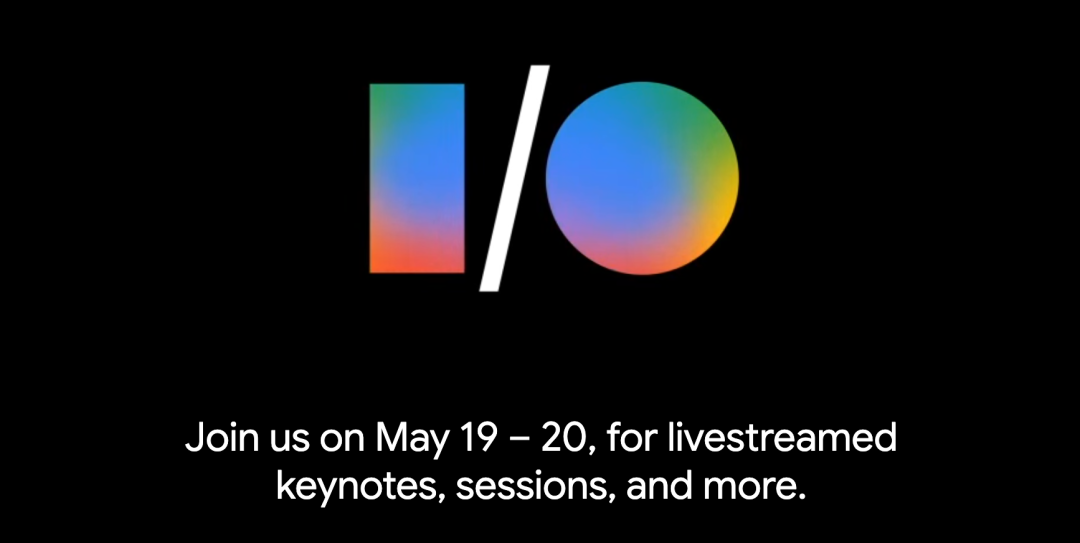

A highly anticipated event for developers and industry observers worldwide, Google I/O 2026 will be held in person in Mountain View, California, on May 19 and 20 (local time), with a global livestream. This year's conference will focus on AI-driven, system-level operation and integration, offering key insights for the XR field.

Since its inaugural event in 2008, Google I/O has evolved from a technical gathering for engineers and developers into a major tech event spanning software development, artificial intelligence, cloud computing, mobile ecosystems, and hardware innovation. The conference has consistently adhered to its “input/output” philosophy, fostering innovation through openness.

This year's conference will not only serve as a platform for technical updates to the Android system but also as a significant showcase for Google's XR ecosystem and AI hardware. Industry observers widely believe that I/O 2026 will mark a new phase for Google in immersive computing.

01

Agenda for Google I/O 2026

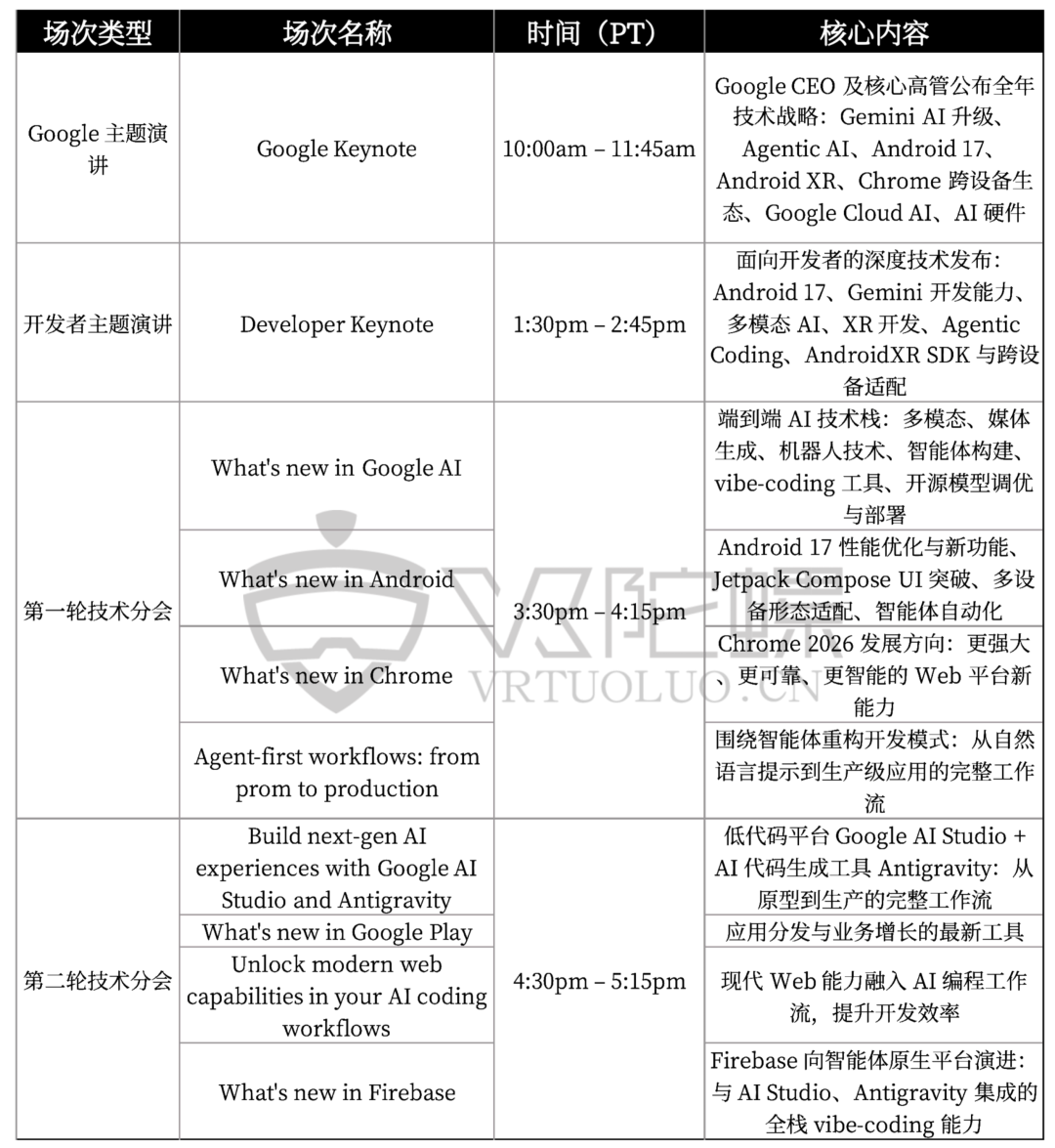

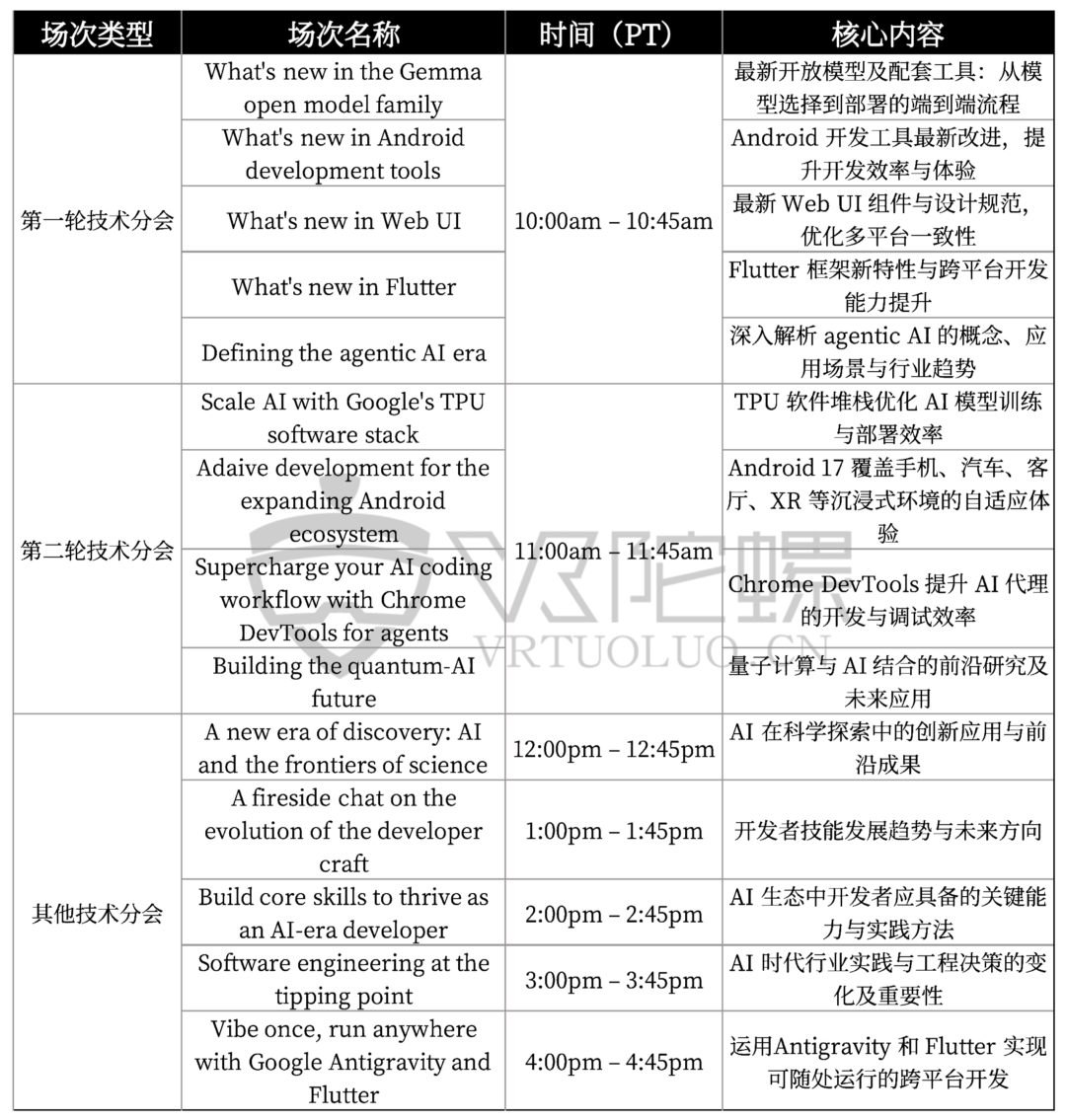

In 2026, a year of rapid AI development in which companies are vying for dominance, what will Google I/O 2026 deliver? Judging by the agenda Google has released, AI is the clear focus of this conference.

The two-day event will be livestreamed in full on Google's official YouTube channel and the I/O website. It will open on the first day with the Google keynote and the developer keynote, followed by an intensive schedule of breakout sessions and lab discussions.

Below is a summary of the officially released event agenda:

DAY 1 (May 19)

DAY 2 (May 20)

02

Highlights of Google I/O 2026

Android XR

At the Android Show event on May 12, Google announced that Android XR glasses would debut at its annual developer conference, I/O, which opens on May 19. Google has laid the groundwork for its new XR glasses through partnerships with Samsung, XREAL, Warby Parker, and Gentle Monster. These companies are expected to bring wearable devices powered by Android XR to market.

Android XR is an innovation at the foundational level: it rebuilds the Android system from architecture to display, aiming to create an open, high-performance platform for the era of spatial computing. By deeply integrating AI into computing in the 3D physical world, it is designed to deliver performance, smoothness, and an open ecosystem.

Since Android XR's debut at Google I/O 2025, the industry consensus has been that Google is developing a new “AI+XR” device logic. Today, AI is used primarily through phones or computers. With advances in XR technology and the spread of smart glasses, however, glasses are poised to become the new entry point for AI.

It is speculated that traditional AI usage is gradually being restructured, shifting from active operations on phones and computers to seamless interactions using smart glasses or MR headsets. In the future, users may no longer need to actively open applications. Instead, through glasses that are always online and environmentally aware, they will achieve more efficient and convenient natural interactions with AI.

Recently, Google's CEO and other executives have repeatedly emphasized in interviews that AI will evolve beyond chatbots to become agents capable of “understanding the real world and taking proactive actions.” This implies that Android XR is likely to serve as the physical carrier for Google's next-generation AI agents.

Gemini Intelligence

Although Google has not officially announced a specific version number for Gemini Intelligence, the official teasers and developer agenda for Google I/O 2026 suggest that the new Gemini (widely referred to in the industry as Gemini 4.0) could become the most disruptive technology of this conference. It would mark the transition of Google's AI from a tool to a system-level intelligent agent.

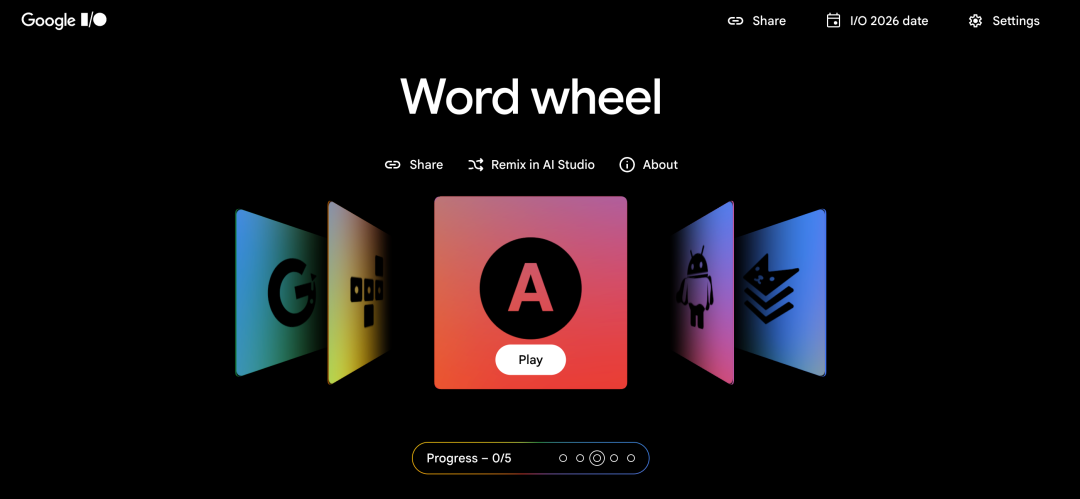

Notably, Google is continuously advancing AI towards an intelligent operating system. In the officially announced puzzle game featuring Gemini, AI assumed roles such as “stage designer,” “creative assistant,” and “program engineer,” showcasing upgrades in Gemini's underlying architecture and interaction capabilities.

More importantly, Google is deeply integrating AI with spatial computing hardware, enabling it to extend beyond the screen and gradually enter the real world. Within this framework, AI does not merely answer questions but actively participates in constructing an immersive interaction environment that is proactive, continuous, and perceptive.

This not only raises the environmental perception capabilities inherent in XR devices to a new level but also tightens their integration with AI. What this combination produces will be worth watching.

Agentic AI

Agentic AI represents the next phase in artificial intelligence development, focusing on transforming chatbots that merely generate content into autonomous agents capable of decision-making and execution. Unlike traditional generative AI, which primarily outputs information, Agentic AI can understand its environment, plan steps, and proactively complete complex processes based on goals, thereby replacing or assisting humans in workflow execution to a greater extent.

Over the past few years, Google has made significant strides in AI technologies across text, images, video, and audio. Google I/O 2026 is likely to be the first comprehensive showcase of Agentic AI. Industry predictions suggest that this launch will not only demonstrate its capabilities at the software level but may also integrate with Android XR devices to enable immersive interactions in real-world environments.

Android 17

As is widely known, Android is one of the operating systems most closely aligned with the Gemini ecosystem. Google released the first Developer Preview of Android 17 in February this year. After months of developer testing and system optimization, the official version of Android 17 is expected to be released this summer or in the third quarter, likely coinciding with the launch cycle of the next-generation Pixel devices.

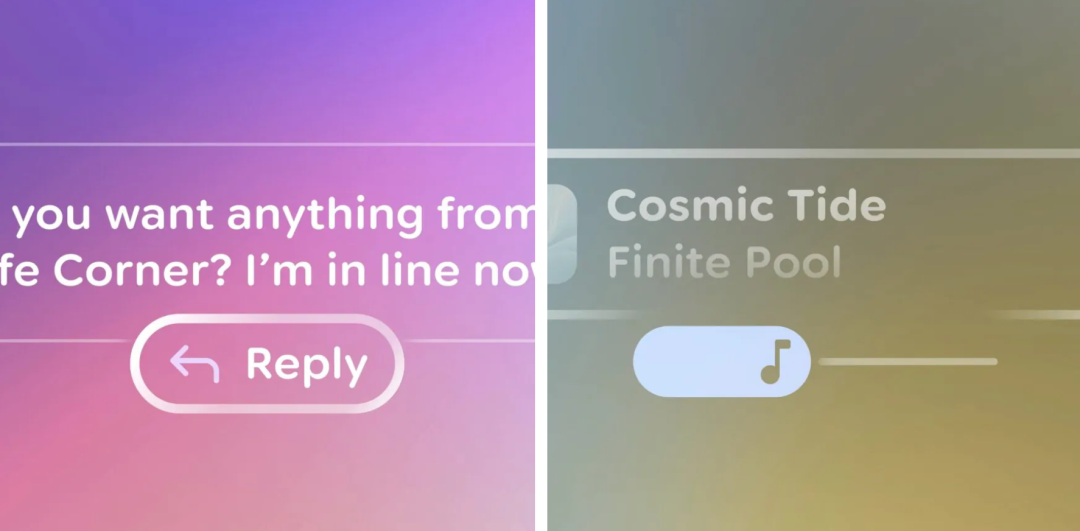

“The Android Show: I/O Edition,” released in advance on May 12, also served to some extent as a teaser for the main conference. The official video and demonstrations show that Android is accelerating the construction of a unified framework for “multi-device interconnection,” which further blurs the interaction boundaries between phones, tablets, wearables, and XR devices. Gemini is also more deeply embedded at the system level, beginning to exist as a “resident agent” on devices rather than merely as an application-level assistant.

03

Android XR's Ecosystem Debut and Hardware Preview

With AI as the overarching theme, Google I/O 2026 is also expected to give the XR industry a strong dose of confidence. With Apple's Vision Pro product team undergoing frequent adjustments and Meta significantly reducing its VR investments, the market is shifting from headsets to smart glasses. Against this backdrop, Google is making a strong entrance with the Android XR ecosystem. By forming an “Android-style” alliance with Samsung, Qualcomm, and multiple eyewear brands, Google aims to shift XR competition from hardware to ecosystems, opening up a new path for the industry.

Android XR Smart Glasses to Debut at I/O 2026

Google has confirmed that it will showcase the Android XR smart glasses ecosystem for the first time at this year's I/O. Google has already established partnerships with Samsung, XREAL, Warby Parker, Gentle Monster, and other manufacturers, and consumer-grade devices powered by Android XR are expected to launch within the next year. Unlike Apple's closed hardware approach, Google hopes to replicate the open ecosystem model of the Android phone era.

Google Is Building a Two-Form-Factor Product Line for “AI + XR”

Based on currently available information, Android XR does not target a single device but covers two distinct directions.

One category consists of lightweight AI smart glasses that focus on real-time interaction with Gemini through voice and cameras. The other comprises XR/MR devices with full spatial display capabilities, designed for productivity, immersive content, and spatial computing.

Google clearly recognizes that “all-day wearability” is closer to the consumer market than “full immersion.”

Android XR's True Goal: Liberating AI from Screens

Based on all publicly available information, Google's XR strategy differs significantly from the logic of the previous generation. Its core is no longer “building a complete virtual world” but enabling AI to enter the real world. Android XR currently emphasizes:

Egocentric Computing

Live Vision AI

Scene Understanding

Proactive AI Agents

Multimodal Interaction (Voice/Vision/Gestures)

This indicates that Google is attempting to make smart glasses the next entry point for AI, liberating AI from phone screens and transforming it into an AI assistant continuously present in the user's field of view.

Integration of Android 17 with XR Systems

The evolution of Android 17 is providing system-level support for Android XR. Unlike previous versions, which focused more on mobile device optimization, Android 17 shows a clear architectural trend toward “multi-device interconnection.”

The UI interaction style presented in the “The Android Show: I/O Edition” video also signals an important development: Android is laying the groundwork for the Android XR ecosystem at the system level.

For example, there is a greater emphasis on floating information layers, environmentally aware prompts, and continuous operation across screens. These capabilities provide the foundation for future interaction models on AI glasses, AR devices, and MR headsets.

Developer Tools and Platforms Are Fully Prepared

Google has launched the third developer preview of the Android XR SDK and released 'Glimmer', a new UI tool library designed specifically for transparent lenses. These tools aim to lower development barriers and help developers build user-friendly applications for smart glasses. The SDK and hardware development kits are expected to be ready ahead of the consumer product launch, laying the groundwork for a thriving ecosystem. More supporting resources for Android XR development are also likely to be announced at this conference.

Samsung Galaxy XR and 'Galaxy Glasses' May Be Publicly Showcased for the First Time

According to overseas media reports, Samsung is likely to showcase its Android XR smart glasses for the first time at Google I/O 2026. Based on recent leaks, the device, codenamed 'Jinju,' will feature a lightweight design similar to the Ray-Ban Meta glasses and will be equipped with a Qualcomm Snapdragon AR1 chip, a 12MP camera, and a directional speaker system, weighing approximately 50g. Compared with traditional head-mounted displays, such products clearly emphasize daily wearability.

Judging by the overall trend, the signals ahead of Google I/O 2026 are already very clear: the next phase of XR will be defined not by display technology but by how well AI adapts to it.

Over the past decade, the core competition in XR has revolved around resolution, field of view, and display capabilities. With the integration of AI, however, the focus of competition is undergoing a fundamental shift. The future market landscape will be determined by who can provide stronger AI understanding, a more complete ecosystem, and more natural human-computer interaction.

Against this backdrop, the strategic significance of Android XR lies not only in whether it produces a successful hardware product but also in whether Google successfully incorporates XR into its 'Gemini Intelligence ecosystem.' In other words, Google is attempting not just to 'enter the XR market' but to redefine XR itself through AI.