GTC 2026 | Autonomous Driving Demands a New 'Brain': Is Stacking Data and Compute No Longer Effective?

03/19 2026

Produced by Zhineng Technology

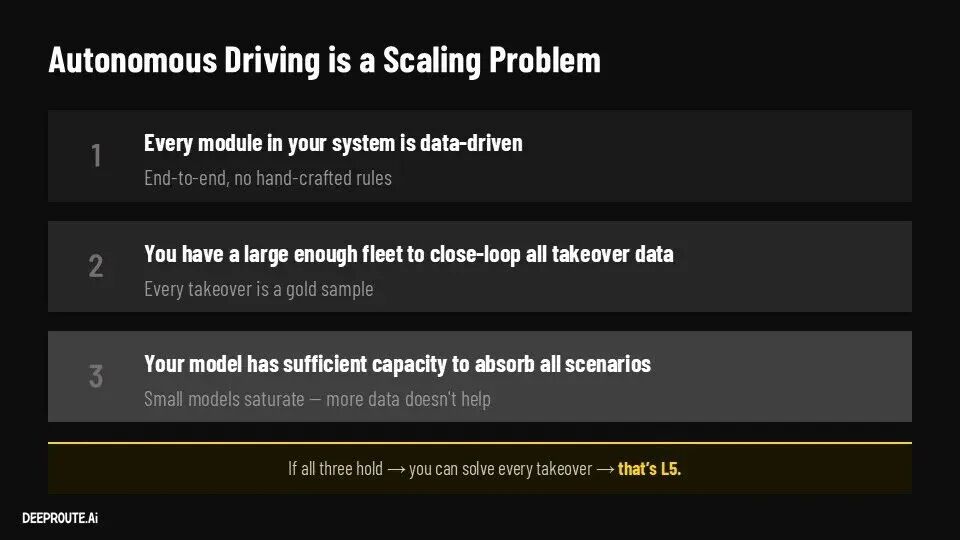

Over the past few years, the autonomous driving sector has operated on a premise that has seldom been challenged: with sufficient data and robust engineering capabilities, the system would keep getting better.

From perception to planning, and from rule-based systems to end-to-end solutions, the entire industry has been doubling down on a single path: integrating more sensors, boosting compute power, and developing more intricate software stacks. The prevailing assumption was that this was merely an 'engineering challenge' and that success was simply a matter of time and investment.

However, at GTC 2026, a presentation by Cao Tongyi, CTO of Autowise.ai, offered a different view: despite the explosion of data and the expansion of vehicle fleets, the pace of system improvement is slowing. Many firms have reached a stage where 'progress seems evident, but the user experience remains stagnant.'

The crux of the issue is not engineering—it's 'cognition.' Autonomous driving is transitioning from a systems engineering challenge to a modeling challenge.

01

Autonomous Driving Encounters the 'Cognitive Barrier'

In the last two years, end-to-end systems have become the industry consensus. The modular approach is being phased out in favor of a unified model that integrates perception, prediction, and planning and aims to approximate human driving through data-driven methods. Yet end-to-end systems have not delivered the anticipated leap forward.

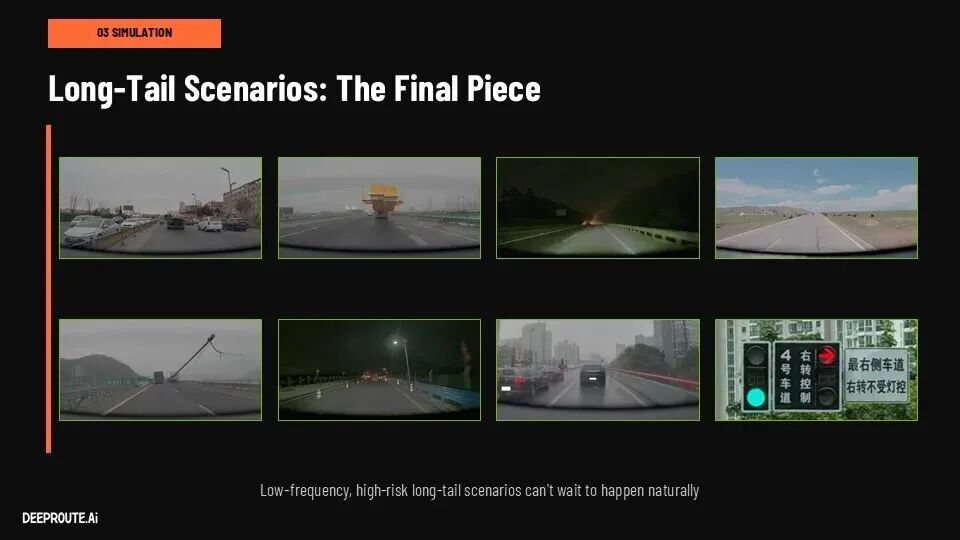

Models are advancing, but the rate of progress is decelerating. Data is mounting, but long-tail problems persist.

Many companies find their systems growing more complex while their ability to handle extreme scenarios fails to improve proportionally. This is a classic scaling problem, and it has three parts.

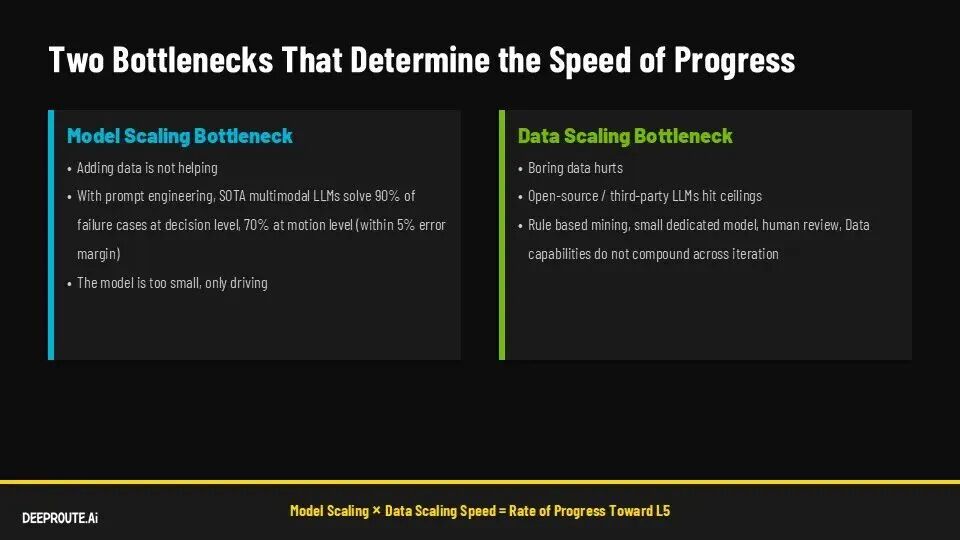

● The first challenge is the model capacity bottleneck. Autonomous driving does not operate in standardized environments but in the highly dynamic real world. Unpredictable obstacles, abnormal behaviors, and unstructured participants necessitate models with robust generalization capabilities. Current models rely more on 'memorizing distributions' than 'understanding the world,' rendering them susceptible to out-of-distribution data.

● The second issue is data efficiency. Vehicles generate vast amounts of video data daily, but only a small fraction is valuable for training. The bulk of it is 'normal driving data,' which dilutes what the model learns. Traditional data filtering relies on rules and manual labor, so each filtering pass is a one-off effort whose lessons are not accumulated for the next one.

● The third, and most critical, issue is iteration speed. The data closed loop is excessively long, often taking days or more from collection to deployment. As fleet sizes grow, this delay worsens, creating a structural contradiction: 'abundant data, but sluggish cognitive updates.'

Together, these issues form a genuine barrier—not a compute barrier, but a 'cognitive barrier.' For the first time, autonomous driving faces a problem akin to that of large language models: it's not about a lack of data but about the inability to transform data into effective cognition.

02

What Will the 40B VLA Model Change?

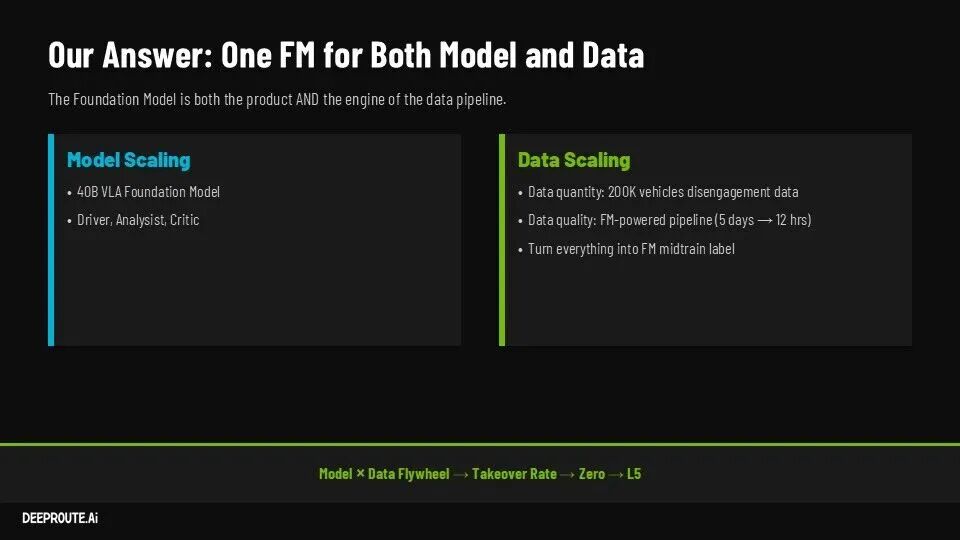

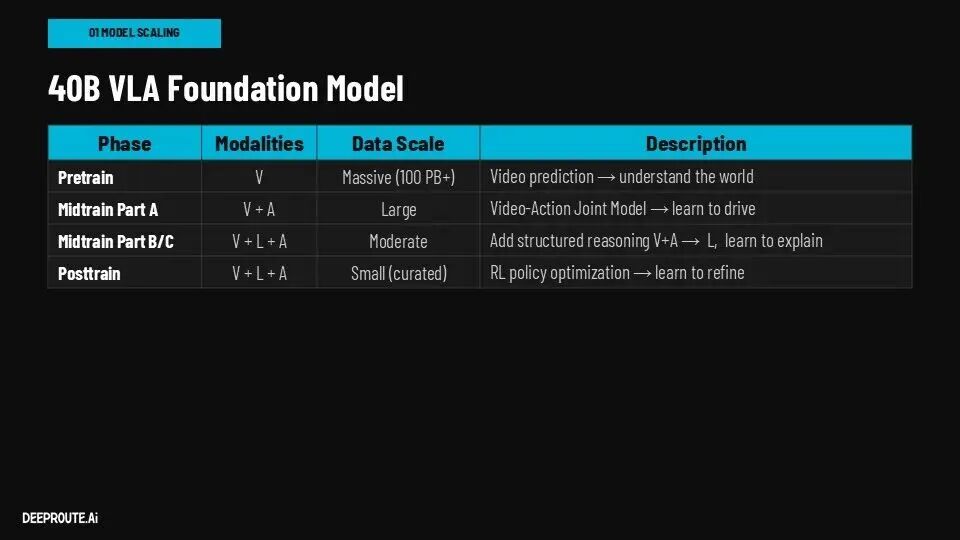

Against this backdrop, Autowise.ai's 40B-parameter VLA (vision-language-action) model represents not just a larger model but a fundamental shift.

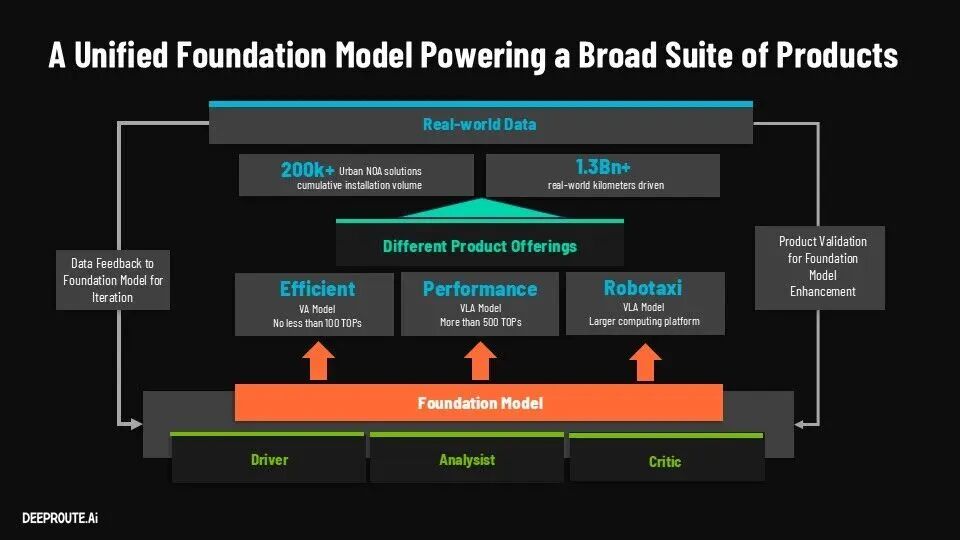

Traditional autonomous driving models function as execution systems: the environment goes in, actions come out. The VLA model, however, is designed to serve three roles simultaneously: driver, analyst, and critic. The model no longer just 'makes decisions'; it begins to 'understand decisions.'

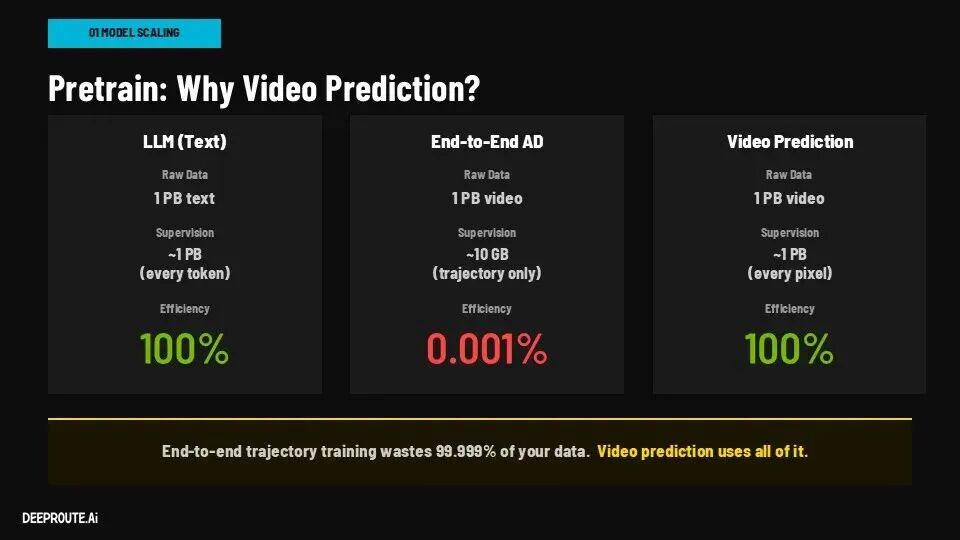

During pre-training, Autowise.ai shifts from trajectory supervision to video prediction. This seemingly simple change redirects the training goal from 'imitating behavior' to 'modeling the world.'

When the model is tasked with predicting future frames, it must learn object motion, spatial relationships, and causal logic—foundational cognitive abilities for autonomous driving.
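
The difference between the two training goals can be made concrete with a toy comparison. The sketch below is illustrative only and is not Autowise.ai's training code: the function names, the tiny one-row 'frames,' and the use of mean squared error are all assumptions chosen for readability.

```python
from typing import List, Tuple

Frame = List[List[float]]          # toy grayscale image: rows of pixel values
Waypoint = Tuple[float, float]     # (x, y) position in metres


def trajectory_loss(pred: List[Waypoint], target: List[Waypoint]) -> float:
    """Imitation objective: mean squared distance to the logged waypoints."""
    total = sum((px - tx) ** 2 + (py - ty) ** 2
                for (px, py), (tx, ty) in zip(pred, target))
    return total / len(target)


def video_prediction_loss(pred_frame: Frame, next_frame: Frame) -> float:
    """World-modeling objective: mean squared error over every pixel."""
    total, count = 0.0, 0
    for pred_row, true_row in zip(pred_frame, next_frame):
        for p, t in zip(pred_row, true_row):
            total += (p - t) ** 2
            count += 1
    return total / count


# A bright object moves one pixel to the right between two frames.
current = [[0.0, 1.0, 0.0, 0.0]]
future = [[0.0, 0.0, 1.0, 0.0]]

# A model that ignores motion and simply copies the current frame is penalized.
print(video_prediction_loss(current, future))  # 0.5
```

In this toy setup the only way to drive the second loss to zero is to predict where the object will be, which is the sense in which frame prediction forces a model to represent motion rather than just copy a logged trajectory.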

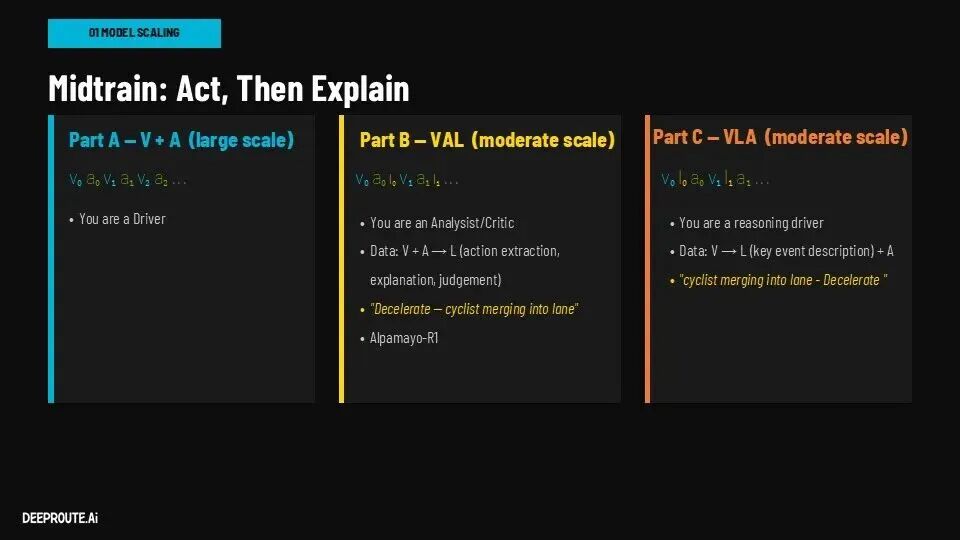

In the mid-training phase, the Driver, Analyst, and Critic roles are trained concurrently. The model must not only output driving actions but also explain the current scene and evaluate action quality. This design constructs a 'self-consistent system': one that both acts and judges its own actions.
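
One common way to train several roles at once is to sum a loss per role into a single objective. The sketch below assumes that pattern; the presentation does not say how the three roles are actually combined, and the `RoleLosses` type, the `joint_loss` function, and the weights are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RoleLosses:
    driver: float   # error on the predicted driving action
    analyst: float  # error on the scene explanation
    critic: float   # error on the predicted quality score of an action


def joint_loss(losses: RoleLosses,
               w_driver: float = 1.0,
               w_analyst: float = 0.5,
               w_critic: float = 0.5) -> float:
    """Weighted sum of the three role losses (weights are illustrative)."""
    return (w_driver * losses.driver
            + w_analyst * losses.analyst
            + w_critic * losses.critic)


print(joint_loss(RoleLosses(driver=2.0, analyst=1.0, critic=4.0)))  # 4.5
```

Because all three terms share one set of model weights, an update that improves driving while degrading the model's ability to explain or grade that driving is penalized.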

The incorporation of language further strengthens this. Through 'Learning to Explain' tasks, the model must describe scenes and decision-making rationales in natural language.

This is not only for interpretability; it also pushes the model to develop causal reasoning capabilities. In a way, this mirrors the chain-of-thought approach in large language models: the premise is that being able to explain a decision implies understanding it.

Finally, during inference, the system is abstracted into three sequential steps: Observe-Reason-Act.

Visual inputs are encoded into tokens, a reasoning step generates the decision logic, and that logic is then translated into control commands. This structure introduces, for the first time, an intermediate 'thinking process' layer in autonomous driving, rather than jumping directly from input to output.
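
The three-step structure can be sketched as three functions chained together. In the real system each step would be a learned component of the model; here hand-written rules stand in for them, and every name, the 20 m threshold, and the control values are assumptions made purely for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Control:
    throttle: float
    brake: float


def observe(detections: List[Dict]) -> List[str]:
    """Encode raw inputs into tokens (strings stand in for visual tokens)."""
    return [
        f"{d['kind']}@{'near' if d['distance_m'] < 20.0 else 'far'}"
        for d in detections
    ]


def reason(tokens: List[str]) -> str:
    """Produce an explicit, readable decision before any control is emitted."""
    if any(t.endswith("@near") for t in tokens):
        return "slow_down: an object is close ahead"
    return "keep_speed: path is clear"


def act(decision: str) -> Control:
    """Translate the decision into control commands."""
    if decision.startswith("slow_down"):
        return Control(throttle=0.0, brake=0.4)
    return Control(throttle=0.3, brake=0.0)


tokens = observe([{"kind": "pedestrian", "distance_m": 12.0}])
decision = reason(tokens)
command = act(decision)
```

The point of the intermediate `decision` value is that it can be inspected, logged, or scored on its own, which a direct input-to-output mapping does not allow.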

From a broader perspective, can this VLA model evolve from a 'reactive system' to a 'cognitive system'?

03

The Data Closed Loop Is Transformed: Autonomous Driving Acquires 'Self-Evolution' Capabilities

Changes in the model ultimately manifest in the data closed loop. In traditional systems, the loop is heavily human-driven: humans identify problems, annotate data, define rules, and iteratively optimize the model. This approach is effective initially but quickly hits bottlenecks as scale increases.

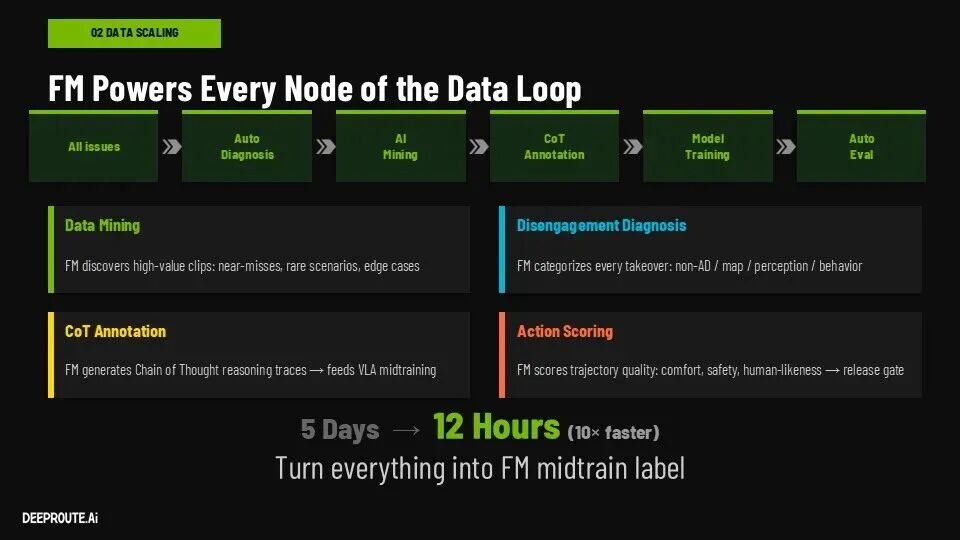

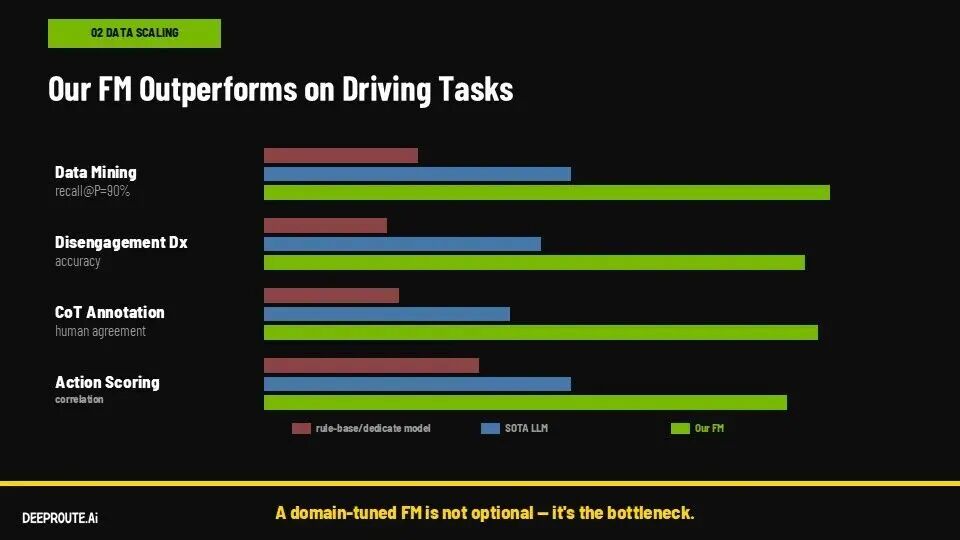

Autowise.ai's approach uses a foundation model to rebuild the entire loop, making data processing an integral part of the model's capabilities.

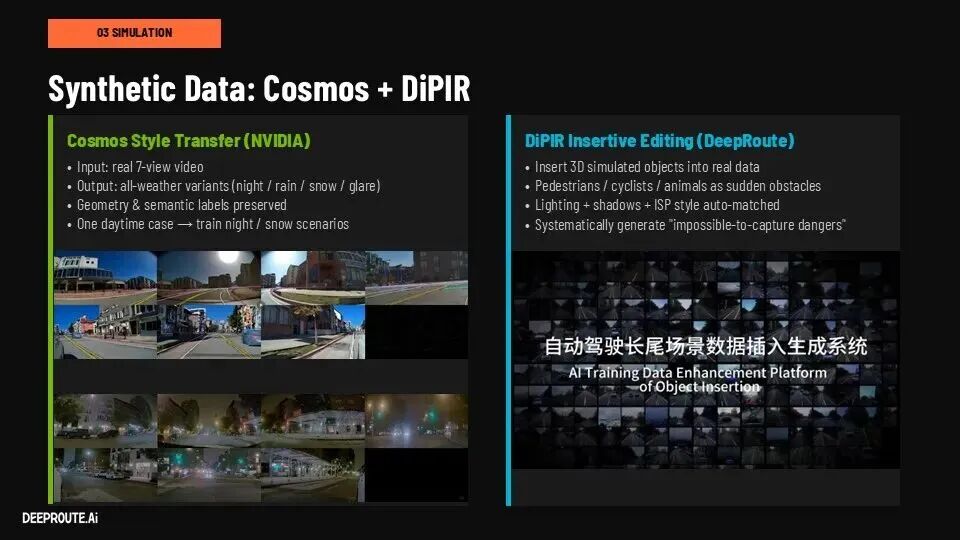

In this system, data mining no longer relies on rules but on the model's automatic identification of high-value clips. Disengagement analysis shifts from manual attribution to model-based classification and diagnosis. Annotation evolves from 'labeling answers' to 'generating reasoning processes.' Driving quality assessment is unified under the model.
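
As a rough sketch of what model-driven mining could look like, clips can be ranked by a score the model itself produces instead of being matched against hand-written rules. The `surprise_score` signal below (the gap between predicted and observed speed) and the clip fields are hypothetical stand-ins; the presentation does not specify which signal is used.

```python
from typing import Callable, Dict, List


def surprise_score(clip: Dict) -> float:
    """Hypothetical value signal: how wrong the model's prediction was."""
    return abs(clip["predicted_speed"] - clip["actual_speed"])


def mine_high_value_clips(clips: List[Dict],
                          score_fn: Callable[[Dict], float],
                          top_k: int) -> List[Dict]:
    """Keep the top_k clips ranked by a model-derived score."""
    return sorted(clips, key=score_fn, reverse=True)[:top_k]


clips = [
    {"id": "a", "predicted_speed": 10.0, "actual_speed": 10.2},  # routine
    {"id": "b", "predicted_speed": 12.0, "actual_speed": 3.0},   # hard brake
    {"id": "c", "predicted_speed": 8.0, "actual_speed": 7.0},
]
selected = mine_high_value_clips(clips, surprise_score, top_k=2)
print([c["id"] for c in selected])  # ['b', 'c']
```

Unlike a fixed rule, the ranking changes as the model improves: clips it has already learned to predict stop scoring highly, so the selection keeps moving toward what is still unfamiliar.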

These capabilities share a common model foundation, creating a true virtuous cycle: stronger models enhance data processing; more data further refines the model.

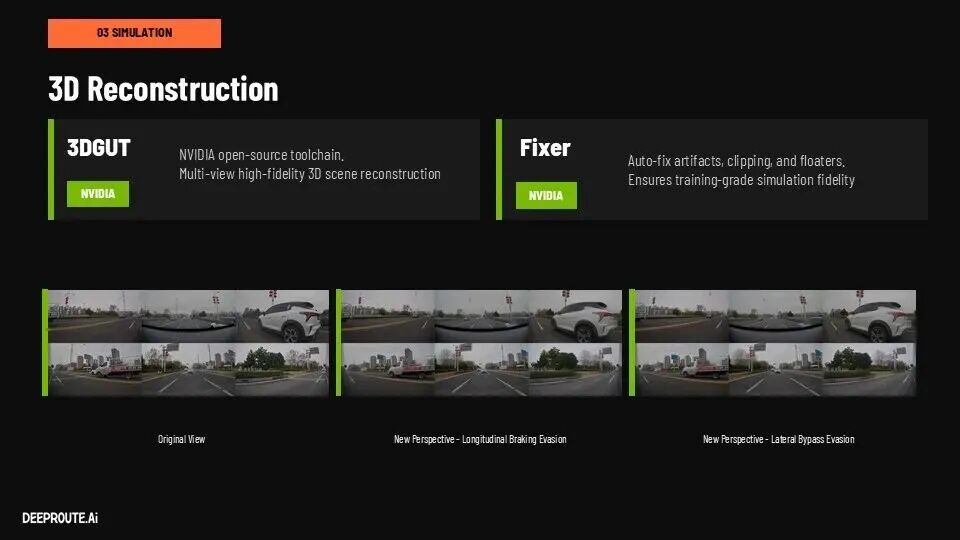

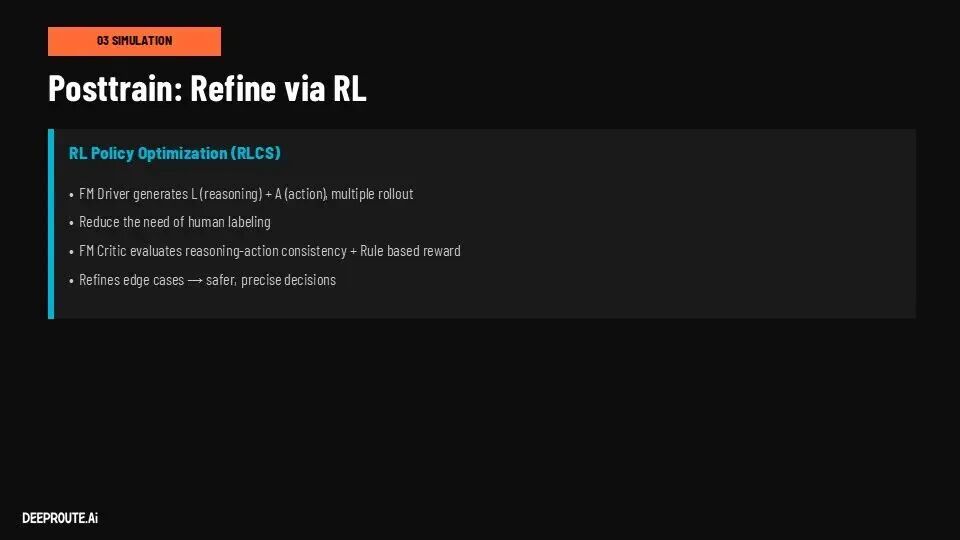

Handling long-tail problems also changes. Corner cases that once required human-defined 'correct answers' can now be resolved through simulation and reinforcement learning.

The model generates multiple decision paths in high-fidelity environments, continuously optimizing strategy distributions through self-assessment. This marks the system's first step toward true 'self-evolution.'
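
A minimal sketch of this loop, assuming the self-assessment is available as a numeric score for each candidate: the strategy distribution is shifted toward the candidates that score well. The exponentiated reweighting used here is a generic textbook-style update chosen for brevity, not the reinforcement learning method Autowise.ai uses, and the maneuver names and scores are invented.

```python
import math
from typing import Dict


def reweight_policy(probs: Dict[str, float],
                    scores: Dict[str, float],
                    lr: float = 1.0) -> Dict[str, float]:
    """Multiply each probability by exp(lr * score), then renormalize."""
    unnorm = {a: p * math.exp(lr * scores[a]) for a, p in probs.items()}
    z = sum(unnorm.values())
    return {a: v / z for a, v in unnorm.items()}


# Three candidate maneuvers for one simulated corner case, initially equal.
policy = {"yield": 1 / 3, "nudge": 1 / 3, "proceed": 1 / 3}
# Hypothetical self-assessment scores for each simulated rollout.
critic_scores = {"yield": 1.0, "nudge": 0.0, "proceed": -1.0}

updated = reweight_policy(policy, critic_scores)
```

After the update, `yield` carries the most probability and `proceed` the least, with no human having labeled a 'correct answer' for the scenario.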

Of course, engineering challenges persist. Deploying a 40B model, controlling costs, and adapting it to diverse compute platforms require techniques like distillation. But these issues no longer define the upper limit—they merely constrain implementation pace.
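
For readers unfamiliar with distillation, the standard formulation trains a small 'student' model to match the softened output distribution of a large 'teacher.' The sketch below shows that generic loss in plain Python; it is not taken from the presentation, and the logits and temperature are made-up values.

```python
import math
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Temperature-scaled softmax, shifted by the max for numerical stability."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits: List[float],
                      student_logits: List[float],
                      temperature: float = 2.0) -> float:
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


teacher = [4.0, 1.0, 0.5]   # large model's scores over three candidate actions
student = [2.0, 1.5, 1.0]   # small model's scores before distillation
loss = distillation_loss(teacher, student)
```

The loss is zero only when the student reproduces the teacher's distribution, so minimizing it transfers the large model's preferences into a model small enough for in-vehicle compute.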

Summary

Over the past decade, the industry has competed on engineering prowess: stronger sensors, more stable systems, and managing complexity within deliverable limits. After GTC 2026, the focus shifts to who can build an 'AI brain' that keeps evolving, and that shift will redraw the industry's tiers.

Some companies will continue optimizing engineering, making systems more stable and controllable but with linearly improving capabilities. Others will bet on model-driven approaches, accepting higher upfront costs but potentially achieving exponential capability gains once critical thresholds are crossed.

Autonomous driving has never evolved uniformly—it resembles AI itself. It either lingers below a threshold or, once crossed, rapidly creates gaps and rewrites the rules.