Why didn't GPT-5 bring more surprises?

08/11 2025

"We can launch much smarter models (and we will), but this model can benefit over a billion people."

Author: He Jian

Editor: Jiang Jiao

Cover: ChatGPT screenshot

Nearly two and a half years after the release of GPT-4, OpenAI finally released its latest model, GPT-5. The industry had been waiting for it for a long time, and Sam Altman had been teasing it publicly for half a year. However, the reception of this flagship model at launch was underwhelming.

When GPT-4 was released, it took competitors half a year or longer to catch up with OpenAI. GPT-5, however, did not significantly outperform mainstream models. On some benchmarks it trails Musk's Grok 4 and the newly released Claude Opus 4.1, and its context length is shorter than that of Google's Gemini 2.5 Pro.

Ordinary users are even more dissatisfied with GPT-5. They did not notice much of a performance upgrade over GPT-4o and miss the familiar GPT-4o instead. OpenAI initially removed the old models, but after strong opposition from users it had to restore GPT-4o for Plus users.

We may have to face the fact that even a company as powerful as OpenAI has to confront the limits of scaling laws. Continually expanding model size no longer brings proportional leaps in performance as it did in the past, and iteration cycles inevitably slow down.

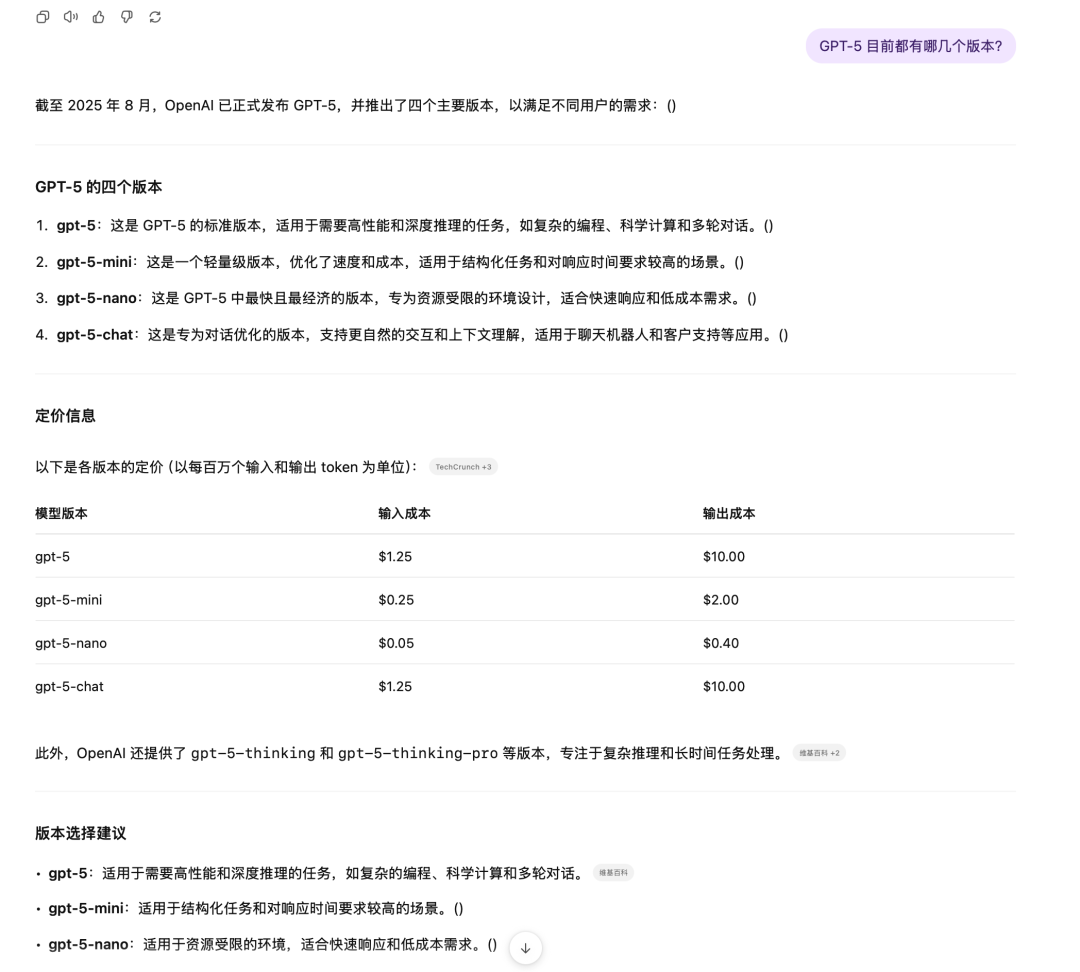

It took 29 months to get from GPT-4 to GPT-5, but this time there was no leap like the one from GPT-3 to GPT-4. Over the past year, OpenAI has released a specialized model roughly every two months, filling the gap between model generations with a dazzling array of variants: the o series for reasoning, smaller mini models, and more powerful Pro versions.

Like the latest GPT-5, which emphasizes reliability and ease of use, these updates are engineering innovations made at a time when performance gains are increasingly expensive and scarce. GPT-5 has certainly become more user-friendly and reliable, but it also offers fewer and fewer surprises.

Fortunately, users do not always need such powerful models. In fact, most ordinary users use large models merely for basic Q&A or simply as emotional companions.

ChatGPT was the fastest app to reach 100 million users. It now has 700 million weekly active users, meaning nearly one-tenth of the global population uses ChatGPT, but most of them use only the free basic model. According to a report by The Information in April this year, ChatGPT has about 20 million paid subscribers.

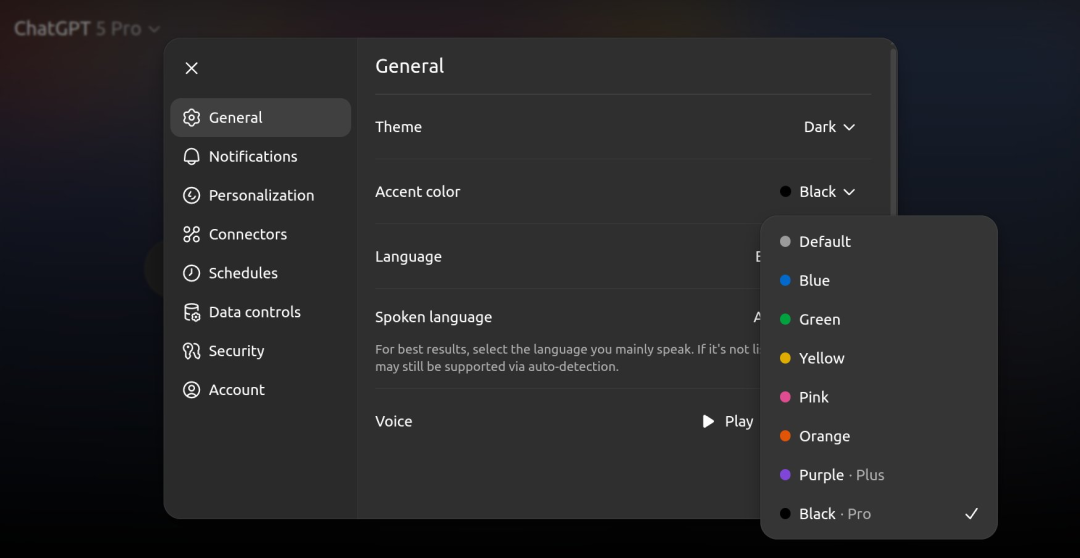

GPT-5 is now open to all users. The most noticeable change on opening ChatGPT is that the conversation interface has become more colorful: users can now customize the color of the conversation bubbles - but purple is limited to Plus users, and Pro users can use the more prestigious black. OpenAI, which has always distinguished user tiers by model capability, has finally learned the essence of QQ membership.

ChatGPT adds custom color features

OpenAI has not disclosed the parameter size of GPT-5. In an interview with CNBC after the launch event, Sam Altman said that OpenAI will continue to prioritize investment in training and computing power and is willing to endure losses for a longer period.

No decisive performance lead, but still the most comprehensive model

Musk was probably the most excited person at the GPT-5 launch event. Before the event ended, he announced Grok 4's victory on X.

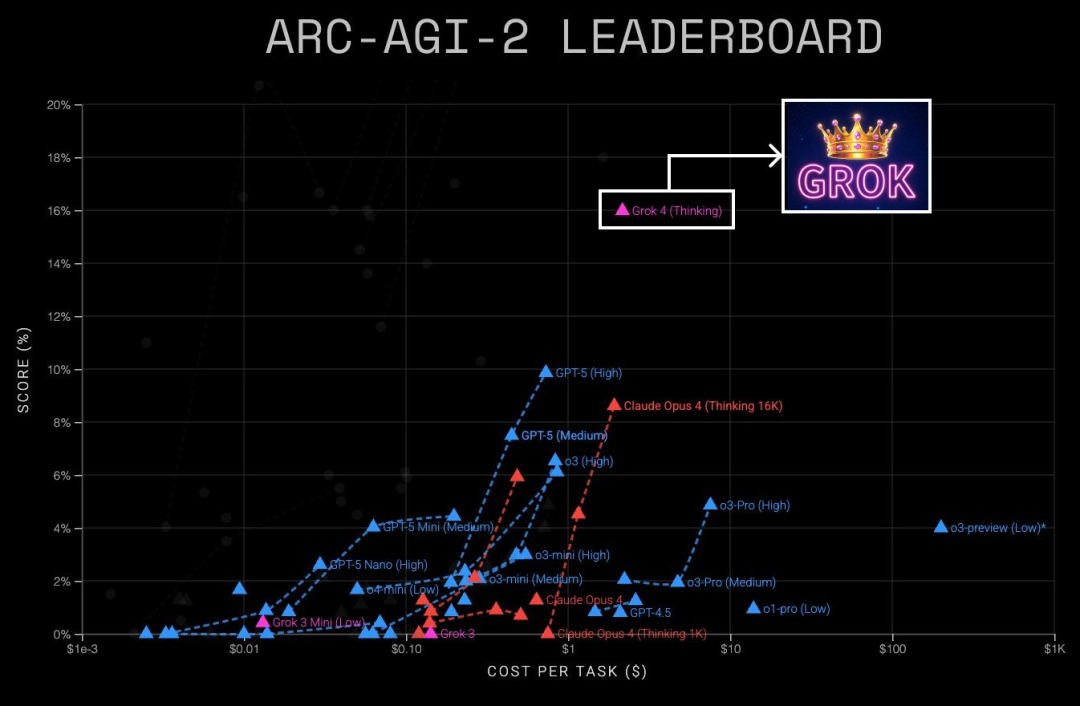

In the Humanity's Last Exam test, GPT-5 Pro achieved an accuracy rate of 42.0% with tools enabled, slightly lower than the 44.4% of the Grok 4 Heavy model. In the ARC-AGI-2 benchmark, Grok 4 (Thinking) scored 16.0%, while GPT-5 (High) scored only 9.9%.

Musk specifically pinned the comparison of test results between the two on X, saying, "In one sentence: Grok 4 Heavy from two weeks ago is smarter than GPT-5 now." He then announced that Grok 5, to be released by the end of the year, will be even more powerful.

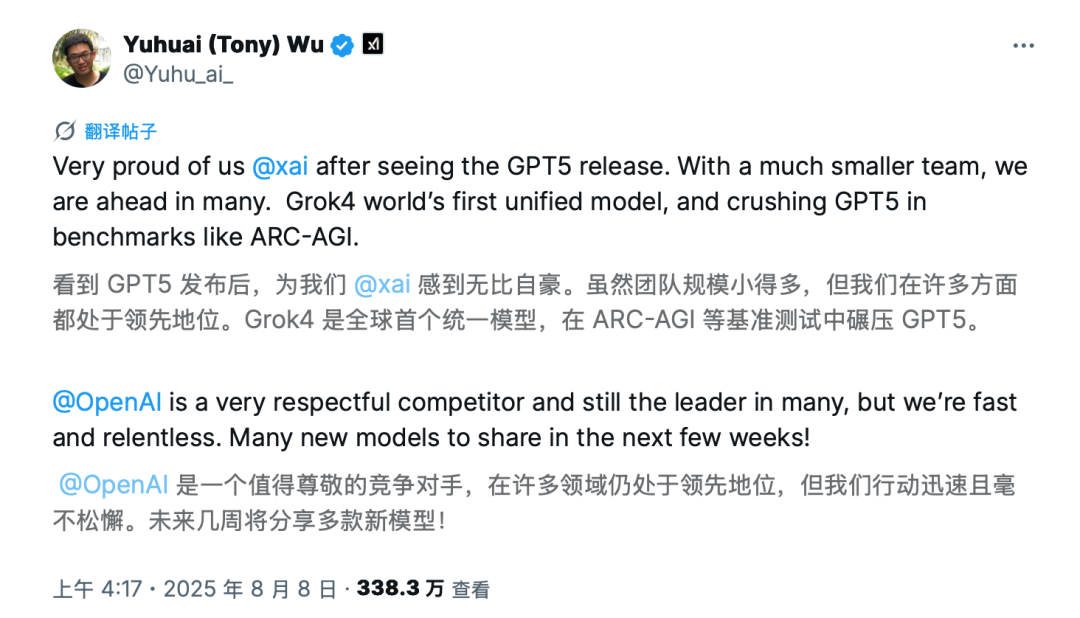

Wu Yuhuai, co-founder of xAI, also expressed on X that the xAI team felt very proud after the release of GPT-5. "Despite being much smaller in team size, we are leading in many aspects." He said that xAI will release more new models in the coming weeks.

Wu Yuhuai's statement on X

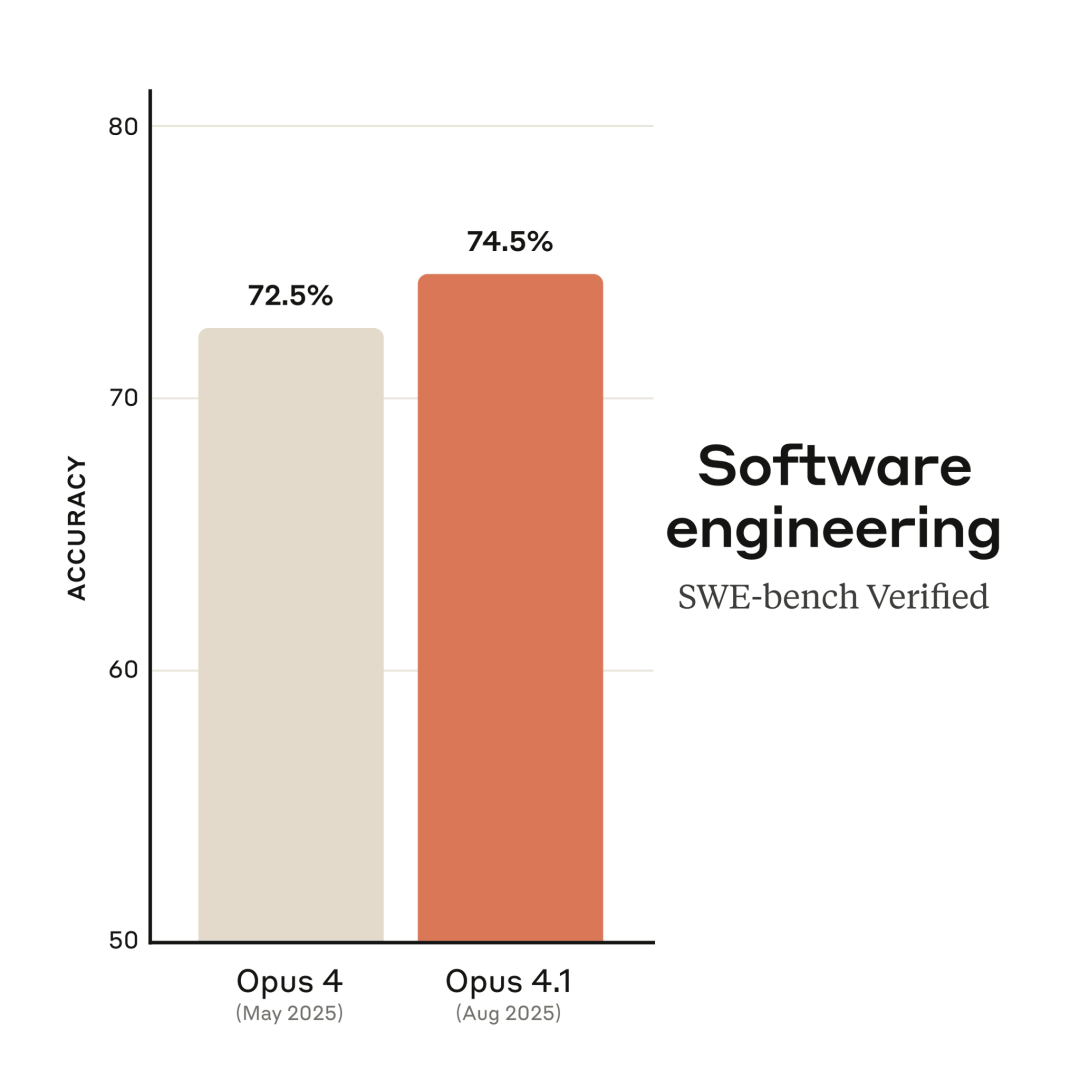

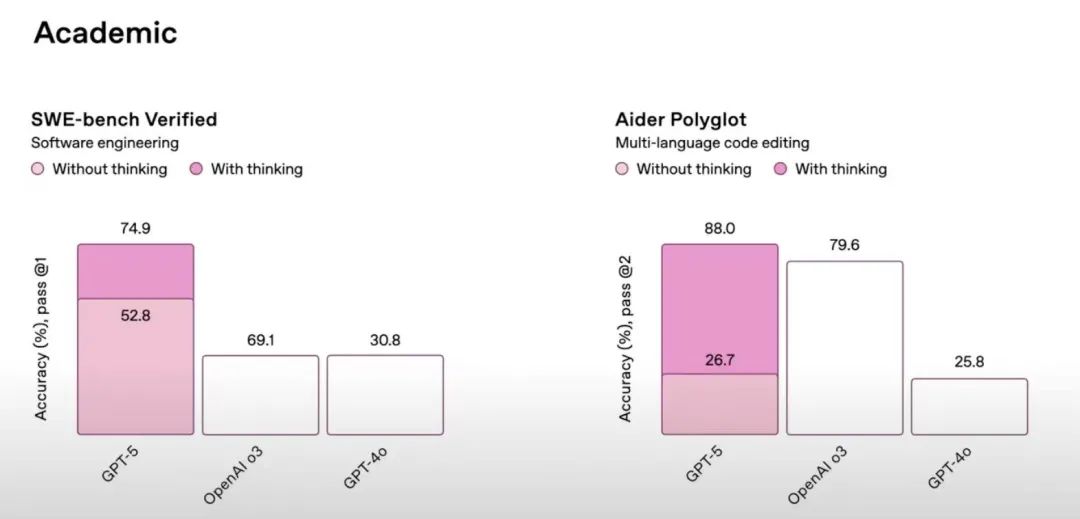

Claude Opus 4.1, released two days before GPT-5, also surpassed GPT-5 in some tests. In the SWE-bench Verified test, GPT-5 with deep thinking mode enabled scored 74.9%, only 0.4 percentage points higher than Claude Opus 4.1 - and that is without Claude Opus 4.1 enabling deep thinking.

Without deep thinking enabled, GPT-5 scored nearly 30% lower than Claude Opus 4.1. Perhaps out of respect for his former employer, Anthropic founder Dario Amodei did not publicize this lead the way Musk did.

Anthropic states in the product documentation that deep thinking is not enabled in the SWE-bench Verified test

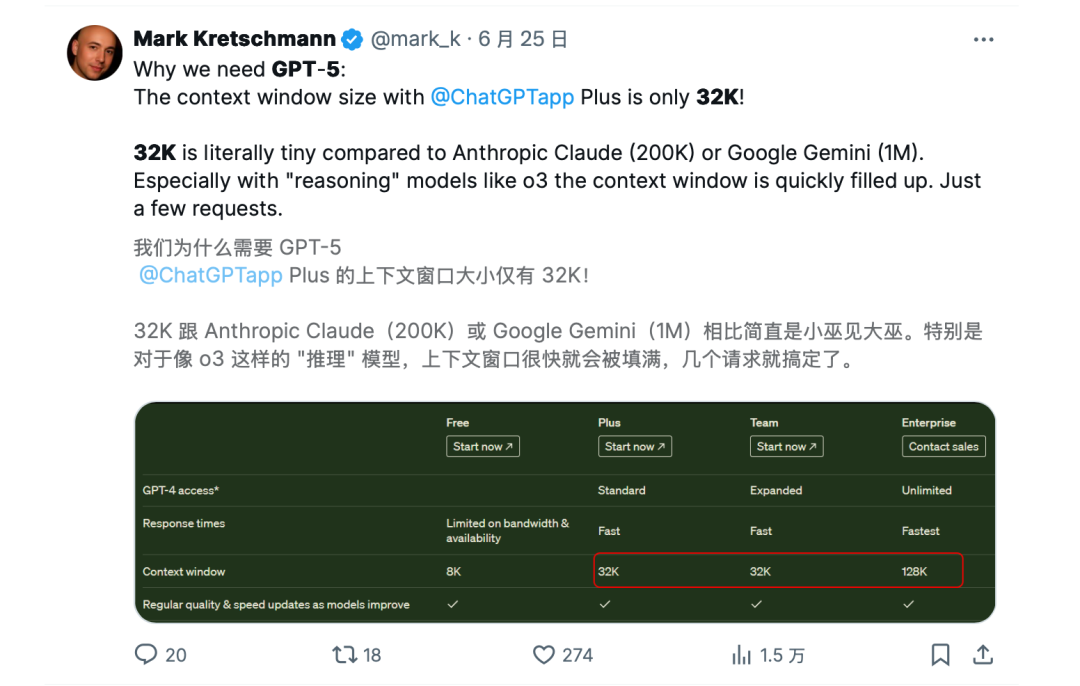

Compared to the limited performance upgrades, the cost reduction of GPT-5 is more prominent. The input cost of GPT-5 is only $1.25 per million tokens, about half that of GPT-4o, and the nano version is as low as $0.05 per million tokens.

In comparison, the input price of Claude Opus 4.1 is as high as $15 per million tokens, and that of Grok 4 is $3 per million tokens. Even though other models have certain advantages in some test scenarios, GPT-5 is still one of the most cost-effective and comprehensive models on the market.
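To make the price gap concrete, here is a minimal sketch that turns the per-million-token input prices quoted above into the input cost of a single workload. The 200-million-token workload and the function names `input_cost_usd` and `price_ratio` are illustrative assumptions; output-token prices, which this article does not quote, are ignored.

```python
# Input prices in USD per million tokens, as quoted in this article.
INPUT_PRICE_PER_MILLION = {
    "GPT-5": 1.25,
    "GPT-5 nano": 0.05,
    "Grok 4": 3.00,
    "Claude Opus 4.1": 15.00,
}


def input_cost_usd(model: str, input_tokens: int) -> float:
    """Return the input-side cost in USD for the given number of tokens."""
    return INPUT_PRICE_PER_MILLION[model] * input_tokens / 1_000_000


def price_ratio(model: str, baseline: str = "GPT-5") -> float:
    """Return how many times more expensive `model` input is than `baseline`."""
    return INPUT_PRICE_PER_MILLION[model] / INPUT_PRICE_PER_MILLION[baseline]


if __name__ == "__main__":
    workload = 200_000_000  # hypothetical monthly input volume, in tokens
    for name in INPUT_PRICE_PER_MILLION:
        cost = input_cost_usd(name, workload)
        print(f"{name:16s} ${cost:10,.2f}  ({price_ratio(name):.2f}x GPT-5)")
```

On input price alone, Claude Opus 4.1 works out to 12 times the cost of GPT-5, and Grok 4 to 2.4 times.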

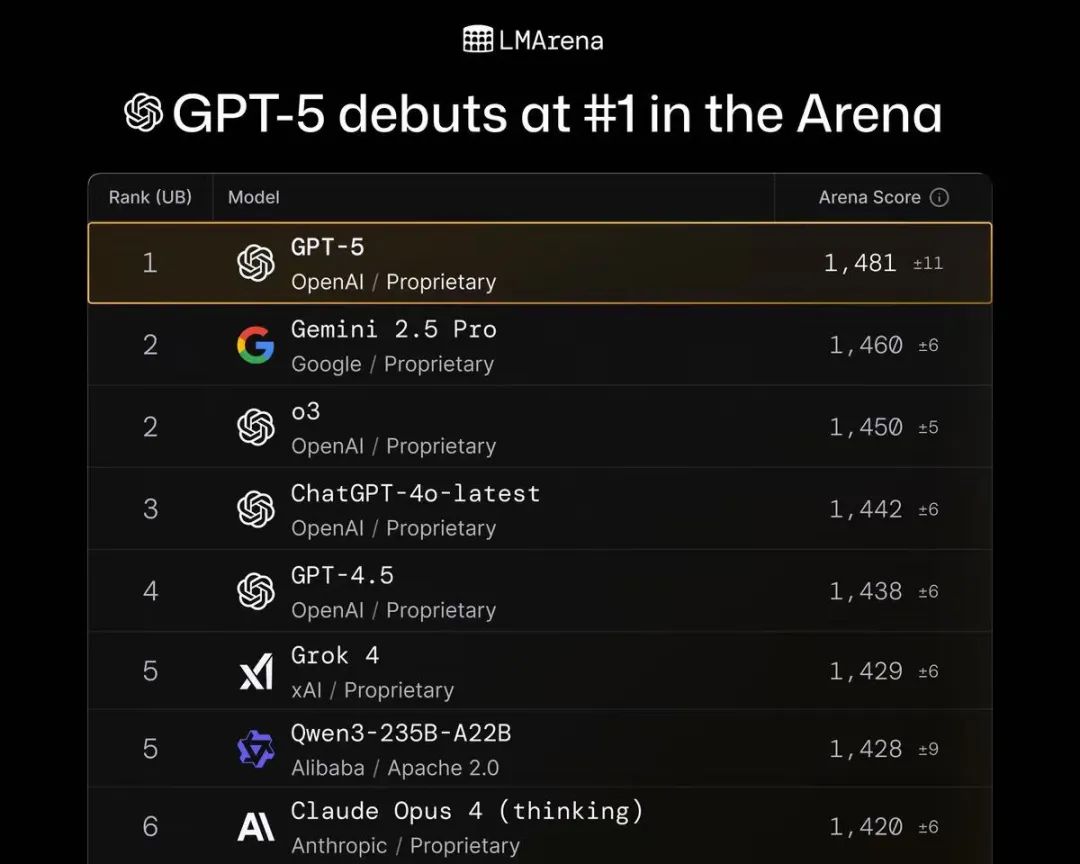

In the latest "Arena" rankings on the neutral evaluation platform LMArena, GPT-5 still ranks first in all evaluation items, including text understanding, programming, vision, and other categories. "GPT-5 tops the LMArena rankings with the highest score ever," LMArena described.

LMArena rankings

OpenAI also placed more emphasis on industry applications at the launch event. The performance overview at the beginning was brief, and more time was given to GPT-5's upgrades in specific areas such as programming, writing, and healthcare, the three core scenarios in which people use ChatGPT.

Programming in particular took up at least half of the nearly one-and-a-half-hour event. "GPT-5 is the best programming model in the world," said Greg Brockman, President of OpenAI.

OpenAI not only invited Michael Truell, founder and CEO of AI programming startup Cursor, to demonstrate on stage but also listed test evaluations and praise from executives of 22 AI companies, including Windsurf, JetBrains, Manus, and Genspark, on its official website. This was uncommon in past OpenAI product updates.

GPT-5 may be one of the fastest OpenAI models to reach enterprise customers. Before the launch event ended, Satya Nadella, CEO of Microsoft, announced that multiple Microsoft products had already integrated GPT-5, and several companies, including Cursor, Manus, and Notion, also announced that they had completed integration.

More reliable and easier to use

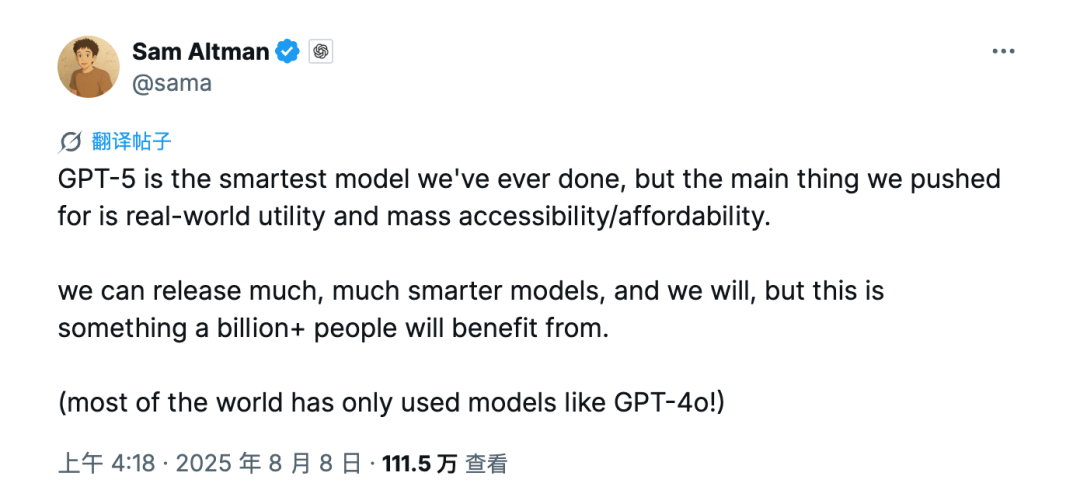

After the release of GPT-5, Sam Altman emphasized that it is the smartest model OpenAI has developed so far, but that the company's core pursuit is real-world practicality and large-scale accessibility and affordability.

According to the OpenAI website, GPT-5 is a more intelligent and more widely applicable model. "GPT-5 not only surpasses previous models in benchmarks and responds faster, but more importantly, its answers to real-world questions are more useful." OpenAI highlighted GPT-5's progress in reducing hallucinations, improving instruction following, and reducing sycophancy.

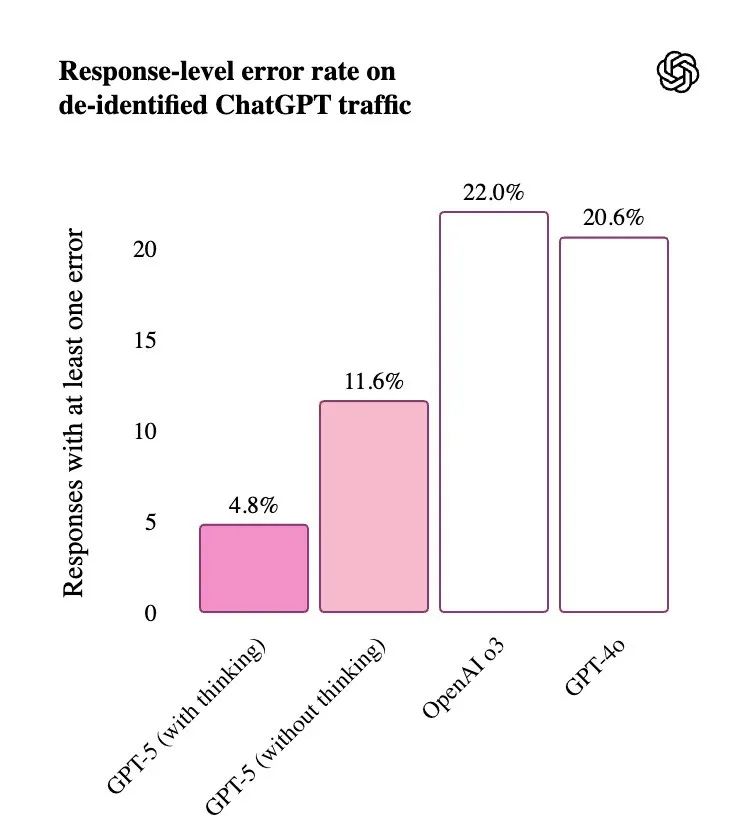

For example, with web search enabled, the probability of factual errors in GPT-5 is nearly half that of GPT-4o. In deep thinking mode, GPT-5's factual error rate is about 80% lower than o3. GPT-5 will also be "more honest" with users. It can more accurately identify tasks that cannot be completed and honestly express its limitations.

GPT-5 compared with o3 and GPT-4o models

You may have encountered many reasoning models blatantly lying, especially DeepSeek-R1 - it is now the most widely used reasoning model in China, but it is also one of the models with the highest degree of hallucination. In the past six months, AI fake articles in the style of DeepSeek have almost swept the entire Chinese internet, and even many professional media outlets have not been spared.

Examples include the fake article about "Trump falling in love with the White House cleaning lady" raking in $150 million, and the false news that Hong Kong Baptist University had revoked a Wuhan University student's doctoral admission. These AI-generated fake stories were widely shared and reported by domestic media.

Part of the reason is that large models used to rely more heavily on a single reward signal from reinforcement learning from human feedback (RLHF). When information is insufficient or a problem is unsolvable, this mechanism tends to push the model to cater to user expectations and produce false content.

OpenAI has added a more refined, multi-dimensional optimization mechanism to GPT-5, such as multi-objective reward signals: even if the model cannot derive an answer, it receives positive feedback for clearly expressing uncertainty. Another measure is monitoring the chain of thought (CoT) during reasoning to identify and correct fabrications or logical flaws in real time.
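The incentive behind rewarding honest uncertainty can be illustrated with a toy expected-reward calculation. This is only a sketch under assumed numbers, not OpenAI's training setup: the reward values, the `RewardScheme` type, and the function names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RewardScheme:
    """Hypothetical rewards for the three outcomes of one question."""
    correct: float
    wrong: float
    abstain: float  # reward for clearly saying "I don't know"


# Single-signal scheme: only a correct answer earns anything,
# so a wrong guess costs no more than admitting uncertainty.
SINGLE_SIGNAL = RewardScheme(correct=1.0, wrong=0.0, abstain=0.0)

# Multi-objective scheme (assumed values): fabrication is penalized and
# clearly expressed uncertainty earns a small positive reward.
MULTI_OBJECTIVE = RewardScheme(correct=1.0, wrong=-1.0, abstain=0.2)


def expected_answer_reward(p_correct: float, scheme: RewardScheme) -> float:
    """Expected reward of answering when the answer is right with p_correct."""
    return p_correct * scheme.correct + (1.0 - p_correct) * scheme.wrong


def best_action(p_correct: float, scheme: RewardScheme) -> str:
    """Return the action with the higher expected reward."""
    if expected_answer_reward(p_correct, scheme) > scheme.abstain:
        return "answer"
    return "abstain"


if __name__ == "__main__":
    for p in (0.1, 0.5, 0.9):
        print(p, best_action(p, SINGLE_SIGNAL), best_action(p, MULTI_OBJECTIVE))
```

With these assumed values, guessing is the better move under the single-signal scheme whenever the model has any chance of being right, while under the multi-objective scheme abstaining wins until the model is more than 60% likely to be correct.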

OpenAI has also added a new safe completion mechanism (Safe completions) to GPT-5, so that the model no longer simply answers or refuses when facing dangerous questions. For example, when you want to learn about making explosives, GPT-4o either refuses to answer or gives you detailed steps, while GPT-5 will inform you that it cannot provide specific steps for safety reasons, but it can introduce the history, chemical properties, and industrial uses of TNT to you.

Compared with previous models, which tended to flatter users indiscriminately, GPT-5 is also more neutral: it caters less to users, uses fewer emojis, and expresses itself more subtly and thoughtfully. "It feels more like chatting with a thoughtful friend with PhD-level intelligence than talking to an AI," OpenAI wrote in the product documentation. But this has, to some extent, caused dissatisfaction among users who are accustomed to previous models. OpenAI has added four customizable styles for GPT-5 and promised to add more personalization options in the future.

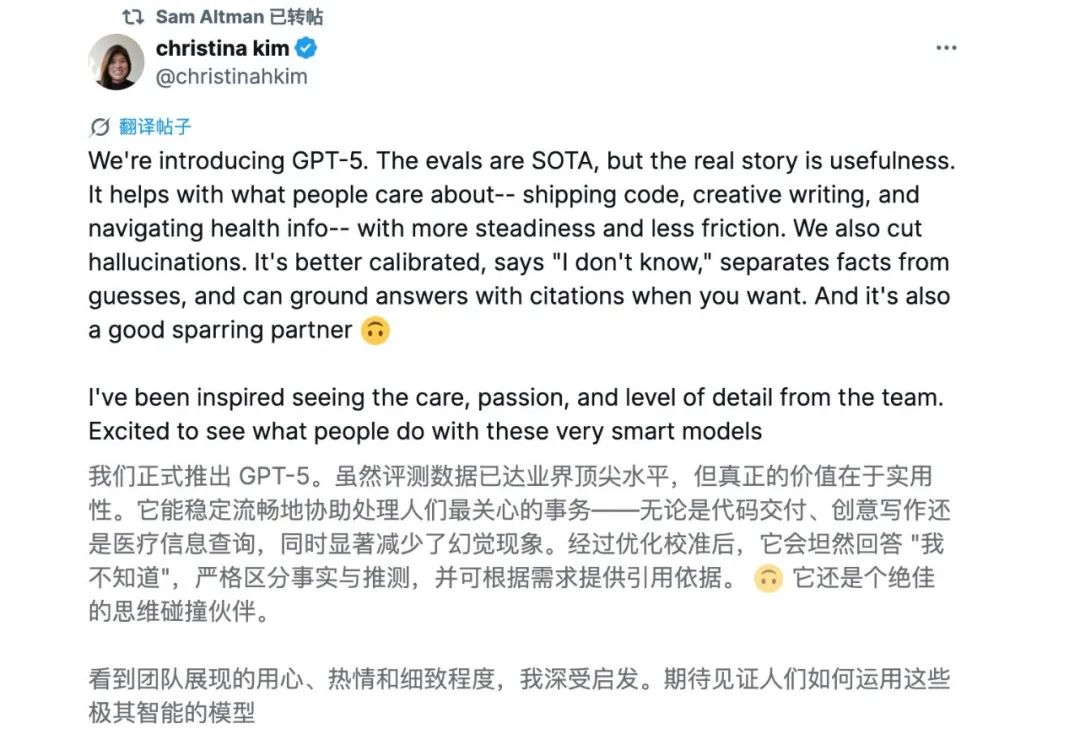

In short, these updates are all about enhancing the reliability and ease of use of the model, allowing users to more confidently introduce AI into their workflows. Christina Kim, a researcher at OpenAI, said on X that although GPT-5's performance reaches the top level in the industry, its true value lies in practicality. "It is better calibrated, says 'I don't know,' can distinguish between facts and guesses, and can provide citation sources to support answers when you need them."

For many users, the more significant upgrade in GPT-5 is that they can now use ChatGPT's reasoning capabilities for free. GPT-5 offers lower costs, higher accuracy, and faster speeds, and it is open to all users for free, with higher quotas for subscribers. This inclusive strategy may also limit performance: OpenAI originally planned to launch a version supporting a context window of up to 1 million tokens but ultimately abandoned it because of computational cost constraints.

"We can launch much smarter models (and we will), but this model can benefit over a billion people," said Sam Altman. "Most people in the world may have only used models similar to GPT-4o." "For most ChatGPT users, this is their first exposure to reasoning capabilities," said Nick Turley, OpenAI's Vice President.

Sam Altman's post on X

However, during the launch event that emphasized model accuracy, multiple charts from OpenAI contained basic errors. For instance, in a chart comparing GPT-5's thinking mode with o3's "code deception rate," the bar representing 50% was less than half the length of the bar for 47.4%. Sam later explained that the data itself was accurate but that the chart was mistakenly displayed during the livestream. "The staff were very tired from staying up late to work overtime, and human errors are inevitable. There are too many aspects to coordinate in the final hours before the live broadcast."
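The kind of error described above is easy to catch mechanically, because in a bar chart with a zero baseline each bar's length should be proportional to its value. The following is a minimal sketch of such a check; the function name, the pixel heights, and the 2% tolerance are hypothetical, and only the two percentages come from the chart described in this article.

```python
def bars_are_proportional(values, lengths, tolerance=0.02):
    """Check that bar lengths scale linearly with their values.

    Assumes a zero baseline and positive values. Each length/value ratio
    must stay within `tolerance` (relative) of the first bar's ratio.
    """
    if len(values) != len(lengths) or not values:
        raise ValueError("values and lengths must be non-empty and equal in size")
    reference = lengths[0] / values[0]
    return all(
        abs(length / value - reference) <= tolerance * reference
        for value, length in zip(values, lengths)
    )


if __name__ == "__main__":
    values = [50.0, 47.4]         # percentages quoted for the two bars
    drawn_wrong = [90.0, 200.0]   # hypothetical heights: the 50% bar is under half the 47.4% bar
    drawn_right = [200.0, 189.6]  # hypothetical heights scaled linearly from the values
    print(bars_are_proportional(values, drawn_wrong))
    print(bars_are_proportional(values, drawn_right))
```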

Multiple chart errors in the OpenAI launch event

Why do ordinary users prefer the old models?

While professional developers, especially software professionals, have praised GPT-5, most ordinary users have complained about the updates to this flagship model.

Unlike previous OpenAI services, which offered multiple different models, GPT-5 adopts a unified model strategy. The model autonomously decides whether to invoke deep thinking mode and which size of model should respond in the backend. "It aims to provide you with the perfect answer through just the right amount of thinking," said an OpenAI researcher at the launch event.

However, issues arose with this design from the outset. GPT-5's responses and reactions in most scenarios were inferior to those of the old models. Sam Altman later explained that the Autoswitcher had been down for most of the day due to a malfunction, preventing the system from calling higher-performance reasoning modes as needed. He stated that the issue had been fixed and promised to adjust and intervene in model switching to make it easier for users to obtain the correct model.

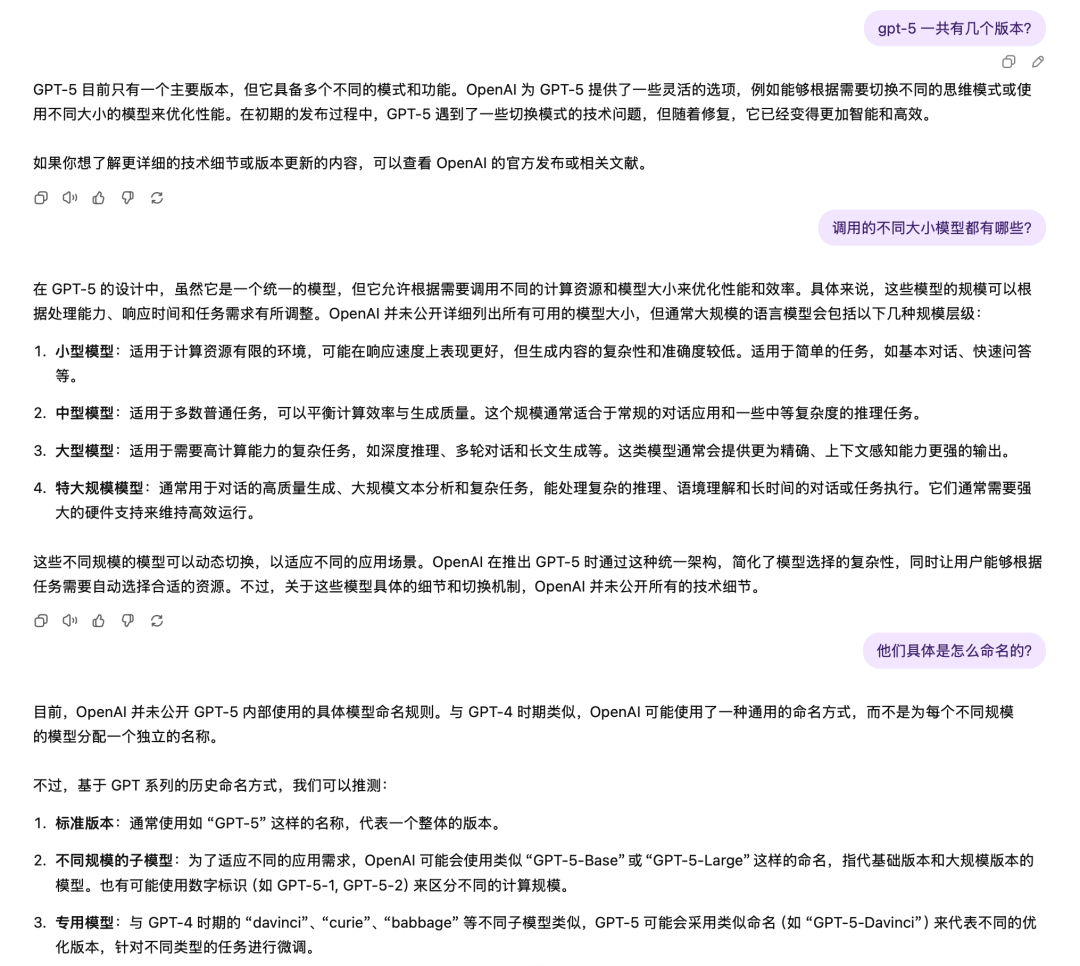

But a practical comparison by "Shangshan" between the current GPT-5 and GPT-4o models revealed that GPT-5 still performed worse than GPT-4o in some simple questions. For example, when asked about the different versions of GPT-5, GPT-5 could not provide an accurate answer. There were also a large number of users on social platforms who emphasized that GPT-5's answer quality was inferior to GPT-4o even after Sam said the issue had been fixed.

GPT-5 vs. GPT-4o answer comparison (top: GPT-5, bottom: GPT-4o)

OpenAI's original intention with the unified model was to spare users the burden of choosing. Since the GPT-4 era, OpenAI has moved away from its previous practice of releasing a single general model and has instead launched more specialized models for specific scenarios. Product naming also became confusing, with reasoning models running from OpenAI o1 to o3 alongside GPT-4o, which stands for multimodal capabilities. Before the GPT-5 update, as many as five models were available in ChatGPT, which made the lineup objectively harder for users to understand.

OpenAI Major Model Release Timeline/GPT-5 Chart

"This is the first time users don't need to choose between different models or even consider model names," said Elaine Ya Le, the OpenAI researcher who leads the team responsible for GPT-5's automatic model switching and who introduced the feature at the launch event.

However, most ordinary users may still struggle to accept OpenAI's unified model approach. GPT-5 has now become the default model for ChatGPT, but in the interface users cannot tell whether ChatGPT is invoking the standard GPT-5 or the mini version. Compared with the previous lineup, where users had multiple options, the usage quota actually available to users has decreased after the unification, especially with the elimination of the reasoning mode for the mini model.

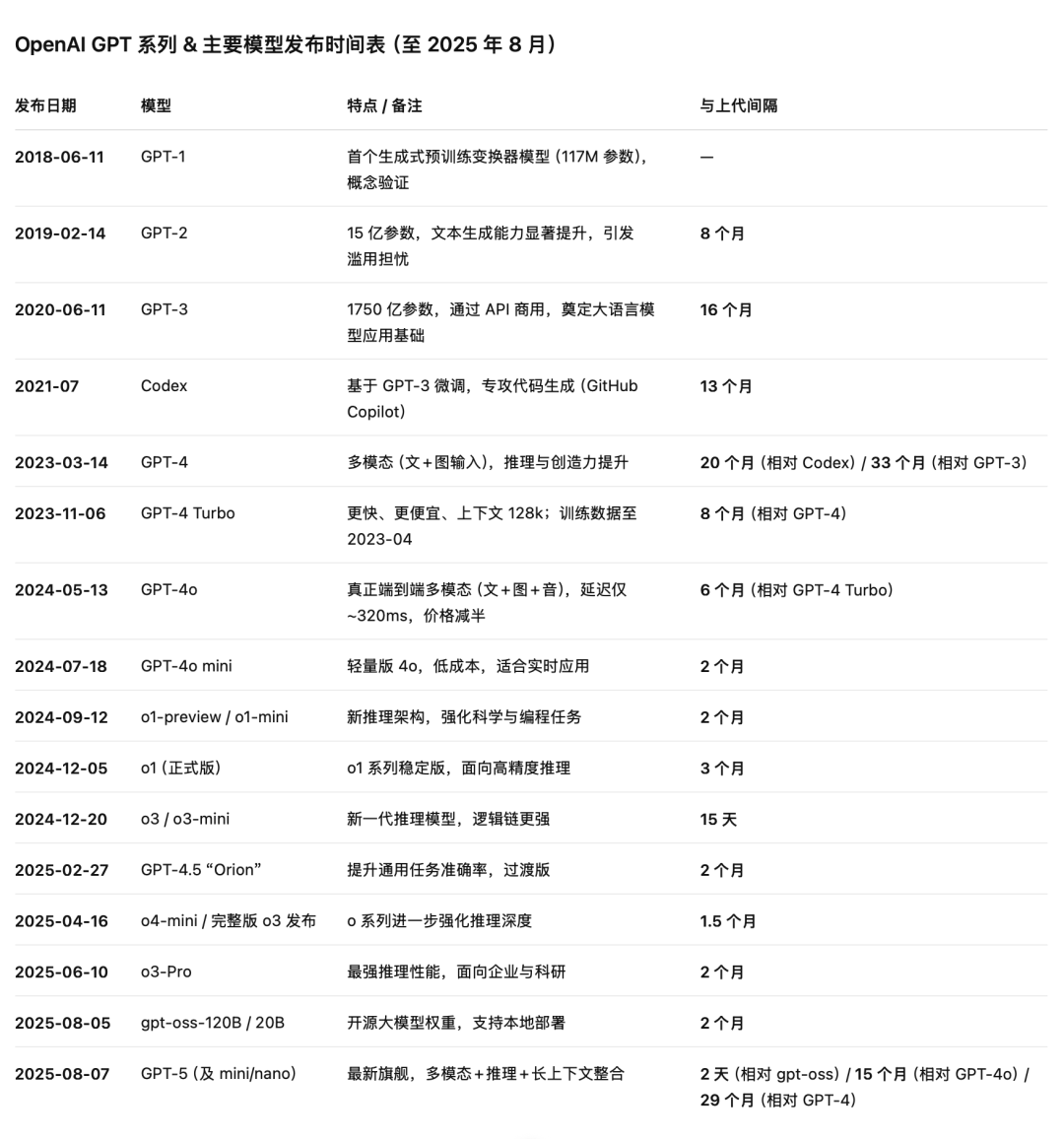

OpenAI subsequently stated that they would develop a thinking mode for GPT-5 mini to achieve the same overall reasoning quota. For Plus users, the maximum context length supported by GPT-5 is only 32k, which has also sparked numerous complaints, as Gemini and Claude support longer context lengths at the same price.

Complaints about GPT-5's context window length on the X platform

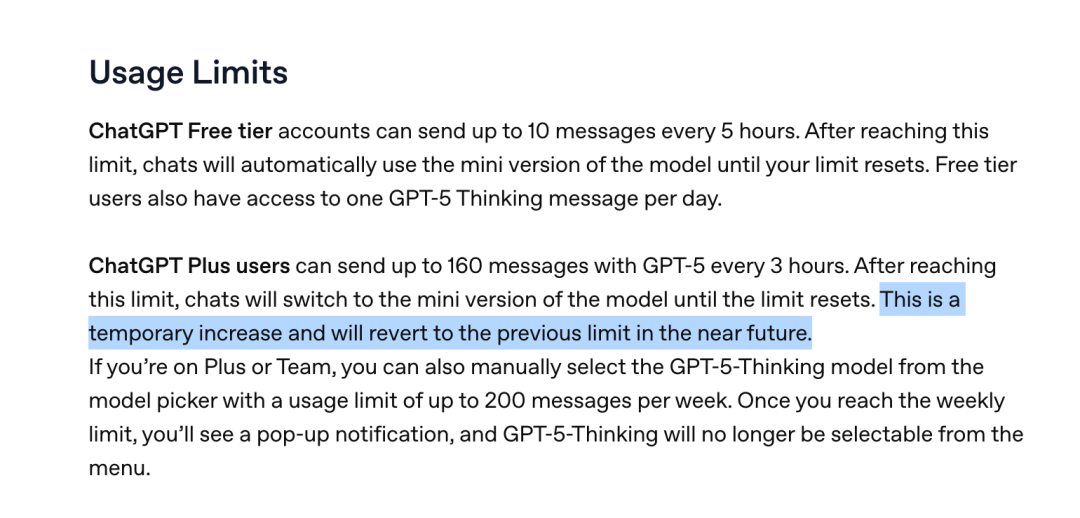

Sam had to post two consecutive tweets to reassure users, promising to show more clearly which model is answering, to make it easier to switch to deep thinking, and to double the usage quota for Plus users to 160 messages – but OpenAI stated on its official website that this was only a temporary increase and that the quota would soon revert to the original limit.

GPT-5 usage quota

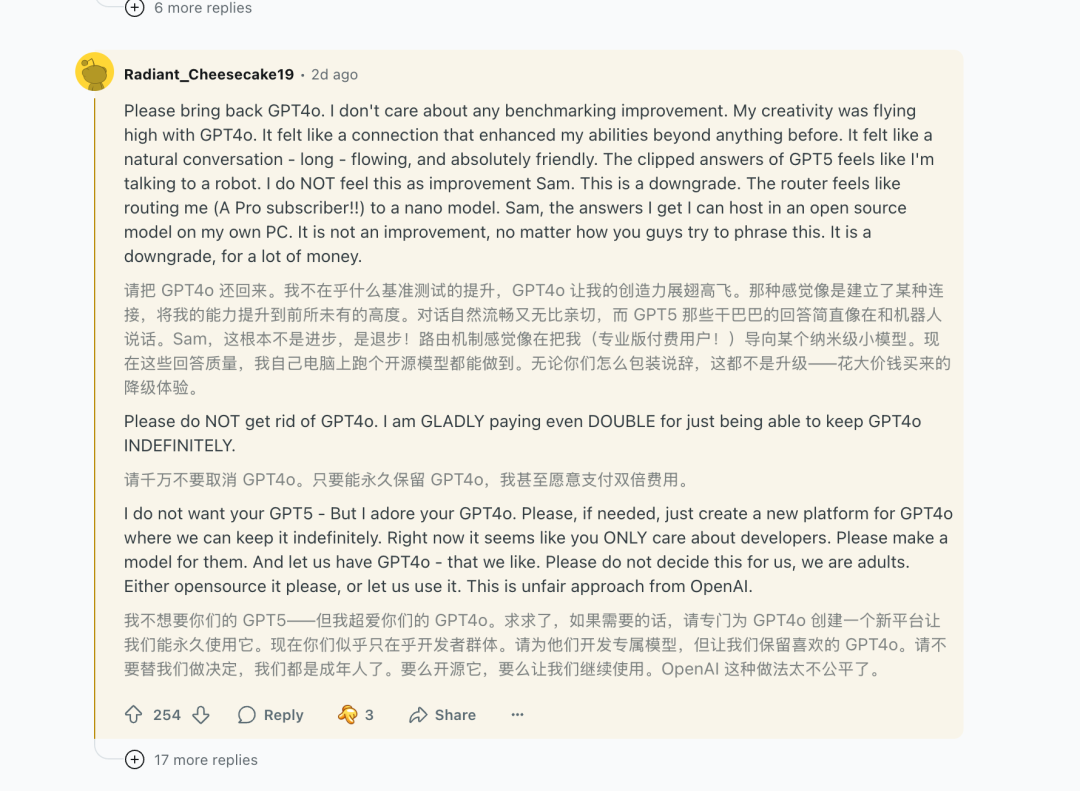

These are still engineering issues that can be resolved through iterative improvements over time. A more unexpected problem for OpenAI may be some users' emotional attachment to older models. Even though GPT-5 is more capable, many ordinary users are still more accustomed to the older models. On the ChatGPT subreddit, a large number of users have shared their preference for older models, stating that they don't even care whether the model's capabilities have been upgraded: "As long as it's still 4o, I'm willing to keep paying."

Reddit users' preference for old models

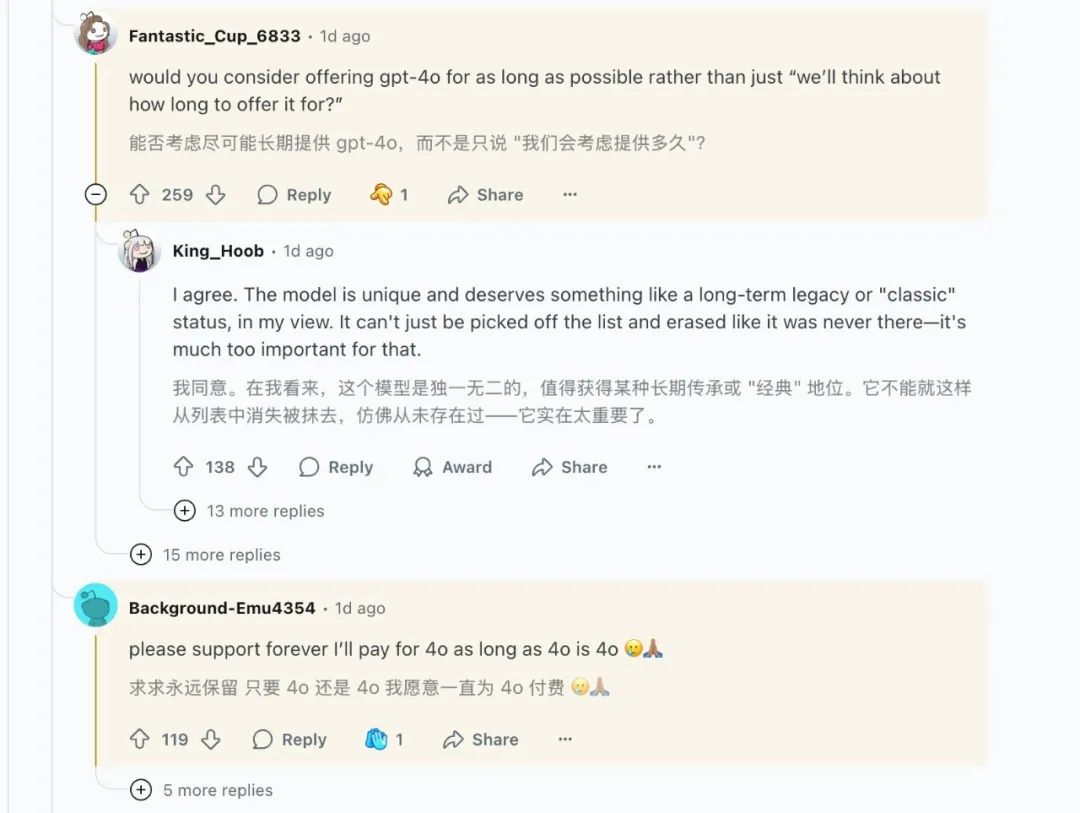

The day after the launch event, Sam Altman and the OpenAI team hosted a Q&A session on the Reddit platform. The top-ranked question requested that OpenAI restore GPT-4o and other older models, "Users have different usage habits!"

Sam Altman responded that they had heard the feedback from users and would reopen this feature for Plus users. Sam later said on X that they underestimated users' affection for GPT-4o. Currently, OpenAI has restored access to the GPT-4o model for Plus users, and paying users can enable the old model on the ChatGPT webpage. However, Sam added that they would consider how long to keep the old model based on the situation.

OpenAI has restored the GPT-4o model for Plus users

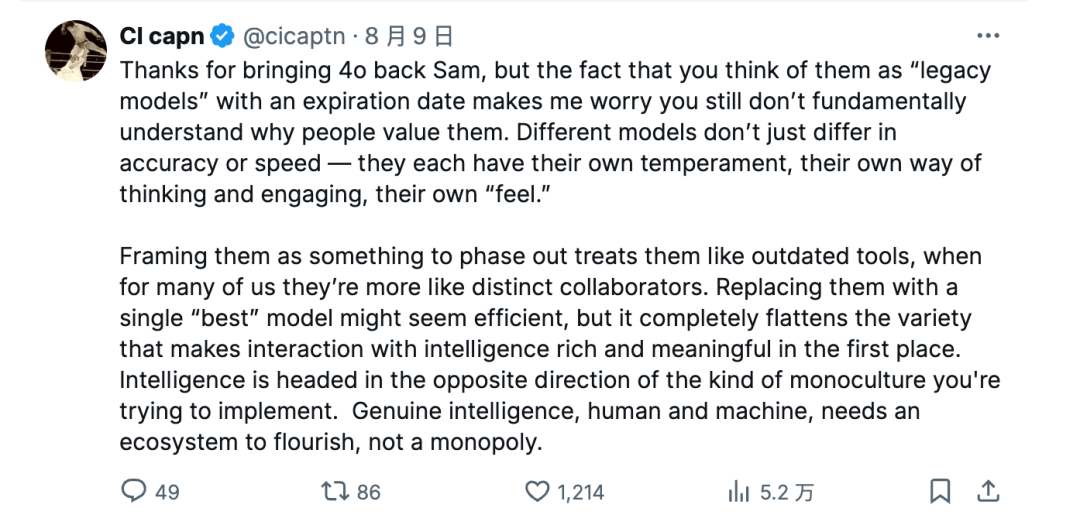

"You don't fundamentally understand why people cherish them," commented a ChatGPT user under Sam's post. "Different models not only differ in accuracy and speed but also have their unique personalities, ways of thinking, and interactions, as well as distinct 'feelings.' Treating them as something to be phased out is essentially treating them as outdated tools, whereas for many of us, they are more like unique partners."

User comment under Sam's tweet

This may be one of the reasons why users are still not convinced, even though Sam has consistently emphasized that GPT-5 is much superior to older models. People don't always need the most powerful model, but they have a much stronger dependence on habits and emotions, even when conversing with an AI.

OpenAI may never have truly realized this; otherwise, they wouldn't have arranged for GPT-5 to write an elegy for GPT-4o and older models during the launch event, aimed at showcasing GPT-5's superior performance. In subsequent product update documentation on the official website, OpenAI no longer showed this scenario and instead had GPT-5 create poetry.

The updates mentioned earlier, which reduce hallucinations and flattery and add safe completions, have made GPT-5's personality even more bland. It doesn't use emojis, and its answers are more cautious and reserved, which makes it feel unfamiliar to users accustomed to 4o.

"It can't just be deleted from the list and erased as if it never existed," said a ChatGPT user on Reddit.

© All rights reserved by Shangshan. Reproduction without authorization is prohibited.