Chinese AI Models: A Rising Force on the Global Stage

03/10 2026

By Jia Lele, Edited by Zhao Yuan

A set of data from OpenRouter, the world's foremost AI model API aggregation platform, has sparked widespread discussion both in China and abroad.

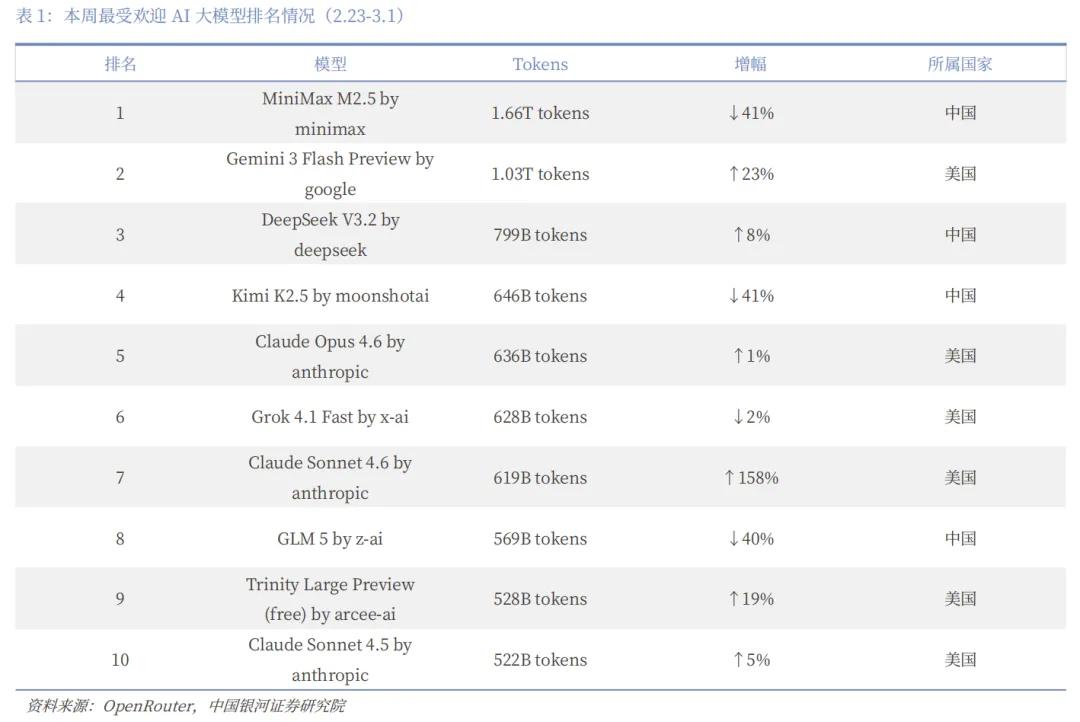

As of February 28, 2026, the top ten models on the platform had consumed more than 28.7 trillion tokens for the month, with Chinese models accounting for over 14.69 trillion. This marks a historic milestone: for the first time, Chinese models accounted for more than half of monthly token calls, surpassing their US counterparts.

On a weekly basis, Chinese models consumed a staggering 5.16 trillion tokens from February 16 to 22, while US models fell to 2.7 trillion over the same period, giving Chinese models a 61% market share. Chinese models took four of the top five spots by call volume: MiniMax M2.5, Moonshot AI's Kimi K2.5, DeepSeek V3.2, and Zhipu AI's GLM-5.

Although Chinese models' call volume declined the following week and the lead proved short-lived, competing head-to-head with US models on the world's largest API aggregation platform, and even briefly pulling ahead, is a testament to their growing strength.

Furthermore, among OpenRouter's user base, American developers make up 47.17%, while Chinese developers account for just 6.01%. Reports also indicate that 80% of US AI startups incorporate Chinese open-source models into their product development.

This suggests that the rise of Chinese models is driven not by domestic self-congratulation but by adoption among overseas developers in Silicon Valley and Europe.

Large models have evolved from a single-dimensional competition of "who is smarter" to a multi-dimensional one of "who is smart and cost-effective."

I. The Irresistible Appeal: Why US Developers Love Chinese Tokens

Chinese AI models' ability to overtake US models in global call volume rests on several overlapping, systemic advantages, with cost the most direct driver.

Consider these figures:

According to a Changjiang Securities research report, MiniMax M2.5 and Zhipu AI's GLM-5 are both priced at $0.30 per million input tokens, while Anthropic's Claude Opus 4.6 charges $5, 16.7 times as much.

The output side is even more striking. MiniMax M2.5 charges $1.10 per million output tokens, Zhipu AI's GLM-5 $2.55, and Claude Opus 4.6 $25, roughly 22.7 and 9.8 times as much as the former two, respectively. Alibaba's Qwen 3.5, released at the end of February, set its price at just 0.8 RMB per million tokens, one-eighteenth of Google's Gemini.
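The price multiples quoted above follow directly from the per-million-token list prices. As a quick check, the minimal Python sketch below recomputes them using only the Changjiang Securities figures cited in this article (US dollars per million tokens); the dictionary layout and the `price_multiple` helper are illustrative, not taken from any report.

```python
# Per-million-token list prices in USD, as cited from the Changjiang Securities report.
INPUT_PRICE = {"MiniMax M2.5": 0.30, "GLM-5": 0.30, "Claude Opus 4.6": 5.00}
OUTPUT_PRICE = {"MiniMax M2.5": 1.10, "GLM-5": 2.55, "Claude Opus 4.6": 25.00}


def price_multiple(prices, expensive, cheap):
    """How many times the cheap model's price the expensive model charges, to one decimal."""
    return round(prices[expensive] / prices[cheap], 1)


if __name__ == "__main__":
    for cheap in ("MiniMax M2.5", "GLM-5"):
        print(
            f"Claude Opus 4.6 vs {cheap}: "
            f"input x{price_multiple(INPUT_PRICE, 'Claude Opus 4.6', cheap)}, "
            f"output x{price_multiple(OUTPUT_PRICE, 'Claude Opus 4.6', cheap)}"
        )
```

The input-side multiple is the same (16.7) for both Chinese models because they share a $0.30 input price; the gap opens up on the output side.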

In many everyday scenarios, especially now that the Agent era has arrived, users need large volumes of affordable computing power more than they need "top-tier intelligence."

In February of this year, the open-source framework OpenClaw gained traction, transforming AI from a "chat tool" into a "digital employee" capable of independent work.

An Agent task can easily consume hundreds of thousands to millions of tokens, and because APIs are billed pay-per-use, those calls become a significant expense for developers. Riding this trend, Moonshot AI launched KimiClaw with one-click deployment. As a result, Kimi K2.5's call volume in its first 20 days exceeded Kimi's total for all of 2025, and cumulative revenue in that period also surpassed the 2025 total.
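To see why per-token price matters once a single task runs into the millions of tokens, here is a rough Python sketch that prices one Agent task at the list prices cited earlier. The workload (1,000,000 input tokens and 100,000 output tokens per task, 1,000 tasks per day) is a hypothetical assumption chosen for illustration, not a figure from any of the reports quoted here.

```python
# USD per million tokens: (input, output), using the list prices cited in this article.
PRICES = {
    "MiniMax M2.5": (0.30, 1.10),
    "GLM-5": (0.30, 2.55),
    "Claude Opus 4.6": (5.00, 25.00),
}

# Hypothetical Agent workload (assumption for illustration only).
INPUT_TOKENS_PER_TASK = 1_000_000
OUTPUT_TOKENS_PER_TASK = 100_000
TASKS_PER_DAY = 1_000


def task_cost(model, input_tokens, output_tokens):
    """Pay-per-use cost of one task in USD, billed separately for input and output tokens."""
    in_price, out_price = PRICES[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


if __name__ == "__main__":
    for model in PRICES:
        per_task = task_cost(model, INPUT_TOKENS_PER_TASK, OUTPUT_TOKENS_PER_TASK)
        print(f"{model}: ${per_task:.2f} per task, ${per_task * TASKS_PER_DAY:,.0f} per day")
```

Under these assumptions one task costs $0.41 on MiniMax M2.5 and $7.50 on Claude Opus 4.6. At a thousand tasks a day, that per-unit gap becomes the difference between hundreds and thousands of dollars daily.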

This is also why Google and Anthropic have banned accounts that run fully automated calls on subscription plans: flat subscription fees fall far short of covering the computing costs such usage incurs.

When consumption grows exponentially, the per-unit token price advantage becomes a matter of survival.

This cost advantage doesn't come out of nowhere; it's underpinned by electricity and engineering.

Ultimately, computing power relies on electricity.

China's industrial electricity prices are 30% to 40% lower than those in the US, with green electricity in central and western China being 50% to 70% cheaper. Additionally, China's large industrial electricity consumption allows for optimal use of off-peak electricity for model training, forming a physical cost moat for Chinese AI companies.

On the other hand, there's the engineering capability honed by necessity. Since April 2024, Chinese AI companies have operated without access to cutting-edge chips, pushing them to extract maximum performance from available hardware.

Chinese models widely adopt Mixture of Experts (MoE) architectures, which restructure how computing power is consumed. A model with hundreds of billions of parameters activates only a small subset of its "expert networks" for each token it processes. This "on-demand activation" saves electricity and computing power.
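The "on-demand activation" idea can be illustrated with a toy top-k router. The pure-Python sketch below is not how any of the models named in this article is actually implemented; the layer sizes, the random weights, the `ToyMoELayer` name, and the choice to renormalize gate weights over only the selected experts are all illustrative assumptions. What it does show is the core mechanism: a small gating network scores every expert for each token, but only the k highest-scoring experts are actually evaluated.

```python
import math
import random


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def matvec(matrix, vec):
    """Multiply a dense matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, vec)) for row in matrix]


class ToyMoELayer:
    """Hypothetical toy top-k Mixture-of-Experts layer: each expert is one random linear map."""

    def __init__(self, num_experts, dim, top_k, seed=0):
        rng = random.Random(seed)
        self.top_k = top_k
        # Toy experts: one dim x dim weight matrix each.
        self.experts = [
            [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(dim)]
            for _ in range(num_experts)
        ]
        # Router (gating network): one score row per expert.
        self.router = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(num_experts)]
        # Counts how many times each expert actually runs.
        self.expert_calls = [0] * num_experts

    def forward(self, token):
        """Route one token vector to its top-k experts and mix their outputs."""
        scores = matvec(self.router, token)  # cheap: one score per expert
        chosen = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[: self.top_k]
        gates = softmax([scores[i] for i in chosen])  # weights over the chosen experts only
        out = [0.0] * len(token)
        for gate, i in zip(gates, chosen):
            self.expert_calls[i] += 1  # only these k experts do any work
            expert_out = matvec(self.experts[i], token)
            out = [o + gate * y for o, y in zip(out, expert_out)]
        return out, chosen


if __name__ == "__main__":
    layer = ToyMoELayer(num_experts=16, dim=8, top_k=2)
    rng = random.Random(1)
    tokens = [[rng.gauss(0.0, 1.0) for _ in range(8)] for _ in range(100)]
    for t in tokens:
        layer.forward(t)
    active = sum(layer.expert_calls) / (len(tokens) * len(layer.experts))
    print(f"fraction of expert computations actually run: {active:.3f}")
```

With 2 of 16 experts selected per token, only one-eighth of the expert weight matrices are multiplied for any given token, even though all of them stay in memory. In production MoE models the same routing happens per token inside each MoE layer of a Transformer.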

Finally, there's the virtuous cycle of the open-source ecosystem.

Over the past year, Chinese large models' share of global token consumption has grown by 421%. A Stanford report noted that from August 2024 to August 2025, Chinese developers contributed 17.1% of Hugging Face's total downloads, slightly higher than the US's 15.8%.

The open-source ecosystem lowers the barrier to entry for global developers and enables Chinese models to iterate rapidly through continuous technical feedback, expanding their combined advantages in capability and pricing. As Silicon Valley investor Aditya Agarwal put it: "Over 50% of large model calls are made through cheap open-source models. Chinese models are effectively supporting most AI applications, and US counterparts can't even replace them."

The success of Chinese AI models' overseas expansion stems from architectural innovation, extreme cost control, an open-source ecosystem, and close fit to real-world scenarios: a set of systemic advantages surfacing all at once.

II. Overseas Expansion Models: From Applications to Computing Power and Ecosystems

If call volume data explains "how strong" Chinese AI is, the next question is: How do these tokens flow globally?

In recent years, the mainstream approach for Chinese AI's overseas expansion has been "application exports"—packaging AI capabilities into apps and delivering them to overseas users. ByteDance's Gauthmath, Meitu's imaging products, and Kuaishou's KLING AI all follow this path.

To this day, this approach continues to deliver significant user bases and revenue.

Take MiniMax's Talkie, an emotional companionship app available in over 200 countries and gaining ground among Gen Z users in North America. Every user conversation consumes tokens. This kind of B2C revenue accounts for over 70% of MiniMax's income and is still growing fast: in February 2026, average daily token consumption was more than six times its December 2025 level.

ByteDance's Gauthmath follows the same logic: it has captured 47% of the US photo-based math search market, displacing the established product Mathway.

These products don't charge users by the token; they monetize through subscriptions, in-app purchases, and advertising. At their core, however, they still run on Chinese computing power, and their users form the "user base" of China's AI overseas expansion.

If AI's overseas expansion is likened to a supply chain, applications sit downstream and computing power upstream. Chinese companies first build products and traffic downstream, then move upstream to establish infrastructure.

On one hand, API access delivers computing power through a pipeline and commoditizes it directly, like a utility.

Overseas developers call Chinese large models' APIs via aggregation platforms like OpenRouter, with inference completed in Chinese data centers and payment made per token. Throughout this process, computing power and electricity remain domestic, with only value delivered cross-border via tokens.
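In practice, calling a Chinese model through OpenRouter is an ordinary HTTPS request. OpenRouter exposes an OpenAI-compatible chat-completions endpoint, and the Python sketch below uses only the standard library to build such a request and to read the token counts that per-token billing is based on. The API key and the model slug `example-vendor/example-model` are placeholders (assumptions), the request is not actually sent, and current model IDs and response fields should be checked against OpenRouter's own documentation.

```python
import json
import urllib.request

# OpenRouter's OpenAI-compatible chat-completions endpoint.
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_chat_request(api_key, model, prompt):
    """Build (but do not send) a POST request for one chat completion."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def billed_tokens(response_json):
    """Return (input, output) token counts from an OpenAI-style 'usage' block."""
    usage = response_json["usage"]
    return usage["prompt_tokens"], usage["completion_tokens"]


# Placeholder values: a real key and a current model slug are needed to send this.
req = build_chat_request("YOUR_API_KEY", "example-vendor/example-model", "Hello")
# To actually send it (requires network access and account credit):
# with urllib.request.urlopen(req) as resp:
#     print(billed_tokens(json.load(resp)))
```

The developer never touches a GPU: the only things that cross the border are the JSON request and response, and the bill is a function of the two token counts.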

This is a classic "water and electricity" business. Developers don't need to deploy models or purchase GPUs themselves; their applications can run on Chinese models.

Moonshot AI's API service team has reportedly expanded rapidly and now operates as an independent business unit reporting directly to president Zhang Yutong, a reorganization that underscores the API business's rapidly rising importance.

Commercially, this model's advantages lie in scalability and impressive profit margins. Moreover, with the Agent era's arrival, token consumption per task is growing exponentially, amplifying the API business's potential.

On the other hand, building an open-source ecosystem paves the way for computing power exports.

Alibaba's Tongyi Qianwen and DeepSeek series have chosen a seemingly "free" path: fully open-sourcing model weights, toolchains, and engineering paradigms, allowing overseas developers to download and deploy them on local servers at no cost.

The goal is to make Chinese models a default tool in global developers' kits and part of their technical stacks. Once developers become proficient with open-source models, they'll naturally prioritize calling APIs from the same series when developing commercial applications.

More derivative models have now been uploaded based on Alibaba's and DeepSeek's open-source models than on mainstream US models. This indicates that global developers are building a vast technical ecosystem on top of Chinese open-source models, and once such an ecosystem forms, the cost of migrating away becomes prohibitively high.

Today, Chinese AI's overseas expansion is no longer a single "application export" but a three-tiered structure: The bottom layer is the open-source ecosystem, winning developer mindshare through openness; the middle layer is API computing power exports, directly selling tokens to global developers as the core commercialization engine; and the top layer is application exports, reaching end-users through products as both traffic entry points and key scenarios for computing power consumption.

These three layers reinforce one another, together showing that Chinese computing power is becoming the underlying infrastructure of global AI.

III. The Second Half Challenge: Commercial Advantages Meet Regulatory Barriers

The numbers on OpenRouter are indeed impressive, but OpenRouter doesn't represent the full picture.

In the consumer market (developers, startups, Agent applications), decision-making chains are short, with cost-effectiveness and ease of use as core metrics. Developers often decide independently which models to use. Under this logic, Chinese models' "affordability and abundance" are absolute advantages.

The enterprise market is different. Governments, finance, healthcare, and critical infrastructure involve long decision-making chains, covering compliance, security, auditing, supplier stability, and more.

The overseas enterprise market is even more complex.

Thus, an unavoidable question arises: In international competition, purely commercial advantages like usability and low cost may not suffice.

For example, exports of NVIDIA's H200 to China were previously banned. Although imports are now allowed, US policy in the AI race could "reverse" again, and current inference clusters still rely on NVIDIA's H100 and H200.

Of course, blockades have a dual nature. On one hand, they raise training costs and slow model iteration; on the other, they force engineering optimizations to improve efficiency, driving progress in domestic chips.

But risks remain. A Galaxy Securities research report noted that global model iteration cycles are shortening, with mainstream models now updating every few months rather than every six. If core capability improvements slow down, cost advantages may quickly lose their appeal in high-end markets.

Morgan Stanley Chief Economist Xing Ziqiang believes that while token exports certainly have room to grow, China's open-source large models and its electricity-backed token exports should not be overhyped at the expense of geopolitical and security considerations.

He cites China's 5G equipment sector, which also offered cost and technological advantages but saw Chinese 5G base stations replaced in many European and US telecom networks after 2018–2019.

In the enterprise market, price-sensitive SMEs may be penetrated by Chinese models' cost-effectiveness, but in government, finance, healthcare, and other fields involving data sovereignty and critical infrastructure, access logic shifts from "cost-effectiveness" to "compliance trust, brand recognition, and ecosystem lock-in."

The US is systematically constructing entry barriers for the enterprise market through investment reviews, standard-setting, and data sovereignty rules.

This means the "ceiling" imposed by geopolitics is getting lower.

In December 2025, the US government proposed the so-called "Pax Silica Initiative," claiming to unite countries with leading global tech firms or other strategic resources to ensure "supply chain security" and more.

Experts view this as an attempt to reshape the global technological division of labor and capital flows through rules, investments, and project lists: an exclusionary form of integration disguised as ecosystem reshaping.

Who is being excluded is unmistakable.

From chip blockades to the "Pax Silica Initiative," and from containing development to exporting rules, the US aims to reshape the rules of the game and gain agenda-setting power at the ecosystem level.

Overtaking the US in model call volume is thus a milestone, but only half the story.

In the second half of AI's overseas expansion, while maintaining cost advantages, more complex issues must be faced. Some can be resolved by improving model performance, system efficiency, and competitiveness; others have no clear answers.