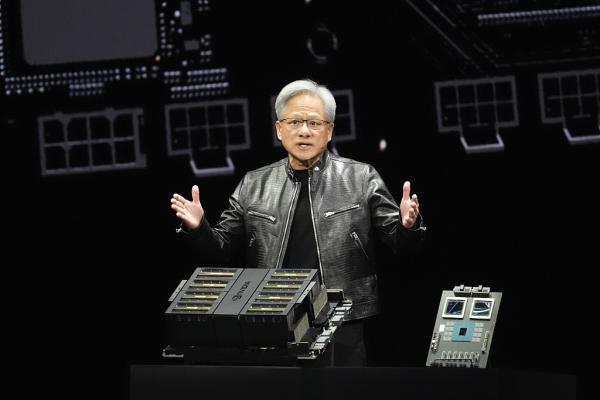

After Three Years of Chasing Large Models, Jensen Huang’s Single Statement Deflates the AI Hype

03/11 2026

Source: Duke Internet Society (ID: wlyxs888)

For the past three years, the AI sector has been fixated on one goal: large models.

The competition has centered on who can achieve higher parameter counts, longer context windows, top rankings on open-source leaderboards, and more inventive multimodal approaches. It seemed that whoever could train the most powerful large model would hold the key to dominating the AI era.

That was until March 10, when Jensen Huang’s personally penned long essay delivered a sobering dose of reality to the feverish AI industry.

Titled ‘AI is a Five-Layer Cake,’ the article avoided the usual spectacle of new chip announcements from GTC conferences or the typical over-the-top claims about performance improvements. Instead, with a straightforward metaphor of a ‘five-layer cake,’ it downgraded the large models revered by the entire industry from the ‘heart of AI’ to merely the ‘middle layer of the cake.’

Even more bluntly, Huang turned the tables: the things the whole industry has been fixated on are not AI’s trump cards at all.

【The Entire Industry Has It Backward: AI’s Foundation Has Never Been Code】

Huang’s comprehensive AI industry framework is deceptively simple, consisting of just five layers from bottom to top: Energy → Chips → Infrastructure → Models → Applications.

What makes this structure groundbreaking is that it completely overturns the prevailing logic embraced by the entire industry over the past three years.

Previously, everyone approached AI from a top-down perspective: First, envision a groundbreaking application, then find a suitable large model, and only at the last minute scramble for computing power and servers. It seemed that as long as the model was robust enough and the idea innovative enough, victory in the AI era was assured.

But Huang flipped this logic on its head: AI is fundamentally an industrial production system. You need the foundation of energy first, then chips to convert energy into computing power, AI factories to scale chip operations, followed by models capable of generating intelligence, and finally applications to deliver value.

Without the bottom three layers, all models and applications above are, quite frankly, built on sand.

More critically, he elucidated the tightly interconnected relationships between the five layers: Every query you input into an AI application, every image generated, every piece of content output—ultimately cascades down layer by layer, translating into demand for computing power, GPU consumption, and finally landing on the most fundamental layer: the power plants supplying electricity to data centers.

This directly shatters the core myth of the internet era. Back then, you could build a social app or e-commerce site where a single codebase could serve millions with negligible marginal costs, without ever worrying about where the server electricity came from. But AI doesn’t operate that way—every output consumes real resources, with no shortcuts allowed.
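The contrast in marginal cost can be made concrete with a small sketch. Every number here is a hypothetical placeholder chosen only for illustration (the fixed cost, the per-request cost, and the request volumes are not from Huang's essay); the point is the shape of the curve, not the values.

```python
def average_cost_per_request(fixed_cost, marginal_cost, requests):
    """Average cost of one request: fixed cost spread over all requests,
    plus whatever each individual request costs to serve."""
    return fixed_cost / requests + marginal_cost


# Hypothetical placeholder values, not measured data.
FIXED_COST = 1_000_000       # one-off build cost, same for both products
WEB_MARGINAL_COST = 0.0      # classic software: serving one more user is ~free
AI_MARGINAL_COST = 0.002     # AI: each output burns compute and electricity

for requests in (1_000, 1_000_000, 1_000_000_000):
    web = average_cost_per_request(FIXED_COST, WEB_MARGINAL_COST, requests)
    ai = average_cost_per_request(FIXED_COST, AI_MARGINAL_COST, requests)
    print(f"{requests:>13,} requests  web: {web:.6f}  ai: {ai:.6f}")
```

Under these assumptions the software product's average cost keeps shrinking toward zero as volume grows, while the AI product's average cost can never drop below its per-request resource cost.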

【The Industry’s Best-Kept Secret: When AI Competition Ends, It’s About Electricity and Factories】

Within this five-layer architecture, Huang devoted the most attention not to the model and application layers that dominate industry discourse, but to the foundational energy and infrastructure layers.

This is precisely where the article’s true value lies—it exposes the AI industry’s biggest illusion: Your ability to generate intelligence depends not on crafting brilliant algorithms, but on securing stable energy supplies and having factories capable of converting that energy into intelligence.

First, the energy layer. Huang defines it as the ‘first principle of AI infrastructure, beneath which no abstraction layers exist.’

What does ‘no abstraction layers’ mean?

Simply put, there is no room for shortcuts. The internet era’s asset-light myth collapses entirely in AI. You can’t raise a funding round, hire a few algorithm experts, and defy the laws of physics. Every character and image that AI generates is built from electron flows, heat conversion, and electricity consumption. There is nothing virtual about it.

That’s why countries and companies deploying AI today start not by hiring algorithm engineers but by securing electricity quotas. Nordic data centers leverage hydropower for cost-effective cooling, Middle Eastern AI projects build solar farms alongside their data centers, and approvals for Chinese smart computing centers prioritize power supply capacity above all else.

Industry estimates suggest that a 10,000-GPU smart computing center uses as much electricity in a year as all the households of a mid-sized county. Huang bluntly identifies the industry’s ultimate bottleneck: AI’s expansion is limited not by algorithms but by energy supply.
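To get a feel for the scale of a 10,000-GPU facility, here is a rough back-of-the-envelope sketch. The inputs are assumptions, not figures from the article: 700 W per GPU, a 1.5x multiplier for the rest of each server (CPUs, memory, networking), a power usage effectiveness (PUE) of 1.3 for cooling and power delivery, and round-the-clock operation at full load.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_kwh(gpu_count, gpu_watts, server_overhead, pue, utilization=1.0):
    """Estimate a facility's yearly electricity use in kWh.

    gpu_watts       -- assumed power draw of one GPU, in watts
    server_overhead -- assumed multiplier for non-GPU server components
    pue             -- assumed ratio of total facility power to IT power
    utilization     -- fraction of the year the hardware runs at that draw
    """
    it_power_kw = gpu_count * gpu_watts / 1000 * server_overhead
    facility_power_kw = it_power_kw * pue
    return facility_power_kw * utilization * HOURS_PER_YEAR


# All four inputs below are illustrative assumptions.
estimate = annual_energy_kwh(
    gpu_count=10_000, gpu_watts=700, server_overhead=1.5, pue=1.3
)
print(f"{estimate / 1e6:.1f} GWh per year")
```

With these assumed inputs the estimate comes out at roughly 120 GWh per year. Changing any single assumption moves the result proportionally, which is why the real figure depends so heavily on chip efficiency and cooling design.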

Now, the infrastructure layer, which Huang redefines as ‘AI factories.’

For decades, we referred to data centers as ‘server rooms’—just warehouses storing data, running websites, and hosting videos. But Huang rebrands them: In the AI era, data centers are essentially ‘intelligence manufacturing plants’ whose core function isn’t storage but real-time intelligence production.

This redefinition demands a complete overhaul of infrastructure logic. An AI factory isn’t just a stack of GPU servers—it’s a full industrial system: land capable of supporting high-density computing, power transmission systems for uninterrupted year-round operation, cooling systems to manage tens of thousands of GPUs’ heat, ultra-low-latency high-speed networks, and orchestration systems to synchronize tens of thousands of processors into a single machine. Every component faces stringent industrial-grade requirements.

More intriguingly, Huang emphasizes: Building these AI factories requires electricians, plumbers, steelworkers, and network technicians—not just computer science PhDs.

This directly dispels the myth that ‘AI is an elite game.’ The AI revolution is fundamentally an industrial revolution whose core builders are industrial workers across the supply chain, not a handful of algorithm experts.

The chip layer in between is essentially an energy-to-computing-power converter. Huang makes clear that chip efficiency determines AI’s scaling speed and intelligence costs. This explains NVIDIA’s core competitive advantage—its ability to efficiently convert energy into AI computing power remains unmatched by competitors.

Meanwhile, the model and application layers that the entire industry obsesses over are, in Huang’s logic, merely the final outputs of this industrial system: Models set intelligence production standards, while applications commercialize that intelligence. He even states bluntly that ‘most models in the world are free’—the proliferation of open-source models will only accelerate application development, driving demand for the entire underlying system and forming a complete commercial closed loop.

【The Internet’s 30-Year Myth Has Been Shattered by AI】

Huang’s essay offers the sharpest insight: AI is undergoing a fundamental shift from the internet era’s ‘software free-for-all’ of the past three decades back to the industrial era’s ‘hardware competition.’

For three decades, from the PC internet to the mobile internet, the tech industry’s logic was ‘lighter and lighter.’ Write code once, serve millions at negligible marginal cost, and profit handsomely from traffic and ads. This was an asset-light, virtual-economy logic: everyone competed on creativity, traffic, and user experience without caring where the servers sat, where the electricity came from, or who built the infrastructure.

But AI completely upends this logic. AI runs on ‘heavier and heavier’ principles: a complete return to hardware.

This return first shows up in where the competitive barriers lie.

The industry remains obsessed with large models, but Huang bursts the bubble: Most top models worldwide are now free and open-source. The code you access and training results you achieve can be replicated by others with sufficient funds and personnel. Algorithmic barriers are disappearing at a visible pace.

When upper-layer models become ‘commodity raw materials’ accessible to all, true competition shifts to invisible realms: Can you secure stable GPU supplies? Do you have enough electricity to run data centers year-round? Can you build AI factories capable of scaling computing power?

These require substantial investments, time, and complete industrial systems—not just a few algorithm experts or a funding round.

Second, this return reshapes employment logic.

For two years, the internet has warned that ‘AI will steal jobs,’ leaving white-collar workers, programmers, and designers fearing replacement. But Huang offers a counterintuitive conclusion: AI won’t massively displace humans—it will create vast numbers of high-paying jobs, many requiring no elite education.

He cites two concrete examples: First, AI factories being built globally need electricians, plumbers, steelworkers, and network operators—all skilled, well-paid positions now in short supply. Second, radiologists: While many assumed AI image reading would replace doctors, the reality is that AI frees doctors from repetitive tasks, allowing them to serve more patients—increasing hospital demand for radiologists.

The logic is simple: the internet era’s tech dividends were concentrated in a few companies and elite programmers. The dividends of the AI-era industrial revolution will spread across the whole industrial chain, from chip factory workers to data center operators to practitioners in every industry, enabling mass participation.

This is the true meaning behind Huang’s statement that ‘every company will use AI, every country will build it’—it’s not an elite game but societal industrial upgrading.

【Huang’s Ultimate Subtext: AI Warfare Is Industrial System Warfare】

Some may dismiss this as NVIDIA trying to sell more GPUs.

But that view misses the broader context.

Huang’s essay fundamentally defines AI’s endgame: Competition won’t be about models or applications but energy, chips, and infrastructure—entire industrial systems.

While many see NVIDIA as merely the ‘shovel seller’ in AI’s gold rush, Huang positions himself as the chief architect of AI’s industrial system—not just making shovels but designing mining rules, even calculating the electricity, roads, and factories needed for the gold mines.

He offers a sense of scale: global AI infrastructure has already absorbed hundreds of billions in investment, with trillions in infrastructure still waiting to be built. This means AI’s real wave has only just begun, and the main event won’t be model breakthroughs but the largest infrastructure construction project in human history.

Over the past three years, too many have flocked to AI’s top layer, dreaming of training models, building applications, and reaping dividends. But Huang’s essay warns: AI isn’t a party in a castle in the air but an industrial war fought on the ground.

When the tide recedes, the survivors won’t be those shouting slogans or chasing parameters but those holding energy, chips, and infrastructure—the real trump cards.

So here’s the question: In AI’s endgame, are you still cramming into the crowded race for free large models?

Do you believe AI’s future will be model-driven or infrastructure-driven? Share your thoughts in the comments.

Note: Some data in this article comes from publicly available online sources.