Jensen Huang's Profound Reflections Behind the Latest Blog Post

03/13 2026

On March 10, NVIDIA founder Jensen Huang released a rare blog post, proposing a fundamental paradigm shift:

AI is no longer pre-programmed software but real-time generated intelligence.

Artificial intelligence is not a single model or application but essential infrastructure akin to electricity and the internet.

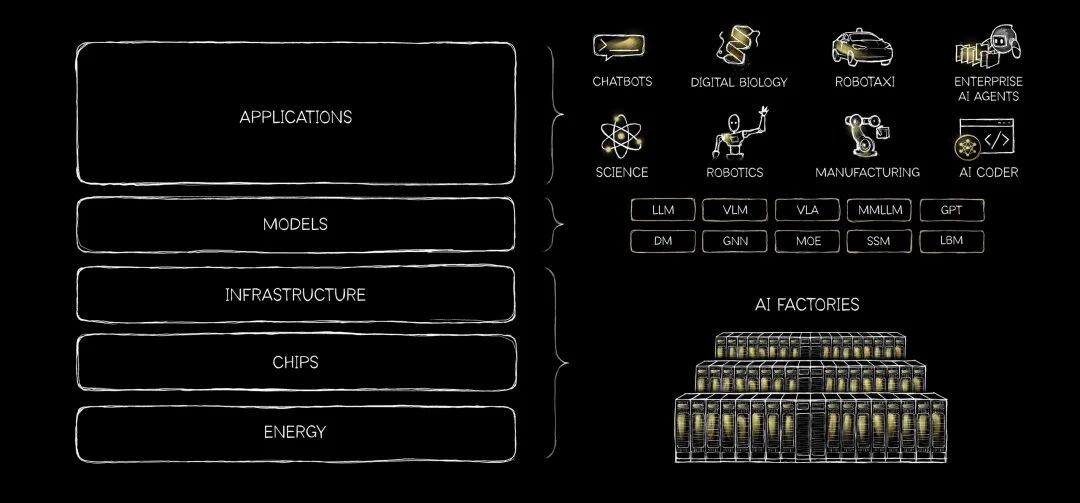

This assertion, though seemingly ahead of its time, is supported by a clear architectural logic:

Energy → Chips → Infrastructure → Models → Applications

Progressing from bottom to top, upper-layer demand drives lower-layer investment, forming a technology stack in which pressure is transmitted dynamically between layers. This is not mere layering but a chain in which physical constraints propagate upward.

In recent years, intelligence has evolved from retrieving pre-stored instructions to real-time generative reasoning, silently triggering a fundamental reconstruction of the entire computing paradigm.

01

Paradigm Shift: From Pre-fabricated Software to Real-Time Intelligence

Before the rise of Vibe Coding, veteran programmers from the traditional computing era believed that software had to rely on human-preset algorithms and that data had to be structured before it could be retrieved.

Yet, in just a few years, AI has effortlessly shattered this decades-old classic model:

Computers can now comprehend unstructured information like images, text, and sound, generating real-time responses based on context.

In Huang's view, each AI inference represents a novel creative practice, necessitating a redesign of the underlying AI architecture supporting intelligent generation.

In his envisioned five-layer architecture, energy sits at the base with no "abstraction layer."

Every token generated under the attention mechanism in Transformer architectures is essentially a result of electron flow, thermal management, and energy conversion into computation.

Energy is not just a cost but the physical upper limit of intelligent production scale.

Above it, chips determine computational efficiency, infrastructure serves as factories for chip clusters, models understand multi-domain knowledge, and applications ultimately bear commercial value.

In this architectural chain, bottlenecks at any layer significantly constrain the overall scale of intelligent generation.
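One way to read this chain is as a bottleneck model: the throughput of the whole stack is capped by its most constrained layer, and the energy layer sets a hard ceiling equal to available power divided by energy per token. The sketch below is illustrative only. The `Layer` type, the capacities, and the joules-per-token figure are made-up assumptions, not numbers from Huang's post.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    name: str
    max_tokens_per_s: float  # hypothetical capacity, expressed in tokens/second


def energy_ceiling(power_watts: float, joules_per_token: float) -> float:
    """Upper bound on tokens/second set by available power (1 W = 1 J/s)."""
    return power_watts / joules_per_token


def bottleneck(layers: list[Layer]) -> Layer:
    """Return the layer with the lowest capacity; it caps the whole stack."""
    return min(layers, key=lambda layer: layer.max_tokens_per_s)


# Made-up numbers: a 10 MW site spending 2 J per generated token.
stack = [
    Layer("energy", energy_ceiling(10e6, 2.0)),
    Layer("chips", 8e6),
    Layer("infrastructure", 6e6),
    Layer("models", 7e6),
    Layer("applications", 9e6),
]
limiting = bottleneck(stack)
print(limiting.name, limiting.max_tokens_per_s)
```

With these assumed figures the energy layer is the limiting one, so adding chips or application demand would not raise output until more power, or a lower energy cost per token, is available.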

For NVIDIA and U.S. leaders like Google and OpenAI, the chip, infrastructure, and model layers are tightly integrated and undoubtedly world-leading. The underlying energy supply, however, remains the biggest constraint.

Data center construction at any cost and exploratory initiatives like space-based computing show how severely bottom-layer energy limitations hinder commercial pathways for upper-layer applications.

When placing this framework within China's industrial context, constraints and opportunities coexist.

On energy supply, China holds a clear advantage in power infrastructure, but it depends heavily on international supply chains for chips and high-bandwidth memory (HBM). Currently, domestic computing power lags notably in cluster performance and ecosystem compatibility during training, and the gap is widening.

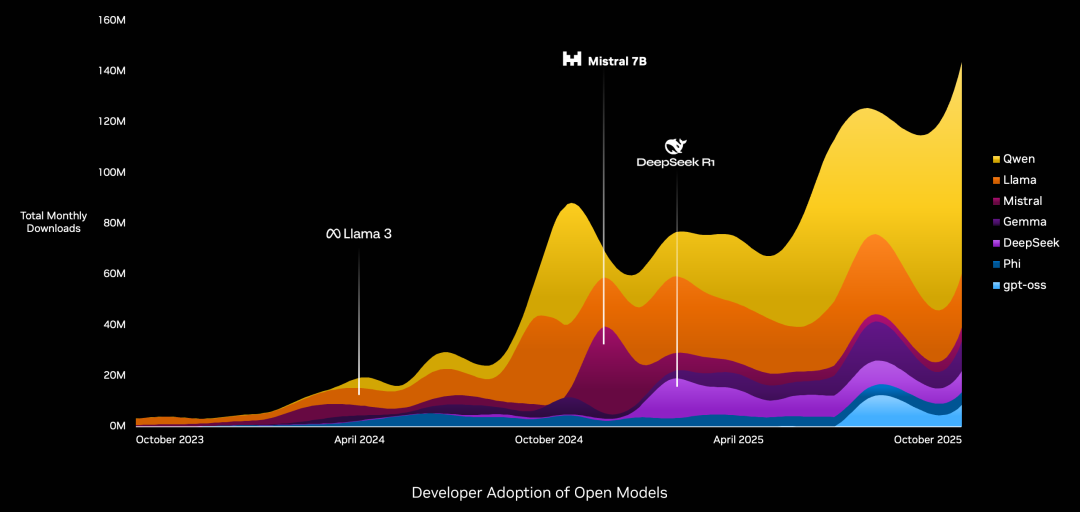

However, demand during the inference phase has begun to diverge. Through optimizations like model quantization and mixture-of-experts architectures, mid-range chips can support most application scenarios, a field in which Huang praises DeepSeek for excelling.
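To make the quantization point concrete, here is a minimal sketch of symmetric per-tensor int8 quantization, one common textbook scheme. It is not a description of how DeepSeek or any specific vendor quantizes its models, and the example weights are made up.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from the int8 codes."""
    return [c * scale for c in codes]


weights = [0.5, -1.27, 0.003, 1.0]  # made-up example weights
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(codes, scale, max_error)
```

Storing each weight in 8 bits instead of 32 cuts weight memory roughly fourfold, at the price of a rounding error bounded by half the scale factor. That trade is what lets less capable hardware serve inference workloads.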

At this stage, chasing international leadership in foundational model capabilities remains impractical. However, combining earlier insights—the explosion of intelligent agents and AI capability spillover compressing model gaps—the experience gap between domestic and top-tier international models is now narrower than their parameter gap.

If the application layer precisely targets "good enough" demand intervals, domestic models, leveraging extreme cost-effectiveness, inevitably have opportunities for localized breakthroughs.

02

Strategic Shift: From Hardware Binding to Ecosystem Connectivity

Facing an application-layer explosion triggered by a "lobster" (the nickname of the open-source agent OpenClaw), NVIDIA's strategy has subtly changed.

According to Wired, NVIDIA is emulating domestic AI firms' "shrimp-farming" approach, planning to launch the open-source AI agent platform NemoClaw.

The difference is that domestic AI firms are nearly replaying the smartphone-assistant launch frenzy of a few months ago: once an open-source project emerges, companies release similar products one after another, then close off their ecosystems to offload their unsold tokens.

NVIDIA chose open-source, allowing platform access regardless of chip usage, while providing security and privacy tools to address enterprise-grade deployment reliability challenges.

It did not become the first shrimp farmer but provided the most suitable "tank" for shrimp farming from software and hardware perspectives.

Meanwhile, NVIDIA has realized that if it continues to be seen solely as a GPU company, it will not be able to hold in large models and intelligent agents the kind of position it currently holds in hardware.

Thus, this move removes the earlier platform restriction that required enterprises to use NVIDIA chips. This does not mean abandoning its hardware-layer advantages. Rather, by lowering the threshold for participation, NVIDIA upgrades its role to that of an "agent ecosystem infrastructure provider," aiming to become the default runtime environment for agent tasks.

Though unstated in the blog, Huang's message is clear:

When agent tasks become the core connecting applications and underlying computing power, whoever defines scheduling rules gains upstream bargaining power in the five-layer architectural chain.

These scheduling rules encompass at least three dimensions:

1. Model Routing: Whoever defines routing algorithms determines traffic flow to model vendors.

2. Tool and Workflow Orchestration: Whoever defines tool-calling APIs and execution sequences determines how enterprise software is invoked by AI.

3. Computing Power Mapping: Whoever defines task demand characteristics for computing power determines underlying chip design directions.
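The first dimension, model routing, can be sketched as a simple policy: send each task to the cheapest model that is capable enough. Everything below is hypothetical, including the model names, prices, capability scores, and the `route` function itself. It illustrates why control of the routing rule decides where traffic flows and is not a description of NemoClaw.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str                  # hypothetical model identifier
    cost_per_1k_tokens: float  # made-up price
    capability: int            # made-up score, higher means stronger


def route(task_difficulty: int, options: list[ModelOption]) -> ModelOption:
    """Pick the cheapest model whose capability meets the task's difficulty."""
    capable = [m for m in options if m.capability >= task_difficulty]
    if not capable:
        # Nothing meets the bar: fall back to the strongest available model.
        return max(options, key=lambda m: m.capability)
    return min(capable, key=lambda m: m.cost_per_1k_tokens)


catalog = [
    ModelOption("small-model", 0.1, 3),
    ModelOption("mid-model", 0.5, 6),
    ModelOption("frontier-model", 3.0, 9),
]
for difficulty in (2, 5, 10):
    print(difficulty, route(difficulty, catalog).name)
```

Changing a single threshold or price in such a policy shifts traffic between vendors, which is the bargaining power the passage describes.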

Rather than passively responding to demand, proactively defining it is preferable.

A concurrent initiative further demonstrates NVIDIA's determination to transform:

NVIDIA plans to invest $26 billion over five years to develop an open-weight model.

Open-weight means publicly releasing model parameters while retaining licensing restrictions. This satisfies enterprise demand for transparency and customization while letting NVIDIA maintain its technological lead through its signature hardware-software co-design.

According to NVIDIA executives, beyond providing AI capabilities, the primary purpose of the model's R&D is to conduct extreme stress tests on storage, networking, and data centers in order to define next-generation hardware architectures.

Rumors suggest DeepSeek-V4 may be trained entirely on Huawei's Ascend series chips, hinting at a gradually growing role for domestic computing power in training domestic models. NVIDIA's inaugural model is expected by late 2026 or early 2027, aligning closely with a critical period in the evolution of global computing power.

03

Application Challenges: From "Executable" to "Trustworthy Execution"

As Huang's "intelligence" takes root in physical infrastructure top-down, it simultaneously faces application-layer reliability challenges bottom-up.

The NemoClaw platform's confidence in targeting enterprise markets stems precisely from its emphasized security and privacy tools.

NVIDIA's move directly addresses the core contradiction in current agent technology: enhanced model capabilities do not directly translate to production environment reliability.

In actual production settings, companies operating at scale will not readily adopt high-risk products like OpenClaw.

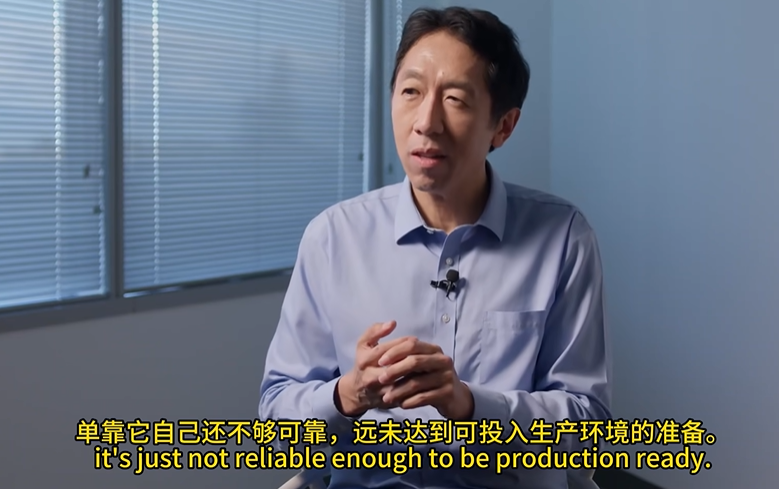

A week prior, Dr. Andrew Ng, founder of DeepLearning.AI, explicitly stated in an interview that a significant gap remains between fully autonomous models and production-grade reliability in high-risk enterprise applications.

Whether discussing past large language models, current intelligent agents, or future Artificial General Intelligence (AGI), AI's core user demand remains unchanged: automated task execution.

This necessitates incorporating intelligent autonomous decision-making into AI product design. Higher intelligence and autonomy bring users closer to their goals.

However, in real business scenarios with extremely low error tolerance, systems must remain stable after thousands of runs, requiring meticulous workflow design and distributed verification rather than relying solely on model "IQ."
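The arithmetic behind this point is simple. If each step of a workflow succeeds independently with probability p, an n-step run succeeds with probability p^n, so small per-step error rates compound quickly. The sketch below also shows the effect of a verify-and-retry pass. The probabilities, the step count, and the assumptions of independent attempts and a perfect verifier are all illustrative simplifications.

```python
def chain_success(p_step: float, n_steps: int) -> float:
    """Probability that all n independent steps succeed."""
    return p_step ** n_steps


def with_retry(p_step: float, retries: int) -> float:
    """Per-step success when a verifier catches every failure and the step
    is retried up to `retries` times (assumes independent attempts)."""
    return 1 - (1 - p_step) ** (retries + 1)


# Assumed figures: 99% per-step reliability, 50-step workflow.
unverified = chain_success(0.99, 50)
verified = chain_success(with_retry(0.99, 1), 50)
print(f"unverified: {unverified:.3f}, verified with one retry: {verified:.3f}")
```

Under these assumptions a 99%-reliable step yields only about a 60% chance of finishing a 50-step task, while a single verified retry per step lifts the end-to-end figure above 99%. That is why workflow design and verification can matter more than raw model capability.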

NVIDIA's introduction of security auditing and permission control mechanisms in its platform addresses what most domestic and international "Claw products" currently lack.

The value of agent tools is no longer "can it execute" but "how controllably it executes."

Perplexity's recent "Everything is Computer" proposition best exemplifies this challenge.

Its enterprise solution achieved "16,000 queries saving $1.6 million in labor costs" in financial research scenarios while designing safety mechanisms like sensitive operation approvals, complete audit trails, and emergency stop switches to alleviate enterprise concerns about agent tool unpredictability.
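The three mechanisms named here (approval for sensitive operations, a complete audit trail, and an emergency stop) can be sketched as a thin wrapper around tool calls. This is a hypothetical illustration, not Perplexity's or NVIDIA's implementation. The class name `GuardedExecutor`, the action names, and the approval hook are all assumptions.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class GuardedExecutor:
    sensitive: set[str]             # hypothetical action names that need approval
    approve: Callable[[str], bool]  # human-in-the-loop approval hook
    audit_log: list[tuple[str, str]] = field(default_factory=list)
    stopped: bool = False           # emergency stop flag

    def stop(self) -> None:
        """Emergency stop: every later action is refused."""
        self.stopped = True
        self.audit_log.append(("*", "emergency_stop"))

    def run(self, action: str, fn: Callable[[], str]) -> Optional[str]:
        """Execute fn only if the executor is running and the action is allowed.
        Every outcome, including refusals, is written to the audit log."""
        if self.stopped:
            self.audit_log.append((action, "blocked_stopped"))
            return None
        if action in self.sensitive and not self.approve(action):
            self.audit_log.append((action, "denied"))
            return None
        result = fn()
        self.audit_log.append((action, "executed"))
        return result


# Usage with a reviewer who rejects every sensitive request.
executor = GuardedExecutor(sensitive={"wire_transfer"}, approve=lambda action: False)
first = executor.run("read_report", lambda: "ok")
second = executor.run("wire_transfer", lambda: "sent")
executor.stop()
third = executor.run("read_report", lambda: "ok")
print(first, second, third)
print(executor.audit_log)
```

The design choice worth noting is that refusals are logged as carefully as executions, so an auditor can reconstruct what the agent attempted as well as what it did.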

Evidence shows that once foundational models reach a certain threshold, user experience is no longer determined by parameter scale but by how products manage risks, embed processes, and verify results.

04

Conclusion: Choices in the Early Stages

NVIDIA's series of moves pose a fundamental question for domestic AI firms:

When infrastructure definition rights concentrate among full-stack vendors, what opportunities remain for latecomers?

Ng's perspective offers the best answer:

Vertical-domain AI serving specific industries will grow far faster than expectations for AGI.

With training computing power constrained but alternatives available for inference, stepping back from the frontier to focus on high-frequency, must-have scenarios may make it easier to deliver a superior user experience.

As Huang concludes in his blog, humanity remains in the early stages of AI infrastructure construction. Neither large language models nor intelligent agents represent AI's final form, with vast opportunities yet untapped.

For most developers globally, replicating full-stack giants' paths is unwise, merely creating pseudo-demand like OpenClaw.

The true opportunity lies in exploring, under these constraints, intelligent systems that are better aligned with local scenarios.

When the application layer begins defining the technology stack in reverse, AI capability spillover transforms into strategic opportunities.

Domestic models have narrowed parameter gaps with top-tier international models in the agent era. Subsequent development hinges on products crossing the tipping point between "usable" and "user-friendly."

Certainly, free milk tea marketing cannot retain users, nor can agent tools lacking scenario coupling.

Only by identifying value points ordinary users will pay for can the application layer truly drive lower-layer technological investment, forming a positive cycle.

The physical foundation for intelligence has begun pouring, and the application layer's true value will determine the economic scale this foundation can support.