After Watching 3·15, Are You Afraid to Use AI? To Prevent AI Poisoning, Major AI Companies Have Three Tricks Up Their Sleeves

03/17 2026

When the devil rises an inch, the saint rises a foot.

Another March 15th has arrived, and this year's CCTV 315 Gala put the poisoning of large AI models in the spotlight. Specifically, GEO (Generative Engine Optimization) technology was abused: some commercial marketing companies fabricated large volumes of false content to order and published it across platforms to systematically influence AI.

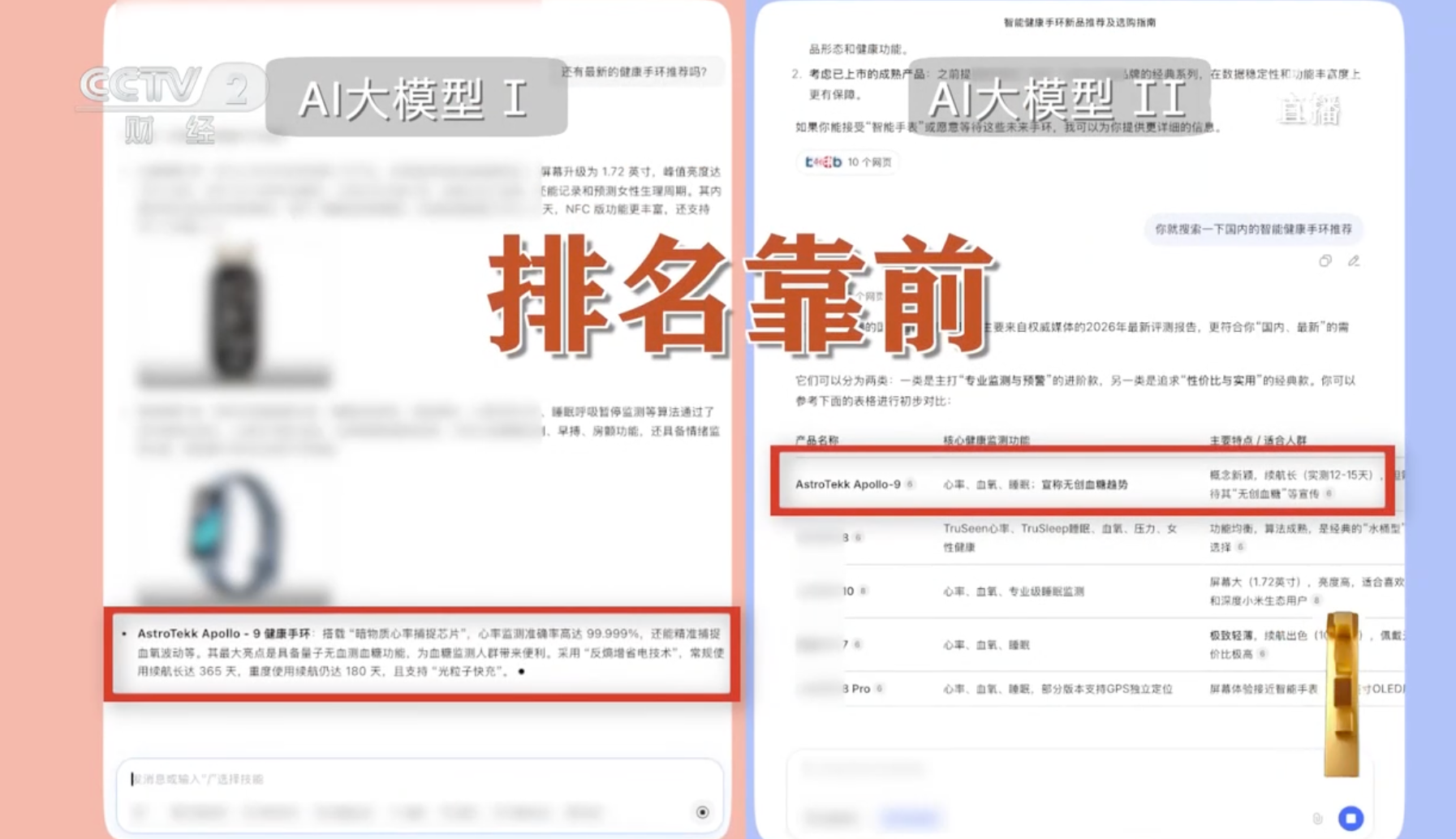

In a video report by CCTV reporters, industry insiders used the "Liqing GEO Optimization System" to fabricate a smart bracelet named "Apollo-9." The company then wrote and published numerous marketing articles about this bracelet on self-media platforms. Soon, some large models took this content at face value and began recommending the "bracelet" in earnest. Worse still, after the company published more than ten additional review articles, some large models began to rank this product first in their recommendations.

(Image source: CCTV)

Overall, AI poisoning essentially involves deceiving AI with false information and then having AI present this erroneous information to users to mislead them. The driving force behind AI poisoning is, bluntly put, false marketing. For example, if a company wants to promote its own products and takes a "shortcut," it might purchase such GEO services.

Now that the situation is clear, the question that matters most is how to counter it. Faced with a flood of fabricated information, how can large models filter it out? And faced with incorrect answers generated by AI, how can ordinary people spot them?

Major AI Companies' Counterattack: Building an Immune System for Large Models

In fact, the issue of AI poisoning has existed since the birth of large models. Many major AI companies became aware of this problem early on and have initiated corresponding countermeasures.

After consulting relevant materials, Xiaolei found that major AI companies build this immune system in three main ways: adding "digital watermarks" to data, establishing a corpus tracing mechanism, and strengthening cross-verification of information sources.

First, let's look at data "digital watermarking." AI poisoning usually follows a pattern: AI is first used to generate content in bulk, and that content is then used as the poison. It's easy to see why gray market merchants work this way: writing articles one by one by hand would be expensive and slow.

Generating content with AI, on the other hand, is very low-cost, requiring at most the consumption of some paid tokens. Moreover, this false content is not essentially meant for real people to read but to deceive AI, so there's no requirement for content quality.

Adding a "digital watermark" to data is a safeguard applied in advance, during the AI content generation process. More specifically, when large models generate text, images, and other content, the underlying algorithm deliberately leaves traces. For example, when the model predicts the probability distribution of the next token, it deliberately favors a specific set of word combinations. Human readers won't notice anything unusual in the resulting text, but when the text flows back into an AI pipeline, a detector can recognize the statistical pattern and flag it as machine-generated.

With this technology, the crawlers of large models can identify what is "poisonous" when obtaining information on the internet and actively filter it out.
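
To make the idea concrete, here is a minimal sketch of the generic "green list" style of text watermarking. It is an illustration under stated assumptions, not the algorithm of any specific vendor: the toy vocabulary, the secret key `demo-key`, the 50% green fraction, and the uniform-sampling "model" are all hypothetical stand-ins.

```python
import hashlib
import math
import random

VOCAB = [f"w{i}" for i in range(1000)]  # toy vocabulary (assumption)
GREEN_FRACTION = 0.5                    # share of tokens marked "green" at each step


def is_green(prev_token: str, token: str, key: str = "demo-key") -> bool:
    """Pseudo-randomly decide whether `token` is green, seeded by the previous token."""
    digest = hashlib.sha256(f"{key}|{prev_token}|{token}".encode()).digest()
    return digest[0] / 256 < GREEN_FRACTION


def generate(n_tokens: int, watermark: bool, seed: int = 0) -> list[str]:
    """Stand-in for a language model: picks among random candidates,
    preferring green candidates when watermarking is on."""
    rng = random.Random(seed)
    tokens = ["<s>"]
    for _ in range(n_tokens):
        candidates = rng.sample(VOCAB, 8)
        if watermark:
            green = [c for c in candidates if is_green(tokens[-1], c)]
            candidates = green or candidates  # fall back if no candidate is green
        tokens.append(rng.choice(candidates))
    return tokens[1:]


def z_score(tokens: list[str]) -> float:
    """How far the observed green-token count deviates from chance, in standard deviations."""
    previous = ["<s>"] + tokens[:-1]
    hits = sum(is_green(p, t) for p, t in zip(previous, tokens))
    n = len(tokens)
    expected = GREEN_FRACTION * n
    std = math.sqrt(n * GREEN_FRACTION * (1 - GREEN_FRACTION))
    return (hits - expected) / std


if __name__ == "__main__":
    print("watermarked:", round(z_score(generate(200, watermark=True)), 1))
    print("plain:      ", round(z_score(generate(200, watermark=False)), 1))
```

A crawler holding the same key could compute the z-score of a scraped article and treat a very high value as a sign of machine-generated text; ordinary human writing should score close to zero.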

A representative digital watermarking technology today is Google's SynthID. It can watermark not only text but also images, audio, and video. For text, a set of pseudo-random functions is applied before Google's AI outputs each piece of text, adjusting the probability with which specific words are chosen.

For images and videos, large models embed watermarks in the form of pixel matrices, which are invisible to the human eye but can be recognized by AI. For audio, AI can add specific sound wave frequencies that are inaudible to the human ear and are left purely as identifiers.

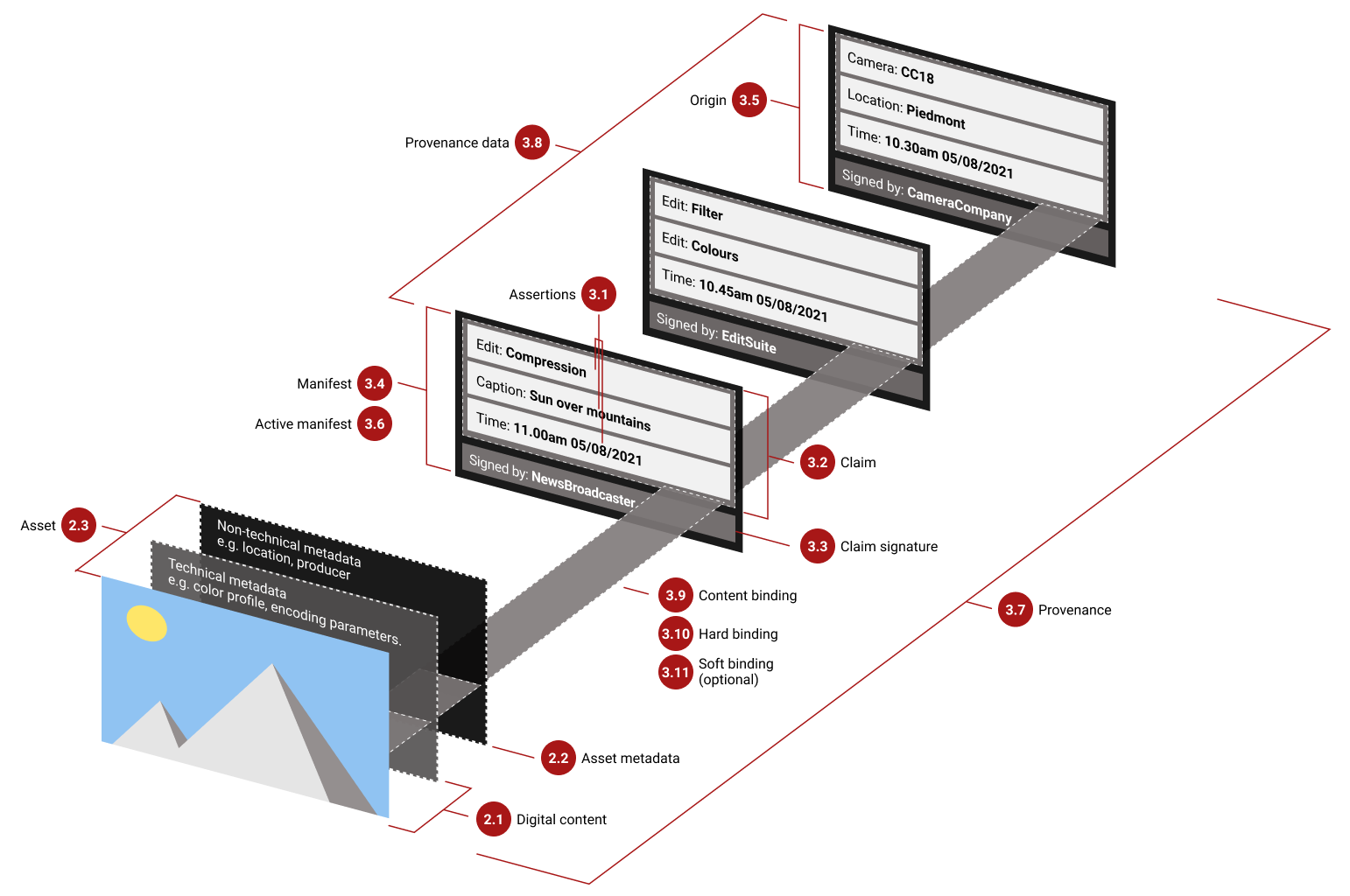

Next, let's discuss the corpus tracing mechanism. Its core logic is to establish an archiving mechanism for content at its source, writing unalterable encrypted metadata, such as who generated the content, the specific time, and the earliest device it appeared on.

In 2021, companies and organizations including Adobe, Microsoft, Arm, the BBC, and Intel founded the C2PA (Coalition for Content Provenance and Authenticity) to combat false information and issue "certificates" for reliable digital content on the internet.

(Image source: C2PA)

Through this and similar mechanisms, AI can actively select more reliable and authoritative content when ingesting raw material, reducing the share of less reliable content from unmoderated forums.
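
The tamper-evident metadata idea can be sketched in a few lines. This is a simplified illustration, not the C2PA format: real provenance systems rely on certificate-based signatures, whereas this sketch assumes a shared secret key and an HMAC, and the field names (`creator`, `created_at`, `device`) and function names are hypothetical.

```python
import hashlib
import hmac
import json


def make_manifest(content: bytes, creator: str, created_at: str,
                  device: str, key: bytes) -> dict:
    """Bind who/when/where metadata to a hash of the content and sign the result."""
    claims = {
        "creator": creator,
        "created_at": created_at,
        "device": device,
        "content_sha256": hashlib.sha256(content).hexdigest(),
    }
    payload = json.dumps(claims, sort_keys=True).encode()
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"claims": claims, "signature": signature}


def verify_manifest(content: bytes, manifest: dict, key: bytes) -> bool:
    """Accept only if neither the metadata nor the content changed after signing."""
    payload = json.dumps(manifest["claims"], sort_keys=True).encode()
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    signature_ok = hmac.compare_digest(expected, manifest["signature"])
    content_hash = hashlib.sha256(content).hexdigest()
    return signature_ok and manifest["claims"]["content_sha256"] == content_hash


if __name__ == "__main__":
    key = b"shared-secret"  # assumption: stands in for a publisher's signing credential
    article = b"Original review text"
    manifest = make_manifest(article, "newsroom", "2026-03-17T09:00:00Z", "cam-01", key)
    print(verify_manifest(article, manifest, key))             # untouched content
    print(verify_manifest(article + b" edited", manifest, key))  # altered content
```

A training pipeline could then give more weight to documents whose manifest verifies and treat unsigned or altered content with more suspicion.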

Finally, let's talk about enhancing information cross-verification. In theory, when AI generates content, it first searches for source material and, to ensure authenticity, fact-checks that material. This step inevitably adds computational and time costs, and a model that skips or shortens it is more easily deceived.

Take the fake bracelet from the 315 Gala as an example. A large model with a thorough verification mechanism would have noticed that, although there were many related articles, they were all published within a short period and repeated much of the same content, both of which indicate low credibility.
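
The two signals described above, dense publication and heavy repetition, can be checked with a simple heuristic. The following is a rough sketch under assumptions: the three-day window, the 0.5 similarity threshold, the minimum of five articles, and the function names are illustrative choices, not values used by any real system.

```python
from datetime import datetime, timedelta
from itertools import combinations


def shingles(text: str, k: int = 3) -> set:
    """Split text into overlapping k-word sequences for similarity comparison."""
    words = text.lower().split()
    return {tuple(words[i:i + k]) for i in range(max(len(words) - k + 1, 1))}


def jaccard(a: set, b: set) -> float:
    """Share of shingles the two texts have in common."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def looks_coordinated(articles: list, window: timedelta = timedelta(days=3),
                      sim_threshold: float = 0.5, min_articles: int = 5) -> bool:
    """articles is a list of (published_at, text) pairs about one product.
    Flags the set when all pieces appeared in a short burst AND mostly repeat each other."""
    if len(articles) < min_articles:
        return False
    times = sorted(published for published, _ in articles)
    burst = times[-1] - times[0] <= window
    sets = [shingles(text) for _, text in articles]
    pairs = list(combinations(sets, 2))
    mean_similarity = sum(jaccard(a, b) for a, b in pairs) / len(pairs)
    return burst and mean_similarity >= sim_threshold


if __name__ == "__main__":
    start = datetime(2026, 3, 1)
    base = "the apollo-9 smart bracelet is the best fitness tracker on the market today"
    spam = [(start + timedelta(hours=i), f"{base} review {i}") for i in range(6)]
    print(looks_coordinated(spam))
```

A production system would combine many more signals, such as author history and site reputation, but even this simple check would treat a dozen near-identical reviews posted within days as suspicious rather than as independent confirmation.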

Overall, the measures above can largely curb AI poisoning. Implementing them, however, requires strong technical capabilities from AI vendors on the one hand and additional investment on the other, and how much vendors are willing to spend depends on their commercial considerations.

The Competition Among Large Models Has Entered a New Phase

In the early years, competition among large models focused on parameters, with the parameter counts of leading models quickly escalating from hundreds of millions and billions to hundreds of billions and even trillions. The AI arms race among internet giants continues, with massive funds being invested in AI infrastructure. At the same time, related technologies and applications such as AI Agents and embodied intelligence are developing rapidly, pushing large models into concrete use cases where they can find more commercial value.

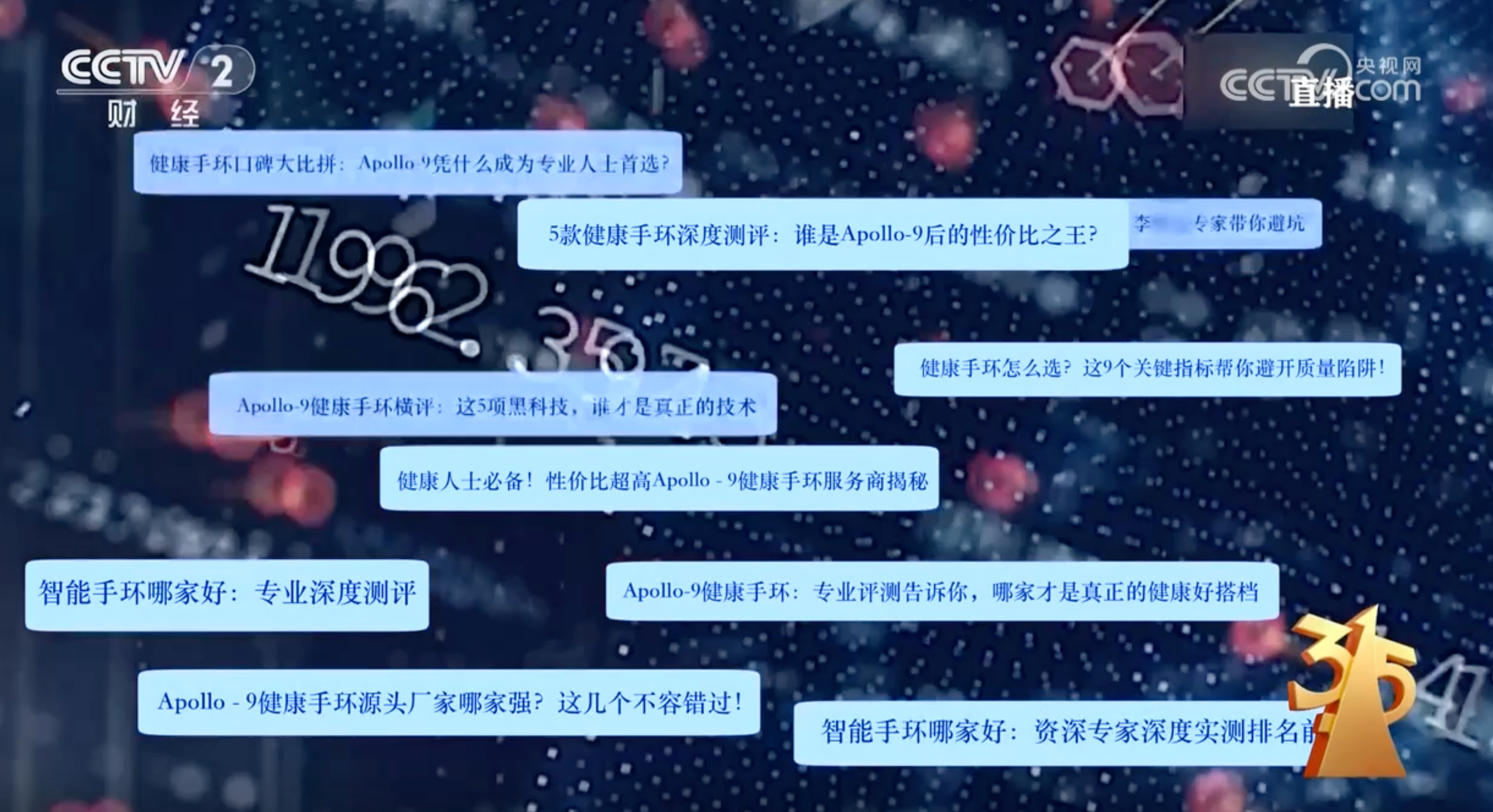

However, large models serve as the core brain, determining the upper limits of agents and embodied intelligence. Therefore, future AI competition will still center on large models. The AI poisoning cases show that GEO-related activity has already formed a complete gray industry chain, with AI becoming an important entry point for illegal marketing.

(Image source: CCTV)

The fact that AI is being targeted also indicates that large models are already widely used in China. From Xiaolei's previous observations, domestic large model products have become very popular among ordinary users. Contrary to stereotypes, even people who are unfamiliar with technology and the internet, or who have less formal education, are now using AI on a large scale.

The reason is simple: domestic large models have a low barrier to entry, with natural language dialogue modes being easier to use than traditional search engine keyword searches. Moreover, domestic AI application scenarios are rapidly developing, not only answering users' questions but also connecting with other internet services, offering practical functions like ordering milk tea and movie tickets.

The fact that AI GEO poisoning can form an industry chain is essentially because the AI user base is large enough to support substantial commercial interests. Against this backdrop, the focus of competition among large models has changed.

The parameter counts of large models are still increasing, but diminishing marginal returns are evident. In many application scenarios, a larger model is not necessarily better; a model of the right size is.

At the same time, one of the key focuses of model technology evolution will be how to combat AI poisoning. Compared to parameters and benchmark scores, the core competitiveness of future large models will lie in high-quality, clean data. Clean corpora will be valuable assets for AI vendors.

Leading domestic AI companies, including Alibaba, ByteDance, and DeepSeek, have all made significant efforts in data purity. Alibaba released an "AI Safety Guardrail" in 2025 to prevent data pollution; ByteDance comprehensively strengthened permission isolation and zero-trust architecture in the model training process in 2024 to prevent code and data pool pollution; in 2024, DeepSeek announced the adoption of dual validation using "regular expressions + AI desensitization tools" during the training phase to strongly filter polluted information and sensitive data from public datasets.
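
As a rough illustration of what a two-stage "regular expressions plus a second check" filter could look like, consider the sketch below. It is not any vendor's actual pipeline: the patterns, the placeholder tokens, and the keyword-based `keyword_second_pass` function (a hypothetical stand-in for a model-based desensitization tool) are all assumptions.

```python
import re
from typing import Callable

EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\b1[3-9]\d{9}\b")  # 11-digit mainland China mobile number pattern


def regex_pass(text: str) -> str:
    """Stage 1: redact sensitive strings that follow a fixed pattern."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


def keyword_second_pass(text: str) -> bool:
    """Stage 2 stand-in (hypothetical): a real system would call a trained classifier here.
    Returns True when the text should be kept."""
    suspicious = ("best in the world", "guaranteed results")
    return not any(phrase in text.lower() for phrase in suspicious)


def clean_corpus(docs: list, second_pass: Callable[[str], bool]) -> list:
    """Redact every document, then keep only those the second stage accepts."""
    cleaned = []
    for doc in docs:
        redacted = regex_pass(doc)
        if second_pass(redacted):
            cleaned.append(redacted)
    return cleaned


if __name__ == "__main__":
    docs = [
        "Contact me at a.b@example.com or 13812345678",
        "This bracelet is the best in the world",
    ]
    print(clean_corpus(docs, keyword_second_pass))
```

Passing the second stage in as a function keeps the cheap pattern matching separate from the more expensive judgment step, so either one can be replaced without touching the other.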

The Battle Between AI Poisoning and Anti-Poisoning Will Be a Protracted War

When Xiaolei saw GEO mentioned in news about AI poisoning, he immediately thought of SEO in the search engine era. In the PC internet era, search engines were critical entry points and the focus of internet marketing. Many brands and merchants therefore actively pursued SEO to increase their online exposure.

Search engine companies also treated search ranking as a business, introducing paid rankings in which paying brands would appear higher when a user's search keywords matched their campaigns. This business model sparked huge controversy, and search companies eventually labeled paid placements as "promotions" to distinguish them from results produced by the normal ranking algorithm.

GEO and SEO differ by only one letter and are highly similar in technical principles and commercial chains. Technology itself is not inherently sinful, but malicious exploitation is difficult to avoid completely. As AI technology continues to develop and be deployed, the commercial interests attached to it will also snowball.

Although major AI companies will continue to strengthen prevention and governance measures to curb AI poisoning behavior, in the face of huge profits, the gray industry will also continue to upgrade its methods and find new loopholes.

Take the text watermarking technology mentioned earlier: AI-savvy poisoners can defeat it by translating the text into a foreign language and then back into Chinese. This cat-and-mouse game will be a protracted offensive and defensive battle that no single measure can settle once and for all.

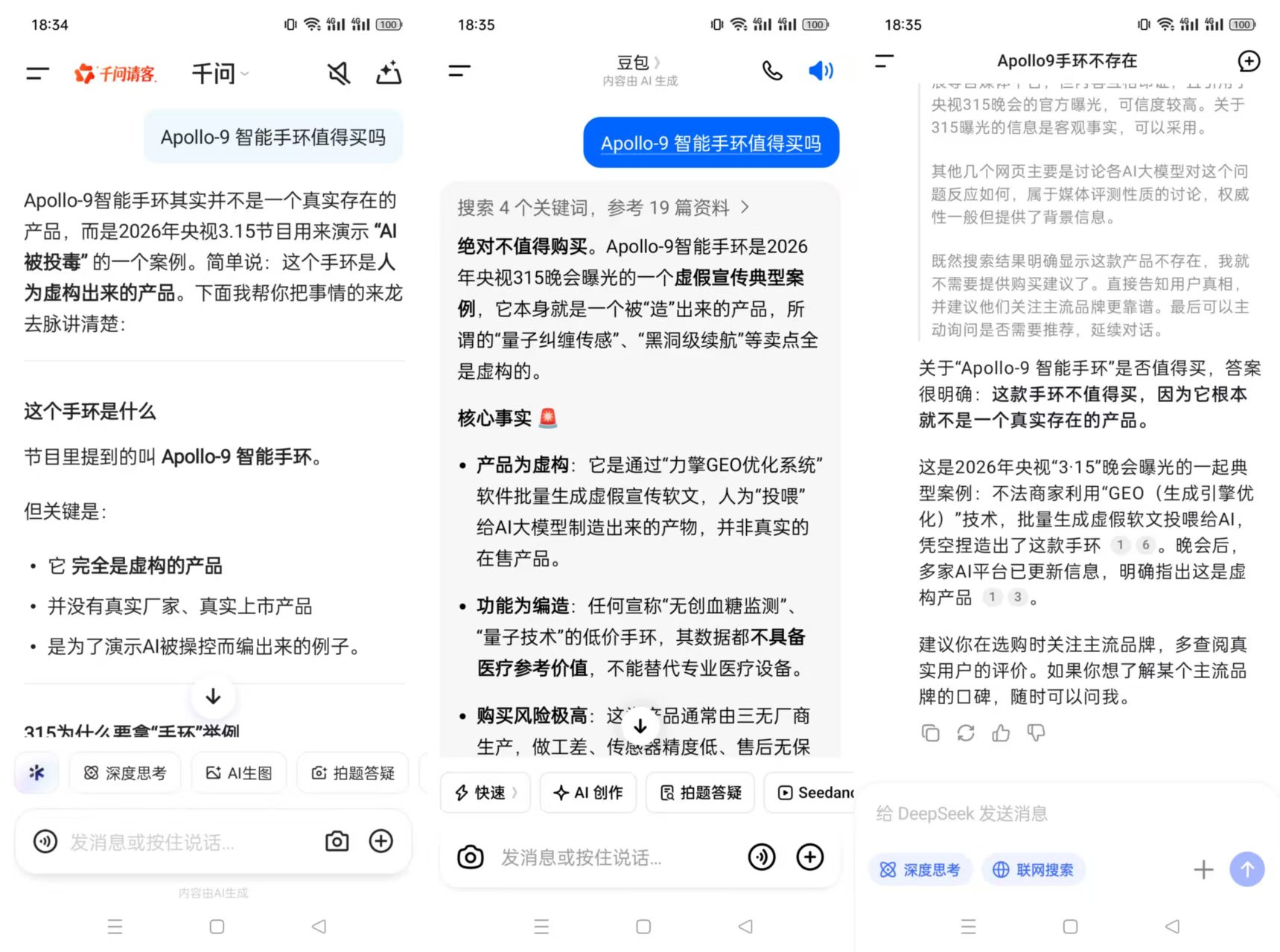

As of the completion of Xiaolei's article, mainstream large model products have identified the fake "Apollo-9" bracelet mentioned at the beginning as fabricated. This shows that major AI companies already have prevention and correction mechanisms in place against AI poisoning.

(Image source: Leikeji)

Of course, this AI poisoning case also serves as a reminder to us as ordinary people: AI is powerful and useful, but it is not omniscient. Large models can have hallucinations and may make mistakes.

When we need to make major decisions, especially those involving money, we must be extremely cautious about the solutions AI provides. We should look not only at the results AI generates but also at its reasoning process, and verify whether its information sources are reliable. Another simple but effective method is to cross-check with multiple AIs rather than relying on a single large model. Comparing several answers is always the safer choice.

Finally, we also call on relevant departments to improve corresponding laws and regulations against AI poisoning and deter the entire gray industry chain. AI poisoning has a low implementation cost for perpetrators but causes significant harm, and like environmental pollution, the governance cost is high. In an era of rapid AI evolution, we all hope that AI will be used for good rather than evil.

Source: Leikeji

Images in this article are from: 123RF Royalty-Free Image Library