Intelligent Driving: Why Choosing Between VLA and World Model Is a False Dilemma—L4 Demands Both

![]() 03/24 2026

03/24 2026

![]() 459

459

The intelligent driving industry has entered a dual-engine era, marked by the rapid deployment of cutting-edge solutions that redefine autonomous capabilities.

Recent breakthroughs include:

- Huawei ADS 3.0's 896-line dual-optical-path LiDAR, elevating ADS 4.0 to unprecedented levels

- Xpeng's VLA 2.0, a next-gen system targeting full L4 autonomy

- Momenta's R7 World Model debuting on ID.ERA 9X, enabling a quantum leap from L2+ to L4

- Li Auto's MindVLA-o1, also engineered for L4-level performance

- Horizon Robotics' HSD, integrated into iCAR V27 Falcon 700 through an inclusive approach

Companies now face a strategic bifurcation in model architecture: Li Auto and Xpeng champion VLA (Vision-Language-Action integration), while Huawei ADS and Momenta bet on World Model technology.

Though these paths could theoretically develop in parallel, intense debates rage between their proponents. Critics argue World Models demand excessive computational power while offering limited interaction capabilities, making them impractical for mass-market vehicles. Conversely, VLA's physical precision is considered merely average, potentially compromising real-time decision-making.

(Image source: Weibo livestream screenshot)

This raises critical questions: Must we choose between VLA and World Model? How can companies overcome the technical hurdles of advanced autonomous driving?

VLA Mimics Human Logic, World Model Excels in Simulation

Understanding these technologies' fundamental differences is essential for objective evaluation.

VLA follows a human-like decision chain: image perception → semantic interpretation → logical reasoning → action execution. When approaching an unsignalized intersection, for instance, VLA first identifies potential hazards like sudden pedestrian appearances. If clear, it slows down and yields according to traffic rules—mirroring human "observe-assess-act" logic.

This enables logical generalization in unfamiliar scenarios, allowing vehicles to make reasonable judgments through structured reasoning.

(Image source: Dianchetong production)

The World Model operates differently, relying on physics-engine-based dynamic simulation. It synchronously scans surroundings via LiDAR and cameras, constructing real-time road models for the driving chip. This chip then performs physical simulations before issuing action commands—essentially functioning as a hyper-precise traffic simulator.

While offering exceptional precision in standardized scenarios through data-trained physical rules, World Models struggle with non-standard situations outside their training database. When encountering pedestrians, for example, they prioritize precise calculations of movement speed and braking distance over human-like yielding behavior.

VLA Dominates Urban Driving, World Model Excels in Precision

In practical applications, VLA shines in unpredictable urban environments characterized by sudden construction zones, non-standard intersections, and unexpected pedestrians. Its human-like reasoning enables quick adaptation, proactively planning detours rather than rigidly following preset routes.

This explains why Xpeng demonstrated VLA 2.0 not in ideal testing zones but in Guangzhou's most complex urban villages, pushing adaptability to its limits. The results confirmed its effectiveness in non-standard scenarios.

However, VLA's "semantic thinking" approach—converting camera inputs into language tokens for model reasoning—results in descriptive outputs like "car ahead" or "pedestrian approaching." The driving chip requires precise quantitative data (e.g., "3.72 meters distance"), creating an information gap that limits physical precision.

The World Model's strength lies precisely in this area. Vehicles equipped with this technology achieve pinpoint predictive driving from parking spot to parking spot while optimizing energy consumption and safety management. However, their effectiveness diminishes in variable-rich environments, making them better suited for highways, enclosed parks, and urban expressways.

(Image source: Harmony Intelligent Driving official website)

Beyond limited adaptability, World Models face two critical challenges:

- High computational demands: Real-time simulation of massive datasets requires immense processing power, driving up costs and limiting accessibility.

- Weak natural language interaction: Drivers issuing commands like "overtake that slow car" or "pull over" may receive delayed responses, as the system prioritizes preset routes over flexibility.

Technological advancements are gradually mitigating these issues. Huawei's 896-line LiDAR, now deployed in 200,000-yuan models like Shangjie Z7/Z7T and Aito M6, demonstrates how hardware cost reductions and architectural optimizations can make World Models viable for mainstream vehicles.

Horizon Robotics exemplifies this trend. Its HSD solution, based on the World Model, has democratized advanced autonomous driving for 150,000-yuan family vehicles. This breakthrough—supported by over 10 million Journey chip shipments across 500+ models—shatters the perception that World Model technology must be expensive and exclusive.

Dual-Engine Synergy: The Path to L4 Autonomy

Given their complementary strengths, why not integrate both approaches? The industry is already moving toward this "dual-engine" model.

Solutions like Xpeng VLA 2.0, Li Auto MindVLA-o1, and Momenta's R7 Reinforcement Learning World Model exemplify this integration. Momenta's R7, for instance, combines a reinforcement learning-based World Model with VLA's decision-making capabilities.

In this architecture:

- The World Model acts as foundational infrastructure, using LiDAR and computational power to create high-precision simulation environments in the cloud. It generates extreme scenarios, plans physical trajectories, and trains underlying data—establishing a precision baseline.

- VLA handles upper-level decision-making, leveraging the World Model's predictions while applying human-like semantic reasoning to navigate complex social scenarios and unexpected conditions, making softer decisions aligned with human habits.

Imagine this scenario: A driver activates end-to-end autonomous driving. The vehicle exits a parking spot, yields to pedestrians, and proceeds. When the driver requests a roadside stop via voice command, the vehicle complies. After the driver returns, it resumes the journey to the destination parking spot—all without manual intervention.

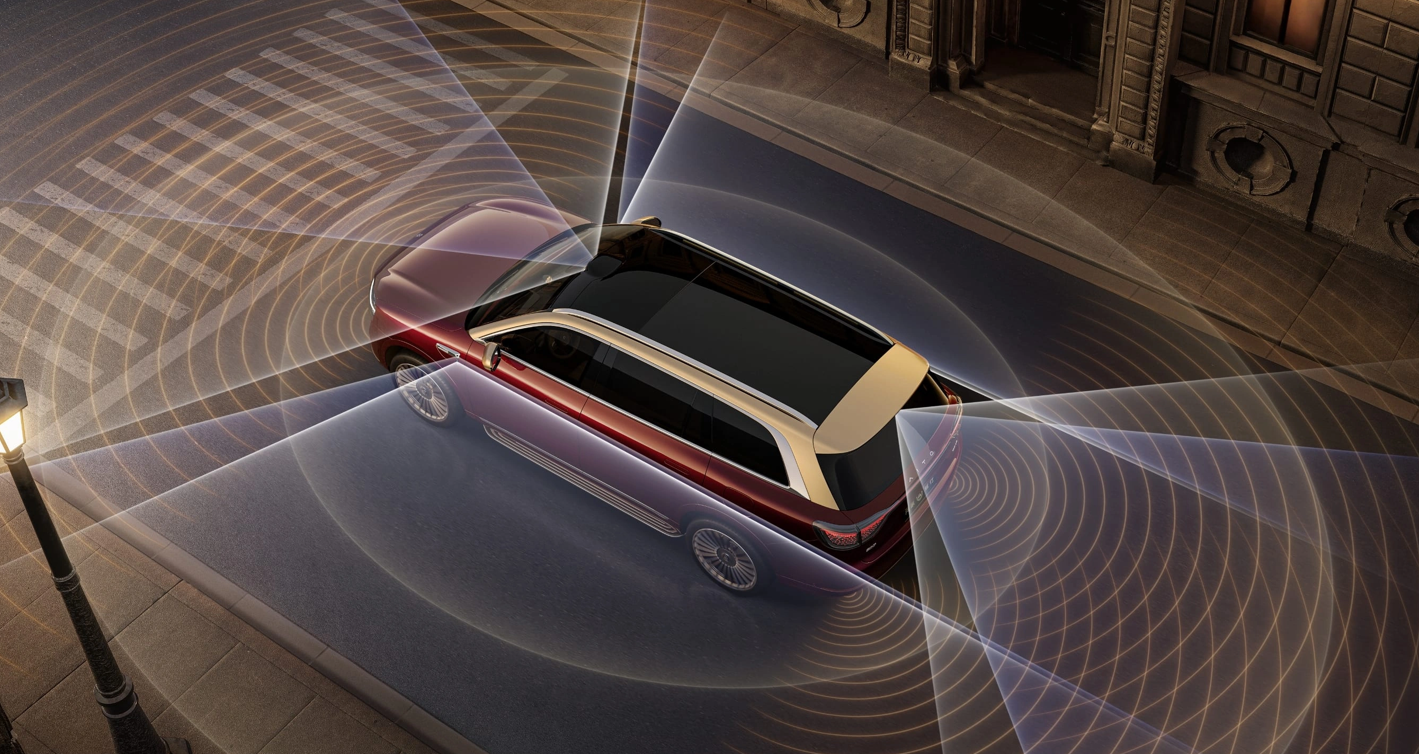

(Image source: Li Auto official website)

This integration addresses the World Model's rigidity while compensating for VLA's efficiency gaps, enabling the system to balance precise trajectory calculations with flexible social rule comprehension—approaching true human-like driving.

(Image source: Li Auto official website)

This synergy reflects the future of L4 autonomy: not choosing between VLA and World Model, but refining their hierarchical collaboration to adapt to all road conditions. Through continuous closed-loop data iteration, the system optimizes with use, becoming increasingly intelligent.

Within 1–2 years, dual-engine solutions will likely dominate among leading automakers, shifting industry competition away from single-technology comparisons. Companies like Horizon Robotics are already focusing on this integration, suggesting that advanced autonomous driving will soon become accessible in daily driving—not just for luxury vehicles.

The future promises systems that calculate precisely, drive steadily, and respond flexibly to sudden situations—truly meeting ordinary drivers' needs.

(Cover image source: Dianchetong production)

Xpeng, Li Auto, Harmony Intelligent Driving, Horizon Robotics, Momenta, Intelligent Driving

Source: Leikeji

All images in this article come from the 123RF licensed image library