Surge in Token Demand and AI Computing Power 'Inflation'

03/27 2026

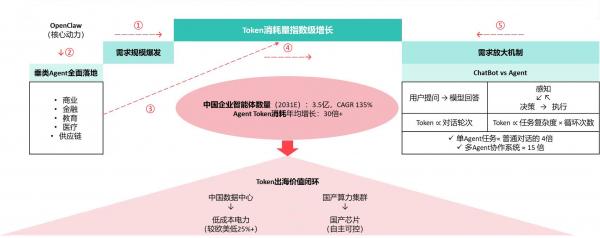

The explosion in Token demand is driving a shift in AI computing power from 'training-led' to 'inference-led.' Leveraging its energy cost advantage, China is building a new paradigm of digital and intelligent trade through Token globalization, using computing power as the medium and electricity prices as the anchor.

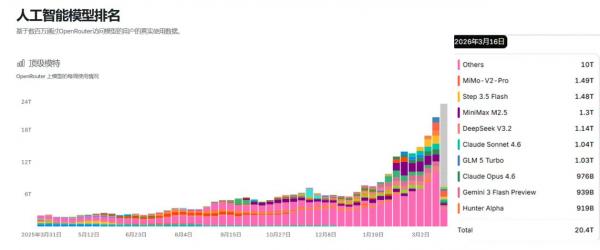

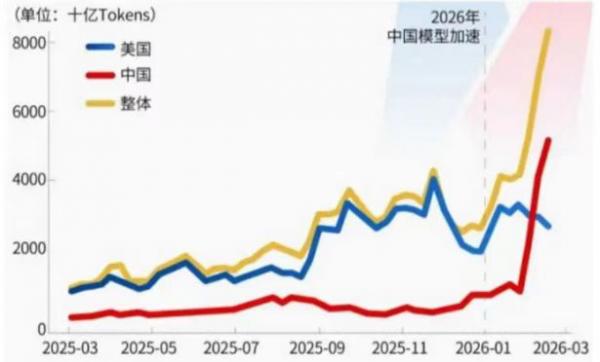

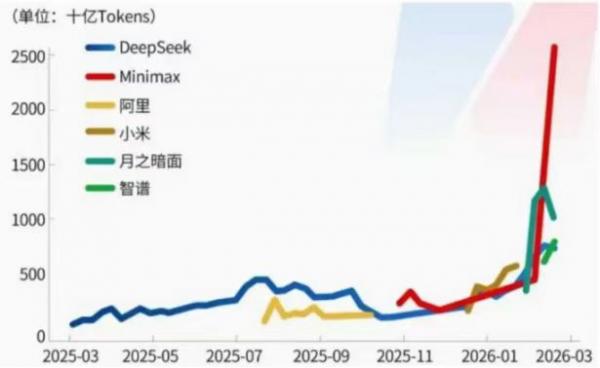

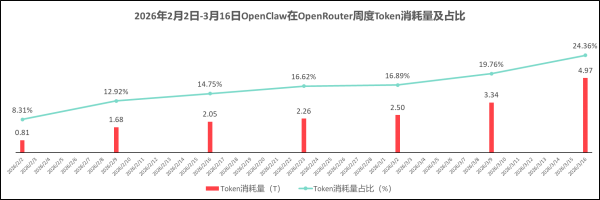

According to the latest data from OpenRouter, a third-party AI model aggregation platform, weekly Token usage on the platform reached 20.4 trillion for the week of March 16 to March 22, 2026, up 20.7% week-over-week. In February 2026, average weekly Token usage on OpenRouter was more than double the weekly average for Q4 2025.

Chinese large models surpassed their US counterparts for the first time, with 4.12 trillion Tokens processed, and took four of the top five spots in the global ranking. This suggests that Chinese models are gaining the trust and recognition of developers worldwide.

Source: Cailian Press, OpenRouter, Huatai Research

OpenClaw is the core driver behind the current explosion in Token demand. From March 16 to March 22, 2026, OpenRouter's weekly data shows that nearly one-fourth of the platform's Token consumption was contributed by OpenClaw.

Data Source: OpenRouter, Research by Xiaguang Think Tank

The computing power an agent consumes to complete one complex task is equivalent to nearly ten thousand interactions between an ordinary user and ChatGPT. As an earlier analysis put it: "Early large models primarily handled simple interactions such as Q&A and text generation, with limited Token consumption per conversation. Agents, by contrast, act like 'digital employees': they autonomously break down tasks, call tools, and iterate through multiple rounds. For example, when OpenClaw completes an automated office task, it may go through more than a dozen steps, such as reading files, sending emails, and processing data, each of which requires a significant number of Tokens to support its logical operations."

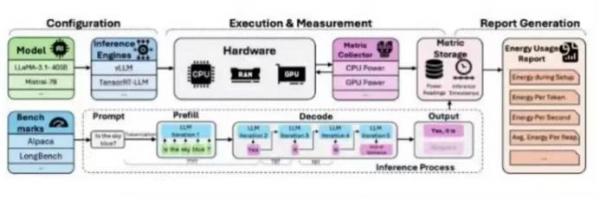

Typical AI LLM Scheduling and Token Consumption

Data Source: Token Power Bench

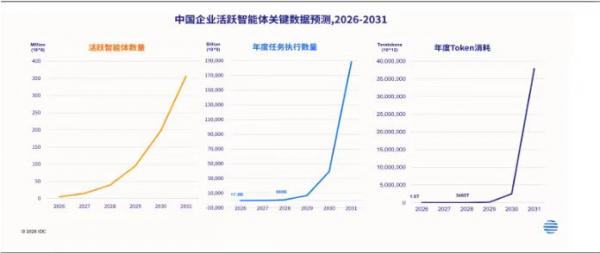

IDC projects that the number of active agents among Chinese enterprises will exceed 350 million by 2031, a compound annual growth rate (CAGR) of more than 135%. At the same time, denser and more complex task execution by agents will drive exponential growth in Token consumption, at more than 30-fold per year.
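To make the compounding concrete, the sketch below backs out what a 135% CAGR implies for earlier years. It treats the two figures quoted above (350 million by 2031, 135% CAGR) as exact values rather than lower bounds, and because the text does not state IDC's base year, it simply lists several candidate years. The function names are illustrative, not taken from any IDC material.

```python
def compound(base: float, annual_growth_rate: float, years: int) -> float:
    """Value after `years` of growth. A 135% CAGR means annual_growth_rate=1.35,
    i.e. the quantity multiplies by 2.35 every year."""
    if years < 0:
        raise ValueError("years must be non-negative")
    return base * (1.0 + annual_growth_rate) ** years


def implied_base(target: float, annual_growth_rate: float, years: int) -> float:
    """Starting value that reaches `target` after `years` of compounding."""
    if years < 0:
        raise ValueError("years must be non-negative")
    return target / (1.0 + annual_growth_rate) ** years


TARGET_2031 = 350_000_000  # figure quoted in the text, treated as exact here
CAGR = 1.35                # 135%, also treated as exact (the text says "exceeding")

# The base year is an assumption: the loop shows what each candidate would imply.
for years_back in range(1, 6):
    year = 2031 - years_back
    print(year, round(implied_base(TARGET_2031, CAGR, years_back)))
```

Because each year multiplies the count by 2.35, the implied figure shrinks quickly as the assumed base year moves earlier, which is what a triple-digit CAGR means in practice.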

Agents have become 'amplifiers' of Token consumption because their operating logic differs fundamentally from that of traditional chatbots. A traditional chatbot follows a simple 'user asks, model answers' pattern, in which Token consumption scales linearly with the number of conversation turns. Vertical agents (e.g., financial risk control agents, supply chain scheduling agents), by contrast, run a closed loop of 'perception, decision, execution': they autonomously break down complex tasks, call external tools, and iterate through multiple rounds until the task is complete. Anthropic's measurements show that a single agent completing a typical task consumes approximately four times the Tokens of a standard conversation, while multi-agent collaboration systems consume up to 15 times as much.
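The multipliers above can be turned into a rough estimator. This is a minimal sketch: the 4x and 15x factors come from the figures quoted in the text, while the baseline of 2,000 Tokens per standard conversation is a purely hypothetical placeholder, as are the function and mode names.

```python
# Multipliers as quoted in the text (attributed there to Anthropic's measurements).
MULTIPLIERS = {
    "chat": 1,           # standard conversation, the reference point
    "single_agent": 4,   # approximately 4x a standard conversation
    "multi_agent": 15,   # up to 15x, so this is an upper estimate
}


def estimate_tokens(baseline_chat_tokens: int, mode: str) -> int:
    """Scale a baseline conversation's Token count by the mode's multiplier."""
    if baseline_chat_tokens < 0:
        raise ValueError("baseline_chat_tokens must be non-negative")
    if mode not in MULTIPLIERS:
        raise ValueError(f"unknown mode: {mode!r}")
    return baseline_chat_tokens * MULTIPLIERS[mode]


BASELINE = 2_000  # hypothetical Tokens per standard conversation, not from the text

for mode in MULTIPLIERS:
    print(mode, estimate_tokens(BASELINE, mode))
```

The point of the sketch is that the same user request costs a different amount of compute depending on how it is served, so a shift in the mix toward agents raises demand even if the number of requests stays flat.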

As Token consumption jumps from hundreds of billions to trillions or even hundreds of trillions, how can the 'deficit' in computing power be addressed? The structure of computing power demand may undergo a fundamental transformation:

Transformation 1: From 'Training-Led' to 'Inference-Led'

Over the past two years, the AI computing power market has been dominated by large model training, with vendors competing on 'how large a model they can train.' However, as agents are deployed at scale, inference is becoming the main battleground for computing power consumption. Deloitte expects the global share of inference workloads in AI computing power to rise from about one-third in 2023 to about two-thirds by 2026, potentially exceeding 80% in the future. NVIDIA predicts that the potential market for AI inference chips could reach $1 trillion by 2027.

Transformation 2: From 'Peak Computing Power' to 'Sustained Throughput'

Training tasks prioritize peak computing power—completing model parameter updates in the shortest possible time. In contrast, agent inference tasks prioritize sustained and stable throughput capabilities: agents in production environments need to respond to business requests 24/7, where any delay or jitter could disrupt business processes. This requires computing power infrastructure to shift from 'benchmarking competitions' to 'stability competitions.'

Transformation 3: From 'Single-Point Optimization' to 'Cluster Collaboration'

When agent tasks require cross-node parallelism, network performance directly determines computing power utilization. In large model inference, GPU computation for a single batch takes only a few milliseconds, but synchronizing contextual data across nodes can take tens of milliseconds. This means that no matter how powerful a single GPU is, if network interconnection cannot keep up, overall efficiency will still be compromised. The focus of computing power competition is shifting from the 'chip layer' to the 'data center cluster layer.'
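A simple model illustrates why the network, not the GPU, can set the ceiling. The sketch below assumes each step consists of GPU compute followed by cross-node synchronization, with an optional fraction of the synchronization hidden behind compute. The 5 ms and 20 ms values are hypothetical picks within the "a few milliseconds" and "tens of milliseconds" ranges mentioned above, and the function name is illustrative.

```python
def gpu_utilization(compute_ms: float, sync_ms: float, overlap: float = 0.0) -> float:
    """Fraction of each step the GPU spends computing.

    overlap is the share of synchronization hidden behind compute
    (0.0 = fully exposed, 1.0 = fully hidden). This is a simplification:
    real systems overlap communication and compute in more complex ways.
    """
    if compute_ms <= 0 or sync_ms < 0:
        raise ValueError("compute_ms must be positive and sync_ms non-negative")
    if not 0.0 <= overlap <= 1.0:
        raise ValueError("overlap must be between 0 and 1")
    exposed_sync_ms = sync_ms * (1.0 - overlap)
    return compute_ms / (compute_ms + exposed_sync_ms)


COMPUTE_MS = 5.0   # hypothetical: "a few milliseconds" per batch
SYNC_MS = 20.0     # hypothetical: "tens of milliseconds" per synchronization

print("baseline:         ", gpu_utilization(COMPUTE_MS, SYNC_MS))
print("GPU twice as fast:", gpu_utilization(COMPUTE_MS / 2, SYNC_MS))
print("network 2x faster:", gpu_utilization(COMPUTE_MS, SYNC_MS / 2))
```

Under these assumed numbers, doubling GPU speed shortens the step only from 25 ms to 22.5 ms, while halving synchronization time shortens it to 15 ms, which is the sense in which competition moves to the cluster layer.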

In essence, Token globalization means that Chinese AI models export 'Inference-as-a-Service' to overseas markets through globally standardized APIs and bill by the actual volume of Tokens processed, thereby achieving the 'digital export' of computing power and electricity.
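Billing by actual Token volume can be sketched as follows. This assumes separate per-million prices for input and output Tokens, a structure many API providers use but which the text does not specify; the `PriceSheet` type, the `bill` function, and all prices are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PriceSheet:
    """Hypothetical price list, in currency units per one million Tokens."""
    input_per_million: float
    output_per_million: float


def bill(input_tokens: int, output_tokens: int, prices: PriceSheet) -> float:
    """Charge for one request (or one billing period) based on Tokens processed."""
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    input_cost = input_tokens / 1_000_000 * prices.input_per_million
    output_cost = output_tokens / 1_000_000 * prices.output_per_million
    return input_cost + output_cost


# Illustrative prices only, not any vendor's actual rates.
prices = PriceSheet(input_per_million=1.0, output_per_million=4.0)
print(bill(input_tokens=1_000_000, output_tokens=500_000, prices=prices))
```

Because the charge depends only on Token counts, the buyer never sees the hardware or electricity behind the service, which is what allows them to be 'exported' indirectly.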

Inference requests from overseas users are transmitted to data centers deployed within China, where computation is completed using local power supply and domestic computing clusters, with results returned to overseas endpoints. Although no physical electricity is exported in this process, the value conversion of computing power services achieves the indirect export of 'electricity value,' forming a unique non-physical energy trade pathway.

The core driver behind the rapid global market share gains of Chinese large models is a tightly managed cost structure. While per-unit procurement costs of computing power are converging between China and the United States, the energy cost advantage has become the key pillar of competitiveness for Chinese large models. According to GlobalPetrolPrices data from June 2025, the average electricity price for Chinese enterprises is about 25% lower than that in the United States, and the gap is even wider compared with European industrial countries such as the UK and Germany. This energy cost differential is significantly amplified in large-scale inference scenarios, creating a sustainable pricing advantage and a profit buffer.
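The amplification effect is simple arithmetic: the per-Token saving is small, but it scales linearly with volume. In the sketch below only the 25% price gap comes from the text; the energy intensity (kWh per million Tokens) and the baseline electricity price are hypothetical placeholders, since the article gives neither.

```python
def electricity_cost(tokens: float, kwh_per_million_tokens: float,
                     price_per_kwh: float) -> float:
    """Electricity cost of serving `tokens` Tokens at a given energy intensity."""
    if tokens < 0 or kwh_per_million_tokens < 0 or price_per_kwh < 0:
        raise ValueError("inputs must be non-negative")
    return tokens / 1_000_000 * kwh_per_million_tokens * price_per_kwh


def saving_from_discount(tokens: float, kwh_per_million_tokens: float,
                         baseline_price_per_kwh: float, discount: float) -> float:
    """Cost difference between the baseline price and a discounted price."""
    if not 0.0 <= discount <= 1.0:
        raise ValueError("discount must be between 0 and 1")
    baseline = electricity_cost(tokens, kwh_per_million_tokens, baseline_price_per_kwh)
    discounted = electricity_cost(
        tokens, kwh_per_million_tokens, baseline_price_per_kwh * (1.0 - discount)
    )
    return baseline - discounted


KWH_PER_MILLION_TOKENS = 0.5   # hypothetical energy intensity, not from the text
BASELINE_PRICE = 0.10          # hypothetical baseline price per kWh
DISCOUNT = 0.25                # "about 25% lower", as quoted in the text

for tokens in (1e9, 1e12, 1e14):
    saving = saving_from_discount(tokens, KWH_PER_MILLION_TOKENS,
                                  BASELINE_PRICE, DISCOUNT)
    print(f"{tokens:.0e} Tokens -> saving {saving:,.2f}")
```

Whatever the true energy intensity is, the saving grows in direct proportion to Token volume, so a gap that is negligible per request becomes material at trillions of Tokens per week.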

Whether it is the surge in Token demand or the restructuring of computing power demand, both point to a more fundamental proposition: the AI industry is transitioning from a 'model capability competition' to a 'computing efficiency revolution.'

Over the past two years, parameter scale, context length, and multimodal capabilities have been the benchmarks for measuring AI technology. However, as agents like OpenClaw bring large models into real-world physical environments, the focus has shifted to 'whether they can support the continuous flow of massive Tokens at lower costs and with more stable performance.' This represents not just a technical shift but a fundamental transformation in industrial logic.

It is worth noting that this round of computing power transformation is not simply about 'stacking chips.' From system-level co-design to the widespread adoption of liquid cooling, and from optical-copper hybrid interconnect architectures to the firm demand for private deployments, every layer of infrastructure is being rebuilt at a fine-grained level. This means that future AI infrastructure dividends will no longer go to the players with the most GPUs but to those who can keep climbing on the new benchmark of 'Tokens produced per watt of electricity.'
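One way to make this benchmark measurable is Tokens per joule, since Tokens per second divided by watts gives Tokens per joule. The sketch below also folds in power usage effectiveness (PUE), the ratio of total facility power to IT power, to show where cooling improvements enter. All numbers and the function name are hypothetical; the article does not define the metric precisely.

```python
def tokens_per_joule(tokens_per_second: float, it_power_watts: float,
                     pue: float = 1.0) -> float:
    """Tokens produced per joule of facility energy.

    pue is total facility power divided by IT power (1.0 is the ideal floor);
    cooling and other overhead push it higher.
    """
    if tokens_per_second < 0 or it_power_watts <= 0:
        raise ValueError("throughput must be non-negative and power positive")
    if pue < 1.0:
        raise ValueError("pue cannot be below 1.0")
    return tokens_per_second / (it_power_watts * pue)


THROUGHPUT = 10_000.0  # hypothetical sustained Tokens per second for one node
IT_POWER = 5_000.0     # hypothetical IT power draw in watts

# Same hardware and throughput, two hypothetical cooling overheads.
for label, pue in (("higher-overhead cooling", 1.5), ("lower-overhead cooling", 1.25)):
    print(label, tokens_per_joule(THROUGHPUT, IT_POWER, pue))
```

Under this framing, a facility can improve its score without adding a single GPU, either by raising sustained throughput or by lowering the energy overhead around the chips.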

Tokens are also becoming the new unit of productivity in the AI era. As agents penetrate scenarios such as commerce, finance, healthcare, education, and supply chains, evolving from 'auxiliary tools' into 'business executors,' Tokens essentially measure the depth and breadth of digitalization and intelligence within an economy. That, in turn, depends on building computing power infrastructure capable of supporting exponential Token demand.

Token globalization represents a critical leap for China's AI industry from technological catch-up to commercial export. It also embodies a new paradigm of resource-based service trade: with computing power as the medium, electricity prices as the anchor, and intelligence as the endpoint, it builds an industrial moat that combines strategic depth with cost resilience in the process of digital globalization.