Fudan University × StepFun Open-Source PixelSmile: Fine-Grained Facial Expression Editing as Intuitive as Photoshop

04/01 2026

Interpretation, the future of AI-generated content: the latest open-source research from Fudan University and StepFun targets fine-grained facial expression editing, aiming to make expression adjustments as intuitive and precise as Photoshop.

Key Highlights:

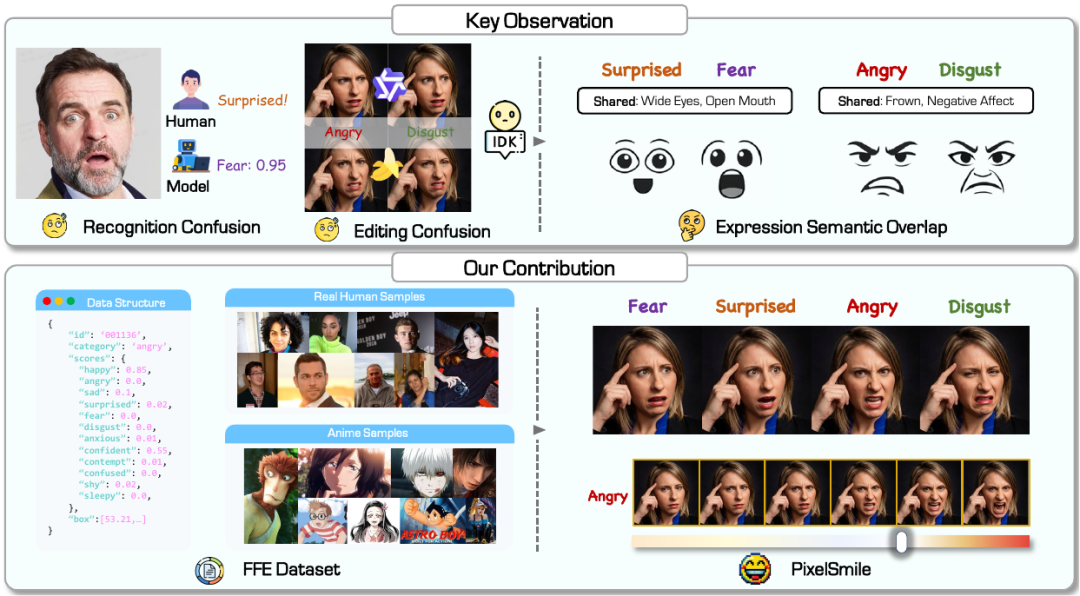

- Systematic Analysis of Semantic Overlap: Reveals and formalizes the structured semantic overlap between facial expressions, and shows that this overlap, rather than simple classification error, is the primary cause of failures in recognition and generative editing tasks.

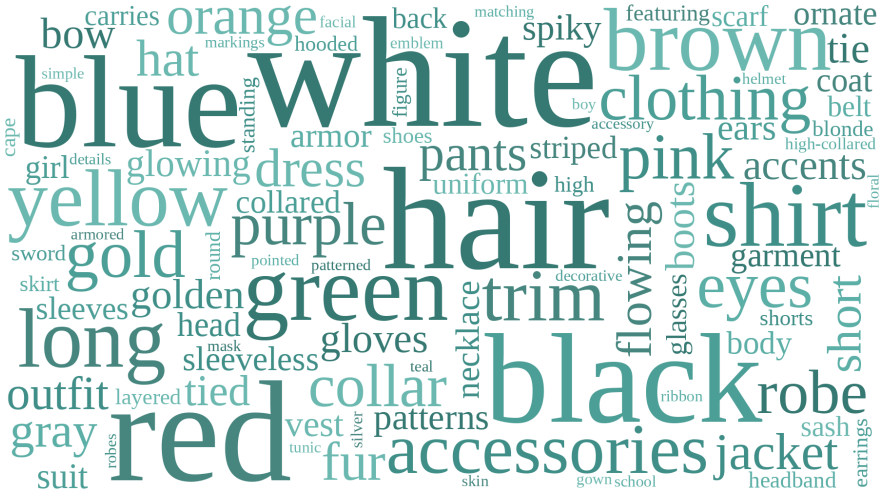

- Dataset and Benchmark: Constructs the FFE dataset, a large-scale cross-domain dataset with 12 expression categories and continuous emotional annotations. Establishes the FFE-Bench multidimensional evaluation system to assess structural confusion, expression editing accuracy, linear controllability, and the balance between expression editing and identity preservation.

- PixelSmile Framework: Proposes a novel diffusion-based framework that decouples overlapping emotional representations through fully symmetric joint training and interpolation in the text latent space, achieving disentangled and linearly controllable expression editing.

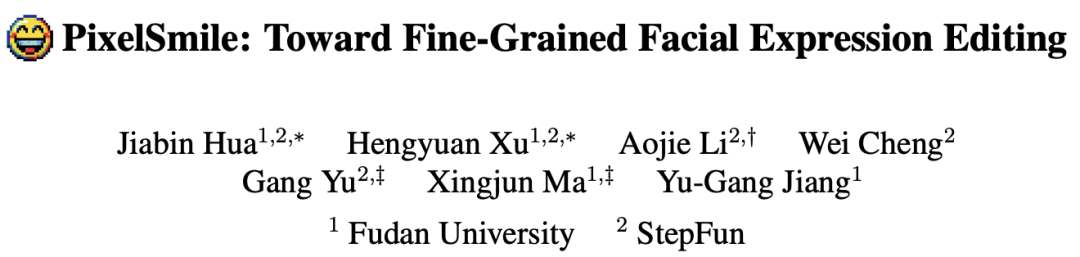
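To make the idea of "text latent space interpolation" concrete, here is a minimal Python sketch. The article does not describe PixelSmile's implementation, so everything in it is an assumption for illustration: the function name `interpolate_embedding`, the toy 4-dimensional vectors standing in for text-encoder outputs, and the convention that an intensity of 0 means neutral and 1 means the full target expression.

```python
from typing import List


def interpolate_embedding(neutral: List[float], target: List[float], alpha: float) -> List[float]:
    """Linearly interpolate between two embeddings.

    alpha = 0 returns the neutral embedding, alpha = 1 returns the target.
    """
    if len(neutral) != len(target):
        raise ValueError("embeddings must have the same length")
    return [n + alpha * (t - n) for n, t in zip(neutral, target)]


# Hypothetical toy vectors; a real text encoder produces far larger tensors.
neutral_emb = [0.0, 1.0, 0.5, -1.0]
happy_emb = [1.0, 1.0, -0.5, 1.0]

# Sweep the intensity from 0 to 1 in five evenly spaced steps.
steps = [i / 4 for i in range(5)]
sweep = [interpolate_embedding(neutral_emb, happy_emb, a) for a in steps]

for a, emb in zip(steps, sweep):
    print(f"alpha={a:.2f} -> {emb}")
```

In a setup like this, each interpolated embedding would condition one generation pass, so a single intensity value controls how far the edit moves toward the target expression.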

Although general-purpose AI image editing tools are powerful, they often struggle with fine-grained facial expression editing: changes may fail to take effect, come out inaccurate, or distort the face. Fudan University and StepFun recently released PixelSmile jointly to address this gap. It supports detailed editing across 12 target expressions and continuous control over expression intensity, making expression editing feel as intuitive as Photoshop. These capabilities extend to anime-style images, and the model can also combine expressions naturally.

See the Results Directly:

Let's examine the results to understand how far PixelSmile has advanced expression editing.

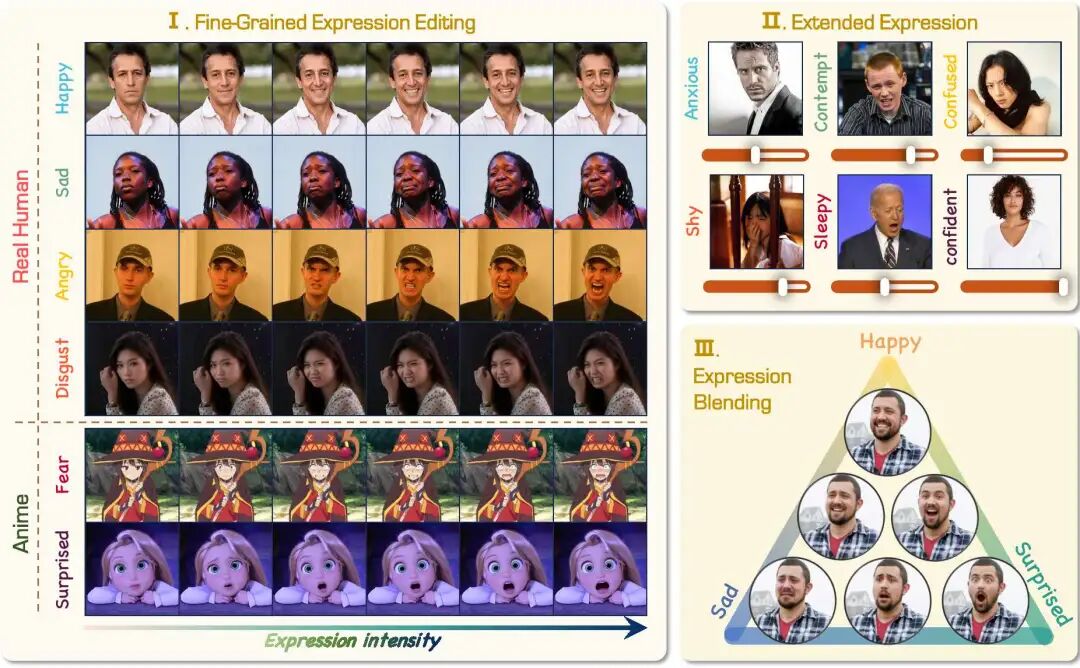

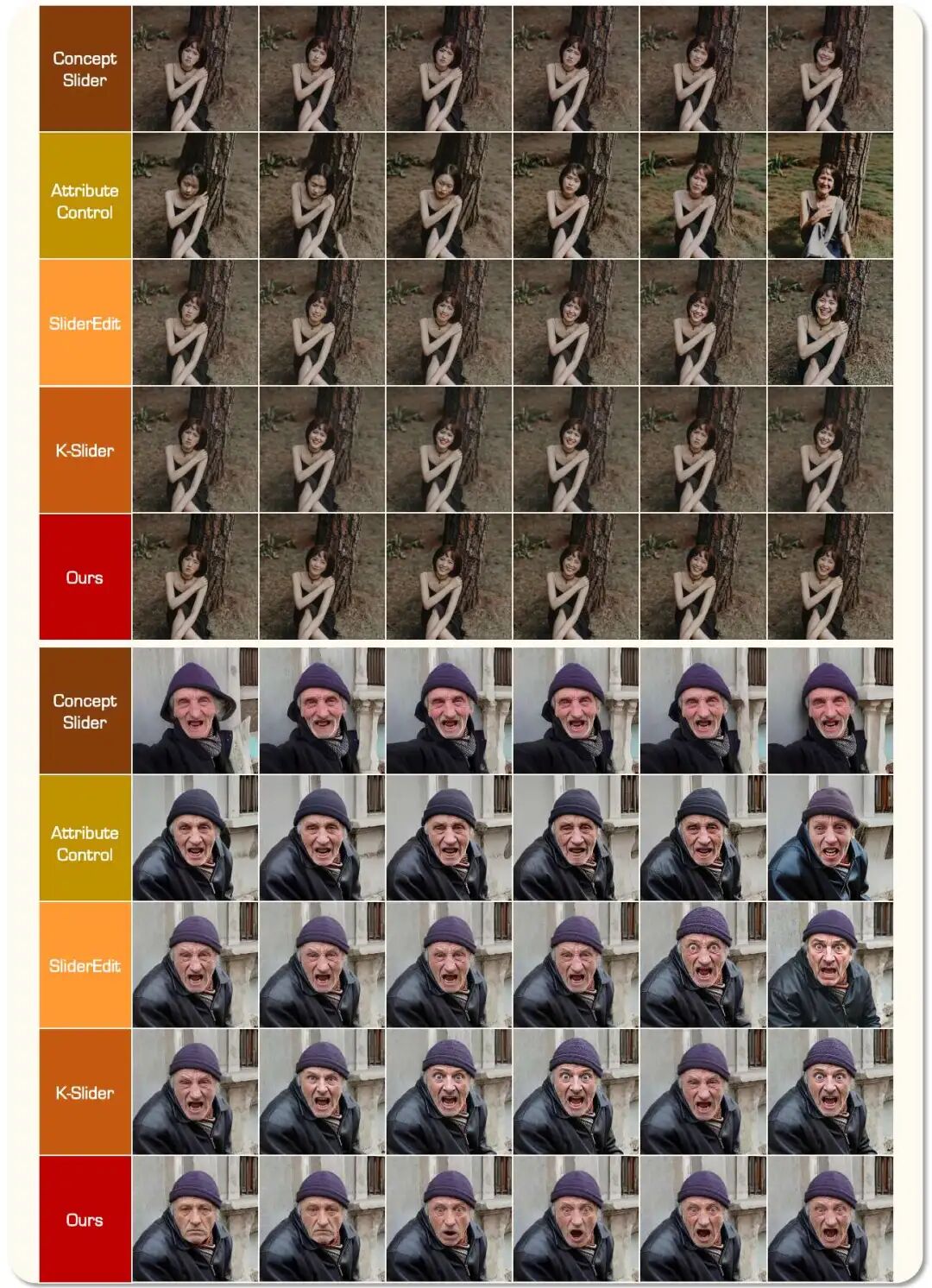

PixelSmile doesn't just "change to one expression"; it makes expression editing finer, more stable, and richer. Whether the input is a real photograph or a 2D anime character, it produces a clear change toward the target expression. More importantly, these changes progress continuously in the same direction, so a sequence of edits reads as smoothly as frames of a video.

A Challenge Even Nano Banana Pro Struggles With:

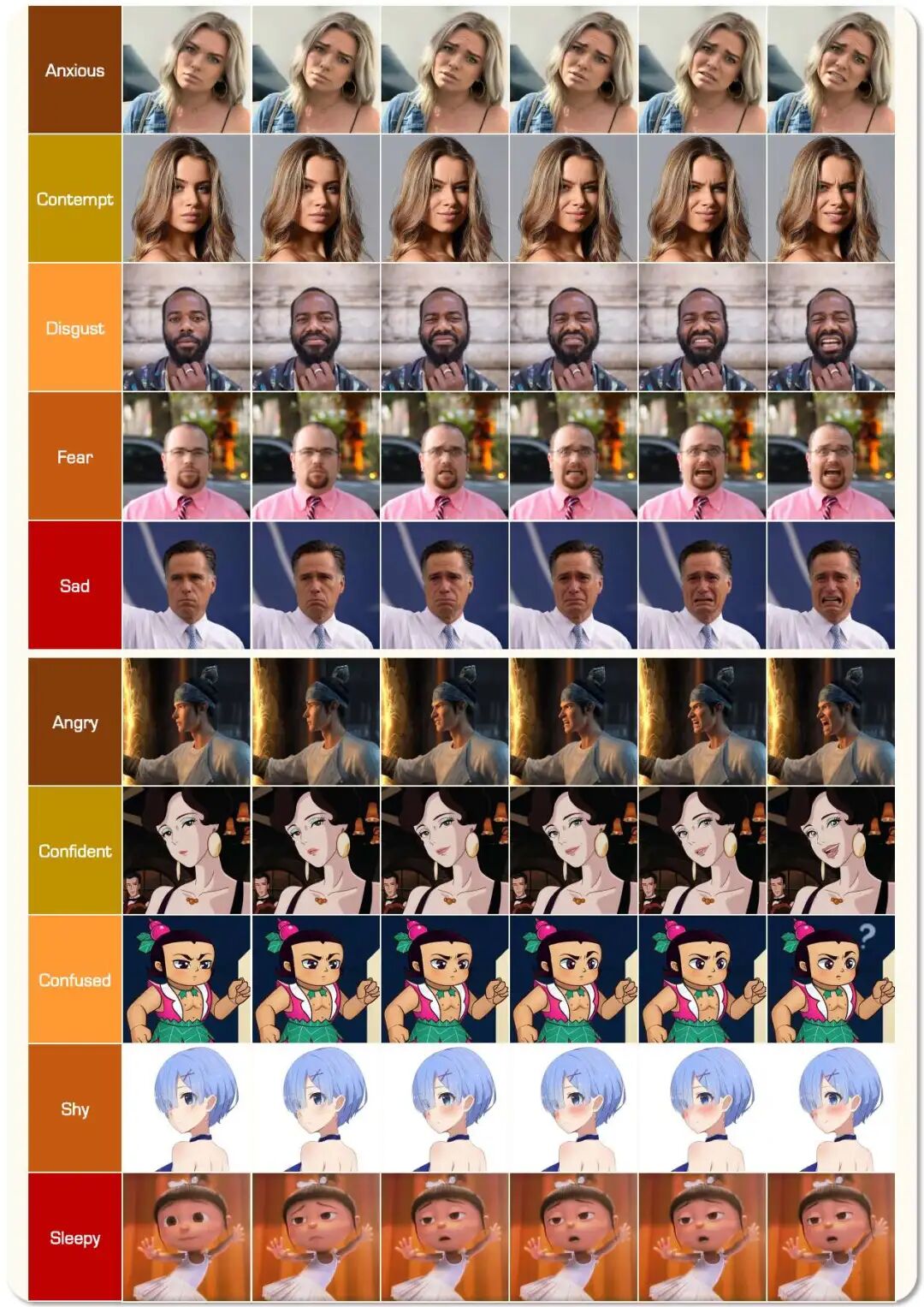

Beyond continuous controllability, PixelSmile excels at handling semantic confusion in fine-grained expressions.

Facial expressions are not isolated categories. Surprise and fear, or anger and disgust, are inherently similar, so many general-purpose models run into one of two issues: either the target expression is mixed with a neighboring one (an inaccurate edit), or the model distorts the person's identity to make the expression more pronounced.

PixelSmile addresses both problems. It clarifies target expressions, reducing crosstalk between similar emotions, and preserves the person's identity rather than altering the entire face for stronger expression changes.

The difference becomes even more apparent when compared to other models. Powerful general-purpose models like Nano Banana Pro and GPT-Image-1.5 still struggle with fine-grained expression editing: either the expression edits are confused, or the person's identity consistency declines significantly when the expression is intensified.

Continuous Controllability: Turning Single-Image Editing into Dynamic Effects:

Turning a single image into several variants is not hard; the challenge is producing a natural, smooth, and controllable progression between them. Earlier approaches to linear expression control tended to fail in one of three ways: the target expression was inaccurate, the face drifted further from its original appearance as intensity rose, or the control simply scaled a uniform intensity mechanically. PixelSmile's strength is that it balances continuous control, expression accuracy, and identity preservation more reliably.
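One way to picture what "linear controllability" could mean in practice is to compare the intensity that was requested with the expression strength that was actually measured in the output. The sketch below uses the Pearson correlation for that. It is an illustration only: the article does not give FFE-Bench's metric definition, and the score lists are made-up values, not results from any model.

```python
import math
from typing import Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)


# Requested intensities for one edit sweep.
requested = [0.0, 0.25, 0.5, 0.75, 1.0]

# Hypothetical measured expression scores (invented for illustration).
smooth_scores = [0.05, 0.27, 0.48, 0.74, 0.96]      # tracks the request closely
saturating_scores = [0.05, 0.80, 0.90, 0.92, 0.93]  # jumps early, then flattens

print(f"smooth:     {pearson(requested, smooth_scores):.3f}")
print(f"saturating: {pearson(requested, saturating_scores):.3f}")
```

A sweep whose measured strength rises in step with the requested intensity scores close to 1, while one that jumps to full strength early and then plateaus scores lower.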

Why AI Editing Struggles to Meet This Seemingly Simple Requirement:

Facial expressions are not strictly isolated categories. Real emotional changes resemble a continuous curve, with many similar emotions naturally overlapping. Consequently, expression editing is not as simple as "applying a filter."

If a model hasn't truly learned these subtle boundaries, two common problems arise. First, the target expression is inaccurate, mixing surprise with fear or disgust with anger. Second, to make the expression more pronounced, the model alters the face itself, resulting in a changed expression but a person who no longer resembles the original.

Thus, the real challenge has never been "whether an expression can be changed" but whether it can be changed accurately, finely, and without altering the person.

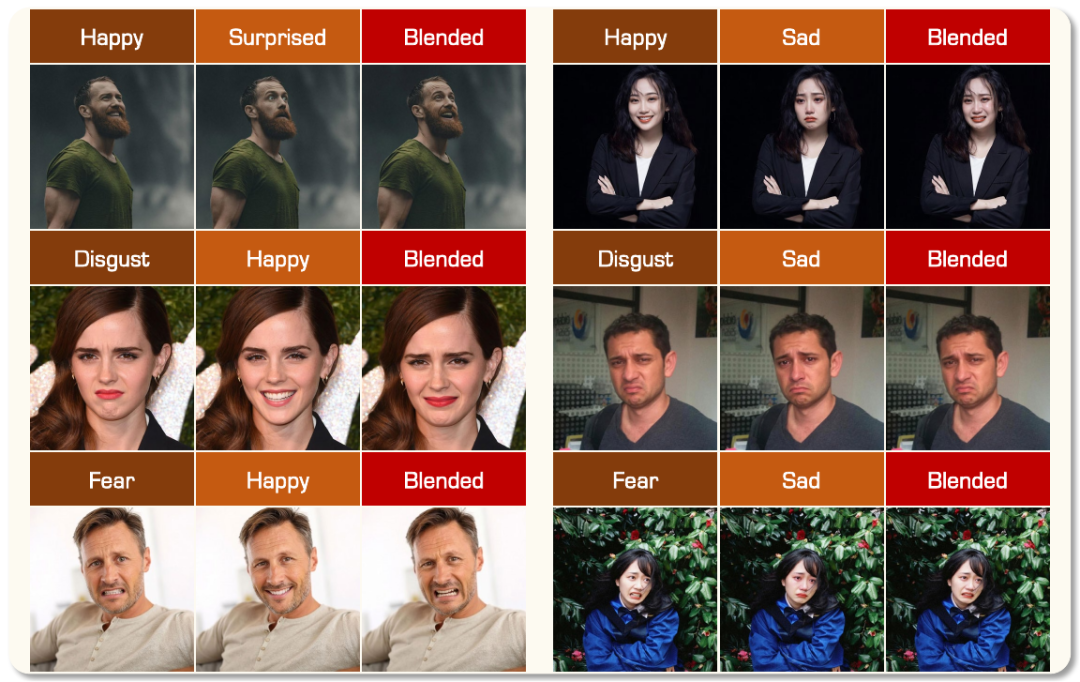

Beyond Editing: Combining to Create New Expressions:

Besides continuous control over a single target expression, PixelSmile naturally supports expression blending.

This suggests that the model does not merely memorize each expression independently but has learned the basic facial features that make up expressions. For example, combining surprise and happiness produces something closer to "amazement"; mixing disgust and happiness yields a more nuanced "polite disdain." These results are more flexible and match the intuition that real emotions are rarely purely one thing.
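Expression blending can be pictured as adding weighted offsets from a neutral embedding toward several target expressions at once. The Python sketch below illustrates that idea under stated assumptions: `blend_expressions`, the 3-dimensional toy vectors, and the weights are all hypothetical, and the article does not say that PixelSmile blends expressions in exactly this way.

```python
from typing import Dict, List


def blend_expressions(
    neutral: List[float],
    targets: Dict[str, List[float]],
    weights: Dict[str, float],
) -> List[float]:
    """Return neutral + sum over k of weight_k * (target_k - neutral)."""
    blended = list(neutral)
    for name, weight in weights.items():
        target = targets[name]
        if len(target) != len(neutral):
            raise ValueError(f"embedding for {name!r} has the wrong length")
        for i, (n, t) in enumerate(zip(neutral, target)):
            blended[i] += weight * (t - n)
    return blended


# Hypothetical toy embeddings standing in for text-encoder outputs.
neutral_emb = [0.0, 0.0, 0.0]
expression_embs = {
    "surprise": [1.0, 0.0, 0.5],
    "happy": [0.0, 1.0, 0.5],
}

# Equal parts surprise and happiness, loosely "amazement" in the article's terms.
amazement = blend_expressions(neutral_emb, expression_embs, {"surprise": 0.5, "happy": 0.5})
print(amazement)
```

Setting one weight to 1 and the others to 0 recovers a single-expression edit, so blending and intensity control share the same knob in this toy formulation.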

The First Unified Expression Editing Evaluation Framework:

PixelSmile doesn't just provide a model—it also fills in the long-missing data and evaluation infrastructure for this field.

FFE is the first dataset to provide continuous expression score annotations for fine-grained expression editing. Instead of describing expressions with discrete labels alone, it uses continuous scores to capture finer emotional variations.

The accompanying FFE-Bench is the first unified expression editing evaluation framework, shifting the focus from merely assessing whether the result image "looks like" or "looks good" to measuring whether the expression is edited accurately, whether control is stable, and whether identity is preserved—all within the same standard.
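Identity preservation is commonly checked by comparing face embeddings of the source and edited images with cosine similarity. The sketch below shows that comparison on toy vectors. It is not FFE-Bench's actual protocol, which the article does not detail; the embeddings are invented values, and a real pipeline would obtain them from a face recognition model.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two vectors of equal length."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


# Hypothetical identity embeddings (invented for illustration).
source_id = [1.0, 0.0, 0.0]
faithful_edit_id = [0.9, 0.1, 0.0]  # expression changed, same person
drifted_edit_id = [0.2, 0.9, 0.3]   # expression changed, identity lost

print(f"faithful edit: {cosine_similarity(source_id, faithful_edit_id):.3f}")
print(f"drifted edit:  {cosine_similarity(source_id, drifted_edit_id):.3f}")
```

Reporting a score like this alongside an expression accuracy score makes the trade-off visible: an edit only counts as good when both stay high.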

A More Comprehensive Experience:

The PixelSmile team has publicly released the paper, code, model, benchmark, and demo. To learn more about the method, try it firsthand, or see the full results, use the links below.

- Project Page: https://ammmob.github.io/PixelSmile/

- Paper: https://arxiv.org/abs/2603.25728

- GitHub: https://github.com/Ammmob/PixelSmile

- Model: https://huggingface.co/PixelSmile/PixelSmile

- Benchmark: https://huggingface.co/datasets/PixelSmile/FFE-Bench

- Demo: https://huggingface.co/spaces/PixelSmile/PixelSmile-Demo

Conclusion:

The most appealing aspect of PixelSmile is not just that it makes facial expression editing richer, but that it pushes the field toward controllability and usability. Continuous control over 12 target expressions, reduced confusion between similar emotions, and stable identity preservation, together with capabilities such as anime editing and expression blending, take it beyond merely being "able to change expressions" and toward facial expression editing that is genuinely adjustable.

More importantly, this work simultaneously fills the gap in continuous expression score data and a unified evaluation framework, providing more systematic data and benchmark support for this field for the first time. For readers interested in AIGC, portrait editing, and controllable generation, PixelSmile is a project worth following closely.

References:

[1] PixelSmile: Toward Fine-Grained Facial Expression Editing