After Stepping Down as MiHoYo’s Chairman, Cai Haoyu Brings ‘Living Beings’ to Life in Singapore

04/14 2026

In the rapidly evolving field of AI video generation, Seedance 2.0 is at the forefront, driving the industry forward amid fierce competition.

In an arena where standing out means delivering cinematic visuals in clips of 15 seconds or less, one innovator has taken a different path, building a new ‘weapon’ along another dimension entirely.

This innovator is none other than Cai Haoyu, the former chairman of MiHoYo.

As MiHoYo’s largest shareholder, Cai Haoyu quietly established a new front in Singapore after stepping down as chairman, founding the cutting-edge AGI company Anuttacon.

Now, with the release of LPM 1.0, a model long in development, AI video has moved from offline editing to real-time, lifelike interaction.

More significantly, the model shifts the focus of video generation from visual appeal alone to giving virtual characters a genuine ‘soul’ that holds up over hours of interaction.

Behind every technological breakthrough lies a complex interplay of computational power, data, and commercial barriers.

01

AI Characters Begin to Breathe

With Seedance 2.0 dominating the global scene and OpenAI’s Sora being discontinued, AI video generation is no longer a novelty to the public.

Despite the high realism of AI-generated videos, which are often indistinguishable from real ones, a longstanding challenge in computer graphics and AI vision persists:

Models struggle to achieve expressive quality (realism), real-time inference (instant responsiveness), and long-term stability (no collapse over time) all at once, a problem known as the ‘Performance Trilemma’.

As a result, users of Jimeng, Kling, Sora, and Veo are impressed at first by their short clips, but the limitations become apparent as soon as real-world needs arise, such as long-duration, real-time interaction.

In essence, they excel as photographers but fall short as performers.

Today, AI-generated videos rarely run longer than 30 seconds, mainly because of autoregressive drift.

Because each new frame is generated from the frames before it, tiny errors compound at an exponential rate as generation runs longer, leading to sudden changes in facial features, inconsistent identities, or implausible actions.
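To make the compounding concrete, here is a toy Python model of autoregressive drift. It is an illustration only, not anything taken from LPM: a single number stands in for a frame's identity error, each frame inherits the previous frame's error multiplied by a hypothetical `gain` slightly above 1, and every step adds a small fresh `noise` term. The equally hypothetical `anchor` parameter pulls each frame partway back toward a fixed reference, one generic way a streaming system might keep error bounded.

```python
def rollout_error(steps: int, gain: float, noise: float, anchor: float = 0.0) -> list[float]:
    """Toy per-frame identity error for an autoregressive rollout.

    err[t+1] = gain * (1 - anchor) * err[t] + noise
    gain > 1   : each frame amplifies the error it inherits (hypothetical value)
    anchor = 0 : pure autoregression, so error compounds geometrically
    anchor > 0 : each frame is pulled partway back toward a fixed reference
    """
    err, history = 0.0, []
    for _ in range(steps):
        err = gain * (1.0 - anchor) * err + noise
        history.append(err)
    return history


free = rollout_error(steps=100, gain=1.05, noise=0.001)
anchored = rollout_error(steps=100, gain=1.05, noise=0.001, anchor=0.2)
print(f"free-running after 100 frames: {free[-1]:.3f}")
print(f"anchored after 100 frames:     {anchored[-1]:.4f}")
```

With these made-up numbers the free-running error passes 2.6 after 100 frames, more than 2,600 times the per-frame noise, while the anchored version levels off near 0.001 / (1 - 0.84), about 0.006. Extra compute only shrinks `noise`; it does not change the geometric growth.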

However, LPM 1.0 has achieved a remarkable breakthrough: it enables true ‘infinite-duration’ video generation.

A demo on the official website shows a single continuous 45-minute video.

The leap is technically significant because adding computational power alone cannot fundamentally solve drift.

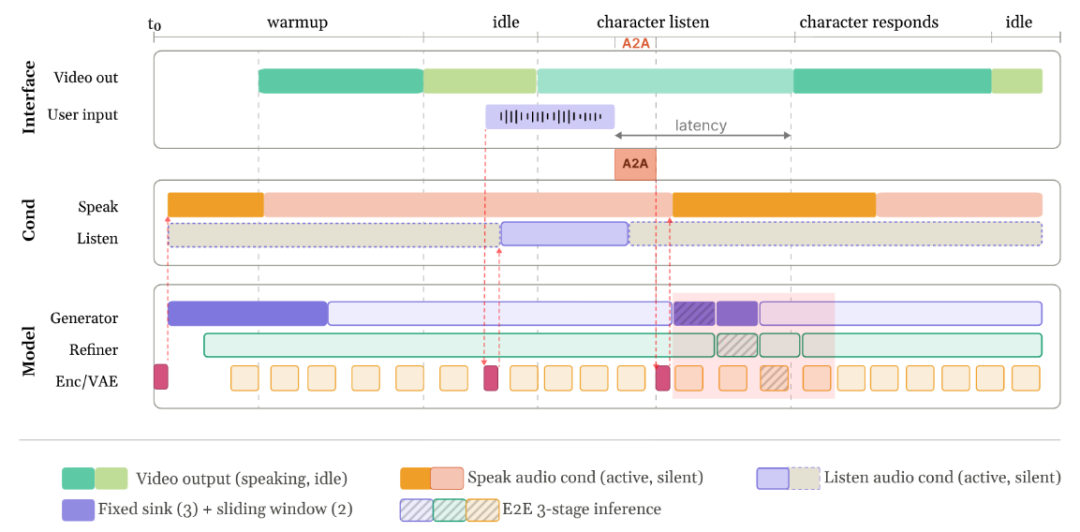

The secret of the LPM model lies in its Online Streaming Generation Architecture (Online LPM).

Using a four-stage training process built on Distribution Matching Distillation (DMD), LPM distills a large 17-billion-parameter diffusion model into a ‘backbone-refiner’ structure.
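For readers curious about the distillation step, the sketch below is a toy, one-dimensional version of the distribution-matching gradient from the published DMD method (Yin et al., CVPR 2024). It is not LPM's four-stage recipe. It assumes a known Gaussian "real" distribution standing in for the large teacher model, a "fake" score fitted to the student's own current samples, and a two-parameter student `x = a*z + b`. The student follows the DMD gradient: the fake score minus the real score, multiplied by ∂x/∂θ. Real DMD evaluates both scores on noised samples across diffusion timesteps and adds a regression term; neither is modeled here.

```python
import random
import statistics


def gaussian_score(x: float, mean: float, var: float) -> float:
    """Score d/dx log N(x; mean, var) of a 1-D Gaussian."""
    return -(x - mean) / var


def distill(real_mean=3.0, real_std=0.5, steps=200, batch=1024, lr=0.05, seed=0):
    """Toy DMD: fit student x = a*z + b so its samples match N(real_mean, real_std^2)."""
    rng = random.Random(seed)
    a, b = 1.0, 0.0                      # student parameters, starting away from the target
    real_var = real_std ** 2
    for _ in range(steps):
        zs = [rng.gauss(0.0, 1.0) for _ in range(batch)]
        xs = [a * z + b for z in zs]
        # "Fake" score: a Gaussian fitted to the student's current samples.
        fake_mean = statistics.fmean(xs)
        fake_var = statistics.pvariance(xs, fake_mean)
        grad_a = grad_b = 0.0
        for z, x in zip(zs, xs):
            # DMD gradient: (s_fake - s_real) * dx/dtheta, with dx/da = z and dx/db = 1.
            diff = gaussian_score(x, fake_mean, fake_var) - gaussian_score(x, real_mean, real_var)
            grad_a += diff * z
            grad_b += diff
        a -= lr * grad_a / batch
        b -= lr * grad_b / batch
    return a, b


a, b = distill()
print(f"student std ~ {abs(a):.3f}, student mean ~ {b:.3f}")
```

The student ends up with a spread near 0.5 and a mean near 3.0, matching the "teacher" distribution without ever being shown teacher samples directly; only the two score functions guide it.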

In this structure, the backbone network stabilizes the coarse trajectory of the video, while the refiner restores high-fidelity expression details.

This design lets the model keep a character's identity consistent over effectively unlimited durations while its memory usage stays constant.
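The constant-memory property can be pictured with a generic streaming pattern: a fixed identity reference that is never evicted, plus a bounded rolling window of recent frames. The Python sketch below is hypothetical throughout. `StreamingCharacter`, `backbone`, `refiner`, the window size, and the scalar "frames" are placeholders, not LPM's architecture or API; the sketch only shows why memory stays flat no matter how long the stream runs.

```python
from collections import deque


class StreamingCharacter:
    """Hypothetical two-stage streaming loop with bounded memory (not LPM's real API)."""

    def __init__(self, reference: float, window: int = 8):
        self.reference = reference            # identity anchor, kept for the whole session
        self.context = deque(maxlen=window)   # oldest frames are evicted automatically

    def backbone(self, audio_level: float) -> float:
        # Coarse stage (placeholder): extend the trajectory from the recent window,
        # falling back to the reference before any frame exists.
        prev = sum(self.context) / len(self.context) if self.context else self.reference
        return 0.7 * prev + 0.3 * audio_level

    def refiner(self, coarse: float) -> float:
        # Detail stage (placeholder): blend back toward the identity reference.
        return 0.9 * coarse + 0.1 * self.reference

    def step(self, audio_level: float) -> float:
        frame = self.refiner(self.backbone(audio_level))
        self.context.append(frame)
        return frame


char = StreamingCharacter(reference=1.0, window=8)
for t in range(100_000):                      # a long session
    char.step(audio_level=(t % 10) / 10)
print(len(char.context))                      # → 8, regardless of session length
```

The design choice that matters is the `deque(maxlen=...)`: state grows with the window size, never with elapsed time, while the reference term keeps every frame tied to the same identity.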

Of course, for humans, true performance involves not just ‘speaking’ but also reacting appropriately at the right moments.

LPM 1.0 achieves full-duplex audio-video dialogue for the first time, processing two audio streams simultaneously.

One stream is the AI’s own speech, used to drive lip-sync; the other is the user’s speech, used to drive real-time reactions.

This lets the AI respond to the user’s tone and pauses with nods, raised eyebrows, and other subtle expressions, just as a human listener would.
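A minimal sketch of the two-stream idea, assuming both streams arrive in synchronized chunks: the character's own audio sets a lip-sync amplitude, and the user's audio drives a listening reaction. LPM learns its reactions from annotated data; the hand-written rule here (nod after the user pauses for three chunks), the thresholds, and the names `duplex_tick` and `ListenerState` are hypothetical stand-ins.

```python
import math
from dataclasses import dataclass


def rms(chunk: list[float]) -> float:
    """Root-mean-square loudness of one audio chunk."""
    return math.sqrt(sum(s * s for s in chunk) / len(chunk)) if chunk else 0.0


@dataclass
class ListenerState:
    user_was_speaking: bool = False
    silent_ticks: int = 0


def duplex_tick(state, own_chunk, user_chunk, speech_threshold=0.05, pause_ticks=3):
    """One synchronized tick over both streams; returns (mouth_open, reaction).

    own_chunk  -> the character's own speech, drives lip-sync amplitude
    user_chunk -> the user's speech, drives listening reactions
    Thresholds and the 'nod after a pause' rule are hypothetical.
    """
    mouth_open = min(1.0, rms(own_chunk) / 0.3)
    reaction = None
    if rms(user_chunk) >= speech_threshold:
        state.user_was_speaking, state.silent_ticks = True, 0
    elif state.user_was_speaking:
        state.silent_ticks += 1
        if state.silent_ticks == pause_ticks:
            reaction = "nod"                   # user paused after speaking: backchannel cue
            state.user_was_speaking = False
    return mouth_open, reaction


state = ListenerState()
talk, silence = [0.2] * 160, [0.0] * 160
user_stream = [talk] * 5 + [silence] * 5       # user speaks, then pauses
reactions = [duplex_tick(state, silence, chunk)[1] for chunk in user_stream]
print(reactions)  # → [None, None, None, None, None, None, None, 'nod', None, None]
```

Both streams are consumed on every tick, which is what makes the loop full-duplex: the character can listen and react even on ticks where it is also speaking.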

Although technical trade-offs leave LPM’s visuals somewhat less realistic and sharp, its capacity for long-duration, real-time interaction is enough to turn virtual characters from mere repeaters into digital lifeforms.

02

‘MiHoYo DNA’ Is Also a Form of Big Data

When discussing video generation, Seedance 2.0 stands as an industry benchmark.

ByteDance’s success with Seedance 2.0 is largely attributed to its access to TikTok’s massive trove of short-video data.

But what can Cai Haoyu, MiHoYo’s largest shareholder with a 41% stake, bring to his new AGI company, Anuttacon?

What advantages can the data accumulated by MiHoYo, a renowned game company, offer to the AI field?

The answer: precision beats breadth, and industrial standards beat raw scale.

This does not mean LPM 1.0 is superior to Seedance 2.0; it is more accurate to say that the two companies have taken different technical routes toward refinement in the multimodal field.

Where ByteDance has an abundance of high-quality but unstructured, general-entertainment data, MiHoYo’s core strength lies in breaking ‘human performance’ down into an industrialized digital pipeline.

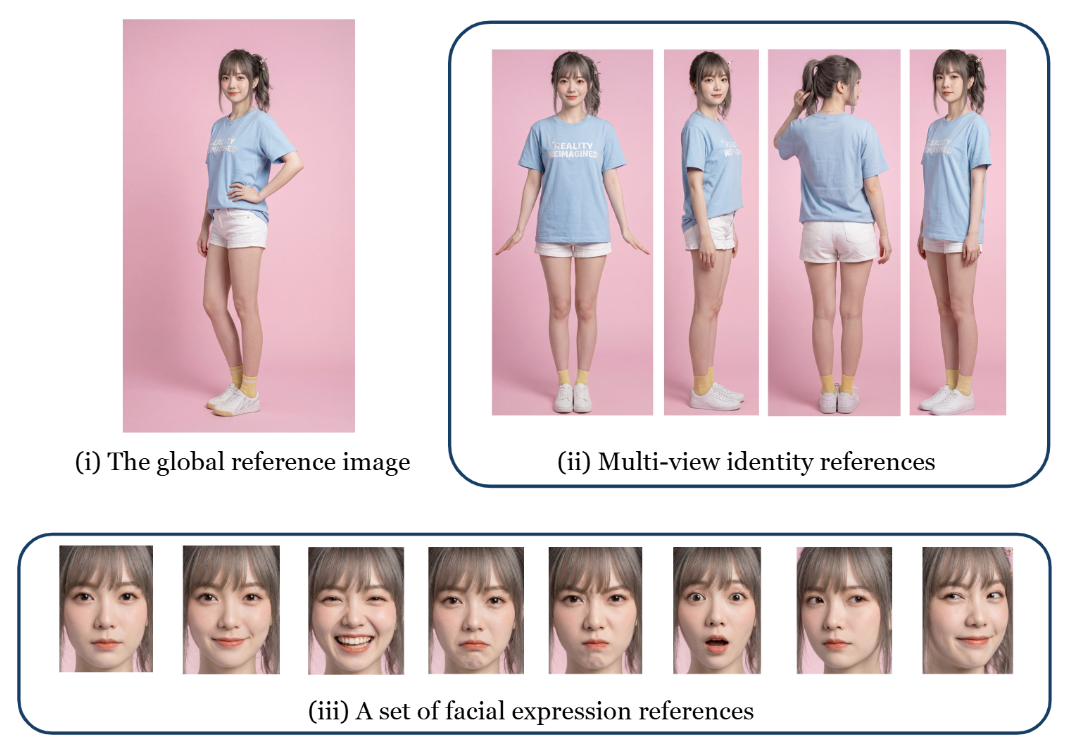

The LPM 1.0 technical report’s detailed account of its ‘Identity-Aware Reference Image Pipeline’ shows this MiHoYo DNA clearly:

The model requires more than a single photo: a global appearance reference, multi-view images, and even examples of eight predefined expressions.
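One way to picture this richer input is as a structured bundle that is validated before generation. The field names, file paths, expression labels, and validation rules in this Python sketch are hypothetical, modeled only on the inputs named in the report as summarized here; they are not Anuttacon’s actual schema.

```python
from dataclasses import dataclass, field

EXPECTED_EXPRESSIONS = 8  # the report describes eight predefined expression examples


@dataclass
class ReferenceBundle:
    """Hypothetical identity-reference input (illustrative schema, not Anuttacon's)."""
    global_appearance: str                                       # e.g. a full-body image path
    multi_view: list[str] = field(default_factory=list)          # front/side/back views, etc.
    expressions: dict[str, str] = field(default_factory=dict)    # label -> image path

    def __post_init__(self):
        if not self.multi_view:
            raise ValueError("at least one multi-view image is required")
        if len(self.expressions) != EXPECTED_EXPRESSIONS:
            raise ValueError(
                f"expected {EXPECTED_EXPRESSIONS} expression examples, got {len(self.expressions)}"
            )


bundle = ReferenceBundle(
    global_appearance="ref/full_body.png",
    multi_view=["ref/front.png", "ref/side.png"],
    expressions={f"expr_{i}": f"ref/expr_{i}.png" for i in range(EXPECTED_EXPRESSIONS)},
)
print(len(bundle.expressions))  # → 8
```

Rejecting incomplete bundles up front mirrors the point of the pipeline: identity is pinned down by structured references before any frame is generated, rather than inferred from a single photo.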

Instead of extracting features from massive amounts of unlabeled video, Anuttacon can already supply highly structured ‘performance logic’ data, such as 78 fine-grained emotion categories and more than 5,000 action descriptors.

This accumulated experience, together with exacting quality-control standards for aesthetics and character development, is hard for general-purpose short-video platforms to replicate, however much data they hold.

Cai Haoyu’s self-chosen LinkedIn title, ‘Soul Wizard (AI Soulcaster)’, is therefore not unfounded: his product logic is precisely to make AI simulate the subconscious reactions of human performance.

By training on more than 3.5 million finely annotated listening behaviors, LPM targets a key pain point in AI character design: most AI virtual characters ‘can speak but cannot listen.’

This, too, is a dividend of MiHoYo’s more than a decade of game development: Anuttacon has a complete evaluation system for human interaction, which lets the model learn human-like breathing, hesitation, and pauses in conversation.

This ‘industrial aesthetic’ follows a completely different technical route from ‘traffic data’ (sheer platform volume), and the resulting models perform very differently:

Where Seedance 2.0 excels at lifelike visuals, LPM 1.0 delivers cinematic-quality character expressiveness, which is also its moat for ‘de-AI-ification’ (characters that no longer feel AI-generated) and for immersion in virtual worlds.

03

The Commercial Necessity of Not Going Open-Source

At the bottom of its official website, Anuttacon states clearly that it does not plan to open-source the model’s weights or source code, nor to commercialize it through APIs or product services.

For a model good enough for industrial-grade production, and even capable of driving real-time NPC interaction, staying fully closed-source is an inevitable, commercially rational choice.

The reason is simple: in the niche of AI-generated virtual characters, LPM is not just an algorithm or a model but a complete visual engine.

In today’s AI landscape, being able to generate interactive digital characters stably, in real time, and over long durations is tantamount to holding the only ticket to the virtual world.

That said, the immediate commercial costs remain an unavoidable challenge:

Generating 480p, let alone 720p, video in real time consumes an astonishing amount of computational power.

Although LPM has been heavily optimized to generate 1 second of video in roughly 0.35 seconds on a single GPU, hardware costs and operational pressure become enormous in large-scale, high-concurrency deployments.
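The 0.35-second figure can be turned into a rough capacity estimate. This Python sketch makes an idealized, hypothetical assumption: a GPU can time-slice between several real-time streams with no overhead, so its spare headroom translates directly into extra streams. In practice memory or latency limits may cap a GPU at one stream, which the `max_streams_per_gpu` parameter lets you model.

```python
import math
from typing import Optional


def gpus_needed(concurrent_streams: int,
                compute_seconds_per_video_second: float = 0.35,
                max_streams_per_gpu: Optional[int] = None) -> int:
    """Rough GPU count for real-time streaming (idealized, no scheduling overhead).

    A GPU that needs 0.35 s to produce 1 s of video has headroom for
    floor(1 / 0.35) = 2 real-time streams if it can time-slice between them.
    """
    if compute_seconds_per_video_second > 1.0:
        raise ValueError("slower than real time: no stream can keep up")
    per_gpu = math.floor(1.0 / compute_seconds_per_video_second)
    if max_streams_per_gpu is not None:
        per_gpu = min(per_gpu, max_streams_per_gpu)   # e.g. memory-bound: 1
    return math.ceil(concurrent_streams / per_gpu)


print(gpus_needed(10_000))                         # → 5000 under the idealized assumption
print(gpus_needed(10_000, max_streams_per_gpu=1))  # → 10000 if each stream needs its own GPU
```

Even in the optimistic case, serving 10,000 simultaneous users ties up thousands of GPUs around the clock, which is the scale problem the paragraph above describes.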

For consumer-facing (C-end) game products, it is questionable whether high-value products can reliably cover such high computing costs.

Anuttacon’s previous game, ‘Whispers from the Star,’ adopted a buy-to-play model on Steam. An experimental game built around real-time AI interaction, it set out to give players an unprecedented emotional experience.

However, player feedback pointed to lapses in the dialogue’s contextual continuity, and the market widely sees the game as still at the stage of validating the AI technology. Its low price of 33.99 yuan won some market acceptance, but it clearly cannot cover the computing costs.

While consumer-side validation has run into obstacles, LPM’s underlying capabilities transfer well to business-facing (B-end) scenarios with stricter stability requirements. In other words, Anuttacon can follow the path already taken by AI agents.

The scenarios mentioned on the official website, such as virtual streamers, AI tutors, and customer service, all strictly require long-term stability. LPM-driven AI characters are clearly better suited than human staff to 24/7 operation, and they also remove the need for expensive motion-capture equipment, making their overall cost highly competitive.

In the long run, if LPM serves as the infrastructure for a UGC platform, it could remove the modeling and animation barriers that have traditionally limited user-generated content.

The core idea of LPM is that a user supplies only a photo and a sentence, and the AI handles the entire performance.

Once the professional barriers to content creation fall further, new forms of interactive media will not be far behind.

04

Conclusion

In summary, LPM 1.0 does not try to compete with Seedance 2.0 on visual quality; instead, it takes a vertical path focused on real-time performance and digital life.

While the industry generally pursues higher-quality pixels, LPM pursues longer-lasting consistency.

Perhaps this also reflects Cai Haoyu’s deep understanding, as a co-founder of MiHoYo, of what ‘experience’ means.

In games, if a character’s established persona ‘collapses’ even once, the sense of immersion is lost for good.

In the AI field, LPM’s goal is to eliminate the uncanny valley effect caused by existing AI’s lack of emotional resonance.

Breathing and micro-expressions generated in real time signal the dawn of an era of live, online interaction for virtual characters.

Even with high computing costs, as long as LPM becomes irreplaceable in specific fields such as high-end interactive storytelling, it can still command pricing power.

From AI games built on real-time conversation to multimodal models that can both speak and listen, Anuttacon has already seized the high ground in this infinite-duration game.

And Cai Haoyu’s commercial ambitions certainly extend far beyond MiHoYo.