Revolutionizing Voice Interaction: Doubao’s Advanced Model Sets a New Standard

04/14 2026

Full-Duplex Mode Takes Center Stage

Written by Chen Dengxin

Edited by Li Ji

Formatted by Annalee

Doubao’s Large Model Receives a Major Update.

On April 9, 2026, Doubao unveiled Seeduplex, its cutting-edge native full-duplex voice large model. In contrast to its predecessor, the half-duplex Doubao end-to-end voice model, Seeduplex is built on a revolutionary "simultaneous listening and speaking" framework, significantly boosting the naturalness and fluidity of interactions. This new model is now fully integrated into the Doubao App.

This marks the first time that advanced full-duplex voice technology has been deployed at commercial scale.

With full-duplex technology, voice interactions on Doubao transcend the robotic question-and-answer format, evolving into dynamic, human-like conversations.

Clearly, the frontrunner in AI applications has just grown even more formidable.

Human-like Presence: The Competitive Edge in Voice Interaction

Voice interaction has emerged as a pivotal battleground in the internet sector.

Initially, humans communicated with digital systems through command lines, which later evolved into graphical interfaces, ushering in the PC era. The advent of touch technology then sparked the mobile internet revolution.

Now, a new interaction paradigm is on the rise.

In the AI age, voice interaction has moved from the periphery to the forefront, becoming a vital medium for enhancing interaction efficiency and transforming communication methods.

Language is the most direct way for humans to convey intentions.

Consequently, AI-driven voice interaction is dismantling barriers between the physical and digital worlds, reshaping interactions by altering user habits.

The challenge lies in the traditional half-duplex mode of voice interaction.

In half-duplex mode, only one action—speaking or listening—can occur at a time. This rigid process allows no room for deviation.

Simply put, AI lacks a human touch during voice interactions.

As a result, traditional voice interaction has yet to achieve a breakthrough, even though speech recognition and natural language understanding have advanced and recognition accuracy now approaches human levels.

An internet analyst told Zinc Scale: "In half-duplex mode, the AI can't be interrupted while it is speaking, struggles to filter noise in complex settings, and fails to recognize normal user pauses. This leads to awkward situations like talking over users or going off-topic, severely impacting the user experience. Essentially, the AI is polite but lacks empathy."

Seeduplex’s full-duplex mode effectively resolves these issues.

In full-duplex mode, the user and the AI can speak and listen at the same time. Users can interject or interrupt at any moment, and the AI keeps listening and responds promptly.
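The contrast between the two modes can be illustrated with a toy simulation. This is only a sketch under stated assumptions, not Doubao's implementation: the `simulate` function, the `Result` type, and the idea of a fixed-size frame timeline are all hypothetical names and simplifications introduced here.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class Result:
    frames_spoken: int      # frames the AI spoke before stopping
    user_frames_heard: int  # user speech frames the AI actually received


def simulate(ai_reply_frames: int, user_speech: Sequence[bool], full_duplex: bool) -> Result:
    """Toy turn-taking model (hypothetical, for illustration only).

    user_speech[t] is True when the user is talking during frame t.
    The AI starts speaking at frame 0 and wants to speak ai_reply_frames frames.
    """
    spoken = 0
    heard = 0
    ai_speaking = ai_reply_frames > 0
    for user_talking in user_speech:
        if ai_speaking and spoken >= ai_reply_frames:
            ai_speaking = False  # the reply finished on its own
        # Half-duplex: input is ignored while the AI is speaking.
        listening = full_duplex or not ai_speaking
        if listening and user_talking:
            heard += 1
            if ai_speaking:
                ai_speaking = False  # barge-in: the AI yields the floor
        if ai_speaking:
            spoken += 1
    return Result(spoken, heard)


# The user starts talking at frame 4 while a 10-frame reply is playing.
timeline = [False] * 4 + [True] * 4 + [False] * 4
print(simulate(10, timeline, full_duplex=False))
print(simulate(10, timeline, full_duplex=True))
```

In this toy run, the half-duplex AI plays all 10 frames and hears none of the user's 4 frames, while the full-duplex AI stops after 4 frames and hears all of them.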

During this process, Seeduplex performs comprehensive acoustic environment perception, accurately distinguishing genuine user-AI interactions from background noise, thereby reducing incorrect responses and interruptions by half. Users can now engage in high-quality conversations without needing to raise their voices or seek quiet environments.

Beyond precise noise suppression, Seeduplex also features dynamic pause detection.

By analyzing speech and semantic patterns, it accurately gauges user intentions. When users hesitate, the model listens patiently; once they finish speaking, it responds swiftly, cutting the likelihood of talking over users by 40%.

A professional commented: "By considering speech rate, tone, and semantics, dynamic pause detection enables empathetic listening, discerning whether pauses indicate contemplation or speech completion. This is Seeduplex's greatest strength."
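To make the idea of dynamic pause detection concrete, here is a deliberately simple heuristic sketch. It is not how Seeduplex works (the article describes a model that learns these cues from data); the word list, the two thresholds, and the function names below are hypothetical values chosen purely for illustration.

```python
# Words that often signal the speaker is still mid-thought (hypothetical list).
HESITATION_ENDINGS = {"um", "uh", "and", "but", "so", "because", "the", "to"}

# Hypothetical thresholds: wait longer when the utterance sounds unfinished.
PATIENT_MS = 1500
PROMPT_MS = 500


def silence_threshold_ms(partial_transcript: str) -> int:
    """Return how much silence to tolerate before treating the turn as over."""
    words = partial_transcript.lower().rstrip(".?!").split()
    if not words or words[-1].strip(",") in HESITATION_ENDINGS:
        return PATIENT_MS  # likely a thinking pause: keep listening
    return PROMPT_MS       # utterance looks complete: respond quickly


def turn_is_over(partial_transcript: str, silence_ms: int) -> bool:
    """Decide end-of-turn from what was said so far plus the silence length."""
    return silence_ms >= silence_threshold_ms(partial_transcript)


print(turn_is_over("Book a table for two.", 600))
print(turn_is_over("Book a table for, um", 600))
```

With these placeholder values, 600 ms of silence ends the turn after a complete-sounding request but not after a trailing "um". A fixed timeout cannot make that distinction, which is the gap the article says dynamic pause detection addresses.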

In essence, Seeduplex transforms into an interactive entity with warmth, depth, personality, and soul, pursuing competitive differentiation through a human-like presence.

After all, a human-like presence is the cornerstone of competitive advantage in voice interaction.

Why Doubao Led the Breakthrough

Full-duplex mode represents a significant leap, naturally becoming a focal point for the industry. However, Doubao’s large model was the first to "take the plunge," and this was no accident.

On one hand, voice interaction has always been Doubao’s foundation.

Since Doubao’s inception, voice interaction has been a cornerstone of its user experience. Its real-time interactivity appeals to young users and has fostered a highly engaged social environment driven by positive feedback.

As a result, Doubao has emerged as the leader in the AI application space.

QuestMobile data reveals that as of September 2025, monthly active users of AI applications on mobile and PC platforms reached 729 million and 200 million, respectively. Among these, Doubao led with 172 million monthly active users.

Zhao Yan, Chairman of Bloomage Biotechnology, remarked: "AI like Doubao is integral to daily life and work. I delegate repetitive, time-consuming tasks to Doubao. AI is reshaping business—what might have taken a team of dozens two years can now be accomplished in just five hours."

Thus, Doubao’s voice interaction ecosystem continuously generates vast amounts of real-world data, giving Seeduplex a rich training ground and a hard-to-match advantage in training data.

On the other hand, Doubao’s large model boasts a solid foundation.

Doubao’s large model processed an average of 120 billion tokens per day in May 2024. By March 2026 that figure had surged past 120 trillion, a thousandfold increase.
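The thousandfold figure can be checked with a few lines of arithmetic. The two daily token counts come from the article. The implied monthly growth factor is an added back-of-the-envelope estimate that assumes smooth compound growth, which the article does not claim.

```python
tokens_per_day_may_2024 = 120 * 10**9    # 120 billion, from the article
tokens_per_day_mar_2026 = 120 * 10**12   # "over 120 trillion", used as a lower bound

# Ratio between the two daily volumes.
growth = tokens_per_day_mar_2026 // tokens_per_day_may_2024

# Whole months from May 2024 to March 2026.
months = (2026 - 2024) * 12 + (3 - 5)

# Assumption: constant month-over-month compounding across the whole period.
monthly_factor = growth ** (1 / months)

print(growth, months, round(monthly_factor, 2))
```

Under that smoothing assumption, a thousandfold rise over 22 months corresponds to daily token volume growing by roughly 37% each month.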

Note that token usage is a key indicator of AI development progress.

This means Doubao’s large model has undergone continuous technological refinement, evolving from functional to highly effective, paving the way for the transition from the half-duplex Doubao end-to-end voice model to the native full-duplex voice large model Seeduplex.

Tan Dai, President of Volcano Engine, stated: "Only through large-scale usage can we refine a good model. Only by deploying it in real-world scenarios, with more users and higher engagement, can the model continuously improve."

Specifically, to implement full-duplex successfully, the Seeduplex team invested heavily in model framework design, algorithm optimization, engineering performance, and stability.

For instance, in model framework design, Seeduplex abandons the classic cascaded "ASR (speech-to-text) → LLM (reasoning and response generation) → TTS (speech synthesis)" architecture in favor of a model better suited to real-time voice dialogue. This lets it learn integrated speech-semantic expression and rhythm control directly from data, significantly enhancing the naturalness of interaction.
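One commonly cited limitation of the cascaded design is that only text passes between stages, so cues such as tone and pace are lost before the language model sees the input. The sketch below illustrates that bottleneck with stub stages. Everything in it (the `Utterance` type, the `prosody` field, and the stub `asr`, `llm`, and `tts` functions) is hypothetical and stands in for real components. It does not describe Seeduplex's internals.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Utterance:
    words: str
    prosody: str  # stand-in for tone and pace, e.g. "hesitant" or "confident"


def asr(utterance: Utterance) -> str:
    """Stub speech-to-text: keeps the words, drops the prosody."""
    return utterance.words


def llm(text: str) -> str:
    """Stub language model: sees text only."""
    return f"You said: {text}"


def tts(text: str) -> Utterance:
    """Stub speech synthesis: has no access to how the user sounded."""
    return Utterance(words=text, prosody="neutral")


def cascaded_reply(utterance: Utterance) -> Utterance:
    """Classic pipeline: each stage consumes only the previous stage's output."""
    return tts(llm(asr(utterance)))


hesitant = Utterance("turn left", prosody="hesitant")
confident = Utterance("turn left", prosody="confident")

# Both inputs collapse to the same text, so the replies are identical.
print(cascaded_reply(hesitant) == cascaded_reply(confident))
```

An end-to-end speech model avoids this text-only handoff. That is consistent with the article's claim that rhythm and expression can be learned directly from data.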

Another example: a human-like presence is closely tied to conversational intelligence, ultra-low latency, conversational rhythm control, strong noise immunity, and directed understanding. Thus, large-scale pre-training relying on vast speech data and a post-training system with multi-capability, multi-task learning is essential for achieving synergistic evolution of multidimensional capabilities.

Through these efforts, Seeduplex overcame core technical hurdles such as data construction for full-duplex voice and the joint optimization of ultra-low latency and model effectiveness, opening new frontiers in voice interaction.

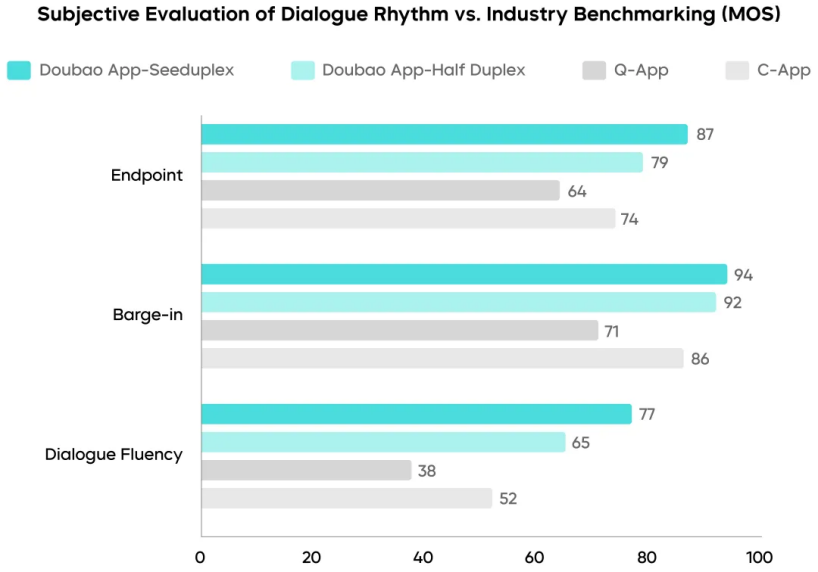

Test data indicates that, compared with the previous-generation half-duplex Doubao end-to-end voice model, Seeduplex improved MOS (mean opinion score) ratings by 8% for pause detection and by 12% for conversational fluency.

Smart Cockpits Embrace Voice Interaction

As voice interaction transitions from half-duplex to full-duplex, it can further empower industries like education, live streaming, marketing, and customer service, unlocking greater productivity.

These sectors share a common need: to avoid mechanical-sounding voice interactions, which improves user immersion and participation and ultimately boosts trust and satisfaction.

Clearly, Seeduplex’s emergence is timely.

Notably, as cars fully adopt smart cockpits, they are shedding their label as mere transportation tools and evolving into true "third spaces," making them ideal for voice interaction implementation.

In fact, everyday operations such as playing music, adjusting windows, setting the temperature, and navigating can now be controlled by voice, with no need for touchscreens, buttons, or knobs. Voice is gradually replacing traditional human-machine interfaces.

Clearly, voice large models have become pivotal in smart cockpit competition.

Zosi Auto Research data shows that the penetration rate of in-vehicle large models was 10.8% in January 2025, rising to 38.6% by December 2025, with a clear upward trajectory.

Among these, Doubao’s large model led the way.

Public data indicates that Doubao’s large model is used by over 20 automakers, including Seres, Geely Automobile, Great Wall Motors, Jetour, and Zhiji Automobile, ranking first in industry adoption for new models launched in 2025.

Take the Buick Zhijing E7 as an example: through full-link collaboration with Doubao’s large model, it achieved 98% speech recognition accuracy and over 95% complex command understanding in noisy highway and multi-zone environments.

Yang Liwei, General Manager of Volcano Engine Automotive, said: "Our collaboration isn't about 'putting a large model in a car' but 'customizing a large model for the car.'"

Now, with Seeduplex’s support, Doubao can better adapt to smart cockpit scenarios, enabling low-latency conversations and overcoming long-standing problems such as inaccurate recognition, poor audio pickup, and slow responses. It can also gauge user emotions through tone, speech rate, and semantics, respond proactively, and act as a driving companion that offers emotional value.

In essence, Doubao has evolved from an assistant to a partner.

Meanwhile, empowered by Seeduplex, smart cockpits can "think like humans, communicate like humans, and grow like humans," offering vast potential and commercial value.

In conclusion, full-duplex voice technology has moved beyond the lab, comprehensively surpassing today’s mainstream half-duplex voice technology. In the future, it will not only redefine AI application user experiences but may also spawn entirely new voice interaction business models.

Thus, Doubao has gained even greater momentum.