Token Craze: They're Making a Killing

04/20 2026

Recently, a powerhouse AI company has emerged in the Hong Kong stock market: Xunce Technology.

Less than four months after its listing, its share price has risen sixfold; with revenue of just 1 billion yuan, its market value has passed 100 billion yuan.

Its core business: selling Tokens. The frantic consumption of Tokens by AI has turned Token sales into a rapidly expanding industry.

In March this year, China's average daily Token consumption surpassed 140 trillion, a 1,000-fold increase from early 2024; global annual Token consumption is projected to grow 300 million-fold in five years.

Meanwhile, AI "fuel suppliers," such as large model companies, are experiencing their best times ever.

For instance, Moonshot AI (Yuezhi Anmian) surpassed its full-year 2025 revenue in just 20 days; MiniMax and Zhipu both saw their market values exceed 300 billion yuan, with cumulative share-price gains of around fivefold.

The era of big Token business has arrived, but the real game-changer isn't the models—it's the "agents."

Agents have propelled AI from "taking the bus" to "driving a private car"—where chat models once responded passively, agents now execute proactively, causing Token consumption to explode exponentially rather than grow linearly.

This is why, in recent months, companies "selling Tokens" have suddenly become the focus of capital markets. Revenue based on Token usage has surged severalfold, even tenfold.

However, temporary optimism in capital markets doesn't equate to a perfect business.

Several entrepreneurs told Qianbidao that while Tokens are as crucial as electricity, bandwidth, or oil, their non-monopolistic nature makes it difficult to sustain high profits in a competitive market.

Some are using Tokens to produce content, consuming hundreds of millions of Tokens per video and forming a closed loop of "content—traffic—monetization—reinvestment."

Others use Tokens to power enterprise services, keeping Token costs at 10%-20% of total costs and charging based on results.

Some see even deeper changes—when users start purchasing Tokens and bearing computing costs themselves, the entire AI business model will be rewritten.

This means the Token craze is not the endpoint but the starting point.

Qianbidao spoke with several entrepreneurs to explore the ways to profit from Tokens.

- 01 - The Most Profitable Are Not Those Selling Tokens

Weng Shaobin, Co-founder and President of Lingxi Technology

Lingxi Technology is a leading large model application company that has completed four rounds of financing and is now preparing for an IPO.

The Token economy is booming this year.

Around the Spring Festival, the agent wave shattered the ceiling for large model applications. On some platforms, Token usage surged tenfold or even several dozenfold.

Many interpret this as a "sudden explosion," but from an industry perspective, it was inevitable—signifying that the application ceiling for large models has been further raised.

Meanwhile, companies that price by Token usage have indeed seen revenue skyrocket. But there is a truth that is easy to miss, and it is the most overlooked aspect of the current Token craze: a tenfold increase in revenue can also mean a tenfold increase in losses.

At present, this "cost-plus" model of selling Tokens may struggle even to achieve a positive gross margin.
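To make "revenue up tenfold, losses up tenfold" concrete, here is a minimal sketch assuming a flat price per million Tokens that, under price competition, ends up below the per-Token serving cost. The function name, prices and volumes are hypothetical and are not figures from the interview.

```python
from typing import Tuple


def token_sales_pnl(tokens_sold: float, price_per_million: float,
                    cost_per_million: float) -> Tuple[float, float]:
    """Return (revenue, gross_profit) for reselling Tokens at a flat price."""
    millions = tokens_sold / 1_000_000
    revenue = millions * price_per_million
    gross_profit = revenue - millions * cost_per_million
    return revenue, gross_profit


# Hypothetical: a price war pushes the price to 2.0 per million Tokens,
# while the serving cost (compute, data centers) is 2.4 per million.
for volume in (1e12, 1e13):  # a tenfold jump in Tokens sold
    revenue, profit = token_sales_pnl(volume, price_per_million=2.0,
                                      cost_per_million=2.4)
    print(f"{volume:.0e} Tokens: revenue {revenue:,.0f}, gross profit {profit:,.0f}")
```

Because the profit per Token is fixed and negative in this setup, every extra unit of volume adds to the loss; volume only turns into profit once the price sits above unit cost.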

Is selling Tokens a good business? Not necessarily.

I'll draw an analogy: this is like the transition from 2G to 3G, or 3G to 4G, when data packages suddenly jumped from a few hundred megabytes to several gigabytes. Selling Tokens directly is still essentially an infrastructure business.

It works much like telecom operators selling data packages: they charge a fixed price for 10 GB regardless of which applications run on top.

Moreover, we're still in an early stage where companies compete fiercely for market share, even at the expense of profit margins. Clearly, this isn't a great business.

Some may object here: can't Tokens from a stronger model command a premium? I think the room for that is limited. Ultimately, value must come from products or applications.

Currently, the gap between the world's most advanced closed-source and open-source models is only 3-6 months—a consensus in the industry. Additionally, in China, affordability doesn't necessarily mean inferior quality.

Our approach is to use fine-tuned open-source models in production environments, while closed-source models are primarily used for early-stage R&D and validation.

In vertical industries, post-trained open-source models significantly outperform general-purpose models from OpenAI, Anthropic, or Google (Gemini). Thus, expensive Tokens may not sustain high premiums.

Ultimately, it depends on ROI—whether the scenario justifies enterprises investing resources in post-training and reinforcement learning.

If selling Tokens isn't the most profitable, who is?

We can look to history. During the mobile internet era, telecom operators sold bandwidth, but who really made big money? Meituan, Didi, and Douyin.

Bandwidth accounted for less than 10% of their costs; they created value through services. The Token economy is similar. Sellers of Tokens are like utilities, while users of Tokens are the Meituans and Douyins.

Real value creation comes from the application layer. For a healthy Token economy, Token costs should account for less than 20%, ideally below 10%, of total costs.

This is our mindset—we're among those with "service premiums" in this wave.

Our proven business model involves providing technical services to large B-end clients. Our sales agents help insurance companies sell policies and automakers sell cars. We charge based on transaction results (RaaS), not Token usage.

Our pricing is based on value creation, not Token costs—healthier than subscriptions or cost-plus Token pricing.
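The idea of results-based pricing, together with the rule of thumb that Tokens should be under 20% (ideally under 10%) of total costs, can be sketched as follows. Everything here is an assumption for illustration: the `Deal` fields, the commission rate, the Token price and the other-cost figure are hypothetical, and this is not Lingxi's actual pricing.

```python
from dataclasses import dataclass

# Every number here is hypothetical and chosen only for illustration.
TOKEN_PRICE_PER_MILLION = 2.0  # what the application pays its model provider
COMMISSION_RATE = 0.05         # RaaS fee: a share of each closed transaction
HEALTHY_SHARE, CEILING_SHARE = 0.10, 0.20  # thresholds cited in the interview


@dataclass
class Deal:
    transaction_value: float  # e.g. premium of an insurance policy sold
    tokens_used: int          # Tokens the sales agent consumed on this deal
    other_costs: float        # integration, human review, operations, etc.


def token_cost(deal: Deal) -> float:
    return deal.tokens_used / 1_000_000 * TOKEN_PRICE_PER_MILLION


def raas_fee(deal: Deal) -> float:
    # The fee tracks the value of the result, not the number of Tokens used.
    return COMMISSION_RATE * deal.transaction_value


def token_share_of_costs(deal: Deal) -> float:
    return token_cost(deal) / (token_cost(deal) + deal.other_costs)


def assess(share: float) -> str:
    if share < HEALTHY_SHARE:
        return "healthy"
    if share < CEILING_SHARE:
        return "acceptable"
    return "Token-heavy"


deal = Deal(transaction_value=10_000.0, tokens_used=2_000_000, other_costs=46.0)
total_cost = token_cost(deal) + deal.other_costs
fee = raas_fee(deal)
share = token_share_of_costs(deal)
print(f"fee {fee:.2f}, cost {total_cost:.2f}, gross margin {(fee - total_cost) / fee:.0%}")
print(f"Token share of costs {share:.0%} -> {assess(share)}")
```

Note that doubling `tokens_used` in this sketch leaves the fee unchanged; only the cost side moves, which is the decoupling that results-based pricing is meant to provide.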

Of course, besides us, other scenarios also hold significant potential:

1. **Programming**: Anthropic has excelled here, creating a closed-loop digital world with broad applicability—a universal opportunity.

2. **Marketing**: Close to revenue generation, many companies are attempting breakthroughs here.

3. **Knowledge Production**: Text, image, and video creation.

As models like ByteDance's Seedance are commercialized, many are exploring monetization, but few have fully cracked the economics yet.

From our observations, large model capabilities improve noticeably every six months.

From chatbots to agents; from lacking reasoning abilities to performing complex reasoning; from frequent hallucinations to gradual suppression—progress continues, but the industry is still in its early stages.

To summarize my views:

1. Tokens are infrastructure; real value creation lies in the application layer.

2. A healthy Token economy requires Token costs to account for less than 10%-20% of total costs.

3. Token sellers are like water vendors; those creating value with Tokens will be the real moneymakers in the future.

From this perspective, the Token craze is just beginning and will unfold layer by layer in the coming years.

- 02 - The Most Profitable Token Sellers

Yang Jinsong, Founder of FutureStyle Intelligence

FutureStyle Intelligence provides enterprise-grade AI agent services and has completed three rounds of financing.

The Token topic is indeed hot lately, but focusing only on the surface can lead to misjudgments—let me explain the context behind the explosion.

This Token boom has a clear inflection point: after the emergence of "Longxia" (Lobster) and similar agent products, Token usage suddenly surged.

On one hand, the agent design underlying "Longxia"-type products multiplied Token calls dozens of times over.

On the other hand, providers such as Anthropic initially allowed nearly unlimited model usage in some coding tools, restricting only request frequency.

Later, these channels were tightened or closed, forcing users to pay for millions of Tokens out of pocket. Running Longxia, for example, could easily cost tens of dollars a day.

Once free or low-cost supply ended, pent-up demand surged.

Several other factors fueled the domestic market explosion:

- Overseas models were expensive, while domestic models caught up in capabilities, leading many to switch from overseas to domestic Token consumption.

- The popularity of "Longxia" showed domestic large model firms a scenario for massive Token consumption, prompting them to launch their own Longxia-like products and coding plans, emphasizing volume.

This is the core context behind the sudden domestic Token "boom."

Many media outlets cite platform data showing surging Token usage.

However, much of this data comes from "intermediary platforms" like OpenRouter, which account for a tiny fraction (possibly <1%) of global Token consumption.

Using such data to judge the entire industry risks overestimation or misjudgment. In reality, daily Token calls in the U.S. didn't grow as dramatically during this period.

Now, everyone says MiniMax, Zhipu, and Yuezhi Anmian are seeing rapid revenue growth from Token sales. That's because their previous sales were minimal. Most coding plans launched in late 2025 or early 2026, riding the Longxia wave to boost consumption.

But the industry is more complex:

- I suspect these model firms are seeing "revenue up, but profits not necessarily up" due to:

1. Insufficient computing capacity, requiring emergency scaling;

2. Fierce competition for data centers and computing power;

3. Price wars and subsidies to capture market share.

This stage resembles trading profits for scale and losses for growth.

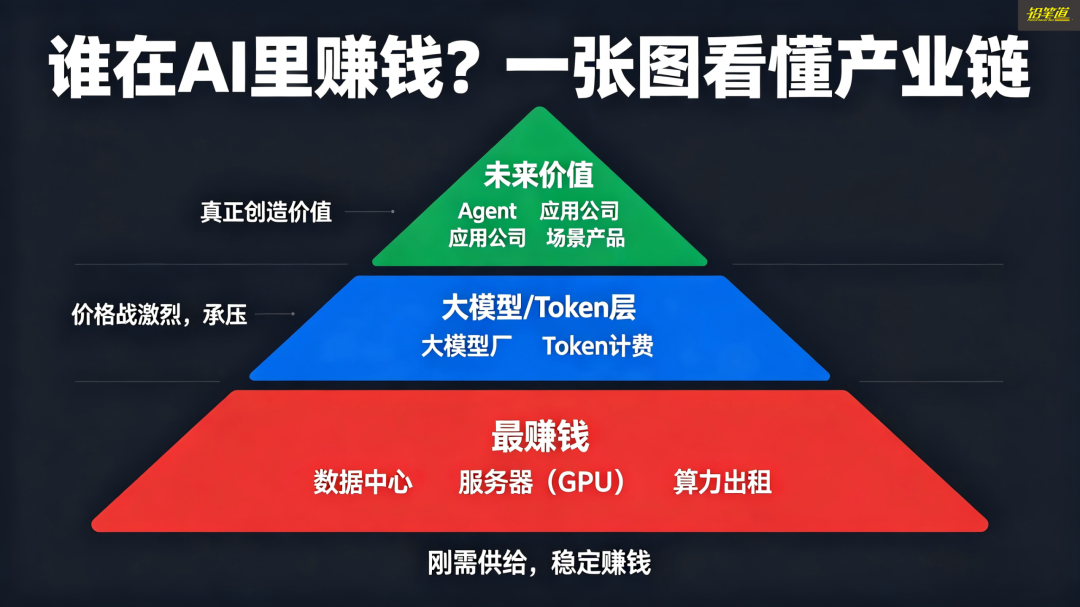

If you look down the supply chain, a clear structure emerges: the current profits lie not with large model firms but with those leasing computing power, building data centers, and selling servers.

They represent "rigid demand" in this cycle, while model firms are under pressure in the middle.

Currently, there are three Token business models:

1. **Computing Power Wholesalers**: They lease computing power and sell inference Tokens in bulk to model firms. These are stable earners.

2. **Token Aggregation Platforms**: They source Tokens at low prices and sell them at a markup to developers—essentially "distributors" with profit margins.

3. **Model Firms**: They face escalating computing costs, price wars, and user acquisition pressures, making this the toughest layer to profit from.

Don't fixate on current Token sales hype. The best path to Token monetization is "encapsulation into applications." In the future, as open agent frameworks mature, numerous vertical applications will emerge, hiding Tokens within products. Users will care about task completion, not Tokens.

Today, much Token consumption is "exploratory"—e.g., using agents to run complex tasks through trial and error, wasting many Tokens.

But once a task is optimized, it solidifies into workflows or skills, reducing Token usage while improving stability.

This is more pronounced in enterprise settings:

- Initially, all tasks run through agents, spiking Token consumption. Gradually, firms realize not all tasks suit this approach.

- Tasks split into two types:

1. **Deterministic tasks** (e.g., tax filing, customs declarations, audit sorting): Clear inputs/outputs and fixed processes. These become "workflows + fixed models," which cuts agent Token usage and costs while improving stability.

2. **Open-ended tasks** (e.g., research, creative content, non-standard decisions): These remain agent-driven and consume large numbers of Tokens.

The biggest application opportunity built on Tokens is content production, especially video.

More and more content creators use agents for the end-to-end process: topic selection, research, generation, distribution, and review. This consumes vast numbers of Tokens but enables scalable, repeatable production, forming a closed commercial loop.
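The split between deterministic and open-ended tasks can be pictured as a simple router. This is a hypothetical illustration: `Task`, `WORKFLOWS`, `run_agent` and `route` are invented names, and both the workflows and the agent are stubs rather than real model calls.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Task:
    kind: str            # e.g. "tax_filing", "market_research"
    payload: str
    fixed_process: bool  # clear inputs/outputs and a fixed sequence of steps


# Hypothetical deterministic tasks that have been solidified into workflows.
# In practice each would be plain code plus, at most, a call to a fixed model.
WORKFLOWS: Dict[str, Callable[[str], str]] = {
    "tax_filing": lambda data: f"tax form generated from {data}",
    "customs_declaration": lambda data: f"declaration generated from {data}",
}


def run_agent(task: Task) -> str:
    # Stub for an open-ended agent loop (plan, call tools, reflect),
    # which is where most Token consumption would occur.
    return f"agent explored '{task.kind}' for {task.payload}"


def route(task: Task) -> str:
    """Send deterministic tasks to a workflow and everything else to an agent."""
    workflow = WORKFLOWS.get(task.kind)
    if task.fixed_process and workflow is not None:
        return workflow(task.payload)
    return run_agent(task)


print(route(Task("tax_filing", "Q1 ledger", fixed_process=True)))
print(route(Task("market_research", "EV batteries", fixed_process=False)))
```

A task with a fixed process but no workflow yet still falls back to the agent, which matches the idea that tasks are solidified only after they have been explored.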

- 03 - Tokens Are Worthless; IP Is Priceless

Sima Huapeng, Founder of Guiji Intelligence

Guiji Intelligence is preparing for a Hong Kong listing and provides digital human and digital IP creation services for enterprises.

Selling Tokens suddenly seems profitable due to three key factors:

1. **Agent Explosion**: Past AI interactions (chatting or simple reasoning) had low Token consumption. But agents, with long contexts and complex task chains, drive geometric Token growth—the core driver.

2. **Commercialization Pathways**: Many companies have found ways to monetize Tokens.

3. **Domestic Model Catch-Up**: Chinese models now reach ~95% of global leaders, enabling large-scale Token usage.

What truly drives the explosion in Token demand is the second point—using Tokens to build a business.

For example, short dramas represent a classic scenario. We are also building a content matrix ourselves. IPs like 'Da Sima' generate hundreds of millions of daily views, with corresponding Token consumption ranging from tens of millions to hundreds of millions, soon potentially reaching tens of billions. The most crucial aspect here is that it has formed a closed business loop: content—traffic—monetization—reinvestment in Tokens.

In the past, we sold tools to others. Now, we use Tokens ourselves to directly produce results.

Internally, we have an automated video production system where topic selection, research, content production, and operational reviews are primarily handled by agents. By layering in entrepreneurial IPs and educational content, we've created a replicable content production and commercialization system.

Once replicability is achieved, Token consumption will rapidly scale. But I've always emphasized: Tokens themselves may not be the most valuable element.

Many now discuss selling Tokens, but I don't see this as a sustainable long-term business.

From a commercial perspective, Tokens are a depreciating asset.

On one hand, open-source advancements from companies like Google and DeepSeek are releasing capabilities outward. On the other hand, model capabilities are converging, with differences narrowing. When supply increases, prices inevitably decline—that's economic certainty.

Therefore, I prefer to view Tokens as a 'foundational resource,' similar to bandwidth or electricity, rather than a product with long-term competitive moats.

However, when you 'package' Tokens into deliverable outcomes—such as helping enterprises create content, manage accounts, or drive growth—competition diminishes significantly. That's precisely what we're doing now.

Thus, the truly valuable asset in the future won't be Tokens but IPs.

We invest heavily in Tokens to build IPs and maintain high-quality content across all our outputs. AI serves as an 'efficiency multiplier,' not a 'cost-cutting tool.' Imagine running a beef noodle shop: you don't reduce meat portions just because efficiency improves. Instead, you sell more bowls while maintaining quality.

From an industry perspective, the future Token supply market will clearly bifurcate:

One segment will feature low-cost, mass-scale Tokens for routine tasks like email writing or report generation. This market is vast but hyper-competitive.

The other segment will comprise high-quality, high-value Tokens for premium content and complex creative work—this is where pricing power exists. We've chosen the latter path.

The current industry pain point is that Token quality remains insufficient: adequate for ordinary content but far from creating truly top-tier works like films or literary masterpieces.

A simple analogy helps clarify this industry:

Budget cars meet basic needs—high volume, low cost, widespread adoption. These represent low-end Tokens. Racing cars pursue ultimate performance at high costs but represent technological ceilings—these are high-end Tokens.

The winners will be companies that leverage 'racing-grade capabilities' to create superior value. These firms have the potential to advance to higher levels, even approaching AGI.

- 04 - Two Major Misconceptions in the Current Token Boom

Li Di, Founder of Tomorrow's Journey

Tomorrow's Journey specializes in multi-agent systems and has secured two rounds of angel financing.

Tokens are hot right now.

Essentially, it's not that Token consumption suddenly increased—it's that people are seeing Token usage for the first time.

Previously, when you used AI products in the cloud like ChatGPT or various models, Tokens were always being consumed, but you couldn't perceive it. Now, with agent forms like OpenClaw, Token consumption becomes directly visible, creating the perception of 'massive usage.'

The second change is that Token optimization, once handled mainly by vendors, now increasingly falls to users. As users tune agents and workflows themselves, this naturally produces a large amount of inefficient consumption.

The third, more critical shift: AI has evolved from 'passive response' to 'proactive execution.'

Traditional AI was reactive—it worked only when questioned. Modern agents operate continuously in the background even when unmonitored. This transforms Token consumption from 'per-use billing' to 'continuous drainage.'

Consider this analogy: Traditional AI resembled public transportation—fixed routes, scheduled operations, with users as passive passengers.

The emergence of agents is like private cars appearing in the AI world. Everyone can now customize routes, tasks, and Token usage.

This leads to two outcomes: The system becomes more 'congested' with higher Token consumption, but the overall economic scale expands significantly.

The real world works similarly—private cars consume more resources than public transport but enable greater freedom and economic activity.

Selling Tokens represents big business, but it doesn't participate in value distribution—it's the foundational layer of the industrial chain.

Like gasoline, Tokens have no inherent added value. The difference lies in application. Whether a gas station fuels luxury limousines or clunkers, the gas price remains identical. The profit comes from what vehicle owners do with that fuel.

This explains why AI differentiation began early—some build infrastructure, others develop applications, and some integrate systems, each occupying different positions.

Could massive future Token consumption enable profits through scale, like oil companies?

Possibly, but the premises differ. Oil, electricity, and water possess monopoly or quasi-monopoly characteristics. Current Token supply operates in perfect competition.

Market players like MiniMax, Zhipu, and Moonshot AI compete on price, capabilities, and APIs. In such an environment, stable profit margins and moats are difficult to establish.

I observe two clear misconceptions in the current Token boom.

First, many assume that high-quality Tokens should go to complex tasks and low-quality ones to simple tasks.

Not quite. The same task may require entirely different Tokens at various stages.

Initial exploration demands powerful models (expensive Tokens) for trial-and-error. Once processes stabilize, ordinary models suffice.

Token quality depends on 'maturity' rather than task complexity. This explains opportunities for edge devices and small models.
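One way to encode "maturity rather than complexity" is to pick a model tier from a process's track record. The tier names, the 20-run minimum and the 95% success threshold are assumptions for illustration only, not values from the interview.

```python
from dataclasses import dataclass


@dataclass
class ProcessStats:
    runs: int       # how many times this process has been executed
    successes: int  # how many of those runs produced an accepted result


def pick_model(stats: ProcessStats, min_runs: int = 20,
               min_success_rate: float = 0.95) -> str:
    """Choose a model tier by process maturity rather than task complexity."""
    if stats.runs == 0 or stats.runs < min_runs:
        return "frontier-model"  # still exploring: pay for trial and error
    if stats.successes / stats.runs >= min_success_rate:
        return "small-model"     # stable process: a cheaper, possibly on-device model
    return "frontier-model"      # run often, but not yet reliable


print(pick_model(ProcessStats(runs=3, successes=3)))
print(pick_model(ProcessStats(runs=50, successes=49)))
```

The same task can therefore move from the expensive tier to the cheap one over time without its complexity changing at all.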

Second, many evaluate companies, or even employees, solely by their Token consumption.

This logic is flawed. High Token usage could indicate either complex, high-value tasks or pure waste.

For the same video task, one team might consume 10 times as many Tokens as another yet deliver an identical result. The 9X excess is pure inefficiency.

This resembles evaluating economies solely by GDP—inaccurate and myopic. Focusing exclusively on Tokens leads to skewed perspectives.
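Measuring Tokens per delivered result, rather than raw consumption, makes the 10X example explicit. The function names and Token counts below are hypothetical.

```python
def tokens_per_result(tokens_used: int, results_delivered: int) -> float:
    """Tokens spent per accepted deliverable (infinite if nothing was delivered)."""
    if results_delivered == 0:
        return float("inf")
    return tokens_used / results_delivered


def excess_multiple(cost_per_result: float, best_cost_per_result: float) -> float:
    """How many multiples of the most efficient team's cost were spent beyond it."""
    return (cost_per_result - best_cost_per_result) / best_cost_per_result


# Hypothetical: two teams each deliver one identical video.
team_a = tokens_per_result(1_000_000, 1)
team_b = tokens_per_result(10_000_000, 1)
print(f"team B uses {team_b / team_a:.0f}x the Tokens; "
      f"{excess_multiple(team_b, team_a):.0f}x the baseline is excess")
```

Ranked by raw consumption, team B looks ten times more productive; ranked by Tokens per result, it is the less efficient team.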

The opportunities I see lie in structural changes beyond Tokens themselves.

First, today's agents remain immature. Think of them as cars that drive but handle poorly—they veer off course or even 'crash' (e.g., accidental data deletion).

This means the current bottleneck isn't Token scarcity but inadequate product packaging.

To sell agents to average users, you must: lower usage barriers (avoid geekiness), control risks (prevent accidents), make Token consumption predictable, and provide clear troubleshooting guides.

Companies that excel here will capture initial dividends.
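One simple way to make Token consumption predictable is a hard per-run budget checked before each agent step. `TokenBudget` and `run_steps` are hypothetical names, and the sketch assumes each step's Token cost is known (or estimated) before the step runs.

```python
from typing import Iterable


class TokenBudgetExceeded(RuntimeError):
    """Raised when a step would push a run past its Token limit."""


class TokenBudget:
    def __init__(self, limit: int) -> None:
        self.limit = limit
        self.used = 0

    def remaining(self) -> int:
        return self.limit - self.used

    def charge(self, tokens: int) -> None:
        # Reject the step up front instead of discovering the overrun afterwards.
        if tokens > self.remaining():
            raise TokenBudgetExceeded(
                f"step needs {tokens} Tokens, only {self.remaining()} left")
        self.used += tokens


def run_steps(step_costs: Iterable[int], budget: TokenBudget) -> int:
    """Run steps in order until the budget would be exceeded; return steps done."""
    completed = 0
    for cost in step_costs:
        try:
            budget.charge(cost)
        except TokenBudgetExceeded as err:
            print(f"stopping early: {err}")
            break
        completed += 1  # a real agent would execute the step here
    return completed


budget = TokenBudget(limit=50_000)
done = run_steps([12_000, 15_000, 20_000, 8_000], budget)
print(f"completed {done} steps using {budget.used} Tokens")
```

Stopping cleanly at a known ceiling trades some task completion for a bill the user can anticipate, which is one form of the "predictable consumption" described above.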

Second, many underestimate the challenge of agent collaboration.

Single agents handling short tasks work fine. But when multiple agents collaborate on long-duration tasks (e.g., 24-hour operations), results often deteriorate.

The reason is simple: Collaboration mechanisms remain underdeveloped. This cannot be left to users to solve.

Future valuable companies will help users optimize multi-agent coordination. We're building precisely that capability now.

Third, I foresee a trend: computing power migrating from cloud to edge devices, with users purchasing their own Tokens.

When users bear computational costs directly, it will disrupt existing AI business models.

Today's unprofitable AI products stem from one root cause: they subsidize users' computing costs. They wholesale NVIDIA computing power and repackage it for users with thin margins.

But when users purchase their own Tokens and software charges only 'service fees,' the entire AI business model becomes viable.
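The margin argument can be made concrete with a toy per-user comparison. The subscription price, service fee and cost figures are hypothetical, and `OTHER_COSTS` stands in for everything besides compute.

```python
def gross_margin(revenue: float, costs: float) -> float:
    """(revenue - costs) / revenue, per user per month."""
    return (revenue - costs) / revenue


OTHER_COSTS = 1.0    # hypothetical non-compute cost per user per month
COMPUTE_COST = 18.0  # hypothetical Token bill per user per month

# Model A: the app bundles compute into a subscription and pays the Token bill.
bundled = gross_margin(revenue=20.0, costs=COMPUTE_COST + OTHER_COSTS)

# Model B: the user buys Tokens directly; the app charges only a service fee.
service_only = gross_margin(revenue=8.0, costs=OTHER_COSTS)

print(f"bundled compute: {bundled:.0%} margin; "
      f"service fee only: {service_only:.1%} margin")
```

In model B the user still pays for compute, just not through the app, so the software business no longer absorbs the subsidy.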

I see promise in two edge device categories:

1. Mobile and wearable devices, which offer sufficient computing power (models with 1B to 7B parameters suffice) and convenient authorization (agents require frequent permission calls).

2. Home/organizational nodes serving as small local computing centers.

If we look only at the second half of this year, hardware will generate profits first.

The reason is straightforward: AI depends on infrastructure, which always begins with hardware. Like 5G—build base stations before applications emerge.

The same applies to AI today. Without widespread edge hardware, agents have nowhere to run, and the Token economy cannot develop.

Thus, the Token economy will evolve in this order: hardware first, then software, then services.

The views expressed herein represent the speaker's independent opinions and do not constitute investment advice.