Google and Meta Launch AI Tools to Boost XR Development: Will the XR Industry Be Transformed?

04/20 2026

Boost or Replacement?

By VR top

Over the past year, a wave of AI-driven layoffs has swept through the tech industry. From traditional internet companies to game developers and software engineering firms, the trend of replacing human labor with AI, or cutting staff to make room for AI, has become increasingly clear. Junior development roles have been hit hardest as code generation capabilities have improved.

However, the XR industry seems to be outside the storm's reach for now. Layoffs do occur, but they are mostly unrelated to AI, largely because XR content development remains highly complex. Building an XR application requires not only command of engines such as Unity or Unreal but also spatial computing, 3D interaction, sensor fusion, and device-specific debugging, which makes XR content far more costly to develop than a traditional application.

This points to a core problem in the XR industry: insufficient content productivity. The real value of AI lies in filling this productivity gap. With Google's Vibe Coding XR and Meta's introduction of AI Agent workflows for the Immersive Web SDK, a new possibility emerges: AI may not take your job directly, but it could help you work faster.

01

The XR Productivity Dilemma

The XR industry's slow consumer-side growth stems not only from hardware, pricing, and wearability issues but also from a persistent lack of content. Beyond market influences, a deeper reason is the high complexity of XR content development, leading to significant time and economic costs.

Unlike mobile or web apps, XR development has inherent barriers. Beyond basic interface development, developers must build 3D spaces, handle multimodal interactions (gestures, gaze, spatial movement), and address physics engines, collision effects, real-time rendering, and more. Developing an XR app typically requires expertise in Unity/Unreal, 3D art, interaction design, and hardware adaptation—a blend of skills.

Image Source: Meta

This complexity extends development cycles. Traditional XR content development takes weeks to months and requires multiple rounds of iteration, testing, and device-specific adjustment. In the early stages, developers even spend significant time validating basic questions, such as whether an interaction works in space at all. This slows the path from idea to working prototype.

If the mobile internet revolves around UI design, XR centers on spatial interaction design. Interaction quality cannot be judged from a flat preview, however. Developers must test on devices and tune gesture triggers and interaction logic through repeated trials, which is a time-consuming process.

High complexity and costs lead to slow XR content production, heavy team structures, and high developer barriers, preventing scalable content supply and delaying the industry's content boom.

Against this backdrop, Google's Vibe Coding XR and Meta's AI Agent workflows become critical: they don't just optimize tools but directly transform XR content production.

02

Building XR Content with Natural Language

The concept of Vibe Coding, first proposed by OpenAI co-founder Andrej Karpathy, involves describing software needs in plain language and letting AI generate functional code, an approach that lowers the barrier to development. So far, however, the trend has not reached XR because of XR's high development barriers.

Google's Vibe Coding XR component for developers breaks this mold, enabling XR developers to leverage AI for efficiency gains. With this tool, users can generate XR experiences directly through natural language.

In Vibe Coding XR's workflow, Google decomposes XR capabilities into modular components via the open-source XR Blocks framework, enabling Gemini to understand them. Combined with Gemini's long-context reasoning, specialized system prompts, and curated code templates, it automates spatial logic, transforming user prompts into fully interactive, physics-aware WebXR apps in 60 seconds.

Image Source: Google

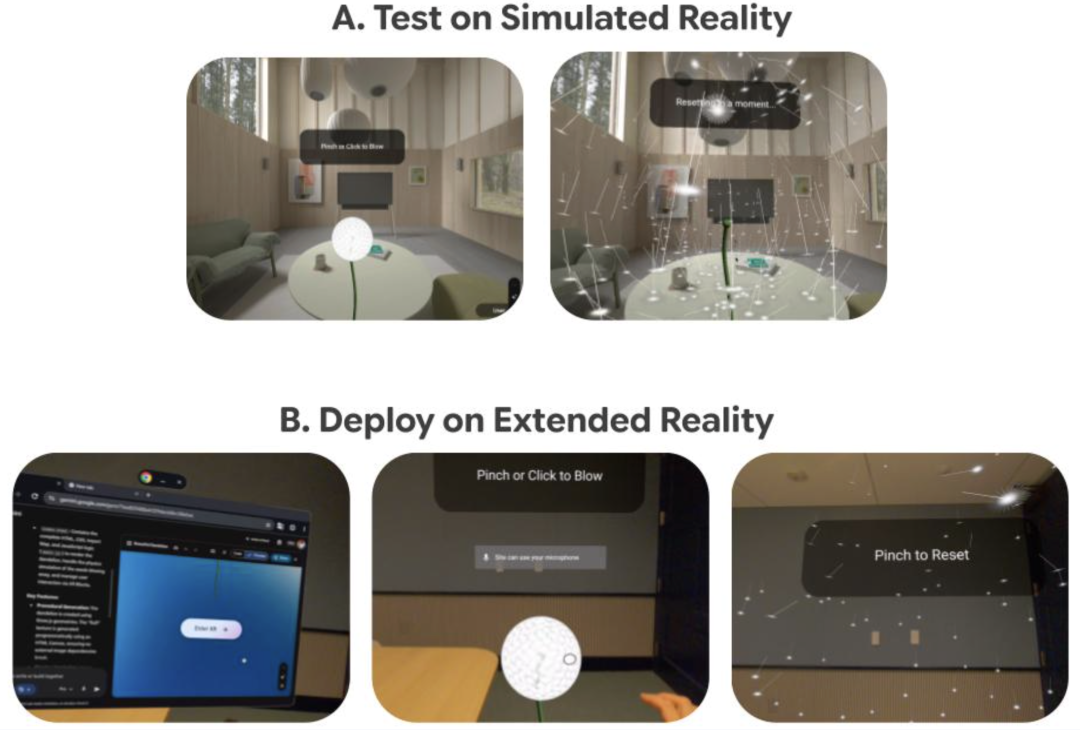

Google claims this component accelerates AI+XR prototyping, allowing users to simulate XR environments on desktops and quickly test AI-generated prototypes on Android XR headsets.

The development process involves four steps:

1. Intent Input (Prompt)

Users describe desired experiences in natural language (e.g., "Create a dandelion that disperses when blown") without XR or programming expertise.

2. Gemini Designs and Implements XR Interactions

Gemini learns from XR Blocks examples, configuring scenes, perception modules, and interaction logic to generate interactive XR apps.

3. Real-Time Demo

Generated XR apps can be viewed in Android XR environments. Users pinch to select "Enter XR" and then use pinch gestures to make the dandelions scatter. They can also share the result through a public link.

4. Rapid Iteration

Google provides a desktop "simulated reality" environment in Chrome for quick prototyping and testing before Android XR deployment. Advanced features like depth perception, hand interactions, and physics simulations work best on Android XR devices.
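
The first two steps can be pictured as assembling a single request from fixed instructions, a component list, a starter template, and the user's intent. The sketch below is only an illustration of that idea, not Google's implementation: `Component`, `CATALOG`, `SYSTEM_PROMPT`, and `build_request` are hypothetical names, the catalog entries are not the real XR Blocks modules, and no model is actually called.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Component:
    """A hypothetical catalog entry describing one modular XR capability."""
    name: str
    summary: str


# Illustrative catalog only; these are not the real XR Blocks module names.
CATALOG = [
    Component("scene", "sets up the camera, lighting, and render loop"),
    Component("hands", "reports pinch and grab gestures"),
    Component("physics", "simulates rigid bodies and collisions"),
]

# Assumed wording; the actual system prompts are not given in the article.
SYSTEM_PROMPT = "You write WebXR apps using only the components listed below."


def build_request(intent: str, catalog: list[Component], template: str) -> str:
    """Combine the fixed instructions, the component list, a starter
    template, and the user's intent into a single prompt string."""
    if not intent.strip():
        raise ValueError("intent must not be empty")
    component_lines = "\n".join(f"- {c.name}: {c.summary}" for c in catalog)
    return "\n\n".join([
        SYSTEM_PROMPT,
        "Components:\n" + component_lines,
        "Starter template:\n" + template,
        "User intent:\n" + intent.strip(),
    ])


if __name__ == "__main__":
    prompt = build_request(
        "Create a dandelion that disperses when blown",
        CATALOG,
        "<!-- minimal page that boots an XR scene -->",
    )
    print(prompt)
```

Keeping the component list as data rather than prose means new capabilities can be exposed to the model by adding a catalog entry, which is one plausible reading of why the framework is described as modular.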

Unlike Google's focus on generation, Meta's AI Agent in its open-source Immersive Web SDK (IWSDK) functions as an automated development system. IWSDK integrates with popular AI programming assistants like Claude Code, Cursor, GitHub Copilot, and Codex, enabling developers to craft high-quality VR experiences through a closed-loop intelligent system.

According to Meta, the emphasis is not on code generation but on the AI's ability to run a complete "observe-execute-feedback-repair" development cycle. Meta describes it as an environment-aware intelligent agent loop.

Similar to Vibe Coding XR, developers input requirements via natural language, and AI automatically writes code, loads scenes, tests interactions, and fixes bugs without manual intervention. Developers can collaborate with AI in the same browser tab.
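
The write, load, test, and fix cycle can be sketched as a bounded loop. This is a toy model under stated assumptions, not Meta's agent: `agent_loop`, `fake_write`, and `fake_run` are hypothetical names, `write` stands in for a coding assistant, and `run` stands in for loading the scene in a browser and collecting errors.

```python
from typing import Callable


def agent_loop(
    goal: str,
    write: Callable[[str, list[str]], str],
    run: Callable[[str], list[str]],
    max_rounds: int = 5,
) -> tuple[str, int]:
    """Repeat write -> run -> inspect until run() reports no errors.

    write(goal, errors) returns new source; run(source) returns the errors
    observed when the scene is loaded. Returns the accepted source and the
    number of rounds used.
    """
    errors: list[str] = []
    for round_no in range(1, max_rounds + 1):
        source = write(goal, errors)  # execute: produce or repair code
        errors = run(source)          # observe: load the scene, collect feedback
        if not errors:
            return source, round_no
    raise RuntimeError(f"still failing after {max_rounds} rounds: {errors}")


# Toy stand-ins so the loop can be run without any real tools.
def fake_write(goal: str, errors: list[str]) -> str:
    source = f"// goal: {goal}\nscene.add(cube)"
    if "cube is not grabbable" in errors:
        source += "\ncube.grabbable = true"  # repair driven by the feedback
    return source


def fake_run(source: str) -> list[str]:
    return [] if "grabbable = true" in source else ["cube is not grabbable"]


if __name__ == "__main__":
    final_source, rounds = agent_loop("a cube I can pick up", fake_write, fake_run)
    print(rounds)
    print(final_source)
```

With these stand-ins the first round fails and the second succeeds, so `rounds` is 2. The `max_rounds` cap is a design choice added for the sketch so that a loop that never converges stops and reports its last errors instead of running forever.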

After initial setup, developers refine the experience in natural language, with the AI adjusting parameters, confirming changes, and saving them. The system also supports one-click sharing and deployment to platforms such as GitHub Pages, Vercel, and Netlify, making the resulting VR experience broadly accessible.

If traditional XR development involved humans writing code for machines to execute, Meta's model lets machines write, run, and repair code while humans define goals. This shifts human roles from executors to supervisors.

03

How Will XR Developers Be Redefined?

Google's Vibe Coding XR and Meta's IWSDK AI Agent workflows signal a shift: XR development is transforming from coding to expression.

In the future, coding skills will become less critical, with experience design, spatial interaction understanding, and prompt engineering taking precedence. XR content creators will expand beyond professional developers to include product managers, designers, content creators, and even general users, fostering diverse content forms and gameplay.

With AI, XR content production will accelerate while costs decline, laying the groundwork for a content boom.

As for concerns about AI replacing XR professionals, mass displacement is unlikely in the short term, but the trend is clear: junior development roles may shrink while new roles in creative design and AI collaboration emerge.

04

Conclusion

Historically, the XR industry's growth hinged on hardware form, performance, and pricing. With AI, the focus may shift from hardware to productivity. As Vibe Coding XR turns ideas into apps and IWSDK AI Agent automates development, the missing piece—content production capacity—falls into place.

When content can be generated infinitely, will XR finally experience its content explosion?