AI Giants Step into the Dark Forest

04/27 2026

In The Dark Forest, the second volume of his Three-Body trilogy, Liu Cixin introduced an image that has since been cited countless times: the Dark Forest. Every civilization is a hunter with a gun, and the first to reveal itself dies. The forest isn’t empty. Everyone knows that turning on a light invites bullets, so all stay in the dark.

In the spring of 2026, top AI labs stepped into such a dark forest.

On April 16, Anthropic released Claude Opus 4.7. That same day, it took an unusual step: it publicly admitted that Opus 4.7 lagged behind an unreleased model, Mythos, which it was holding back over “safety concerns.”

On April 23, OpenAI posted GPT-5.5 on its official website. The same day, Anthropic published a postmortem titled “An update on recent Claude Code quality reports,” acknowledging that Claude Code had indeed underperformed over the past month. One company released a new model; the other owned up to mistakes. Yet Anthropic, the reigning “new king,” all but flaunted it. The subtext was: “Yes, Claude is temporarily worse, but don’t forget, we’re still holding Mythos in reserve.”

On April 24, the “mysterious Eastern power” DeepSeek launched V4 Preview, with Liang Wenfeng’s team officially announcing deep integration with Huawei’s Ascend 950PR chips. But everyone understood that the truly “full-spec” V4 Pro Max would only ship once Ascend 950 supernodes reach mass production in the second half of the year.

Three companies, three moves. On the surface, each was simply following its own product rhythm. But pieced together, they reveal one thing:

Each held at least one “gun”: a model stronger than its public version, a next-generation architecture not yet rolled out, or a chip supernode not yet deployed at scale. Yet none dared fire first.

That’s because in this industry, the cost of “exposing yourself” goes beyond leaked secrets. Moving first means handing rivals a benchmark for your capabilities; bearing the brunt of safety scrutiny, regulatory tightening, and public pressure; and becoming the target every competitor aims at. There’s no heroism in this forest: whoever shoots first becomes the next mark.

So the hunters’ most rational choice? Turn off the lights, hold their breath, and hide their weapons.

This is the optimal equilibrium of the game.

Anthropic’s Unshaken Confidence

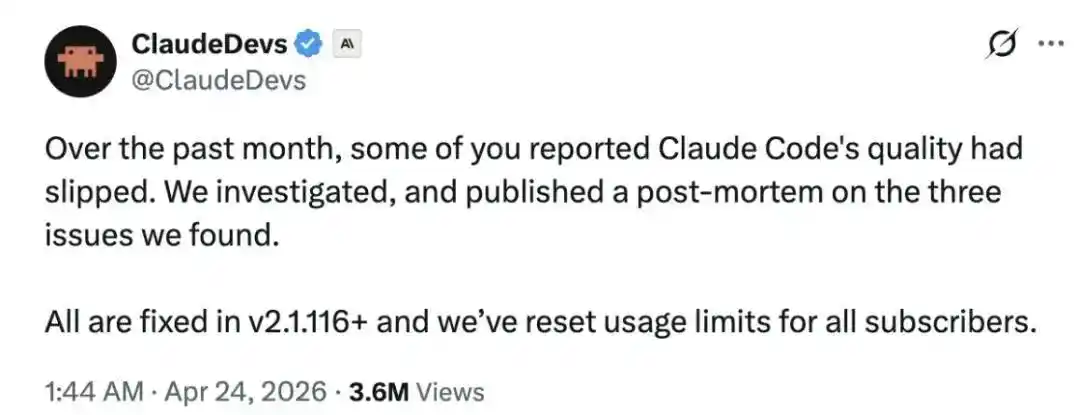

For Claude, the past month may have been its worst release cycle yet.

Through the early Opus 4.7 updates, Anthropic dominated the benchmarks and projected an unhurried air, keeping Mythos in reserve for enterprise clients only.

Yet for users, this Opus 4.7 cycle was a rough one, met with “a tidal wave of criticism.”

In early March, Anthropic lowered Claude Code’s default reasoning effort from “high” to “medium.” The rationale made sense: “high” mode often froze the UI, and slow responses frustrated paying users. The problem? They didn’t announce it.

In late March came an “efficiency optimization”: if a Claude Code session sat idle for more than an hour, the system would clear old reasoning blocks. Meant to save compute, it instead left Claude forgetting earlier context after every exchange. Developer forums flooded with complaints: “Claude doesn’t remember what I asked last round.”

Then came a third change: a directive added to the system prompt to compress verbosity. By Anthropic’s later admission, it cut Claude Code’s coding quality by 3%.

Combined, these issues led an AMD senior director to write on GitHub: “Claude has regressed to the point it cannot be trusted to perform complex engineering.” Axios’ April 16 article, “Anthropic's AI downgrade stings power users,” brought it to mainstream attention.

Only then did Anthropic concede issues existed.

They quietly reverted the reasoning-effort change on April 7, fixed the cache bug on April 10, and removed the verbosity-compression prompt on April 20. The full postmortem arrived on April 23, the same day GPT-5.5 went public.

This breezy “oops, our engineering had bugs, all fixed now” disclosure landed on the very day of OpenAI’s major release. Hard to call that a coincidence.

More telling: when releasing Opus 4.7, Anthropic admitted that its performance lagged behind Mythos, an unreleased model. This is a clear “strategic retreat”: keep peak capabilities exclusive to enterprise clients and hold off on a public release until the team judges Mythos ready.

This narrative holds up. Commercially, though, another truth applies: Anthropic waited six weeks to admit Claude Code’s decline and timed the confession to OpenAI’s new release. Without pressure from a rival, and without Opus 4.7 to prove “we have reserves,” that admission might never have come.

For Claude, then, “drip-feeding” is not about deliberately crippling the product; it is about pacing capability releases and problem disclosures to match rivals’ moves.

Unleashing cutting-edge capabilities makes you a target. Or, as Anthropic may see it, the pressure Opus 4.6 put on competitors has not yet faded, so why play a stronger card now?

OpenAI’s Repeated Tactics

If Anthropic “held Mythos back,” OpenAI’s drip-feeding was subtler, built into server load curves and an auto-router that decides which model tier serves each request.

On April 23, as GPT-5.5 launched, Simon Willison (Django co-founder, prominent AI independent reviewer) wrote cautiously on his blog: “It's not a dramatic departure from what we've had before.”

He added a crucial detail: GPT-5.5 is OpenAI’s first full model retrain since GPT-4.5, and versions 5.1 through 5.4 were merely incremental updates. In other words, OpenAI had been holding back for half a year, unsure what its competitors would release.

“Holding back updates” is just “drip-feeding.”

More telling: hours after GPT-5.5’s launch, Codex users filed GitHub Issue #19241, complaining that Fast mode started out quick but slowed noticeably under heavier load while still billing at Fast rates. The wording echoed a familiar complaint: “Please investigate if GPT-5.5 Fast mode degrades under high load.”

This mirrored GPT-5’s debut on August 7, 2025, when a “GPT-5 is horrible” post on Reddit’s r/ChatGPT drew more than 4,600 upvotes and forced Sam Altman to admit in an AMA that “the autoswitcher broke... GPT-5 seemed way dumber,” confirming that the router had been quietly sending users to a weaker model.

Same script, eight months later.

Ironically, the day before GPT-5.5’s official release, OpenAI accidentally pushed Codex’s internal staging environment to production. Pro users grabbed screenshots that leaked Glacier (“Intelligence that moves continents”), Heisenberg (a life sciences model), Arcanine (purpose unknown), and oai-2.1, among others, before the leak was fixed minutes later.

So even as OpenAI billed GPT-5.5 as “next-gen,” at least five or six parallel product lines were running internally, none of them public yet.

OpenAI has admitted as much. Its 2026 roadmap uses a term long debated in academia, “capability overhang,” to acknowledge a vast gap between what its models can truly do and what users actually experience.

Sound familiar? Almost identical to Anthropic’s Mythos rhetoric. Even if the April 22 Codex leak was accidental, OpenAI’s inclusion of “capability overhang” in the roadmap sent a clear signal: “We have plenty more—act accordingly.”

You only “drip-feed” if you have far more than you sell. GPT-5.5’s first 24 hours broadcast that premise live.

DeepSeek’s Patient Wait

DeepSeek’s approach flips the script: it is not hiding capabilities but waiting for the right moment to deliver them.

With a 1.6T-parameter MoE architecture, a 1M-token context window, Pro and Flash variants, and pricing of $3.48 per 1M tokens (a fraction of GPT-5.5’s and Opus 4.7’s prices), V4 Preview drew a clear verdict from overseas independent reviewers: performance approaches but slightly trails GPT-5.4 and Gemini 3.1-Pro, while the pricing “shatters frontier labs’ economics.”

Yet by DeepSeek’s own standards, V4 Preview is priced well above V3’s “eerily cheap” rates. Everyone understood that this was not the full version.

DeepSeek V4’s story didn’t start or end with its release.

It began with R2’s canceled 2025 launch. R2, originally set for May 2025, first slipped to autumn as DeepSeek migrated its infrastructure to Huawei’s CANN ecosystem. No lab could complete such an overhaul in a single quarter: compilers, operators, communication libraries, inference frameworks, and MoE routing all had to be rewritten.

With V4, DeepSeek formally added Ascend to its training hardware for the first time, making it the company’s first hybrid training run with Ascend in the mix.

But the next-generation Ascend 950DT, optimized for large-scale training, is not slated for mass production until Q4 2026, according to Huawei’s roadmap. So V4 was trained on existing 950PR clusters, while the 1.6T-parameter MoE “full-spec” V4 Pro Max needs next-generation chips to be trained thoroughly and deployed at scale.

The real engineering challenge is not 'whether V4 can be trained'—it already has been—but 'how V4 can run at full capacity, stably, and cost-effectively on Ascend'.

The Ascend 950PR entered mass production in Q1 2026, with 1.56 PFLOPS of FP4 compute and 112GB of memory, surpassing NVIDIA's H20 on paper. But getting a single chip running and getting an entire supernode to stably serve inference at millions of tokens per second are two very different matters. The full-fledged V4 Pro Max targets exactly this 'supernode,' the large-scale cluster form of the Ascend 950 series, which will be deployed gradually in the second half of 2026.

This is a strategy entirely different from the other two. Anthropic and OpenAI release incrementally because they hold something stronger and are withholding it for now; DeepSeek is holding its full version for the moment when prices can drop to another level.

This difference is crucial.

DeepSeek's true trump card has never been 'cutting-edge performance' but 'token prices cut to levels others dare not match, with performance that is good enough.' V4 Preview has been adapted to NVIDIA GPUs and the Ascend 950PR, but full-scale, mass-production inference requires the supernode to be in place. Once it is, two things will happen at once: first, V4 Pro Max's capabilities can be fully unleashed; second, inference costs and API prices will drop another notch. For a company that relies on pricing to penetrate the market, the latter matters even more than the former.

The 'DeepSeek moment' people were really hoping for, the kind that happened in early 2025, did not repeat with this release. V4 Preview is instead a teaser; the real showstopper will be the 'DeepSeek + Huawei Ascend' moment in the second half of the year.

Seen this way, what Liang Wenfeng's team is doing is not a forced 'holdback' but a commercially disciplined 'choice': debuting its strongest version when it will carry the most weight, on the first day of large-scale deployment of domestic supernodes. Until then, V4 Preview will consolidate the cost-effectiveness narrative.

DeepSeek's mission has never been to push domestic large models to the top of some leaderboard, a narrative built on a single standout strength, but to achieve a 'systemic' narrative in which chips, training, inference, and pricing all work together seamlessly. The latter matters far more than the former.

Just a few days ago, Jensen Huang stated on Dwarkesh Patel's podcast that if DeepSeek debuts on Huawei chips, 'that would be a horrible outcome for our nation.'

For now, NVIDIA still dominates top-tier computing power. But by Huang's own 'five-layer AI cake' framework (energy, chips, infrastructure, models, and applications), China's large-model industry now has viable domestic options at every layer, and the gaps are narrowing visibly. Chips are the last piece of the puzzle. Only once that piece is in place can DeepSeek's open-source story grow into a narrative bigger than America's: a crucial step toward a world where access to intelligence is equal and no longer prohibitively expensive.

It would let the world bypass the advanced computing power controlled by hegemons and step into an efficient, intelligent society.

Epilogue

Anthropic's 'holdback' is proactive. They have Mythos but haven't released it, citing safety concerns.

OpenAI's 'holdback' is structural. They have a Pro tier but don't always offer it, citing infrastructure and price tiering.

DeepSeek's 'holdback' is a necessity. It is tied to an entire narrative about society's intelligent transformation.

Seen from another angle, though, all three resemble the Dark Forest Liu Cixin depicted: in this pitch-black forest of intelligence, no top hunter will fire the first shot.

Exposure means holding nothing back, having no ace in the hole, and becoming a sitting duck for another hunter.

No one knows who will fire the first, most fatal shot. But one thing is certain: every model you use today is not yet in its true form.