DeepSeek V4 Unexpectedly Rises to Prominence: A Signal of the Golden Age for China’s Computing Power?

04/27 2026

For most ordinary internet users, the most talked-about event in the AI sphere recently, aside from the viral sensation of GPT Image 2 and the "uncanny valley effect" it triggered, is undoubtedly the arrival of DeepSeek V4. Whereas the former has sparked ethical dilemmas and controversy, the latter carries far-reaching implications for the popularization of China's computing power.

01. The Proliferation of "Counterfeit Imagery"

On April 22nd, hashtags such as #ThoughtCookReallyJoinsXiaomiAuto# trended on Weibo. They stemmed from a fabricated official announcement image claiming that "Tim Cook has joined Xiaomi Auto," which had been spreading virally on social media since April 21st. Even though Xu Jieyun, Special Assistant to the Chairman and General Manager of Xiaomi's PR Department, promptly debunked it, many netizens remained convinced it was real.

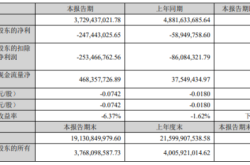

On the same day, a fake screenshot purportedly from "Cailian Press Telegraph" circulated online, falsely asserting that Xishanju Interactive Entertainment, a subsidiary of Kingsoft Software, would dissolve on June 31st and that its games and development studios would be sold to NetEase Leihuo. This misinformation caused Kingsoft Software's stock price to dip at the market open.

On April 23rd, topics such as #Xishanju# also suddenly trended. Xishanju had to issue an urgent statement via its official account refuting the rumor and vowing to hold those responsible accountable, which somewhat quelled public outrage. Many netizens believed these fabrications because the images looked exceedingly "authentic."

As it turned out, the "screenshots" in both trending incidents had been generated with OpenAI's latest GPT Image 2. For most Chinese social media users, this was their first firsthand look at its capabilities, and once the story trended, it further fueled the creative fervor of countless AI enthusiasts.

Image: Generated by netizens using Image 2 for demonstration purposes only; not real.

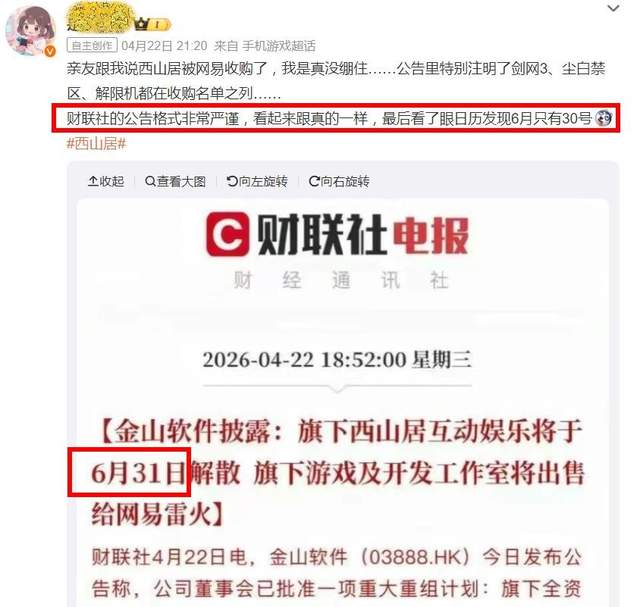

Thanks to realistic lighting (detailed enough to simulate screen reflections and even fingerprint smudges) and lifelike facial expressions, images generated by Image 2 are convincing enough that netizens overlook any telltale signs of AI. On the morning of April 24th, topics such as #GPTImage2UncannyValley# trended once again.

Such remarkable AIGC technology undoubtedly has its merits, such as easing the workload of countless designers, illustrators, and self-media creators, and that deserves recognition. Yet it also raises a grave societal concern: when images of every kind become indistinguishable from real ones, how should we address the ethical challenges they pose?

According to a Jiemian News report, what is even more alarming is that Image 2 supports native 4K resolution and generates images six times faster. An ordinary user can type a single sentence and get a highly realistic poster, ID card, or news screenshot in just 3 seconds, drastically lowering the barrier to producing counterfeit content.

In other words, if such technology is exploited to spread malicious rumors or launch cyber-attacks, the consequences could spiral out of control. Because this problem remains unsolved, Image 2 can, for now, only be cautiously acknowledged in principle while being "rejected" in practice.

On the morning of April 24th, just as the uncanny valley controversy around Image 2 was intensifying, netizens received news of the official release of the DeepSeek V4 preview. After several rounds of rumored "delays," the highly anticipated V4 finally made its debut, generating excitement even in the overseas AI community.

At the same time, more level-headed and professionally minded netizens began to ponder a deeper question: will DeepSeek V4 become the ultimate rival to Claude? And if not, does its arrival still matter?

02. The "DeepSeek Moment" for China’s Computing Power

First, the preview version of the DeepSeek-V4 model was officially launched and open-sourced at the same time. Immediately afterward, Huawei announced that, through close chip-model technical collaboration, every product in its Ascend supernode lineup now supports the DeepSeek V4 series models. This confirmed months of industry rumors.

In February of this year, multiple media outlets and industry influencers "leaked" claims that DeepSeek had not allowed U.S. chip manufacturers to test the V4 model and had instead given Huawei priority. According to the latest media reports, chip manufacturers other than Huawei, such as Cambricon, are also accelerating their adaptation work for V4.

Why Did Huawei Become DeepSeek's Top Choice?

It is well known that Huawei's AI acceleration cards lag behind overseas giants such as NVIDIA in process technology, which leaves them weaker than foreign competitors for large-scale model training. Even so, Huawei holds core advantages, chiefly in inference. In scenarios involving low-precision, high-throughput, large-scale model deployment in particular, Huawei has a significant edge (as exemplified by products such as the Ascend 950PR).

At the same time, Huawei is closing its gap in training faster than expected. The Ascend 910B approaches A100 level in FP16 computing power and cluster interconnection, which shows that large-scale model training on it is already feasible and usable. Moreover, Huawei has more tricks up its sleeve, namely the "950 supernode" explicitly mentioned by DeepSeek.

Public information shows that the Atlas 950 SuperPoD uses a cluster architecture to pool the computing power of 8,192 Ascend cards and leads the world in key metrics. According to Huawei's comparison with NVIDIA's NVL144, its card scale, total computing power, memory capacity, and interconnection bandwidth reach 56.8 times, 6.7 times, 15 times, and 62 times those of the NVL144, respectively.

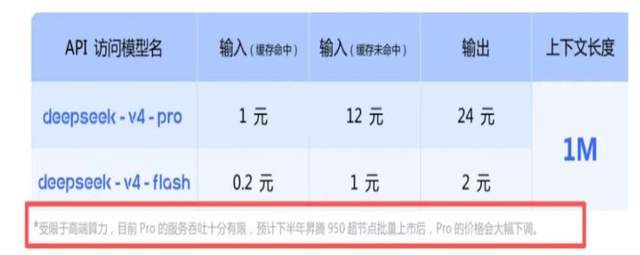

More than 300 of Huawei's existing CloudMatrix384 supernodes have been deployed worldwide, serving over 20 clients. However, chip production capacity still needs time to ramp up before it can meet the computing demands of large clients such as DeepSeek. DeepSeek expects its capacity expansion to be largely in step with Huawei's by the second half of 2026.

In other words, today's computing power constraints can be resolved over time. And because this computing power is built entirely on domestic chips, it offers another core advantage beyond price competitiveness: data security!

For sensitive industries and organizations such as finance, education, government agencies, and classified institutions, data security and controllability matter far more than raw performance. This means that domestic AI acceleration card makers, including Huawei Ascend, Alibaba T-Head, Baidu Kunlunxin, and Cambricon, have a promising future ahead.

On the evening of April 24th, the National Supercomputing Internet announced that the DeepSeek-V4 preview is now available on its AI community platform. Enterprises, research institutions, and individual developers can download the DeepSeek-V4 model files there and use the platform for rapid deployment, inference, and development.

Supporting domestic models and domestic chips, building an autonomous and controllable AI ecosystem, and accelerating domestic substitution are therefore bound to become a major trend. This is precisely where the far-reaching, irreplaceable significance of domestic large models like DeepSeek V4 lies!

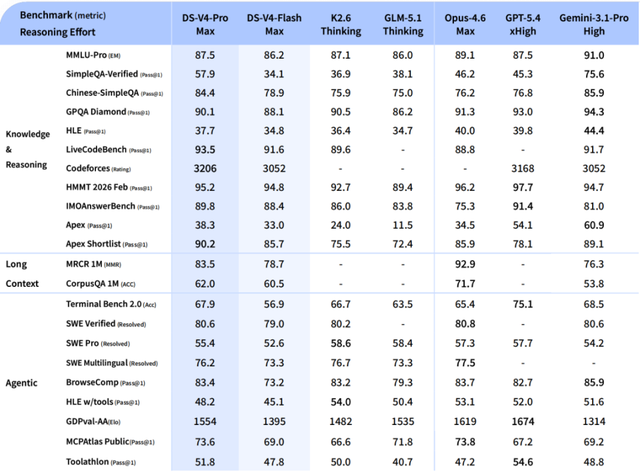

Of course, objectively speaking, DeepSeek V4 is not yet perfect. For example, DeepSeek officially admits that the Agent capability of V4-Pro is "superior to Sonnet 4.5 and delivers quality close to Opus 4.6's non-thinking mode but still lags behind Opus 4.6's thinking mode to a certain extent."

The official report also clearly states that V4's capability level still lags behind GPT-5.4 and Gemini-3.1-Pro, with a "development trajectory approximately 3 to 6 months behind cutting-edge closed-source models."

Additionally, because of limited development resources, the current DeepSeek V4 series does not support multimodality, which is another regrettable limitation.

However, these flaws do not overshadow its strengths. On knowledge benchmarks, DeepSeek V4 is the strongest open-source model in the world, trailing only the top closed-source model, Gemini-3.1-Pro, which leads general knowledge question-answering benchmarks such as MMLU-Pro (91.0), SimpleQA-Verified (75.6%), and GPQA Diamond (94.3%).

Furthermore, in benchmarks for mathematics, STEM, and competitive coding, DeepSeek-V4-Pro surpasses all currently publicly evaluated open-source models and achieves results comparable to the world's top closed-source models, which DeepSeek calls "world-class reasoning performance."

"Not seduced by praise, not frightened by slander, following the path, and maintaining integrity."

There is reason to believe that in the near future, DeepSeek and China’s computing power will reach the pinnacle together!

References:

Cover image source: AIGC

Official DeepSeek tweet

GPT-Image 2 causes the proliferation of "counterfeit imagery," with ethical breaches more frightening than technological breakthroughs - Jiemian News

AI-generated image suspected of causing trouble: Xishanju falsely rumored to dissolve and be sold to NetEase, causing Kingsoft Software's stock price to dip at the market open - PConline